Automated Evaluation: Prompt for Judge Model Scoring and Preprocessing/Postprocessing Format Requirements

Automated evaluation supports automatic scoring by a judge model, which improves scoring efficiency and reduces the cost of manual scoring.

The judge model scoring feature offers a wizard-based, customizable way to define the evaluation process and scoring configuration. Users build the process from the platform's built-in scoring nodes (preprocessing, judge model, and postprocessing) and can fully customize the scoring prompts and the preprocessing/postprocessing scripts to get the best scoring results for an evaluation set. The scoring nodes are defined as follows:

Judge model: scores the evaluation set by using an AI model. Users can either select a Tencent Cloud TI-ONE Platform (TI-ONE) online service or enter the details of an API to call for scoring.

Scoring prompt: defines the scoring rules for the evaluation set through custom input. It mainly includes the system definition of the judge model, the scoring criteria, and the template that combines the question, the reference answer, and the output of the model under evaluation into a single string, which is then input to the judge model. To achieve better scoring results, users can customize the scoring prompt for each judge model. The input to the judge model is constructed dynamically using Jinja2 template syntax.

To obtain better evaluation results, in some cases, data needs to be processed before and after the judge model's scoring. The platform supports inputting Python scripts for data processing, which includes two types: preprocessing and postprocessing.

Preprocessing: typically used to process the raw data set and the output (response) of the model under evaluation. The result serves as the final object that the judge model scores.

Postprocessing: typically used to perform data processing on the output of the judge model to obtain the final scoring results.

The following sections detail the scoring prompt and preprocessing/postprocessing formats for the judge model.

I. Usage Guidelines for Scoring Template of the Judge Model

The scoring prompt defines the input for the judge model: the system definition of the judge model, the scoring criteria, and the combination of the question, the reference answer, and the output of the model under evaluation into a single string. Users construct this input dynamically using Jinja2 template syntax.

Note:

What Is Jinja2?

Jinja2 is a powerful and flexible template engine that supports syntax such as variable interpolation, control statements, and filters, and is widely used for generating dynamic text content.

In judge model evaluation, we use Jinja2 templates to dynamically construct the question and the response of the model under evaluation seen by the judge model, enabling it to make evaluations based on a clear and standardized context.

When editing the prompt, users can reference fields from the response of the model under evaluation and from the evaluation set data. The platform also provides predefined variables, derived from the user data set by default, for users to reference during editing.

1. Field Definitions and Variable Descriptions

Description of Predefined Variables

The platform predefines the following three core variables by default so that key information in a chat can be obtained quickly. Users can reference these variables directly in the template.

Variable Name | Definition Method | Data Source | Default Value |

data.question | Content of the last message where the role is user | Extracts the content of the last message with role: "user" from a complete chat record (data.messages) in the data set. | None |

data.gt | Content of the last message where the role is assistant | Extracts the content of the last message with role: "assistant" from a complete chat record (data.messages) in the data set. | Defaults to None if the role of the last message is not assistant. |

data.history | Chat history (remaining messages excluding question and gt) | The text formed by sequentially concatenating the remaining messages after filtering out the last user and assistant messages from data.messages. | The default value is None for a single-turn chat. |

Note:

Each variable refers to part of an evaluation set entry, as shown in the following example:

{
  "messages": [
    {"role": "user", "content": "What is the climate like in Beijing?"},
    {"role": "assistant", "content": "Beijing has a temperate monsoon climate..."},  // The two messages above constitute data.history.
    {"role": "user", "content": "How about in summer?"},  // Last user message -> data.question.
    {"role": "assistant", "content": "Summer is hot and rainy..."}  // Last assistant message -> data.gt.
  ],
  "ref_answer": "Beijing's summer climate features high temperatures and abundant rainfall..."  // Optional field.
}
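The derivation of the three predefined variables can be approximated in plain Python. This is a minimal sketch for local experimentation, not the platform's implementation: the function name derive_variables, the ROLE_TAGS mapping, and the single-space separator in history are assumptions based on the rendered examples in this document.

```python
from typing import Optional

# Role tags as they appear in this document's rendered chat history (assumed mapping).
ROLE_TAGS = {"system": "[SYSTEM]", "user": "[USER]", "assistant": "[BOT]"}


def derive_variables(messages: list[dict]) -> dict[str, Optional[str]]:
    """Split a chat record into question, gt, and history (illustrative only)."""
    remaining = list(messages)

    # gt is set only when the very last message comes from the assistant.
    gt = None
    if remaining and remaining[-1]["role"] == "assistant":
        gt = remaining.pop()["content"]

    # question is the last user message among the remaining messages.
    question = None
    for i in range(len(remaining) - 1, -1, -1):
        if remaining[i]["role"] == "user":
            question = remaining.pop(i)["content"]
            break

    # history is whatever is left, rendered as text; None for a single-turn chat.
    history = " ".join(
        f"{ROLE_TAGS.get(m['role'], m['role'])} {m['content']}" for m in remaining
    ) or None
    return {"question": question, "gt": gt, "history": history}


messages = [
    {"role": "user", "content": "What is the climate like in Beijing?"},
    {"role": "assistant", "content": "Beijing has a temperate monsoon climate..."},
    {"role": "user", "content": "How about in summer?"},
    {"role": "assistant", "content": "Summer is hot and rainy..."},
]
variables = derive_variables(messages)
print(variables["question"])  # How about in summer?
```

Running such a helper on a few entries before configuring the template is a quick way to check which entries would yield None for data.gt or data.history.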

Description of Other Available Fields

Besides predefined variables, users can reference custom fields in the evaluation set based on task requirements (Note: ensure the field exists in the data of the evaluation set or the response of the model under evaluation). The following are commonly available fields:

Field Name | Definition and Purpose |

data.ref_answer | Reference answer, corresponding to the ref_answer field in the original data (None if the original data does not include it). |

response.content | Actual response content from the model under evaluation. |

data.other_user_defined_fields | All user-defined data fields in the evaluation data can be referenced. |

response.other_fields_returned_by_model | All other fields returned by the model under evaluation can be referenced; common examples include response.reasoning_content and response.tool_calls. |

2. Example of Scoring Prompt Concatenation

Note:

When a judge model is used for evaluation, its input must be a clear, structured string that includes the system definition, the scoring criteria, and the combination of the question, the reference answer, and the output of the model under evaluation. Because the evaluation set contains multiple entries, and the question, reference answer, and model output differ for each entry, this content must be concatenated into the judge model's prompt dynamically. Jinja2 lets users define placeholders (variables) within a piece of text (the scoring prompt) and replaces them with actual values from a context dictionary, thereby generating the final scoring prompt for each entry.
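To make the placeholder substitution concrete, the snippet below renders a shortened template locally with the third-party Jinja2 package. It is a sketch for previewing a prompt, assuming the platform passes data and response as top-level context entries as the variable names in this document suggest; the template text and sample values are invented for illustration.

```python
from jinja2 import Template  # third-party package: pip install Jinja2

# A shortened scoring prompt with three placeholders.
template_text = (
    "[User question]\n{{ data.question }}\n\n"
    "[Reference answer]\n{{ data.gt }}\n\n"
    "[Assistant response]\n{{ response.content }}"
)

# The context dictionary supplies the actual values for one evaluation entry.
context = {
    "data": {"question": "What is 12 + 12?", "gt": "24"},
    "response": {"content": "The answer is 24"},
}

# Jinja2 resolves data.question against the dictionary key "question".
prompt = Template(template_text).render(**context)
print(prompt)
```

Rendering the same template once per entry, each time with a different context dictionary, produces one judge prompt per entry.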

Combining predefined variables and custom fields, the following provides template examples for several different types of tasks.

Example 1: Single-Turn Q&A Template Concatenation

Raw data sample

"data": {"messages": [{"role": "user", "content": "What is the core content of Newton's First Law?"},{"role": "assistant", "content": "Every object remains at rest, or in motion at a constant speed in a straight line, unless it is acted upon by a force."}]}

Sample of response from the model under evaluation

"response": {"content": "Newton's First Law states: An object will remain at rest or in uniform motion in a straight line unless acted upon by an external force.","reasoning_content": "The user is asking about Newton's First Law. First, I need to determine which discipline this belongs to..."}

Scoring prompt and concatenation result

Scoring prompt (template):

Act as a fair judge to evaluate the quality of the AI assistant's response to the user's question shown below. You will be provided with the current user question, a reference answer, and the assistant's response and reasoning process.

When evaluating the assistant's response, you first need to compare it with the reference answer and identify any errors or inaccuracies in the assistant's response.

Then, consider whether the assistant's response is helpful, relevant, and concise.

Helpful: The response correctly addresses the user's question or follows the request. If the user's question is ambiguous or could be interpreted in multiple ways, a more helpful and appropriate approach is to ask the user for clarification or more information, rather than answering based on assumptions.

Relevant: Every part of the response is closely related to or appropriately corresponds to the question asked.

Concise: The response is clear and straightforward, not verbose, wordy, or containing unnecessary information.

Then, if applicable, also consider the creativity and novelty in the assistant's response. Finally, identify any important information missing from the assistant's response that would help respond to the user's question, especially content present in the reference answer.

Your evaluation should comprehensively consider the following factors: compared with the reference answer, assess the assistant's response in terms of correctness, helpfulness, relevance, accuracy, depth, creativity, and level of detail.

First, provide a detailed evaluation note, focusing on analyzing how well the assistant's response matches the reference answer, or whether it is superior to the reference answer in certain aspects. Try to be as objective as possible.

After completing the note, strictly follow the format below to rate the assistant's response on a scale of 1 to 10:

"Score: [[score]]", for example: "Score: [[5]]".

[User question]

{{ data.question }}

[Reference answer]

{{ data.gt }}

[Assistant response]

{{ response.content }}

[Model inference process]

{{ response.reasoning_content }}

Concatenation result (the template with the sample values filled in):

Act as a fair judge to evaluate the quality of the AI assistant's response to the user's question shown below. You will be provided with the current user question, a reference answer, and the assistant's response and reasoning process.

When evaluating the assistant's response, you first need to compare it with the reference answer and identify any errors or inaccuracies in the assistant's response.

Then, consider whether the assistant's response is helpful, relevant, and concise.

Helpful: The response correctly addresses the user's question or follows the request. If the user's question is ambiguous or could be interpreted in multiple ways, a more helpful and appropriate approach is to ask the user for clarification or more information, rather than answering based on assumptions.

Relevant: Every part of the response is closely related to or appropriately corresponds to the question asked.

Concise: The response is clear and straightforward, not verbose, wordy, or containing unnecessary information.

Then, if applicable, also consider the creativity and novelty in the assistant's response. Finally, identify any important information missing from the assistant's response that would help respond to the user's question, especially content present in the reference answer.

Your evaluation should comprehensively consider the following factors: compared with the reference answer, assess the assistant's response in terms of correctness, helpfulness, relevance, accuracy, depth, creativity, and level of detail.

First, provide a detailed evaluation note, focusing on analyzing how well the assistant's response matches the reference answer, or whether it is superior to the reference answer in certain aspects. Try to be as objective as possible.

After completing the note, strictly follow the format below to rate the assistant's response on a scale of 1 to 10:

"Score: [[score]]", for example: "Score: [[5]]".

[User question]

What is the core content of Newton's First Law?

[Reference answer]

Every object remains at rest, or in motion at a constant speed in a straight line, unless it is acted upon by a force.

[Assistant response]

Newton's First Law states: An object will remain at rest or in uniform motion in a straight line unless acted upon by an external force.

[Model inference process]

The user is asking about Newton's First Law. First, I need to determine which discipline this belongs to...

Example 2: Multi-Turn Q&A Template Concatenation

Raw data sample

"data": {"messages": [{"role": "system", "content": "You are an intelligent assistant developed by Company A, capable of accurately answering user questions."},{"role": "user", "content": "Hello, could you help explain the general theory of relativity to me?"},{"role": "assistant", "content": "The general theory of relativity was proposed by Einstein, introducing the relationship between the gravitational field and the curvature of spacetime."},{"role": "user", "content": "Then what is special relativity?"},{"role": "assistant", "content": "When an object moves at speeds approaching the speed of light, time, space, mass, and energy all exhibit laws entirely different from everyday experience, and the speed of light is the ultimate speed limit for all objects in the universe."}]}

Sample of response from the model under evaluation

"response": {"content": "Special relativity primarily studies the laws of motion in inertial frames, including time dilation and length contraction.","reasoning_content": "The user is asking about special relativity, so the core content should be explained. Common expressions include the nature of spacetime under high-speed motion, such as time dilation and length contraction."}

Scoring prompt and concatenation result

Scoring prompt (template):

Please act as a fair judge to evaluate the quality of the AI assistant's response to the user's question shown below. You will be provided with the chat history between the assistant and the user, the current user question, a reference answer, and the assistant's response.

When evaluating the assistant's response, you first need to compare it with the reference answer and identify any errors or inaccuracies in the assistant's response. Additionally, check whether the assistant's response aligns with the information provided in the chat history, as well as how closely it matches the reference answer.

Then, evaluate whether the assistant's response is helpful, relevant, and concise.

Helpful: The response correctly addresses the user's current question or follows the request, while providing appropriate replies based on the chat context (if applicable). If the user's question is ambiguous or could be interpreted in multiple ways, a more helpful and appropriate approach is to ask the user for clarification or more information, rather than answering based on assumptions.

Relevant: Every part of the response is closely related to or appropriately addresses the question asked, considering both the current question and the relevant contextual information from the chat history.

Concise: The response is clear and straightforward, not verbose, wordy, or containing unnecessary information.

Then, if applicable, also consider the creativity and novelty in the assistant's response. Finally, identify any important information missing from the assistant's response that would help respond to the user's question, especially content present in the reference answer or information from the chat history that should have been referenced but was not mentioned.

Your evaluation should comprehensively consider the following factors: compared with the reference answer, assess the assistant's response in terms of correctness, helpfulness, relevance, accuracy, depth, creativity, and level of detail.

First, provide a detailed evaluation note, focusing on analyzing how well the assistant's response matches the reference answer, or whether it is superior to the reference answer in certain aspects. Try to be as objective as possible.

After completing the note, strictly follow the format below to rate the assistant's response on a scale of 1 to 10:

"Score: [[score]]", for example: "Score: [[5]]" or "Score: [[8]]".

[Chat history]

{{ data.history }}

[User question]

{{ data.question }}

[Reference answer]

{{ data.gt }}

[Assistant response]

{{ response.content }}

Concatenation result (the template with the sample values filled in):

Please act as a fair judge to evaluate the quality of the AI assistant's response to the user's question shown below. You will be provided with the chat history between the assistant and the user, the current user question, a reference answer, and the assistant's response.

When evaluating the assistant's response, you first need to compare it with the reference answer and identify any errors or inaccuracies in the assistant's response. Additionally, check whether the assistant's response aligns with the information provided in the chat history, as well as how closely it matches the reference answer.

Then, evaluate whether the assistant's response is helpful, relevant, and concise.

Helpful: The response correctly addresses the user's current question or follows the request, while providing appropriate replies based on the chat context (if applicable). If the user's question is ambiguous or could be interpreted in multiple ways, a more helpful and appropriate approach is to ask the user for clarification or more information, rather than answering based on assumptions.

Relevant: Every part of the response is closely related to or appropriately addresses the question asked, considering both the current question and the relevant contextual information from the chat history.

Concise: The response is clear and straightforward, not verbose, wordy, or containing unnecessary information.

Then, if applicable, also consider the creativity and novelty in the assistant's response. Finally, identify any important information missing from the assistant's response that would help respond to the user's question, especially content present in the reference answer or information from the chat history that should have been referenced but was not mentioned.

Your evaluation should comprehensively consider the following factors: compared with the reference answer, assess the assistant's response in terms of correctness, helpfulness, relevance, accuracy, depth, creativity, and level of detail.

First, provide a detailed evaluation note, focusing on analyzing how well the assistant's response matches the reference answer, or whether it is superior to the reference answer in certain aspects. Try to be as objective as possible.

After completing the note, strictly follow the format below to rate the assistant's response on a scale of 1 to 10:

"Score: [[score]]", for example: "Score: [[5]]" or "Score: [[8]]".

[Chat history]

[SYSTEM] You are an intelligent assistant developed by Company A, capable of accurately answering user questions.

[USER] Hello, could you help explain the general theory of relativity to me?

[BOT] The general theory of relativity was proposed by Einstein, introducing the relationship between the gravitational field and the curvature of spacetime.

[User question]

Then what is special relativity?

[Reference answer]

When an object moves at speeds approaching the speed of light, time, space, mass, and energy all exhibit laws entirely different from everyday experience, and the speed of light is the ultimate speed limit for all objects in the universe.

[Assistant response]

Special relativity primarily studies the laws of motion in inertial frames, including time dilation and length contraction.

II. Specifications for Preprocessing/Postprocessing Script Writing

When you need to customize the preprocessing logic for the evaluation data and the response of the model under evaluation, or the postprocessing logic for the judge model's output, you must provide a Python script that complies with the following specifications. The evaluation system imports your script as a module and executes the agreed-upon functions in it.

1. Function Naming and API Conventions

A preprocessing script must define a function named preprocess, and a postprocessing script must define a function named postprocess. The evaluation module calls the functions by these names.
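Before uploading a script, it can help to check locally that the expected function name is present and callable. The sketch below is a rough stand-in for that check and does not reproduce the platform's loader, which this document does not describe. The helpers load_script and call_by_name and the sample script are hypothetical.

```python
import types

# A sample user script held as text; in practice this would be the file you upload.
SCRIPT = '''
def preprocess(data: dict, resp: dict, **kwargs):
    return len(resp.get("content") or "")
'''


def load_script(source: str, name: str = "user_script") -> types.ModuleType:
    """Execute trusted script text inside a fresh module object."""
    module = types.ModuleType(name)
    exec(compile(source, f"<{name}>", "exec"), module.__dict__)
    return module


def call_by_name(module: types.ModuleType, func_name: str, *args, **kwargs):
    """Look up the agreed-upon function by name and call it."""
    func = getattr(module, func_name, None)
    if not callable(func):
        raise AttributeError(f"script must define a function named {func_name!r}")
    return func(*args, **kwargs)


module = load_script(SCRIPT)
result = call_by_name(module, "preprocess", {"messages": []}, {"content": "The answer is 24"})
print(result)  # 16
```

Calling call_by_name(module, "postprocess") on this sample raises AttributeError, which is the kind of naming mistake the check is meant to surface early.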

2. Preprocessing Function

The preprocessing function is typically used to process the raw data set and the response of the model under evaluation in order to construct the final prompt that is input to the judge model. You need to implement this function.

Function name: preprocess

Feature: Process the response of the model under evaluation or the raw data set, ultimately returning a value for use by subsequent evaluation modules or returning no value.

Input parameters: data, resp, **kwargs

Output parameter: processing result, which serves as the result of the current processing node [displayed in the processing results].

Content of the data structure: Users can add new fields to the structure for subsequent reference via the dictionary.

data [dict type]: Automatically parsed based on the content of the evaluation set, containing the messages field in OpenAI's chat format. When data is prepared according to the evaluation format, any other fields will be included in this structure, such as the ref_answer field.

Field Name | Included or Not | Field Description | Field Example |

messages | Yes | OpenAI chat format | "messages": [{"role": "user", "content": "Hello"}, {"role": "assistant", "content": "Hello, how can I help you"}, {"role": "user", "content": "What is the sum of 12 and 12"}, {"role": "assistant", "content": "24"}] |

ref_answer | Parsed from the user data set; None if the original data set does not contain this field. | Reference answer | "The answer is 24" |

gt | If the last message in the messages field has the role of assistant, then its content is taken as this field. | Standard answer | "24" |

question | Parse the content of the last message where the role is user in the messages field as the content of this field. | User question | "What is the sum of 12 and 12" |

history | The remaining content after eliminating the gt and question fields from messages, rendered as a string according to the Jinja template of the platform. | Chat history | "[USER] Hello [BOT] Hello, how can I help you" |

Other Fields | Other fields in user data. Users can also add them to processing scripts. | Custom fields | str, int, float, dict, and other JSON-serializable content |

resp [dict type]: The parsed result of the LLM's response, containing the content field. If the inference framework supports separate_reasoning, it includes the reasoning_content field. Other possible fields include tool_calls. In scoring prompt templates, this structure is referenced as response.

Field Name | Included or Not | Field Description | Field Example |

content | Yes | Inference result of the LLM | "The answer is 24" |

reasoning_content | Included if the inference framework supports separate display and return of the chain of thought (CoT); otherwise, the value is None. | Content of LLM inference CoT | "Hmm, the user is asking 'What is the sum of 12 and 12'. This seems like a basic question, but someone just starting to learn math might need a detailed explanation..." |

tool_calls | Included if tool calls are executed and this field is returned; otherwise, the value is None. | Content of the LLM tool call | [{"id":"call_id","type":"function","function":{"name":"get_current_weather","arguments":"{\"location\": \"San Francisco, USA\", \"format\": \"celsius\"}"}}] |

Other Fields | Other fields returned by the model. Users can also add them to processing scripts. | Custom fields | str, int, float, dict, and other JSON-serializable content |
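In the tool_calls example above, the arguments value is itself a JSON-encoded string, so a script has to decode it before inspecting individual arguments. The following preprocessing sketch shows one way to do that; the first_tool_args field name is a hypothetical addition, and the handling of missing or malformed values is an assumption rather than platform behavior.

```python
import json
from typing import Optional


def preprocess(data: dict, resp: dict, **kwargs) -> Optional[str]:
    """Return the name of the first tool called and store its decoded arguments on resp."""
    tool_calls = resp.get("tool_calls") or []  # covers a missing field and a None value
    if not tool_calls:
        return None
    function = tool_calls[0].get("function", {})
    try:
        # Decode the JSON string into a dictionary for later reference.
        resp["first_tool_args"] = json.loads(function.get("arguments") or "{}")
    except json.JSONDecodeError:
        resp["first_tool_args"] = None
    return function.get("name")


sample_resp = {
    "content": "",
    "tool_calls": [
        {
            "id": "call_id",
            "type": "function",
            "function": {
                "name": "get_current_weather",
                "arguments": json.dumps({"location": "San Francisco, USA", "format": "celsius"}),
            },
        }
    ],
}
print(preprocess({}, sample_resp))  # get_current_weather
```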

Function signature:

def preprocess(data: dict, resp: dict, **kwargs) -> bool | int | str | float | None:
    """
    :param data: Dict, a dictionary containing the following key information:
        - 'messages': Multi-turn chat history.
        - 'ref_answer': (Optional) Reference answer.
        - And all other user-defined fields.
    :param resp: Dict, the inference result of the LLM, containing the following:
        - content: Model-generated response.
        - reasoning_content: Inference process of the model-generated response (if any).
        - tool_calls: List of tools called by the model (if any).
        - And all other user-defined fields.
    :param kwargs: Extension fields of the platform, which may not be provided during preprocessing.
    :return: Preprocessing result, which can be referenced in the Jinja2 template for judge model evaluation.
    """
    # --- Implement your logic here. ---
    pass

Note:

1. The preprocessing function must accept the parameters data, resp, and **kwargs. The **kwargs parameter is reserved for future platform extensions that provide more operable data items.

2. data and resp support accessing or adding fields via dictionary/object methods.

3. To prevent the evaluation process from being interrupted due to unexpected data formats, it is recommended to incorporate basic exception handling in the script.

def preprocess(data: dict, resp: dict, **kwargs) -> bool | int | str | float | None:
    try:
        pass  # Replace with your processing logic.
    except Exception as e:
        print(f"[Error in the preprocessing script]: {e}")
        return 1  # Return the default value as needed.

Usage examples:

Example 1: Determine whether the model's response contains a CoT.

def preprocess(data: dict, resp: dict, **kwargs) -> bool:
    # Return True if the model response contains non-empty reasoning_content.
    # .get() covers both a missing field and a None value.
    return bool(resp.get('reasoning_content'))

Example 2: Remove the CoT from the content.

import re

default_cot_pairs = [('<think>', '</think>'), ('<Think>', '</Think>')]


def remove_cot(text: str, cot_pairs=None) -> str:
    if cot_pairs is None:
        cot_pairs = default_cot_pairs
    ret = text
    for t0, t1 in cot_pairs:
        # Escape the tags so that they match literally; DOTALL lets "." span line breaks.
        ret = re.sub(f"{re.escape(t0)}.*?{re.escape(t1)}", '', ret, flags=re.DOTALL)
    return ret.strip()


def preprocess(data: dict, resp: dict, **kwargs) -> bool | int | str | float | None:
    # Store the cleaned content in a new field so that it can be referenced later.
    field = 'clean'
    resp[field] = remove_cot(resp['content'])
    return resp[field]

Example 3: Perform data processing only, without returning a value.

def preprocess(data: dict, resp: dict, **kwargs) -> None:
    # Example: Add the content of the model response to the data.
    if resp['content']:
        data['resp'] = resp['content']
    # Does not affect the evaluation judgment, and returns None.
    return None

3. Postprocessing Function

The postprocessing function is typically used to perform postprocessing on the output of the judge model to obtain the final scoring results.

Function name: postprocess

Feature: Process the response of the judge model.

Input parameters [required]: judge_reqs, judge_resps, judge_models, data, resp, **kwargs

Output parameter: processing result, which serves as the result of the current processing node [displayed in the processing results].

Content of the data structure: Users can add new fields to the structure for subsequent reference via the dictionary.

judge_reqs [list[dict] type]: A list to which the inference requests for the judge models are added sequentially, in the processing order defined in the evaluation metric configuration. Each item is generated by parsing the user's prompt template and inference hyperparameter configuration. By default, it contains the messages field, has stream enabled, and includes the separate_reasoning field.

Field Name | Included or Not | Field Description | Field Example |

messages | Yes | OpenAI chat format. | [{"role": "system", "content": "You are a helpful assistant. 0827-adjustment" },{"role": "user","content": "You are a judge. Please score the response.\n\n\n[Historical information and question]\n\n<|im_start|>user\nHello<|im_end|>\n\n\n<|im_start|>assistant\nHello, how can I help you?<|im_end|>\n\n\n<|im_start|>user\nWhat is the sum of 18 and 18<|im_end|>\n\n\n\n[Answer from the to-be-evaluated model]\n{'content': '18 + 18 = 36'}\n\n[Reference answer]\nThe answer is 24\n\n\nProvide your score, with a maximum of 5 and a minimum of 1."}] |

Other Fields | Other fields returned by the model. Users can also add them to processing scripts. | Custom fields | str, int, float, dict, and other JSON-serializable content |

judge_models [list[dict] type]: A list where each item contains the basic configuration of a judge model, added sequentially according to the processing order defined in the evaluation metric configuration.

Field Name | Included or Not | Field Description | Field Example |

name | Yes | Name of the judge model | "deepseek" |

judge_template_content | Yes | Scoring template for the judge model | """Scoring template for the judge, for example: You are a judge. Please score the response. [Historical information and question] {% for message in data.messages %} {{'<|im_start|>' + message['role'] + '\n' + message['content'] + '<|im_end|>' + '\n'}} {% endfor %} [Answer from the to-be-evaluated model] {{ response.content }} [Reference answer] {{ data.ref_answer }} Provide your score, with a maximum of {{ max_score }} and a minimum of {{ min_score }}. """ |

generation_params | Not necessarily, parsed based on user configuration. | Inference parameters of the judge model | {"temperature": 0.8, "top_p": 0.85} |

system_prompt | Not necessarily, parsed based on user configuration. | System prompt for judge model inference | "You are a helpful assistant. " |

data [dict type]: The data structure is the same as the data structure in preprocessing.

judge_resps [list[dict] type]: A list where the inference results of the judge models are added sequentially according to the processing order defined in the evaluation metric configuration. The structure of each item in the list is the same as the resp structure in preprocessing.
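Because judge_models and judge_resps are filled in the same processing order, items at the same index belong to the same judge. The sketch below pairs them to report one score per judge. It assumes each reply carries its score in the [[n]] form used by the prompts in Section I; the "n/a" placeholder and the second judge name are hypothetical.

```python
import re
from typing import Optional

SCORE_PATTERN = re.compile(r"\[\[(\d+)\]\]")  # matches scores such as [[8]]


def postprocess(judge_reqs, judge_resps, judge_models, data, resp, **kwargs) -> Optional[str]:
    """Return a summary such as 'deepseek=8', pairing each judge name with its score."""
    parts = []
    for model, judge_resp in zip(judge_models, judge_resps):
        matches = SCORE_PATTERN.findall(judge_resp.get("content") or "")
        score = matches[-1] if matches else "n/a"  # keep the last score in the reply
        parts.append(f"{model.get('name', 'unknown')}={score}")
    return ", ".join(parts) if parts else None


judge_models = [{"name": "deepseek"}, {"name": "judge-b"}]
judge_resps = [{"content": "Good answer. Score: [[8]]"}, {"content": "No score given."}]
print(postprocess([], judge_resps, judge_models, {}, {}))  # deepseek=8, judge-b=n/a
```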

Function signature:

def postprocess(
    judge_reqs: list[dict],
    judge_resps: list[dict],
    judge_models: list[dict],
    data: dict,
    resp: dict,
    **kwargs,
) -> bool | int | str | float | None:
    """
    :param judge_reqs: Scoring request data for the judge model.
    :param judge_resps: Inference results of the judge model.
    :param judge_models: Relevant configuration for the judge model.
    :return: The result of the custom postprocessing logic, of type bool | int | str | float | None, used for final evaluation result judgment, display, or statistics to facilitate metric calculation.
    """
    # --- Implement your logic here. ---
    pass

Note:

The postprocessing function must accept the parameters judge_reqs, judge_resps, judge_models, data, resp, and **kwargs. The **kwargs parameter is reserved for future platform extensions that provide more operable data items. By default, the judge_req, judge_resp, and judge_model of the last judge model node are injected into kwargs and can be accessed as follows:

def postprocess(
    judge_reqs: list[dict],
    judge_resps: list[dict],
    judge_models: list[dict],
    data: dict,
    resp: dict,
    **kwargs,
) -> bool | int | str | float | None:
    judge_req = kwargs.get('judge_req')
    judge_resp = kwargs.get('judge_resp')
    judge_model = kwargs.get('judge_model')

Usage examples:

Example 1: Calculate the average score from all judges.

import re


def postprocess(judge_reqs, judge_resps, judge_models, data, resp, **kwargs):
    scores = []
    for jr in judge_resps:
        try:
            # Assume the judge returns "The answer scores 4 points" or "Score: 4".
            content = jr['content']
            # Simple example: take the last integer in the reply; adjust according to the actual format.
            score = int(re.findall(r'\d+', content)[-1])
            scores.append(score)
        except (KeyError, TypeError, IndexError):
            # Skip replies that are missing, empty, or contain no number.
            continue
    if scores:
        return round(sum(scores) / len(scores), 2)
    return None

Example 2: Determine whether the majority of judges consider the answer correct.

def postprocess(judge_reqs, judge_resps, judge_models, data, resp, **kwargs):
    positive_count = 0
    for jr in judge_resps:
        content = jr['content']
        # Simple keyword check; adjust according to the actual output format of the judges.
        # Substring checks are crude: for example, "incorrect" also contains "correct".
        if "good" in content or "correct" in content or "4" in content or "5" in content:
            positive_count += 1
    return positive_count > len(judge_resps) / 2  # Return True if more than half consider it correct.

Example 3: Perform data organization only, and return None.

def postprocess(judge_reqs, judge_resps, judge_models, data, resp, **kwargs):
    # Data storage, structure transformation, and so on, can be performed here without affecting the final evaluation.
    return None

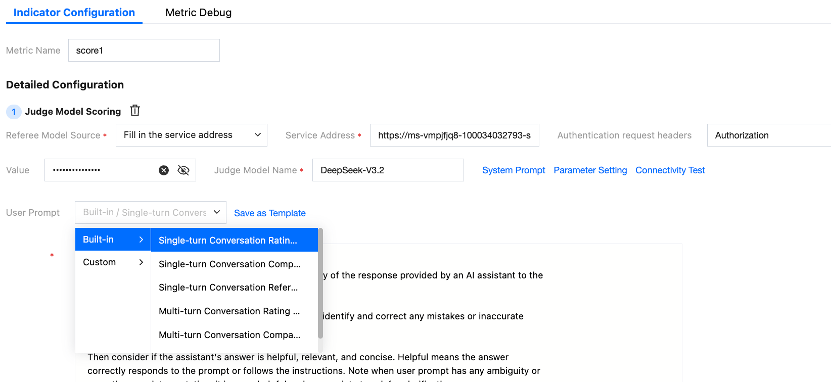

III. Platform Built-in Scoring Prompt Template and Recommended Postprocessing Scripts

Overview: To improve user configuration efficiency, the platform has built-in scoring prompt templates for single-turn and multi-turn chats, along with corresponding postprocessing methods.

1. Scoring Method for Single-Turn Chats

This method directly scores the responses of the model under evaluation, with a final score ranging from 1 to 10. If no custom postprocessing script is defined, the platform applies default postprocessing that extracts the score as the final result.
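The platform's default extraction logic is not shown in this document. For users who prefer an explicit script, the following is a minimal sketch of score extraction, assuming the judge follows the "Score: [[n]]" format from the prompts in Section I. The helper name extract_score and the range check are assumptions.

```python
import re
from typing import Optional


def extract_score(text: str, low: int = 1, high: int = 10) -> Optional[int]:
    """Return the last 'Score: [[n]]' value in the text, or None if absent or out of range."""
    matches = re.findall(r"Score:\s*\[\[(\d+)\]\]", text)
    if not matches:
        return None
    score = int(matches[-1])  # the last match is treated as the final verdict
    return score if low <= score <= high else None


def postprocess(judge_reqs, judge_resps, judge_models, data, resp, **kwargs) -> Optional[int]:
    # Use the reply of the last judge model; return None when no reply is available.
    if not judge_resps:
        return None
    return extract_score(judge_resps[-1].get("content") or "")


print(extract_score("The response is accurate and concise. Score: [[7]]"))  # 7
```

Taking the last match keeps a judge that quotes the format instruction (for example "Score: [[score]]") from confusing the extraction, since only numeric values match.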

2. Comparative Scoring Method for Single-Turn Chats

Based on the original question, the judge model scores by comparing the reference answer (the ref_answer field in the data set; users may modify this to other custom fields) with the response from the model under evaluation. The judge model outputs whether A or B is better, worse, or equal. The postprocessing script then extracts this result and maps it to the corresponding score.

The corresponding postprocessing script is provided below for user reference:

import re
from typing import Optional

ans_map = {
    "A>>B": 1,
    "A>B": 2,
    "A=B": 3,
    "B>A": 4,
    "B>>A": 5,
}


def parse_last_comparison(text: str) -> Optional[str]:
    # Match verdicts such as [[A>B]] and keep the last one in the text.
    pattern = r"\[\[(A>>B|A>B|A=B|B>A|B>>A)\]\]"
    matches = re.findall(pattern, text)
    return matches[-1] if matches else None


def postprocess(
    judge_reqs: list[dict],
    judge_resps: list[dict],
    judge_models: list[dict],
    data: dict,
    resp: dict,
    **kwargs,
) -> Optional[int]:
    # Map the verdict of the last judge model to a score; None if no verdict is found.
    last_comparison = parse_last_comparison(judge_resps[-1]['content'])
    return ans_map.get(last_comparison)

3. Reference-based Scoring Method for Single-Turn Chats

This method scores the responses of the model under evaluation by referring to the ref_answer field, with a final score ranging from 1 to 10. If no custom postprocessing script is defined, the platform applies default postprocessing that extracts the score as the final result.

4. Scoring Method for Multi-Turn Chats

This method scores the responses of the model under evaluation (applicable to usage scenarios requiring context), with a final score ranging from 1 to 10. If no custom postprocessing script is defined, the platform applies default postprocessing that extracts the score as the final result.

5. Comparative Scoring Method for Multi-Turn Chats

This method scores the responses of the model under evaluation (applicable to usage scenarios requiring context). The judge model outputs whether A or B is better, worse, or equal. The postprocessing script then extracts this result and maps it to the corresponding score.

The corresponding postprocessing script is provided below for user reference:

import re
from typing import Optional

ans_map = {
    "A>>B": 1,
    "A>B": 2,
    "A=B": 3,
    "B>A": 4,
    "B>>A": 5,
}


def parse_last_comparison(text: str) -> Optional[str]:
    # Match verdicts such as [[A>B]] and keep the last one in the text.
    pattern = r"\[\[(A>>B|A>B|A=B|B>A|B>>A)\]\]"
    matches = re.findall(pattern, text)
    return matches[-1] if matches else None


def postprocess(
    judge_reqs: list[dict],
    judge_resps: list[dict],
    judge_models: list[dict],
    data: dict,
    resp: dict,
    **kwargs,
) -> Optional[int]:
    # Map the verdict of the last judge model to a score; None if no verdict is found.
    last_comparison = parse_last_comparison(judge_resps[-1]['content'])
    return ans_map.get(last_comparison)

6. Reference-based Scoring Method for Multi-Turn Chats

This method scores the responses of the model under evaluation by referring to the ref_answer field (applicable to usage scenarios requiring context), with a final score ranging from 1 to 10. If no custom postprocessing script is defined, the platform applies default postprocessing that extracts the score as the final result.
