Currently, the basic running environment for DLC's PySpark uses Python 3.9.2.

Python dependencies for Spark jobs can be specified using either of the following two methods:

1. Use --py-files to specify dependency modules and files.

2. Use --archives to specify a virtual environment.

If your modules or files are custom functions implemented in pure Python, specifying them with --py-files is recommended.

The --archives option lets you package and use an entire development and test environment. This method supports dependencies that require C compilation during installation and is recommended when the environment is more complex.

Note:

The two methods mentioned above can be used simultaneously based on your needs.
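One rough way to choose between the two options is to check whether an installed dependency tree contains compiled extension files. The sketch below is a heuristic under stated assumptions: the suffix list and the package name `mypkg` are illustrative, and versioned shared libraries such as `libfoo.so.1` are not detected.

```python
import tempfile
from pathlib import Path

# Suffixes that usually indicate a compiled (non-pure-Python) extension.
# This list is an assumption for illustration, not an exhaustive rule.
COMPILED_SUFFIXES = {".so", ".pyd", ".dylib"}


def find_compiled_files(dep_dir):
    """Return sorted relative paths of compiled extension files under dep_dir."""
    root = Path(dep_dir)
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*")
        if p.is_file() and p.suffix in COMPILED_SUFFIXES
    )


def suggest_option(dep_dir):
    """Suggest --py-files for pure-Python trees and --archives otherwise."""
    return "--archives" if find_compiled_files(dep_dir) else "--py-files"


# Demo on a throwaway directory: first pure Python, then with a compiled file added.
with tempfile.TemporaryDirectory() as tmp:
    pkg = Path(tmp) / "mypkg"  # hypothetical package name
    pkg.mkdir()
    (pkg / "__init__.py").write_text("")
    pure_choice = suggest_option(tmp)
    (pkg / "_fast.cpython-39-x86_64-linux-gnu.so").write_bytes(b"")
    compiled_choice = suggest_option(tmp)

print(pure_choice, compiled_choice)
```

A tree that passes this check can still depend on system libraries at run time, so treat the result as a hint rather than a guarantee.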

Using --py-files to Specify Dependency Packages

This method is suitable for modules or files implemented in pure Python, without any C dependencies.

Step 1: Packaging Modules/Files

For external PyPI packages, use pip to install the dependencies into a local directory and package them. The dependencies must be implemented in pure Python and must not depend on any C libraries.

pip install -i https://mirrors.tencent.com/pypi/simple/ <packages...> -t dep

cd dep

zip -r ../dep.zip .
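The packaging commands above work because Python can import pure-Python modules directly from a zip archive placed on the module search path. The following is a minimal sketch of that mechanism using only the standard library; the module name `dlc_demo_functions` is hypothetical, and the sketch only mimics locally what happens once the archive is on the path.

```python
import importlib
import sys
import tempfile
import zipfile
from pathlib import Path


def zip_directory(src_dir, zip_path):
    """Zip the contents of src_dir with entries at the archive root,
    the same layout produced by `cd dep` followed by `zip -r ../dep.zip .`."""
    src = Path(src_dir)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(src.rglob("*")):
            if path.is_file():
                zf.write(path, path.relative_to(src).as_posix())
    return zip_path


with tempfile.TemporaryDirectory() as tmp:
    dep = Path(tmp) / "dep"
    dep.mkdir()
    # Hypothetical single-file module standing in for functions.py.
    (dep / "dlc_demo_functions.py").write_text(
        "def double(x):\n    return 2 * x\n"
    )
    archive = zip_directory(dep, str(Path(tmp) / "dep.zip"))

    # Adding the zip to sys.path is enough for pure-Python imports.
    sys.path.insert(0, archive)
    try:
        module = importlib.import_module("dlc_demo_functions")
        result = module.double(21)
    finally:
        sys.path.remove(archive)

print(result)
```

Compiled extension modules cannot be loaded from a zip archive this way, which is why this method is limited to pure-Python dependencies.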

Single-file modules (e.g., functions.py) and custom Python modules can be packaged in the same way. Custom Python modules must follow the standard package structure defined in Python's official requirements. For more details, see the official Python Packaging User Guide.

Step 2: Importing the Packaged Module

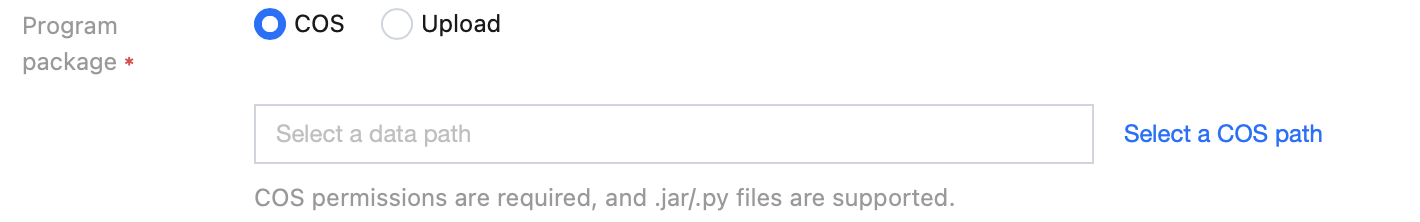

In the Data Lake DLC console, create a job in the Data Job module. Use the --py-files parameter to import the packaged dep.zip file, which can be uploaded either through COS or directly from your local device.

Using a Virtual Environment

A virtual environment resolves issues with Python dependency packages that depend on C libraries. You can compile and install dependency packages into the virtual environment as needed, and then upload the entire environment.

Because C-related dependencies must be compiled during installation, it is recommended to package the environment on an x86 machine running Debian 11 (Bullseye) with Python 3.9.2.
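Before packaging, it can help to confirm that the build host matches these recommendations. The sketch below checks only the Python minor version and the CPU architecture. It does not check the operating system release, and the set of accepted machine names is an assumption.

```python
import platform
import sys

RECOMMENDED_PYTHON = (3, 9)                  # the runtime uses Python 3.9.2
RECOMMENDED_MACHINES = {"x86_64", "amd64"}   # assumed spellings of x86 (64-bit)


def packaging_warnings(version_info, machine):
    """Return warnings when the build host differs from the recommended setup."""
    warnings = []
    if tuple(version_info[:2]) != RECOMMENDED_PYTHON:
        warnings.append(
            f"Python {version_info[0]}.{version_info[1]} differs from the "
            f"recommended {RECOMMENDED_PYTHON[0]}.{RECOMMENDED_PYTHON[1]}"
        )
    if machine.lower() not in RECOMMENDED_MACHINES:
        warnings.append(f"architecture {machine!r} is not x86")
    return warnings


# Report on the machine running this script.
for message in packaging_warnings(sys.version_info, platform.machine()):
    print("warning:", message)
```

An empty result means neither check raised a concern. It does not prove that every compiled dependency will load in the DLC runtime.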

Step 1: Packaging the Virtual Environment

There are three ways to package a virtual environment: with Venv, with Conda, or with the provided packaging script.

1. Packaging with Venv.

python3 -m venv pyvenv

source pyvenv/bin/activate

(pyvenv)> pip3 install -i https://mirrors.tencent.com/pypi/simple/ <packages...>

(pyvenv)> deactivate

tar czvf pyvenv.tar.gz pyvenv/

2. Packaging with Conda.

conda create -y -n pyspark_env conda-pack <packages...> python=<3.9.x>

conda activate pyspark_env

conda pack -f -o pyspark_env.tar.gz

After packaging is completed, upload the packaged virtual environment file (pyvenv.tar.gz for Venv, or pyspark_env.tar.gz for Conda) to COS.

Note:

Use the tar command for packaging.

3. Packaging with the provided script. The script requires Docker to be installed and currently supports Linux and macOS environments.

bash pyspark_env_builder.sh -h

Usage:

pyspark-env-builder.sh [-r] [-n] [-o] [-h]

-r ARG, the requirements for python dependency.

-n ARG, the name for the virtual environment.

-o ARG, the output directory. [default:current directory]

-h, print the help info.

| Parameter | Description |
|---|---|
| -r | Specifies the location of the requirements.txt file. |
| -n | Specifies the name of the virtual environment (default: py3env). |
| -o | Specifies the local directory to save the virtual environment (default: the current directory). |
| -h | Prints help information. |

For example, if requirement.txt contains the following line:

requests

run:

bash pyspark_env_builder.sh -r requirement.txt -n py3env

After the script finishes, py3env.tar.gz is generated in the current directory. Upload this file to COS.

Step 2: Specifying the Virtual Environment

In the Data Lake DLC console, create a job in the Data Operation module, following the instructions shown in the screenshot below.

1. For the --archives parameter, enter the full path to the virtual environment archive. The text after the # is the name of the decompressed folder.

Note:

The # symbol is used to specify the decompression directory. The decompression directory will affect the configuration of the subsequent running environment parameters.

2. In the --config parameter, specify the running environment settings.

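For the Venv packaging method, configure: spark.pyspark.python = venv/pyvenv/bin/python3 (here venv is the decompression directory given after the #, and pyvenv is the directory name used when packaging)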

For the Conda packaging method, configure: spark.pyspark.python = venv/bin/python3

For the script packaging method, configure: spark.pyspark.python = venv/bin/python3

Note:

Because the Venv and Conda packaging methods differ, the directory structure inside the archive varies. You can decompress the .tar.gz file to check the relative path of the Python interpreter.
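The relationship between the # suffix, the archive layout, and the spark.pyspark.python value can be sketched in Python. This is an illustration under assumptions: the COS path is hypothetical, the demo archives each contain a single empty file standing in for a real environment, and the helper names are not part of any DLC or Spark API.

```python
import io
import tarfile
import tempfile
from pathlib import Path, PurePosixPath


def split_archive_spec(spec):
    """Split 'path#dir' into (path, dir); dir is None when no '#' is given."""
    path, sep, unpack_dir = spec.partition("#")
    return path, (unpack_dir if sep and unpack_dir else None)


def find_python_in_tar(tar_path):
    """Return archive-relative paths that end in bin/python3."""
    with tarfile.open(tar_path, "r:gz") as tf:
        names = [PurePosixPath(name) for name in tf.getnames()]
    return sorted(str(p) for p in names if p.parts[-2:] == ("bin", "python3"))


def pyspark_python_setting(unpack_dir, relative_python):
    """Join the decompression directory and the interpreter's relative path."""
    return str(PurePosixPath(unpack_dir) / relative_python)


def _make_demo_tar(tar_path, member_name):
    """Write a tar.gz holding one empty file that mimics an interpreter path."""
    with tarfile.open(tar_path, "w:gz") as tf:
        info = tarfile.TarInfo(member_name)
        info.size = 0
        tf.addfile(info, io.BytesIO(b""))


with tempfile.TemporaryDirectory() as tmp:
    venv_tar = str(Path(tmp) / "pyvenv.tar.gz")
    conda_tar = str(Path(tmp) / "pyspark_env.tar.gz")
    _make_demo_tar(venv_tar, "pyvenv/bin/python3")  # Venv: top-level folder kept
    _make_demo_tar(conda_tar, "bin/python3")        # Conda: no top-level folder

    # Hypothetical COS location; only the part after '#' matters here.
    _, unpack_dir = split_archive_spec(
        "cosn://example-bucket/envs/pyvenv.tar.gz#venv"
    )
    venv_setting = pyspark_python_setting(
        unpack_dir, find_python_in_tar(venv_tar)[0]
    )
    conda_setting = pyspark_python_setting(
        unpack_dir, find_python_in_tar(conda_tar)[0]
    )

print("spark.pyspark.python =", venv_setting)
print("spark.pyspark.python =", conda_setting)
```

Running `find_python_in_tar` on a real archive before uploading it is a quick way to confirm which relative path to enter in the --config parameter.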