Common Experiment

Feature Overview

Model experimentation is one of the key modules WeData provides for making machine learning processes manageable and experiments reproducible in production. It supports the following core features:

Submitting, storing, managing, and viewing key information about experiments and runs, such as experiment/run name, model file, code package, hyperparameters, environment, datasets/features, training metrics, and creation time.

Comparing key information across experiments and runs.

Version management and association records that make it easy to trace information and locate problems.

WeData's model experimentation module is built on MLflow, the industry's mainstream open-source toolkit. Its managed implementation process, reproducible experiment workflow, and module features are as follows:

Operation Steps

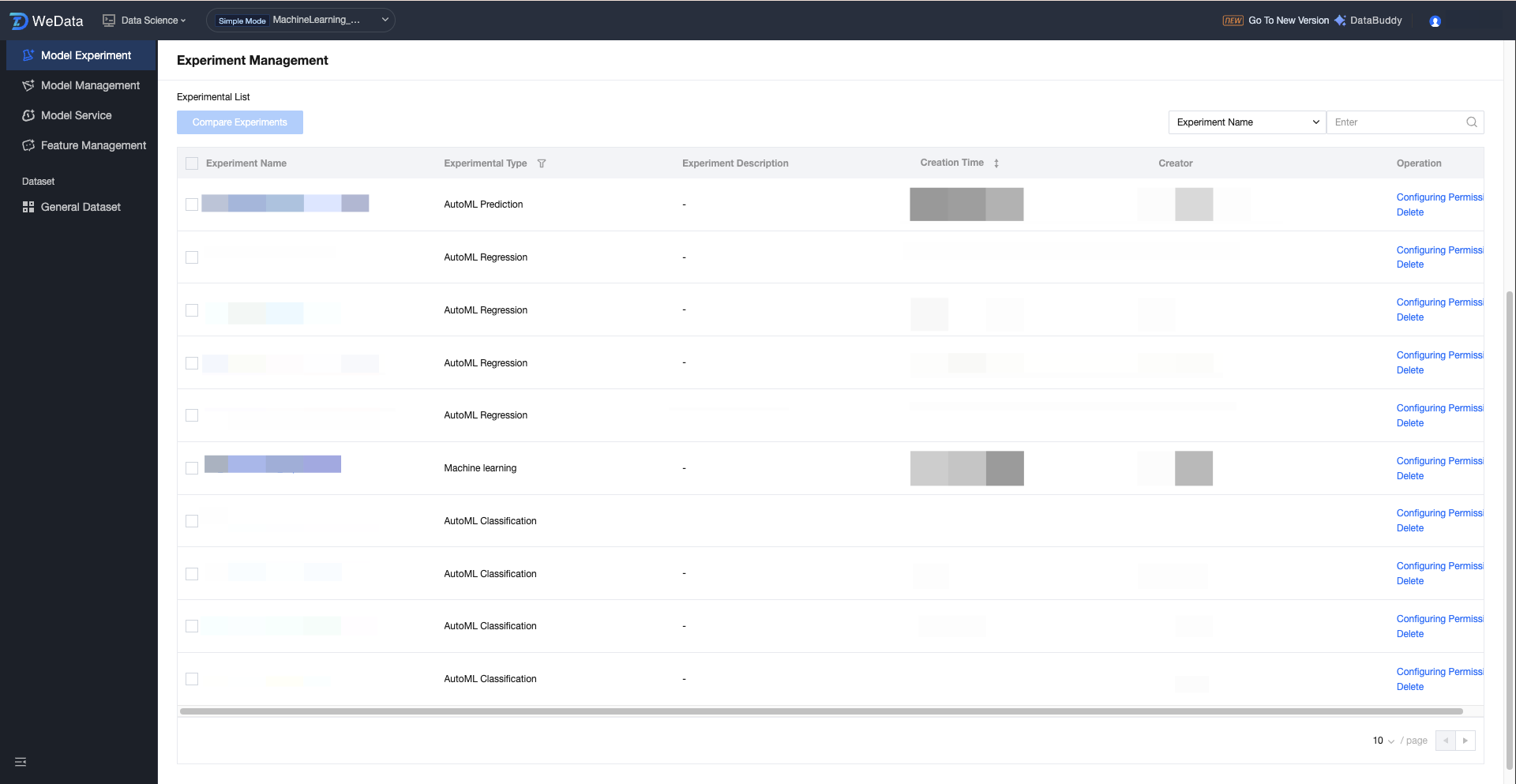

Experiment List

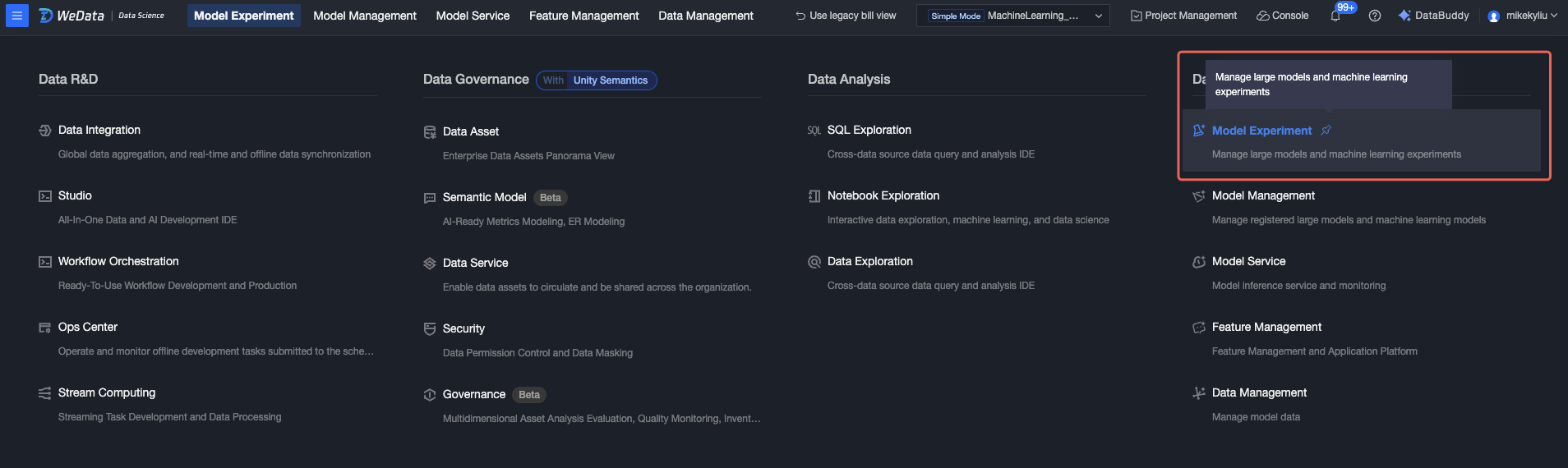

1. Click the "Model Experiment" menu to open the feature.

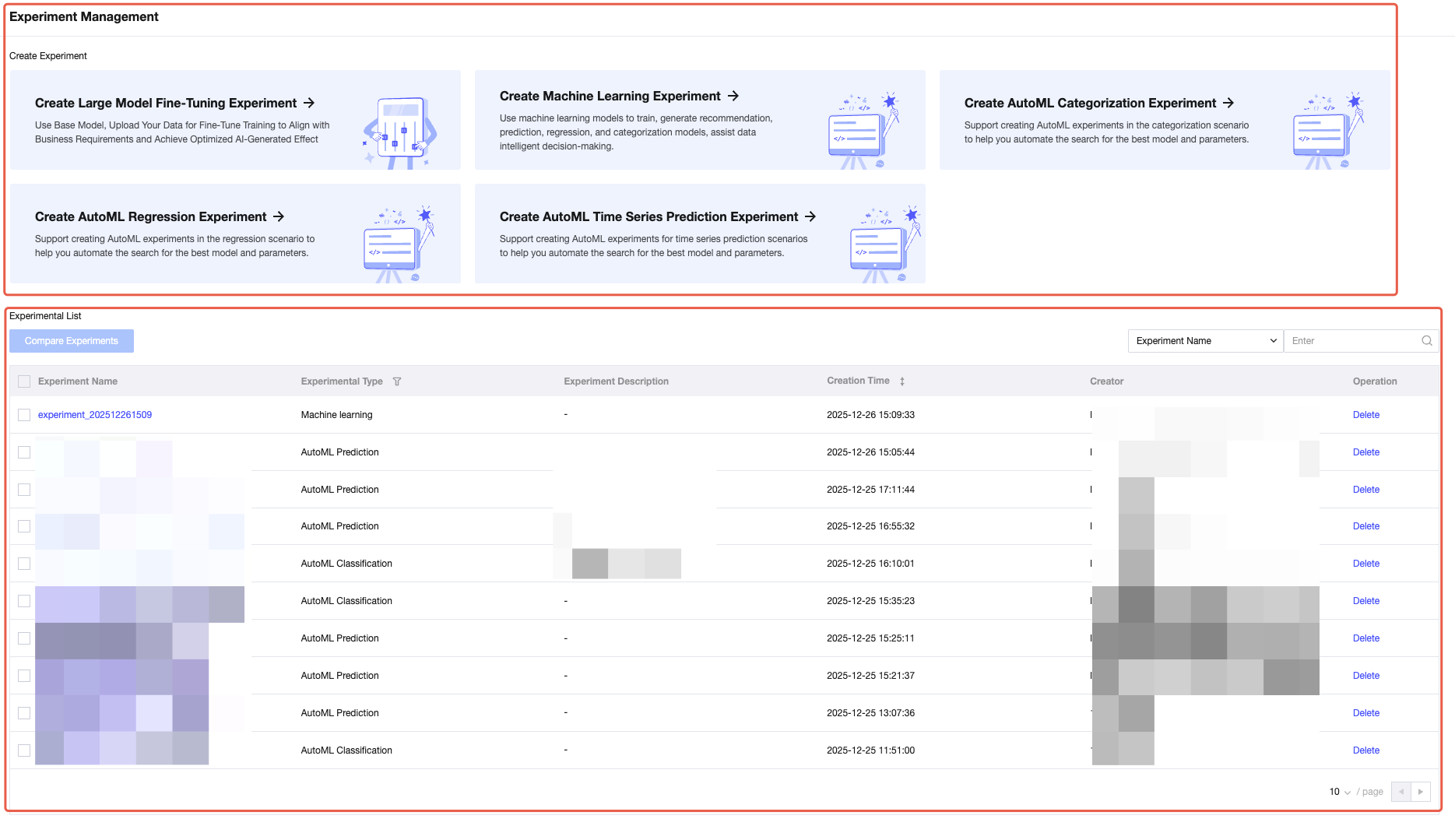

2. The home page displays the option to create an experiment and the experiment list.

Creating an Experiment

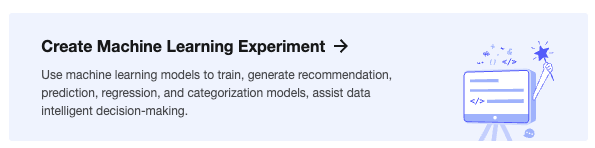

1. Click the Create button on the current page to create a machine learning experiment.

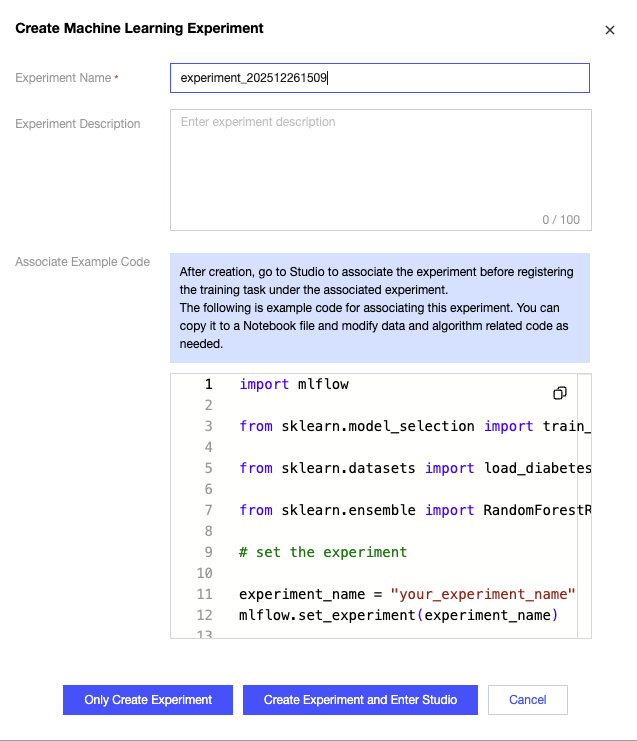

2. Click Create Machine Learning Experiment and enter the Experiment Name in the pop-up window to create the experiment.

Note:

After creating an experiment, associate it in Studio first; training tasks can then be registered under the linked experiment.

3. Alternatively, you can create an experiment in the training script with the mlflow.create_experiment() function, for example by naming it "experiment_202512261509". After the code runs, the experiment appears in the list.

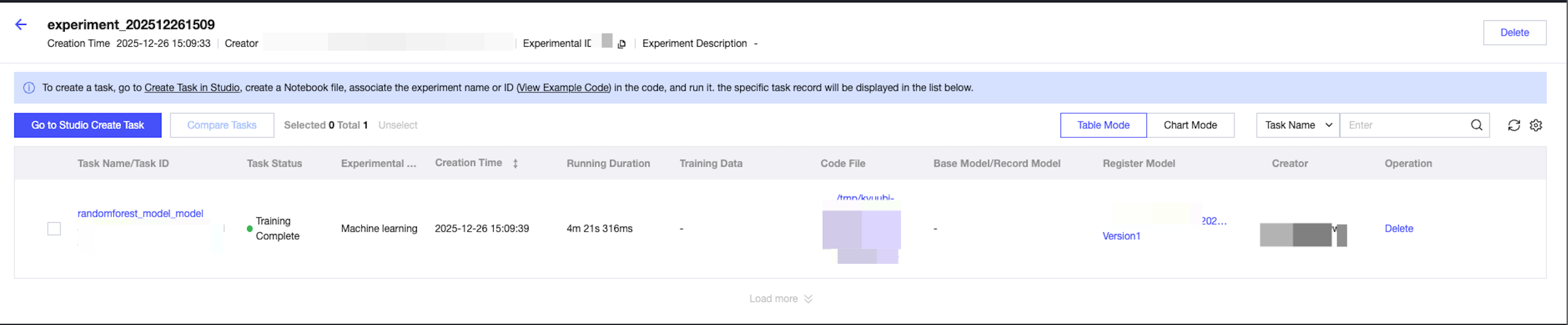

Experiment Details

1. Click an experiment name to display its run list on the right. The list shows information such as run name, run status, experiment name, creation time, run duration, dataset, code file, and registered model.

2. In the list, click a code source file to open it in Studio, or click a registered model to open its model details page.

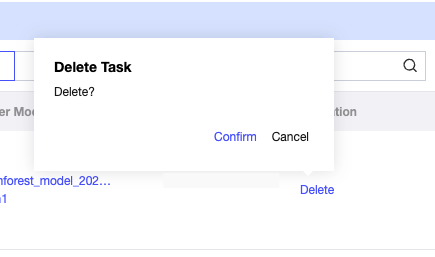

3. You can also delete runs in the Operation column.

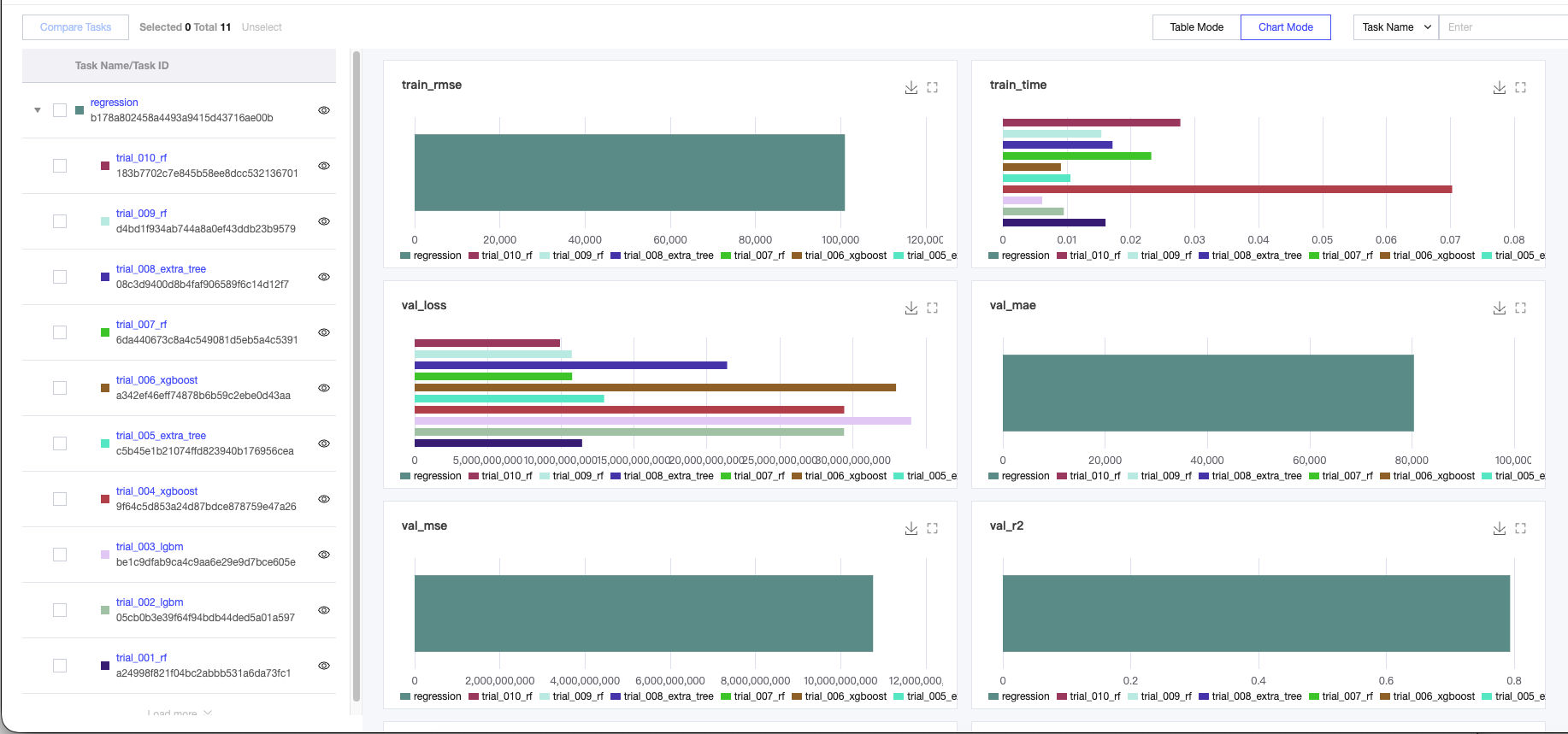

4. Chart view of runs.

The chart view displays the model metrics of runs. Click a run to show or hide its histogram. A global search box at the top lets you search by chart name and parameter.

Use the buttons in the top-right corner to download a chart (PNG by default) or view it in full screen.

Note:

When there are more than 10 runs, only the first 10 are displayed by default to keep the view readable; the remaining runs are hidden automatically.

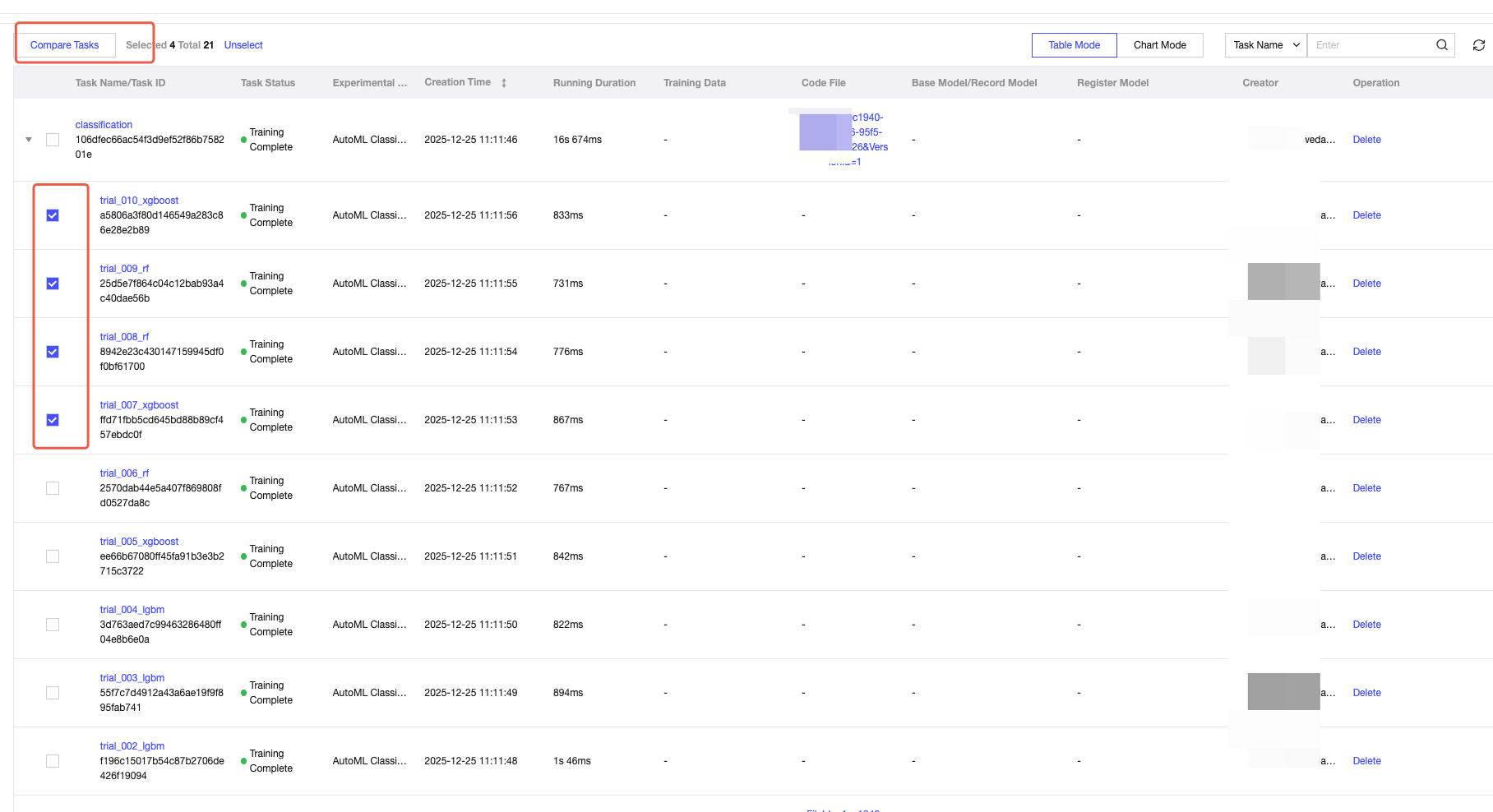

5. Compare runs.

Select runs with the checkboxes and click the Compare tasks button to compare the parameters and metrics of the selected runs. This detailed comparison helps you choose the best run for your use case.

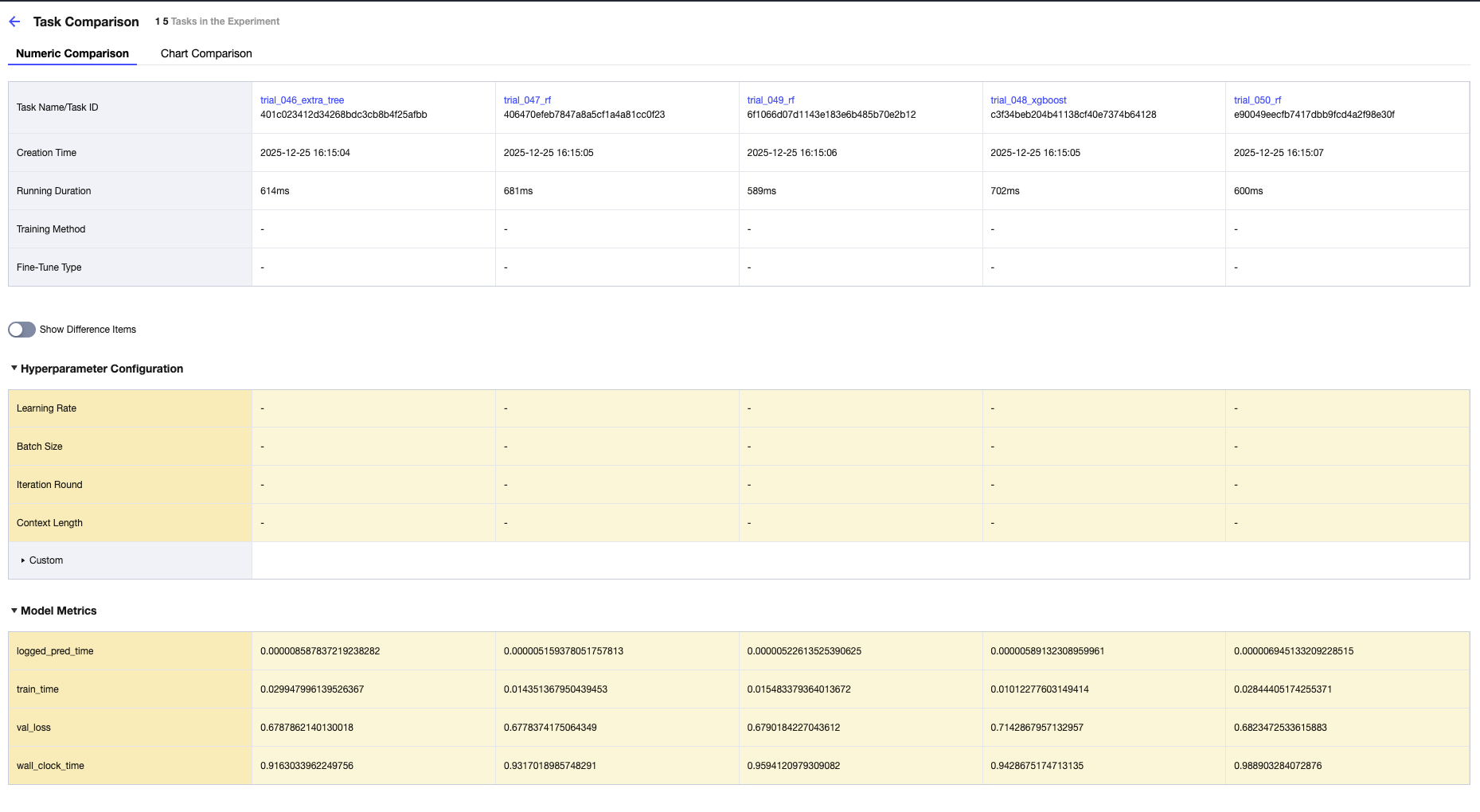

Numeric comparison view of the selected runs' details, parameters, and metrics.

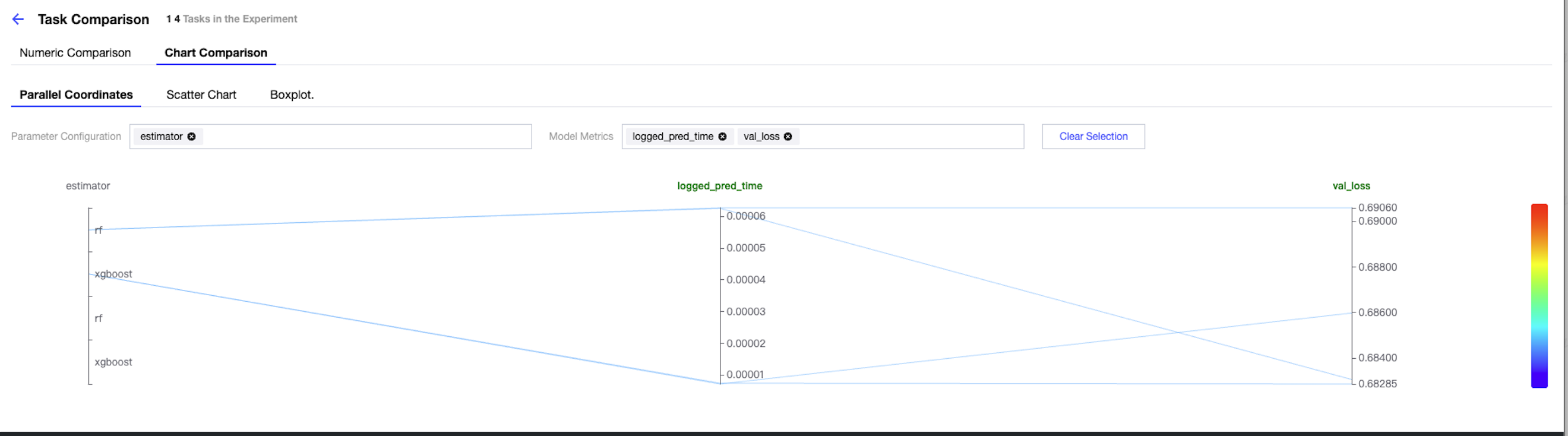

Parallel coordinates

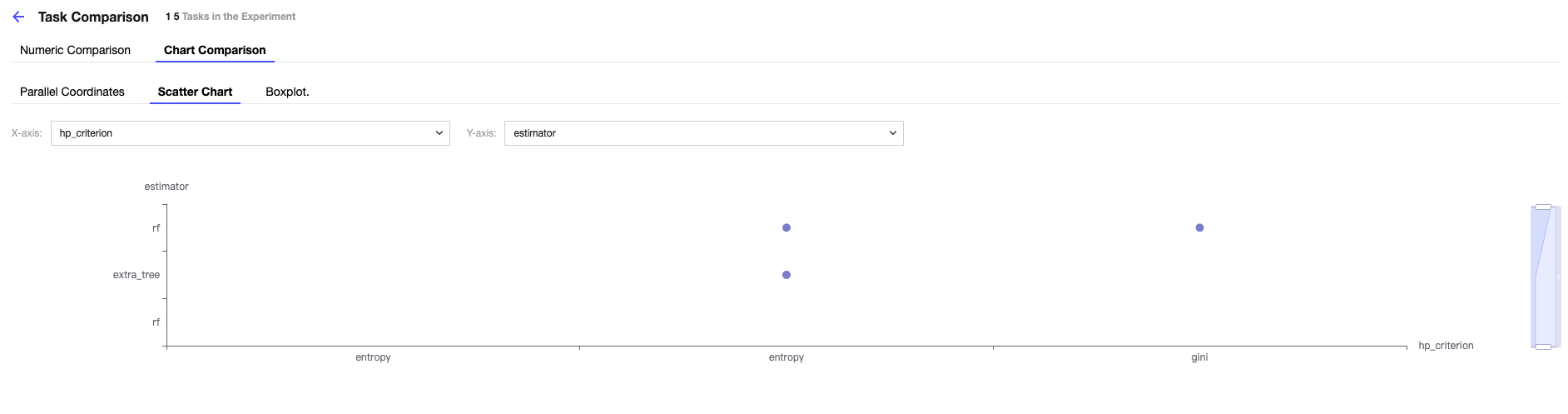

Scatter chart

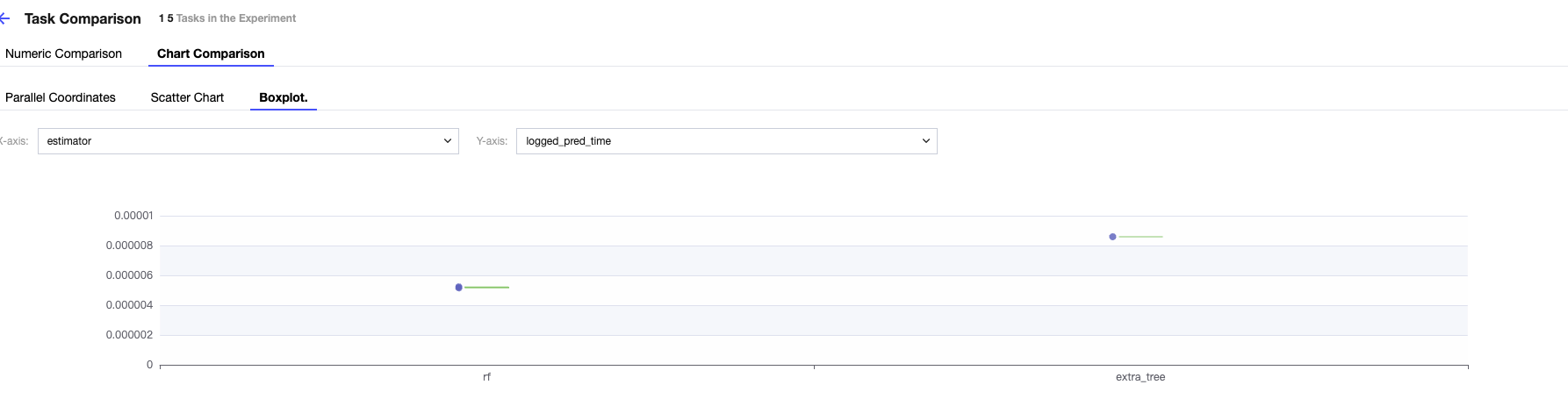

Boxplot

In chart comparison, you can choose among three chart types: parallel coordinates, scatter chart, and boxplot. You can customize the X-axis and Y-axis to suit your scenario.

Run Details

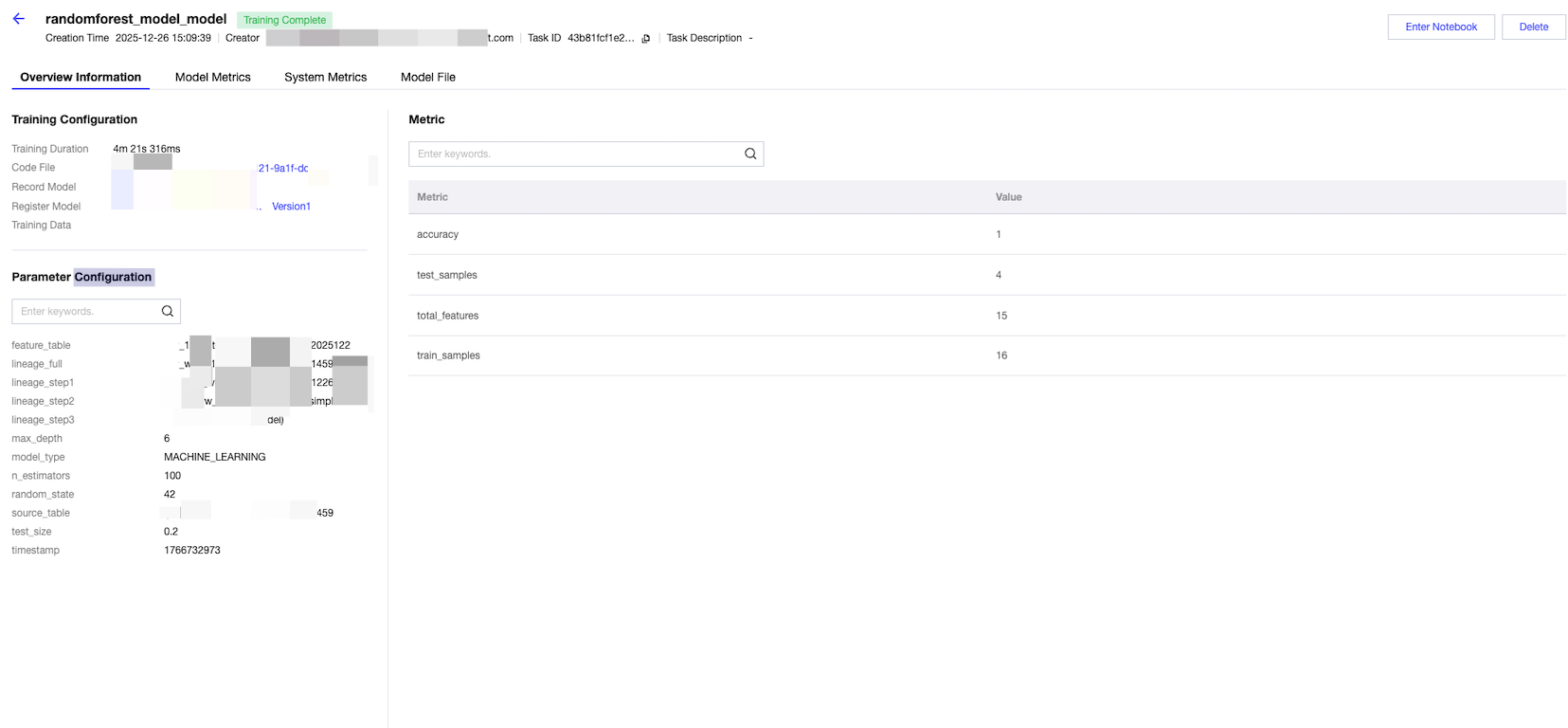

1. Overview Information.

Click a run name to open the run details page. The overview page opens by default and displays the following content:

Key run information: creation time, creator, experiment ID, run status, run ID, run duration, dataset, tags, code, base model, and registered model.

Hyperparameter configuration: the model hyperparameters used during training, such as weight in this example.

Metrics: metrics logged during model training (computed on the test set or validation set).

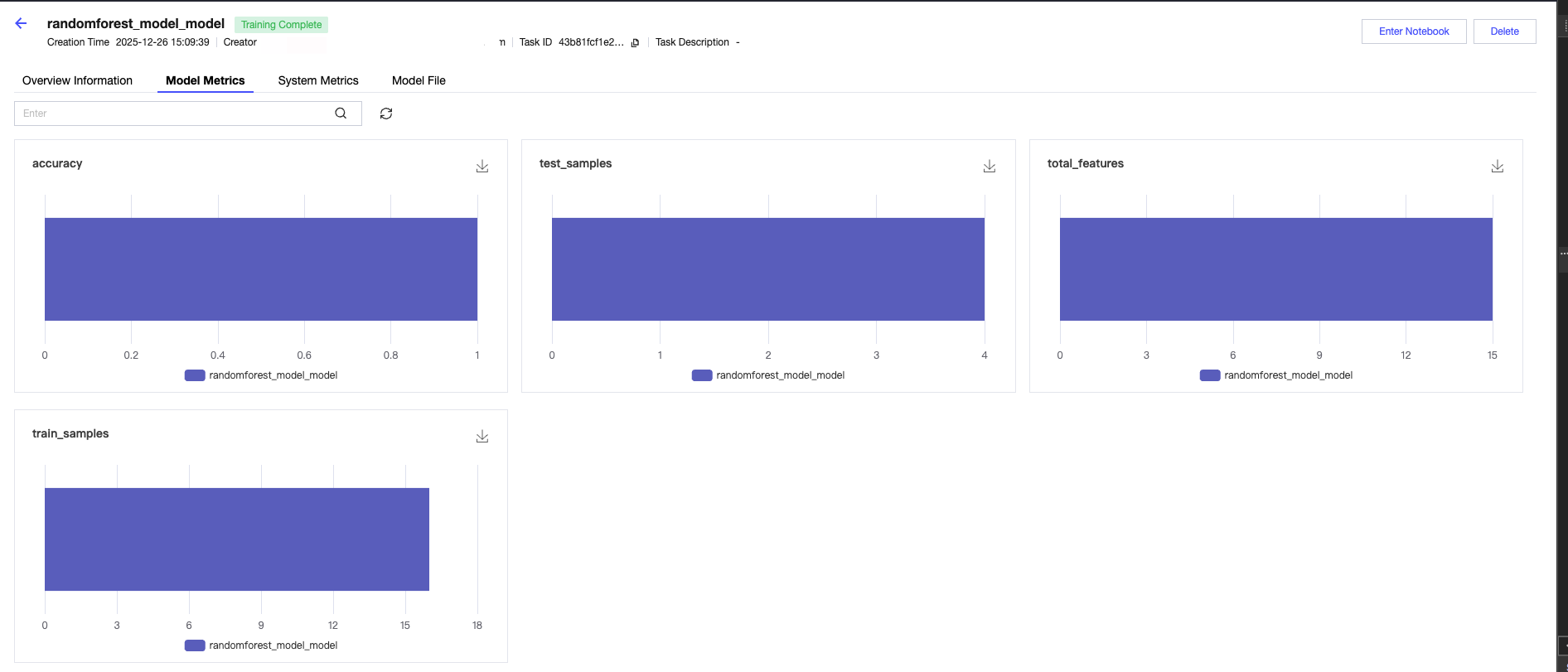

2. View model metrics.

Click Model Metrics to view the model's metrics. This feature records and evaluates performance metrics such as accuracy, precision, and recall, which help you understand how the model performs and compare it with other runs.

You can use the mlflow.log_metric() function in the training script to record model performance metrics, for example:

```python
import mlflow
from sklearn.metrics import accuracy_score, precision_score, recall_score

# Assume you have a trained model and test data
y_true = [...]  # actual labels
y_pred = [...]  # predicted labels

# Start an MLflow run
with mlflow.start_run():
    # Compute model performance metrics
    accuracy = accuracy_score(y_true, y_pred)
    precision = precision_score(y_true, y_pred)
    recall = recall_score(y_true, y_pred)

    # Log model performance metrics
    mlflow.log_metric("accuracy", accuracy)
    mlflow.log_metric("precision", precision)
    mlflow.log_metric("recall", recall)
    ...
```

3. View system metrics.

System metrics monitor and log system performance during model training, such as CPU and memory usage, and help you understand the resources that training consumes. By default, MLflow records system metrics such as the following:

cpu_utilization_percentage

system_memory_usage_megabytes

system_memory_usage_percentage

network_receive_megabytes

network_transmit_megabytes

disk_usage_megabytes

disk_available_megabytes

You can enable system metrics logging in the training script in any of three ways, for example:

```python
import os

import mlflow
import psutil  # MLflow relies on psutil to collect system metrics, so it must be installed

# Method 1: set an environment variable (before the run starts)
os.environ["MLFLOW_ENABLE_SYSTEM_METRICS_LOGGING"] = "true"

# Method 2: enable system metrics logging with mlflow.enable_system_metrics_logging()
mlflow.enable_system_metrics_logging()

# Method 3: enable it only for a specific run
with mlflow.start_run(log_system_metrics=True):
    ...
```

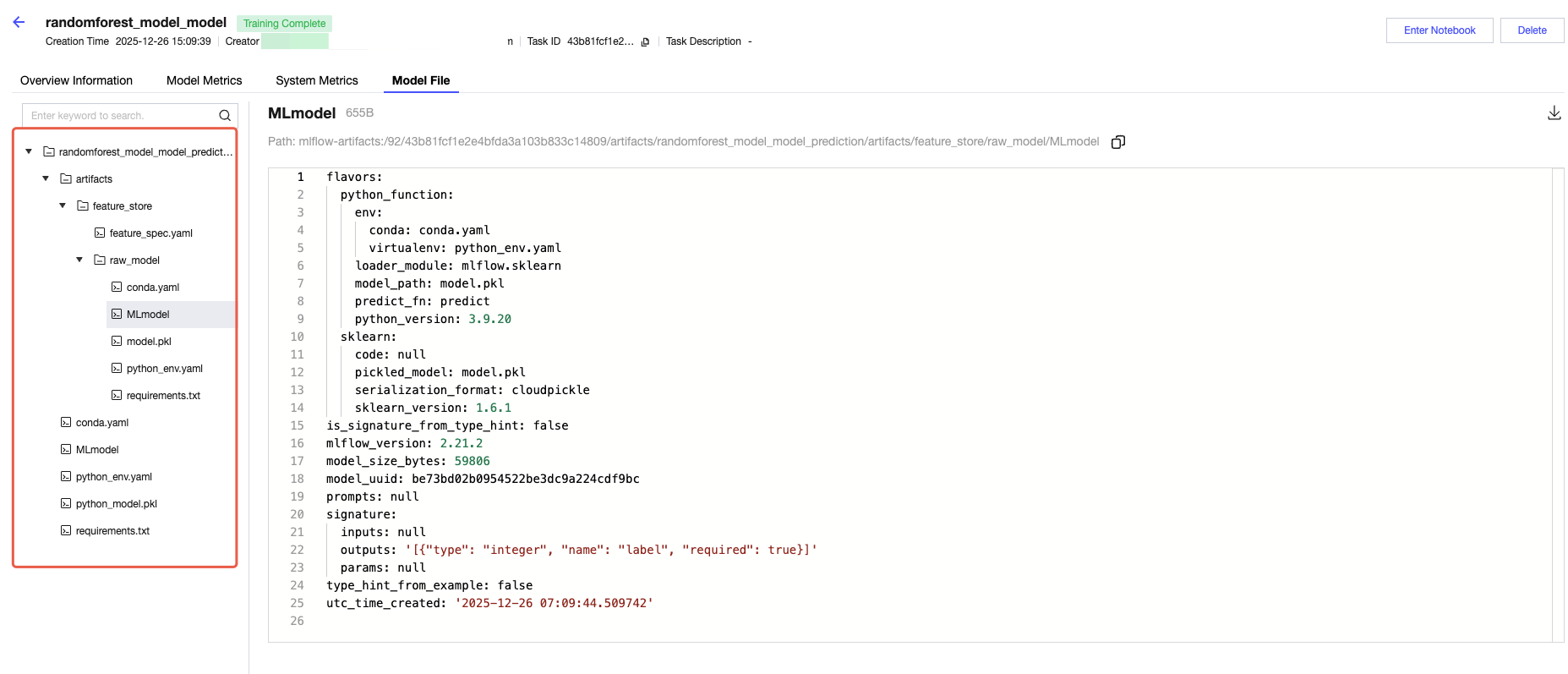

4. View model file.

Artifacts refer to files related to the model, such as model files, charts, datasets, and log files.

The example diagram shows the model file generated by this run, including the model path, input and output metadata, and sample code for debugging and running the model. You can also click through to model management to view the published model.
