AutoML Experiment

Feature Overview

AutoML (Automated Machine Learning) is a technology that automates the machine learning process. It helps users efficiently complete tasks like data preprocessing, feature engineering, model selection, parameter optimization, and model assessment without requiring deep expertise in machine learning algorithms or tuning details. AutoML platforms automatically try multiple algorithms and parameter combinations to quickly find the best machine learning model.

In short, AutoML makes machine learning easier and more efficient. It suits business users without a deep algorithm background and helps professional data scientists develop models faster. AutoML automatically prepares the data for these tasks, tries multiple mainstream algorithms, and generates Python notebooks that contain the complete training process, so that you can review, reuse, and modify the code.

Note:

AutoML currently supports only tasks on the Data Lake Compute (DLC) engine. In addition, only resource groups that were created on the DLC engine with the "wedata-data-science" image can be used for AutoML experiments.

Use Cases

| Task Type | Application Scenario | Core Goal |
| --- | --- | --- |
| AutoML - Classification | Predict a category (for example, customer risk level or product category) | Output a category label (for example, "high-risk", "medium-risk", "low-risk") |
| AutoML - Regression | Predict continuous values (for example, sales revenue or inventory quantity) | Output a specific value (for example, "500 pieces") |
| AutoML - Time Series Forecasting | Predict future trends from a historical time series (for example, sales forecasting) | Output the sequence of values for a future period (for example, sales in the next 7 days) |

Operation Steps

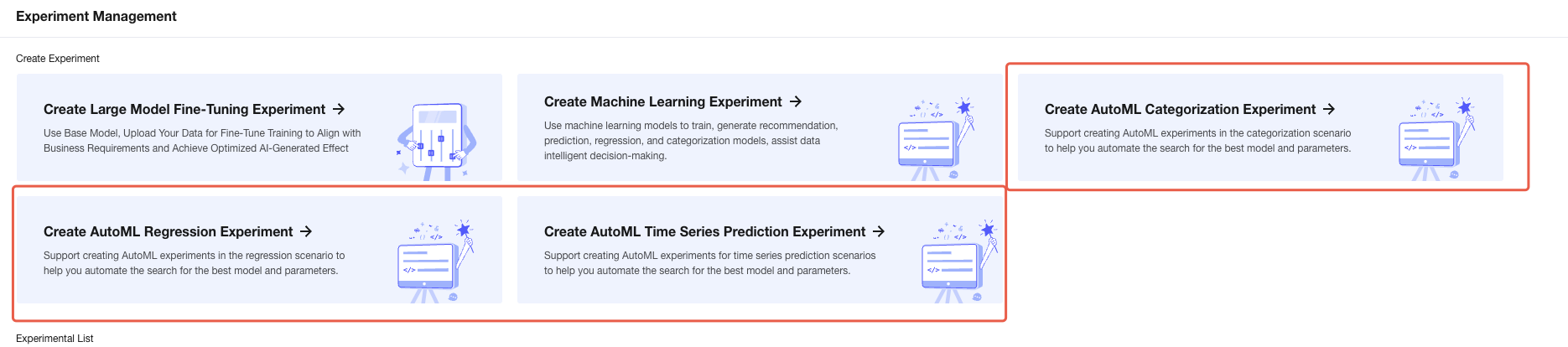

AutoML Experiment Creation Portal

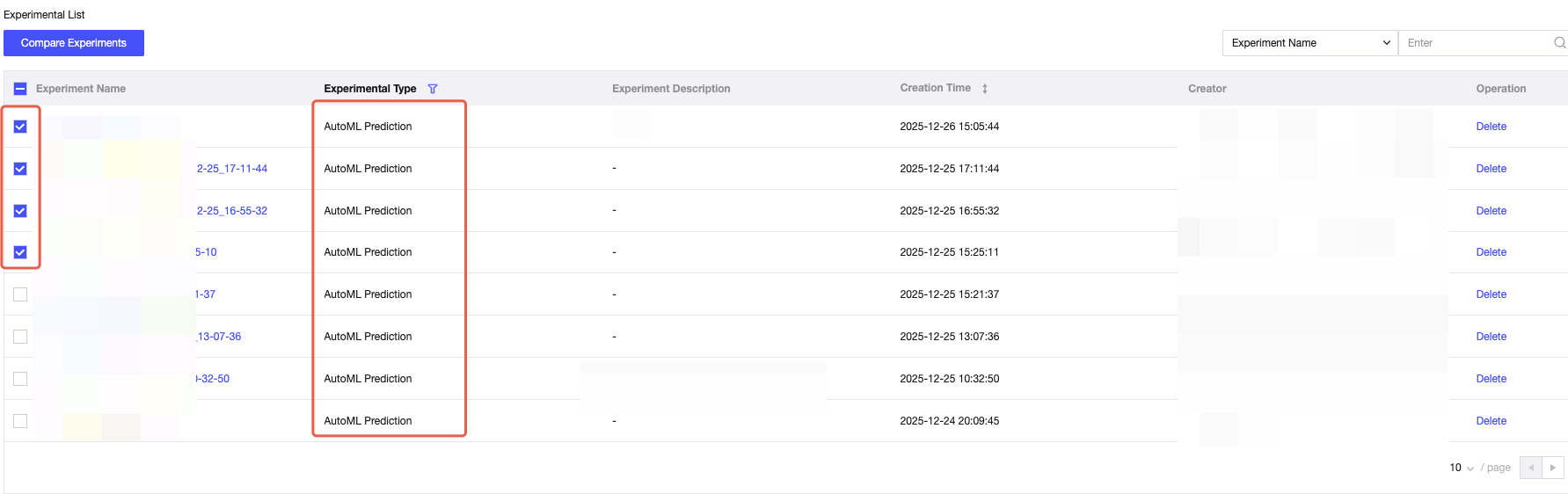

1. Log in to the WeData platform, find the "Model Experiment" module in the left sidebar, and click to enter the "Experiment List" page.

2. The top of the page displays three AutoML experiment portals: create AutoML - classification experiment, create AutoML - regression experiment, and create AutoML - time series prediction experiment. Select the portal that matches your business needs.

The classification portal, for example, supports creating AutoML experiments for classification scenarios and automates the search for the best model and parameters.

Create an experiment, and the AutoML experiment code is generated automatically.

Start AutoML training based on the configuration, and models and parameters are searched automatically.

Go to experiment management to monitor the model training process and compare the model training results.

Click the portal to open the creation form.

Creating an AutoML Experiment

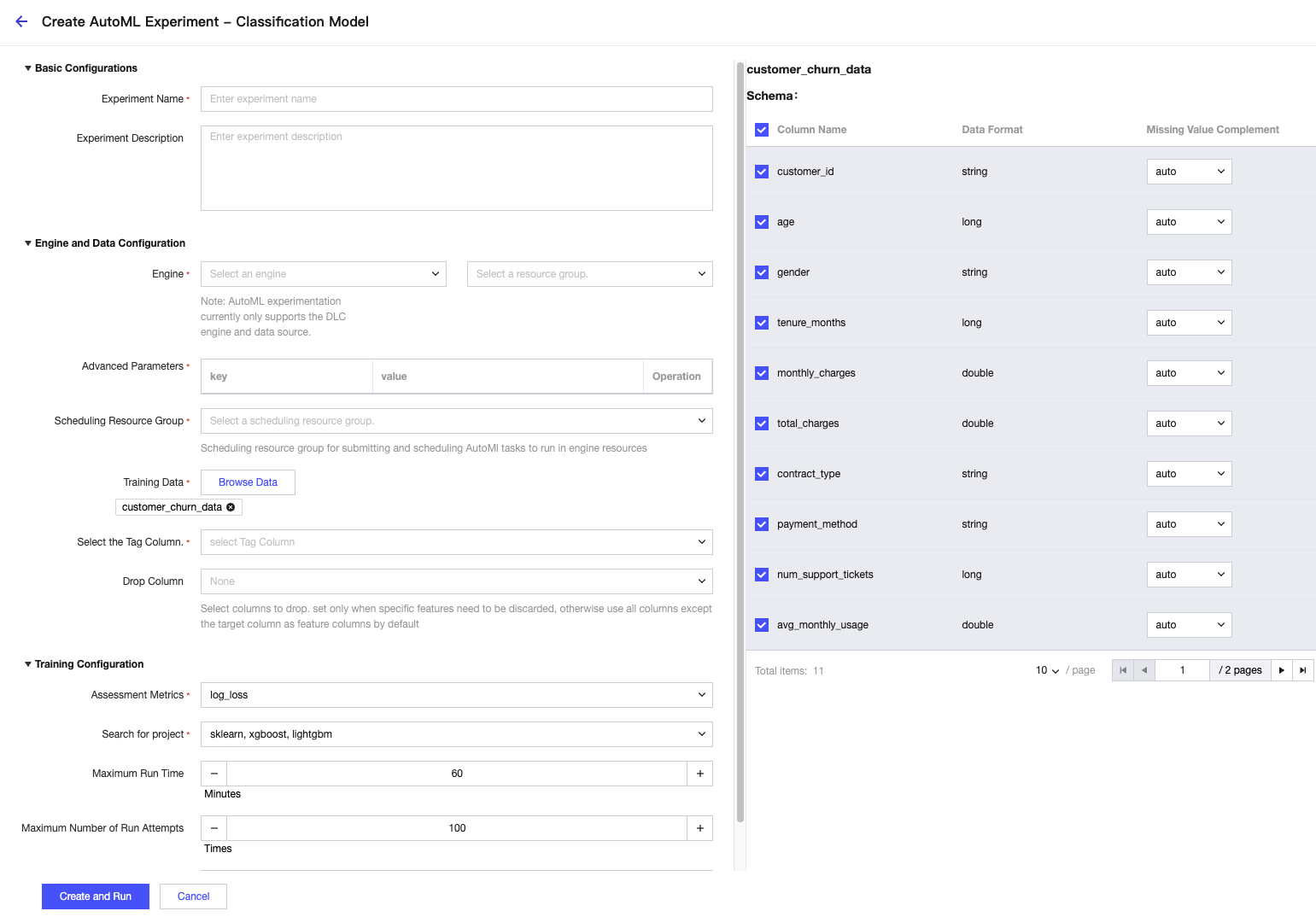

Classification Experiment

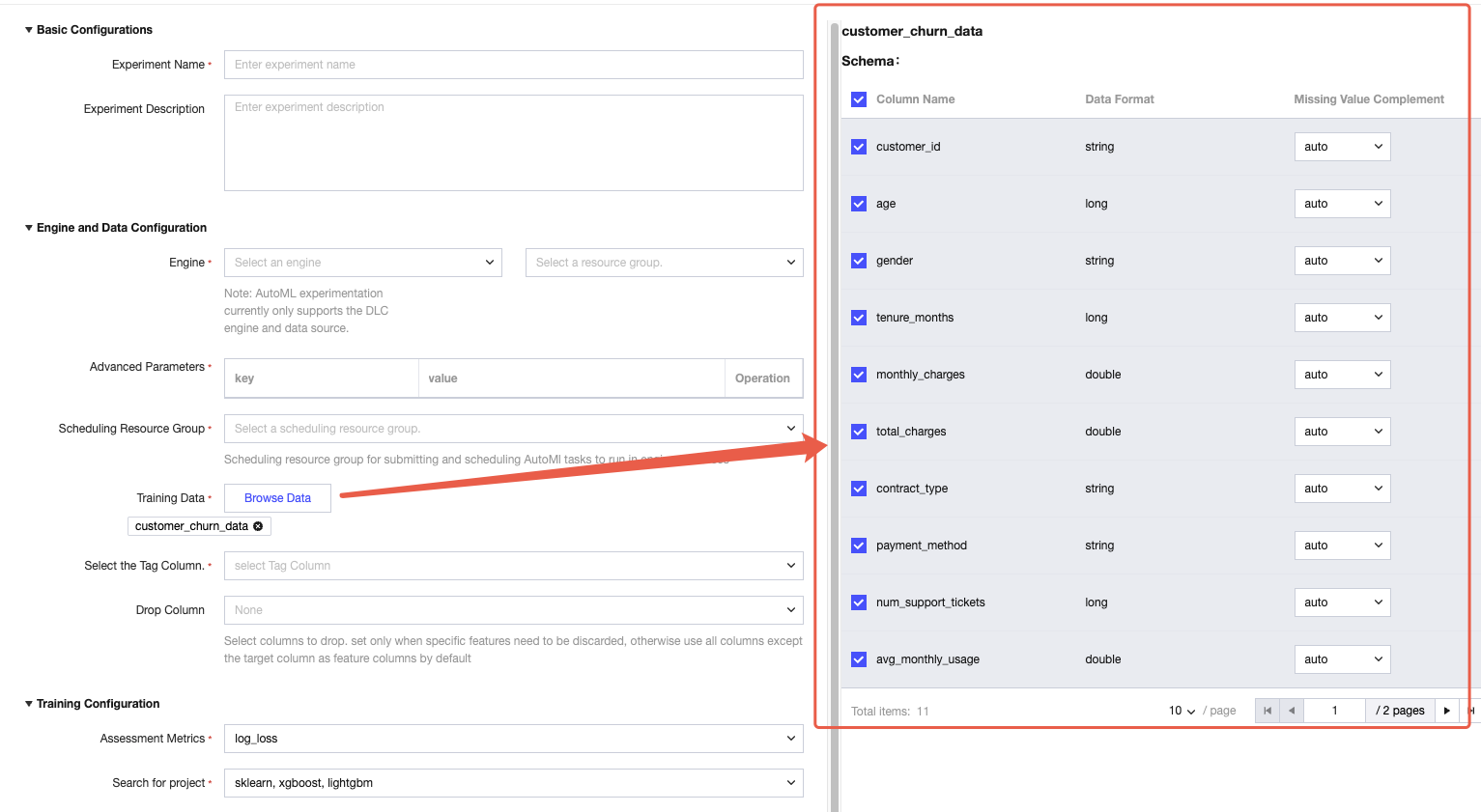

Procedure 1: Basic Configuration

Experiment Name: Enter a name with no more than 50 characters (for example "2025Q4 Customer Risk Classification Experiment").

Experiment description: Optional, enter a description with no more than 100 characters (for example "Risk level prediction based on customer consumption data").

Procedure 2: Engine and Data Configuration

1. Engine selection: Only the "DLC engine" is supported. Select the corresponding resource group from the dropdown menu (Note: the resource group must match the network location of the data).

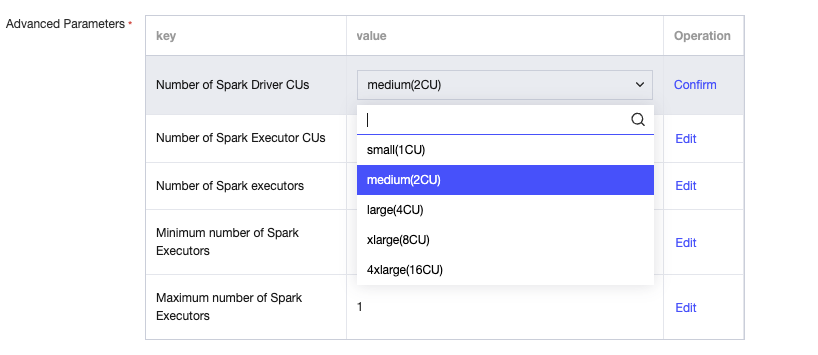

Advanced parameters: Optional. To configure kernel parameters (such as memory and CPU), click "Edit", fill in the values, then click Confirm to save.

2. Training data selection:

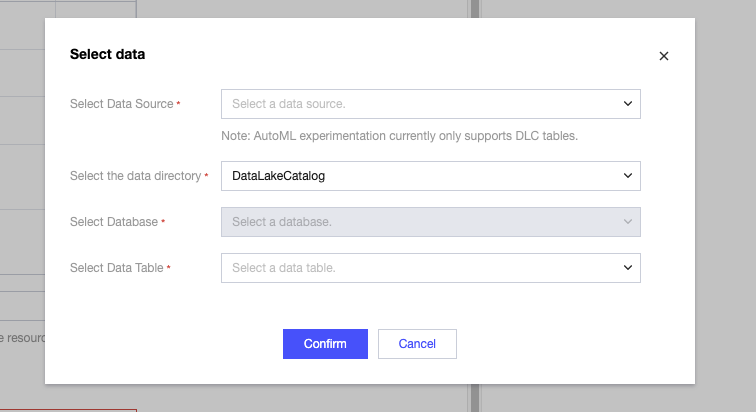

Click "Browse Data", then in the pop-up select the "Data directory → Database → Data table" where the data resides (only data under the DLC engine is displayed).

Note:

Data limit: Only databases and data tables under the DLC engine are supported. Non-DLC data must be migrated to DLC first.

Data preview: After you select a data table, its schema is displayed on the right with all columns selected by default.

Missing value completion: Select the method for filling in missing values from the dropdown (auto / mean / median / mode).
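As a rough illustration of what the mean, median, and mode options do, the sketch below fills the missing entries of a single column using only the Python standard library. The helper name `impute` and the use of `None` to mark a missing value are assumptions made for this example; how the platform implements these options, and how "auto" chooses among them, is not described here.

```python
from statistics import mean, median, mode

def impute(values, method="mean"):
    """Return a copy of `values` with None entries replaced by a fill value.

    Hypothetical helper for illustration only; not the platform's code.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    strategies = {"mean": mean, "median": median, "mode": mode}
    if method not in strategies:
        raise ValueError(f"unsupported method: {method}")
    # The fill value is computed from the observed (non-missing) entries only.
    fill = strategies[method](observed)
    return [fill if v is None else v for v in values]

# Example column with two missing entries.
order_counts = [10, None, 20, 20, None, 70]
print(impute(order_counts, "mean"))
print(impute(order_counts, "median"))
print(impute(order_counts, "mode"))
```

The mean is pulled toward the outlier (70) while the median and mode are not, which is one reason to prefer the median or mode for skewed columns.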

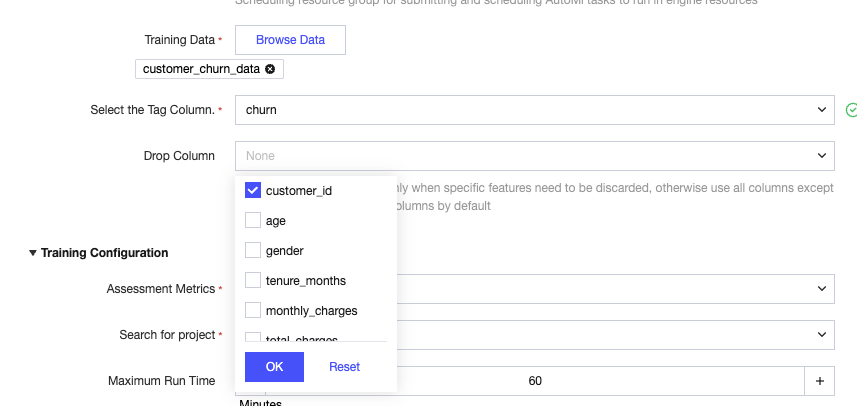

Select the Tag Column: Select exactly one column as the target category to predict.

Drop Column: Select one or more columns that should not be used as features (for example, "Customer ID"). Dropped columns do not participate in training.
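The effect of the tag column and drop column settings can be pictured with the following minimal sketch, which separates each row into features and a label. The function name `split_features_and_label` and the sample column names are hypothetical; the sketch only illustrates the idea and is not the code that the platform generates.

```python
def split_features_and_label(rows, label_column, drop_columns=()):
    """Separate each row (a dict) into a feature dict and a label value.

    Columns listed in `drop_columns`, and the label column itself,
    are excluded from the features.
    """
    excluded = set(drop_columns) | {label_column}
    features = [{k: v for k, v in row.items() if k not in excluded} for row in rows]
    labels = [row[label_column] for row in rows]
    return features, labels

# Hypothetical customer table: the ID carries no predictive signal, so it is dropped.
rows = [
    {"customer_id": "C001", "monthly_spend": 120.0, "overdue_count": 0, "risk_level": "low-risk"},
    {"customer_id": "C002", "monthly_spend": 45.5, "overdue_count": 3, "risk_level": "high-risk"},
]
features, labels = split_features_and_label(rows, "risk_level", drop_columns=["customer_id"])
print(features)
print(labels)
```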

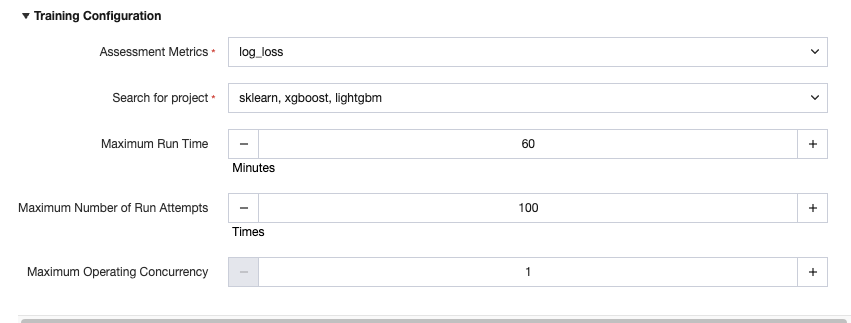

Procedure 3: Training Configuration

Assessment metrics: Select one (default "log_loss"; other options are "f1", "precision", "accuracy", and "roc_auc").

Search space: Select one or more model frameworks to test automatically ("sklearn", "xgboost", and "lightgbm" are available and selected by default).

Maximum Run Time: Default 60 minutes (adjustable; the experiment stops when the time limit is reached).

Maximum Number of Run Attempts: Default 100 (adjustable; this is the number of parameter combinations that the automatic model search tries).

Maximum Operating Concurrency: Default 1 (adjustable, actual concurrency depends on DLC resource availability).
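To show how the run time and attempt limits bound a search, here is a minimal sketch of a random parameter search that stops as soon as either budget is exhausted. It runs trials one at a time (comparable to a concurrency of 1). The names `budgeted_search`, `toy_loss`, and `sample`, and the toy objective itself, are assumptions for illustration; the platform's actual search strategy is not documented here.

```python
import random
import time

def budgeted_search(evaluate, sample_params, max_trials=100, max_seconds=3600.0, seed=0):
    """Try parameter combinations until the trial or time budget runs out.

    Returns (best_params, best_score, trials_run). A lower score is
    treated as better, as with a loss such as log_loss.
    """
    rng = random.Random(seed)
    deadline = time.monotonic() + max_seconds
    best_params, best_score, trials_run = None, float("inf"), 0
    while trials_run < max_trials and time.monotonic() < deadline:
        params = sample_params(rng)
        score = evaluate(params)
        trials_run += 1
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score, trials_run

# Toy objective standing in for "train a model and return its loss".
def toy_loss(params):
    return (params["learning_rate"] - 0.1) ** 2

def sample(rng):
    return {"learning_rate": rng.uniform(0.01, 0.5)}

best, score, trials = budgeted_search(toy_loss, sample, max_trials=100, max_seconds=5.0)
print(trials, best, score)
```

With a cheap objective the trial budget is reached first; with slow model training the time budget usually ends the search earlier, so fewer combinations than the configured maximum may be tried.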

Procedure 4: Creating an Experiment and Viewing Results

1. Click "Create and Run" at the bottom of the page. The system will automatically generate AutoML experiment code and initiate training.

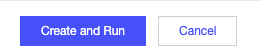

2. Return to the "Experiment List", find the target experiment, and view the "Experiment Status" (green = succeeded, red = failed; hover over the status to display the reason for failure).

3. View details: Click the experiment name to enter "Task Detail". The "Best Run" field displays the best model. Click to view its parameters, evaluation score, and generated Notebook code.

Note:

After the experiment succeeds, you can download the Notebook code from the "Best Run" details for follow-up manual model adjustment.

Regression Experiment

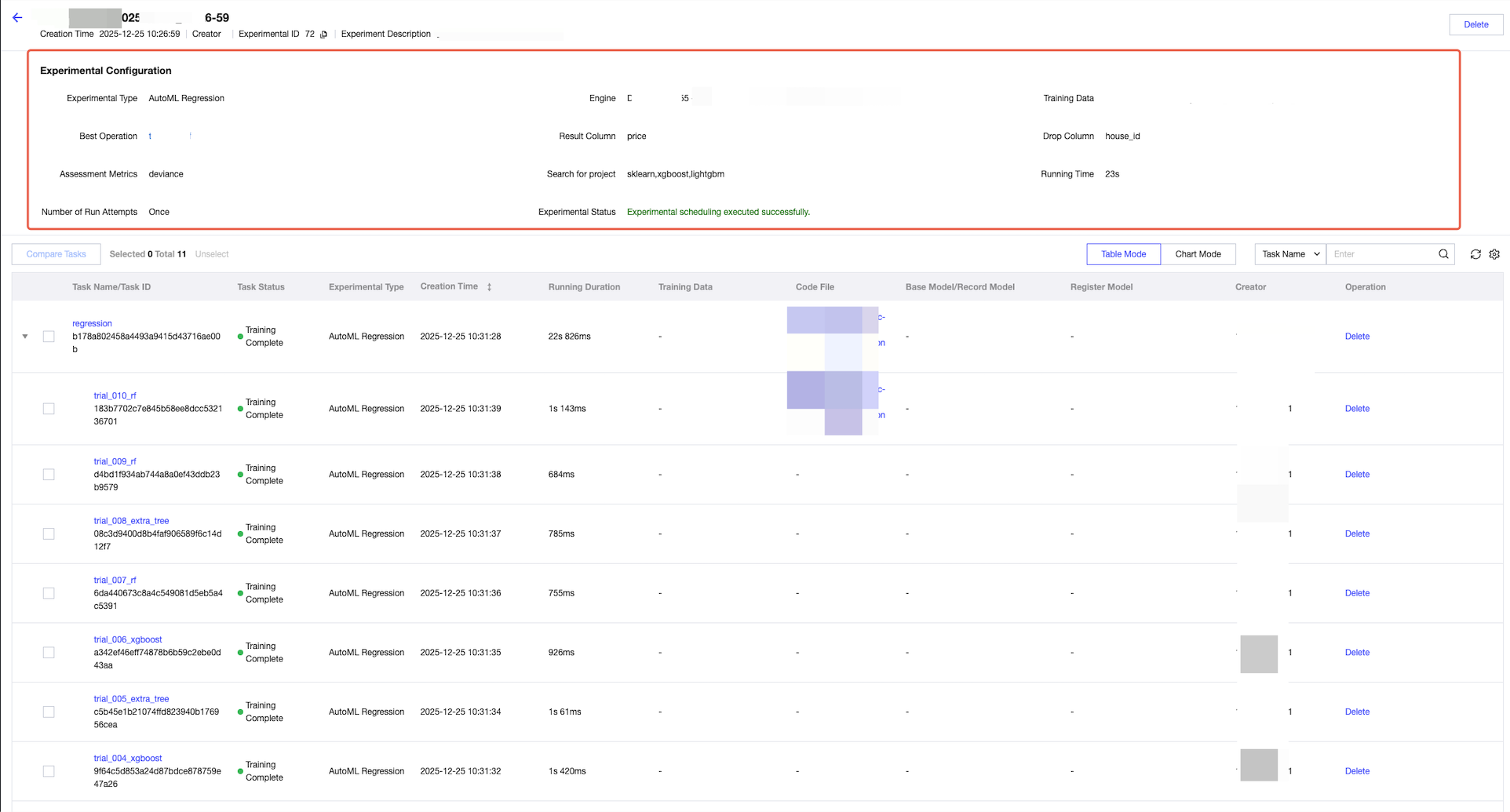

Procedures 1-3: Same as "Classification Experiment" Except for the Following Configuration

Procedure 3 metrics: Default "deviance"; other options are "RMSE", "MAE", "R2", and "MSE".

Other configuration (engine, data, search space) is the same as for the classification experiment.

Procedure 4: Submitting and Viewing Results

Same as Procedure 4 of "Classification Experiment" (the status, Best Run, and deployment process are the same).

Time Series Prediction Experiment

Procedure 1: Basic Configuration

Same as "Classification Experiment" (the experiment name and description are configured the same way).

Procedure 2: Engine and Data Configuration (Core Point of Difference)

1. Engine selection: Same as the classification experiment (DLC engine and resource group only).

2. Training data selection:

Data selection logic is the same as for the classification experiment (DLC tables only).

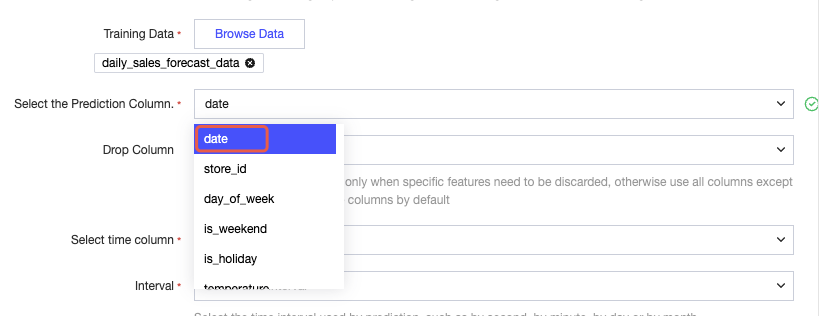

Select time column: Select exactly one time column (such as "date"). It must be of a date/time type and cannot be dropped after selection.

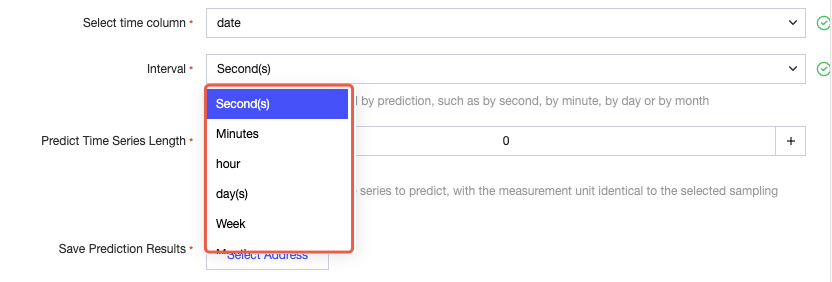

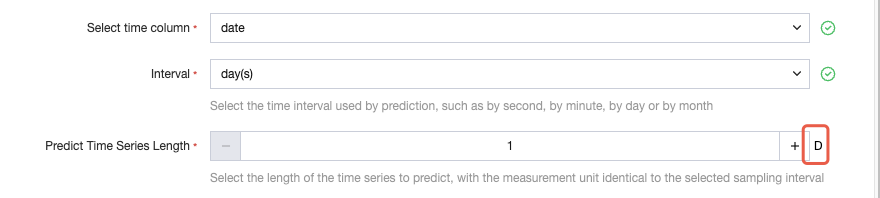

Time interval: Select the time frequency of the data from the dropdown (such as "day", "hour", or "month"). It must match the actual frequency of the data.

Prediction time series length: Enter a number (for example, "30" means predicting values for the next 30 time intervals).
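The relationship between the time interval and the prediction length can be sketched as follows: given the last observed timestamp, the forecast covers the next N intervals. The function names and the month-end clamping rule below are assumptions for illustration and may differ from how the platform generates future timestamps.

```python
import calendar
from datetime import datetime, timedelta

# Intervals with a fixed duration; "month" needs calendar arithmetic instead.
FIXED_STEPS = {"hour": timedelta(hours=1), "day": timedelta(days=1)}

def add_months(ts, months):
    """Shift `ts` by whole calendar months, clamping the day to the month's end."""
    index = ts.year * 12 + (ts.month - 1) + months
    year, month0 = divmod(index, 12)
    day = min(ts.day, calendar.monthrange(year, month0 + 1)[1])
    return ts.replace(year=year, month=month0 + 1, day=day)

def future_timestamps(last_observed, interval, horizon):
    """Return the `horizon` timestamps that follow `last_observed`."""
    if horizon < 1:
        raise ValueError("horizon must be a positive integer")
    if interval == "month":
        return [add_months(last_observed, k) for k in range(1, horizon + 1)]
    if interval not in FIXED_STEPS:
        raise ValueError(f"unsupported interval: {interval}")
    step = FIXED_STEPS[interval]
    return [last_observed + k * step for k in range(1, horizon + 1)]

# Daily data ending on 2025-12-30 with a prediction length of 3.
for ts in future_timestamps(datetime(2025, 12, 30), "day", 3):
    print(ts.date())
```

If the selected interval does not match the real spacing of the data (for example, "day" chosen for hourly data), the generated timestamps no longer line up with the series, which is why the two must agree.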

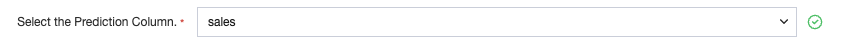

Select prediction column: Select exactly one column to predict (such as "sales").

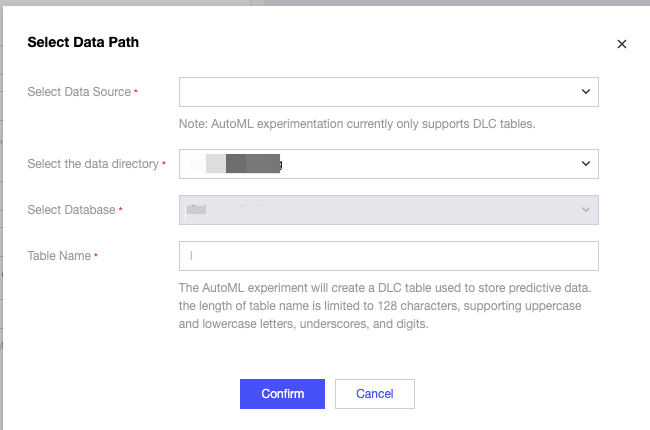

Prediction result save:

Click "Select data path" and select the DLC database for result storage.

Fill in the table name (up to 128 characters; letters, digits, and underscores are supported). The system automatically creates the table to store the prediction results.
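If you generate table names programmatically, a quick check against the stated rule can catch invalid names before submitting the form. The sketch below assumes that "letters" means ASCII letters and that an empty name is invalid; both are assumptions, and the helper name `is_valid_table_name` is hypothetical.

```python
import re

# 1 to 128 characters, each an ASCII letter, a digit, or an underscore.
_TABLE_NAME = re.compile(r"[A-Za-z0-9_]{1,128}")

def is_valid_table_name(name):
    """Return True if `name` satisfies the assumed table-name rule."""
    return _TABLE_NAME.fullmatch(name) is not None

print(is_valid_table_name("sales_forecast_2025q4"))
print(is_valid_table_name("sales-forecast"))
```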

Procedure 3: Training Configuration (Point of Difference)

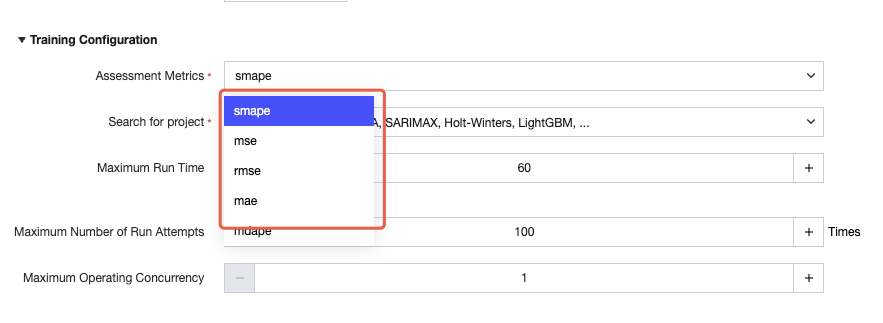

Metrics: Default "smape"; other options are "mse", "rmse", "mae", and "mdape".

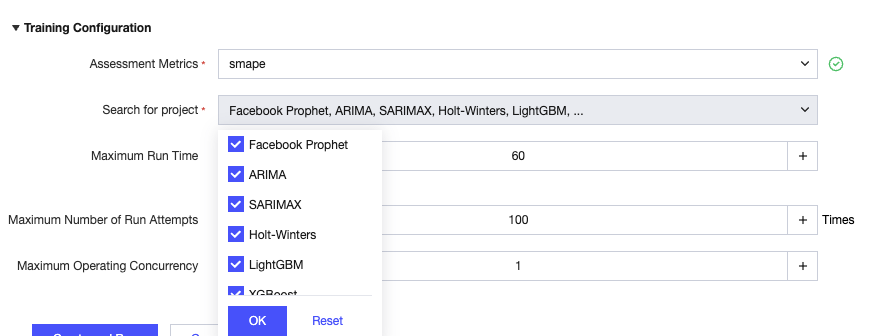

Search space: "Facebook Prophet", "ARIMA", "SARIMAX", "Deep-AR", "XGBoost", "LightGBM", and "Holt-Winters" are available and selected by default.

Other configuration (run time, number of attempts, concurrency) is the same as for the classification experiment.

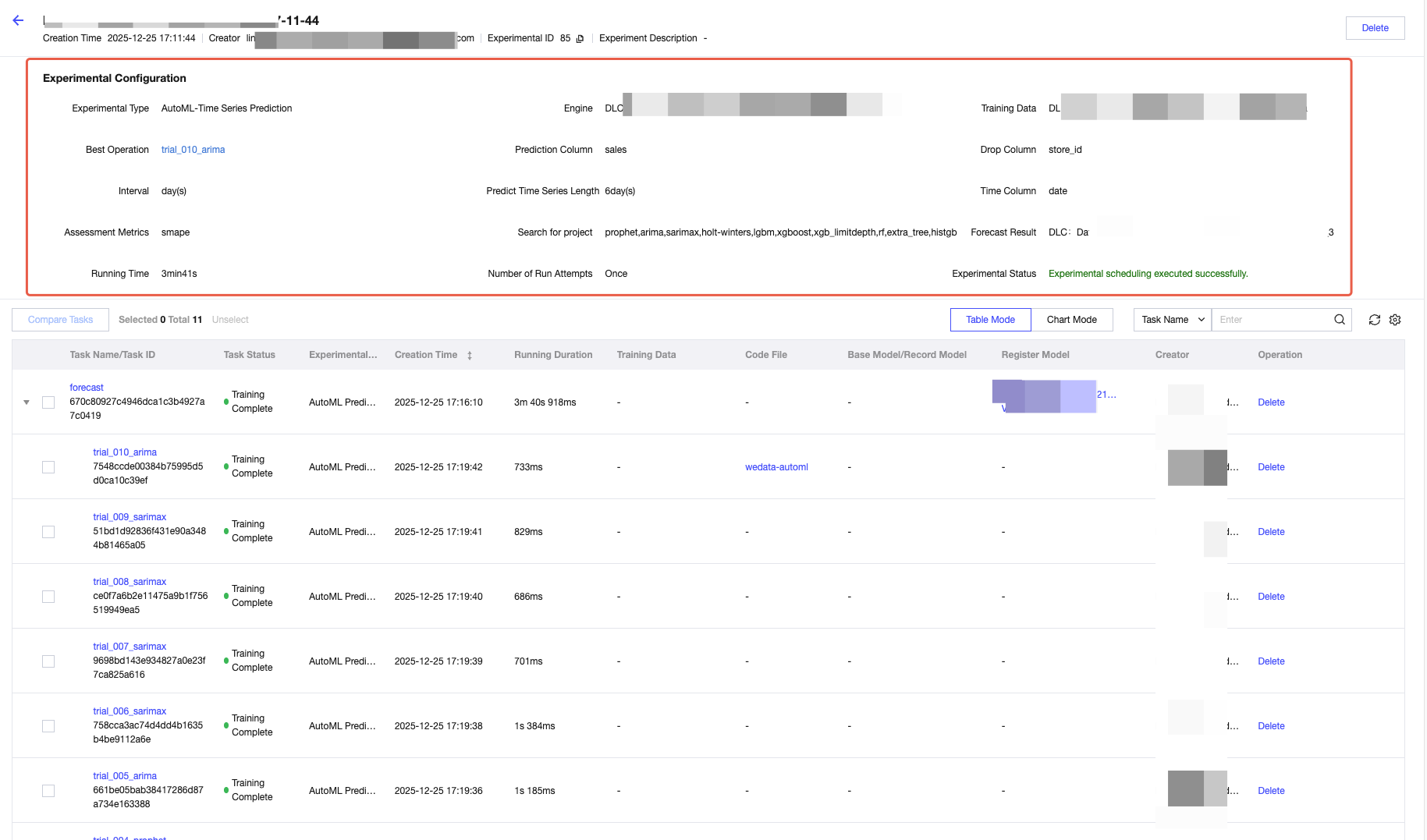

Procedure 4: Submitting and Viewing Results

1. After submitting the experiment, view status in the "Experiment List".

2. Click "Best Run" to view the best prediction model.

AutoML Experiment Comparison

1. Experiments of the same type can be compared side by side. Select experiments of the same type in the experiment list and click the Compare Experiments button.

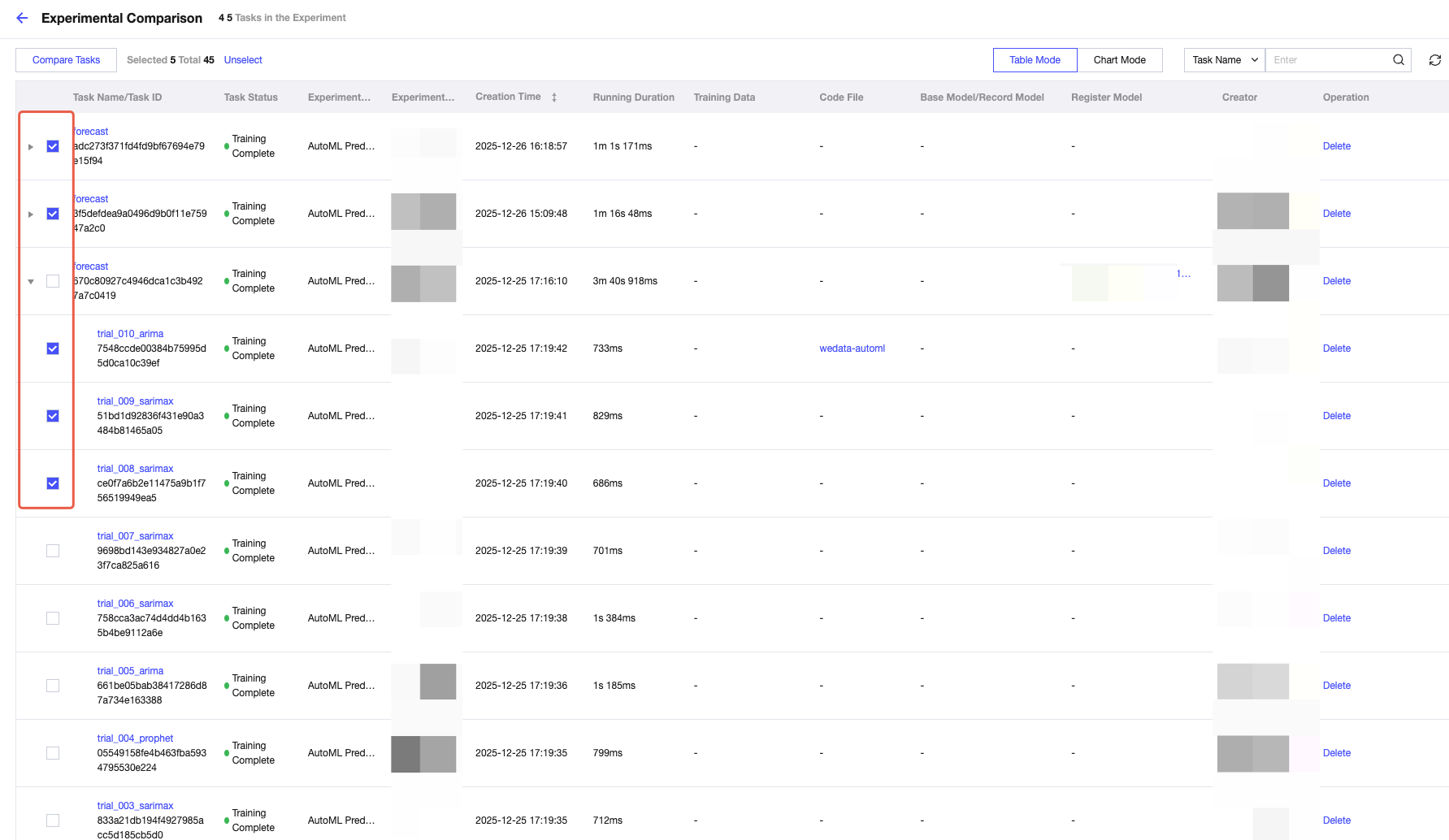

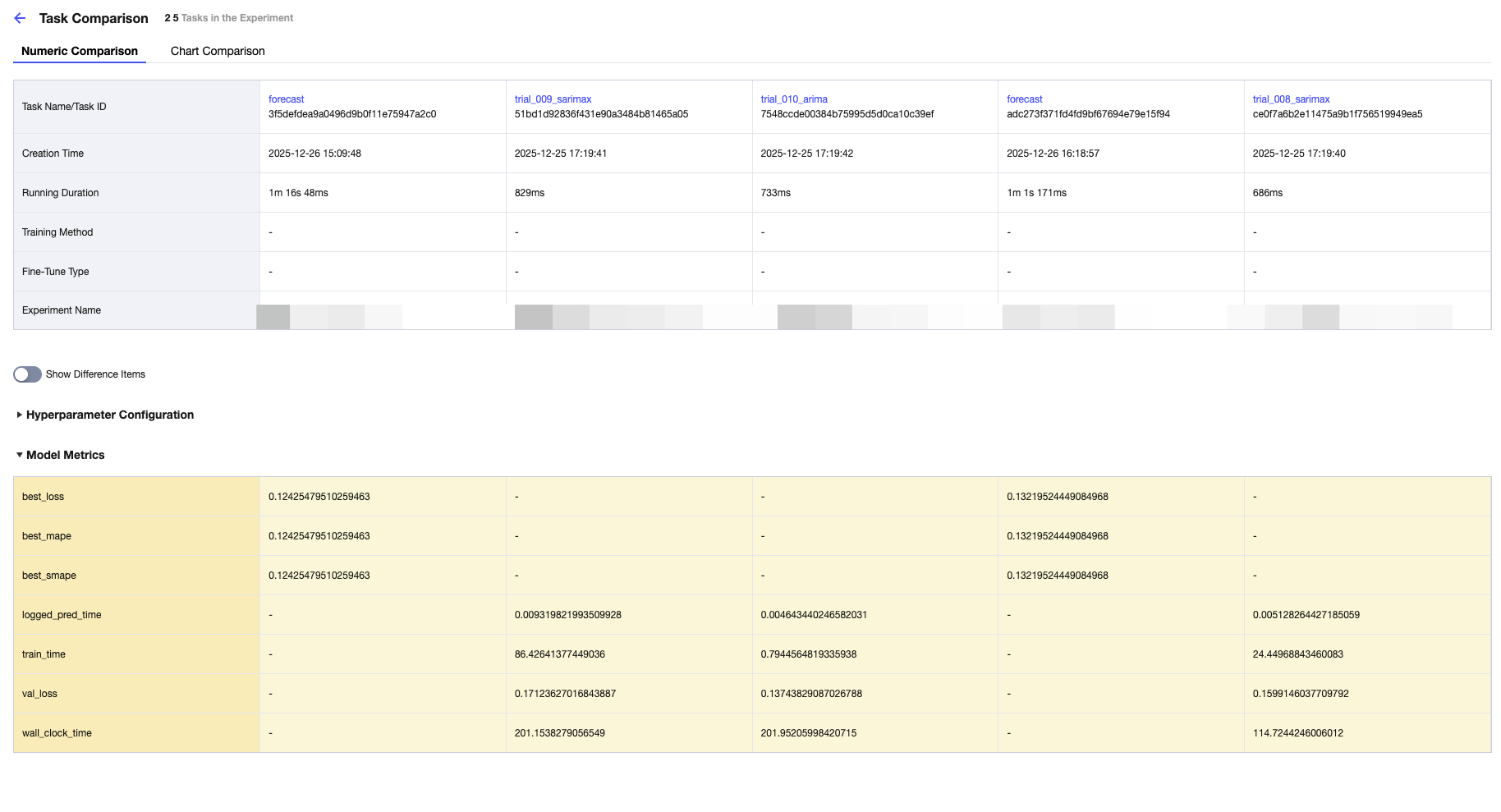

2. Select tasks from different experiments and click Compare Tasks.

The task comparison details are the same as on the task comparison page within an experiment.

Common Issues

1. What do the "tag column" and "prediction column" in the prediction table represent?

Both correspond to the "target column"; the meaning varies by task type.

Classification experiment: represents the true category labels (such as "high-risk" or "low-risk"), which are compared with the model's "prediction" column to evaluate accuracy.

Regression experiment: represents the actual continuous values (such as "actual sales revenue of 5 million"), which are used to calculate the deviation between the predicted and actual values.

Time series prediction experiment: in some scenarios, the "prediction column" holds the historical actual values, which together with the "predicted value" column form a time series that makes the prediction trend easy to observe.

2. How is prediction accuracy calculated?

Accuracy is calculated with the metric selected in the training configuration. Common metrics for each task type and how they are computed are as follows:

| Task Type | Common Metrics | Computation Logic |
| --- | --- | --- |
| Classification | accuracy | (number of correct predictions / total number of samples) × 100% |
| Classification | f1 score | The harmonic mean of precision and recall, with a value ranging from 0 to 1 (the closer to 1, the better). |
| Regression | R2 (coefficient of determination) | Measures how much of the variance in the data the model explains. The value is at most 1 and usually falls between 0 and 1 (the closer to 1, the more accurate the prediction); it can be negative when the model fits worse than always predicting the mean. |
| Regression | MAE (mean absolute error) | The average of the absolute values of "actual value - predicted value" over all samples (the smaller, the better). |
| Time Series Forecasting | smape (symmetric mean absolute percentage error) | Measures the percentage error between the predicted value and the actual value, with a value range of 0-100% (the smaller, the better). |
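The metrics in the table can be written out as a minimal standard-library sketch. The function names are hypothetical, the F1 version covers the binary case for one chosen positive class, and the SMAPE variant shown (dividing by the sum of absolute values, which bounds it at 100%) is assumed here because it matches the 0-100% range stated above; the platform may compute its metrics differently.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions equal to the true label (multiply by 100 for %)."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def f1_score(y_true, y_pred, positive):
    """Binary F1 for the class `positive`.

    2*TP / (2*TP + FP + FN) is algebraically the harmonic mean of
    precision and recall.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    """Coefficient of determination; undefined if all true values are equal."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

def smape(y_true, y_pred):
    """SMAPE in percent, using the variant bounded by 100%."""
    terms = []
    for t, p in zip(y_true, y_pred):
        denom = abs(t) + abs(p)
        terms.append(abs(p - t) / denom if denom else 0.0)
    return 100 * sum(terms) / len(terms)

labels_true = ["high", "low", "high", "low"]
labels_pred = ["high", "high", "high", "low"]
print(accuracy(labels_true, labels_pred))          # 0.75
print(f1_score(labels_true, labels_pred, "high"))  # 0.8

actual = [100, 200, 300]
predicted = [110, 190, 330]
print(mae(actual, predicted), r2(actual, predicted), smape(actual, predicted))
```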
