Data Science Practical Tutorial

Deep Learning Practical Tutorial: Image Classification Model Development Based on GPU Resources

Note:

The prerequisites for this tutorial are: the data science feature has been enabled, DLC GPU resources have been purchased, and a machine learning resource group with GPU cards has been created.

Basic Information

Data

This tutorial uses the CIFAR-10 dataset as its working example. CIFAR-10 is an image classification dataset provided by the Canadian Institute for Advanced Research (CIFAR) and is widely used in deep learning and computer vision research and experiments. Details:

Data volume: 60,000 color images.

Data distribution: Among them, 50,000 are training images and 10,000 are test images.

Image size: Each image is 32×32 pixels.

Categories: Divided into 10 classes with 6,000 images each: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, and truck.

Features: The images are small and low-resolution, which keeps experiments fast and makes the dataset suitable for beginners. Images within a category vary widely, and some categories share similar visual features, which makes classification more challenging.
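As a quick sanity check on the figures above, the split can be worked out in a few lines of Python. This is only an illustrative sketch: the function name is hypothetical, and the batch size of 128 is the value used in this tutorial's training configuration.

```python
import math

NUM_CLASSES = 10
IMAGES_PER_CLASS = 6_000
TRAIN_IMAGES = 50_000
TEST_IMAGES = 10_000
BATCH_SIZE = 128  # value used in this tutorial's training configuration


def dataset_summary():
    """Derive per-class counts, raw image size, and steps per epoch."""
    return {
        "total_images": NUM_CLASSES * IMAGES_PER_CLASS,
        "train_per_class": TRAIN_IMAGES // NUM_CLASSES,
        "test_per_class": TEST_IMAGES // NUM_CLASSES,
        # 32 x 32 pixels, 3 color channels, one uint8 value per channel
        "bytes_per_image": 32 * 32 * 3,
        # The last batch of an epoch is smaller than BATCH_SIZE
        "train_steps_per_epoch": math.ceil(TRAIN_IMAGES / BATCH_SIZE),
    }


print(dataset_summary())
```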

Model

This tutorial implements the ResNet-18 model, a lightweight residual network proposed in 2015 by Kaiming He's team at Microsoft. Its core idea is the residual (shortcut) connection, which addresses the vanishing gradient and degradation problems of deep networks. The network has 18 weighted layers (convolutional plus fully connected) and about 11.7M parameters in its standard ImageNet configuration; the CIFAR-10 variant built in this tutorial, with a 3×3 stem and a 10-class output layer, is slightly smaller. ResNet-18 is a classic vision model commonly used for image classification, feature extraction, and lightweight scenarios.
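The "18" and the residual connection can both be illustrated with a little arithmetic. The sketch below uses hypothetical helper names and plain Python lists: it counts the weighted layers for the [2, 2, 2, 2] block layout (the 1×1 shortcut convolutions are conventionally not counted) and shows the elementwise form y = relu(F(x) + x).

```python
BLOCKS_PER_STAGE = [2, 2, 2, 2]  # residual blocks in each of the four stages
CONVS_PER_BLOCK = 2              # each basic block has two 3x3 convolutions


def count_weighted_layers(blocks_per_stage, convs_per_block=CONVS_PER_BLOCK):
    """Stem convolution + block convolutions + final fully connected layer."""
    stem_conv = 1
    block_convs = sum(blocks_per_stage) * convs_per_block
    fully_connected = 1
    return stem_conv + block_convs + fully_connected


def residual_output(x, fx):
    """Elementwise y = relu(F(x) + x), where fx holds the values of F(x)."""
    return [max(0.0, f + xi) for xi, f in zip(x, fx)]


print(count_weighted_layers(BLOCKS_PER_STAGE))
print(residual_output([1.0, -2.0], [0.5, 0.5]))
```

Because the input x is added back unchanged, a block only has to learn the difference between its input and the desired output, which is what makes deeper stacks easier to optimize.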

Resource

DLC GPU resource groups

20CU/1GPU [GN7-t4]

Environment

spark3.5-tensorflow2.20-gpu-py311-cu124

Editing Code in Studio to Initiate Model Training

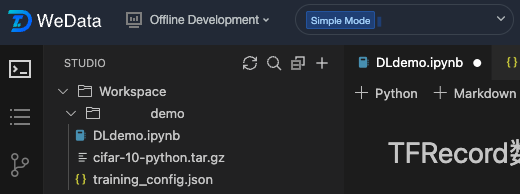

1. Create a folder, upload the data file, and create a configuration file and an ipynb file.

2. Define the functions that read the data and apply data transformations.

    import os
    import pickle
    import tarfile
    from typing import Any, Dict

    import numpy as np
    import tensorflow as tf


    def load_cifar10_from_local(local_path):
        """Extract the local cifar-10-python.tar.gz archive and return the extraction directory."""
        if not os.path.exists(local_path):
            raise FileNotFoundError(f"CIFAR-10 dataset file not found: {local_path}")

        # Directory created by extracting the archive
        extract_dir = 'cifar-10-batches-py'

        # Extract only if the directory does not exist yet
        if not os.path.exists(extract_dir):
            print("Extracting the CIFAR-10 dataset...")
            with tarfile.open(local_path, 'r:gz') as tar:
                tar.extractall()
            print("Extraction completed")
        else:
            print("The CIFAR-10 dataset has already been extracted, skipping the extraction step.")

        return extract_dir


    def unpickle(file):
        """Read one CIFAR-10 pickle batch file."""
        with open(file, 'rb') as fo:
            batch = pickle.load(fo, encoding='bytes')
        return batch


    def create_cifar10_tfrecord(local_path='cifar-10-python.tar.gz'):
        """Create TFRecord files for the CIFAR-10 dataset from the local archive."""
        cifar_dir = load_cifar10_from_local(local_path)

        # Load the 5 training batches
        x_train = []
        y_train = []
        for i in range(1, 6):
            batch = unpickle(os.path.join(cifar_dir, f'data_batch_{i}'))
            x_train.append(batch[b'data'])
            y_train.extend(batch[b'labels'])
        x_train = np.concatenate(x_train, axis=0)
        y_train = np.array(y_train)

        # Load the test batch
        test_batch = unpickle(os.path.join(cifar_dir, 'test_batch'))
        x_test = test_batch[b'data']
        y_test = np.array(test_batch[b'labels'])

        # Reshape each flat row to a 32x32x3 (height, width, channel) image
        x_train = x_train.reshape(-1, 3, 32, 32).transpose(0, 2, 3, 1)
        x_test = x_test.reshape(-1, 3, 32, 32).transpose(0, 2, 3, 1)

        # Create the output directory
        os.makedirs('./tfrecords', exist_ok=True)

        def _bytes_feature(value):
            """Wrap a byte string in a tf.train.Feature."""
            if isinstance(value, type(tf.constant(0))):
                value = value.numpy()
            return tf.train.Feature(bytes_list=tf.train.BytesList(value=[value]))

        def _int64_feature(value):
            """Wrap an integer in a tf.train.Feature."""
            return tf.train.Feature(int64_list=tf.train.Int64List(value=[value]))

        def _write_tfrecord(path, images, labels):
            """Serialize every (image, label) pair into one TFRecord file."""
            with tf.io.TFRecordWriter(path) as writer:
                for image, label in zip(images, labels):
                    # Make sure the image data type is uint8
                    image = image.astype(np.uint8)
                    feature = {
                        'image': _bytes_feature(tf.compat.as_bytes(image.tobytes())),
                        'label': _int64_feature(int(label)),
                        'height': _int64_feature(32),
                        'width': _int64_feature(32),
                        'depth': _int64_feature(3),
                    }
                    example = tf.train.Example(features=tf.train.Features(feature=feature))
                    writer.write(example.SerializeToString())

        # Write the training set and the validation set
        _write_tfrecord('./tfrecords/cifar10_train.tfrecord', x_train, y_train)
        _write_tfrecord('./tfrecords/cifar10_val.tfrecord', x_test, y_test)

        print(f"TFRecord file creation completed: {len(x_train)} training samples, "
              f"{len(x_test)} validation samples")
        return len(x_train), len(x_test)


    def parse_tfrecord_fn(example_proto, config: Dict[str, Any], is_training: bool = True):
        """Parse one serialized TFRecord example into (image, label)."""
        feature_description = {
            'image': tf.io.FixedLenFeature([], tf.string),
            'label': tf.io.FixedLenFeature([], tf.int64),
            'height': tf.io.FixedLenFeature([], tf.int64),
            'width': tf.io.FixedLenFeature([], tf.int64),
            'depth': tf.io.FixedLenFeature([], tf.int64),
        }
        example = tf.io.parse_single_example(example_proto, feature_description)

        # Decode the raw bytes and scale pixel values to [0, 1]
        image = tf.io.decode_raw(example['image'], tf.uint8)
        image = tf.reshape(image, [32, 32, 3])
        image = tf.cast(image, tf.float32) / 255.0

        # Data augmentation (training only)
        if is_training and config.get('data_augmentation', True):
            if config.get('horizontal_flip', True):
                image = tf.image.random_flip_left_right(image)
            if config.get('brightness_delta', 0) > 0:
                image = tf.image.random_brightness(image, config['brightness_delta'])
            if config.get('contrast_range'):
                image = tf.image.random_contrast(image,
                                                 config['contrast_range'][0],
                                                 config['contrast_range'][1])

        # Normalize each channel with the per-channel mean and standard deviation
        image = (image - tf.constant([0.4914, 0.4822, 0.4465])) / tf.constant([0.2023, 0.1994, 0.2010])
        label = tf.cast(example['label'], tf.int32)
        return image, label


    def create_dataset(config: Dict[str, Any], is_training: bool = True):
        """Create the tf.data input pipeline for training or validation."""
        tfrecord_path = config['train_tfrecord_path'] if is_training else config['val_tfrecord_path']

        # Check that the TFRecord file exists
        if not os.path.exists(tfrecord_path):
            raise FileNotFoundError(f"TFRecord file not found: {tfrecord_path}")

        dataset = tf.data.TFRecordDataset(tfrecord_path)
        dataset = dataset.map(lambda x: parse_tfrecord_fn(x, config, is_training),
                              num_parallel_calls=tf.data.AUTOTUNE)
        if is_training:
            dataset = dataset.shuffle(buffer_size=10000)
        dataset = dataset.batch(config.get('batch_size', 128))
        dataset = dataset.prefetch(tf.data.AUTOTUNE)
        return dataset
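The `reshape(-1, 3, 32, 32).transpose(0, 2, 3, 1)` call in the code above relies on how each CIFAR-10 row is stored: 3,072 values, with the 1,024 red values first, then the green, then the blue, each plane in row-major order. The following dependency-free sketch (helper names are illustrative) performs the same index mapping for a single image.

```python
def pixel_at(row, y, x, size=32):
    """Return (r, g, b) for pixel (y, x) from one flat 3072-value CIFAR-10 row."""
    plane = size * size        # number of values in one color plane
    offset = y * size + x      # row-major position inside a plane
    return row[offset], row[plane + offset], row[2 * plane + offset]


def to_hwc(row, size=32):
    """Rebuild a height x width x channel nested list from a flat row."""
    return [[list(pixel_at(row, y, x, size)) for x in range(size)]
            for y in range(size)]


# Synthetic row: red plane all 0, green plane all 1, blue plane all 2
row = [0] * 1024 + [1] * 1024 + [2] * 1024
image = to_hwc(row)
print(len(image), len(image[0]), image[5][7])
```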

3. Define the ResNet model.

    import tensorflow as tf
    from tensorflow.keras import layers, Model


    class BasicBlock(layers.Layer):
        """ResNet basic residual block."""

        def __init__(self, filter_num, stride=1):
            super().__init__()
            self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride,
                                       padding='same', use_bias=False)
            self.bn1 = layers.BatchNormalization()
            self.relu = layers.ReLU()
            self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1,
                                       padding='same', use_bias=False)
            self.bn2 = layers.BatchNormalization()

            # Shortcut connection: project with a 1x1 convolution when the block downsamples
            if stride != 1:
                self.downsample = tf.keras.Sequential([
                    layers.Conv2D(filter_num, (1, 1), strides=stride, use_bias=False),
                    layers.BatchNormalization()
                ])
            else:
                self.downsample = lambda x: x

        def call(self, inputs, training=None):
            residual = inputs

            x = self.conv1(inputs)
            x = self.bn1(x, training=training)
            x = self.relu(x)
            x = self.conv2(x)
            x = self.bn2(x, training=training)

            identity = self.downsample(residual)
            output = tf.nn.relu(x + identity)
            return output


    class ResNet(Model):
        """ResNet model."""

        def __init__(self, layer_dims, num_classes=10):
            super().__init__()

            # Stem (preprocessing) layer
            self.stem = tf.keras.Sequential([
                layers.Conv2D(64, (3, 3), strides=1, padding='same', use_bias=False),
                layers.BatchNormalization(),
                layers.ReLU(),
            ])

            # Residual stages
            self.layer1 = self._build_resblock(64, layer_dims[0], stride=1)
            self.layer2 = self._build_resblock(128, layer_dims[1], stride=2)
            self.layer3 = self._build_resblock(256, layer_dims[2], stride=2)
            self.layer4 = self._build_resblock(512, layer_dims[3], stride=2)

            # Classifier
            self.avgpool = layers.GlobalAveragePooling2D()
            self.fc = layers.Dense(num_classes)

        def _build_resblock(self, filter_num, blocks, stride=1):
            """Build a sequence of residual blocks."""
            res_blocks = tf.keras.Sequential()
            # Only the first block of a stage may downsample
            res_blocks.add(BasicBlock(filter_num, stride))
            for _ in range(1, blocks):
                res_blocks.add(BasicBlock(filter_num, stride=1))
            return res_blocks

        def call(self, inputs, training=None):
            x = self.stem(inputs, training=training)
            x = self.layer1(x, training=training)
            x = self.layer2(x, training=training)
            x = self.layer3(x, training=training)
            x = self.layer4(x, training=training)
            x = self.avgpool(x)
            x = self.fc(x)  # Raw logits; softmax is applied inside the loss
            return x


    def resnet18(num_classes=10):
        """Build the ResNet-18 model."""
        return ResNet([2, 2, 2, 2], num_classes)
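To see what the four stages do to a 32×32 input, the feature-map sizes can be traced by hand. The sketch below is an illustration with hypothetical names; it assumes the rule that a convolution with `padding='same'` and stride s produces an output of size ceil(input / s), and uses the filter counts and strides from the model code above.

```python
import math

# (filters, stride of the first block) for layer1..layer4, as in the model above
STAGES = [(64, 1), (128, 2), (256, 2), (512, 2)]


def feature_map_shapes(input_size=32, stages=STAGES):
    """Return (name, height, width, channels) after the stem and each stage."""
    size = input_size  # the 3x3, stride-1 stem keeps the spatial size
    shapes = [("stem", size, size, 64)]
    for i, (filters, stride) in enumerate(stages, start=1):
        size = math.ceil(size / stride)  # 'same' padding: out = ceil(in / stride)
        shapes.append((f"layer{i}", size, size, filters))
    return shapes


for name, h, w, c in feature_map_shapes():
    print(f"{name}: {h}x{w}x{c}")
```

Global average pooling then reduces the final 4×4×512 map to a 512-value vector, which the dense layer maps to the 10 class logits.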

4. Define the MLflow training process.

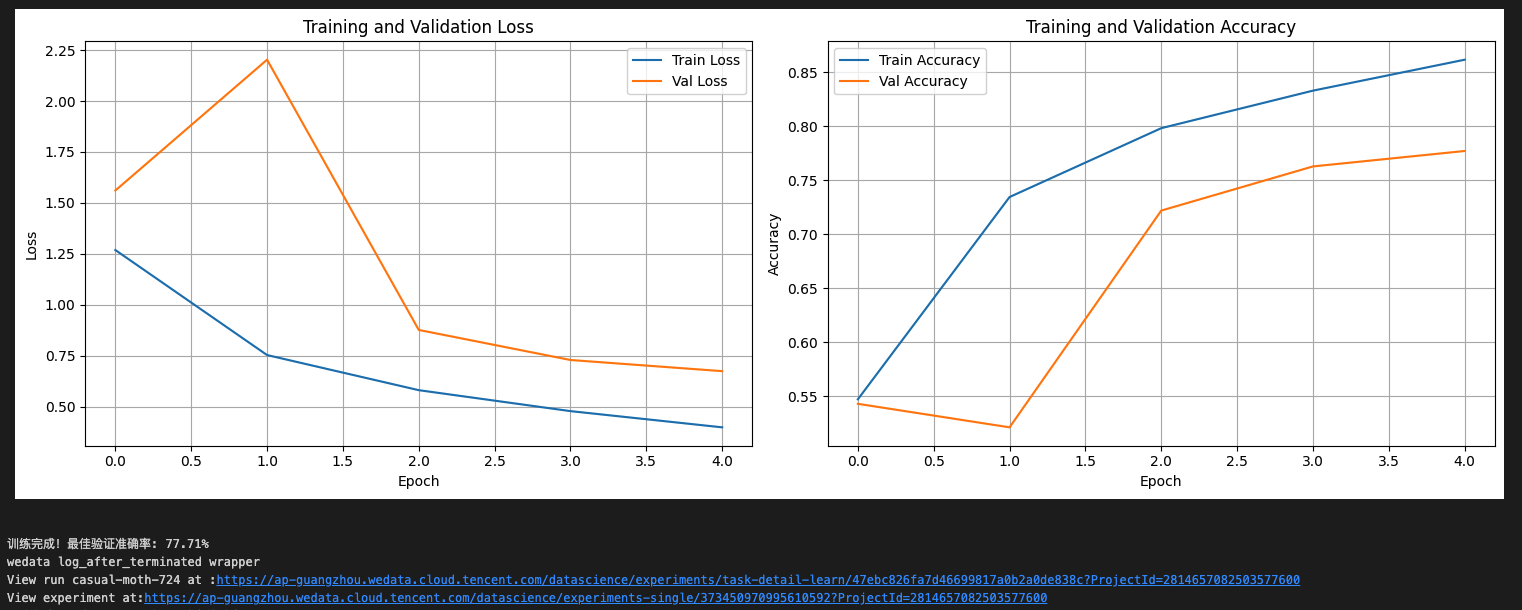

    import json
    import os
    from datetime import datetime

    import matplotlib.pyplot as plt
    import mlflow
    import mlflow.tensorflow
    import tensorflow as tf


    class CIFAR10Trainer:
        def __init__(self, config_path='config/training_config.json'):
            self.config = self.load_config(config_path)
            self.setup_mlflow()
            self.model = None
            self.optimizer = None

        def load_config(self, config_path):
            """Load the hyperparameter configuration from a JSON file."""
            with open(config_path, 'r') as f:
                return json.load(f)

        def setup_mlflow(self):
            """Set up MLflow experiment tracking."""
            # Replace with the address of your MLflow tracking server;
            # MLflow normally expects a scheme prefix such as http://
            mlflow.set_tracking_uri("30.22.40.186:5000")
            mlflow.set_experiment("cifar10-resnet-tf2.2-local")

        def create_optimizer(self):
            """Create the optimizer: Adam if configured, otherwise SGD with momentum."""
            if self.config['optimizer'] == 'adam':
                return tf.keras.optimizers.Adam(learning_rate=self.config['learning_rate'])
            else:
                return tf.keras.optimizers.SGD(
                    learning_rate=self.config['learning_rate'],
                    momentum=self.config.get('momentum', 0.9)
                )

        def train(self):
            """Train the model and log the run to MLflow."""
            # Create the TFRecord files if they do not exist yet
            if not os.path.exists(self.config['train_tfrecord_path']):
                print("Creating TFRecord files...")
                try:
                    train_samples, test_samples = create_cifar10_tfrecord(self.config['cifar10_local_path'])
                    print(f"Successfully loaded: {train_samples} training samples, {test_samples} test samples")
                except FileNotFoundError as e:
                    print(f"Error: {e}")
                    print("Please ensure cifar-10-python.tar.gz exists in the current directory")
                    return

            with mlflow.start_run():
                # Log hyperparameters
                mlflow.log_params(self.config)
                mlflow.log_param("framework", f"TensorFlow {tf.__version__}")
                mlflow.log_param("dataset", "CIFAR-10")
                mlflow.log_param("data_source", "local_file")
                mlflow.log_param("start_time", datetime.now().isoformat())

                # Create the datasets
                train_dataset = create_dataset(self.config, is_training=True)
                val_dataset = create_dataset(self.config, is_training=False)

                # Create the model and the optimizer
                self.model = resnet18(num_classes=self.config['num_classes'])
                self.optimizer = self.create_optimizer()

                # Compile the model
                self.model.compile(
                    optimizer=self.optimizer,
                    loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
                    metrics=['accuracy']
                )

                # Build the model and print its summary
                self.model.build((None, self.config['image_size'],
                                  self.config['image_size'], 3))
                self.model.summary()

                # Running metrics
                train_loss = tf.keras.metrics.Mean(name='train_loss')
                train_accuracy = tf.keras.metrics.SparseCategoricalAccuracy(name='train_accuracy')
                val_loss = tf.keras.metrics.Mean(name='val_loss')
                val_accuracy = tf.keras.metrics.SparseCategoricalAccuracy(name='val_accuracy')

                @tf.function
                def train_step(images, labels):
                    with tf.GradientTape() as tape:
                        predictions = self.model(images, training=True)
                        # Per-example losses, shape (batch_size,)
                        loss = tf.keras.losses.sparse_categorical_crossentropy(
                            labels, predictions, from_logits=True)
                    gradients = tape.gradient(loss, self.model.trainable_variables)
                    self.optimizer.apply_gradients(zip(gradients, self.model.trainable_variables))
                    train_loss(loss)
                    train_accuracy(labels, predictions)
                    return loss

                best_val_acc = 0.0
                train_losses = []
                train_accs = []
                val_losses = []
                val_accs = []

                print("Start training...")
                for epoch in range(self.config['epochs']):
                    # Reset metrics at the start of each epoch
                    train_loss.reset_state()
                    train_accuracy.reset_state()
                    val_loss.reset_state()
                    val_accuracy.reset_state()

                    # Training step
                    for batch, (images, labels) in enumerate(train_dataset):
                        loss = train_step(images, labels)
                        if batch % 50 == 0:
                            print(f'Epoch {epoch+1}, Batch {batch}, '
                                  f'Loss: {tf.reduce_mean(loss).numpy():.4f}')

                    # Validation step
                    for images, labels in val_dataset:
                        predictions = self.model(images, training=False)
                        v_loss = tf.keras.losses.sparse_categorical_crossentropy(
                            labels, predictions, from_logits=True)
                        val_loss(v_loss)
                        val_accuracy(labels, predictions)

                    # Compute epoch metrics as plain Python floats
                    epoch_train_loss = float(train_loss.result().numpy())
                    epoch_train_acc = float(train_accuracy.result().numpy())
                    epoch_val_loss = float(val_loss.result().numpy())
                    epoch_val_acc = float(val_accuracy.result().numpy())

                    # Log to MLflow
                    mlflow.log_metric("train_loss", epoch_train_loss, step=epoch)
                    mlflow.log_metric("train_accuracy", epoch_train_acc, step=epoch)
                    mlflow.log_metric("val_loss", epoch_val_loss, step=epoch)
                    mlflow.log_metric("val_accuracy", epoch_val_acc, step=epoch)
                    mlflow.log_metric("learning_rate",
                                      float(self.optimizer.learning_rate.numpy()), step=epoch)

                    # Save the training history
                    train_losses.append(epoch_train_loss)
                    train_accs.append(epoch_train_acc)
                    val_losses.append(epoch_val_loss)
                    val_accs.append(epoch_val_acc)

                    print(f'Epoch {epoch+1}/{self.config["epochs"]}: '
                          f'Train Loss: {epoch_train_loss:.4f}, Train Acc: {epoch_train_acc*100:.2f}%, '
                          f'Val Loss: {epoch_val_loss:.4f}, Val Acc: {epoch_val_acc*100:.2f}%')

                    # Save the best model
                    if epoch_val_acc > best_val_acc:
                        best_val_acc = epoch_val_acc
                        mlflow.tensorflow.log_model(self.model, "best_model")
                        print(f"New best model saved, validation accuracy: {epoch_val_acc*100:.2f}%")

                # Log the final results
                mlflow.log_metric("best_val_accuracy", best_val_acc)
                mlflow.tensorflow.log_model(self.model, "final_model")

                # Plot the training curves
                self.plot_training_history(train_losses, train_accs, val_losses, val_accs)

                print(f"Training complete! Best validation accuracy: {best_val_acc*100:.2f}%")

        def plot_training_history(self, train_losses, train_accs, val_losses, val_accs):
            """Plot the training history curves and log the figure to MLflow."""
            fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(15, 5))

            # Loss curves
            ax1.plot(train_losses, label='Train Loss')
            ax1.plot(val_losses, label='Val Loss')
            ax1.set_title('Training and Validation Loss')
            ax1.set_xlabel('Epoch')
            ax1.set_ylabel('Loss')
            ax1.legend()
            ax1.grid(True)

            # Accuracy curves
            ax2.plot(train_accs, label='Train Accuracy')
            ax2.plot(val_accs, label='Val Accuracy')
            ax2.set_title('Training and Validation Accuracy')
            ax2.set_xlabel('Epoch')
            ax2.set_ylabel('Accuracy')
            ax2.legend()
            ax2.grid(True)

            plt.tight_layout()
            plt.savefig('training_history.png')
            mlflow.log_artifact('training_history.png')
            plt.show()
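The training loop above feeds raw logits to `sparse_categorical_crossentropy` with `from_logits=True`. As a rough illustration of what that loss and the accuracy metric compute, here is a dependency-free sketch; the function names are hypothetical and this is not the TensorFlow implementation, only the same formula, loss = log(sum(exp(logits))) - logits[label].

```python
import math


def sparse_ce_from_logits(logits, label):
    """Cross-entropy of one example, computed stably from raw logits."""
    m = max(logits)  # subtract the max before exponentiating to avoid overflow
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def batch_metrics(batch_logits, labels):
    """Mean loss and accuracy over a batch, like the Mean/Accuracy metrics above."""
    losses = [sparse_ce_from_logits(l, y) for l, y in zip(batch_logits, labels)]
    correct = sum(1 for l, y in zip(batch_logits, labels) if l.index(max(l)) == y)
    return sum(losses) / len(losses), correct / len(labels)


# An untrained 10-class model with equal logits has loss ln(10), about 2.30
print(round(sparse_ce_from_logits([0.0] * 10, 3), 4))
print(batch_metrics([[2.0, 0.0], [0.0, 2.0]], [0, 0]))
```

A first-epoch loss close to ln(10) is therefore expected, and it should fall from there as training proceeds.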

5. Create the main training code.

    # Initialize the trainer; hyperparameters are read from training_config.json
    trainer = CIFAR10Trainer('training_config.json')

    # Start training
    trainer.train()

    print("Training complete! Please view execution results in experiment management.")

6. Define the training hyperparameters in the JSON configuration file.

    {
        "model_name": "resnet18",
        "num_classes": 10,
        "batch_size": 128,
        "learning_rate": 0.001,
        "epochs": 5,
        "optimizer": "adam",
        "weight_decay": 0.0001,
        "momentum": 0.9,
        "image_size": 32,
        "train_tfrecord_path": "./tfrecords/cifar10_train.tfrecord",
        "val_tfrecord_path": "./tfrecords/cifar10_val.tfrecord",
        "data_augmentation": true,
        "horizontal_flip": true,
        "brightness_delta": 0.1,
        "contrast_range": [0.9, 1.1],
        "cifar10_local_path": "cifar-10-python.tar.gz",
        "local_path": "cifar-10-python.tar.gz"
    }
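Because the trainer reads some keys with `config[...]` and others with `config.get(...)`, a missing key only surfaces when the corresponding line runs. A small pre-flight check can catch this earlier. The sketch below is a hypothetical helper, not part of the tutorial's code; the two key sets are derived from how the training code shown in this tutorial accesses the configuration.

```python
import json

# Keys the training code reads with config[...], so they must be present
REQUIRED_KEYS = {
    "num_classes", "learning_rate", "epochs", "optimizer", "image_size",
    "train_tfrecord_path", "val_tfrecord_path", "cifar10_local_path",
}
# Keys it reads with config.get(...), so they may be omitted
OPTIONAL_KEYS = {
    "batch_size", "momentum", "data_augmentation",
    "horizontal_flip", "brightness_delta", "contrast_range",
}


def check_config(config):
    """Return (missing required keys, keys the training code never reads)."""
    keys = set(config)
    missing = sorted(REQUIRED_KEYS - keys)
    unused = sorted(keys - REQUIRED_KEYS - OPTIONAL_KEYS)
    return missing, unused


sample = json.loads('{"num_classes": 10, "epochs": 5, "weight_decay": 0.0001}')
missing, unused = check_config(sample)
print("missing:", missing)
print("unused:", unused)
```

Run against the configuration above, such a check would report `model_name`, `weight_decay`, and `local_path` as keys that the training code shown here never reads.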

7. Finally, run the code cells in order and check the printed output. When training completes, the results are reported to experiment management.

Viewing Execution Results in Experiment Management

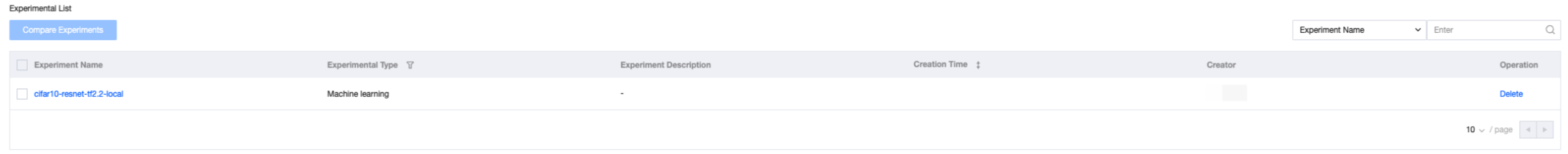

1. Open the data science experiment management module. The records produced by the code you just ran are already listed there. The page shows the experiment name, experiment type, experiment description, creation time, owner, available operations, and so on.

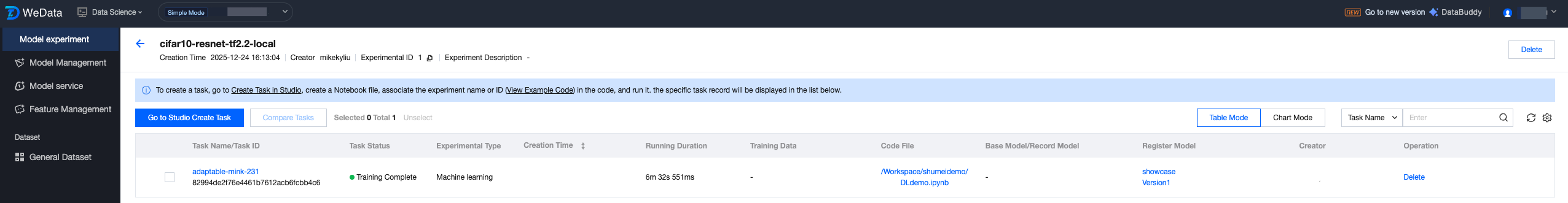

2. Click the experiment name to open its record page, where you can view the task you just submitted. The page shows the task name, task status, creation time, duration, code files, registered model, and so on.

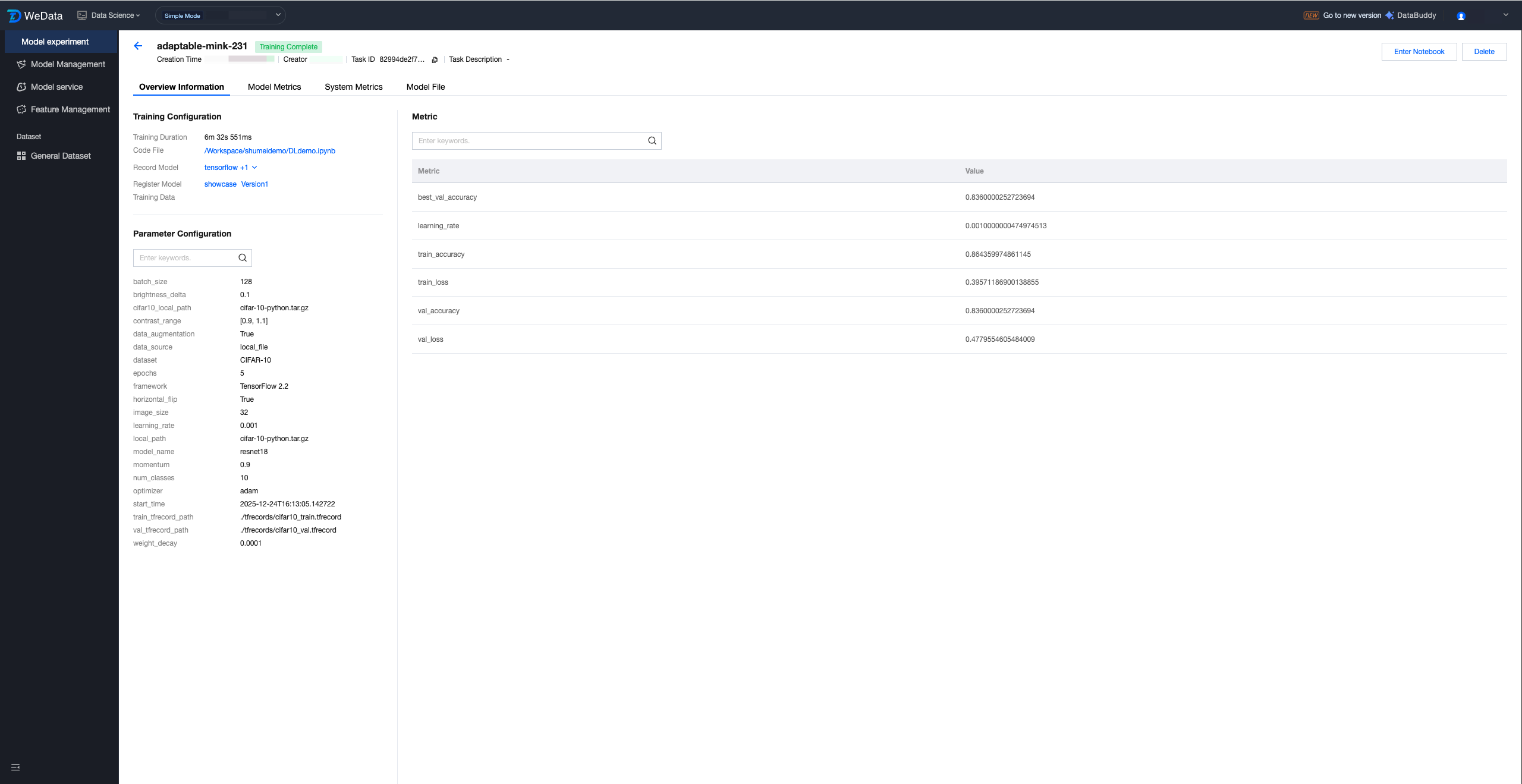

3. Click the task name to open the overview page of the run details, where you can view the submitted training hyperparameters, model metrics, and so on.

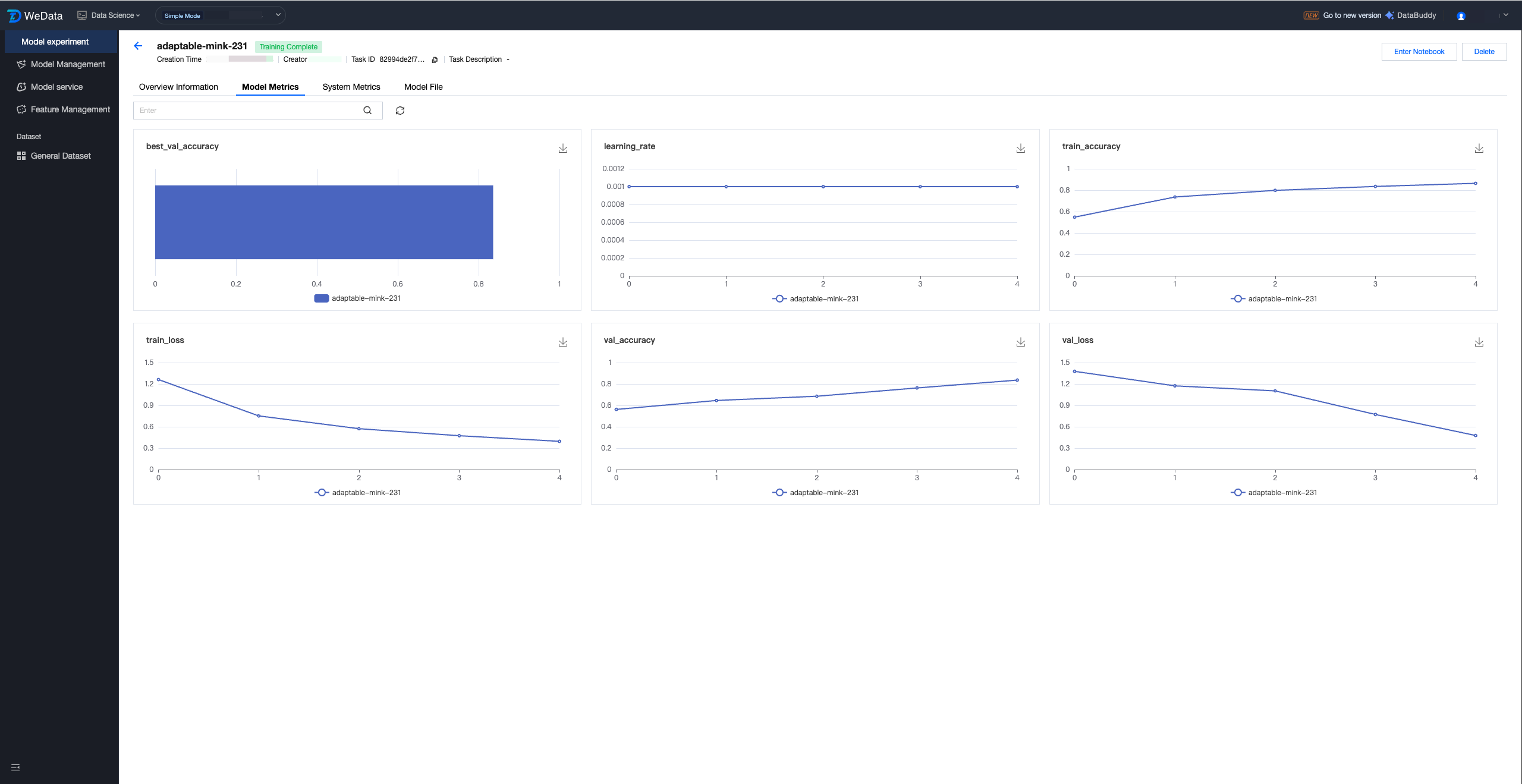

4. View model metric records.

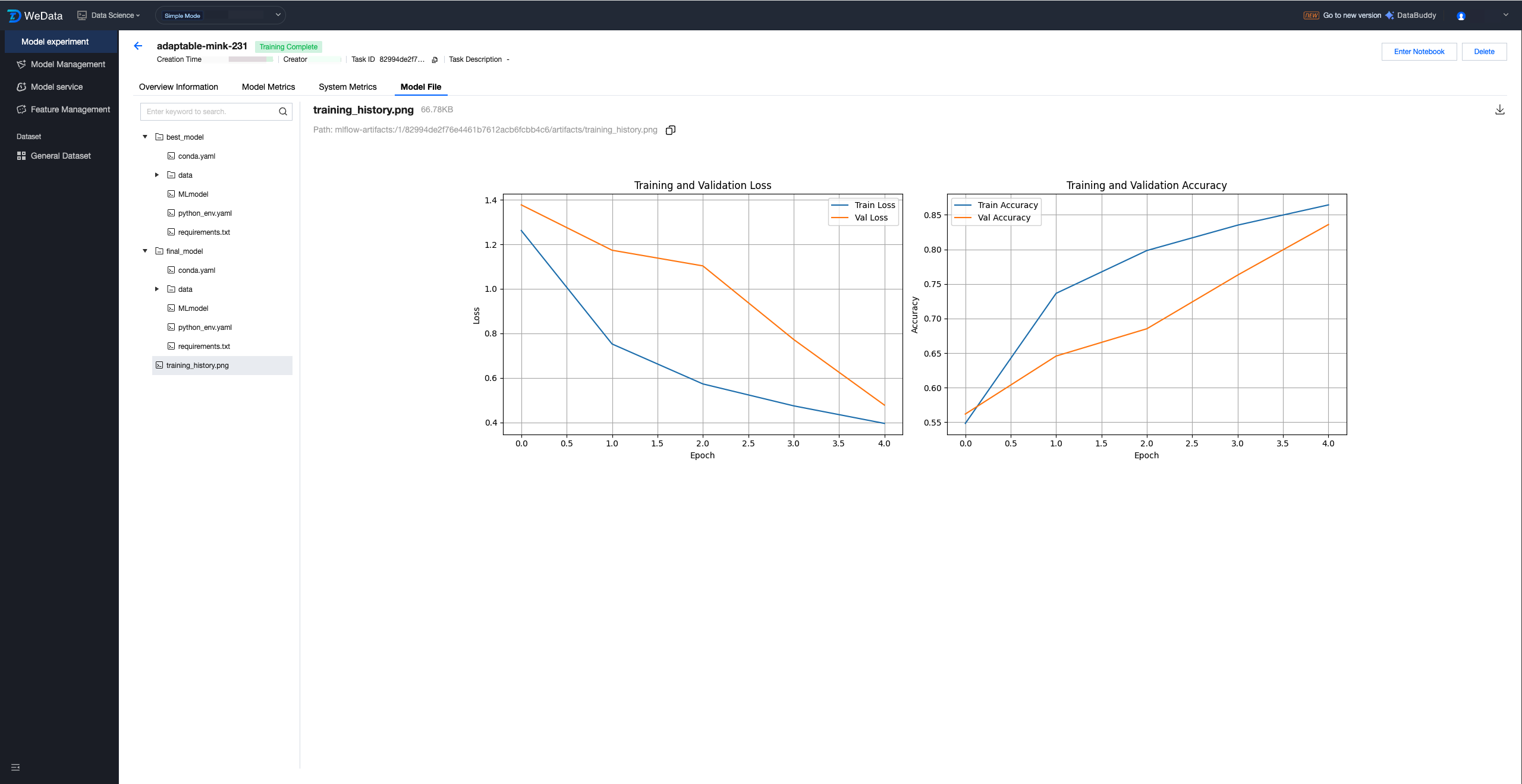

5. View model files and other records such as submitted images.

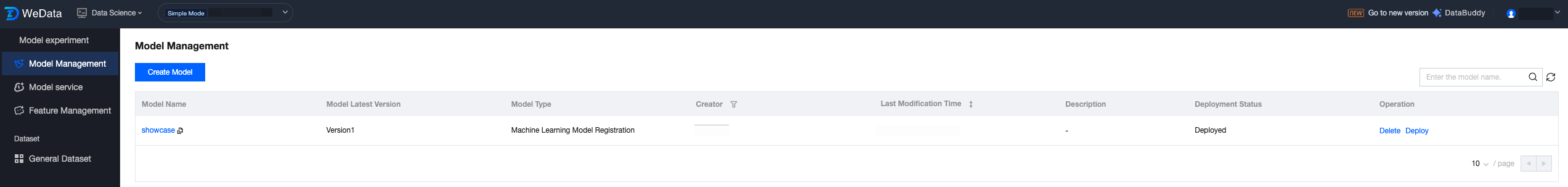

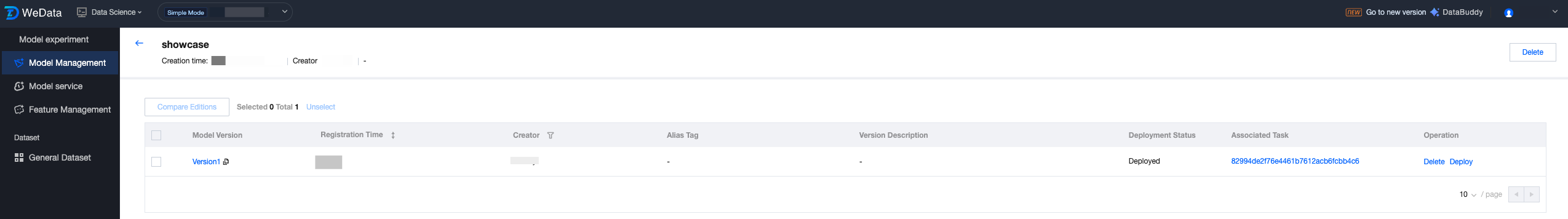

Viewing Registered Models in Model Management

1. Click the model management page to view recorded models.

2. Click the model name to enter the model version list and view version records.

Machine Learning Practical Tutorial: Feature-Based Wine Predictive Model Development

Note:

The prerequisites for this tutorial are: the data science feature has been enabled, DLC CPU resources have been purchased, and a machine learning resource group has been created.

Basic Information

Data

This tutorial uses the Wine Quality dataset, a classic machine learning dataset for multiclass classification and regression tasks. It was published by a research team at the University of Minho in Portugal and is commonly used to assess model performance on tasks that predict a quality score from physicochemical properties.

Model

This tutorial trains a random forest classifier (scikit-learn's RandomForestClassifier), an ensemble of decision trees that is well suited to tabular features. The model in this tutorial uses 100 trees with a maximum depth of 3 and predicts the wine quality score from the features retrieved from the feature table.

Resource

DLC CPU resources: "Standard-S 1.1" or EMR CPU resources: "Version 350, includes EG component"

Environment

StandardSpark

Editing Code in Studio to Initiate Model Training

1. Create the feature store client.

    # Imports for the feature store client
    import os
    from datetime import date, datetime

    from pyspark.sql.functions import col
    from pyspark.sql.types import (DateType, DoubleType, IntegerType, StringType,
                                   StructField, StructType, TimestampType)
    from pytz import timezone
    from wedata.feature_store.client import FeatureStoreClient
    from wedata.feature_store.common.store_config.redis import RedisStoreConfig
    from wedata.feature_store.entities.feature_lookup import FeatureLookup
    from wedata.feature_store.entities.training_set import TrainingSet

    # SecretId and SecretKey of your Tencent Cloud account
    cloud_secret_id = ""
    cloud_secret_key = ""

    # Data source name
    data_source_name = ""

    # Create the feature store client
    # (spark is assumed to be the SparkSession provided by the notebook environment)
    client = FeatureStoreClient(spark, cloud_secret_id=cloud_secret_id, cloud_secret_key=cloud_secret_key)

    # Feature table, database, and registered table names
    table_name = ""
    database_name = ""
    register_table_name = ""

2. Define a feature lookup to create a training set from existing feature tables.

    from wedata.feature_store.utils import env_utils

    project_id = env_utils.get_project_id()
    expirement_name = f"{table_name}_{project_id}"
    model_name = f"{table_name}_{project_id}"

    # Define the feature lookup
    wine_feature_lookup = FeatureLookup(
        table_name=table_name,
        lookup_key="wine_id",
        timestamp_lookup_key="event_timestamp"
    )

    # Build the base data: keys, label, and event timestamp
    # (wine_df is assumed to be a Spark DataFrame that was loaded earlier and contains these columns)
    inference_data_df = wine_df.select("wine_id", "quality", "event_timestamp")

    # Create the training set
    training_set = client.create_training_set(
        df=inference_data_df,                           # base DataFrame
        feature_lookups=[wine_feature_lookup],          # feature lookup configuration
        label="quality",                                # label column
        exclude_columns=["wine_id", "event_timestamp"]  # columns to exclude from training
    )

    # Get the final training DataFrame
    training_df = training_set.load_df()

    # Print the training set data
    print("\n=== Training set data ===")
    training_df.show(10, True)
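The `timestamp_lookup_key` argument suggests a point-in-time lookup: for each base row, use the most recent feature values recorded at or before that row's `event_timestamp`. The source does not spell out this behavior, so treat the following as an assumption borrowed from how comparable feature stores work, not as a description of the wedata implementation. The sketch uses plain Python dictionaries, a hypothetical helper name, and illustrative sample values.

```python
from datetime import datetime

# Illustrative feature table rows (values are made up for the example)
FEATURE_ROWS = [
    {"wine_id": 1, "event_timestamp": datetime(2024, 1, 1), "alcohol": 9.4},
    {"wine_id": 1, "event_timestamp": datetime(2024, 2, 1), "alcohol": 9.8},
    {"wine_id": 2, "event_timestamp": datetime(2024, 1, 15), "alcohol": 10.1},
]


def point_in_time_lookup(feature_rows, key, event_time):
    """Return the latest feature row for `key` at or before `event_time`, else None."""
    candidates = [
        row for row in feature_rows
        if row["wine_id"] == key and row["event_timestamp"] <= event_time
    ]
    return max(candidates, key=lambda row: row["event_timestamp"], default=None)


# A label observed on 2024-01-20 only sees the January feature values
print(point_in_time_lookup(FEATURE_ROWS, 1, datetime(2024, 1, 20)))
```

Joining this way keeps feature values recorded after the label's timestamp out of the training set, which avoids leaking future information into training.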

3. Start model training.

    # Train the model
    import os

    import mlflow.sklearn
    import pandas as pd
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.metrics import classification_report
    from sklearn.model_selection import train_test_split

    project_id = os.environ["WEDATA_PROJECT_ID"]
    mlflow.set_experiment(experiment_name=expirement_name)

    # Convert the Spark DataFrame to a pandas DataFrame for training
    train_pd = training_df.toPandas()

    # Delete timestamp columns
    # train_pd.drop('event_timestamp', axis=1)

    # Prepare features and labels
    X = train_pd.drop('quality', axis=1)
    y = train_pd['quality']

    # Split into training and test sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

    # Convert datetime columns to Unix timestamps in seconds
    for column in X_train.select_dtypes(include=['datetime', 'datetimetz']):
        X_train[column] = X_train[column].astype('int64') // 10**9  # nanoseconds to seconds

    # Fill missing values with the column median so that dtypes are not downgraded to object
    X_train = X_train.fillna(X_train.median(numeric_only=True))

    # Initialize, train, and log the model
    model = RandomForestClassifier(n_estimators=100, max_depth=3, random_state=42)
    model.fit(X_train, y_train)

    with mlflow.start_run():
        client.log_model(
            model=model,
            artifact_path="wine_quality_prediction",  # model artifact path
            flavor=mlflow.sklearn,
            training_set=training_set,
            registered_model_name=model_name,  # model name (if catalog is enabled, must be the catalog model name)
        )
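Two preprocessing steps in the code above are easy to misread: the integer division by 10**9, which turns pandas' nanosecond timestamps into Unix seconds, and the median fill for missing values. The following standard-library sketch (hypothetical helper names, illustrative values) reproduces both on plain Python data.

```python
from statistics import median


def to_epoch_seconds(nanoseconds):
    """Convert a nanosecond Unix timestamp (pandas int64 form) to whole seconds."""
    return nanoseconds // 10**9


def fill_with_median(values):
    """Replace None entries with the median of the values that are present."""
    present = [v for v in values if v is not None]
    m = median(present)
    return [m if v is None else v for v in values]


print(to_epoch_seconds(1_700_000_000_123_456_789))
print(fill_with_median([1.0, None, 3.0, 10.0]))
```

The median is used rather than the mean because it is less sensitive to outliers in a feature column.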

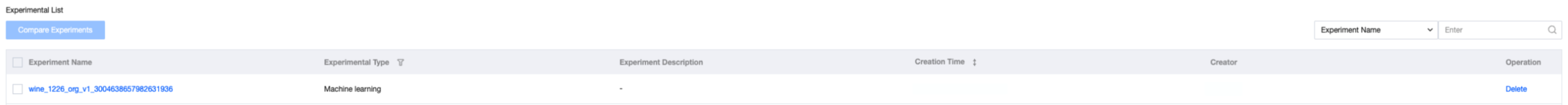

Viewing Execution Results in Experiment Management

1. Open the data science experiment management module. The records produced by the code you just ran are already listed there. The page shows the experiment name, experiment type, experiment description, creation time, owner, available operations, and so on.

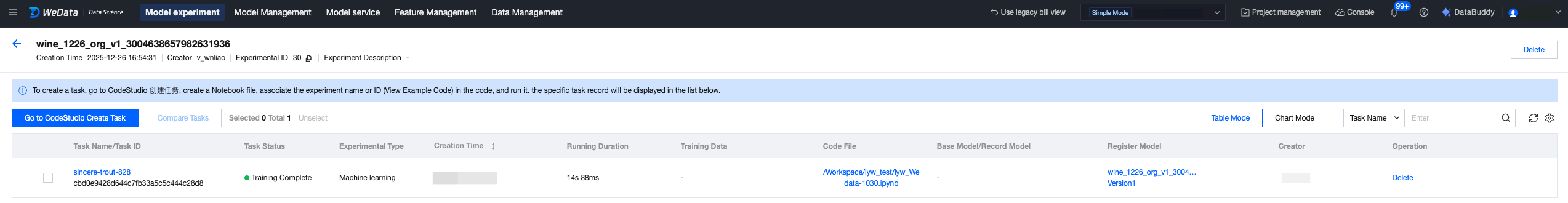

2. Click the experiment name to open its record page, where you can view the task you just submitted. The page shows the task name, task status, creation time, duration, code files, registered model, and so on.

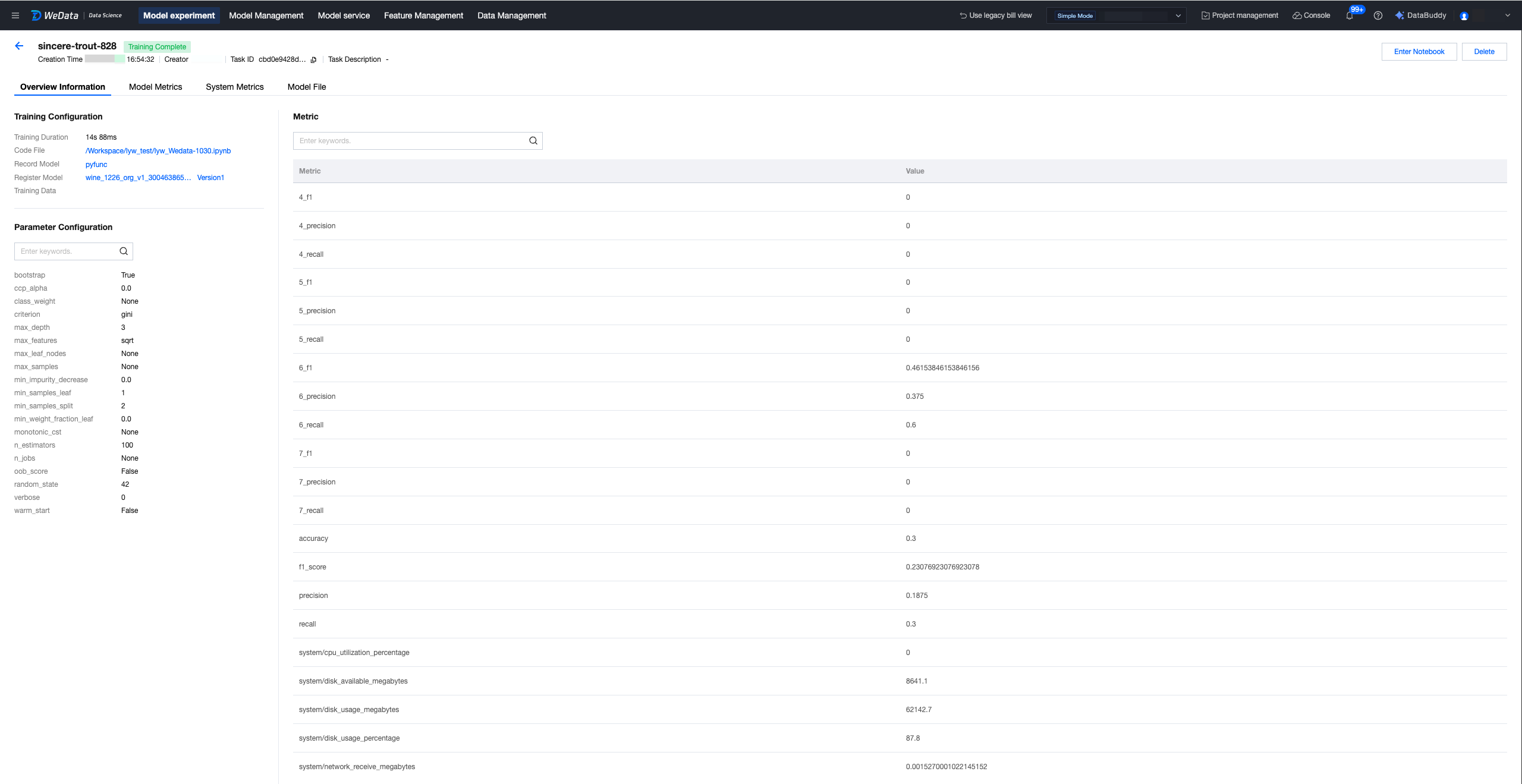

3. Click the task name to open the overview page of the run details, where you can view the submitted training hyperparameters, model metrics, and so on.

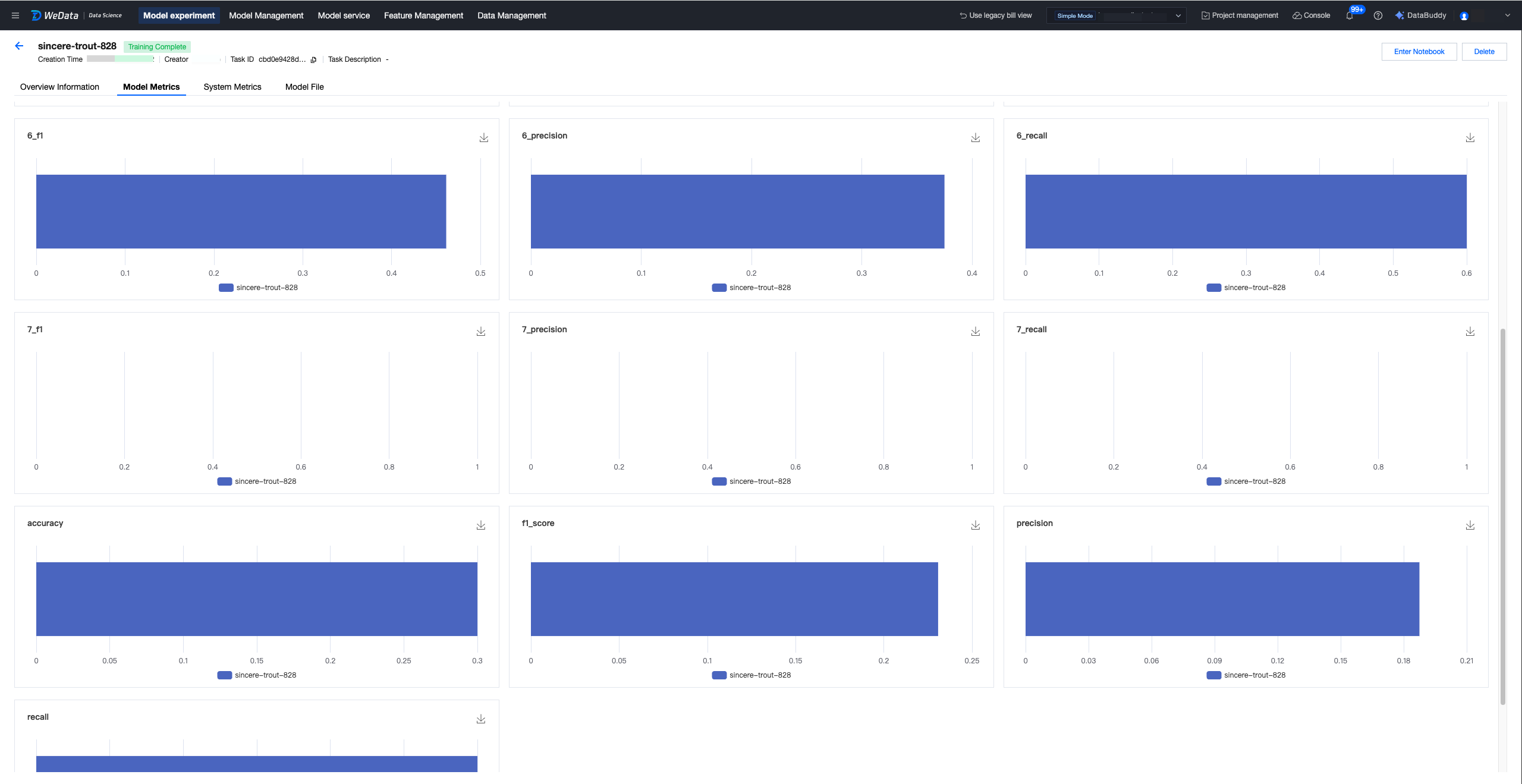

4. View model metrics.

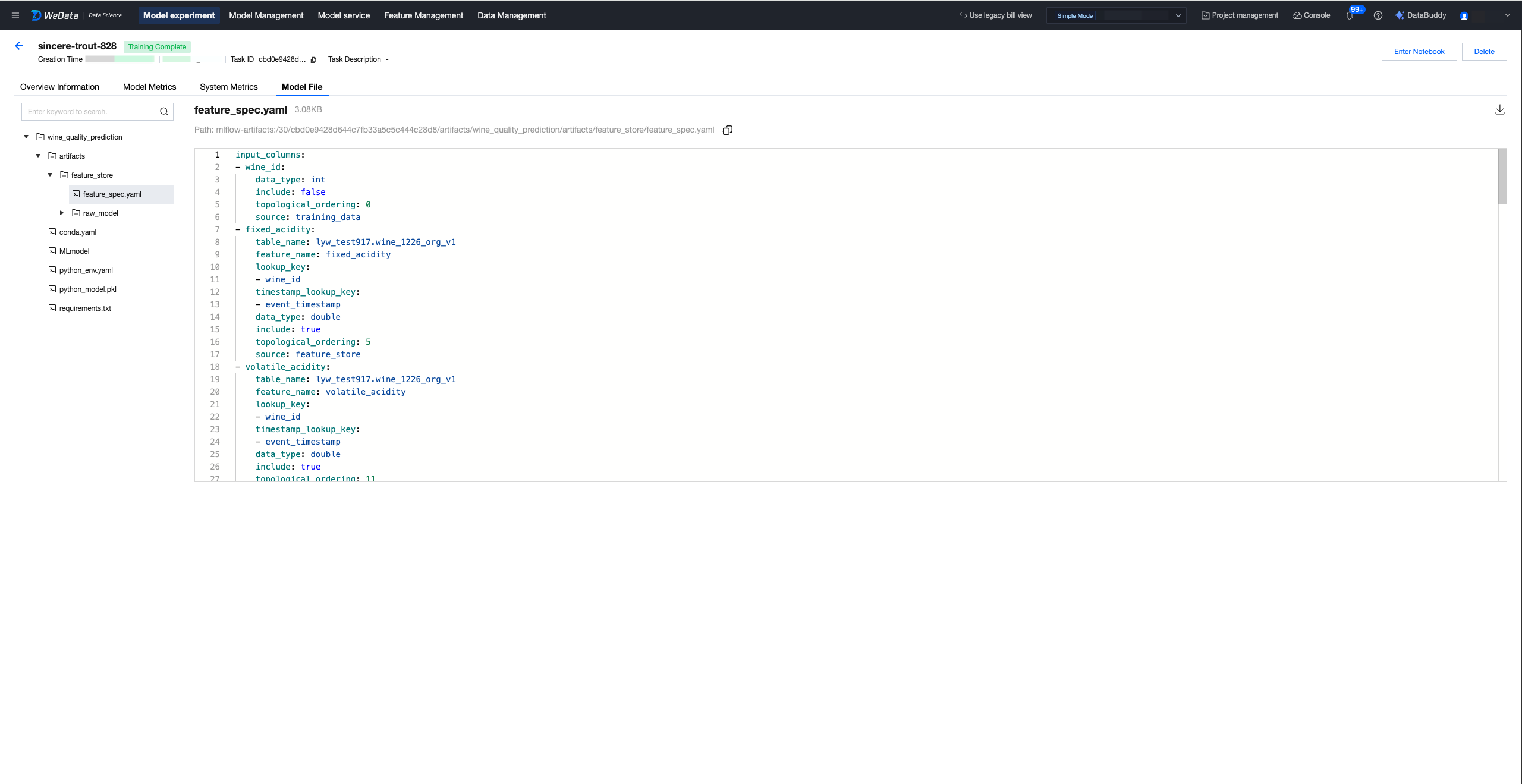

5. View model files. If you build the training dataset from features and log the model with the feature engineering APIs, a feature spec file is stored alongside the model to record where the training features came from.

Viewing Registered Models in Model Management

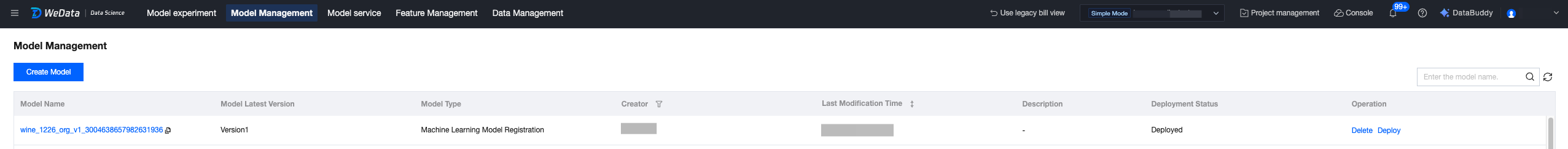

1. Click the model management page to view recorded models.

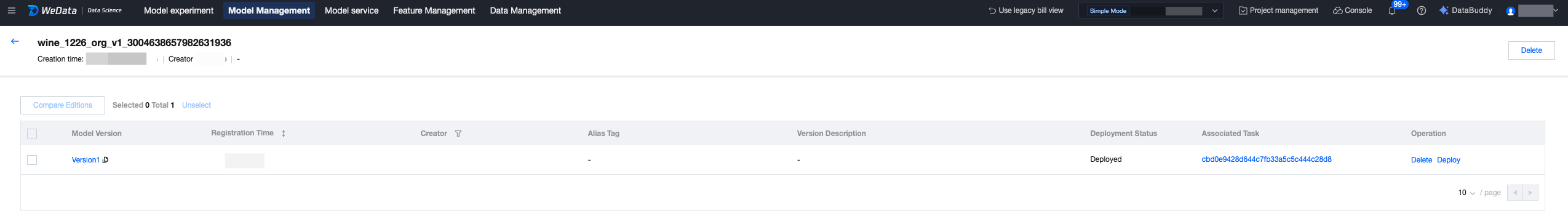

2. Click the model name to enter the model version list and view version records.

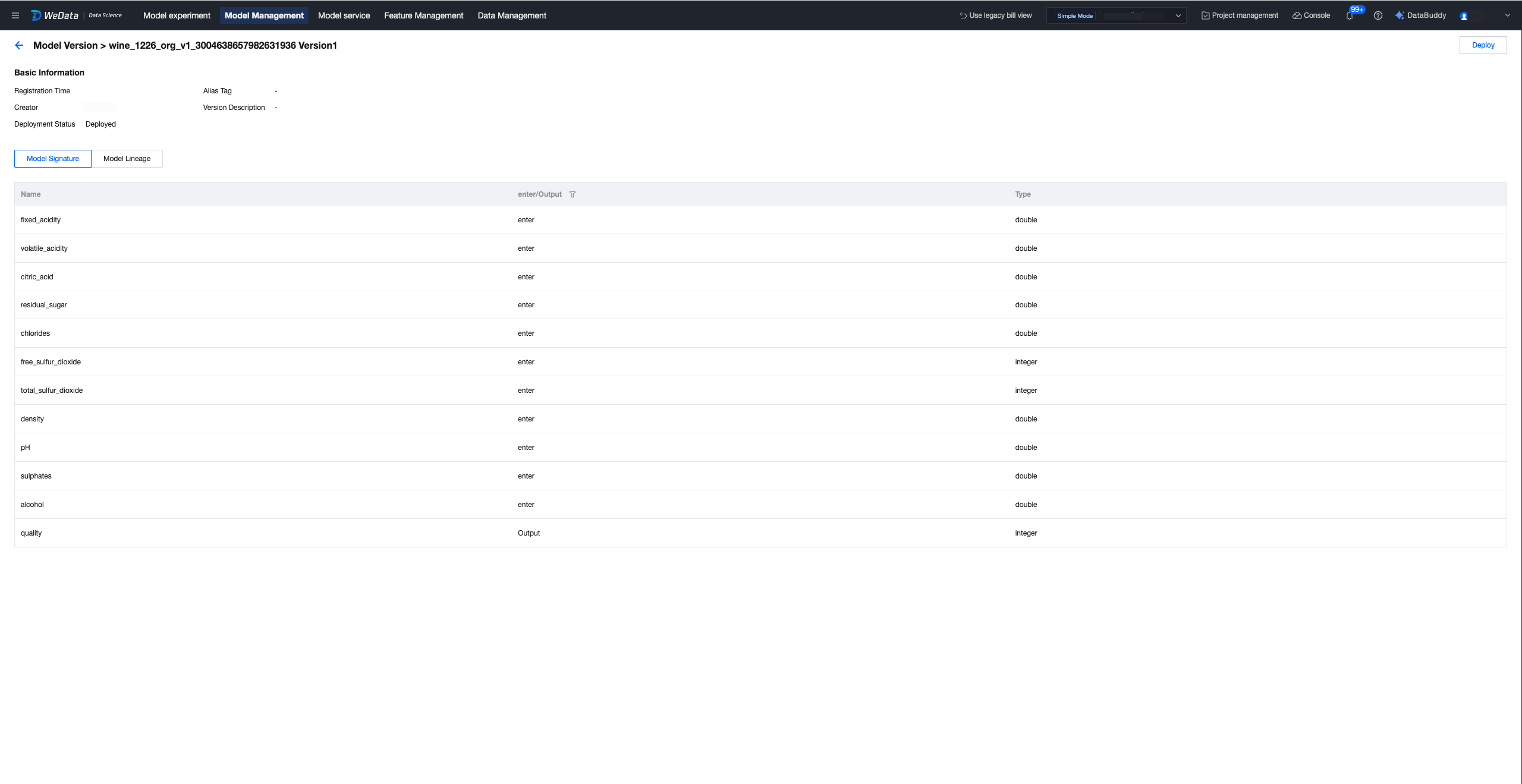

3. Click the model version to view the model signature, lineage, etc.
