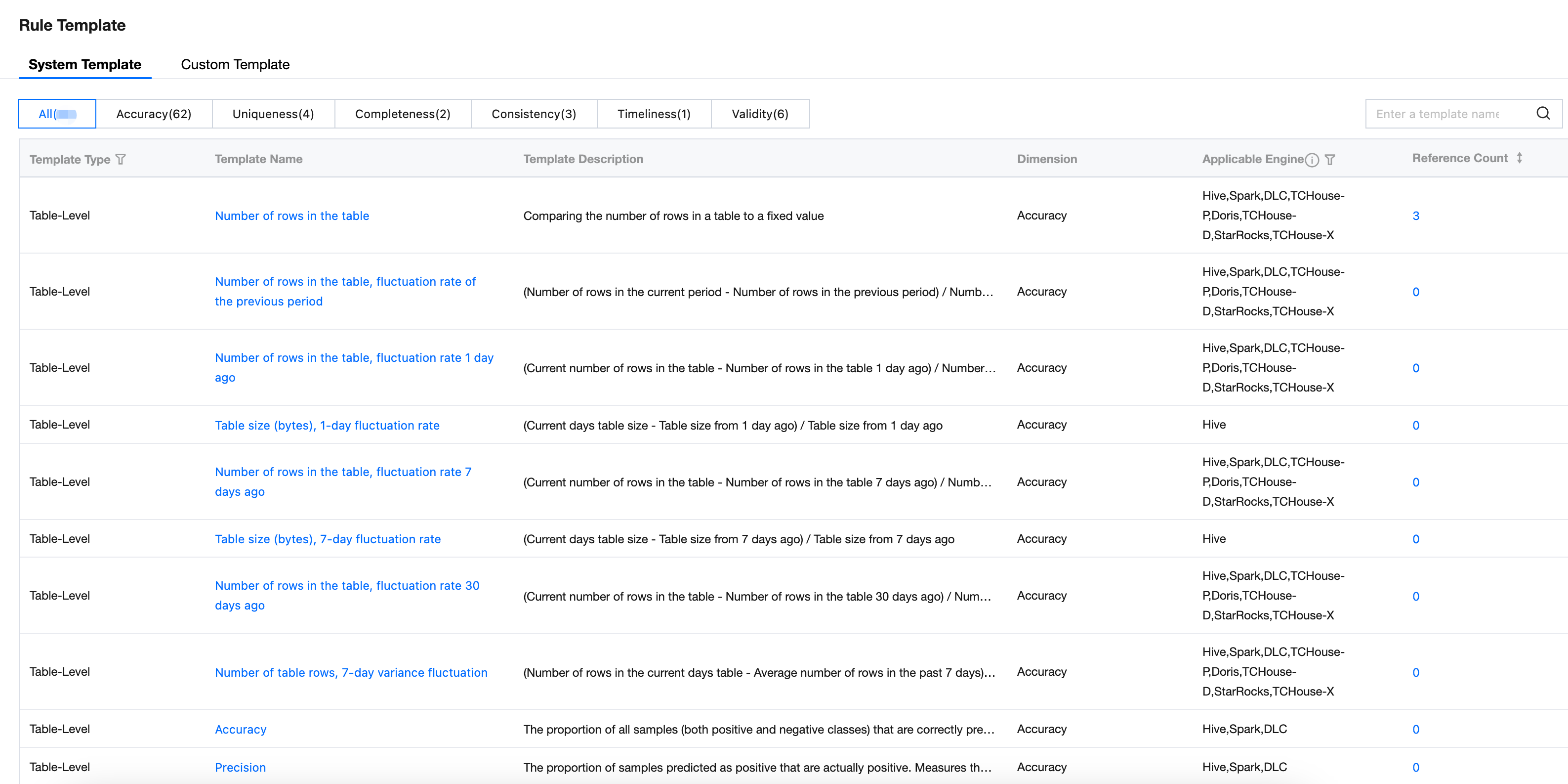

View System Built-In Templates

The system provides 76 built-in rule templates that can be used directly. Before using a template, make sure you understand its use cases.

View Template List

You can view the list of system templates on the rule template management page.

You can search for templates by name or description keyword, and filter them by type, dimension, and applicable engine. On the custom template page, you can also create templates and manage them in bulk.

| Field | Details |
| --- | --- |
| Template Type | Currently supported template types are table-level and field-level. Supports filtering. |
| Template Name | Name of the template. |
| Template Description | Detailed description of the template's execution logic and calculation formula. |
| Dimension | Accuracy, uniqueness, integrity, consistency, timeliness, or validity. Supports filtering. |
| Applicable Engine | Engine types the template applies to: Hive, Spark, DLC, TCHouse-D, TCHouse-P, TCHouse-X, Doris, and StarRocks. Supports filtering by engine. |
| Number of References | Number of rules that currently reference the template. Supports sorting. |

Template Distribution

The 76 templates cover six dimensions: accuracy, uniqueness, integrity, consistency, timeliness, and validity. They are distributed as follows:

Normal Table Templates

| Monitoring Object | Rule Dimension | Compute Item | Calculation Subitem | Description | Numeric: Fixed Value | Numeric: Value Range | Volatility: Previous Period | Volatility: 1 Day Ago | Volatility: 7 Days Ago | Volatility: 30 Days Ago | Standard Score: 7 Days | Standard Score: 30 Days | Other: Empty/Unique/Duplicate | Other: Format Match | Other: Enumeration Range | Other: Value Comparison |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Table-level | Accuracy | Number of rows | | Counts the data rows. | ✅ | - | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Table-level | Accuracy | Table size (bytes) | | Calculates the size of the table (Hive tables only). | ✅ | - | - | ✅ | ✅ | - | - | - | - | - | - | - |
| Table-level | Timeliness | Data output timeliness | | Counts the data rows; a row count of 0 means no data was produced. | ✅ (= 0) | - | - | - | - | - | - | - | - | - | - | - |
| Field-level | Accuracy | Field value | Average value | Computes the numerical average. | ✅ | - | - | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Field-level | Accuracy | Field value | Aggregate value | Computes the numerical aggregate value. | ✅ | - | - | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Field-level | Accuracy | Field value | Median | Computes the numerical median. | ✅ | - | - | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Field-level | Accuracy | Field value | Minimum value | Computes the minimum numerical value. | ✅ | - | - | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Field-level | Accuracy | Field value | Maximum value | Computes the maximum numerical value. | ✅ | - | - | ✅ | ✅ | ✅ | ✅ | ✅ | - | - | - | - |
| Field-level | Uniqueness | Unique field values | Number of unique values | Checks unique values. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Uniqueness | Unique field values | Number of unique values / total rows | Ratio of unique values to total rows. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Uniqueness | Duplicate field values | Duplicate count | Checks for duplicate values. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Uniqueness | Duplicate field values | Duplicate count / total rows | Ratio of duplicate values to total rows. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Integrity | Field null values | Null count | Checks for null values. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Integrity | Field null values | Null count / total rows | Ratio of null values to total rows. | - | - | - | - | - | - | - | - | ✅ | - | - | - |
| Field-level | Validity | Phone number format | Invalid count | Regular-expression check against the mainland China mobile number format. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Validity | Phone number format | Invalid count / total rows | Ratio of invalid phone numbers to total rows. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Validity | Email format | Invalid count | Regular-expression check against the email address format. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Validity | Email format | Invalid count / total rows | Ratio of invalid email addresses to total rows. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Validity | ID card format | Invalid count | Regular-expression check against the mainland China ID card number format. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Validity | ID card format | Invalid count / total rows | Ratio of invalid ID card numbers to total rows. | - | - | - | - | - | - | - | - | - | ✅ | - | - |
| Field-level | Consistency | Field data range | Value range | Checks whether the value falls within the specified range. | - | ✅ | - | - | - | - | - | - | - | - | - | - |
| Field-level | Consistency | Field data range | Enumeration range | Checks whether the character value is one of the enumerated values. | - | - | - | - | - | - | - | - | - | - | ✅ | - |
| Field-level | Consistency | Field data correlation | | Compares the value with a field in another database table. | - | - | - | - | - | - | - | - | - | - | - | ✅ |
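The field-level checks in the table above reduce to simple counts over a column. The following Python sketch shows one way to compute them over an in-memory list of values, with `None` standing for a null. It is an illustration, not the product's implementation: the regular expressions, the choice to skip nulls in the format, range, and enumeration checks, the reading of "aggregate value" as a sum, and the reading of "duplicate count" as the number of rows whose value occurs more than once are all assumptions, and every function name is hypothetical.

```python
import re
import statistics
from collections import Counter

# Hypothetical patterns for illustration only; the product's actual
# regular expressions are not published in this document.
CN_MOBILE = re.compile(r"1[3-9]\d{9}")           # 11-digit mainland China mobile number
EMAIL = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")  # loose email shape check
CN_ID_CARD = re.compile(r"\d{17}[\dXx]")         # 18-character mainland China ID number


def ratio(count, total_rows):
    """Divide by the total row count (nulls included); 0.0 for an empty table."""
    return count / total_rows if total_rows else 0.0


def field_value_stats(values):
    """Accuracy: average, aggregate (assumed to be the sum), median, min, max.

    Assumes at least one non-null value.
    """
    nums = [v for v in values if v is not None]
    return {
        "avg": statistics.mean(nums),
        "sum": sum(nums),
        "median": statistics.median(nums),
        "min": min(nums),
        "max": max(nums),
    }


def null_stats(values):
    """Integrity: null count and null count / total rows."""
    nulls = sum(v is None for v in values)
    return nulls, ratio(nulls, len(values))


def unique_stats(values):
    """Uniqueness: number of distinct non-null values and that number / total rows."""
    distinct = len({v for v in values if v is not None})
    return distinct, ratio(distinct, len(values))


def duplicate_stats(values):
    """Uniqueness: rows whose non-null value occurs more than once (assumed definition)."""
    counts = Counter(v for v in values if v is not None)
    dups = sum(c for c in counts.values() if c > 1)
    return dups, ratio(dups, len(values))


def invalid_format_stats(values, pattern):
    """Validity: non-null values that do not fully match the pattern, and that count / total rows."""
    invalid = sum(1 for v in values if v is not None and not pattern.fullmatch(str(v)))
    return invalid, ratio(invalid, len(values))


def out_of_range_count(values, low, high):
    """Consistency (value range): non-null values outside [low, high]."""
    return sum(1 for v in values if v is not None and not low <= v <= high)


def out_of_enum_count(values, allowed):
    """Consistency (enumeration range): non-null values not in the allowed set."""
    return sum(1 for v in values if v is not None and v not in allowed)


phones = ["13812345678", None, "12345", "13812345678", "15900001111"]
print(null_stats(phones))                       # (1, 0.2)
print(unique_stats(phones))                     # (3, 0.6)
print(duplicate_stats(phones))                  # (2, 0.4)
print(invalid_format_stats(phones, CN_MOBILE))  # (1, 0.2)
```

Each ratio divides by the total row count, matching the "/ total rows" subitems in the table.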

Model Inference Table Templates

For model inference tables, 20 templates are provided under the accuracy dimension, covering model drift, classification models, and regression models, as follows:

Note:

These templates currently apply only to the DLC engine.

Monitoring Object | Level | Metric Name | Applicable Feature Type | Meaning & Calculation Method |

Model Inference Table | classification model | accuracy | - | Percentage of correct predictions True Positive (TP, actual positive): Actually class i and predicted as class i. For example: label is class A and predict is class A. |

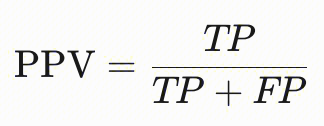

| | Precision | - | Proportion of actual positives among samples predicted as positive, TP/(TP+FP) True Positive (TP, actual positive): Actually positive and predicted as positive. For example: label is class A and predict is class A. False Positive (FP, false positive): Actually negative but predicted as positive. For example: label is not class A and predict is class A. False Negative (FN, false negative): Actually positive but predicted as negative. For example: label is class A and predict is not class A. True Negative (TN, true negative): Actually negative and predicted as negative. For example: label is not class A and predict is not class A. |

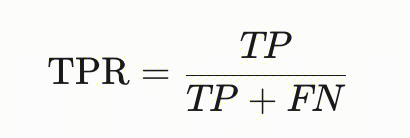

| | recall rate | - | Proportion of correctly predicted positives among actual positive samples, TP/(TP+FN) True Positive (TP, actual positive): Actually positive and predicted as positive. For example: label is class A and predict is class A. False Positive (FP, false positive): Actually negative but predicted as positive. For example: label is not class A and predict is class A. False Negative (FN, false negative): Actually positive but predicted as negative. For example: label is class A and predict is not class A. True Negative (TN, true negative): Actually negative and predicted as negative. For example: label is not class A and predict is not class A. If it is multi-category: |

| | F1 score | - | F1 score. 2*(precision*recall)/(precision+recall) equivalent to F1-Score = 2 * TP / (2 * TP + FP + FN) |

| | predict parity | - | The model should ensure equal positive predictive value (PPV) across different groups (e.g., gender, race). The core idea is that the model's accuracy in predicting positive classes should be identical for all groups, meaning the percentage of correctly predicted positive samples must be consistent. Calculate the PPV of the specified group's attribute, such as fetching credit approval results for ALL white and black people to conduct computing.  |

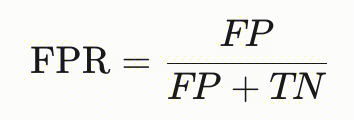

| | Predictive parity | - | Used to measure the consistency of false positive rate (FPR) across different groups (e.g., groups divided by sensitive attributes such as gender or race). The core idea is that the model's error rate in predicting negative classes should be identical for all groups, meaning the percentage of incorrectly predicted negative samples must be consistent. Calculate the FPR of the specified group's attribute, such as fetching the credit approval results of all white and black people to calculate FPR.  |

| | Equal opportunity | - | Used to measure the consistency of true positive rate (TPR) across different groups (e.g., groups divided by sensitive attributes such as gender or race). The core idea is that the model's probability of predicting positive classes for "actual positive" samples should be identical for all groups, ensuring both advantaged and disadvantaged groups obtain the same opportunity. Calculate the TPR of the specified group's attribute, such as fetching the credit approval results of all white and black people to calculate TPR.  |

| | Statistical parity | - | Used to measure the balanced distribution of prediction results across different groups (e.g., groups divided by sensitive attributes such as gender or race). The core idea is that the probability of predicting positive classes should be the same for all groups, ensuring equal opportunity to obtain positive predictions regardless of group attributes. Calculate the statistical parity of the specified group's attribute, such as fetching the credit approval results of all white and black people to calculate selection rate. For example, the selection rate for white people = number of samples predicted as positive for white people / total number of white people. |

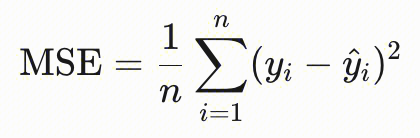

| regression model | mean squared error (MSE) | - | The value ranges from 0 to infinity, and a smaller value is better. It reflects the deviation between the predicted value and the actual value, with a smaller value indicating higher accuracy. yi is the label value, and another with a caret is the predicted value.  |

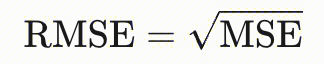

| | root mean squared error (RMSE) | - | The value ranges from 0 to infinity, and a smaller value is better. It is the square root of MSE, sensitive to abnormal values, with a smaller value indicating higher accuracy. yi is the label value, and another with a caret is the predicted value.  |

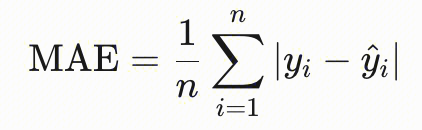

| | mean absolute error (MAE) | - | The value ranges from 0 to infinity, and a smaller value is better. It directly measures the absolute value of prediction error. yi is the label value, and another with a caret is the predicted value.  |

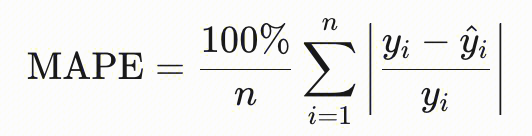

| | mean absolute percentage error (MAPE) | - | The value ranges from 0 to infinity, and a smaller value is better. The penalty for underestimated error (predicted value < actual value) is higher than for overestimated error. yi is the label value, and another with a caret is the predicted value.  |

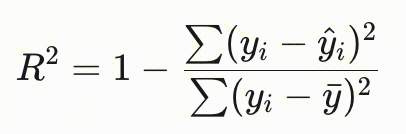

| | R2 metric score | - | The R2 score ranges between 0 and 1. The closer it is to 1, the better the regression fitting effect. yi is the label value, and another with a caret is the predicted value.  |

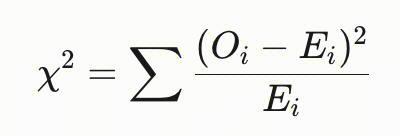

| Model drift | Chi-Squared Test | Category | It is a statistical method used to detect significant changes in data distribution, especially suitable for comparing distributions of categorical or discrete data. The core idea is to compare the differences between actual observed frequencies and theoretically expected frequencies, determining whether this difference is caused by random fluctuations or reflects a true distribution shift. Oi: The observed frequency of the i-th category. Ei: The expected frequency of the i-th category (based on the benchmark distribution).  |

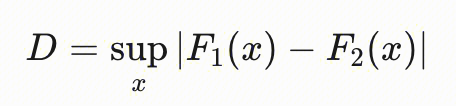

| | Kolmogorov-Smirnov test | Numeric value | It is a non-parametric statistical method used to detect whether there is a significant difference in the distribution of two groups of data. The KS test compares the cumulative distribution functions (CDF) of two groups of data, calculates the maximum vertical distance (D value) as the statistic, and determines distribution consistency. F1(x) and F2(x) are the CDFs of the two group data. The larger the D value, the more distinct the distribution difference; reject the null hypothesis when p<0.05 (consider the existence of distribution offset).  |

| | Total variation distance | Category | It is a core metric for measuring the difference between two probability distributions, especially used to detect data distribution offset. |

| | Chebyshev distance | Category | It is a method used to measure the maximum single-dimensional difference between two probability distributions or data samples, especially for detecting extreme values or local distinct offsets. |

| | Jensen-Shannon divergence | Category | It is a symmetric measure for quantifying differences between two probability distributions, built upon the Kullback-Leibler (KL) divergence. Commonly used for detecting distribution drift, it quantifies the level of change in data distribution, particularly in machine learning model monitoring and data drift analysis as an important application. |

| | Wasserstein distance | Numeric value | Calculate the offset between two data distributions. |

| | Population Stability Index (PSI) | Numeric value | It is a metric to measure the distribution difference between two groups (such as training set and test set, samples from different time periods), widely used in risk control model monitoring and feature stability evaluation. |
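The classification templates and the group fairness templates above can all be derived from one-vs-rest confusion counts (TP, FP, FN, TN) for a chosen positive class. The Python sketch below illustrates this under stated assumptions rather than reproducing the DLC-side implementation: all function names are hypothetical, precision and recall are computed for a single positive class such as class A, a zero denominator is mapped to 0.0 by convention, and the group-wise PPV, FPR, TPR, and selection rate are computed by splitting rows on a group attribute.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """One-vs-rest confusion counts for a chosen positive class (e.g. class A)."""
    tp: int = 0
    fp: int = 0
    fn: int = 0
    tn: int = 0


def confusion(labels, preds, positive):
    c = Confusion()
    for y, p in zip(labels, preds):
        if p == positive and y == positive:
            c.tp += 1  # label is A, prediction is A
        elif p == positive:
            c.fp += 1  # label is not A, prediction is A
        elif y == positive:
            c.fn += 1  # label is A, prediction is not A
        else:
            c.tn += 1  # label is not A, prediction is not A
    return c


def div(num, den):
    return num / den if den else 0.0  # assumed convention for empty denominators


def accuracy(labels, preds):
    return div(sum(y == p for y, p in zip(labels, preds)), len(labels))


def precision(c):  # also the positive predictive value (PPV)
    return div(c.tp, c.tp + c.fp)


def recall(c):  # also the true positive rate (TPR)
    return div(c.tp, c.tp + c.fn)


def f1(c):
    return div(2 * c.tp, 2 * c.tp + c.fp + c.fn)


def false_positive_rate(c):
    return div(c.fp, c.fp + c.tn)


def selection_rate(c):
    return div(c.tp + c.fp, c.tp + c.fp + c.fn + c.tn)


def group_rates(labels, preds, groups, positive):
    """PPV, FPR, TPR and selection rate for each value of a group attribute."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        c = confusion([labels[i] for i in idx], [preds[i] for i in idx], positive)
        rates[g] = {"ppv": precision(c), "fpr": false_positive_rate(c),
                    "tpr": recall(c), "selection_rate": selection_rate(c)}
    return rates


labels = ["A", "A", "B", "B", "A", "B"]
preds = ["A", "B", "A", "B", "A", "B"]
groups = ["g1", "g1", "g1", "g2", "g2", "g2"]
c = confusion(labels, preds, positive="A")
print(c)  # Confusion(tp=2, fp=1, fn=1, tn=2)
rates = group_rates(labels, preds, groups, positive="A")
print(rates["g1"]["ppv"], rates["g2"]["ppv"])  # 0.5 1.0
```

A fairness template then compares one of these rates across groups; in this toy data, the FPR is 1.0 for `g1` and 0.0 for `g2`, which is the kind of gap an FPR-consistency check is meant to surface.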

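The regression and drift metrics in the model inference table follow standard formulas. This Python sketch implements them with the standard library only, assuming a categorical feature has already been turned into per-category shares (for total variation distance, Chebyshev distance, JS divergence, and PSI) or per-category counts (for the chi-squared statistic), and a numeric feature is passed as raw samples (for KS and Wasserstein). The function names, the log base 2 for JS divergence, and the small epsilon in PSI are illustrative choices rather than documented product behavior, and the KS function returns only the D statistic, not the p-value.

```python
import math
from bisect import bisect_right
from collections import Counter

# --- Regression templates: y = labels, y_hat = predictions ---

def mse(y, y_hat):
    return sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y)

def rmse(y, y_hat):
    return math.sqrt(mse(y, y_hat))

def mae(y, y_hat):
    return sum(abs(a - b) for a, b in zip(y, y_hat)) / len(y)

def mape(y, y_hat):
    """Returned as a fraction (multiply by 100 for %); undefined if any label is 0."""
    return sum(abs((a - b) / a) for a, b in zip(y, y_hat)) / len(y)

def r2(y, y_hat):
    mean = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, y_hat))
    ss_tot = sum((a - mean) ** 2 for a in y)
    return 1 - ss_res / ss_tot  # can be negative for a poor fit

# --- Drift templates on categorical features: p = baseline, q = current shares ---

def proportions(values, categories):
    counts = Counter(values)
    return [counts[c] / len(values) for c in categories]

def total_variation(p, q):
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))

def chebyshev(p, q):
    return max(abs(a - b) for a, b in zip(p, q))

def js_divergence(p, q):
    """Jensen-Shannon divergence with log base 2, so 0 <= JS <= 1."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a, b):  # Kullback-Leibler divergence; 0 * log(0) treated as 0
        return sum(x * math.log2(x / z) for x, z in zip(a, b) if x > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def psi(expected, actual, eps=1e-6):
    """Sum of (A - E) * ln(A / E) over bins; eps guards empty bins (assumed convention)."""
    return sum((a - e) * math.log((a + eps) / (e + eps))
               for e, a in zip(expected, actual))

def chi_squared(observed_counts, baseline_shares):
    """Sum of (O_i - E_i)^2 / E_i with E_i = baseline share * current total (shares > 0)."""
    n = sum(observed_counts)
    return sum((o - s * n) ** 2 / (s * n)
               for o, s in zip(observed_counts, baseline_shares))

# --- Drift templates on numeric features: raw samples ---

def _ecdf(sorted_sample, x):
    return bisect_right(sorted_sample, x) / len(sorted_sample)

def ks_statistic(a, b):
    """Two-sample KS D = max |F1(x) - F2(x)|; the p-value is not computed here."""
    a, b = sorted(a), sorted(b)
    return max(abs(_ecdf(a, x) - _ecdf(b, x)) for x in set(a) | set(b))

def wasserstein_1d(a, b):
    """Area between the two empirical CDFs, which equals the 1-D Wasserstein distance."""
    a, b = sorted(a), sorted(b)
    pts = sorted(set(a) | set(b))
    return sum(abs(_ecdf(a, lo) - _ecdf(b, lo)) * (hi - lo)
               for lo, hi in zip(pts, pts[1:]))


print(mape([100], [150]), mape([150], [100]))  # over- vs. under-prediction
base = proportions(["A"] * 5 + ["B"] * 5, ["A", "B"])
curr = proportions(["A"] * 8 + ["B"] * 2, ["A", "B"])
print(total_variation(base, curr), psi(base, curr))
print(ks_statistic([1, 2, 3, 4], [3, 4, 5, 6]))  # 0.5
```

The MAPE line illustrates its asymmetry: the same absolute error of 50 gives 0.5 when the model over-predicts an actual value of 100, but only about 0.33 when it under-predicts an actual value of 150.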
Usage Instructions

Term | Description | |

Monitoring Object | Table permissions | When the monitored object is a normal table, you can monitor the number of rows, table size, and data output timeliness (equivalent to the number of rows). When the monitored object is a model inference table, you can monitor multiple features of the table from three dimensions: model drift, model classification, and model regression. |

| field-level | When the monitoring object is field-level, you can monitor the field value (including mean, maximum value, minimum value, median, aggregate value), field value format (mobile number, mailbox, identity card number), and whether the field is empty. |

Rule dimension | - | The rule dimension is used to calculate the quality score, reflecting the proportion of different types of rules in quality. The system has 6 built-in rule dimensions: accuracy, uniqueness, integrity, consistency, timeliness, validity. |

Check Method | Numeric | Mainly includes value comparison and numeric range comparison. |

| volatility type | Term explanation: volatility type reflects value fluctuation, indicating the rise/drop range compared with a certain point in time. Calculation Formula: Volatility = This scanning result / Scanning result at a certain time point * 100%. Note: The computed result of volatility is a percentage. When using the volatility Template, you must specify a partition. Example 1: Cyclical fluctuation 7 days ago When a partition is specified and the baseline value selects the data of 7 days ago, if the computed result is 100%, It indicates that this time the partition data has increased by 100% compared with that of 7 days ago. Example 2: Cyclical fluctuation last cycle: When a partition is specified and the baseline value selects the last run cycle, and associates the rule with a production scheduling task (for example: a certain offline development task), if the computed result is 100%, It indicates that the stats after this offline development task operation completed have increased by 100% compared to last time. Example 3: Cycle volatility + default period: When using the cycle volatility template setting for quality rules and setting the default period, such as 7 days ago. If this rule is not associated with a production scheduling task, when the computed result is 100%. It indicates that the partition data this time has increased by 100% compared with the data 7 days ago. That is: compare the current data with the data from 7 days ago. |

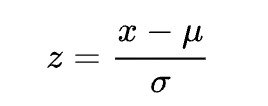

| Standard score type variance fluctuation | Term explanation: Standard score is an important statistical concept that reflects if a certain value falls within a credible interval range. If the calculation result is too large or too low, there is a very high probability that this data is an abnormal value. Calculation Formula:  Note: The standard score is a unitless decimal that reflects whether data is abnormal in data centralization. It is generally considered that when the standard score absolute value is above 3, it is considered an exception, at this point the normal probability is only 0.28%. [-1,1]: normal probability: 68.26% [-2,2]: normal probability: 95.44% [-3,3]: normal probability: 99.72% NOT_IN [-3,3]: normal probability: 0.28% |

| Others | No limit on validate field type. Empty/unique/duplicate: count or calculate the ratio of empty values/unique values/duplicate values; Format match: count or calculate the ratio of entries that do not match the format; Enumeration range: count entries not in the enumeration value; Note: Fill in the expected value here. When the field is not in range, it will trigger an alarm. Field relevance: check whether the field value is the same as that in another database table. Comparison operators: larger than, less than, equal Target data: database table, field, filter condition Association condition: associated fields between two tables. Note: The comparison table must have a one-to-one correspondence with the detection table data. |
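The volatility and standard-score check methods, and the field-relevance comparison under Others, can be sketched in Python as follows. Volatility is computed here as (current - baseline) / baseline × 100%, the reading under which a result of 100% means the value doubled, as in the worked examples. The standard score assumes the baseline window (for example, the previous 7 or 30 days) is passed in as a list and uses the sample standard deviation; the product may use a different estimator. The operator names, the `target_filter` parameter, and all function names are hypothetical.

```python
import operator
import statistics


def volatility_pct(current, baseline):
    """Change versus a baseline, in percent; 100.0 means the value doubled."""
    if baseline == 0:
        raise ValueError("baseline is 0, so volatility is undefined")
    return (current - baseline) / baseline * 100


def standard_score(current, history):
    """z = (current - mean) / stdev over a baseline window (assumed: sample stdev)."""
    return (current - statistics.mean(history)) / statistics.stdev(history)


def is_abnormal(z, threshold=3.0):
    """Flag a score outside [-threshold, threshold]; |z| > 3 is rare (~0.3%) if normal."""
    return abs(z) > threshold


# Hypothetical names for the documented comparison operators.
OPERATORS = {"greater than": operator.gt, "less than": operator.lt, "equal": operator.eq}


def field_relevance_failures(monitored, target, key, field, target_field, op,
                             target_filter=lambda row: True):
    """Count monitored rows whose `field` fails `op` against the joined target row.

    `key` plays the role of the association condition. A monitored key with no
    comparison row, or a duplicate key in the comparison table, raises an error.
    """
    by_key = {}
    for row in filter(target_filter, target):
        if row[key] in by_key:
            raise ValueError(f"duplicate key {row[key]!r} in comparison table")
        by_key[row[key]] = row
    compare = OPERATORS[op]
    failures = 0
    for row in monitored:
        if row[key] not in by_key:
            raise ValueError(f"no comparison row for key {row[key]!r}")
        if not compare(row[field], by_key[row[key]][target_field]):
            failures += 1
    return failures


print(volatility_pct(2000, 1000))  # 100.0
history = [1000, 1010, 990, 1005, 995, 1000, 1000]  # e.g. last 7 days' row counts
print(is_abnormal(standard_score(1100, history)))  # True
orders = [{"id": 1, "amount": 100}, {"id": 2, "amount": 50}]
payments = [{"id": 1, "paid": 100}, {"id": 2, "paid": 60}]
print(field_relevance_failures(orders, payments, "id", "amount", "paid", "equal"))  # 1
```

Raising on missing or duplicate keys is one way to honor the requirement that the comparison table correspond one-to-one with the monitored table; a real rule might instead count such rows as failures.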
