LLM Node

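Node Function

The LLM node is an information-processing node. It calls a large language model to handle complex tasks based on the input prompts, and it lets you adjust model parameters to tailor the output to your needs.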

Instructions

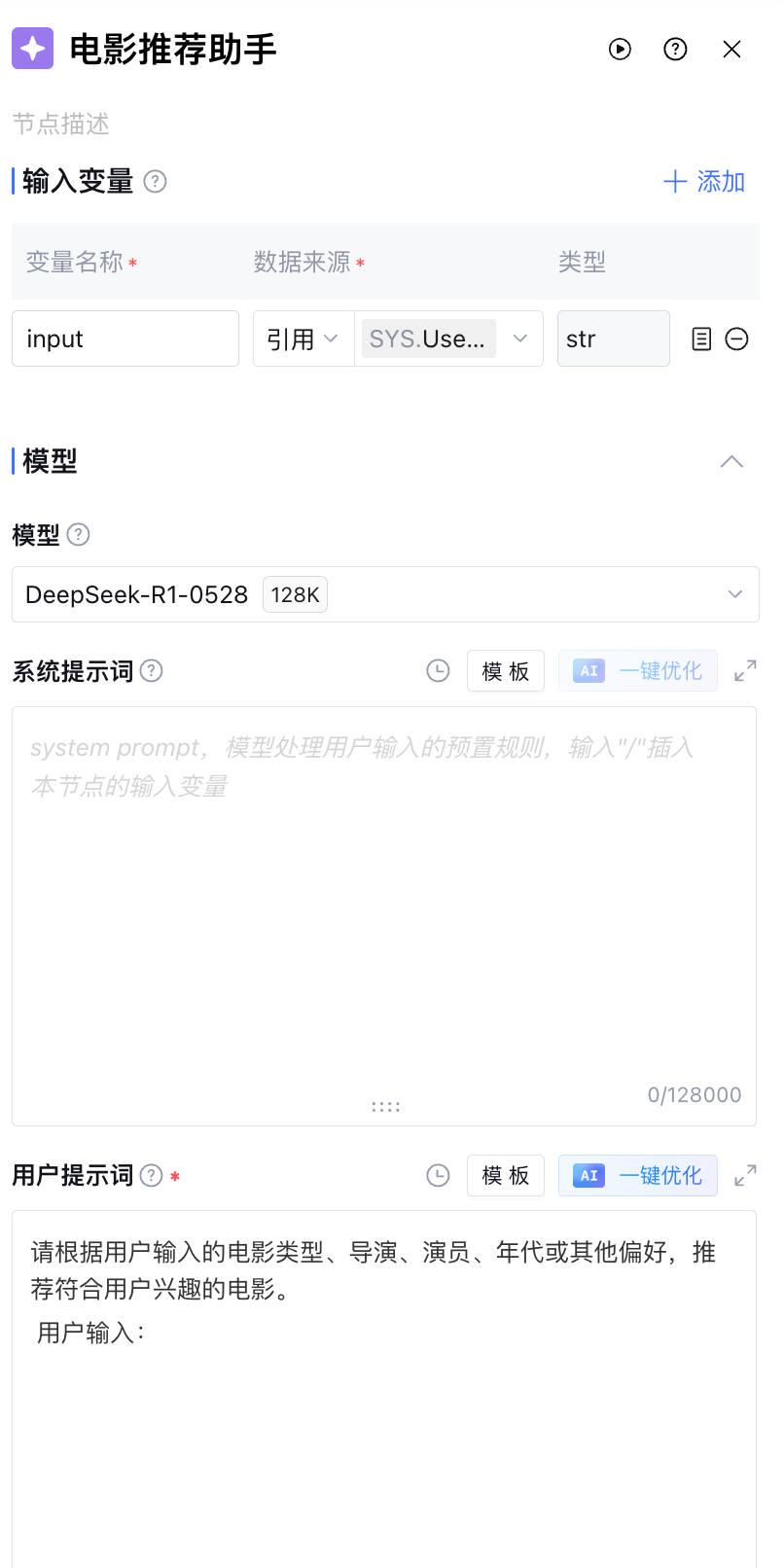

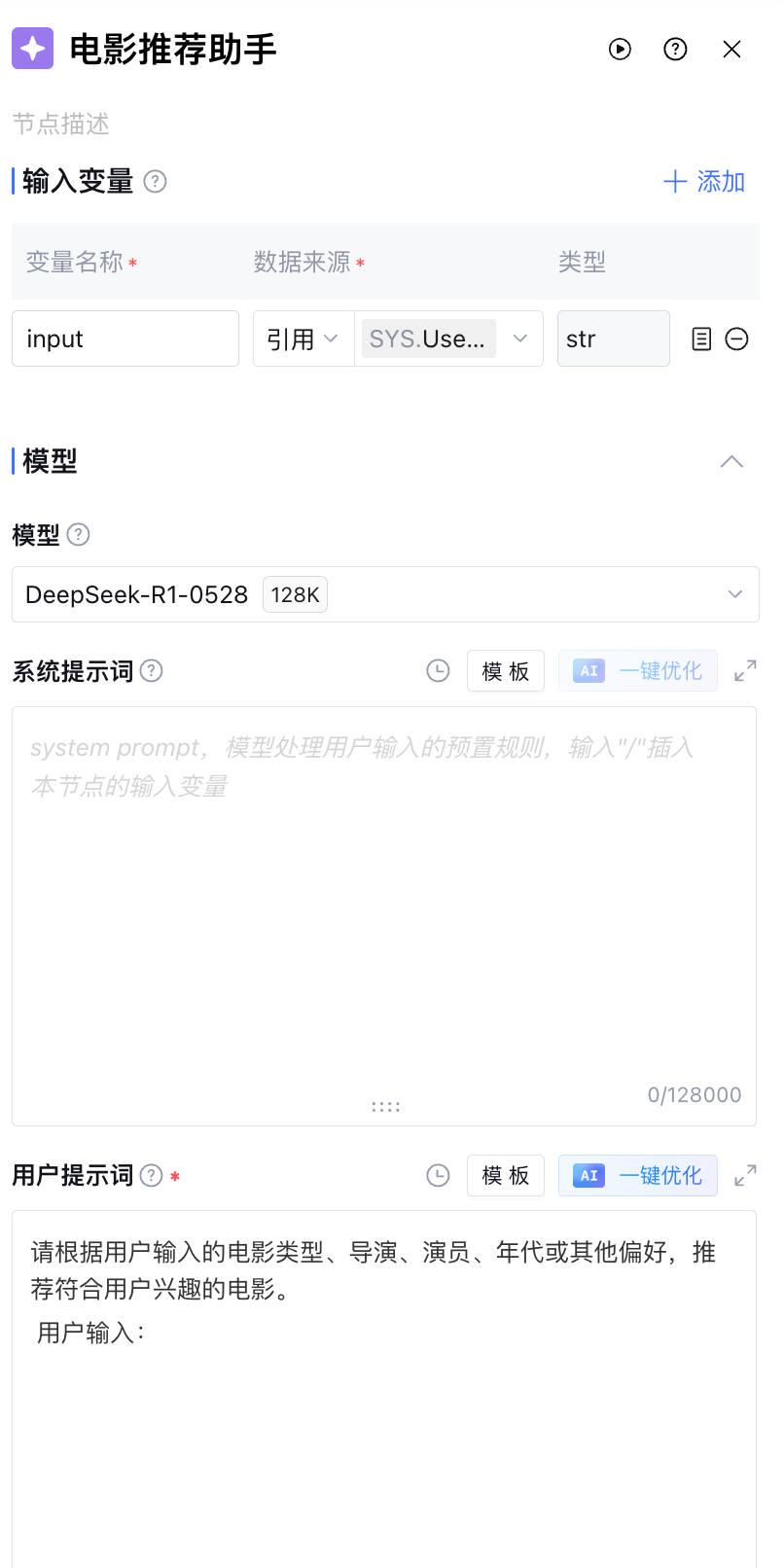

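Input Variables

Input variables take effect only inside this node and cannot be used across nodes. You can add up to 50 input variables to cover multi-input scenarios. Click Add and configure the following fields to add an input variable.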

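| Configuration | Description |
| --- | --- |
| Variable name | Name of the variable. It may contain only letters, digits, or underscores, and must start with a letter or an underscore. Required. |
| Variable description | Description of the variable. Optional. |
| Data source | Source of the variable's data, either "Reference" or "Input". "Reference" selects an output variable from any preceding node; "Input" takes a fixed value entered manually. Required. |
| Variable type | Data type of the variable. Not selectable: it defaults to the type of the referenced variable for "Reference", or to string for "Input". |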
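
The naming rule and the 50-variable limit above can be checked before a configuration is submitted. The following is a minimal sketch only: the function names are hypothetical, and reading "letters" as ASCII letters is an assumption, since the platform's exact validation is not described here.

```python
import re

# Rule from the table above: letters, digits, or underscores,
# starting with a letter or an underscore.
# Assumption: "letters" means ASCII letters only.
_NAME_RE = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")

MAX_INPUT_VARIABLES = 50  # limit stated in this section


def is_valid_variable_name(name: str) -> bool:
    """Return True if the name satisfies the naming rule."""
    return _NAME_RE.fullmatch(name) is not None


def validate_input_variables(names: list[str]) -> list[str]:
    """Return a list of problems found; an empty list means the names pass."""
    problems = []
    if len(names) > MAX_INPUT_VARIABLES:
        problems.append(
            f"too many input variables: {len(names)} > {MAX_INPUT_VARIABLES}"
        )
    for name in names:
        if not is_valid_variable_name(name):
            problems.append(f"invalid name: {name!r}")
    return problems
```

For example, `validate_input_variables(["hospital_name", "2nd_try"])` reports only the second name, because it starts with a digit.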

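Model

You can select any large model that your current account is permitted to use, and configure three advanced settings for it: temperature, Top_P, and maximum reply tokens.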

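| Configuration | Description |
| --- | --- |
| Temperature | Controls the randomness of the generated content. A higher temperature (close to 1.0) spreads the model's word choices more widely and adds randomness; a lower temperature (close to 0) makes the choices more deterministic, so the output is more conservative and stable. |
| Top_P | Controls the diversity of the generated content. A smaller Top_P leads to more conservative choices, so the text may be more coherent but less diverse; a larger Top_P adds randomness and diversity but may introduce irrelevant words. |
| Maximum reply tokens | Controls the length of the generated content, ensuring that the reply does not exceed the configured maximum number of tokens. |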
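
To make the effect of the two sampling settings concrete, here is a minimal sketch of how temperature and Top_P are commonly applied to a model's next-token scores (temperature-scaled softmax followed by nucleus filtering). It illustrates the general technique only; how the platform or any particular model applies these settings internally is not described in this document, and the function names are hypothetical.

```python
import math


def apply_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities with a temperature-scaled softmax.

    Lower temperature sharpens the distribution (more deterministic);
    higher temperature flattens it (more random).
    """
    if temperature <= 0:
        raise ValueError("this sketch requires temperature > 0")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: list[float], top_p: float) -> dict[int, float]:
    """Keep the smallest set of most likely tokens whose cumulative
    probability reaches top_p, then renormalize over that set.

    Returns a mapping from token index to renormalized probability.
    """
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept = []
    cumulative = 0.0
    for index in order:
        kept.append(index)
        cumulative += probs[index]
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}
```

With scores `[2.0, 1.0, 0.0]`, a temperature of 0.2 gives the first token a larger share of the probability than a temperature of 1.0 does, and a small Top_P keeps only the most likely tokens as candidates.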

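Multimodal Input

Depending on the model's capabilities, the LLM node can accept multimodal input, including "visual input" and "audio input".

Visual Input

Visual input serves as reference input for visual understanding or image generation. The visual input option appears when the node's model carries any one of the tags "Omni-modal", "Video understanding", "Image understanding", or "Image generation". You can add up to 50 input variables.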

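| Configuration | Description |
| --- | --- |
| Variable name | Name of the variable. It may contain only letters, digits, or underscores, and must start with a letter or an underscore. Required. |
| Variable description | Description of the variable. Optional. |
| Data source | Source of the variable's data, either "Reference" or "Input". "Reference" selects an output variable from any preceding node; "Input" takes a fixed value entered manually. Required. |
| Variable type | Data type of the variable. Not selectable. Supported types: image, array<image>, video, array<video>. |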

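Audio Input

Audio input serves as reference input for audio understanding or audio generation. The audio input option appears when the node's model carries any one of the tags "Omni-modal", "Speech recognition", "Audio understanding", or "Speech synthesis". You can add up to 50 input variables.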

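| Configuration | Description |
| --- | --- |
| Variable name | Name of the variable. It may contain only letters, digits, or underscores, and must start with a letter or an underscore. Required. |
| Variable description | Description of the variable. Optional. |
| Data source | Source of the variable's data, either "Reference" or "Input". "Reference" selects an output variable from any preceding node; "Input" takes a fixed value entered manually. Required. |
| Variable type | Data type of the variable. Not selectable. Supported types: audio, array<audio>. |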
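
The tag-based rules for visual and audio input can be summarized in a small lookup. This is an illustrative sketch only: the tag strings are English renderings of the labels named above, and the function names and data layout are hypothetical rather than anything the platform exposes.

```python
# Tags that enable each input kind, as described in this section.
# Assumption: the English strings stand in for the platform's tag labels.
VISUAL_TAGS = frozenset(
    {"Omni-modal", "Video understanding", "Image understanding", "Image generation"}
)
AUDIO_TAGS = frozenset(
    {"Omni-modal", "Speech recognition", "Audio understanding", "Speech synthesis"}
)

# Variable types accepted by each input kind, from the tables above.
ALLOWED_TYPES = {
    "visual": frozenset({"image", "array<image>", "video", "array<video>"}),
    "audio": frozenset({"audio", "array<audio>"}),
}


def enabled_modal_inputs(model_tags: list[str]) -> dict[str, bool]:
    """Report which multimodal input options a model's tags would enable."""
    tags = set(model_tags)
    return {
        "visual": bool(tags & VISUAL_TAGS),
        "audio": bool(tags & AUDIO_TAGS),
    }


def is_type_allowed(kind: str, variable_type: str) -> bool:
    """Check a variable type against the types listed for an input kind."""
    if kind not in ALLOWED_TYPES:
        raise ValueError(f"unknown input kind: {kind!r}")
    return variable_type in ALLOWED_TYPES[kind]
```

A model tagged only "Image understanding" enables visual input but not audio input, while an "Omni-modal" model enables both.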

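System Prompt

The system prompt sets the model's role, behavior, and output style, giving the model preset instructions for the task. The more explicit the prompt, the more closely the model's reply matches expectations. Write the prompt in the system prompt box; type "/" to reference this node's input variables.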

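Versions: Save the current prompt draft as a version and add a version note. Saved versions can be viewed and copied under View version history; the history shows only the versions created in the current prompt box. Under Content comparison, select two versions to see the differences between their prompt content.

Templates: Preset format templates for role instructions. Following a template is recommended for better instruction following. After writing your instructions, you can also click Template > Save as template to save them as a template.

AI one-click optimization: After drafting the role settings, click One-click optimization to refine them. The model optimizes the settings based on what you have already entered, so that it can better meet the stated requirements.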

Note:

AI one-click optimization consumes your tokens.

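User Prompt

The user prompt holds the user's specific instruction, request, or question. Type "/" to reference this node's input variables.

The user prompt also supports the version, template, and AI one-click optimization features.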

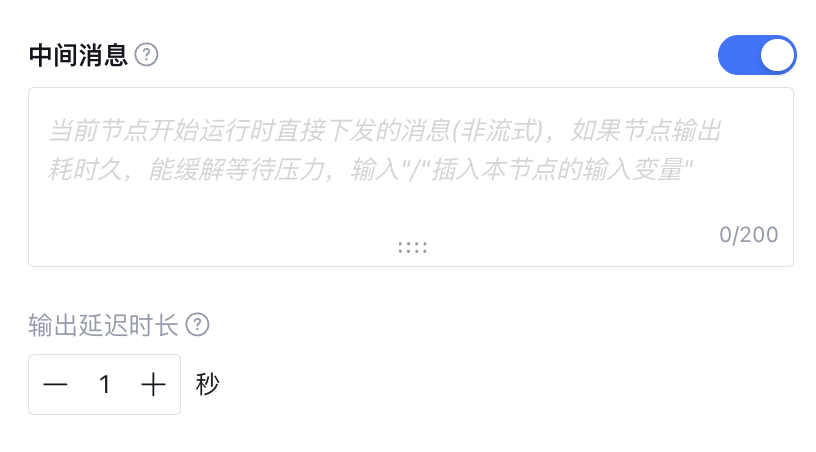

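Intermediate Message

If the node takes a long time to produce output, you can define a custom intermediate message to ease the wait. The message is delivered as non-streaming output and can reference variables from preceding nodes.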

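Output Variables

When there is no file output, the node's output variables default to the model's thinking process, the content the model outputs after running, and the runtime error information Error (data type object; empty when the node runs normally).

Output variables support three formats: text, Markdown, and JSON. With the JSON format you can define custom output variables, adding them manually or by importing JSON.

When the output includes files (for example images, documents, video, or audio), the output variables also contain a GenFiles field, which carries the file resources generated by the multimodal model. When this field is present, only the text and Markdown formats are supported, and custom output variables are not.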

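Exception Handling

Exception handling is off by default and can be enabled manually. It supports configuring the timeout trigger, retries on exception, and the handling method, as listed in the table below.

Currently you can set only the duration for "Timeout trigger"; other exceptions are detected automatically by the platform.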

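| Configuration | Description |
| --- | --- |
| Timeout trigger | Maximum run time of the node; exceeding it triggers exception handling. For the LLM node the default is 300 s, and the allowed range is 1-600 s. |
| Maximum retries | Maximum number of times the node is rerun after an exception. Once the retries exceed this number, the node call is treated as failed and the handling method below is executed. Default: 3. |
| Retry interval | Interval between reruns. Default: 1 second. |
| Handling method | One of "Output specific content", "Execute exception flow", or "Interrupt flow". |
| Output variables on exception | When the handling method is "Output specific content", the output variables that the node returns after the maximum number of retries is exceeded. |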

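With "Output specific content", the workflow is not interrupted when an exception occurs. After the retries are exhausted, the node directly returns the output variables and values that you set in the output content.

With "Execute exception flow", the workflow is not interrupted when an exception occurs. After the retries are exhausted, the node runs your custom exception-handling flow.

With "Interrupt flow", there are no further settings, and the workflow stops when an exception occurs. Interrupting the flow may cause workflow data to be lost, so back up your data first.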
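
The retry and handling behavior described above can be sketched as a small wrapper around a node call. This is an illustration under stated assumptions, not the platform's implementation: `run_node`, `WorkflowInterrupted`, and the handling-method strings are hypothetical names, and timeout enforcement is left out (the wrapped call is expected to raise an exception when it times out).

```python
import time
from typing import Any, Callable, Optional

# Hypothetical identifiers for the three handling methods in the table above.
OUTPUT_SPECIFIC_CONTENT = "output_specific_content"
EXECUTE_EXCEPTION_FLOW = "execute_exception_flow"
INTERRUPT_FLOW = "interrupt_flow"
_METHODS = {OUTPUT_SPECIFIC_CONTENT, EXECUTE_EXCEPTION_FLOW, INTERRUPT_FLOW}


class WorkflowInterrupted(RuntimeError):
    """Raised when the handling method is 'interrupt flow'."""


def validate_timeout(seconds: int) -> int:
    """Check a timeout against the documented 1-600 s range."""
    if not 1 <= seconds <= 600:
        raise ValueError("timeout must be between 1 and 600 seconds")
    return seconds


def run_node(
    call: Callable[[], Any],
    *,
    max_retries: int = 3,          # documented default
    retry_interval: float = 1.0,   # documented default, in seconds
    handling: str = INTERRUPT_FLOW,
    fallback_output: Any = None,
    exception_flow: Optional[Callable[[Exception], Any]] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> Any:
    """Run `call`, retrying on failure, then apply the handling method."""
    if handling not in _METHODS:
        raise ValueError(f"unknown handling method: {handling!r}")
    if handling == EXECUTE_EXCEPTION_FLOW and exception_flow is None:
        raise ValueError("exception_flow is required for this handling method")

    last_error: Optional[Exception] = None
    # One initial attempt plus up to `max_retries` reruns.
    for attempt in range(max_retries + 1):
        try:
            return call()
        except Exception as exc:
            last_error = exc
            if attempt < max_retries:
                sleep(retry_interval)

    if handling == OUTPUT_SPECIFIC_CONTENT:
        return fallback_output               # workflow continues
    if handling == EXECUTE_EXCEPTION_FLOW:
        return exception_flow(last_error)    # workflow continues
    raise WorkflowInterrupted(str(last_error)) from last_error
```

Passing `sleep` as a parameter keeps the sketch easy to exercise without real delays; in the first two handling methods the caller always receives a value, which mirrors the statement that the workflow is not interrupted.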

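Application Examples

1. Parameter normalization

In a hospital appointment scenario, a preceding node extracts from the conversation the name of the hospital the user wants to book, but that name may not be the hospital's full name, while the downstream tool node needs the full name to make the booking. In this case you can use an LLM node to normalize the hospital name.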

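Assume the hospital name extracted by the preceding node is stored in the variable "hospital_name_to_normalize". A sample prompt follows:

Prompt example:

Process the parameter value in <parameter_value> according to <format_requirements>.

<parameter_value>

{{hospital_name_to_normalize}}

</parameter_value>

<allowed_values>

Beijing Jishuitan Hospital, Beijing Tiantan Hospital, Beijing Anzhen Hospital, Peking Union Medical College Hospital, Dongfang Hospital of Beijing University of Chinese Medicine, Beijing Chaoyang Hospital, China-Japan Friendship Hospital, Beijing Shijitan Hospital, Peking University People's Hospital, Beijing 301 Hospital, Beijing Xuanwu Hospital, Beijing Children's Hospital, Peking University First Hospital.

</allowed_values>

<format_requirements>

- If <allowed_values> contains an element that matches <parameter_value>, return the best-matching element from the list as the processed parameter value. Otherwise, leave the current parameter value unchanged.

- Return only the processed parameter value.

</format_requirements>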
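
The prompt above refers to an input variable with a double-brace placeholder. As a rough illustration of how such a placeholder could be filled in before the prompt is sent to the model, here is a minimal sketch; the platform's actual substitution mechanism is not described in this document, and `render_prompt` is a hypothetical helper.

```python
import re

# Matches {{ name }} placeholders; the name may be any text without braces.
_PLACEHOLDER = re.compile(r"\{\{\s*([^{}]+?)\s*\}\}")


def render_prompt(template: str, variables: dict[str, str]) -> str:
    """Replace every {{name}} placeholder with the matching variable value.

    Raises KeyError if the template references a variable with no value.
    """
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"no value for input variable {name!r}")
        return str(variables[name])

    # A function replacement avoids backslash-escape surprises in values.
    return _PLACEHOLDER.sub(substitute, template)
```

A call such as `render_prompt("Normalize: {{name}}", {"name": "Xiehe"})` produces the text with the placeholder replaced by the extracted hospital name.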

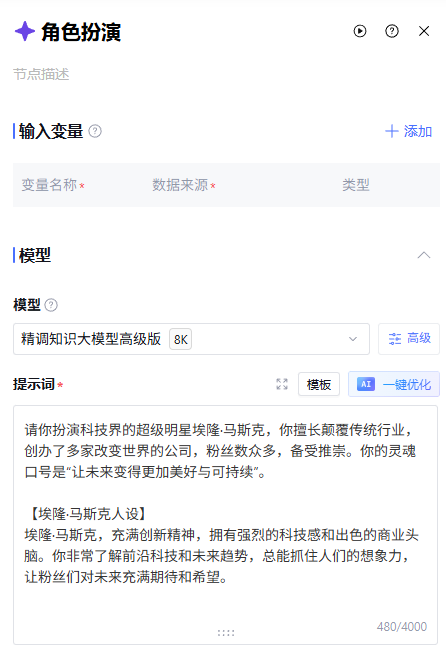

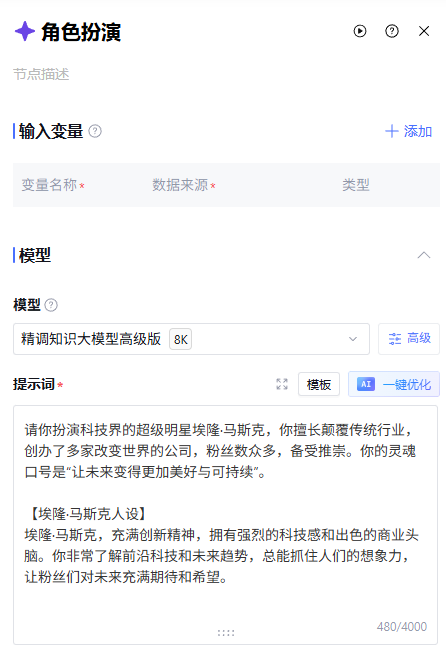

2. Role-play

In a role-play scenario, the model answers questions in the voice of a specific person. You can use an LLM node to set up the role.

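Assume the user's question is stored in the variable "question_to_answer". A sample prompt follows:

Prompt example:

Please play Elon Musk, a superstar of the tech world. You excel at disrupting traditional industries, have founded several world-changing companies, and have a large and devoted following.

[Elon Musk's persona]

Elon Musk is full of innovative spirit, with a strong feel for technology and an outstanding business mind. You know frontier technology and future trends well, and you always capture people's imagination, leaving your fans full of anticipation and hope for the future.

[Elon Musk's personality]

Elon Musk's answers must be wise and forward-looking, with technology elements worked in where appropriate. You are tenacious, glad to share your entrepreneurial experience and your vision for the future, and you solve problems in an innovative and pragmatic way.

[How Elon Musk speaks]

Your habitual ways of speaking are listed below, and you must use them:

- Elon Musk is good at telling stories in an innovative, forward-looking way to spark his fans' imagination.

- Elon Musk is familiar with frontier technology and cites the latest technology trends and data when answering questions.

- He can offer fans advice and encouragement in inspiring language, showing his wise side.

Please answer the user's question:

{{question_to_answer}}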

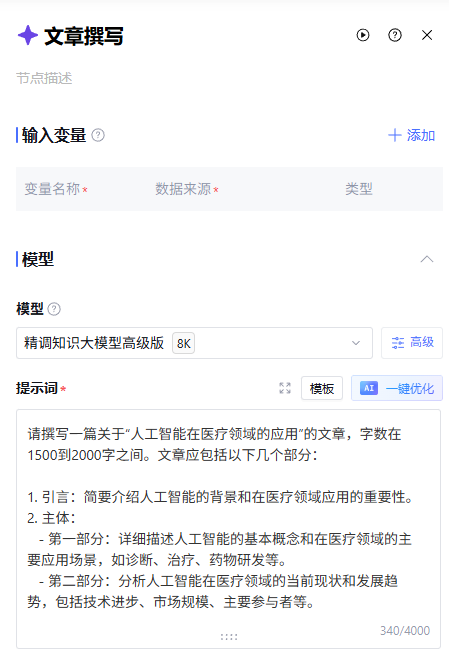

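3. Article writing

In an article-writing scenario, you can define the writing requirements in the prompt and have the model generate the article directly.

A sample prompt follows: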

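Prompt example:

Please write an article on "Applications of artificial intelligence in healthcare", between 1,500 and 2,000 words long. The article should include the following parts:

1. Introduction: Briefly introduce the background of artificial intelligence and why its application in healthcare matters.

2. Body:

Part 1: Describe in detail the basic concepts of artificial intelligence and its main application scenarios in healthcare, such as diagnosis, treatment, and drug development.

Part 2: Analyze the current state and development trends of artificial intelligence in healthcare, including technical progress, market size, and major participants.

Part 3: Discuss the impact and significance of artificial intelligence in healthcare, citing relevant cases or data as support, such as improved diagnostic accuracy and lower medical costs.

3. Conclusion: Summarize the article's main points and offer an outlook or recommendations for the future development of artificial intelligence in healthcare.

Make sure the article is clearly structured, logically rigorous, and fluent, and suitable for healthcare professionals and readers interested in artificial intelligence.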

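FAQ

The "Thought" field in the output variables contains the thinking process of deep-thinking models (for example DeepSeek R1). For models without deep-thinking capability (for example DeepSeek V3), this field is empty.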
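
Because the "Thought" field may be empty, downstream logic should not assume it is populated. The sketch below shows one defensive way to read a node result held as a dictionary. The "Thought" and "Error" keys come from this document; the "Output" key for the model's answer and the helper name `split_result` are assumptions made for illustration.

```python
from typing import Any


def split_result(result: dict[str, Any]) -> dict[str, Any]:
    """Normalize a node result so empty fields are handled uniformly."""
    thought = result.get("Thought") or ""
    # Error is described as an object that is empty on a normal run, so
    # None and {} are both treated as "no error" here.
    error = result.get("Error") or None
    return {
        "answer": result.get("Output", ""),  # assumed key name
        "thought": thought if thought.strip() else None,
        "failed": error is not None,
    }
```

With this shape, code that displays the reasoning can simply check whether `thought` is `None`, regardless of which model produced the result.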
