
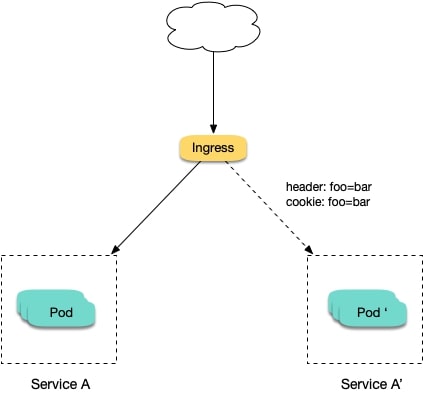

An Ingress annotated with nginx.ingress.kubernetes.io/canary: "true" is called a Canary Ingress. The Canary Ingress usually represents the new version of a service. By combining this annotation with a traffic-splitting annotation, you can implement canary releases in multiple scenarios. The relevant annotations are described in detail below.

nginx.ingress.kubernetes.io/canary-by-header

If a request carries the specified header with the value always, it is forwarded to the backend service defined by the Canary Ingress. If the value is never, the request is not forwarded to the Canary Ingress, which can be used to roll back to the old version. For any other value, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-header-value

This annotation supplements canary-by-header. It lets you specify a custom value for the request header, so matching is not limited to always or never. When the value of the request header matches the specified custom value, the request is forwarded to the backend service defined by the Canary Ingress. For any other value, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-header-pattern

This annotation is similar to canary-by-header-value. The difference is that it uses a regular expression, instead of a fixed value, to match the value of the request header. If this annotation and canary-by-header-value are both present, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-cookie

This annotation is similar to canary-by-header, but it matches on a cookie instead of a request header. Only the values always and never are supported.

nginx.ingress.kubernetes.io/canary-weight

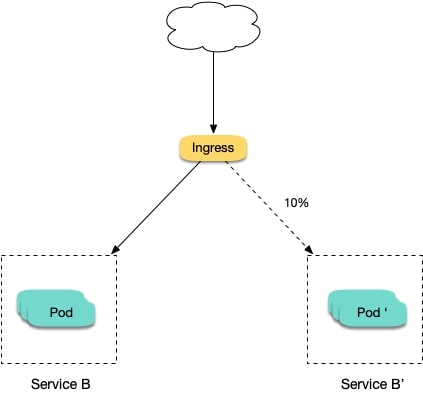

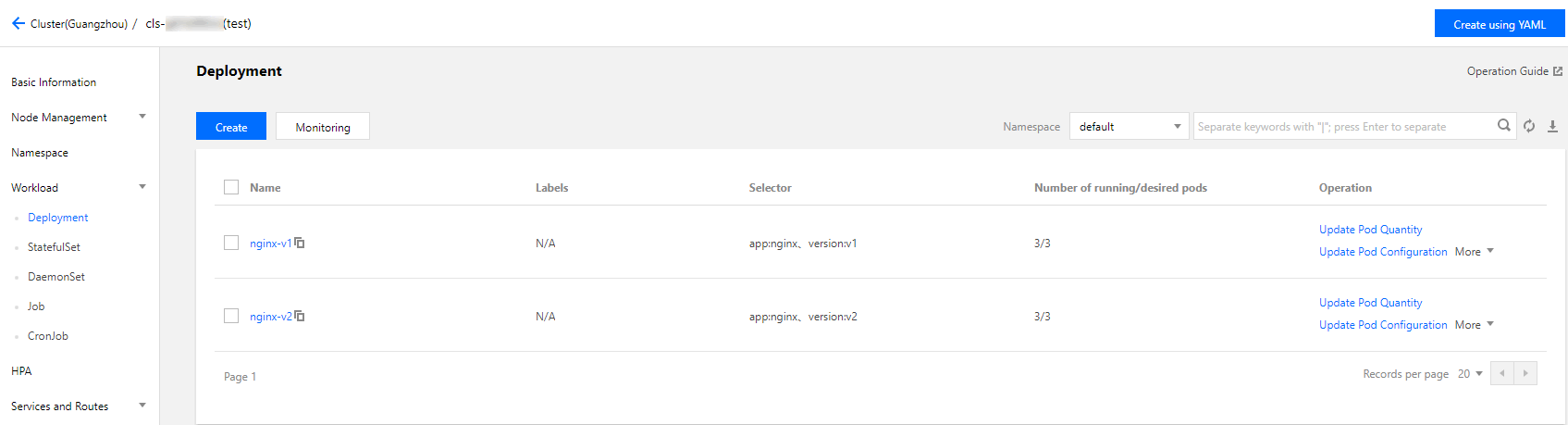

Indicates the percentage of traffic assigned to the Canary Ingress. The value ranges from 0 to 100. For example, the value 10 means that 10% of the traffic is forwarded to the backend service of the Canary Ingress.

The rules above are evaluated in the following order of priority: canary-by-header > canary-by-cookie > canary-weight.

When an Ingress is marked as a Canary Ingress, all non-canary annotations, except nginx.ingress.kubernetes.io/load-balance and nginx.ingress.kubernetes.io/upstream-hash-by, will be ignored.

The YAML samples in the following steps can be deployed with kubectl apply -f xx.yaml.

Deploy version v1 of the service:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-v1
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
          version: v1
      template:
        metadata:
          labels:
            app: nginx
            version: v1
        spec:
          containers:
          - name: nginx
            image: "openresty/openresty:centos"
            ports:
            - name: http
              protocol: TCP
              containerPort: 80
            volumeMounts:
            - mountPath: /usr/local/openresty/nginx/conf/nginx.conf
              name: config
              subPath: nginx.conf
          volumes:
          - name: config
            configMap:
              name: nginx-v1
    ---
    apiVersion: v1
    kind: ConfigMap
    metadata:
      labels:
        app: nginx
        version: v1
      name: nginx-v1
    data:
      nginx.conf: |-
        worker_processes 1;
        events {
            accept_mutex on;
            multi_accept on;
            use epoll;
            worker_connections 1024;
        }
        http {
            ignore_invalid_headers off;
            server {
                listen 80;
                location / {
                    access_by_lua 'local header_str = ngx.say("nginx-v1")';
                }
            }
        }
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-v1
    spec:
      type: ClusterIP
      ports:
      - port: 80
        protocol: TCP
        name: http
      selector:
        app: nginx
        version: v1

Deploy version v2 of the service:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-v2
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
          version: v2
      template:
        metadata:
          labels:
            app: nginx
            version: v2
        spec:
          containers:
          - name: nginx
            image: "openresty/openresty:centos"
            ports:
            - name: http
              protocol: TCP
              containerPort: 80
            volumeMounts:
            - mountPath: /usr/local/openresty/nginx/conf/nginx.conf
              name: config
              subPath: nginx.conf
          volumes:
          - name: config
            configMap:
              name: nginx-v2
    ---
    apiVersion: v1
    kind: ConfigMap
    metadata:
      labels:
        app: nginx
        version: v2
      name: nginx-v2
    data:
      nginx.conf: |-
        worker_processes 1;
        events {
            accept_mutex on;
            multi_accept on;
            use epoll;
            worker_connections 1024;
        }
        http {
            ignore_invalid_headers off;
            server {
                listen 80;
                location / {
                    access_by_lua 'local header_str = ngx.say("nginx-v2")';
                }
            }
        }
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-v2
    spec:
      type: ClusterIP
      ports:
      - port: 80
        protocol: TCP
        name: http
      selector:
        app: nginx
        version: v2

Create an Ingress that exposes the service and points to version v1:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: nginx
      annotations:
        kubernetes.io/ingress.class: nginx
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v1
              servicePort: 80
            path: /
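The samples in this document use the extensions/v1beta1 Ingress API, which Kubernetes stopped serving in v1.22. On newer clusters the same Ingress would likely need to be written against networking.k8s.io/v1. The following is a minimal sketch of that translation, not part of the original sample. It assumes an IngressClass named nginx exists in the cluster; if it does not, the kubernetes.io/ingress.class annotation used above may still be required.

```yaml
# Sketch only: networking.k8s.io/v1 form of the nginx Ingress above.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx
spec:
  # Assumption: an IngressClass named "nginx" is installed in the cluster.
  ingressClassName: nginx
  rules:
  - host: canary.example.com
    http:
      paths:
      - path: /
        # pathType is mandatory in networking.k8s.io/v1.
        pathType: Prefix
        backend:
          service:
            name: nginx-v1
            port:
              number: 80
```

The canary annotations described earlier are set under metadata.annotations in the same way regardless of the API version.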

Test access:

    $ curl -H "Host: canary.example.com" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address of the Nginx Ingress.
    nginx-v1

For canary release based on a request header, create a Canary Ingress that forwards only requests whose Region header is cd or sz to version v2:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        nginx.ingress.kubernetes.io/canary: "true"
        nginx.ingress.kubernetes.io/canary-by-header: "Region"
        nginx.ingress.kubernetes.io/canary-by-header-pattern: "cd|sz"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /

Test access:

    $ curl -H "Host: canary.example.com" -H "Region: cd" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address of the Nginx Ingress.
    nginx-v2
    $ curl -H "Host: canary.example.com" -H "Region: bj" http://EXTERNAL-IP
    nginx-v1
    $ curl -H "Host: canary.example.com" -H "Region: cd" http://EXTERNAL-IP
    nginx-v2
    $ curl -H "Host: canary.example.com" http://EXTERNAL-IP
    nginx-v1
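The annotation list also describes canary-by-header used on its own and canary-by-header-value, neither of which the header example above exercises. As a rough sketch, the annotations of the nginx-canary Ingress could be swapped for one of the following variants. The header name X-Canary and the fixed value cd are illustrative assumptions, not taken from the original sample.

```yaml
# Variant 1 (hypothetical header name "X-Canary"): only the built-in values apply.
# "X-Canary: always" routes to the canary (v2); "X-Canary: never" keeps the request
# on the old version (v1); any other value means the annotation is ignored.
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "X-Canary"
---
# Variant 2: match one fixed custom value instead of a regular expression.
# Only "Region: cd" routes to the canary (v2).
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "Region"
    nginx.ingress.kubernetes.io/canary-by-header-value: "cd"
```

Each variant is a fragment to merge into the metadata of the Canary Ingress, not a complete manifest.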

In the test above, only requests whose Region header is cd or sz are answered by the v2 service.

For canary release based on a cookie, requests that carry the cookie user_from_cd with the value always will be forwarded to the current Canary Ingress. The YAML sample is as follows:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        nginx.ingress.kubernetes.io/canary: "true"
        nginx.ingress.kubernetes.io/canary-by-cookie: "user_from_cd"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /

Test access:

    $ curl -s -H "Host: canary.example.com" --cookie "user_from_cd=always" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address of the Nginx Ingress.
    nginx-v2
    $ curl -s -H "Host: canary.example.com" --cookie "user_from_bj=always" http://EXTERNAL-IP
    nginx-v1
    $ curl -s -H "Host: canary.example.com" http://EXTERNAL-IP
    nginx-v1

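As the output shows, only requests whose cookie user_from_cd is always are answered by the v2 service.

For canary release based on weight, the following Canary Ingress forwards 10% of the traffic to version v2:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        nginx.ingress.kubernetes.io/canary: "true"
        nginx.ingress.kubernetes.io/canary-weight: "10"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /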

Test access:

    $ for i in {1..10}; do curl -H "Host: canary.example.com" http://EXTERNAL-IP; done;
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v2
    nginx-v1
    nginx-v1
    nginx-v1
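The priority order stated earlier (canary-by-header > canary-by-cookie > canary-weight) implies that several rules can sit on one Canary Ingress. The following is a hedged sketch of such a combination, not taken from the original samples. It reuses the nginx-v2 Service and the Region header from the header example, and the fixed value cd is an assumption. Requests with Region: cd should go to v2. Because a non-matching header value causes the header rule to be ignored, the remaining requests would be expected to fall through to the 10% weight split.

```yaml
# Sketch only: header rule evaluated first, weight rule as the fallback.
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    # Higher priority: "Region: cd" is always routed to the canary.
    nginx.ingress.kubernetes.io/canary-by-header: "Region"
    nginx.ingress.kubernetes.io/canary-by-header-value: "cd"
    # Lower priority: applies to requests the header rule did not match.
    nginx.ingress.kubernetes.io/canary-weight: "10"
  name: nginx-canary
spec:
  rules:
  - host: canary.example.com
    http:
      paths:
      - backend:
          serviceName: nginx-v2
          servicePort: 80
        path: /
```

A setup like this can be checked with the same curl commands used in the header and weight tests above.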
