OpenClaw Tips - Reduce Token Usage and See Immediate Results

Note for first-time users: If you haven’t deployed OpenClaw yet, deploy it with Tencent Cloud OpenClaw first. You can launch your OpenClaw instance in seconds with one click, then come back to this guide to explore more advanced use cases.

As you use OpenClaw over time, it accumulates more and more context and memory. If left unchecked, the token cost for the same question can grow from a few thousand to tens or even hundreds of thousands. This article focuses on tactics that deliver immediate results and introduces several simple, practical ways to reduce cost.
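To see why the cost climbs so quickly, here is a rough back-of-the-envelope sketch. It assumes a simplified model in which every turn adds a fixed number of tokens and the full history is resent as input on each request. The function name and the numbers (100 turns, 500 tokens per turn) are made up for illustration and are not measurements from OpenClaw.

```python
def input_tokens_per_turn(turns: int, tokens_per_turn: int) -> list[int]:
    """Input tokens sent on each turn when the full history is resent every time."""
    sent = []
    history = 0
    for _ in range(turns):
        history += tokens_per_turn  # the new exchange joins the history
        sent.append(history)        # the whole history goes out as input
    return sent


per_turn = input_tokens_per_turn(turns=100, tokens_per_turn=500)

# First turn, last turn, and total input tokens over the whole session
print(per_turn[0], per_turn[-1], sum(per_turn))  # prints: 500 50000 2525000
```

Under these assumptions the hundredth question costs 100 times as much input as the first one, even if the question itself is just as short.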

1. Slash Commands: Quickly “Slim Down” the Current Session

For everyday conversations, the fastest way to see results is to make good use of a few built-in slash commands. They are all very easy to use: just send them directly to OpenClaw in the chat box with no extra prefix.

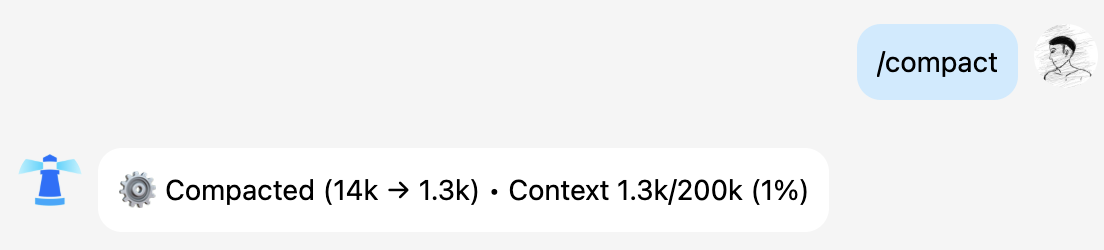

1. /compact: Compress the Current Session Context

Purpose:

Ask OpenClaw to summarize and compress the current conversation history, keeping the key information while dropping unnecessary detail, so that token usage is significantly reduced in later turns.

When to use it:

- You have been chatting with the bot for a long time, and each new question drags along a huge amount of history.

- Responses are clearly getting slower and more expensive, but you do not want to wipe the context entirely.

- You still want to continue the current topic, but you do not need every detail preserved.

How to use it:

Send this directly in the current chat window:

/compact

OpenClaw will try to condense the previous conversation into a shorter “summary memory,” and future responses will prioritize that summary, reducing the amount of context sent in each request.

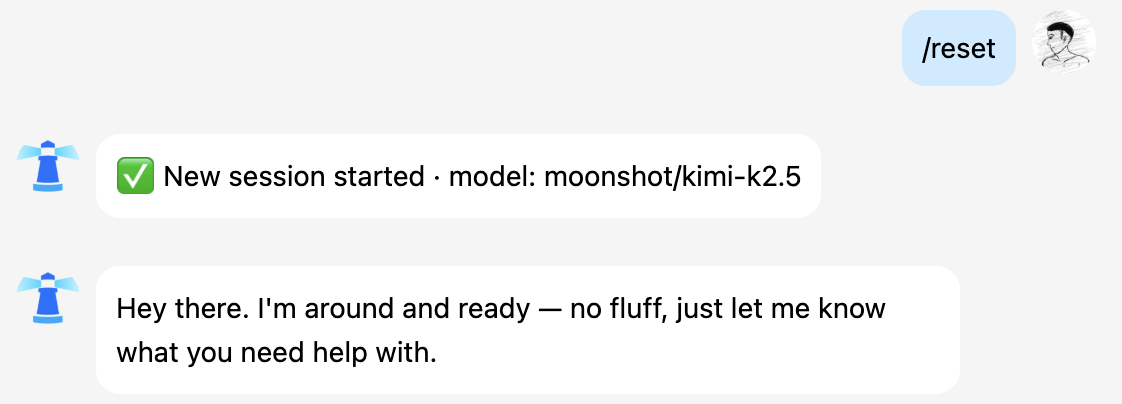
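The idea behind this kind of compression can be sketched in a few lines. This is only a conceptual illustration, not OpenClaw’s actual implementation: `Message`, `estimate_tokens`, `summarize`, and `compact` are hypothetical names, the token estimate is a crude "4 characters per token" assumption, and the summary step is a placeholder where a real system would ask the model to write the summary.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token
    return max(1, len(text) // 4)


def summarize(messages: list[Message]) -> str:
    # Placeholder: a real system would ask the model for a summary.
    # Here we simply keep the first 40 characters of each message.
    return " | ".join(m.text[:40] for m in messages)


def compact(history: list[Message], keep_recent: int = 2) -> list[Message]:
    """Replace older messages with one summary message; keep the latest ones verbatim."""
    split = max(0, len(history) - keep_recent)
    old, recent = history[:split], history[split:]
    if not old:
        return list(history)
    summary = Message("system", "Summary of earlier conversation: " + summarize(old))
    return [summary] + recent


history = [Message("user", "x" * 400) for _ in range(10)]
compacted = compact(history, keep_recent=2)

before = sum(estimate_tokens(m.text) for m in history)
after = sum(estimate_tokens(m.text) for m in compacted)
print(len(history), "->", len(compacted), "messages;", before, "->", after, "tokens (estimated)")
```

The trade-off is the same as with /compact itself: later turns carry far less history, but details that did not make it into the summary are no longer available to the model.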

2. /reset: Keep Memory, Reset the Current Topic

Purpose:

Reset the short-term context of the current conversation while preserving long-term memory and global configuration. In other words: “start this conversation over, but keep the important things you already remembered for me.”

When to use it:

- The current topic is finished, and you want to switch to something completely different.

- The context has become bloated, and token usage will keep increasing if you continue.

- But you still do not want to erase all historical memory, such as personal preferences, team code names, or project background.

How to use it:

Send this directly in the current chat window:

/reset

After it runs:

- The history of the current conversation thread will be cleared.

- The Agent’s long-term memory, such as content written into MEMORY or other important saved records, will remain.

- Subsequent questions will continue under the context of a “new topic.”

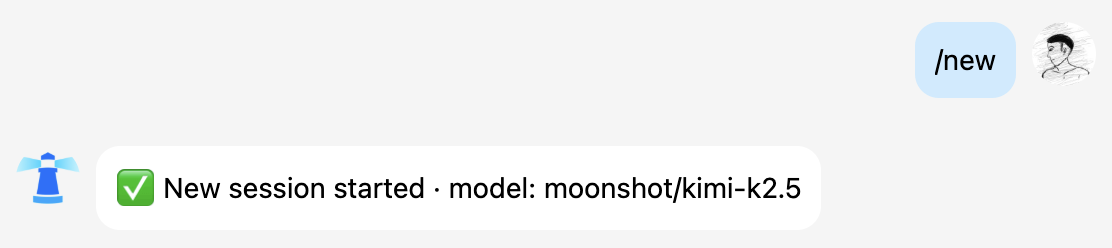
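The behavior described above can be pictured with a small model that keeps short-term history and long-term memory in separate places. This is a hypothetical sketch for intuition only; `AgentSession` and its methods are invented names and do not reflect how OpenClaw stores sessions internally.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSession:
    # Long-lived facts the user asked the agent to remember
    long_term_memory: dict[str, str] = field(default_factory=dict)
    # Messages of the current conversation thread
    history: list[str] = field(default_factory=list)

    def remember(self, key: str, value: str) -> None:
        self.long_term_memory[key] = value

    def say(self, message: str) -> None:
        self.history.append(message)

    def reset(self) -> None:
        # Clear only the thread history; long-term memory is untouched
        self.history.clear()


session = AgentSession()
session.remember("project_code_name", "Falcon")
session.say("Let's review the release checklist.")
session.say("Now draft the announcement.")

session.reset()
print(session.history)           # prints: []
print(session.long_term_memory)  # prints: {'project_code_name': 'Falcon'}
```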

3. /new: Start a Brand-New Conversation

Purpose:

Create a truly fresh session from scratch, similar to opening a new chat tab.

When to use it:

- You want to start a completely new conversation in the same channel.

- You do not want any historical context to affect the current question.

- You need to run an A/B test: one session keeps context, while another starts from zero.

How to use it:

Send this directly in the current chat window:

/new

After execution, OpenClaw will talk to you as a brand-new session. In practice, this is usually more token-efficient than continuing to pile new questions onto a long-running thread.

2. Split Work Across Multiple Agents: Reduce Token Usage at the Architectural Level

When you start using OpenClaw for multiple tasks at once (such as writing documentation, coding, operations, and team management) and pile all of that into a single Agent’s brain, two obvious problems appear:

- Memory becomes increasingly cluttered

Code, weekly reports, announcements, internal code names: everything lives in the same workspace. Every time the model generates a response, it has to sift through a large amount of unrelated information to find what matters.

- Each session grows longer and longer

To make sure it “remembers” prior discussions, you keep feeding history into the same Agent, which continuously inflates token usage on every call.

A more sensible approach is to split Agents the way you would organize a real team:

- Configure separate Agents for different teams, different Lark groups, or different roles.

- Each Agent has its own workspace, memory, skills, and model.

- Long conversations and accumulated knowledge from one team only consume that Agent’s context instead of polluting the entire system.

The benefits include:

- Each Agent has cleaner context, so the model does not need to search for a needle in a haystack.

- Each type of task uses its own conversation history, making overall token usage easier to control.

- When problems occur, troubleshooting and optimization can be done for a specific Agent independently.
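The splitting idea can be illustrated with a tiny router that binds each group to its own Agent, so that a message only ever grows the history of the Agent responsible for that group. This is a conceptual sketch, not OpenClaw configuration: `Agent`, `Router`, and the group IDs are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    history: list[str] = field(default_factory=list)


class Router:
    """Routes each incoming message to the Agent bound to its group."""

    def __init__(self, bindings: dict[str, Agent]) -> None:
        self.bindings = bindings

    def handle(self, group_id: str, message: str) -> Agent:
        agent = self.bindings[group_id]
        agent.history.append(message)  # only this Agent's context grows
        return agent


docs_agent = Agent("docs")
dev_agent = Agent("dev")
router = Router({"docs-group": docs_agent, "dev-group": dev_agent})

router.handle("docs-group", "Draft the weekly report.")
router.handle("dev-group", "Why does the build fail on CI?")
router.handle("dev-group", "Add a retry to the deploy script.")

print(len(docs_agent.history), len(dev_agent.history))  # prints: 1 2
```

Because the histories never mix, a long debugging thread in one group adds nothing to the input of a request in another group.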

If you want to implement the “Lark group → independent Agent” model, refer to this detailed tutorial, which already includes command-line examples and binding strategies: 👉 Tutorial on Integrating Custom Large Models with OpenClaw (Clawdbot)

In real projects, the best approach is often a combination: first split work across multiple Agents to cleanly separate responsibilities at the architectural level, then use /compact and /reset inside each Agent to control the context length of individual conversations.

3. Use memory-search Instead of “Infinite Conversations”

Besides directly controlling context itself, there is a smarter way to reduce token usage: do not stuff everything into the same conversation. Instead, let the Agent learn to look things up.

OpenClaw provides a memory-search capability that allows the Agent to actively retrieve past memory when needed, instead of forcing all conversation history into the context every time. A common pattern is:

- Store important information in memory, files, or a knowledge base.

- Enable memory-search.

- Let the Agent retrieve small, relevant pieces of information through search, rather than carrying the entire conversation history into every inference.

This gives you several advantages:

- The model input contains only the current question plus a small, highly relevant piece of memory.

- You do not need to repeat a large amount of background information in every conversation just because you are afraid it will forget.

- In complex projects, this is more economical and more stable over the long run than relying only on context.
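The retrieval pattern can be sketched as follows. This is a deliberately simple stand-in that ranks stored notes by keyword overlap with the question; it is an assumption for illustration, not how OpenClaw’s memory-search works internally, and the function names and sample notes are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercased alphanumeric words
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_memory(query: str, memories: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k stored notes that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(m)), m) for m in memories]
    scored = [(score, m) for score, m in scored if score > 0]  # drop unrelated notes
    scored.sort(key=lambda pair: pair[0], reverse=True)        # best match first
    return [m for _, m in scored[:top_k]]


memories = [
    "The staging database runs on port 5433.",
    "Weekly report is due every Friday.",
    "Project code name is Falcon.",
]

question = "Which port does the staging database use?"
relevant = search_memory(question, memories, top_k=1)

# Only the question plus the retrieved note would be sent to the model
prompt = "\n".join(relevant + [question])
print(prompt)
```

However the ranking is done, the point is the same: the request carries one relevant note instead of the whole conversation history.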

memory-search is enabled by default in OpenClaw. What you need to do is build the habit of asking OpenClaw to remember important information after a complete round of discussion or after it finishes a task. You can simply tell it directly in the chat box.

Summary

These three approaches can be combined:

- Use /compact, /reset, and /new in day-to-day work to control the length of the current conversation.

- Split tasks across multiple Agents at the architecture level so each job has its own brain.

- Gradually move long-term knowledge into memory or a knowledge base, and use memory-search for precise retrieval instead of brute-force context stuffing.

When you combine these methods well, you will quickly notice two changes: responses become more stable and drift off topic less often, and your token bill stops climbing month after month.
