    {
        "InputInfo": {
            "Type": "URL",
            "UrlInputInfo": {
                "Url": "https://facedetectioncos-1251132611.cos.ap-guangzhou.myqcloud.com/video/xxx.mp4" // Replace it with the URL of the video to be summarized.
            }
        },
        "AiAnalysisTask": {
            "Definition": 22, // Preset LLM summarization template ID.
            "ExtendedParameter": "{\"des\":{\"split\":{\"method\":\"llm\",\"model\":\"deepseek-v3\"}}}"
        },
        "OutputStorage": {
            "CosOutputStorage": {
                "Bucket": "test-mps-123456789",
                "Region": "ap-guangzhou"
            },
            "Type": "COS"
        },
        "OutputDir": "/output/",
        "TaskNotifyConfig": {
            "NotifyType": "URL",
            "NotifyUrl": "http://qq.com/callback/qtatest/?token=xxxxxx"
        },
        "Action": "ProcessMedia",
        "Version": "2019-06-12"
    }

    {
        "des": {
            "split": {
                "method": "llm",
                "model": "deepseek-v3",
                "max_split_time_sec": 100,
                "extend_prompt": "This video is a medical scenario video, which is segmented according to domain-specific medical knowledge points."
            },
            "need_ocr": true,
            "ocr_type": "ppt",
            "only_segment": 0,
            "text_requirement": "summary is within 40 characters",
            "dstlang": "zh"
        }
    }
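In the request body, `ExtendedParameter` is a string that itself contains JSON, so its inner quotes must be escaped. Hand-escaping is easy to get wrong; the following Python sketch builds the value with `json.dumps` instead. The helper name `build_process_media_body` is hypothetical, the bucket and region are the placeholder values from the sample request, and the notification settings are left out for brevity. It only assembles the body and does not sign or send the request.

```python
import json


def build_process_media_body(video_url: str, extended: dict) -> dict:
    """Assemble a ProcessMedia request body shaped like the sample request.

    Hypothetical helper for illustration; it does not call any API.
    """
    return {
        "InputInfo": {"Type": "URL", "UrlInputInfo": {"Url": video_url}},
        "AiAnalysisTask": {
            "Definition": 22,  # preset LLM summarization template ID from the sample
            # ExtendedParameter is a JSON *string*, not a nested object,
            # so the dict is serialized once here.
            "ExtendedParameter": json.dumps(
                extended, separators=(",", ":"), ensure_ascii=False
            ),
        },
        "OutputStorage": {
            "Type": "COS",
            # Placeholder bucket and region copied from the sample request.
            "CosOutputStorage": {
                "Bucket": "test-mps-123456789",
                "Region": "ap-guangzhou",
            },
        },
        "OutputDir": "/output/",
        "Action": "ProcessMedia",
        "Version": "2019-06-12",
    }


if __name__ == "__main__":
    extended = {"des": {"split": {"method": "llm", "model": "deepseek-v3"}}}
    body = build_process_media_body("https://example.com/video/xxx.mp4", extended)
    # Serializing the whole body escapes the inner quotes as \" automatically.
    print(json.dumps(body, indent=4))
```

Serializing the inner dictionary first and the whole body second produces the `\"`-escaped form shown in the sample request without any manual escaping.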

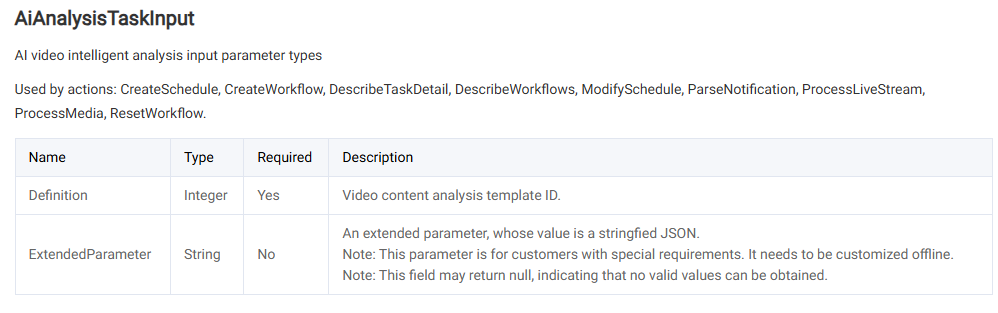

| Parameter | Required | Type | Description |
| --- | --- | --- | --- |
| split.method | No | string | Segmentation method. llm: segmentation based on a large language model (LLM). nlp: segmentation based on traditional NLP. The default value is llm. |
| split.model | No | string | LLM used for segmentation. Valid values: Hunyuan, DeepSeek-V3, DeepSeek-R1. The default value is DeepSeek-V3. |
| split.max_split_time_sec | No | int | Forced maximum segment duration, in seconds. Use it only when necessary, because it may affect segmentation quality. The default value is 3600. |
| split.extend_prompt | No | string | Additional prompt for the segmentation task. For example: "This instructional video is segmented by knowledge points". We recommend leaving it blank for initial testing and adding a prompt only when results fall short of expectations. |
| need_ocr | No | bool | Whether to use optical character recognition (OCR) to assist segmentation. true: enabled; the system recognizes on-screen text in addition to the speech content. false: disabled; the system recognizes only the speech content. The default value is false. |
| ocr_type | No | string | OCR assistance type. ppt: treats on-screen content as PowerPoint slides and segments the video based on slide transitions. other: applies alternative segmentation methods. The default value is ppt. |
| only_segment | No | int | Whether to segment only, without generating a summary. 1: segment only. 0: segment and generate a summary. The default value is 0. |
| text_requirement | No | string | Requirements for the generated summary, such as a length limit. For example: "summary is within 40 characters". |
| dstlang | No | string | Language of the title and summary. "zh": Chinese. "en": English. The default value is "zh". |
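The parameters and defaults above can be checked locally before a request is sent. The following Python sketch merges caller overrides onto the documented defaults and rejects values outside the documented options. The names `DEFAULTS` and `build_des` are hypothetical. Two assumptions are labeled in the code: model names are compared case-insensitively (the sample uses `deepseek-v3` while the table lists `DeepSeek-V3`), and sending the defaults explicitly is treated as equivalent to omitting them.

```python
from copy import deepcopy

# Defaults taken from the parameter table. Assumption: sending them
# explicitly behaves the same as omitting them.
DEFAULTS = {
    "split": {"method": "llm", "model": "deepseek-v3", "max_split_time_sec": 3600},
    "need_ocr": False,
    "ocr_type": "ppt",
    "only_segment": 0,
    "dstlang": "zh",
}
ALLOWED_TOP = {"split", "need_ocr", "ocr_type", "only_segment",
               "text_requirement", "dstlang"}
ALLOWED_SPLIT = {"method", "model", "max_split_time_sec", "extend_prompt"}
# Assumption: model names are matched case-insensitively.
MODELS = {"hunyuan", "deepseek-v3", "deepseek-r1"}


def build_des(split=None, **top_level):
    """Return {"des": {...}} with defaults filled in and values validated."""
    des = deepcopy(DEFAULTS)
    des["split"].update(split or {})
    des.update(top_level)

    unknown = (set(des) - ALLOWED_TOP) | (set(des["split"]) - ALLOWED_SPLIT)
    if unknown:
        raise ValueError(f"unknown parameter(s): {sorted(unknown)}")

    s = des["split"]
    checks = [
        (s["method"] in ("llm", "nlp"), "split.method must be llm or nlp"),
        (str(s["model"]).lower() in MODELS, "split.model is not a listed model"),
        (isinstance(s["max_split_time_sec"], int) and s["max_split_time_sec"] > 0,
         "split.max_split_time_sec must be a positive int"),
        (isinstance(des["need_ocr"], bool), "need_ocr must be a bool"),
        (des["ocr_type"] in ("ppt", "other"), "ocr_type must be ppt or other"),
        (des["only_segment"] in (0, 1), "only_segment must be 0 or 1"),
        (des["dstlang"] in ("zh", "en"), "dstlang must be zh or en"),
    ]
    for ok, message in checks:
        if not ok:
            raise ValueError(message)
    return {"des": des}


if __name__ == "__main__":
    # Override only what differs from the defaults.
    print(build_des(split={"max_split_time_sec": 100}, need_ocr=True, dstlang="en"))
```

The returned dictionary can then be serialized to a JSON string and passed as the `ExtendedParameter` value.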
