Monitoring Alarm Best Practices

The health and performance of a database directly affect application stability and user experience. A comprehensive monitoring system enables real-time detection and resolution of issues, gives early warning of risks, and provides solid data for system optimization and resource planning. This document describes how to build a monitoring system for the read-only analysis engine and explains the key metrics to watch for performance, capacity, and synchronization.

Common Performance Evaluation Metrics

Metric 1: Analysis Engine Average Response Time

Average response time is a core metric for measuring engine performance. It reflects the average execution time of all SQL queries during the monitoring period. Abnormal fluctuations in this metric usually have one of the following causes:

New resource-intensive SQL queries are introduced, prolonging overall execution time.

Business traffic grows and queries per second (QPS) increase, raising processing latency.

The database system itself encounters an error.

Monitoring recommendations:

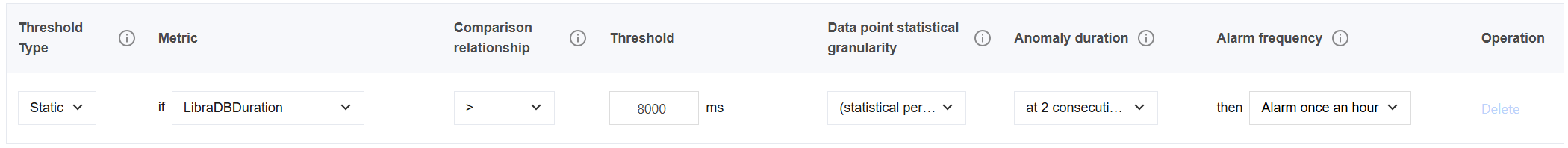

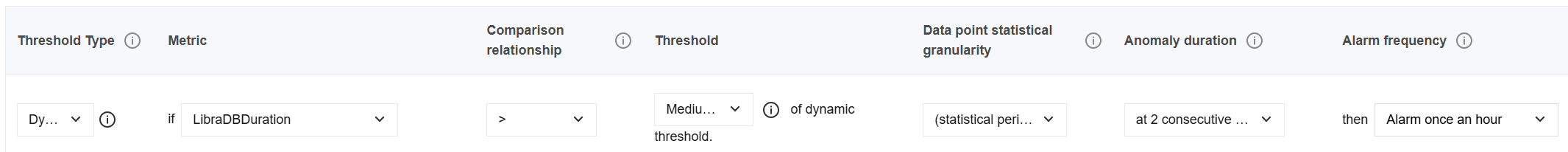

You can set a static alarm threshold based on the highest execution latency observed during stable business operation. Alternatively, you can use a dynamic threshold based on your actual latency requirements; medium sensitivity is recommended, with an alarm raised after two or more abnormal data points.
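A static-threshold rule of this kind can be sketched as follows. This is a minimal illustration, not the monitoring service's actual evaluation logic: the function names, the millisecond units, the optional `headroom` margin, and the requirement that the abnormal data points be the most recent consecutive ones are all assumptions.

```python
from typing import Sequence


def static_threshold(stable_latencies_ms: Sequence[float], headroom: float = 0.0) -> float:
    """Derive a static threshold from the highest latency seen during stable operation.

    `headroom` is a hypothetical safety margin (0.1 means 10% above the observed peak).
    """
    if not stable_latencies_ms:
        raise ValueError("need at least one stable-period sample")
    return max(stable_latencies_ms) * (1.0 + headroom)


def should_alarm(recent_latencies_ms: Sequence[float], threshold_ms: float,
                 min_abnormal: int = 2) -> bool:
    """Return True if the last `min_abnormal` data points all exceed the threshold.

    Assumes abnormal points must be consecutive; real alarm rules may differ.
    """
    if len(recent_latencies_ms) < min_abnormal:
        return False
    return all(v > threshold_ms for v in recent_latencies_ms[-min_abnormal:])


threshold = static_threshold([120, 135, 128], headroom=0.1)
print(should_alarm([130, 160, 170], threshold))  # last two points exceed the threshold
print(should_alarm([160, 130], threshold))       # only one of the last two exceeds it
```

Requiring at least two abnormal points trades a slightly later alarm for fewer false positives caused by a single slow query.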

Metric 2: Analysis Engine QPS

QPS directly reflects the volume of business requests and is a key metric for assessing the processing capacity of an instance.

Monitoring recommendations:

Assess in advance the QPS capacity of the instance specification and use it as the baseline for alarm thresholds.

Analyze QPS together with average response time: if QPS increases but response time remains stable, the current load is under control; if both increase at the same time, scale-out or query optimization may be required.
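The two recommendations above can be combined into a simple check. This sketch assumes you have already benchmarked the instance's QPS capacity; the 80% alarm ratio, the 20% tolerance that defines a "stable" response time, the returned labels, and all names are hypothetical choices rather than values from this document.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    qps: float        # queries per second over the monitoring period
    avg_rt_ms: float  # average response time in milliseconds


def qps_alarm(current_qps: float, benchmark_qps: float, ratio: float = 0.8) -> bool:
    """Alarm once QPS reaches `ratio` of the benchmarked capacity (hypothetical ratio)."""
    return current_qps >= benchmark_qps * ratio


def assess_load(baseline: Sample, current: Sample, rt_tolerance: float = 0.2) -> str:
    """Classify the current load against a baseline sample.

    Response time counts as rising only if it exceeds the baseline by more
    than `rt_tolerance` (20% by default, a hypothetical choice).
    """
    qps_up = current.qps > baseline.qps
    rt_up = current.avg_rt_ms > baseline.avg_rt_ms * (1.0 + rt_tolerance)
    if qps_up and rt_up:
        return "scale_out_or_optimize"  # both rising: load may exceed capacity
    if qps_up:
        return "controllable"           # more traffic, stable latency
    if rt_up:
        return "investigate_queries"    # latency up without more traffic
    return "stable"


baseline = Sample(qps=1000, avg_rt_ms=50)
print(assess_load(baseline, Sample(qps=1500, avg_rt_ms=52)))
print(assess_load(baseline, Sample(qps=1500, avg_rt_ms=80)))
```

The `investigate_queries` branch covers the causes listed under average response time, such as newly added resource-intensive SQL or a system error, where latency rises without extra traffic.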

Metric 3: Analysis Engine CPU Utilization

Because the analysis engine typically executes queries with multi-threaded parallelism, its CPU utilization is naturally high. Therefore, this metric is not recommended as a core performance evaluation metric.

Monitoring recommendations:

For multi-node instances, monitor per-node CPU utilization to check whether load is balanced across nodes.

If CPU utilization stays above 90% for an extended period (multiple data points), the system may be approaching its capacity limit. Watch for slow queries and degraded response time.

If an instance has high CPU utilization but no query load, data synchronization is under heavy pressure and is consuming most of the resources. Consider throttling traffic or scaling out.
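The three CPU checks above could be expressed as follows. This is a sketch under stated assumptions: per-node CPU series and instance-level QPS are assumed to come from your monitoring system, and the 20-percentage-point imbalance gap, the three-sample "sustained" window, the near-zero QPS cutoff, the function name, and the finding labels are all hypothetical.

```python
from typing import Dict, List


def diagnose_cpu(node_cpu: Dict[str, List[float]], qps: float,
                 high: float = 90.0, sustained_points: int = 3,
                 imbalance_gap: float = 20.0, idle_qps: float = 1.0) -> List[str]:
    """Return findings for per-node CPU utilization series (percent, oldest first)."""
    findings: List[str] = []
    latest = {node: series[-1] for node, series in node_cpu.items() if series}
    # 1. Load balance: compare the busiest and the idlest node's latest sample.
    if len(latest) > 1 and max(latest.values()) - min(latest.values()) > imbalance_gap:
        findings.append("unbalanced")
    # 2. Sustained high CPU: the last `sustained_points` samples are all above `high`.
    for node, series in node_cpu.items():
        recent = series[-sustained_points:]
        if len(recent) == sustained_points and all(v > high for v in recent):
            # 3. High CPU with (almost) no queries points to data synchronization pressure.
            if qps < idle_qps:
                findings.append(f"{node}: sync_pressure")
            else:
                findings.append(f"{node}: near_limit")
    return findings


print(diagnose_cpu({"n1": [95, 96, 97], "n2": [40, 42, 41]}, qps=500))
```

Checking several consecutive samples rather than a single reading avoids alarming on the short bursts that parallel query execution normally produces.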

Metric 4: Size of the Result Set Returned by the Analysis Engine

This metric reflects the volume of data returned per query. An excessively large result set can cause receive latency on the client or even out-of-memory (OOM) errors.

Monitoring recommendations:

If result set size increases abnormally, check whether the queries lack pagination or contain unoptimized logic.
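One way to spot an abnormal increase is to compare each query's latest result set size against its own historical median. The sketch below assumes you can collect per-query result sizes, for example grouped by a normalized SQL fingerprint; the 5x growth factor, the fingerprint keys, and the function name are hypothetical.

```python
from statistics import median
from typing import Dict, List


def abnormal_result_sets(history: Dict[str, List[int]], latest: Dict[str, int],
                         growth_factor: float = 5.0) -> List[str]:
    """Return query fingerprints whose latest result size (bytes) exceeds
    `growth_factor` times their historical median.

    Flagged queries are candidates to check for missing pagination or unoptimized logic.
    """
    flagged = []
    for fingerprint, size in latest.items():
        past = history.get(fingerprint)
        if not past:
            continue  # no baseline yet for this query
        if size > growth_factor * median(past):
            flagged.append(fingerprint)
    return flagged


hist = {"q_orders": [1000, 1200, 1100], "q_users": [500, 600]}
print(abnormal_result_sets(hist, {"q_orders": 9000, "q_users": 700}))
```

The median is used instead of the mean so that a single earlier oversized result does not inflate the baseline.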

Metric 5: Analysis Engine Memory Utilization

Memory usage consists mainly of the Block Cache (configurable) and runtime memory (Runtime Mem).

Monitoring recommendations:

Block Cache usage is usually stable. A sudden increase in Runtime Mem indicates that a SQL query is producing an oversized intermediate result set. In that case, optimize the query or adjust the cache policy.
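The distinction above, a stable Block Cache versus a spike in Runtime Mem, can be checked by comparing consecutive samples of each component. This sketch assumes both components are reported separately in bytes; the 50% jump ratio, the 10% Block Cache stability tolerance, and the function name are hypothetical.

```python
from typing import List, Tuple


def runtime_mem_spikes(samples: List[Tuple[int, int]], jump_ratio: float = 0.5,
                       cache_tolerance: float = 0.1) -> List[int]:
    """Return indexes where Runtime Mem jumps sharply while Block Cache stays stable.

    Each sample is (block_cache_bytes, runtime_mem_bytes), oldest first.
    """
    spikes = []
    for i in range(1, len(samples)):
        prev_cache, prev_rt = samples[i - 1]
        cache, rt = samples[i]
        # Block Cache counts as stable if it moved by at most `cache_tolerance`.
        cache_stable = abs(cache - prev_cache) <= cache_tolerance * prev_cache
        # Runtime Mem spikes if it grew by more than `jump_ratio` since the last sample.
        if cache_stable and prev_rt > 0 and (rt - prev_rt) / prev_rt > jump_ratio:
            spikes.append(i)
    return spikes


GB = 1024 ** 3
print(runtime_mem_spikes([(8 * GB, 2 * GB), (8 * GB, 2 * GB), (8 * GB, 6 * GB)]))
```

A flagged index points to the time window in which to look for the query that produced the oversized intermediate result set.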

Capacity Evaluation Metrics

Metric 1: Analysis Engine Storage Utilization/Usage

Disk space is pre-allocated. When utilization exceeds 90%, a protection mechanism is triggered: data synchronization is disabled and only read operations are allowed.

Monitoring recommendations:

Set the utilization alarm threshold at 80% and plan in advance for scale-out to avoid business interruption.
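The 80% alarm threshold and the 90% protection threshold also make it possible to estimate how much time remains to scale out. The sketch below assumes roughly linear daily growth, which real workloads may not follow; the function names and inputs are hypothetical.

```python
ALARM_RATIO = 0.80       # recommended alarm threshold
PROTECTION_RATIO = 0.90  # above this, sync is disabled and only reads are allowed


def storage_status(used_gb: float, capacity_gb: float) -> str:
    """Classify storage utilization against the alarm and protection thresholds."""
    ratio = used_gb / capacity_gb
    if ratio > PROTECTION_RATIO:
        return "protected"  # data synchronization disabled, read-only
    if ratio >= ALARM_RATIO:
        return "alarm"      # plan scale-out now
    return "ok"


def days_until_protection(used_gb: float, capacity_gb: float, daily_growth_gb: float) -> float:
    """Days until utilization reaches 90%, assuming constant (linear) daily growth."""
    headroom_gb = PROTECTION_RATIO * capacity_gb - used_gb
    if headroom_gb <= 0:
        return 0.0
    if daily_growth_gb <= 0:
        return float("inf")
    return headroom_gb / daily_growth_gb


print(storage_status(800, 1000), days_until_protection(800, 1000, 10))
```

For example, a 1,000 GB instance using 800 GB and growing by 10 GB per day has about (900 - 800) / 10 = 10 days before the protection mechanism is triggered, which is the window available for scale-out.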

Synchronization Evaluation Metrics

Metric 1: Analysis Engine Data Latency

This metric measures data synchronization latency between row-store nodes and columnar nodes and is critical for keeping data consistent in real time.

Monitoring recommendations:

If latency becomes abnormal, promptly check for network problems, excessive load, or failures in the synchronization link.

피드백