Practical Tutorial on Implementing Machine Learning with DLC + WeData

Data Lake Compute (DLC) lets you create machine learning resource groups on Spark Standard Engines to support machine learning scenarios such as model training.

This document walks you through training a model with the scikit-learn framework, using the demo dataset and example code we provide.

Note:

Resource group: A resource group is a second-level queue that subdivides the computing resources of a Spark Standard Engine. Each resource group belongs to its parent Standard Engine, and resource groups under the same engine share that engine's resources.

The computing units (CUs) of a DLC Spark Standard Engine can be allocated to multiple resource groups as needed. For each resource group, you can configure the minimum and maximum number of usable CUs, start and stop policies, concurrency, dynamic or static parameters, and other settings. This lets you manage resource isolation and workloads efficiently in complex scenarios such as multi-tenant and multi-task environments, keeps different types of tasks isolated from each other, and prevents a few large queries from occupying resources for extended periods.

Currently, DLC machine learning resource groups, WeData Notebook exploration, and WeData machine learning are available only to allowlisted accounts. To use them, submit a ticket asking the DLC and WeData teams to enable the machine learning resource group, Notebook, and MLflow services.
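The CU allocation described in the note above can be sanity-checked before you configure resource groups in the console. The sketch below is a hypothetical planning helper, not a DLC API: the `ResourceGroupPlan` class and the rule that the minimum CUs of all groups must fit within the engine's total CUs are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ResourceGroupPlan:
    """Hypothetical description of one resource group's CU limits (not a DLC API)."""
    name: str
    min_cu: int
    max_cu: int


def validate_plan(engine_cu: int, groups: list[ResourceGroupPlan]) -> list[str]:
    """Return human-readable problems found in a resource group plan.

    Assumption: each group's minimum is reserved for it, so the minimums
    together must not exceed the engine's CUs, and no single group's
    maximum may exceed the engine size.
    """
    problems = []
    for g in groups:
        if g.min_cu < 0 or g.min_cu > g.max_cu:
            problems.append(f"{g.name}: min_cu must be between 0 and max_cu")
        if g.max_cu > engine_cu:
            problems.append(f"{g.name}: max_cu exceeds the engine's {engine_cu} CUs")
    total_min = sum(g.min_cu for g in groups)
    if total_min > engine_cu:
        problems.append(f"sum of min_cu ({total_min}) exceeds the engine's {engine_cu} CUs")
    return problems


plan = [
    ResourceGroupPlan("ml-sklearn", min_cu=16, max_cu=48),
    ResourceGroupPlan("sql-adhoc", min_cu=16, max_cu=64),
]
print(validate_plan(64, plan))  # → []
```

Overlapping maximums are deliberately allowed in this sketch, because resource groups under the same engine share its resources.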

Activating Accounts and Products


DLC account and product activation must be performed with the Tencent Cloud root account. Once the root account completes these operations, all sub-accounts under it can use these features by default; you can adjust their permissions with Cloud Access Management (CAM) if needed. For detailed steps, see Complete Process for New User Activation.

For WeData account and product activation, see Preparations and Data Lake Compute (DLC).

Activation of the allowlisted features and the MLflow service is also performed per root account: once it is completed for the root account, all sub-accounts under that root account can use these features.

When requesting activation, provide your region, APPID, root account UIN, VPC ID, and subnet ID. The VPC and subnet information is used to set up network interconnection for the MLflow service.

Note:

Because several product features require network access, perform subsequent operations (including purchasing execution resource groups and creating Notebook workspaces) within this VPC and subnet to ensure network connectivity.

Configuring Data Access Policies

A data access policy (CAM role ARN) lets you configure data access permissions in Cloud Access Management (CAM) to keep data sources and Cloud Object Storage (COS) secure while data jobs run.

When configuring a data job in DLC, you need to specify the corresponding data access policy to ensure data security.
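Tencent Cloud CAM role ARNs are commonly written as `qcs::cam::uin/<root account UIN>:roleName/<role name>`. The sketch below checks that a string has this shape before you paste it into a DLC or WeData form. The exact pattern is an assumption based on that common format, so treat it as a convenience check, not authoritative validation.

```python
import re

# Assumed shape of a Tencent Cloud CAM role ARN:
#   qcs::cam::uin/<numeric UIN>:roleName/<role name>
ROLE_ARN_PATTERN = re.compile(
    r"^qcs::cam::uin/(?P<uin>\d+):roleName/(?P<role>[\w+=,.@-]+)$"
)


def parse_role_arn(arn: str) -> dict:
    """Split a role ARN into its UIN and role name, or raise ValueError."""
    match = ROLE_ARN_PATTERN.match(arn.strip())
    if match is None:
        raise ValueError(f"not a CAM role ARN: {arn!r}")
    return match.groupdict()


print(parse_role_arn("qcs::cam::uin/100000000001:roleName/dlc-data-access"))
# → {'uin': '100000000001', 'role': 'dlc-data-access'}
```

The UIN and role name above are placeholders; use the role you created in CAM.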

Purchasing Computing Resources on DLC

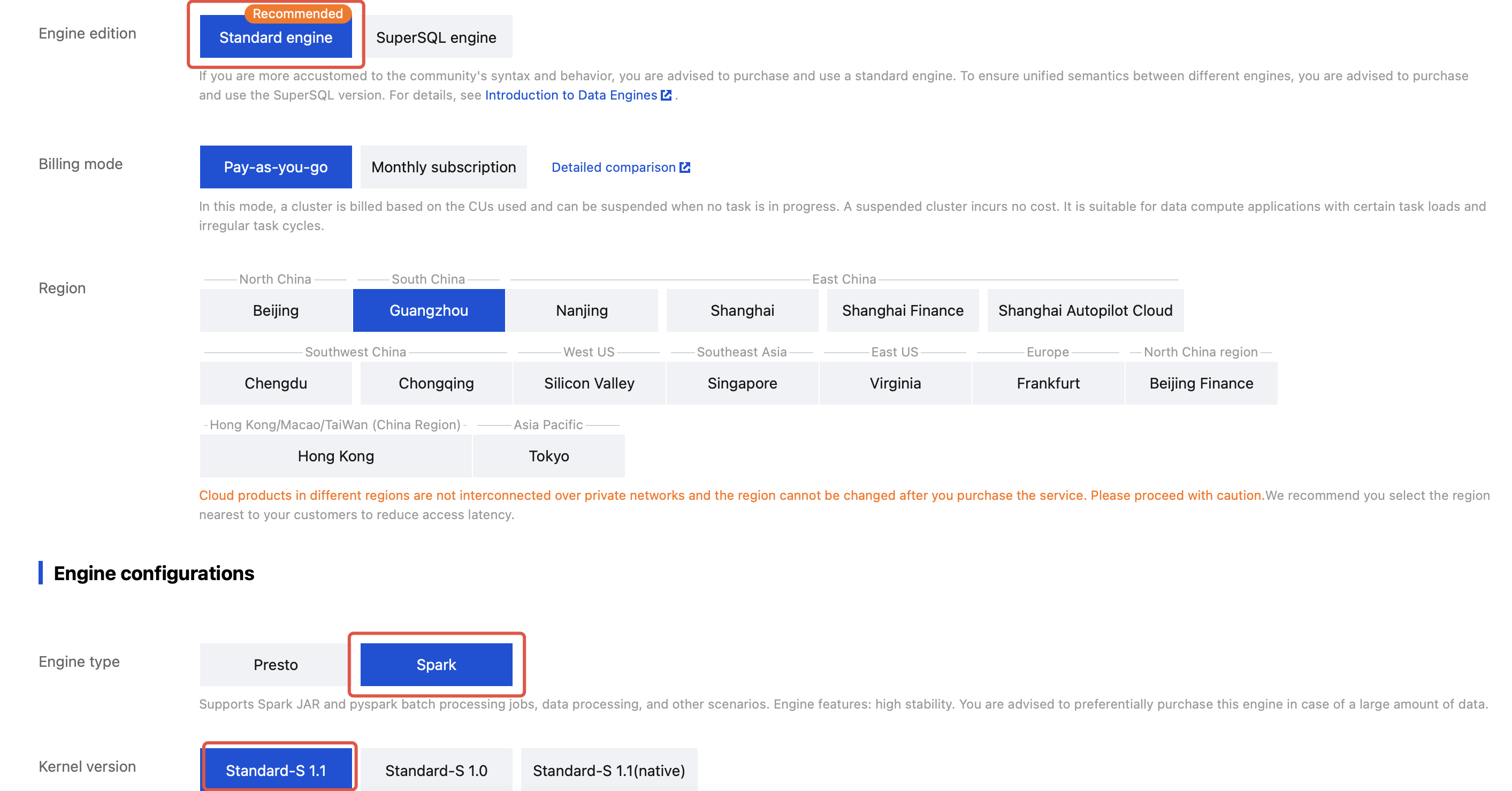

After the product services are activated, purchase computing resources in DLC. To use the machine learning feature, make sure the purchased engine type is Standard Engine-spark and the kernel version is Standard-S 1.1.

1. Go to the Data Lake Compute (DLC) > Standard Engine page.

2. Select "Creating Resources".

3. Purchase Standard Engine-spark, and select the kernel version: Standard-S 1.1.

Note:

1. Make the purchase with the root account or an account that has financial permissions.

2. Select the billing mode that fits your business scenario.

3. We recommend a cluster specification of 64 CUs or more.

4. After purchase, the engine's first startup may take several minutes. If startup does not complete after a long wait, submit a ticket.

Creating Machine Learning Resource Groups

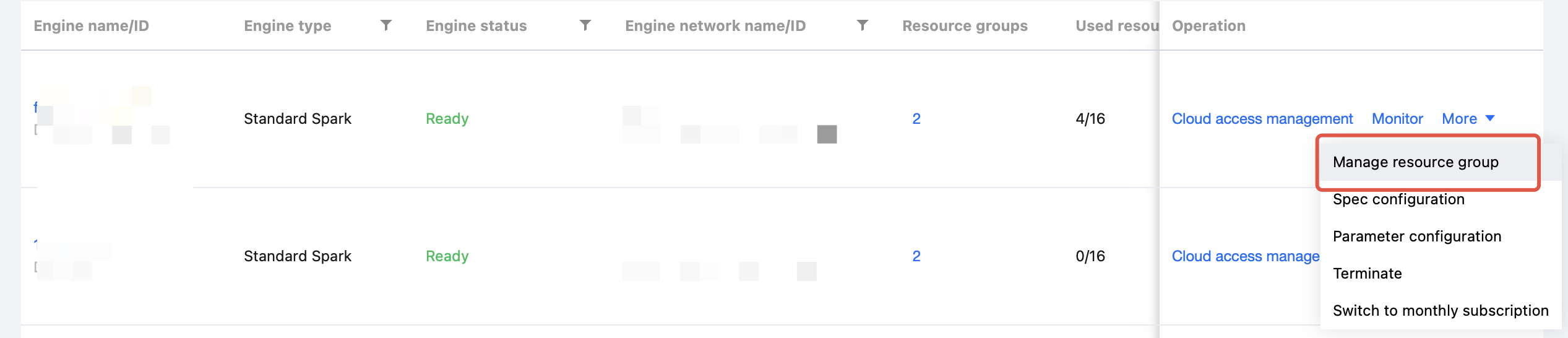

After purchasing the Standard Engine, return to the Engine Management page and create a machine learning resource group under this engine before using the machine learning features.

Note:

A resource group cannot be edited after it is created. To change its configuration, delete it and create a new resource group.

1. Click Manage Resource Group.

2. On the resource group page, click the Create Resource Group button in the upper-left corner.

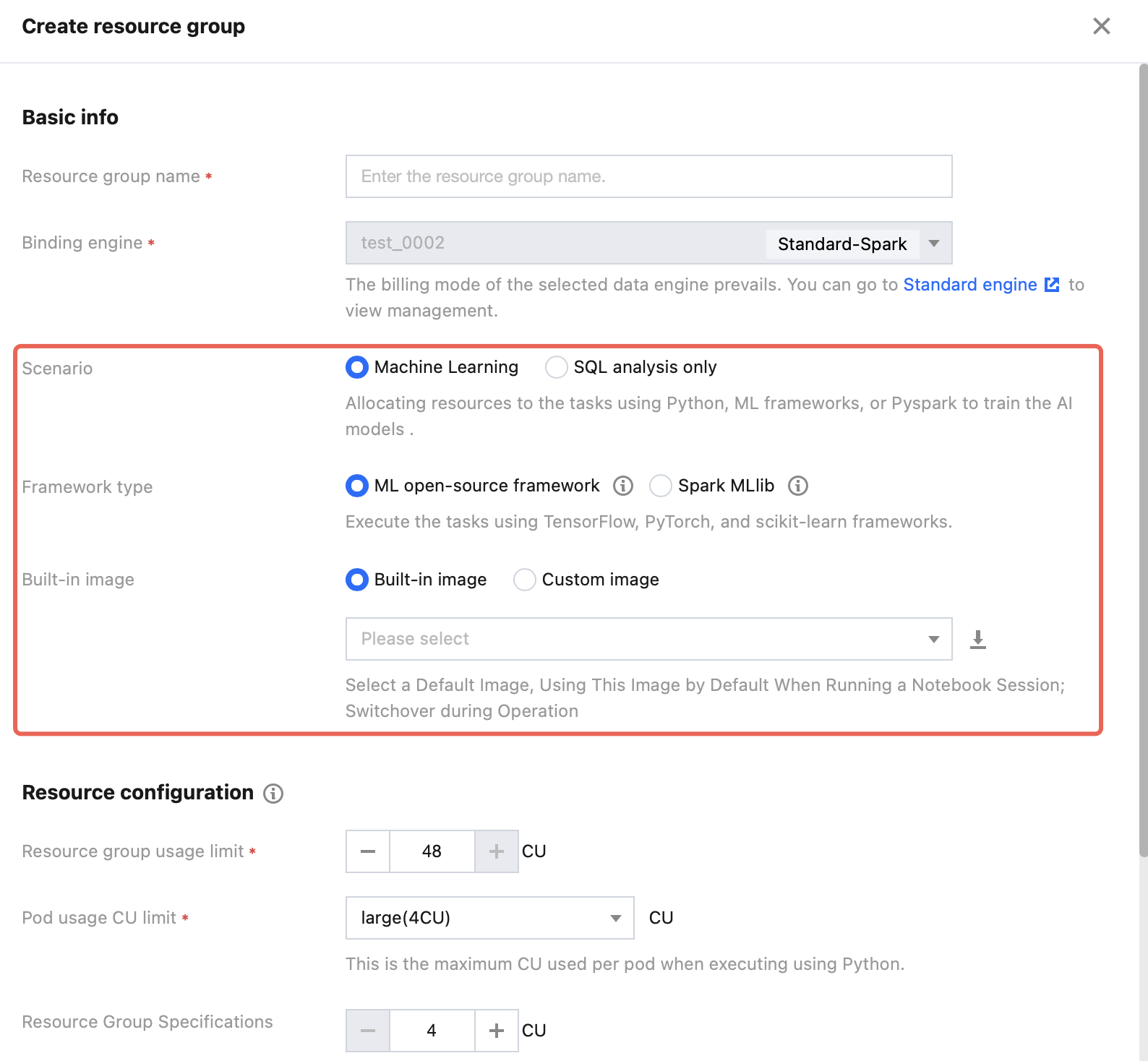

3. Create a resource group for machine learning.

Note:

DLC AI resource groups currently support machine learning through the following frameworks: scikit-learn (1.6.0), TensorFlow (2.18.0), PyTorch (2.5.1), and Spark MLlib (3.5).

Business scenario selection: Machine learning.

Framework type: Select the framework that suits your business scenario.

To follow the demo in this document, select ML open-source framework and choose the image package scikit-learn-v1.6.0.

Resource configuration: Select resources as needed.

After completing the configuration, click Confirm to return to the resource group list. After several minutes, click the Refresh button at the top of the list to confirm that the resource group has been created.
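Once a Notebook is attached to this resource group later in the tutorial, you can confirm that the kernel actually runs the framework version you selected. A minimal sketch using only the Python standard library; the distribution name `scikit-learn` and the expected version `1.6.0` come from the image package above, and the helper names are illustrative.

```python
from importlib.metadata import PackageNotFoundError, version


def version_matches(installed: str, expected: str) -> bool:
    """True if the release parts of `installed` start with those of `expected`.

    A local suffix such as "+cu121" is ignored, so "2.5.1+cu121" matches "2.5.1".
    """
    installed_parts = installed.split("+")[0].split(".")
    expected_parts = expected.split(".")
    return installed_parts[: len(expected_parts)] == expected_parts


def check_framework(dist_name: str, expected: str) -> str:
    """Report whether the installed distribution matches the expected version."""
    try:
        installed = version(dist_name)
    except PackageNotFoundError:
        return f"{dist_name} is not installed in this kernel"
    status = "OK" if version_matches(installed, expected) else "MISMATCH"
    return f"{dist_name} {installed} (expected {expected}): {status}"


print(check_framework("scikit-learn", "1.6.0"))
```

The same call works for the other frameworks, for example `check_framework("torch", "2.5.1")` on a PyTorch resource group.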

Uploading Machine Learning Datasets to COS

To perform machine learning with DLC and WeData, store your data in Cloud Object Storage (COS), because only cloud data is currently supported.

Note:

Currently, only direct reading of COS data via Spark is supported.

If you need other frameworks, you can use this workaround: upload the data to COS, download it to a local file, and then run the training on that file. Note that the upload and download may take a relatively long time.

Better support for this scenario is under development.
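The upload-then-download workaround can be scripted with the COS Python SDK (cos-python-sdk-v5). This is a sketch under stated assumptions: the SDK is installed in the kernel (`pip install cos-python-sdk-v5`), its `upload_file` and `download_file` methods behave as in the SDK version you install, and the helper names, bucket, and object key shown are hypothetical placeholders.

```python
import os
import tempfile
from typing import Optional


def make_cos_client(region: str, secret_id: str, secret_key: str):
    """Create a COS client with cos-python-sdk-v5."""
    # Imported here so the helpers below also work with any client-like object.
    from qcloud_cos import CosConfig, CosS3Client

    config = CosConfig(Region=region, SecretId=secret_id, SecretKey=secret_key)
    return CosS3Client(config)


def upload_dataset(client, bucket: str, key: str, local_path: str) -> None:
    """Upload a local dataset file to COS under the given object key."""
    client.upload_file(Bucket=bucket, Key=key, LocalFilePath=local_path)


def download_dataset(client, bucket: str, key: str, local_dir: Optional[str] = None) -> str:
    """Download a COS object to a local file and return that file's path."""
    local_dir = local_dir or tempfile.mkdtemp()
    local_path = os.path.join(local_dir, os.path.basename(key))
    client.download_file(Bucket=bucket, Key=key, DestFilePath=local_path)
    return local_path


# Hypothetical usage (bucket names take the form <name>-<APPID>):
# client = make_cos_client("ap-singapore", secret_id, secret_key)
# upload_dataset(client, "examplebucket-1250000000", "datasets/iris.csv", "iris.csv")
# local_file = download_dataset(client, "examplebucket-1250000000", "datasets/iris.csv")
# Then load local_file with the framework of your choice.
```

Passing the client in as an argument keeps the upload and download helpers independent of how credentials are obtained.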

1. Activate the COS product service and create a bucket. For activation methods, see the console quick start.

2. Log in to COS, select a bucket, and upload the dataset.

3. After the upload completes, go to Metadata Management and either click Create Data Catalog or use an existing data catalog for the external table.

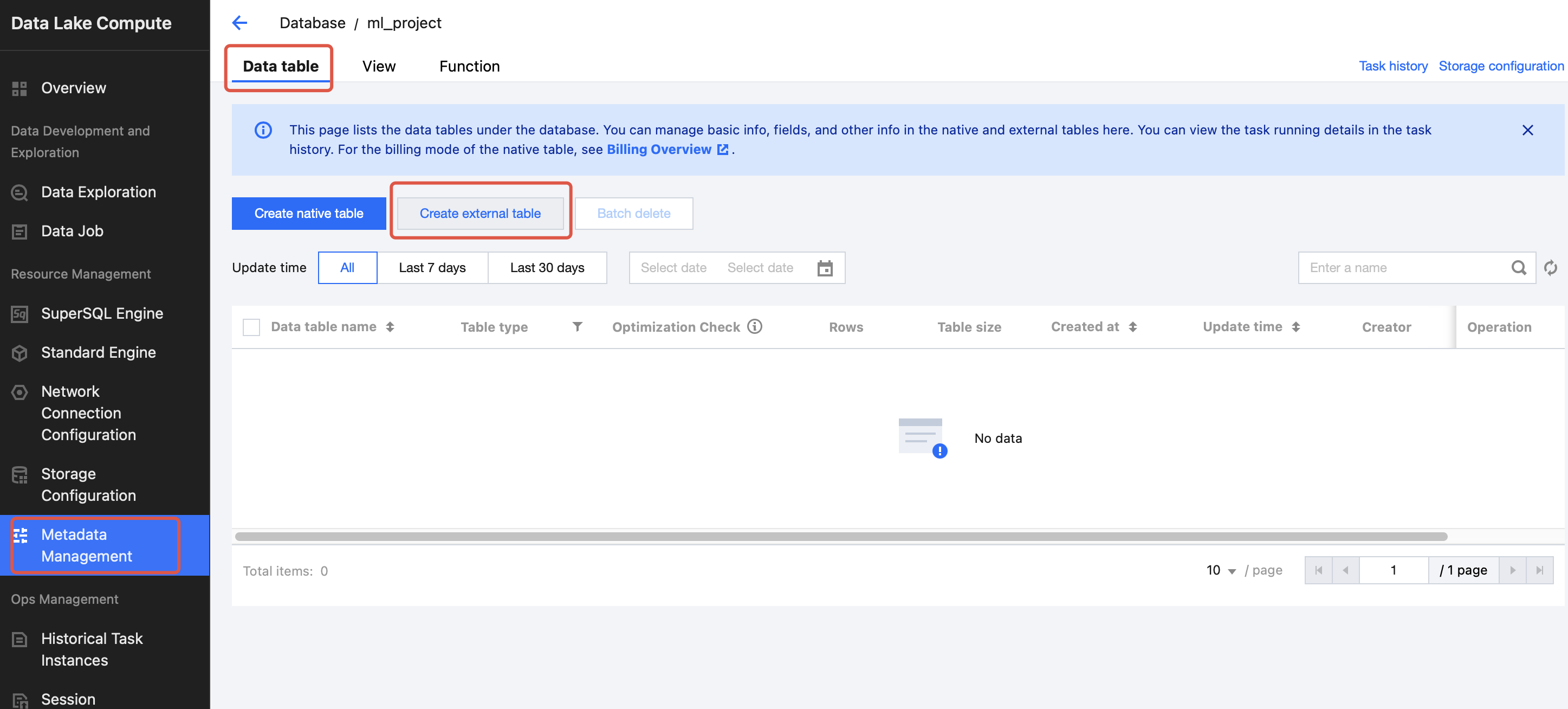

4. Go to the DLC console > Metadata Management and click the Database tab.

5. Create a database named database_testnotebook.

6. Open the database you created and create an external table.

Note:

Note the database and table names of the external table: the Notebook's SELECT statement references them.

7. Select the COS bucket path and find the demo dataset.

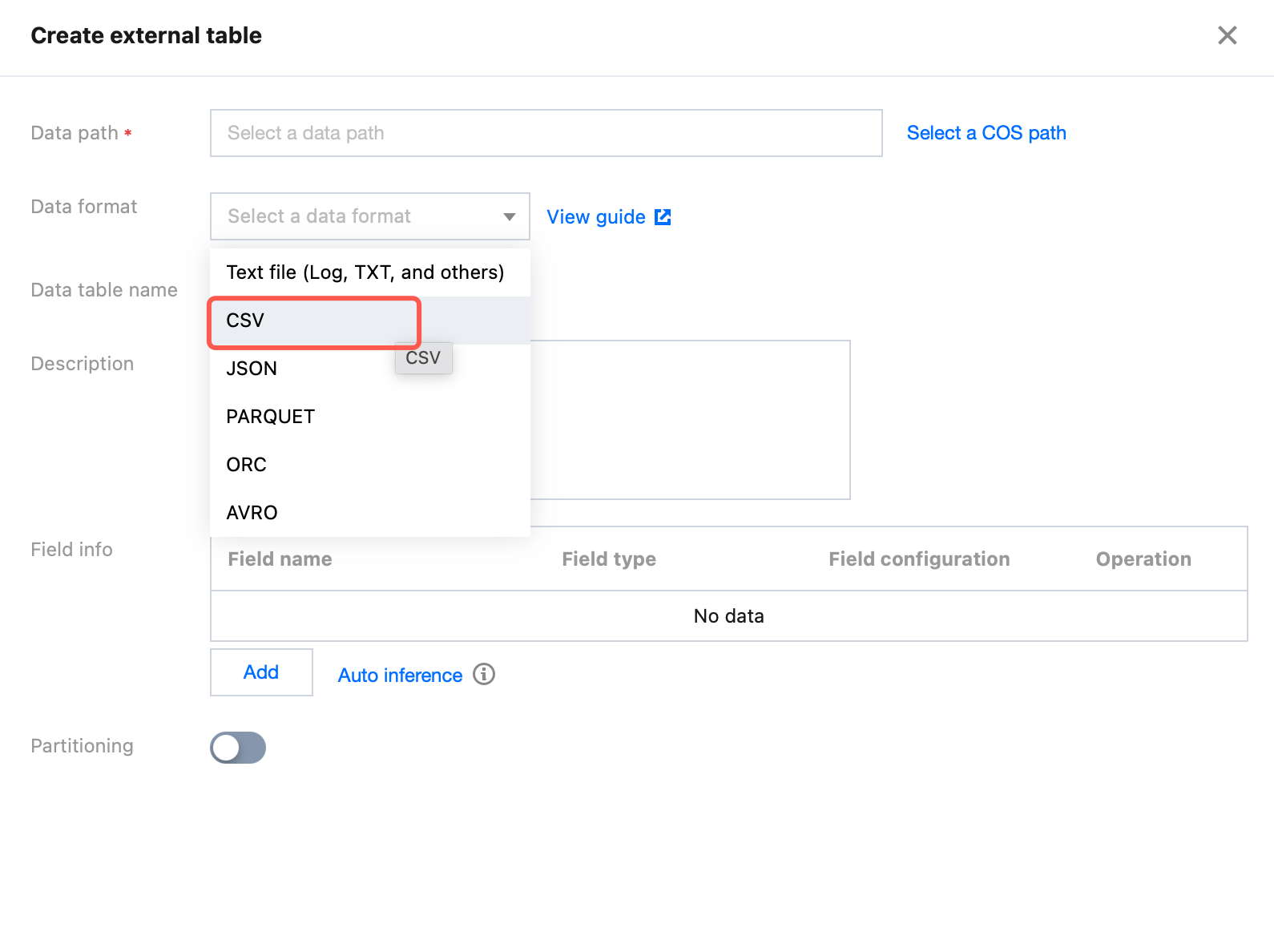

8. Set the data format to CSV and configure the related options.

9. Name the table demo_test_sklearn.

10. After the creation is completed, click Confirm to return.

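You can also create the table with SQL. For details, see Table Creation Practice > Creating External Tables in CSV Format. Adjust the column definitions to match the columns of your dataset; if the CSV file has a header row, you can add OPTIONS (header 'true') after USING csv so that the header line is not read as data.

```sql
CREATE TABLE database_testnotebook.demo_test_sklearn (
  at STRING COMMENT 'from deserializer',
  v  STRING COMMENT 'from deserializer',
  ap STRING COMMENT 'from deserializer',
  rh STRING COMMENT 'from deserializer',
  pe STRING COMMENT 'from deserializer'
)
USING csv
LOCATION 'cosn://your cos location'
```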
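The Notebook later in this tutorial queries Iris columns with dotted names such as `sepal.length`, which Spark SQL only accepts when quoted with backticks. The sketch below is a hypothetical helper that turns a CSV header line into a matching CREATE TABLE statement, typing every column as STRING like the statement above and optionally adding the CSV `header` option; the function name and the all-STRING typing are illustrative choices.

```python
import csv
import io


def build_create_table(db: str, table: str, header_line: str, location: str,
                       has_header: bool = True) -> str:
    """Build a CSV external-table DDL with one STRING column per header field."""
    columns = next(csv.reader(io.StringIO(header_line)))
    # Backticks let Spark SQL accept column names that contain dots.
    column_defs = ", ".join(f"`{name.strip()}` STRING" for name in columns)
    # header 'true' makes Spark skip the first line of each CSV file.
    options = " OPTIONS (header 'true')" if has_header else ""
    return (f"CREATE TABLE {db}.{table} ({column_defs}) "
            f"USING csv{options} LOCATION '{location}'")


ddl = build_create_table(
    "database_testnotebook",
    "demo_test_sklearn",
    "sepal.length,sepal.width,petal.length,petal.width,species",
    "cosn://your cos location",
)
print(ddl)
```

Because all columns are STRING, convert numeric columns (for example with `astype(float)` in pandas) before training.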

Going to the WeData-Notebook Feature for Demo Practice

After the resource group and demo dataset are created, go to WeData to practice model training using Notebook and MLflow.

Creating WeData Projects and Associating Them with DLC Engines

1. Create a project or select an existing project. For details, see Project List.

2. In the storage and computing engine configuration, select the required DLC engine.

Purchasing Execution Resource Groups and Associating Them with Projects

If you need to schedule Notebook tasks periodically in the orchestration space, purchase a scheduling resource group and associate it with a designated project. For details, see Scheduling Resource Group Configuration.

Directions:

1. Go to "Execution Resource Group > Scheduling Resource Group > Standard Scheduling Resource Group" and click Create.

2. Configure the resource group.

Region: The scheduling resource group must be in the same region as the storage and computing engine. For example, if you purchased a DLC engine in the Singapore region of the international site, purchase the scheduling resource group in the same region.

VPC and subnet: We recommend selecting the VPC and subnet of the Standard-S 1.1 engine. If you select a different VPC or subnet, make sure it is interconnected with the engine's VPC and subnet.

Specifications: Select a specification based on your task volume.

3. After the creation is completed, click "Associate Project" in the operation column of the resource group list to associate this scheduling resource group with the desired project.
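The region and network rules above can be written as a pre-flight check. This sketch assumes you have collected each resource's region, VPC ID, and subnet ID yourself, for example from the console; the `Resource` type, the IDs, and the `interconnected` set of VPC IDs you have confirmed as connected are hypothetical placeholders, and the check does not call any Tencent Cloud API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """Hypothetical record of where a resource lives."""
    name: str
    region: str
    vpc_id: str
    subnet_id: str


def preflight(engine: Resource, others: list, interconnected: frozenset = frozenset()) -> list:
    """List region mismatches and networks not shared with or connected to the engine."""
    problems = []
    for res in others:
        if res.region != engine.region:
            problems.append(f"{res.name}: region {res.region} differs from engine region {engine.region}")
        same_network = (res.vpc_id, res.subnet_id) == (engine.vpc_id, engine.subnet_id)
        if not same_network and res.vpc_id not in interconnected:
            problems.append(f"{res.name}: VPC/subnet not shared with or interconnected to the engine")
    return problems


engine = Resource("dlc-standard-s-1.1", "ap-singapore", "vpc-aaaa", "subnet-1111")
scheduler = Resource("wedata-scheduler", "ap-singapore", "vpc-aaaa", "subnet-1111")
notebook = Resource("notebook-workspace", "ap-shanghai", "vpc-bbbb", "subnet-2222")

for problem in preflight(engine, [scheduler, notebook]):
    print(problem)
```

Here the scheduling resource group passes, while the hypothetical Notebook workspace is flagged twice: once for its region and once for its network.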

Creating Notebook Workspaces

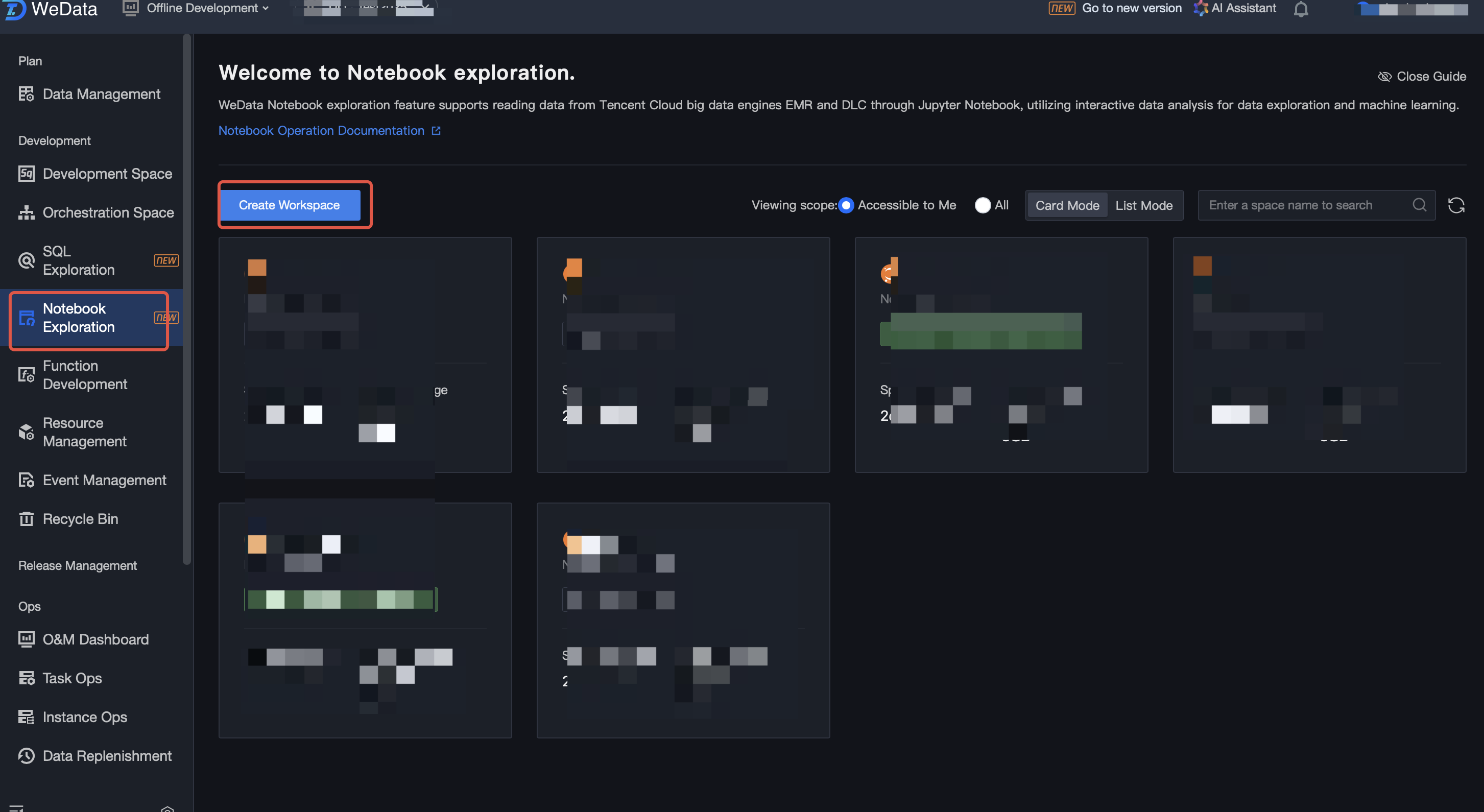

1. In the project, go to the data governance features, click Notebook, and create a workspace or use an existing one.

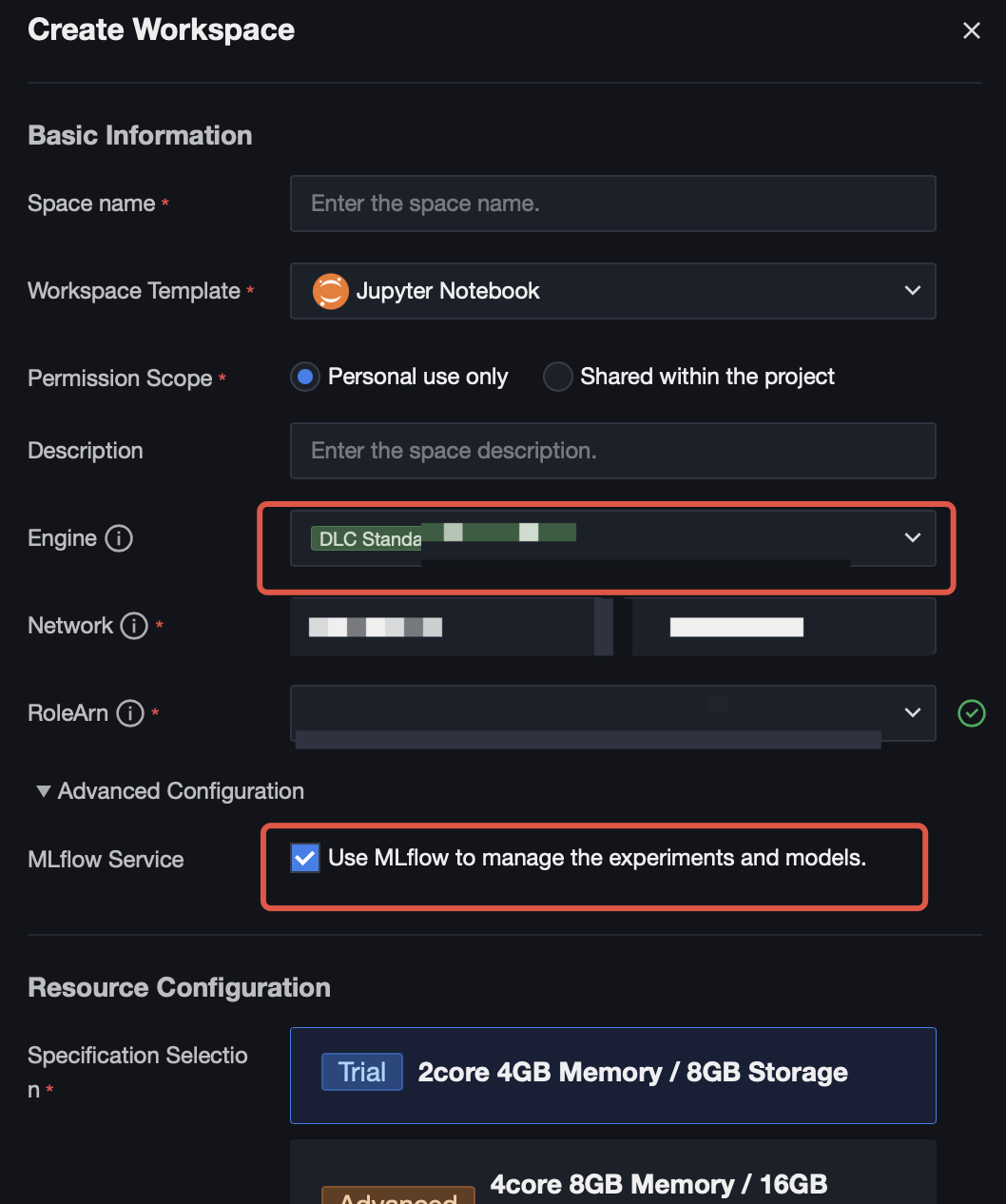

2. When creating a workspace, select the Standard Spark Engine you purchased (kernel version Standard-S 1.1), and select the machine learning and MLflow service options.

| Category | Item | Configuration |
| --- | --- | --- |
| Basic Information | Engines | Select the DLC engine that Notebook tasks will use. Only DLC engines bound to the current project in Project Management are available. |
| | DLC Data Engine | Select the DLC data engine that Notebook tasks will use. |
| | Machine Learning | Appears, and is selected by default, if the selected DLC data engine contains a resource group of the "machine learning" type. |
| | Network | We recommend selecting the VPC and subnet of the Standard-S 1.1 engine. If you select a different VPC or subnet, make sure it is interconnected with the engine's VPC and subnet. |
| | RoleArn | The data access policy (CAM role ARN) that the DLC engine uses to access Cloud Object Storage (COS). For details, see Configuring Data Access Policies. |
| Advanced Configuration | MLflow Service | Uses MLflow to manage experiments and models; not selected by default. When selected, experiments and machine learning runs created with MLflow functions in Notebook tasks are reported to the MLflow service deployed in Standard-S 1.1, and you can view them later under Machine Learning > Experiment Management and Model Management. |

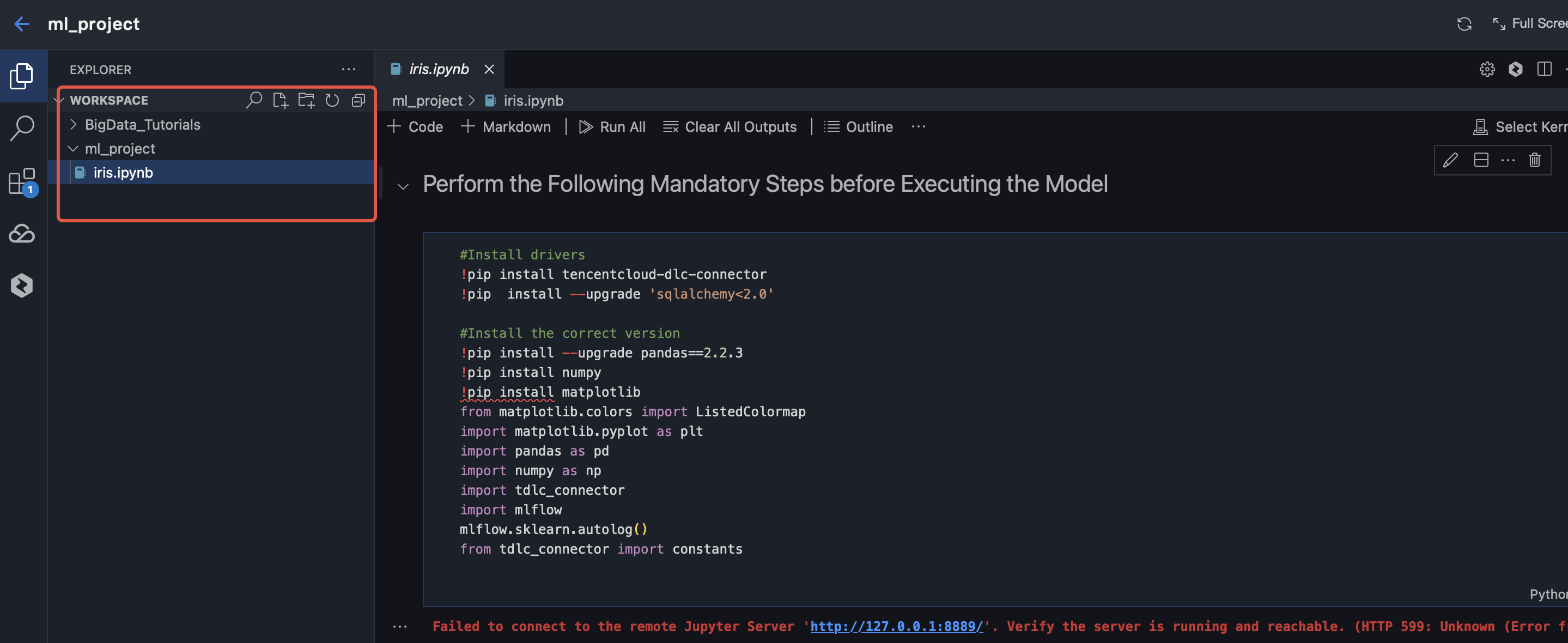

Creating Notebook Files

In the resource explorer on the left, you can create folders and Notebook files. Notebook file names must end with the .ipynb extension. The resource explorer also includes three preset Big Data tutorials that you can use out of the box.

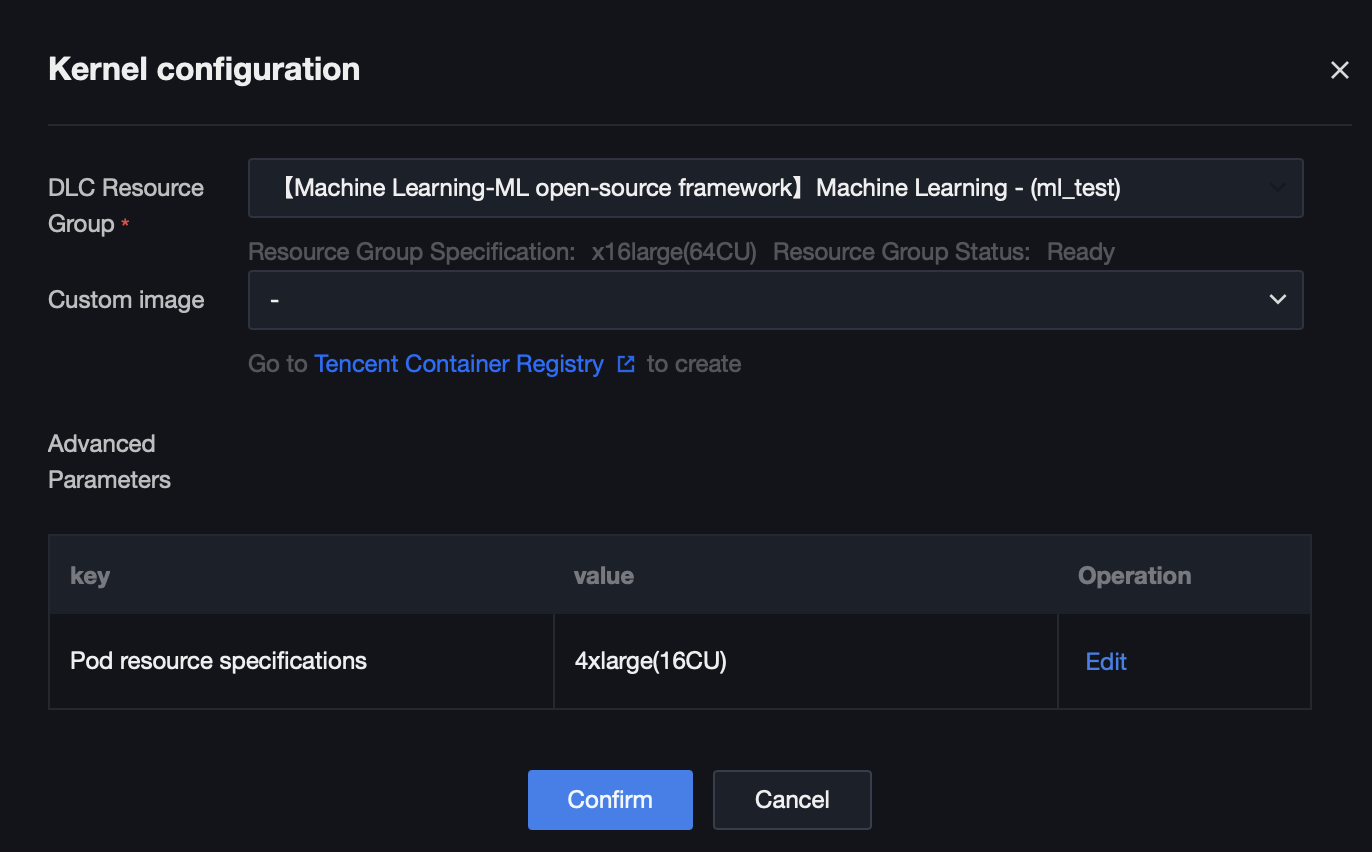

Selecting Kernels

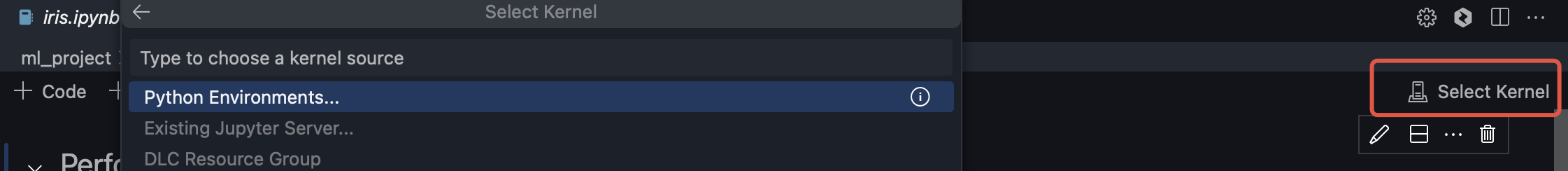

1. Click Select Kernel.

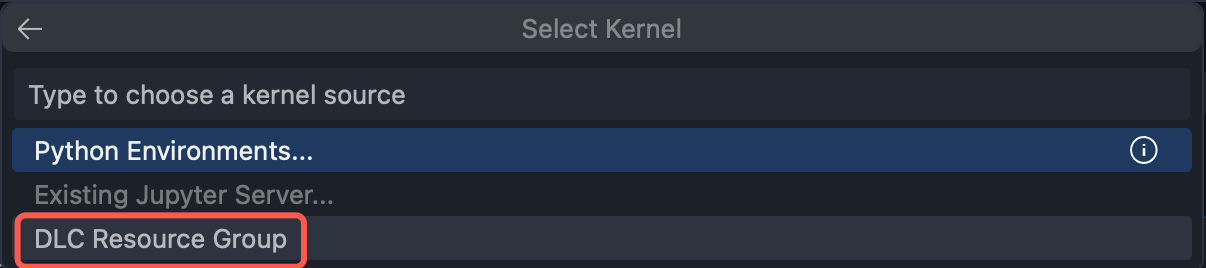

2. Select "DLC resource group" from the dropdown list that appears.

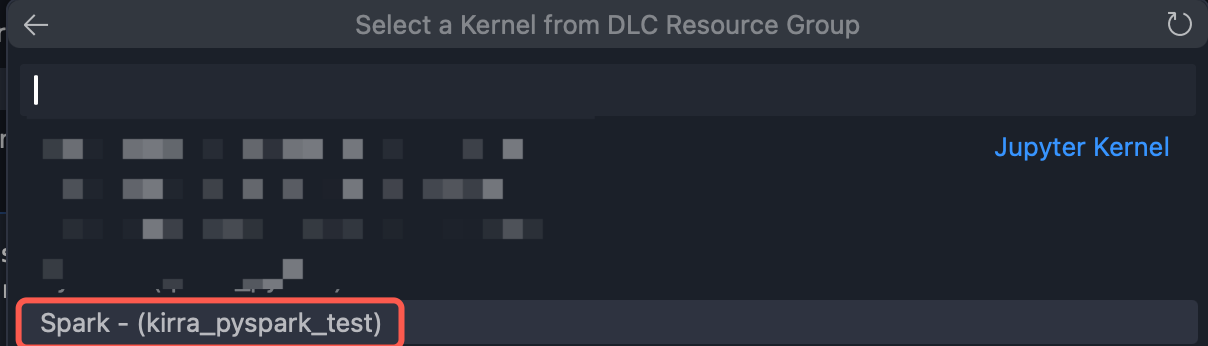

3. Select the scikit-learn resource group you created in the DLC data engine from the next-level options.

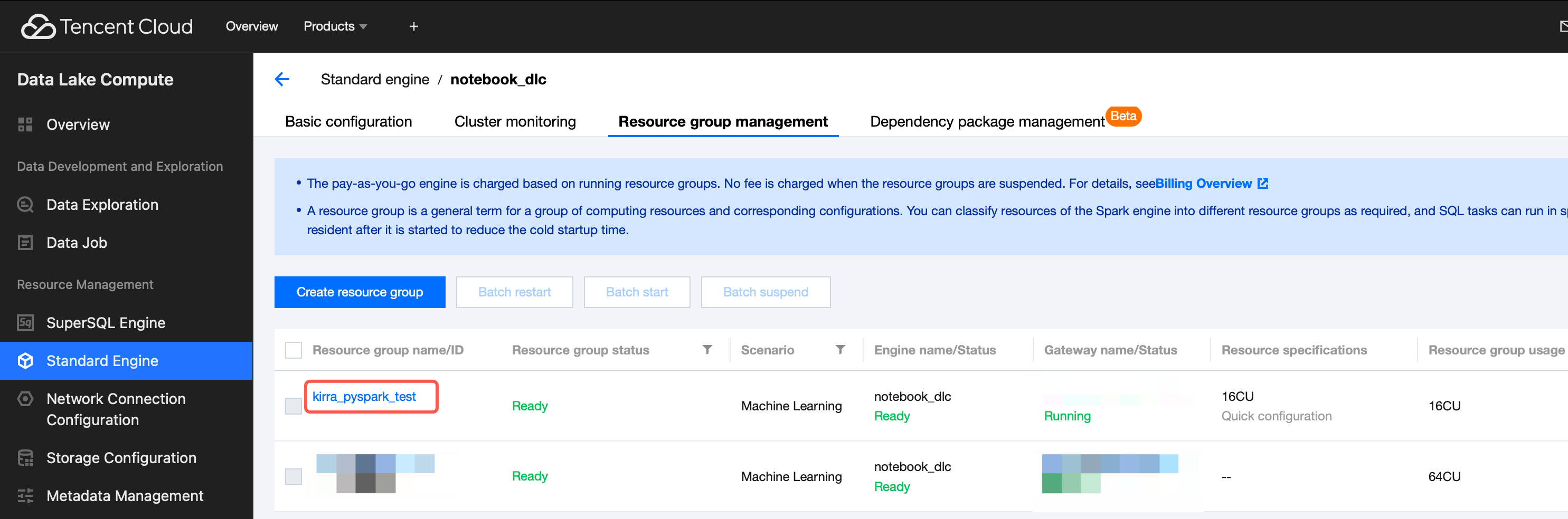

For example, the resource group "Spark - (kirra_pyspark_test)" selected in the figure above matches the name of the resource group in the DLC data engine.

Running Notebook Files

1. Confirm the initialization configuration.

2. Run the best-practice example: it uses the public Iris dataset to train a logistic regression model that classifies the flower species, and then visualizes the classification results.

Note:

Before running the model, install tencentcloud-dlc-connector and complete the corresponding configuration.
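The Notebook code passes `secret_id` and `secret_key` directly to `tdlc_connector.connect`. To avoid hard-coding keys in a notebook that others may open, you can read them from environment variables instead. A minimal sketch; the variable names `TENCENTCLOUD_SECRET_ID` and `TENCENTCLOUD_SECRET_KEY` are an assumed convention, so use whichever names your environment defines.

```python
import os


def load_credentials(id_var: str = "TENCENTCLOUD_SECRET_ID",
                     key_var: str = "TENCENTCLOUD_SECRET_KEY") -> tuple:
    """Return (secret_id, secret_key) from environment variables, failing loudly if absent."""
    missing = [name for name in (id_var, key_var) if not os.environ.get(name)]
    if missing:
        raise RuntimeError("set these environment variables first: " + ", ".join(missing))
    return os.environ[id_var], os.environ[key_var]


# Usage with the connector call shown in this tutorial:
# secret_id, secret_key = load_credentials()
# conn = tdlc_connector.connect(region="ap-***", secret_id=secret_id, secret_key=secret_key, ...)
```

Failing with the names of the missing variables makes a misconfigured kernel obvious before any query is sent.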

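```python
# Install the driver and pin the library versions used in this example.
!pip install tencentcloud-dlc-connector
!pip install --upgrade 'sqlalchemy<2.0'
!pip install --upgrade pandas==2.2.3
!pip install numpy
!pip install matplotlib

import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
from matplotlib.colors import ListedColormap
import tdlc_connector
from tdlc_connector import constants
import mlflow
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score

# Log parameters, metrics, and the fitted model to MLflow automatically.
mlflow.sklearn.autolog()

# Use tdlc-connector to query the table.
conn = tdlc_connector.connect(
    region="ap-***",  # Fill in your region, for example ap-singapore or ap-shanghai.
    secret_id="*******",
    secret_key="*******",
    engine="your engine",  # Fill in the name of the engine you purchased.
    resource_group=None,
    engine_type=constants.EngineType.AUTO,
    result_style=constants.ResultStyles.LIST,
    download=True,
)

# Backticks are required because the column names contain dots.
query = """
SELECT `sepal.length`, `sepal.width`, `petal.length`, `petal.width`, species
FROM database_testnotebook.demo_test_sklearn
"""

# Read the data.
iris = pd.read_sql(query, conn)
iris.head()

# Use the two petal features and encode the species labels as integers.
X = iris[['petal.length', 'petal.width']].astype(float).values
category_map = {'setosa': 0, 'versicolor': 1, 'virginica': 2}
y = iris['species'].map(category_map).values

# Split into 70% training and 30% test data, keeping class proportions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1, stratify=y)
print('Labels count in y:', np.bincount(y))
print('Labels count in y_train:', np.bincount(y_train))
print('Labels count in y_test:', np.bincount(y_test))

# Standardize the features using statistics from the training split only.
sc = StandardScaler()
sc.fit(X_train)
X_train_std = sc.transform(X_train)
X_test_std = sc.transform(X_test)
X_combined_std = np.vstack((X_train_std, X_test_std))
y_combined = np.hstack((y_train, y_test))


def plot_decision_regions(X, y, classifier, test_idx=None, resolution=0.02):
    """Plot the decision regions of a fitted classifier on 2-D data."""
    markers = ('o', 's', '^', 'v', '<')
    colors = ('red', 'blue', 'lightgreen', 'gray', 'cyan')
    classes = np.unique(y)
    cmap = ListedColormap(colors[:len(classes)])

    # Predict a class for every point of a grid that covers the data.
    x1_min, x1_max = X[:, 0].min() - 1, X[:, 0].max() + 1
    x2_min, x2_max = X[:, 1].min() - 1, X[:, 1].max() + 1
    xx1, xx2 = np.meshgrid(np.arange(x1_min, x1_max, resolution),
                           np.arange(x2_min, x2_max, resolution))
    lab = classifier.predict(np.array([xx1.ravel(), xx2.ravel()]).T)
    plt.contourf(xx1, xx2, lab.reshape(xx1.shape), alpha=0.3, cmap=cmap)
    plt.xlim(xx1.min(), xx1.max())
    plt.ylim(xx2.min(), xx2.max())

    # Draw the samples of each class.
    for idx, cl in enumerate(classes):
        plt.scatter(x=X[y == cl, 0], y=X[y == cl, 1], alpha=0.8,
                    c=colors[idx], marker=markers[idx],
                    label=f'Class {cl}', edgecolor='black')

    # Circle the test samples.
    if test_idx is not None:
        test_idx = np.asarray(test_idx)
        plt.scatter(X[test_idx, 0], X[test_idx, 1], c='none', edgecolor='black',
                    alpha=1.0, linewidth=1, marker='o', s=100, label='Test set')


# Fit a one-vs-rest logistic regression model.
# multi_class is deprecated since scikit-learn 1.5 but still works in the 1.6.0 image.
lr = LogisticRegression(C=100.0, random_state=1, solver='lbfgs', multi_class='ovr')
lr.fit(X_train_std, y_train)

# Visualize the classification result; the test rows follow the training rows.
plot_decision_regions(X_combined_std, y_combined, classifier=lr,
                      test_idx=range(len(X_train_std), len(X_combined_std)))
plt.xlabel('petal length [standardized]')
plt.ylabel('petal width [standardized]')
plt.legend(loc='upper left')
plt.tight_layout()
plt.show()

# View model accuracy on the test split.
y_pred = lr.predict(X_test_std)
print(accuracy_score(y_test, y_pred))
```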

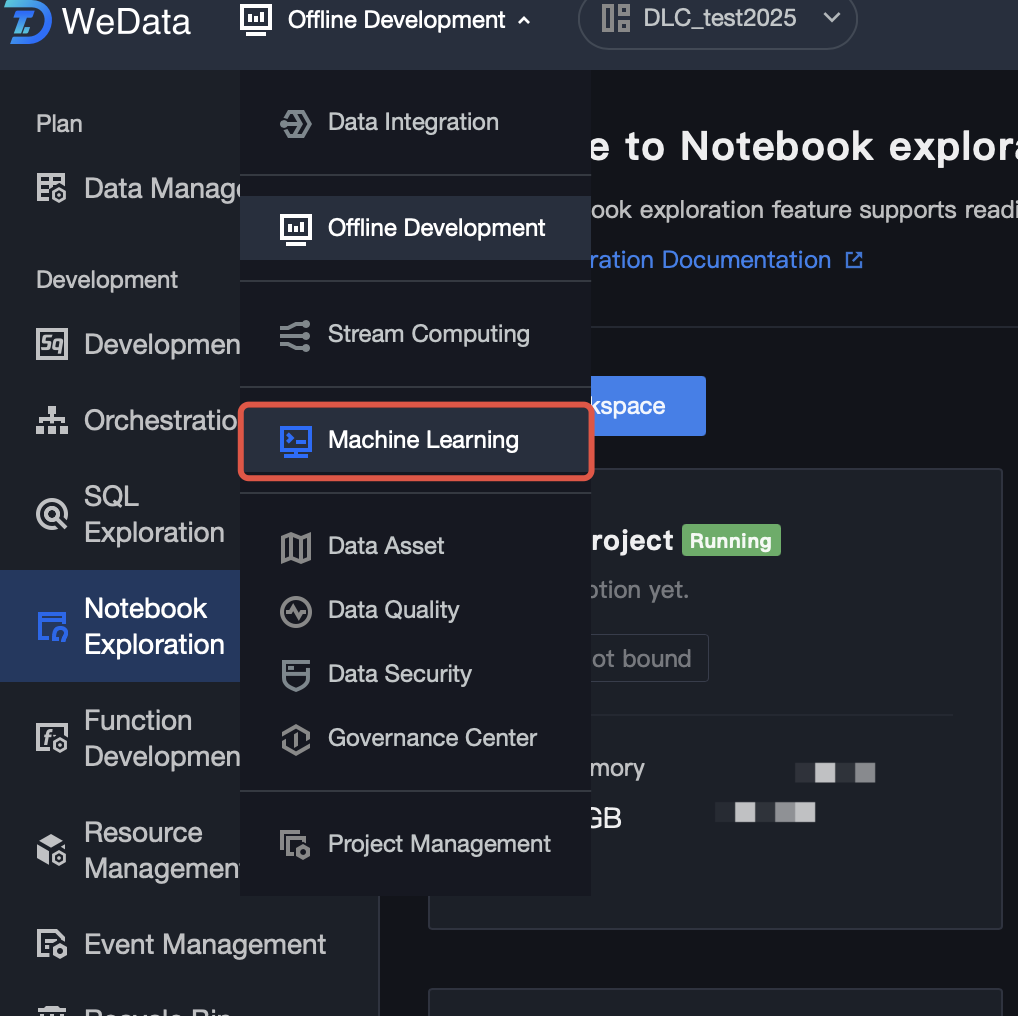

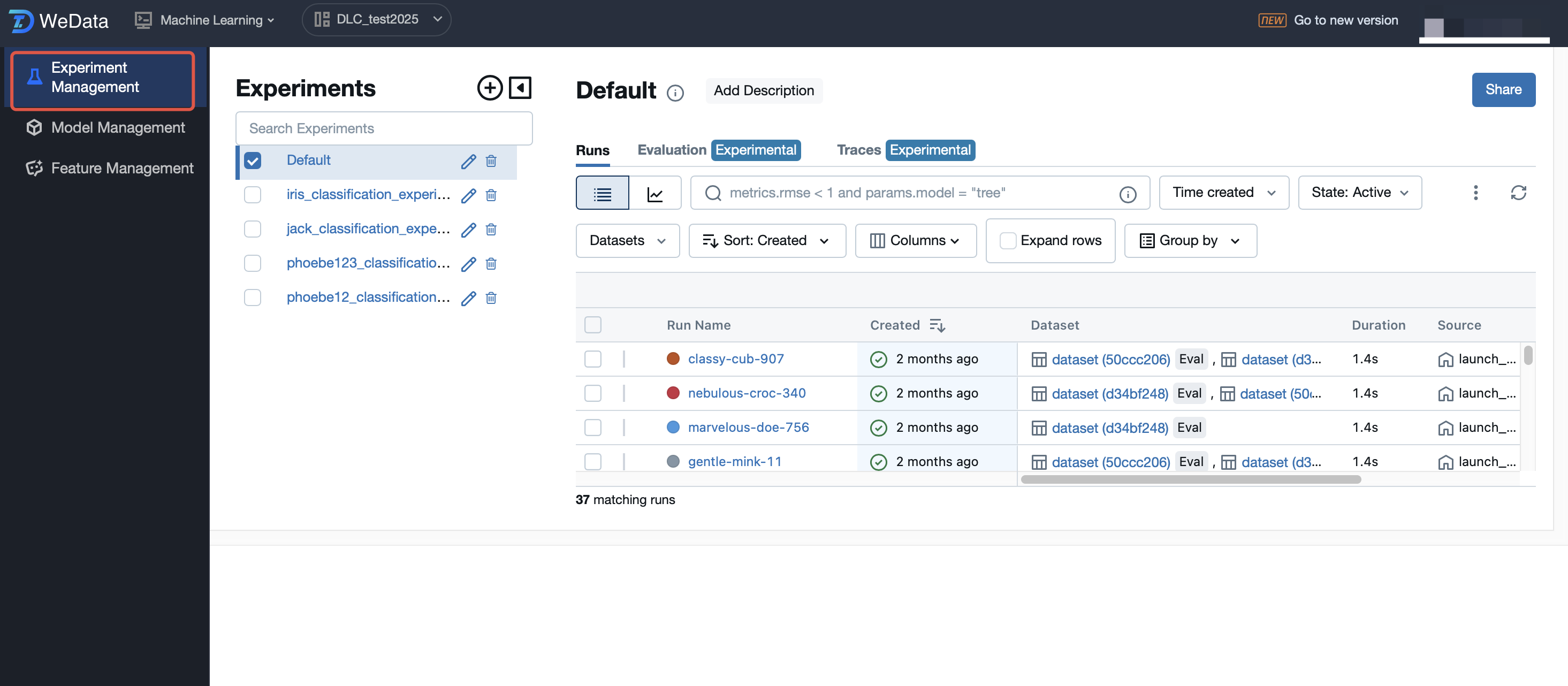

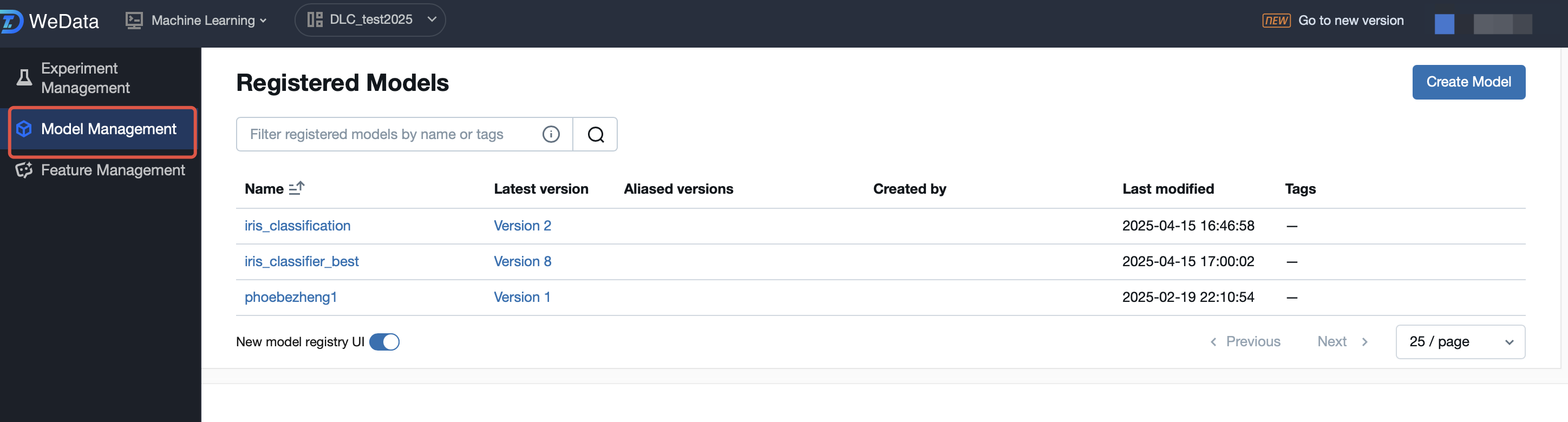
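To check the modeling steps on their own, without DLC, COS, or MLflow, you can repeat the split, scaling, and logistic regression on the copy of Iris bundled with scikit-learn (`sklearn.datasets.load_iris`). This sketch uses the default multinomial logistic regression instead of `multi_class='ovr'`, which is deprecated in recent scikit-learn versions, so its accuracy may differ slightly from the Notebook run.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Bundled Iris data: columns 2 and 3 are petal length and petal width (cm).
data = load_iris()
X = data.data[:, [2, 3]]
y = data.target  # 0 = setosa, 1 = versicolor, 2 = virginica

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1, stratify=y
)

# Fit the scaler on the training split only, then apply it to both splits.
sc = StandardScaler().fit(X_train)
X_train_std, X_test_std = sc.transform(X_train), sc.transform(X_test)

# Default (multinomial) logistic regression with the same C and solver.
lr = LogisticRegression(C=100.0, random_state=1, solver="lbfgs")
lr.fit(X_train_std, y_train)

acc = accuracy_score(y_test, lr.predict(X_test_std))
print(f"test accuracy: {acc:.3f}")
print("labels in y_test:", np.bincount(y_test))  # → [15 15 15]
```

If this local run behaves as expected but the Notebook run does not, the difference most likely lies in the data read from the DLC table (column names, label spelling, or types).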

Going to MLflow to View Training Results and Registered Models

1. Select the machine learning feature.

2. View the experiment records and select the best training result to register as a model.
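The steps above are performed in the WeData console. If you prefer to pick and register the best run from a Notebook, the sketch below uses MLflow's Python API (`mlflow.search_runs` and `mlflow.register_model`). Assumptions: the Notebook is connected to the workspace's MLflow service, runs were logged by `mlflow.sklearn.autolog()` under the default `model` artifact path, and `training_accuracy_score` is the metric you want to rank by. Whether WeData Model Management lists models registered this way is not confirmed here, so check it in the console.

```python
import pandas as pd


def best_run_id(runs: pd.DataFrame, metric: str, higher_is_better: bool = True) -> str:
    """Pick the run_id with the best value of `metric` from an mlflow.search_runs() DataFrame."""
    column = f"metrics.{metric}"
    scored = runs.dropna(subset=[column])
    if scored.empty:
        raise ValueError(f"no runs have a value for {column}")
    best = scored[column].idxmax() if higher_is_better else scored[column].idxmin()
    return scored.loc[best, "run_id"]


def register_best_model(experiment_name: str, model_name: str,
                        metric: str = "training_accuracy_score") -> None:
    """Search an experiment's runs and register the best run's logged model."""
    import mlflow  # imported lazily; requires the workspace's MLflow service

    runs = mlflow.search_runs(experiment_names=[experiment_name])
    run_id = best_run_id(runs, metric)
    # "model" is assumed to be the artifact path used by mlflow.sklearn.autolog().
    mlflow.register_model(f"runs:/{run_id}/model", model_name)
```

Keeping the selection logic in `best_run_id` separate from the MLflow calls lets you inspect or test the ranking on the DataFrame before registering anything.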
