Feature Management

Overview

WeData's feature management module provides offline and online feature storage so that features can be managed in one place. It helps data scientists and data engineers store, read, analyze, and reuse features efficiently during model development, instead of working in silos.

Capability Description

WeData feature management provides the following capabilities:

| Type | Description |
| --- | --- |
| Interface operations | 1. View feature table details, including basic information and feature fields. 2. Batch-import feature tables. 3. Create feature synchronization tasks. |
| Feature Engineering API | Create, read, update, and delete (CRUD), synchronize, and consume online and offline feature tables in Studio. |

Prerequisites

| Type | Description |
| --- | --- |
| Offline feature store | Offline feature tables managed by WeData currently support only DLC Iceberg tables and EMR Hive tables. You must specify a primary key and a timestamp key when registering a feature table. Subsequent operations index features by these two keys. |
| Table operation permissions | DLC: If Catalog is enabled, grant the required database and table permissions in Data Lake Compute (DLC), and grant permissions on the corresponding Catalog in WeData under "Data Assets > Catalog Directory". If Catalog is not enabled, grant the required database and table permissions in DLC only. EMR: Configure the engine access account and account mapping in WeData under "Project Management > Storage-Compute Engine Settings". These settings determine your table permissions. |
| Online feature store | WeData supports Redis as the online feature store. Prepare it as follows. Step 1: Make sure Redis is in the same region and network as the scheduling resource group and the engine (DLC or EMR) you use. Step 2: Add a Redis data source in WeData under "Project Management > Data Source Management" and test its connectivity with the scheduling resource group. Step 3: Set the default online feature library in WeData feature management. |
| Feature engineering package | Connect to the engine and run `%pip install tencent-wedata-feature-engineering` to install the latest feature engineering package. If you use the DLC engine, select the "wedata-data-science" image when creating the resource group. The package is pre-installed there. Run `%pip list \| grep tencent-wedata-feature-engineering` to check the version. |
| Key management | Calling the feature engineering package requires a TencentCloud API SecretId and SecretKey. You can create them in Tencent Cloud CAM. If your organization prohibits transmitting keys in plain text, manage them with Tencent Cloud SSM or Tencent Cloud KMS. |

Interface Operation

Feature Import and Default Library Settings

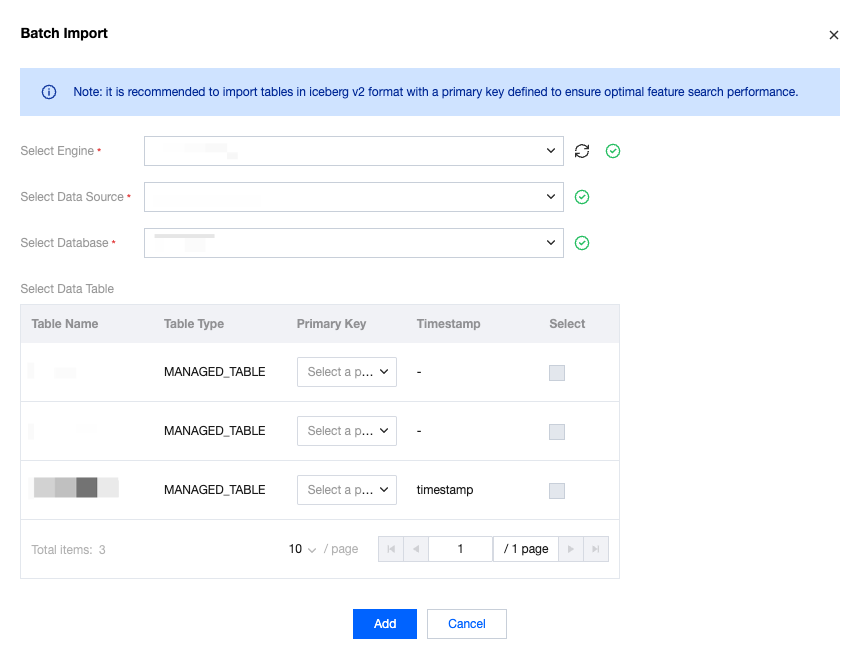

Offline Feature Table Batch Import/Registration

WeData supports batch import (registration) of existing tables as feature tables. Open the feature management page and click Import. Select the engine, data source, and database as prompted, then select the tables and their primary keys. Finally, import them in a batch.

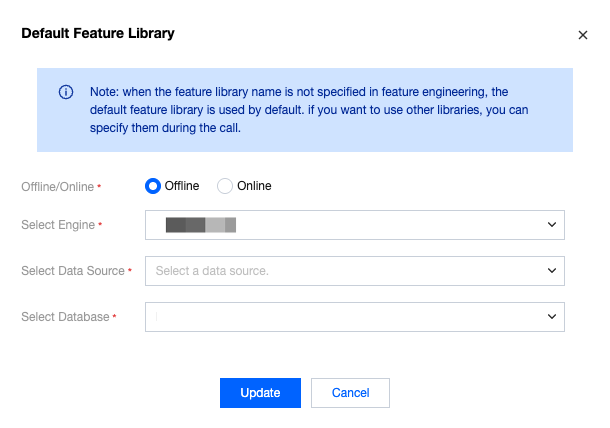

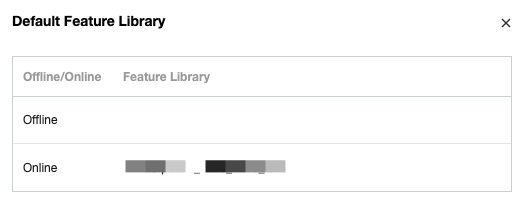

Default Library Settings

WeData lets you set default offline and online feature libraries. When you call the API without specifying a feature library, the default feature library is used. The current default libraries are shown to the right of the module name.
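The fallback rule can be pictured as follows. This is only a conceptual sketch. `FeatureLibrary` and `resolve_feature_library` are hypothetical names, not part of the WeData package, whose actual resolution logic is not documented here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FeatureLibrary:
    """Hypothetical record for a feature library: a data source plus an optional database."""
    data_source_name: str
    database_name: Optional[str] = None


def resolve_feature_library(
    requested: Optional[FeatureLibrary], default: Optional[FeatureLibrary]
) -> FeatureLibrary:
    """Use the library passed in the call; otherwise fall back to the configured default."""
    if requested is not None:
        return requested
    if default is None:
        raise ValueError("No feature library was specified and no default library is set")
    return default
```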

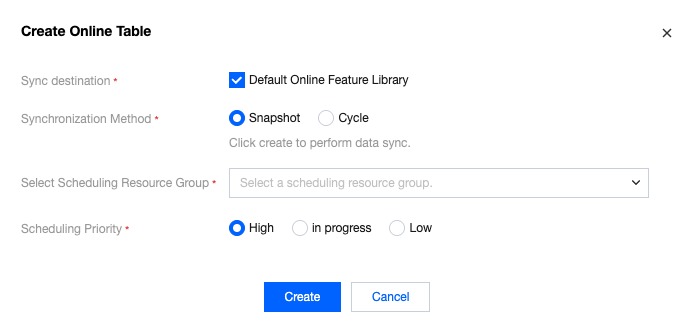

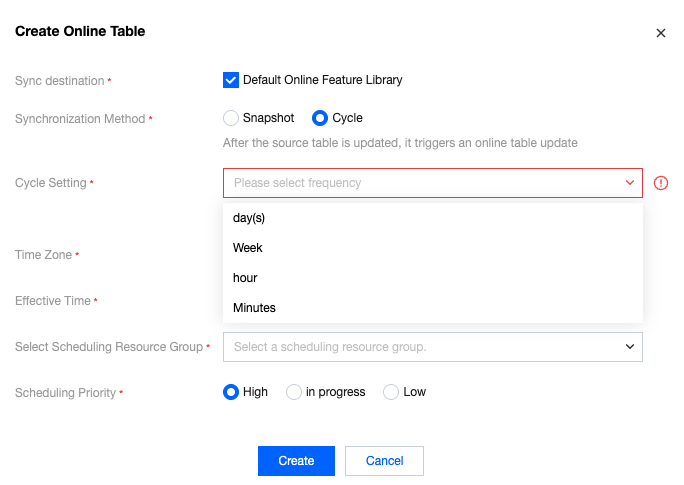

Creating Online Tables/Feature Synchronization

Click the operation item of an offline table to create an online table from it. This also creates a sync task from the offline table to the online table. Two sync methods are available: snapshot and periodic.

The snapshot method synchronizes features once. You can set compute resources, task priority, and other options.

The periodic method synchronizes features on a schedule. You can choose a daily, weekly, hourly, or minute-level cycle and configure the time interval, compute resources, task priority, and other options.
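When you create a periodic sync through the API, the publish_table example in the API section sets `TaskSchedulerConfiguration.CrontabExpression` to `"0 0/5 * * * ?"`. This is a six-field, Quartz-style expression with seconds first, meaning every 5 minutes. The hypothetical helper below shows how minute, hourly, daily, and weekly cycles map to expressions of that shape. It assumes WeData accepts standard Quartz syntax beyond the one expression documented here, so verify this in your environment.

```python
def quartz_cron(unit: str, interval: int = 1, hour: int = 0, minute: int = 0, weekday: str = "MON") -> str:
    """Build a six-field Quartz-style cron expression (hypothetical helper).

    Field order: second minute hour day-of-month month day-of-week.
    Quartz requires "?" in one of the two day fields.
    """
    if interval < 1:
        raise ValueError("interval must be at least 1")
    if unit == "minute":
        return f"0 0/{interval} * * * ?"          # every N minutes
    if unit == "hour":
        return f"0 {minute} 0/{interval} * * ?"   # every N hours at the given minute
    if unit == "day":
        return f"0 {minute} {hour} 1/{interval} * ?"  # every N days at hour:minute
    if unit == "week":
        if interval != 1:
            raise ValueError("a plain cron expression cannot express multi-week intervals")
        return f"0 {minute} {hour} ? * {weekday}"  # weekly on the given day
    raise ValueError(f"unsupported unit: {unit!r}")


# Usage, mirroring the publish_table example:
# cycle_obj.CrontabExpression = quartz_cron("minute", 5)   # "0 0/5 * * * ?"
```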

Viewing Feature Table Details

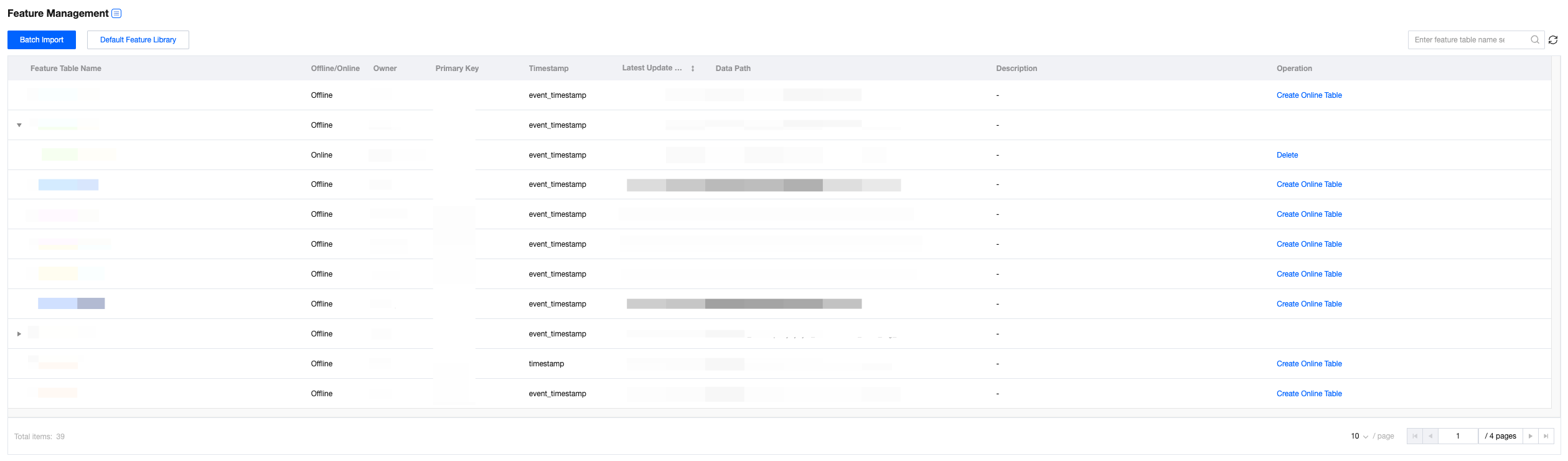

The feature management page lets you browse the metadata of offline and online feature tables.

The list page shows each feature table's name, online/offline status, owner, primary key, timestamp key, last modified time, data address, description, and available operations.

Online feature table information is collapsed in the list by default.

If an offline table has no online feature table yet, an operation item is provided to create one.

Once an online feature table exists, that operation item is no longer shown. Click the triangle to the left of the table name to expand the online feature table's information.

Each offline feature table can have at most one online feature table.

To delete an offline feature table, first delete its online feature table. Until you do, the delete button is not shown.

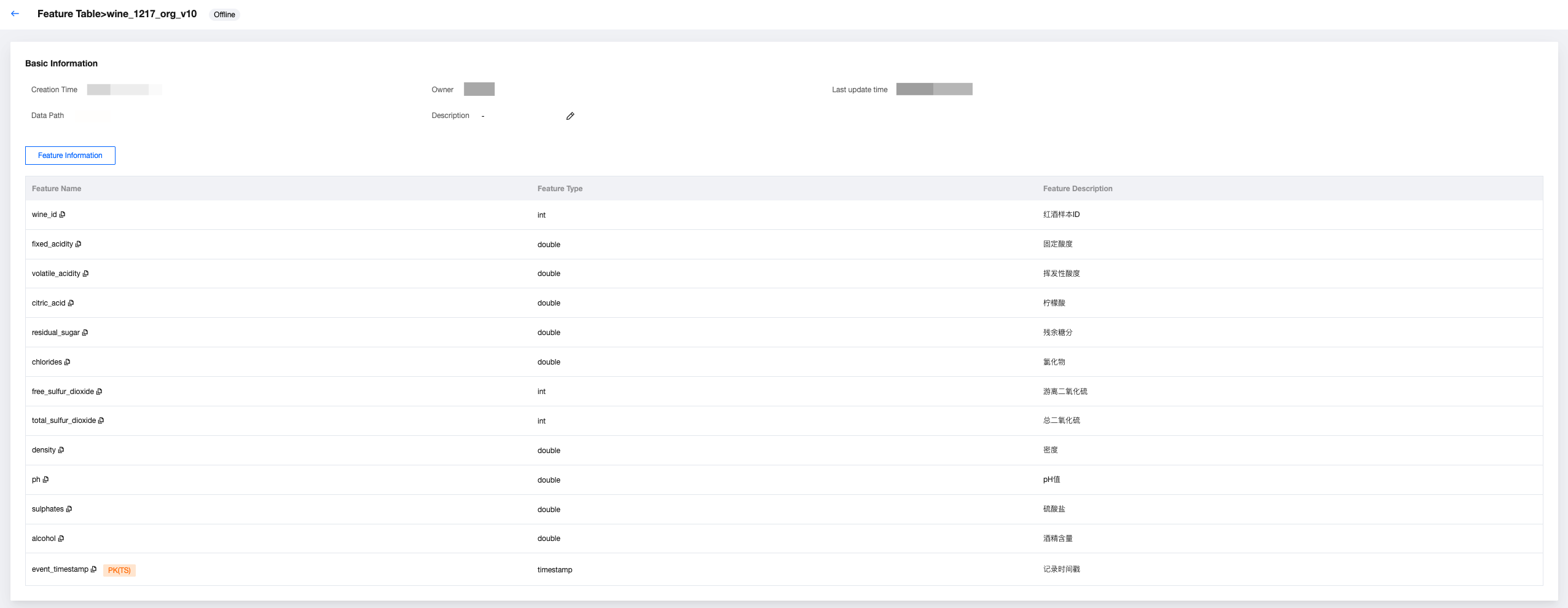
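The two list-page rules can be modeled as a small state machine: an offline table has at most one online table, and it can be deleted only after its online table is gone. `FeatureTableRegistry` is a hypothetical in-memory sketch that illustrates the rules. It is not a WeData API and does not touch any real tables.

```python
from typing import Dict, Optional


class FeatureTableRegistry:
    """Hypothetical in-memory model of the list-page rules (not a WeData API)."""

    def __init__(self) -> None:
        # offline table name -> name of its online table (None if not created yet)
        self._online: Dict[str, Optional[str]] = {}

    def add_offline(self, offline: str) -> None:
        self._online.setdefault(offline, None)

    def create_online(self, offline: str, online: str) -> None:
        if offline not in self._online:
            raise KeyError(f"unknown offline table: {offline}")
        if self._online[offline] is not None:
            # Rule 1: one online table per offline table
            raise ValueError(f"{offline} already has an online table")
        self._online[offline] = online

    def drop_online(self, offline: str) -> None:
        if offline not in self._online:
            raise KeyError(f"unknown offline table: {offline}")
        self._online[offline] = None

    def can_delete_offline(self, offline: str) -> bool:
        # Rule 2: the delete button appears only when no online table exists
        return offline in self._online and self._online[offline] is None

    def drop_offline(self, offline: str) -> None:
        if offline not in self._online:
            raise KeyError(f"unknown offline table: {offline}")
        if not self.can_delete_offline(offline):
            raise ValueError(f"delete the online table of {offline} first")
        del self._online[offline]
```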

Offline Feature Table Detail

Click an offline feature table to open its details page. The page shows the creation time, owner, last modified time, data address, and description, with an Offline tag at the top.

The page also lists feature information (feature name, field type, and feature description) and marks the primary key and timestamp key.

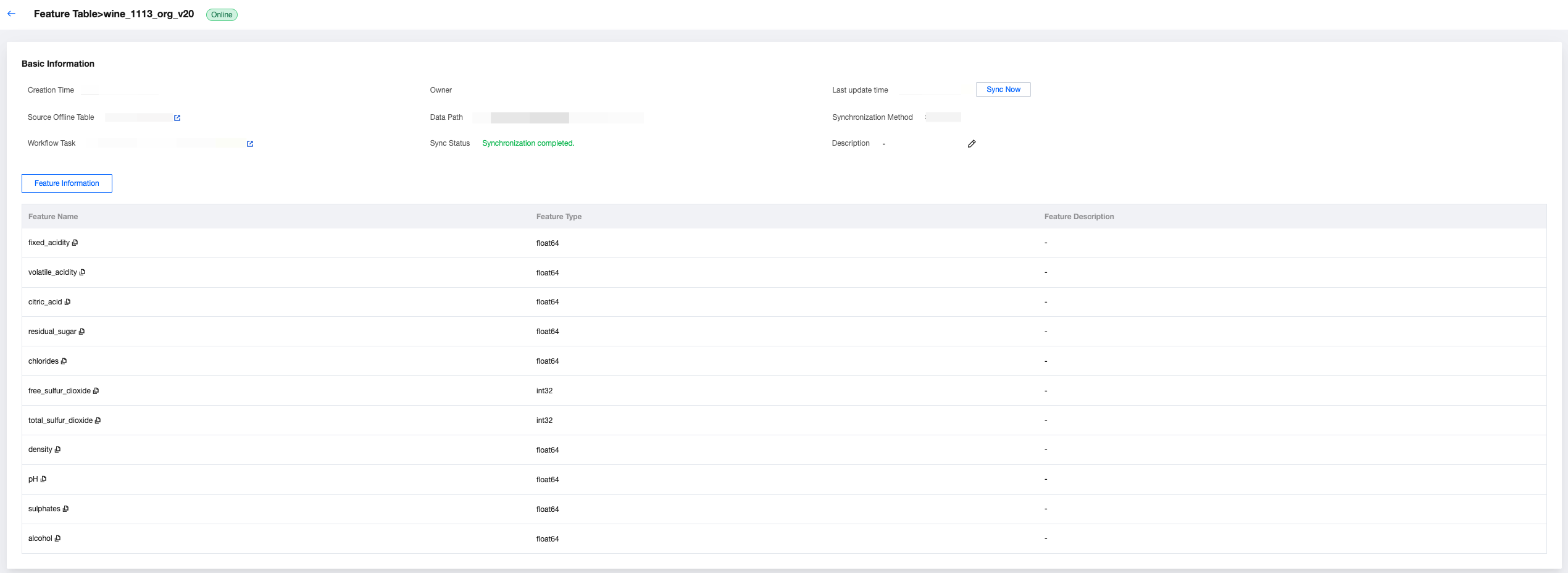

Online Feature Table Detail

Click an online feature table to open its details page. The page shows the creation time, owner, sync time (with a Sync Now option), and source offline table (click to jump to that table's page). It also shows the data address, sync method, workflow task (click to jump to the feature sync workflow), sync status, and description.

The page also lists feature information (feature name, field type, and feature description) and marks the primary key and timestamp key.

Feature Engineering API

FeatureStoreClient is the unified client for WeData feature storage. It provides full lifecycle management of features: creating and registering feature tables, reading and writing feature data, creating training sets, logging models, and more.

Note:

Calling the feature engineering package requires a TencentCloud API SecretId and SecretKey. You can create them in Tencent Cloud CAM. If your organization prohibits transmitting keys in plain text, manage them with Tencent Cloud SSM or Tencent Cloud KMS.

When Catalog is enabled, some APIs require the catalog_name parameter. See each API's description below.
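The client takes the SecretId and SecretKey as plain arguments. One common way to keep them out of notebook source is to read them from environment variables. The sketch below assumes the hypothetical variable names `WEDATA_CLOUD_SECRET_ID` and `WEDATA_CLOUD_SECRET_KEY`. It does not call SSM or KMS. How those variables get populated in your environment is outside its scope.

```python
import os
from typing import Tuple

# Hypothetical variable names; use whatever names your environment actually sets.
SECRET_ID_VAR = "WEDATA_CLOUD_SECRET_ID"
SECRET_KEY_VAR = "WEDATA_CLOUD_SECRET_KEY"


def load_cloud_credentials(
    id_var: str = SECRET_ID_VAR, key_var: str = SECRET_KEY_VAR
) -> Tuple[str, str]:
    """Return (secret_id, secret_key) read from environment variables.

    Raises RuntimeError naming every variable that is unset or empty. The
    message lists variable names only, never the secret values.
    """
    values = {name: os.environ.get(name, "") for name in (id_var, key_var)}
    missing = [name for name, value in values.items() if not value]
    if missing:
        raise RuntimeError("Missing credential environment variable(s): " + ", ".join(missing))
    return values[id_var], values[key_var]


# Usage in a Studio notebook:
# cloud_secret_id, cloud_secret_key = load_cloud_credentials()
# client = FeatureStoreClient(spark, cloud_secret_id=cloud_secret_id, cloud_secret_key=cloud_secret_key)
```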

Before use, install the latest feature engineering package in the Studio runtime environment:

%pip install tencent-wedata-feature-engineering

If you use the DLC engine, select the "wedata-data-science" image when creating the resource group. The feature engineering package is pre-installed there and needs no separate installation. Run the following command to check the version:

%pip list | grep tencent-wedata-feature-engineering

You can check the latest version at: https://pypi.org/project/tencent-wedata-feature-engineering/#history

Initializing the Feature Engineering Client

init

The init API can be used to initialize a FeatureStoreClient instance.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| spark | No | Optional[SparkSession] | An initialized SparkSession object; created automatically if not provided | SparkSession.builder.getOrCreate() |
| cloud_secret_id | Yes | str | TencentCloud API SecretId | "your_secret_id" |
| cloud_secret_key | Yes | str | TencentCloud API SecretKey | "your_secret_key" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| client | FeatureStoreClient | The FeatureStoreClient instance |

Call Example

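    from pyspark.sql import SparkSession
    from wedata.feature_store.client import FeatureStoreClient

    # Reuse the notebook's SparkSession, or create one
    spark = SparkSession.builder.getOrCreate()

    # Create the FeatureStoreClient instance
    # (cloud_secret_id / cloud_secret_key hold your TencentCloud API SecretId and SecretKey)
    client = FeatureStoreClient(spark, cloud_secret_id=cloud_secret_id, cloud_secret_key=cloud_secret_key)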

Error Code

No specific error code. If the SparkSession cannot be created, the related exception is raised.

Offline Feature Table Operation

create_table

Create a feature table (supports batch and stream data ingestion).

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Full table name (format: <table>) | "user_features" |
| primary_keys | Yes | Union[str, List[str]] | Primary key column name(s); composite primary keys are supported | "user_id" or ["user_id", "session_id"] |
| timestamp_key | Yes | str | Timestamp key (used for time-series features) | "timestamp" |
| engine_type | Yes | wedata.feature_store.constants.engine_types.EngineTypes | Engine type: EngineTypes.HIVE_ENGINE (Hive engine) or EngineTypes.ICEBERG_ENGINE (Iceberg engine) | EngineTypes.HIVE_ENGINE |
| data_source_name | Yes | str | Data source name | "hive_datasource" |
| database_name | No | Optional[str] | Database name | "feature_db" |
| df | No | Optional[DataFrame] | Initial data (used to infer the schema) | spark.createDataFrame([...]) |
| partition_columns | No | Union[str, List[str], None] | Partition column(s), used to optimize storage and queries | "date" or ["date", "region"] |
| schema | No | Optional[StructType] | Table schema (required when df is not provided) | StructType([...]) |
| description | No | Optional[str] | Business description | "User feature table" |
| tags | No | Optional[Dict[str, str]] | Business tags | {"domain": "user", "version": "v1"} |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| feature_table | FeatureTable | FeatureTable object containing the table metadata |

Call Example

    from wedata.feature_store.constants.engine_types import EngineTypes

    # Create a feature table -- EMR on Hive
    feature_table = client.create_table(
        name=table_name,                      # table name
        primary_keys=["wine_id"],             # primary key
        df=wine_features_df,                  # data
        engine_type=EngineTypes.HIVE_ENGINE,
        data_source_name=data_source_name,
        timestamp_key="event_timestamp",
        tags={"purpose": "demo", "create_by": "wedata"},  # business tags
    )

    # Create a feature table -- DLC on Iceberg (Catalog not enabled)
    feature_table = client.create_table(
        name=table_name,
        database_name=database_name,
        primary_keys=["wine_id"],
        df=wine_features_df,
        engine_type=EngineTypes.ICEBERG_ENGINE,
        data_source_name=data_source_name,
        timestamp_key="event_timestamp",
        tags={"purpose": "demo", "create_by": "wedata"},
    )

    # Create a feature table -- DLC on Iceberg (Catalog enabled)
    feature_table = client.create_table(
        name=table_name,
        primary_keys=["wine_id"],
        df=wine_features_df,
        engine_type=EngineTypes.ICEBERG_ENGINE,
        data_source_name=data_source_name,
        timestamp_key="event_timestamp",
        tags={"purpose": "demo", "create_by": "wedata"},
        catalog_name=catalog_name,
    )

Error Code

ValueError: Raised when the schema does not match the data.
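create_table raises ValueError when the schema and the data disagree. If you build feature rows in plain Python before converting them to a DataFrame, a pre-flight check such as the hypothetical `check_feature_rows` below can catch this class of problem earlier. The sketch also assumes that each (primary key, timestamp) pair should identify at most one row. That is typical for point-in-time feature tables, but this document does not state it.

```python
from typing import Any, Iterable, List, Mapping, Sequence, Set, Tuple, Union


def check_feature_rows(
    rows: Iterable[Mapping[str, Any]],
    schema_columns: Sequence[str],
    primary_keys: Union[str, Sequence[str]],
    timestamp_key: str,
) -> None:
    """Raise ValueError if rows do not fit the schema or repeat a (key, timestamp) pair."""
    keys: List[str] = [primary_keys] if isinstance(primary_keys, str) else list(primary_keys)
    expected = set(schema_columns)

    # The primary key and timestamp key must be part of the schema
    missing_keys = (set(keys) | {timestamp_key}) - expected
    if missing_keys:
        raise ValueError(f"schema lacks key column(s): {sorted(missing_keys)}")

    seen: Set[Tuple[Any, ...]] = set()
    for i, row in enumerate(rows):
        # Every row must carry exactly the schema's columns
        if set(row) != expected:
            raise ValueError(f"row {i} has columns {sorted(row)}, expected {sorted(expected)}")
        # Assumed uniqueness of (primary key..., timestamp)
        ident = tuple(row[k] for k in keys) + (row[timestamp_key],)
        if ident in seen:
            raise ValueError(f"row {i} repeats primary key and timestamp {ident}")
        seen.add(ident)
```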

register_table

Register an existing table as a feature table and return its data.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Table name | "user_features" |
| timestamp_key | Yes | str | Timestamp key (used by later online/offline feature synchronization) | "timestamp" |
| engine_type | Yes | wedata.feature_store.constants.engine_types.EngineTypes | Engine type: EngineTypes.HIVE_ENGINE (Hive engine) or EngineTypes.ICEBERG_ENGINE (Iceberg engine) | EngineTypes.HIVE_ENGINE |
| data_source_name | Yes | str | Data source name | "hive_datasource" |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| primary_keys | No | Union[str, List[str]] | Primary key column name(s); takes effect only when engine_type is EngineTypes.HIVE_ENGINE | "user_id" |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| dataframe | pyspark.DataFrame | DataFrame object containing the table data |

Call Example

    from wedata.feature_store.constants.engine_types import EngineTypes

    # Register a feature table -- EMR on Hive
    client.register_table(
        database_name=database_name,
        name=register_table_name,
        timestamp_key="event_timestamp",
        engine_type=EngineTypes.HIVE_ENGINE,
        data_source_name=data_source_name,
        primary_keys=["wine_id"],
    )

    # Register a feature table -- DLC on Iceberg (Catalog not enabled)
    # (primary_keys takes effect only with EngineTypes.HIVE_ENGINE)
    client.register_table(
        database_name=database_name,
        name=register_table_name,
        timestamp_key="event_timestamp",
        engine_type=EngineTypes.ICEBERG_ENGINE,
        data_source_name=data_source_name,
        primary_keys=["wine_id"],
    )

    # Register a feature table -- DLC on Iceberg (Catalog enabled)
    client.register_table(
        database_name=database_name,
        name=register_table_name,
        timestamp_key="event_timestamp",
        engine_type=EngineTypes.ICEBERG_ENGINE,
        data_source_name=data_source_name,
        primary_keys=["wine_id"],
        catalog_name=catalog_name,
    )

Error Code

No specific error code.

read_table

Read feature table data.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Table name | "user_features" |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| is_online | No | bool | Whether to read the online feature table (default: False) | True |
| online_config | No | Optional[RedisStoreConfig] | Online feature table configuration (takes effect only when is_online is True) | RedisStoreConfig(...) |
| entity_row | No | Optional[List[Dict[str, Any]]] | Primary key values of the entities to read, one dict per entity (takes effect only when is_online is True) | [{"user_id": "123"}, {"user_id": "456"}] |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| dataframe | pyspark.DataFrame | DataFrame object containing the table data |

Call Example

    from wedata.feature_store.common.store_config.redis import RedisStoreConfig

    # Read an offline feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    get_df = client.read_table(name=table_name, database_name=database_name)
    get_df.show(30)

    # Read an online feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    online_config = RedisStoreConfig(host=redis_host, port=redis_port, db=redis_db, password=redis_password)
    primary_keys_rows = [{"wine_id": 1}, {"wine_id": 3}]  # primary key values to look up
    result = client.read_table(
        name=table_name,
        database_name=database_name,
        is_online=True,
        online_config=online_config,
        entity_row=primary_keys_rows,
    )
    result.show()

    # Read an offline feature table -- DLC on Iceberg (Catalog enabled)
    get_df = client.read_table(name=table_name, database_name=database_name, catalog_name=catalog_name)
    get_df.show(30)

    # Read an online feature table -- DLC on Iceberg (Catalog enabled)
    primary_keys_rows = [{"wine_id": 1}, {"wine_id": 3}]
    result = client.read_table(
        name=table_name,
        database_name=database_name,
        is_online=True,
        online_config=online_config,
        entity_row=primary_keys_rows,
        catalog_name=catalog_name,
    )
    result.show()

Error Code

No specific error code.
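entity_row lists the primary key values to fetch, one dict per entity, as in `[{"wine_id": 1}, {"wine_id": 3}]`. Conceptually, an online read is a key-value lookup by primary key. The sketch below uses a Python dict in place of Redis. The key layout, the hypothetical `lookup_online` helper, and returning None for absent keys are illustrative assumptions. They do not describe how the WeData package stores or returns data; read_table itself returns a DataFrame.

```python
from typing import Any, Dict, List, Optional, Sequence, Tuple

# Stand-in for the online store: primary-key tuple -> stored feature values
OnlineStore = Dict[Tuple[Any, ...], Dict[str, Any]]


def lookup_online(
    store: OnlineStore,
    entity_rows: Sequence[Dict[str, Any]],
    primary_keys: Sequence[str],
) -> List[Optional[Dict[str, Any]]]:
    """Return one result per entity row, in order; None when the key is absent."""
    results: List[Optional[Dict[str, Any]]] = []
    for i, entity in enumerate(entity_rows):
        missing = [k for k in primary_keys if k not in entity]
        if missing:
            raise KeyError(f"entity row {i} lacks primary key column(s): {missing}")
        # Build the lookup key in primary_keys order and fetch it
        results.append(store.get(tuple(entity[k] for k in primary_keys)))
    return results
```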

get_table

Retrieve the metadata of a feature table.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Table name | "user_features" |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| feature_table | FeatureTable | FeatureTable object containing the table metadata |

Call Example

    # Get a feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    feature_table = client.get_table(name=table_name, database_name=database_name)

    # Get a feature table -- DLC on Iceberg (Catalog enabled)
    feature_table = client.get_table(name=table_name, database_name=database_name, catalog_name=catalog_name)

Error Code

No specific error code.

drop_table

Delete a feature table.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Name of the feature table to delete | "user_features" |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| N/A | None | No return value |

Call Example

    # Delete a feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    client.drop_table(table_name)

    # Delete a feature table -- DLC on Iceberg (Catalog enabled)
    client.drop_table(table_name, catalog_name="DataLakeCatalog")

Error Code

No specific error code.

write_table

Write data to a feature table (supports batch and stream processing).

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| name | Yes | str | Full table name (format: <table>) | "user_features" |
| df | No | Optional[DataFrame] | DataFrame to write | spark.createDataFrame([...]) |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| mode | No | Optional[str] | Write mode (default: APPEND) | "overwrite" |
| checkpoint_location | No | Optional[str] | Checkpoint location for streaming writes | "/checkpoints/user_features" |
| trigger | No | Dict[str, Any] | Streaming trigger configuration | {"processingTime": "10 seconds"} |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| streaming_query | Optional[StreamingQuery] | A StreamingQuery object for streaming writes; None for batch writes |

Call Example

    from wedata.feature_store.constants.constants import APPEND

    # Batch write -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    client.write_table(
        name="user_features",
        df=user_data_df,
        database_name="feature_db",
        mode=APPEND,  # append mode (the default)
    )

    # Streaming write -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    streaming_query = client.write_table(
        name="user_features",
        df=streaming_df,
        database_name="feature_db",
        checkpoint_location="/checkpoints/user_features",
        trigger={"processingTime": "10 seconds"},
    )

    # Batch write -- DLC on Iceberg (Catalog enabled)
    client.write_table(
        name="user_features",
        df=user_data_df,
        database_name="feature_db",
        mode=APPEND,
        catalog_name=catalog_name,
    )

    # Streaming write -- DLC on Iceberg (Catalog enabled)
    streaming_query = client.write_table(
        name="user_features",
        df=streaming_df,
        database_name="feature_db",
        checkpoint_location="/checkpoints/user_features",
        trigger={"processingTime": "10 seconds"},
        catalog_name=catalog_name,
    )

Error Code

No specific error code.

Offline Feature Consumption

Feature Lookup

Define which features to look up in a feature table and how to join them to the training data.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| table_name | Yes | str | Feature table name | "user_features" |
| lookup_key | Yes | Union[str, List[str]] | Key used to join the feature table with the training set, usually the primary key | "user_id" or ["user_id", "session_id"] |
| is_online | No | bool | Whether to read the online feature table (default: False) | True |
| online_config | No | Optional[RedisStoreConfig] | Online feature table configuration (takes effect only when is_online is True) | RedisStoreConfig(...) |
| feature_names | No | Union[str, List[str], None] | Names of the features to look up in the feature table | ["age", "gender", "preferences"] |
| rename_outputs | No | Optional[Dict[str, str]] | Mapping used to rename output features | {"age": "user_age"} |
| timestamp_lookup_key | No | Optional[str] | Timestamp key used for point-in-time lookup | "event_timestamp" |
| lookback_window | No | Optional[datetime.timedelta] | Lookback window for point-in-time lookup | datetime.timedelta(days=1) |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| FeatureLookup object | FeatureLookup | The constructed FeatureLookup instance |

Call Example

    from wedata.feature_store.entities.feature_lookup import FeatureLookup

    # Feature lookup -- EMR on Hive & DLC on Iceberg (Catalog not enabled or enabled)
    wine_feature_lookup = FeatureLookup(
        table_name=table_name,
        lookup_key="wine_id",
        timestamp_lookup_key="event_timestamp",
    )

Error Code

No specific error code.
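timestamp_lookup_key and lookback_window control point-in-time lookup. This document does not confirm the exact semantics. A common interpretation, assumed here, works as follows. For each training row, take the most recent feature row with the same lookup key whose timestamp is not later than the row's timestamp. Ignore feature rows older than the lookback window. The pure-Python sketch below (`point_in_time_lookup` is a hypothetical name) also applies feature_names and rename_outputs. Its boundary handling, inclusive at both ends, is likewise an assumption.

```python
from datetime import datetime, timedelta
from typing import Any, Dict, List, Optional, Sequence


def point_in_time_lookup(
    rows: Sequence[Dict[str, Any]],
    feature_rows: Sequence[Dict[str, Any]],
    lookup_key: str,
    timestamp_lookup_key: str,
    feature_timestamp_key: str,
    feature_names: Sequence[str],
    lookback_window: Optional[timedelta] = None,
    rename_outputs: Optional[Dict[str, str]] = None,
) -> List[Dict[str, Any]]:
    """Attach to each row the latest feature values known at the row's timestamp."""
    rename = rename_outputs or {}
    joined_rows = []
    for row in rows:
        as_of: datetime = row[timestamp_lookup_key]
        best: Optional[Dict[str, Any]] = None
        for feat in feature_rows:
            if feat[lookup_key] != row[lookup_key]:
                continue
            ts: datetime = feat[feature_timestamp_key]
            if ts > as_of:
                continue  # never use values recorded after the row's timestamp
            if lookback_window is not None and as_of - ts > lookback_window:
                continue  # older than the lookback window allows
            if best is None or ts > best[feature_timestamp_key]:
                best = feat
        joined = dict(row)
        for name in feature_names:
            # None when no feature row qualifies
            joined[rename.get(name, name)] = None if best is None else best[name]
        joined_rows.append(joined)
    return joined_rows
```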

create_training_set

Create a training set by joining the input DataFrame with the features defined in feature_lookups.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| df | No | Optional[DataFrame] | Base DataFrame containing the lookup keys and labels | spark.createDataFrame([...]) |
| feature_lookups | Yes | List[Union[FeatureLookup, FeatureFunction]] | List of feature lookups | [FeatureLookup(...), FeatureFunction(...)] |
| label | Yes | Union[str, List[str], None] | Label column name(s) | "is_churn" or ["label1", "label2"] |
| exclude_columns | No | Optional[List[str]] | Columns to exclude from the training set | ["user_id", "timestamp"] |
| database_name | No | Optional[str] | Feature library name | "feature_db" |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| training_set | TrainingSet | The constructed TrainingSet instance |

Call Example

    from wedata.feature_store.entities.feature_lookup import FeatureLookup
    from wedata.feature_store.entities.feature_function import FeatureFunction  # needed only for FeatureFunction lookups

    # Define the feature lookup
    wine_feature_lookup = FeatureLookup(
        table_name=table_name,
        lookup_key="wine_id",
        timestamp_lookup_key="event_timestamp",
    )

    # Prepare the base data: lookup key, label, and timestamp
    inference_data_df = wine_df.select("wine_id", "quality", "event_timestamp")

    # Create a training set -- EMR on Hive & DLC on Iceberg (Catalog not enabled)
    training_set = client.create_training_set(
        df=inference_data_df,                            # base data
        feature_lookups=[wine_feature_lookup],           # feature lookup configuration
        label="quality",                                 # label column
        exclude_columns=["wine_id", "event_timestamp"],  # drop columns not used for training
    )

    # Create a training set -- DLC on Iceberg (Catalog enabled)
    training_set = client.create_training_set(
        df=inference_data_df,
        feature_lookups=[wine_feature_lookup],
        label="quality",
        exclude_columns=["wine_id", "event_timestamp"],
        database_name=database_name,
        catalog_name=catalog_name,
    )

    # Load the final training DataFrame
    training_df = training_set.load_df()

    # Print the training set
    print("\n=== Training set data ===")
    training_df.show(10, True)

Error Code

ValueError: Raised when a FeatureLookup does not specify table_name.
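The column bookkeeping of create_training_set can be sketched as follows. Start from the base DataFrame, add the looked-up features, keep the label column, and drop exclude_columns last. Dropping last is why the example can exclude wine_id after using it as the lookup key, and why the log_model example still finds quality in training_df. The hypothetical helper below works on lists of dicts and uses an exact key match instead of a point-in-time join. It is not the package's implementation. Rejecting an excluded label column is an added assumption.

```python
from typing import Any, Dict, List, Sequence, Union


def assemble_training_rows(
    base_rows: Sequence[Dict[str, Any]],
    feature_rows: Sequence[Dict[str, Any]],
    lookup_key: str,
    feature_names: Sequence[str],
    label: Union[str, Sequence[str]],
    exclude_columns: Sequence[str] = (),
) -> List[Dict[str, Any]]:
    """Join features onto base rows by exact key match, then drop excluded columns."""
    labels = [label] if isinstance(label, str) else list(label)
    excluded = set(exclude_columns)
    if excluded & set(labels):
        raise ValueError("label columns cannot be excluded")
    by_key = {row[lookup_key]: row for row in feature_rows}
    result = []
    for row in base_rows:
        absent = [name for name in labels if name not in row]
        if absent:
            raise KeyError(f"base row lacks label column(s): {absent}")
        joined = dict(row)
        matched = by_key.get(row[lookup_key], {})
        for name in feature_names:
            joined[name] = matched.get(name)  # None when no feature row matches
        # Exclusion happens after the join, so the lookup key can be dropped here
        result.append({k: v for k, v in joined.items() if k not in excluded})
    return result
```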

log_model

Log an MLflow model and associate it with its feature lookup information.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| model | Yes | Any | Model object to log | RandomForestClassifier() |
| artifact_path | Yes | str | Artifact path under which the model is stored | "churn_model" |
| flavor | Yes | ModuleType | MLflow flavor module for the model type | mlflow.sklearn |
| training_set | No | Optional[TrainingSet] | TrainingSet object used to train the model | training_set |
| registered_model_name | No | Optional[str] | Name under which to register the model (when Catalog is enabled, use the Catalog model name) | "churn_prediction_model" |
| model_registry_uri | No | Optional[str] | Model registry URI | "databricks://model-registry" |
| await_registration_for | No | int | Seconds to wait for model registration to complete (default: 300) | 600 |
| infer_input_example | No | bool | Whether to log an input example automatically (default: False) | True |

Output Parameter

N/A

Call Example

    import mlflow
    import mlflow.sklearn
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.model_selection import train_test_split

    # Set the MLflow tracking server first, so the experiment is created on that server
    # (replace the address with your own tracking server)
    mlflow.set_tracking_uri("http://30.22.36.75:5000")
    mlflow.set_experiment(experiment_name=experiment_name)

    # Convert the Spark DataFrame to a pandas DataFrame for training
    train_pd = training_df.toPandas()

    # Optionally drop the timestamp column
    # train_pd = train_pd.drop('event_timestamp', axis=1)

    # Prepare features and labels
    X = train_pd.drop('quality', axis=1)
    y = train_pd['quality']

    # Split into training and test sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

    # Convert datetime columns to Unix timestamps in seconds
    for col in X_train.select_dtypes(include=['datetime', 'datetimetz']):
        X_train[col] = X_train[col].astype('int64') // 10**9  # nanoseconds -> seconds

    # Fill missing values so no column is downgraded to object dtype
    X_train = X_train.fillna(X_train.median(numeric_only=True))

    # Initialize, train, and log the model -- EMR on Hive & DLC on Iceberg (Catalog not enabled or enabled)
    model = RandomForestClassifier(n_estimators=100, max_depth=3, random_state=42)
    model.fit(X_train, y_train)

    with mlflow.start_run():
        client.log_model(
            model=model,
            artifact_path="wine_quality_prediction",  # model artifact path
            flavor=mlflow.sklearn,
            training_set=training_set,
            registered_model_name=model_name,  # if Catalog is enabled, must be the Catalog model name
        )

Error Code

No specific error code.

score_batch

Run batch inference. The input DataFrame must contain the corresponding primary keys. If it lacks some features, those features are filled in automatically according to the feature_spec file recorded with the model.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| model_uri | Yes | str | MLflow model URI | "models:/churn_model/1" |
| df | Yes | DataFrame | DataFrame to run inference on | spark.createDataFrame([...]) |
| result_type | No | str | Return type of the model (default: "double") | "string" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| predictions | DataFrame | DataFrame containing the prediction results |

Call Example

    # Run batch inference -- EMR on Hive & DLC on Iceberg (Catalog not enabled or enabled)
    result = client.score_batch(model_uri=f"models:/{model_name}/1", df=wine_df)
    result.show()

Error Code

No specific error code.
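The feature-completion step in score_batch can be pictured in pure Python as follows. The feature_spec shape, the dict-based feature table, and the helper name `score_with_feature_completion` are hypothetical. The real feature_spec file is written by log_model, and score_batch works on Spark DataFrames. The sketch assumes that values already present in the input take precedence over stored ones, consistent with the description that only missing features are filled in.

```python
from typing import Any, Callable, Dict, List, Mapping, Sequence


def score_with_feature_completion(
    rows: Sequence[Mapping[str, Any]],
    feature_spec: Mapping[str, Any],
    feature_table: Mapping[Any, Mapping[str, Any]],
    predict: Callable[[Dict[str, Any]], Any],
) -> List[Any]:
    """Fill features missing from each row from the feature table, then predict.

    feature_spec (hypothetical shape): {"lookup_key": "wine_id", "features": ["alcohol", ...]}
    feature_table: lookup-key value -> stored feature values
    """
    key = feature_spec["lookup_key"]
    predictions = []
    for i, row in enumerate(rows):
        if key not in row:
            raise KeyError(f"row {i} lacks the primary key column {key!r}")
        stored = feature_table.get(row[key], {})
        features: Dict[str, Any] = {}
        for name in feature_spec["features"]:
            if name in row:
                features[name] = row[name]      # values supplied in the input win
            elif name in stored:
                features[name] = stored[name]   # completed from the feature table
            else:
                raise KeyError(f"row {i}: feature {name!r} is in neither the input nor the feature table")
        predictions.append(predict(features))
    return predictions
```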

Online Feature Table Operation

publish_table

Publish an offline feature table as an online feature table.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| table_name | Yes | str | Full name of the offline feature table (format: <table>) | "user_features" |
| data_source_name | Yes | str | Data source name | "hive_datasource" |
| database_name | No | Optional[str] | Database name | "feature_db" |
| is_cycle | No | bool | Whether to synchronize periodically (default: False) | True |
| cycle_obj | No | TaskSchedulerConfiguration | Periodic task configuration object | TaskSchedulerConfiguration(...) |
| is_use_default_online | No | bool | Whether to use the default online storage configuration (default: True) | False |
| online_config | No | RedisStoreConfig | Custom online storage configuration | RedisStoreConfig(...) |
| catalog_name | Required if Catalog is enabled; omit otherwise | str | Name of the catalog containing the feature table | "DataLakeCatalog" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| N/A | None | No return value |

Call Example

    from wedata.feature_store.common.store_config.redis import RedisStoreConfig
    from wedata.feature_store.cloud_sdk_client.models import TaskSchedulerConfiguration

    online_config = RedisStoreConfig(host=redis_host, port=redis_port, db=redis_db, password=redis_password)

    # Publish an offline feature table as an online feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled)

    # Case 1: Sync the offline table table_name from the specified offline data source
    # to the default online data source in snapshot mode.
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=False,
        is_use_default_online=True,
    )

    # Case 2: Sync to a specified (non-default) online data source in snapshot mode.
    # The IP address in online_config must match the login IP configured for that data source.
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=False,
        is_use_default_online=False,
        online_config=online_config,
    )

    # Case 3: Sync to the default online data source periodically, every 5 minutes.
    cycle_obj = TaskSchedulerConfiguration()
    cycle_obj.CrontabExpression = "0 0/5 * * * ?"
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=True,
        cycle_obj=cycle_obj,
        is_use_default_online=True,
    )

    # Publish an offline feature table as an online feature table -- DLC on Iceberg (Catalog enabled)

    # Case 1: Snapshot sync to the default online data source
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=False,
        is_use_default_online=True,
        catalog_name=catalog_name,
    )

    # Case 2: Snapshot sync to a specified (non-default) online data source.
    # The IP address in online_config must match the login IP configured for that data source.
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=False,
        is_use_default_online=False,
        online_config=online_config,
        catalog_name=catalog_name,
    )

    # Case 3: Periodic sync to the default online data source, every 5 minutes
    cycle_obj = TaskSchedulerConfiguration()
    cycle_obj.CrontabExpression = "0 0/5 * * * ?"
    result = client.publish_table(
        table_name=table_name,
        data_source_name=data_source_name,
        database_name=database_name,
        is_cycle=True,
        cycle_obj=cycle_obj,
        is_use_default_online=True,
        catalog_name=catalog_name,
    )

Error Code

No specific error code.
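An online table is read by primary key (see entity_row in read_table). A sync job therefore generally needs to collapse the offline history to one row per key. The sketch below assumes that the most recent row by timestamp key wins, with ties going to the first row seen. This document does not state the exact rule WeData's sync task applies, and `latest_per_key` is a hypothetical helper.

```python
from typing import Any, Dict, Iterable, Mapping, Sequence, Tuple, Union


def latest_per_key(
    rows: Iterable[Mapping[str, Any]],
    primary_keys: Union[str, Sequence[str]],
    timestamp_key: str,
) -> Dict[Tuple[Any, ...], Mapping[str, Any]]:
    """Keep only the most recent row for each primary-key value."""
    keys = [primary_keys] if isinstance(primary_keys, str) else list(primary_keys)
    latest: Dict[Tuple[Any, ...], Mapping[str, Any]] = {}
    for row in rows:
        ident = tuple(row[k] for k in keys)
        current = latest.get(ident)
        # Replace only when strictly newer, so the first of equal timestamps is kept
        if current is None or row[timestamp_key] > current[timestamp_key]:
            latest[ident] = row
    return latest
```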

drop_online_table

Delete an online feature table.

Input parameters

| Parameter Name | Required | Field Type | Field Description | Input Example |
| --- | --- | --- | --- | --- |
| table_name | Yes | str | Full name of the offline feature table (format: <table>) | "user_features" |
| online_config | No | RedisStoreConfig | Custom online storage configuration | RedisStoreConfig(...) |
| database_name | No | Optional[str] | Database name | "feature_db" |

Output Parameter

| Result Name | Field Type | Field Description |
| --- | --- | --- |
| N/A | None | No return value |

Call Example

    from wedata.feature_store.common.store_config.redis import RedisStoreConfig

    # Delete an online feature table -- EMR on Hive & DLC on Iceberg (Catalog not enabled or enabled)
    online_config = RedisStoreConfig(host=redis_host, port=redis_port, db=redis_db, password=redis_password)
    client.drop_online_table(table_name=table_name, database_name=database_name, online_config=online_config)

Error Code

No specific error code.
