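Collecting TKE K8s Cluster Logs

This document describes how to use the console to configure log collection rules for a Tencent Kubernetes Engine (TKE) K8s cluster and ship the logs to Tencent Cloud Log Service (CLS). To configure log shipping for a TKE K8s cluster with a CRD instead, see the guide on configuring log collection with CRDs in TKE scenarios.

Use Cases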

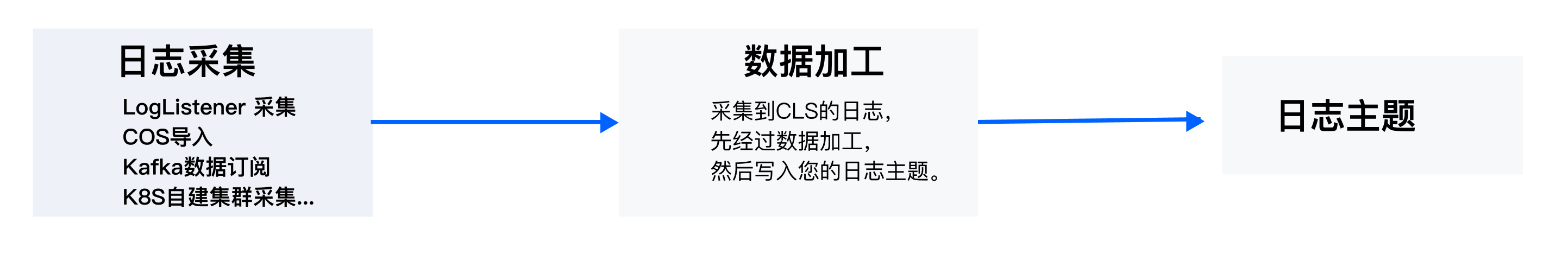

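The TKE K8s business log collection feature is a tool for collecting business logs from within Tencent Cloud TKE Kubernetes clusters. It can send a cluster's business logs, audit logs, and event logs to Tencent Cloud Log Service (CLS).

To use log collection, you must install the log collection component in the cluster and configure collection rules. Once installed, the log collection agent runs in the cluster as a DaemonSet. Based on the collection source, CLS log topic, and log parsing method that you configure in the collection rules, it collects logs from the source and sends their content to the log consumer. Follow the steps below to install and configure log collection.

Prerequisites

Collecting Cluster Business Logs

Step 1: Select a cluster

1. Log in to the Log Service console.

2. In the left navigation pane, click Container Cluster Management to open the Container Cluster Management page.

3. In the upper-right corner of the page, select TKE Cluster.

4. Select the region of the TKE cluster and locate the target cluster.

5. If the collection component status is Not Installed, click Install to install the log collection component.

Note:

Installing the log collection component deploys a tke-log-agent pod and a cls-provisioner pod as DaemonSets in the cluster's kube-system namespace. Reserve at least 0.1 core and 16 MiB of available resources on each node.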

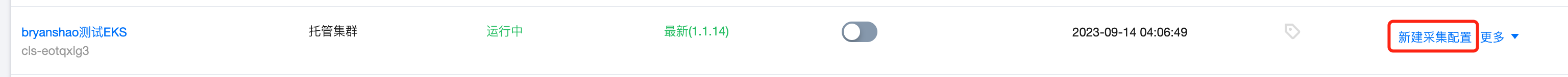

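6. If the collection component status is Latest, click Create Collection Configuration on the right to enter the cluster log collection configuration flow.

Step 2: Configure a log topic

Step 3: Configure collection rules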

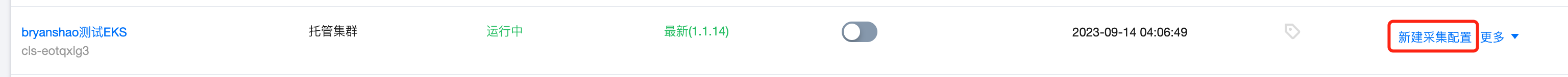

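After selecting a log topic, click Next to go to the collection configuration page and configure the collection rules. The settings are as follows:

Log source configuration:

Collection rule name: you can define a custom name for the log collection rule.

Collection type: the supported collection types are container standard output, container file path, and node file path.

For container standard output, the log source can be specified in three ways: all containers, specified workloads, or specified Pod Labels.

All containers: collects the standard output logs of all containers in the specified namespace, as shown in the figure below:

Specified workloads: collects the standard output logs of the specified containers in the specified workloads in the specified namespace, as shown in the figure below: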

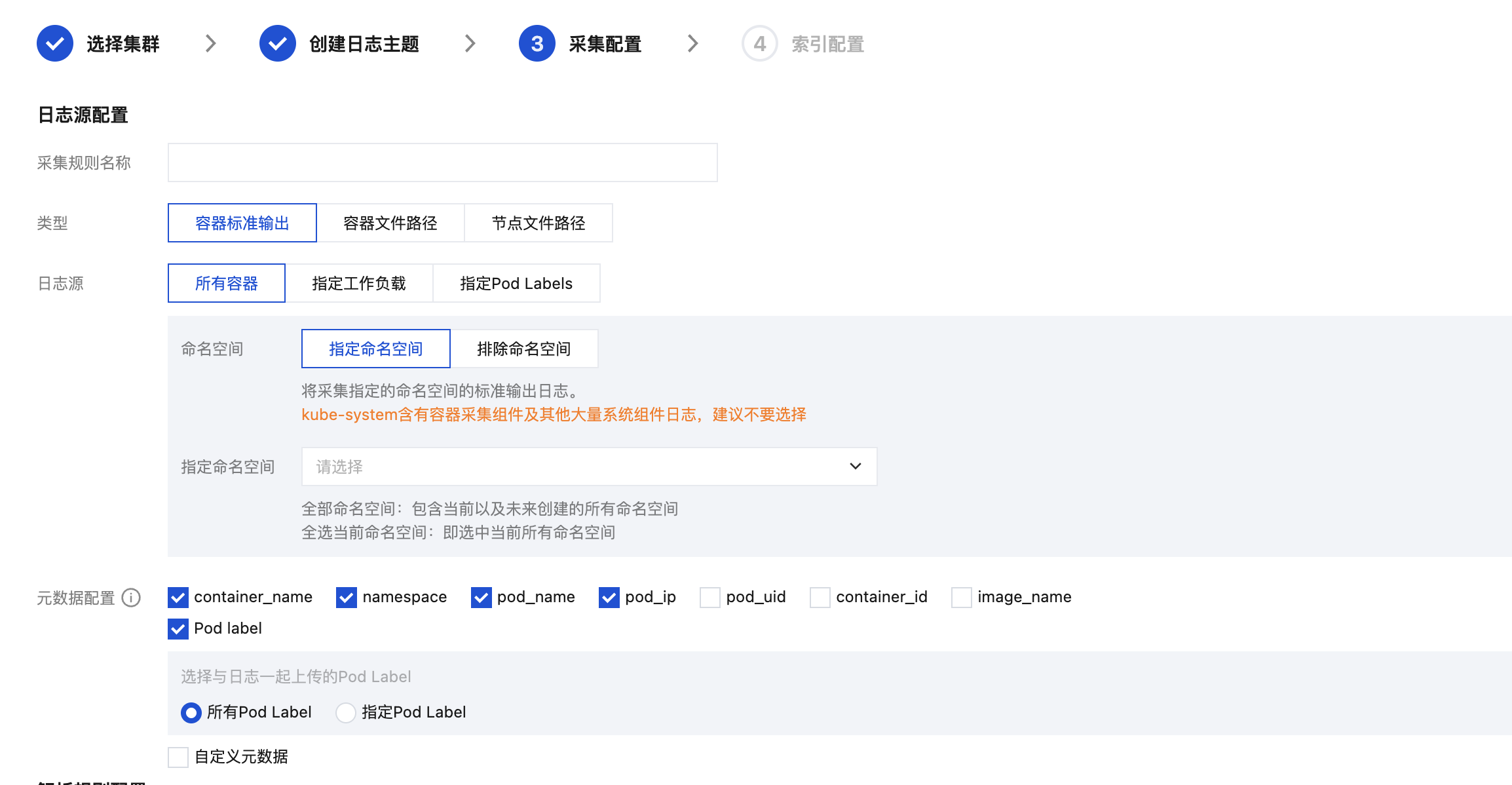

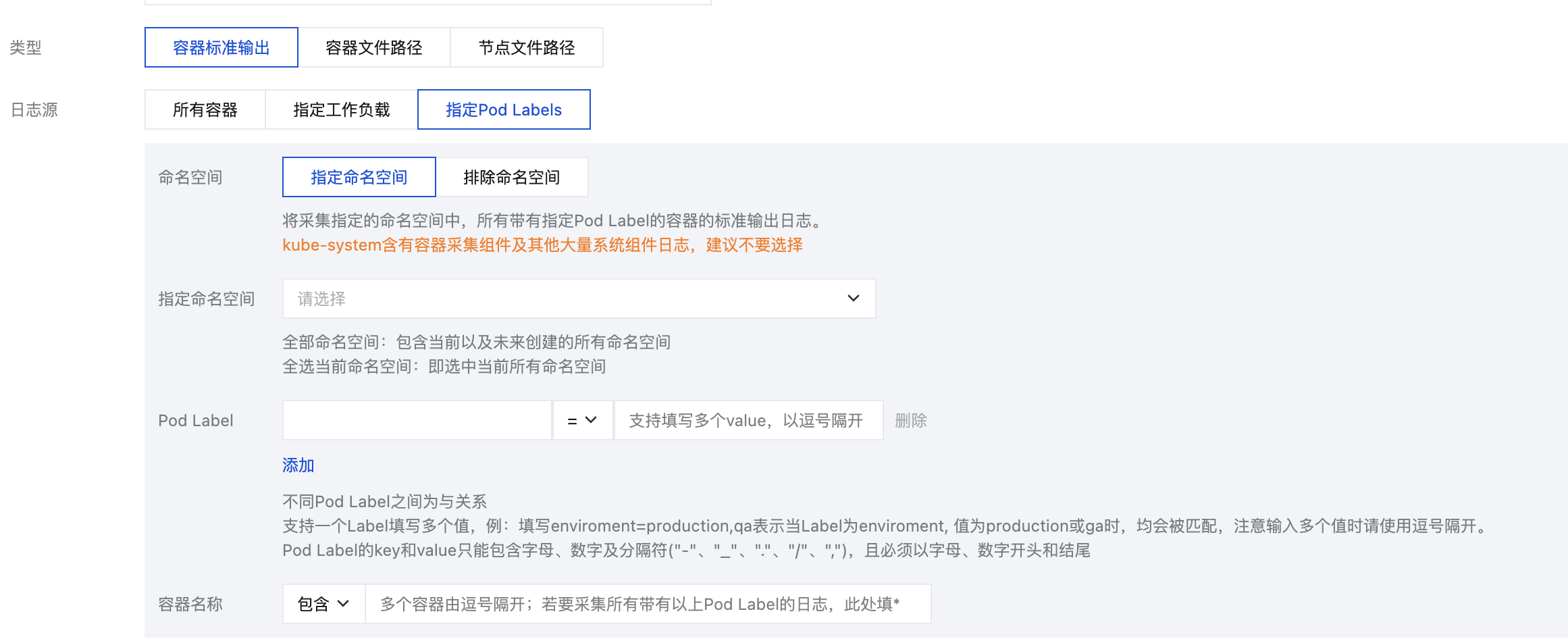

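Specified Pod Labels: collects the standard output logs of all containers that carry the specified Pod Labels in the specified namespace, as shown in the figure below:

Note:

A container file path must not be a symbolic link. Otherwise, the real path behind the link does not exist inside the collector's container, and log collection fails.

For container file paths, the log source can be specified in two ways: specified workloads or specified Pod Labels.

Specified workloads: collects the container file paths in the specified containers of the specified workloads in the specified namespace, as shown in the figure below:

Specified Pod Labels: collects the container file paths in all containers that carry the specified Pod Labels in the specified namespace, as shown in the figure below: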

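A container file path consists of a log directory and a file name. The log directory prefix starts with /, and the file name does not start with /. Both the prefix and the file name support the wildcards ? and *; commas are not supported. /**/ means that the log collection component monitors matching log files at all levels under the specified directory prefix. Multiple file paths are combined with OR. For example, to collect /opt/logs/*.log, specify /opt/logs as the directory prefix and *.log as the file name.

Note:

Only container collection component version 1.1.12 and later supports multiple collection paths.

Only collection configurations created after upgrading to version 1.1.12 or later can define multiple collection paths.

Collection configurations created on versions earlier than 1.1.12 do not support multiple collection paths after an upgrade to 1.1.12; the collection configuration must be recreated.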
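The path rules above can be sketched in Python as follows. This is a minimal illustration, not the collector's implementation: the helper names `glob_to_regex` and `path_matches` are hypothetical, and the wildcard semantics (`?` and `*` never cross a `/`, `/**/` spans zero or more directory levels) are assumptions based on the description above.

```python
import re

def glob_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a path pattern into a regex (assumed semantics, see lead-in)."""
    out, i = [], 0
    while i < len(pattern):
        if pattern.startswith("/**/", i):
            out.append("/(?:[^/]+/)*")   # zero or more directory levels
            i += 4
        elif pattern[i] == "*":
            out.append("[^/]*")          # any run of characters within one level
            i += 1
        elif pattern[i] == "?":
            out.append("[^/]")           # exactly one character within one level
            i += 1
        else:
            out.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(out))

def path_matches(path: str, rules: list) -> bool:
    """rules is a list of (directory_prefix, file_name) pairs combined with OR."""
    for prefix, name in rules:
        if "," in prefix or "," in name:
            raise ValueError("commas are not supported in paths")
        if not prefix.startswith("/") or name.startswith("/"):
            raise ValueError("prefix must start with '/', file name must not")
        full = prefix.rstrip("/") + "/" + name
        if glob_to_regex(full).fullmatch(path):
            return True
    return False

rules = [("/opt/logs", "*.log"), ("/var/log/**/", "app-?.txt")]
print(path_matches("/opt/logs/access.log", rules))
print(path_matches("/var/log/a/b/app-1.txt", rules))
```

Because the rules are combined with OR, a file is collected as soon as any one (prefix, file name) pair matches it.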

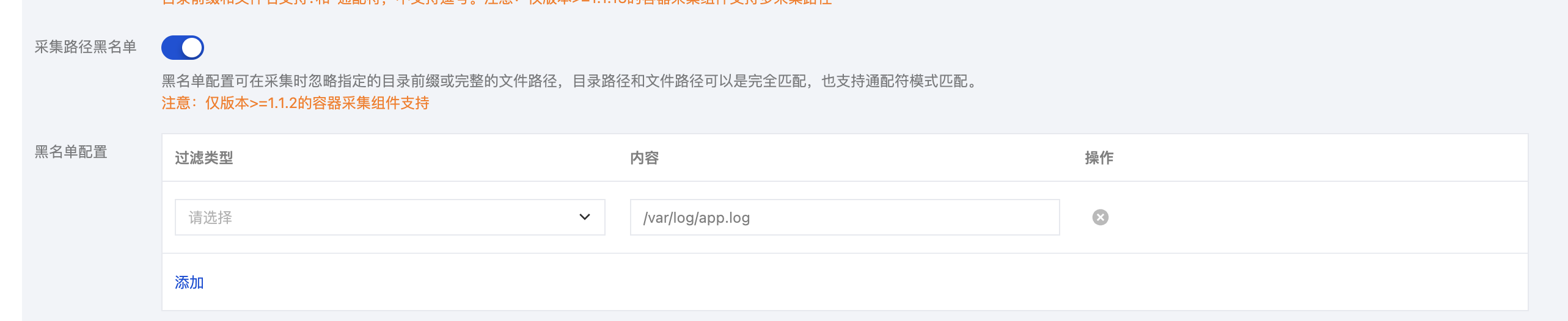

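Collection path blacklist: when enabled, the specified directory paths or complete file paths are ignored during collection. Directory paths and file paths can be exact matches or wildcard patterns.

The blacklist has two filter types, which can be used together:

File path: the complete path of a file under the collection path to ignore. Supports the wildcards * and ?, and supports ** for fuzzy path matching.

Directory path: the prefix of a directory under the collection path to ignore. Supports the wildcards * and ?, and supports ** for fuzzy path matching.

Note:

Requires container log collection component version 1.1.2 or later.

The blacklist excludes items within the collection path. In both file path mode and directory path mode, the specified path must therefore be a subset of the collection path.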
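The two blacklist filter types can be pictured with the short Python sketch below. It is an illustration under stated assumptions rather than the collector's actual logic: `to_regex` and `is_blacklisted` are hypothetical names, `*` and `?` are assumed not to cross a `/`, and `**` is assumed to match across directory levels.

```python
import re

def to_regex(pattern: str) -> str:
    """Build a regex source string from a blacklist pattern (assumed semantics)."""
    out, i = [], 0
    while i < len(pattern):
        if pattern.startswith("/**/", i):
            out.append("/(?:[^/]+/)*")   # zero or more directory levels
            i += 4
        elif pattern.startswith("**", i):
            out.append(".*")             # anything, including '/'
            i += 2
        elif pattern[i] == "*":
            out.append("[^/]*")
            i += 1
        elif pattern[i] == "?":
            out.append("[^/]")
            i += 1
        else:
            out.append(re.escape(pattern[i]))
            i += 1
    return "".join(out)

def is_blacklisted(path: str, file_patterns=(), dir_patterns=()) -> bool:
    """True if path matches a file-path entry or lies under a directory entry."""
    for pattern in file_patterns:
        if re.fullmatch(to_regex(pattern), path):
            return True
    for pattern in dir_patterns:
        # A directory entry excludes every file below that directory prefix.
        if re.fullmatch(to_regex(pattern.rstrip("/")) + "/.*", path):
            return True
    return False

candidates = [
    "/opt/logs/app.log",
    "/opt/logs/debug-1.log",
    "/opt/logs/tmp/x.log",
    "/opt/logs/svc/x.log",
]
kept = [
    p for p in candidates
    if not is_blacklisted(
        p,
        file_patterns=["/opt/logs/debug-?.log"],
        dir_patterns=["/opt/logs/tmp"],
    )
]
print(kept)
```

Both filter types are checked for every candidate file, which mirrors the statement that they can be used at the same time.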

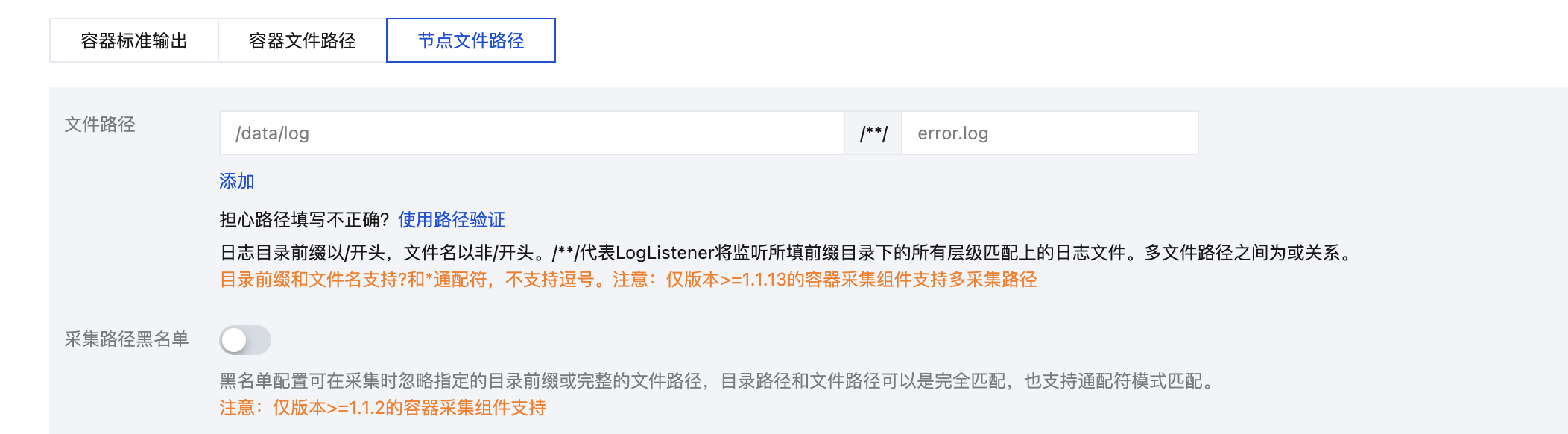

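A node file path consists of a log directory and a file name. The log directory prefix starts with /, and the file name does not start with /. Both the prefix and the file name support the wildcards ? and *; commas are not supported. /**/ means that the log collection component monitors matching log files at all levels under the specified directory prefix. Multiple file paths are combined with OR. For example, to collect /opt/logs/*.log, specify /opt/logs as the directory prefix and *.log as the file name.

Note:

Only collection component version 1.1.12 and later supports multiple collection paths.

Only collection configurations created after upgrading to version 1.1.12 or later can define multiple collection paths.

Collection configurations created on versions earlier than 1.1.12 do not support multiple collection paths after an upgrade to 1.1.12; the collection configuration must be recreated.

Collection path blacklist: when enabled, the specified directory paths or complete file paths are ignored during collection. Directory paths and file paths can be exact matches or wildcard patterns.

The blacklist has two filter types, which can be used together:

File path: the complete path of a file under the collection path to ignore. Supports the wildcards * and ?, and supports ** for fuzzy path matching.

Directory path: the prefix of a directory under the collection path to ignore. Supports the wildcards * and ?, and supports ** for fuzzy path matching.

Note:

Requires container log collection component version 1.1.2 or later.

The blacklist excludes items within the collection path. In both file path mode and directory path mode, the specified path must therefore be a subset of the collection path.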

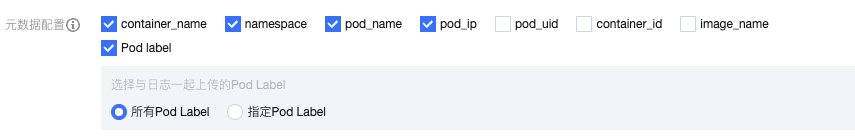

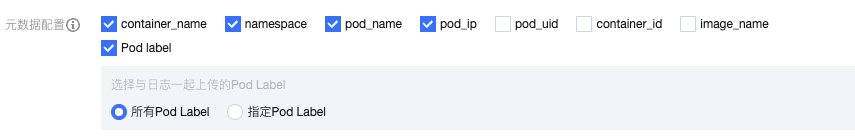

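Metadata configuration:

In addition to the raw log content, Log Service also reports container and Kubernetes metadata (for example, the ID of the container that produced the log) to CLS. This lets you trace a log back to its source, or search by container identifiers and attributes (for example, container name or labels). You can choose which metadata to report by selecting the corresponding options.

The container and Kubernetes metadata fields are listed in the table below:

| Field name | Description |
| --- | --- |
| container_id | ID of the container that produced the log. |
| container_name | Name of the container that produced the log. |
| image_name | Name of the image used by the container that produced the log. |
| namespace | Namespace of the pod that produced the log. |
| pod_uid | UID of the pod that produced the log. |
| pod_name | Name of the pod that produced the log. |
| pod_ip | IP address of the pod that produced the log. |
| pod_label_{label name} | Labels of the pod that produced the log. For example, if a pod has two labels, app=nginx and env=prod, the uploaded log carries two metadata entries: pod_label_app:nginx and pod_label_env:prod. |

Description:

To collect only some pod labels, enter the label keys you want manually (you can enter several; press Enter after each one). Labels whose keys match are collected.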
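The label-to-metadata mapping described in the table can be sketched in a few lines of Python. The function name `pod_label_metadata` and its `selected_keys` parameter are hypothetical and only illustrate the naming rule and the optional key selection; they are not part of any CLS API.

```python
from typing import Dict, Iterable, Optional

def pod_label_metadata(
    pod_labels: Dict[str, str],
    selected_keys: Optional[Iterable[str]] = None,
) -> Dict[str, str]:
    """Turn pod labels into pod_label_{label name} metadata entries.

    If selected_keys is given, only labels whose key is listed are kept.
    """
    wanted = None if selected_keys is None else set(selected_keys)
    metadata = {}
    for key, value in pod_labels.items():
        if wanted is not None and key not in wanted:
            continue
        metadata[f"pod_label_{key}"] = value
    return metadata

labels = {"app": "nginx", "env": "prod"}
print(pod_label_metadata(labels))
print(pod_label_metadata(labels, selected_keys=["app"]))
```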

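Parsing rule configuration:

Collection policy: choose full or incremental.

Full: collection starts from the beginning of the log file.

Incremental: only content newly appended to the file is collected.

Encoding mode: UTF-8 and GBK are supported.

Extraction mode: several extraction modes are supported, as described below.

A single-line full-text log is one where each line is a complete log entry. During collection, Log Service uses the line break \n as the end-of-entry marker. For uniform structured management, every log entry has a default key, __CONTENT__, but the log data itself is not structured further and no log fields are extracted. The time attribute of the log is the collection time.

Suppose a raw log entry is: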

Tue Jan 22 12:08:15 CST 2019 Installed: libjpeg-turbo-static-1.2.90-6.el7.x86_64

The data collected to Log Service is:

__CONTENT__:Tue Jan 22 12:08:15 CST 2019 Installed: libjpeg-turbo-static-1.2.90-6.el7.x86_64

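A multi-line full-text log is one where a complete log entry may span multiple lines (for example, a Java stack trace). In this case the line break \n is not a suitable end-of-entry marker. So that the log system can tell entries apart, first-line regular expression matching is used: a line that matches the preset regular expression is treated as the start of a log entry, and the entry ends where the next matching first line appears.

Multi-line full-text mode also sets a default key, __CONTENT__, but the log data itself is not structured further and no log fields are extracted. The time attribute of the log is the collection time.

Suppose a raw multi-line log entry is:

2019-12-15 17:13:06,043 [main] ERROR com.test.logging.FooFactory:
java.lang.NullPointerException
    at com.test.logging.FooFactory.createFoo(FooFactory.java:15)
    at com.test.logging.FooFactoryTest.test(FooFactoryTest.java:11)

The first-line regular expression is:

\d{4}-\d{2}-\d{2}\s\d{2}:\d{2}:\d{2},\d{3}\s.+

The data collected to Log Service is:

__CONTENT__:2019-12-15 17:13:06,043 [main] ERROR com.test.logging.FooFactory:\njava.lang.NullPointerException\n at com.test.logging.FooFactory.createFoo(FooFactory.java:15)\n at com.test.logging.FooFactoryTest.test(FooFactoryTest.java:11)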
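The first-line matching described above can be sketched in Python as follows. This is a simplified model, not LogListener's implementation: the function name `group_multiline` is hypothetical, and lines that appear before any matching first line are simply attached to the first entry (an assumption).

```python
import re

# First-line regular expression from the example above.
FIRST_LINE = re.compile(r"\d{4}-\d{2}-\d{2}\s\d{2}:\d{2}:\d{2},\d{3}\s.+")

def group_multiline(lines, first_line=FIRST_LINE):
    """Group physical lines into log entries that start at a matching first line."""
    entries, current = [], []
    for line in lines:
        # A new matching first line closes the entry collected so far.
        if first_line.match(line) and current:
            entries.append("\n".join(current))
            current = []
        current.append(line)
    if current:
        entries.append("\n".join(current))
    return entries

raw_lines = [
    "2019-12-15 17:13:06,043 [main] ERROR com.test.logging.FooFactory:",
    "java.lang.NullPointerException",
    "    at com.test.logging.FooFactory.createFoo(FooFactory.java:15)",
    "    at com.test.logging.FooFactoryTest.test(FooFactoryTest.java:11)",
    "2019-12-15 17:13:07,001 [main] INFO com.test.logging.FooFactory: done",
]
for entry in group_multiline(raw_lines):
    print({"__CONTENT__": entry})
```

The second timestamped line in `raw_lines` is an invented follow-up entry, added only to show where the first entry ends.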

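The single-line full regular expression format is typically used for structured logs. It is a parsing mode that extracts multiple key-value pairs from a complete log entry using a regular expression.

Suppose a raw log entry is: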

10.135.46.111 - - [22/Jan/2019:19:19:30 +0800] "GET /my/course/1 HTTP/1.1" 127.0.0.1 200 782 9703 "http://127.0.0.1/course/explore?filter%5Btype%5D=all&filter%5Bprice%5D=all&filter%5BcurrentLevelId%5D=all&orderBy=studentNum" "Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0" 0.354 0.354

The configured regular expression is:

(\S+)[^\[]+(\[[^:]+:\d+:\d+:\d+\s\S+)\s"(\w+)\s(\S+)\s([^"]+)"\s(\S+)\s(\d+)\s(\d+)\s(\d+)\s"([^"]+)"\s"([^"]+)"\s+(\S+)\s(\S+).*

The data collected to Log Service is:

body_bytes_sent: 9703
http_host: 127.0.0.1
http_protocol: HTTP/1.1
http_referer: http://127.0.0.1/course/explore?filter%5Btype%5D=all&filter%5Bprice%5D=all&filter%5BcurrentLevelId%5D=all&orderBy=studentNum
http_user_agent: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0
remote_addr: 10.135.46.111
request_length: 782
request_method: GET
request_time: 0.354
request_url: /my/course/1
status: 200
time_local: [22/Jan/2019:19:19:30 +0800]
upstream_response_time: 0.354
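To try the expression outside the console, the following Python sketch applies it to the sample log and pairs each capture group with a key. The key order in `KEYS` is inferred from the sample output, the referer in `SAMPLE` is shortened for brevity, and Python's `re` module is used as a stand-in for the collector's regex engine, which may differ in details.

```python
import re

PATTERN = re.compile(
    r'(\S+)[^\[]+(\[[^:]+:\d+:\d+:\d+\s\S+)\s"(\w+)\s(\S+)\s([^"]+)"'
    r'\s(\S+)\s(\d+)\s(\d+)\s(\d+)\s"([^"]+)"\s"([^"]+)"\s+(\S+)\s(\S+).*'
)

# One key per capture group, in group order (inferred from the sample output).
KEYS = (
    "remote_addr", "time_local", "request_method", "request_url",
    "http_protocol", "http_host", "status", "request_length",
    "body_bytes_sent", "http_referer", "http_user_agent",
    "request_time", "upstream_response_time",
)

SAMPLE = (
    '10.135.46.111 - - [22/Jan/2019:19:19:30 +0800] '
    '"GET /my/course/1 HTTP/1.1" 127.0.0.1 200 782 9703 '
    '"http://127.0.0.1/course/explore?filter%5Btype%5D=all" '
    '"Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0" '
    '0.354 0.354'
)

def extract_fields(line: str) -> dict:
    """Return the key-value pairs for one log line, or raise on a parse failure."""
    match = PATTERN.match(line)
    if match is None:
        raise ValueError("log line does not match the regular expression")
    return dict(zip(KEYS, match.groups()))

fields = extract_fields(SAMPLE)
for key in sorted(fields):
    print(f"{key}: {fields[key]}")
```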

In multi-line full regular expression mode, suppose a raw log entry is:

[2018-10-01T10:30:01,000] [INFO] java.lang.Exception: exception happened
    at TestPrintStackTrace.f(TestPrintStackTrace.java:3)
    at TestPrintStackTrace.g(TestPrintStackTrace.java:7)
    at TestPrintStackTrace.main(TestPrintStackTrace.java:16)

The first-line regular expression is:

\[\d+-\d+-\w+:\d+:\d+,\d+]\s\[\w+]\s.*

The configured custom regular expression is:

\[(\d+-\d+-\w+:\d+:\d+,\d+)\]\s\[(\w+)\]\s(.*)

The system extracts the key-value pairs from the () capture groups. You can then define a key name for each group, as shown below:

time: 2018-10-01T10:30:01,000
level: INFO
msg: java.lang.Exception: exception happened
    at TestPrintStackTrace.f(TestPrintStackTrace.java:3)
    at TestPrintStackTrace.g(TestPrintStackTrace.java:7)
    at TestPrintStackTrace.main(TestPrintStackTrace.java:16)
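The extraction step for a multi-line entry can be sketched in Python as follows. One assumption is worth flagging: for the last group `(.*)` to capture the remaining stack-trace lines, the dot must also match line breaks, which is modeled here with `re.DOTALL`. How the collector's regex engine treats line breaks is not stated in this document.

```python
import re

# Custom regular expression from the example above; DOTALL is an assumption.
EXTRACT = re.compile(r"\[(\d+-\d+-\w+:\d+:\d+,\d+)\]\s\[(\w+)\]\s(.*)", re.DOTALL)

# Key names chosen for the three capture groups, as in the example above.
KEYS = ("time", "level", "msg")

ENTRY = (
    "[2018-10-01T10:30:01,000] [INFO] java.lang.Exception: exception happened\n"
    "    at TestPrintStackTrace.f(TestPrintStackTrace.java:3)\n"
    "    at TestPrintStackTrace.g(TestPrintStackTrace.java:7)\n"
    "    at TestPrintStackTrace.main(TestPrintStackTrace.java:16)"
)

def extract_multiline(entry: str) -> dict:
    """Extract key-value pairs from one already-assembled multi-line entry."""
    match = EXTRACT.fullmatch(entry)
    if match is None:
        raise ValueError("entry does not match the custom regular expression")
    return dict(zip(KEYS, match.groups()))

parsed = extract_multiline(ENTRY)
print(parsed["time"], parsed["level"])
print(parsed["msg"])
```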

Suppose a raw JSON log entry is:

{"remote_ip":"10.135.46.111","time_local":"22/Jan/2019:19:19:34 +0800","body_sent":23,"responsetime":0.232,"upstreamtime":"0.232","upstreamhost":"unix:/tmp/php-cgi.sock","http_host":"127.0.0.1","method":"POST","url":"/event/dispatch","request":"POST /event/dispatch HTTP/1.1","xff":"-","referer":"http://127.0.0.1/my/course/4","agent":"Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0","response_code":"200"}

After structured processing by Log Service, the log entry becomes:

agent: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0
body_sent: 23
http_host: 127.0.0.1
method: POST
referer: http://127.0.0.1/my/course/4
remote_ip: 10.135.46.111
request: POST /event/dispatch HTTP/1.1
response_code: 200
responsetime: 0.232
time_local: 22/Jan/2019:19:19:34 +0800
upstreamhost: unix:/tmp/php-cgi.sock
upstreamtime: 0.232
url: /event/dispatch
xff: -
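As a rough local check of what the JSON mode produces, the sketch below parses a log line with Python's standard `json` module and prints the first-level keys in sorted order. The sample is a shortened version of the log above, and `parse_json_log` is a hypothetical helper, not a CLS function.

```python
import json

def parse_json_log(line: str) -> dict:
    """Parse one JSON log line; the first-level keys become the log fields."""
    data = json.loads(line)
    if not isinstance(data, dict):
        raise ValueError("a JSON log entry must be an object at the top level")
    return data

# Shortened version of the sample log above.
SAMPLE = (
    '{"remote_ip":"10.135.46.111","body_sent":23,"responsetime":0.232,'
    '"method":"POST","url":"/event/dispatch","response_code":"200"}'
)

fields = parse_json_log(SAMPLE)
for key in sorted(fields):
    print(f"{key}: {fields[key]}")
```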

In delimiter mode, suppose a raw log entry is:

10.20.20.10 - ::: [Tue Jan 22 14:49:45 CST 2019 +0800] ::: GET /online/sample HTTP/1.1 ::: 127.0.0.1 ::: 200 ::: 647 ::: 35 ::: http://127.0.0.1/

When the delimiter for log parsing is set to :::, the log entry is split into eight fields, and a unique key is defined for each of them, as shown below:

IP: 10.20.20.10 -
bytes: 35
host: 127.0.0.1
length: 647
referer: http://127.0.0.1/
request: GET /online/sample HTTP/1.1
status: 200
time: [Tue Jan 22 14:49:45 CST 2019 +0800]
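The split can be reproduced with a short Python sketch. The key order in `KEYS` is inferred from the sample output, and stripping the whitespace around each field is an assumption made so that the result matches the values shown above.

```python
DELIMITER = ":::"

# Keys in field order (inferred from the sample output above).
KEYS = ("IP", "time", "request", "host", "status", "length", "bytes", "referer")

SAMPLE = (
    "10.20.20.10 - ::: [Tue Jan 22 14:49:45 CST 2019 +0800] ::: "
    "GET /online/sample HTTP/1.1 ::: 127.0.0.1 ::: 200 ::: 647 ::: 35 ::: "
    "http://127.0.0.1/"
)

def split_by_delimiter(line: str) -> dict:
    """Split a log line on the delimiter and pair each part with its key."""
    parts = [part.strip() for part in line.split(DELIMITER)]  # strip: assumption
    if len(parts) != len(KEYS):
        raise ValueError(f"expected {len(KEYS)} fields, got {len(parts)}")
    return dict(zip(KEYS, parts))

fields = split_by_delimiter(SAMPLE)
for key in sorted(fields):
    print(f"{key}: {fields[key]}")
```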

For a log parsed with a custom plugin, suppose the raw log entry is:

1571394459,http://127.0.0.1/my/course/4|10.135.46.111|200,status:DEAD,

The custom plugin content is:

{"processors": [{"type": "processor_split_delimiter","detail": {"Delimiter": ",","ExtractKeys": [ "time", "msg1","msg2"]},"processors": [{"type": "processor_timeformat","detail": {"KeepSource": true,"TimeFormat": "%s","SourceKey": "time"}},{"type": "processor_split_delimiter","detail": {"KeepSource": false,"Delimiter": "|","SourceKey": "msg1","ExtractKeys": [ "submsg1","submsg2","submsg3"]},"processors": []},{"type": "processor_split_key_value","detail": {"KeepSource": false,"Delimiter": ":","SourceKey": "msg2"}}]}]}

After structured processing by Log Service, the log entry becomes:

time: 1571394459
submsg1: http://127.0.0.1/my/course/4
submsg2: 10.135.46.111
submsg3: 200
status: DEAD
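The processing chain in this example can be followed step by step with the hand-written Python sketch below. It does not interpret the plugin JSON and is not the plugin engine; it only mirrors the stages as they are read from the configuration: split on `,`, treat `time` as epoch seconds, split `msg1` on `|`, and split `msg2` into a key-value pair on `:`. The function name `parse_combined` is hypothetical.

```python
def parse_combined(raw: str):
    """Mirror the example pipeline by hand; returns (fields, log_timestamp)."""
    # Stage 1: split on "," and keep the first three parts as time, msg1, msg2.
    time_text, msg1, msg2 = raw.split(",")[:3]

    # Stage 2: the time format "%s" is read as epoch seconds;
    # KeepSource is true, so the time field itself is kept.
    log_timestamp = int(time_text)
    fields = {"time": time_text}

    # Stage 3: split msg1 on "|" into three sub-fields; msg1 is not kept.
    submsg1, submsg2, submsg3 = msg1.split("|")
    fields.update({"submsg1": submsg1, "submsg2": submsg2, "submsg3": submsg3})

    # Stage 4: split msg2 into one key-value pair on ":"; msg2 is not kept.
    key, _, value = msg2.partition(":")
    fields[key] = value
    return fields, log_timestamp

RAW = "1571394459,http://127.0.0.1/my/course/4|10.135.46.111|200,status:DEAD,"
fields, log_timestamp = parse_combined(RAW)
for key, value in fields.items():
    print(f"{key}: {value}")
```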

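Log timestamp source: you can use either the log collection time or a specified log field as the log's timestamp.

Filter: LogListener collects only logs that match the filter rules. Keys are matched exactly, and filter rules support regular expression matching, for example collecting only logs where ErrorCode = 404. Enable the filter and configure rules as needed.

Upload of parsing-failed logs: enabling this is recommended. When it is enabled, LogListener uploads logs of all kinds that fail to parse. When it is disabled, logs that fail to parse are discarded.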
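The filter behavior described above (exact key match, regular expression on the value) can be sketched in Python as follows. Two points are assumptions: the regular expression must match the whole value, and when several rules are configured all of them must match. The function name `passes_filter` is hypothetical.

```python
import re
from typing import Dict

def passes_filter(fields: Dict[str, str], rules: Dict[str, str]) -> bool:
    """Return True if the log should be collected.

    rules maps an exact field key to a regular expression for its value.
    """
    for key, pattern in rules.items():
        value = fields.get(key)           # the key is matched exactly
        if value is None or re.fullmatch(pattern, value) is None:
            return False
    return True

rules = {"ErrorCode": r"404"}
logs = [
    {"ErrorCode": "404", "url": "/missing"},
    {"ErrorCode": "200", "url": "/ok"},
    {"errorcode": "404", "url": "/case-differs"},
]
collected = [log for log in logs if passes_filter(log, rules)]
print(collected)
```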

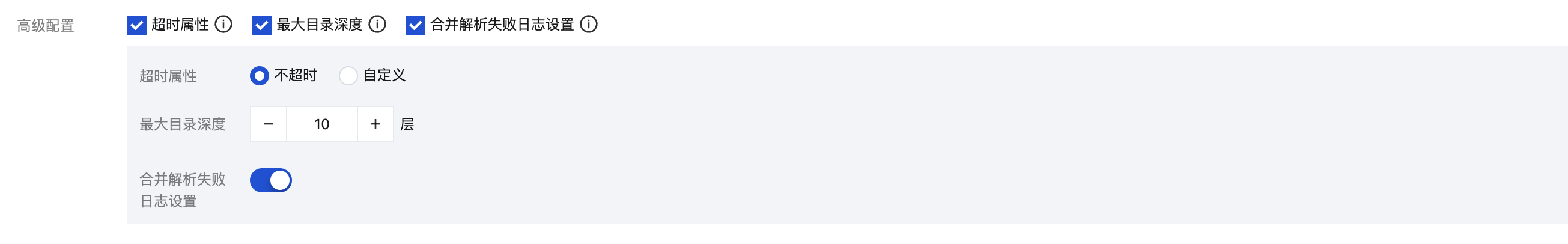

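Advanced configuration: select the advanced settings you want to define.

In multi-line full regular expression mode, the following advanced settings are available (other modes support only the first two):

| Name | Description | Options |
| --- | --- | --- |
| Timeout | Controls the timeout for log files. A log file that is not updated within the specified time is considered timed out, and LogListener stops collecting it. If you have a large number of log files, consider lowering the timeout to avoid wasting LogListener resources. | No timeout: log files never time out. Custom: set a custom log file timeout. |
| Maximum directory depth | Controls the maximum directory depth for log collection. LogListener does not collect log files whose directory level exceeds the specified maximum depth. If the target collection path contains fuzzy matching, configure a suitable maximum directory depth to avoid wasting LogListener resources. | An integer greater than 0. 0 means that subdirectories are not traversed. |
| Merge parsing-failed logs | Note: this setting requires LogListener 2.8.8 or later. When enabled, consecutive logs in the target log file that fail to parse are merged into a single log entry on upload. If your first-line regular expression does not cover all multi-line logs, enabling this setting is recommended so that a single multi-line log that fails first-line matching is not split into several entries. | On/Off |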
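The maximum directory depth setting can be pictured with the Python sketch below. The way depth is counted here (the number of subdirectory levels between the configured directory prefix and the file, so that 0 means only files directly in the prefix directory) is an assumption based on the table above, and the function names are hypothetical.

```python
from pathlib import PurePosixPath

def subdirectory_depth(prefix: str, file_path: str) -> int:
    """Number of subdirectory levels between the prefix directory and the file."""
    relative = PurePosixPath(file_path).relative_to(PurePosixPath(prefix))
    return len(relative.parts) - 1       # the last part is the file name

def within_max_depth(prefix: str, file_path: str, max_depth: int) -> bool:
    """True if the file is at or above the configured maximum directory depth."""
    return subdirectory_depth(prefix, file_path) <= max_depth

print(within_max_depth("/opt/logs", "/opt/logs/app.log", 0))
print(within_max_depth("/opt/logs", "/opt/logs/a/b/app.log", 1))
```

`relative_to` raises `ValueError` when the file is not located under the prefix, which keeps paths outside the collection directory from being silently accepted.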

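Step 4: Configure indexes

1. Click Next to go to the Index Configuration page.

2. On the Index Configuration page, configure the following settings. For details, see Index Configuration.

Note:

Index configuration must be enabled for log search; otherwise logs cannot be searched.

Step 5: Search logs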

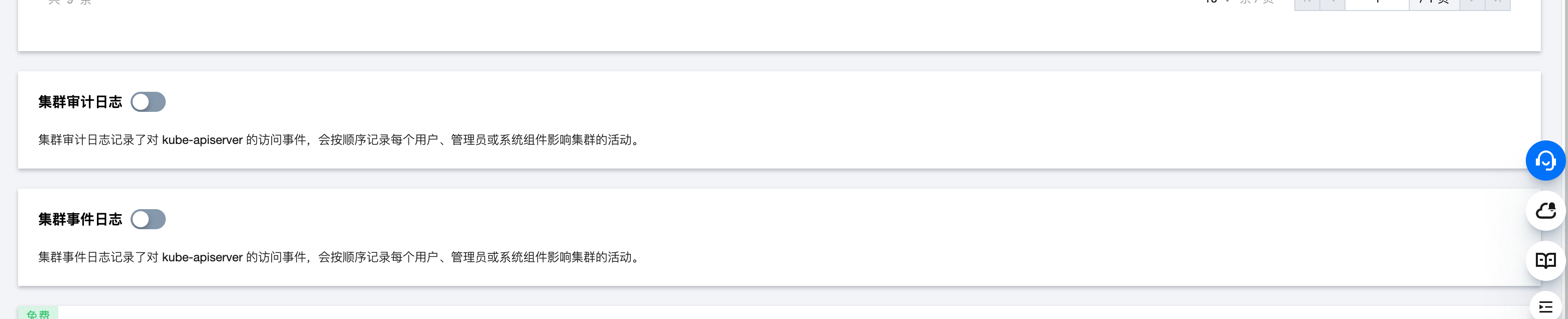

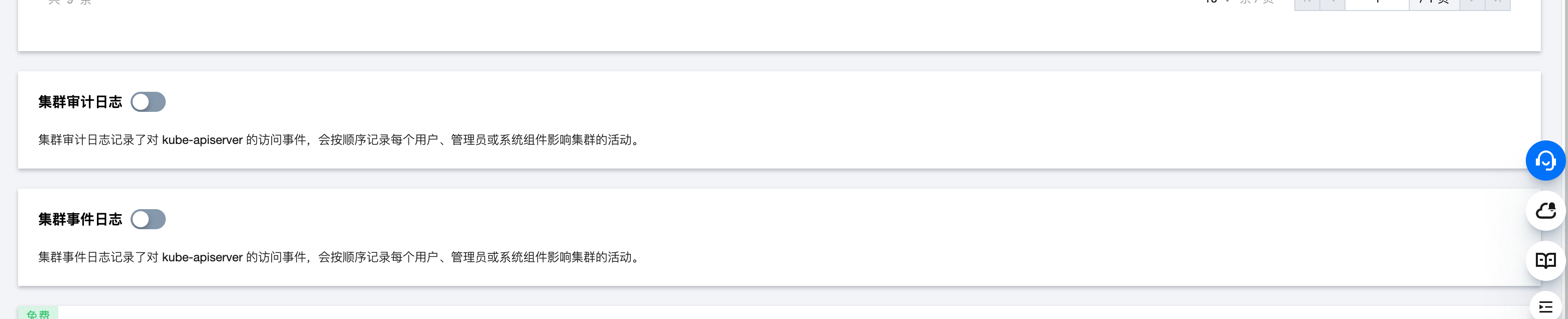

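Collecting Cluster Audit and Event Logs

Description:

Cluster audit logs record access events on kube-apiserver. They record, in order, every activity by a user, administrator, or system component that affects the cluster.

Cluster event logs record how the cluster is running and how its resources are scheduled.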

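Step 1: Select a cluster

1. Log in to the Log Service console.

2. In the left navigation pane, select Container Cluster Management to open the Container Cluster Management page.

3. In the upper-right corner of the page, select TKE Cluster.

4. Select the region of the TKE cluster and locate the target cluster.

5. If the collection component status is Not Installed, click Install to install the log collection component.

Note:

Installing the log collection component deploys a tke-log-agent pod and a cls-provisioner pod as DaemonSets in the cluster's kube-system namespace. Reserve at least 0.1 core and 16 MiB of available resources on each node.

6. If the collection component status is Latest, click the cluster name to open the cluster details page, and locate Cluster Audit Logs or Cluster Event Logs on that page.

7. Click to enable cluster audit logs or event logs and enter the corresponding configuration flow.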

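Step 2: Select a log topic

Step 3: Configure indexes

1. Click Next to go to the Index Configuration page.

2. On the Index Configuration page, configure the following settings. For details, see Index Configuration.

Note:

Index configuration must be enabled for log search; otherwise logs cannot be searched.

Step 4: Search logs

Other Operations

Managing business log collection configurations

1. On the Container Cluster Management page, locate the target TKE cluster and click the cluster name to open the cluster details page.

2. On the cluster details page, you can view and manage your cluster business log collection configurations under Cluster Business Logs.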

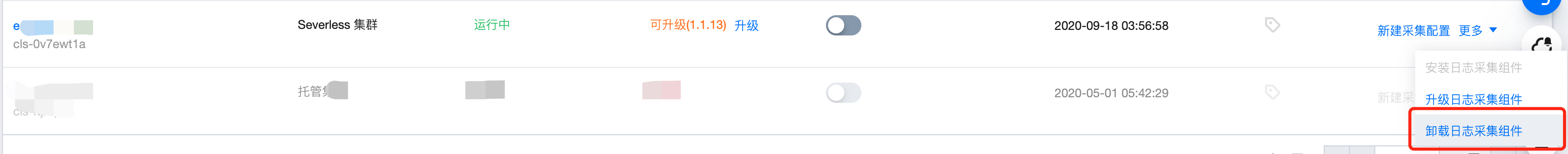

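Upgrading the log collection component

On the Container Cluster Management page, locate the target TKE cluster. If the collection component status shows Upgradable, click Upgrade to upgrade the log collection component to the latest version.

Uninstalling the log collection component

On the Container Cluster Management page, locate the target TKE cluster, click More in the Operation column, and then click Uninstall Log Collection Component in the drop-down list.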
