Introduction to Client Rebalance

What Client Rebalance Is

In Kafka, Client Rebalance refers to the process of reallocating subscribed partitions among consumer instances within a consumer group. When group membership changes, the number of partitions changes, or subscription relationships are modified, Kafka triggers Client Rebalance to ensure partitions are as evenly distributed as possible among consumers.

Why Client Rebalance Is Needed

Client-side load balancing within a consumer group exists so that the mapping between partitions and consumer instances can adapt dynamically to changes in cluster state.

Scenario 1 – Scaling Out Client Instances

Scenario 2 – Scaling In Client Instances

Scenario 3 – Client Instance Failure

Scenario 4 – Scaling Out Topics

Scenario 5 – Deleting a Topic

Partition Allocation Algorithm

Since the goal of Rebalance is to ensure partitions are evenly distributed among multiple clients, we will now introduce the partition allocation algorithm.

RangeAssignor

RangeAssignor groups the partitions of each topic in order and assigns contiguous ranges to consumers sequentially. It is best suited to scenarios involving a single topic.

Core Principles

1. Sorting and grouping of a single topic

For each topic, sort its partitions in ascending order by partition number (e.g., topic1 has partitions 0-5). The base number of partitions per consumer is the integer quotient of total partitions / number of consumers. If the division leaves a remainder r, the first r consumers each receive one additional partition.

Example: A topic has 5 partitions and 2 consumers (C1, C2), calculated as:

C1: partitions 0, 1, 2 (5/2 = 2 with a remainder of 1, so the first consumer gets one extra partition)

C2: partitions 3, 4

2. Multiple topic allocation logic

The above allocation is performed independently for each topic. Because the extra partitions always go to the consumers that sort first, the same consumer may be assigned excessive partitions across different topics.
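The range logic described above can be sketched in a few lines of Python. This is a simplified illustration, not the Kafka client implementation: the function name `range_assign` is hypothetical, and it assumes every consumer subscribes to every topic.

```python
def range_assign(partitions_per_topic, consumers):
    """Assign each topic's partitions to consumers in contiguous ranges.

    partitions_per_topic: dict of topic name -> partition count (assumed input shape).
    consumers: iterable of consumer IDs, all assumed to subscribe to every topic.
    """
    members = sorted(consumers)
    assignment = {m: [] for m in members}
    for topic in sorted(partitions_per_topic):
        count = partitions_per_topic[topic]
        # base partitions per consumer, plus one extra for the first `extra` consumers
        base, extra = divmod(count, len(members))
        start = 0
        for i, member in enumerate(members):
            size = base + (1 if i < extra else 0)
            assignment[member].extend((topic, p) for p in range(start, start + size))
            start += size
    return assignment


# Single topic with 5 partitions and 2 consumers.
single = range_assign({"topic1": 5}, ["C1", "C2"])

# Two topics with 5 partitions each: the extra partition lands on C1 both times.
multi = range_assign({"topic1": 5, "topic2": 5}, ["C1", "C2"])
multi_counts = {member: len(parts) for member, parts in multi.items()}
```

In the single-topic case C1 receives partitions 0, 1, 2 and C2 receives 3, 4. In the two-topic case the per-topic remainder accumulates on C1, which ends up with 6 partitions against 4 for C2.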

Advantages and Disadvantages

Advantages:

Sequential allocation facilitates understanding and debugging.

The allocation logic is stable and predictable during Rebalance.

Disadvantages:

Uneven distribution may occur: when the number of partitions is not divisible by the number of consumers, the first few consumers (equal to the remainder) will receive one extra partition each.

Not suitable for multiple topics: in multi-topic scenarios it may cause "data skew". For example, with two topics of 5 partitions each, Consumer A is responsible for 3 partitions of topic1 and 3 partitions of topic2, while Consumer B is responsible for only 2 partitions of each topic.

RoundRobinAssignor

RoundRobinAssignor consolidates and sorts the partitions of all subscribed topics, then allocates them to consumers in a round-robin fashion to achieve global balance.

Core Principles

1. Global partition ordering

Sort all subscribed topic partitions in ascending order by topic + partition (e.g., topic1-0, topic1-1, topic2-0, topic2-1).

2. Round-robin allocation mechanism

Assign each partition sequentially according to the consumer list order (in ascending order of consumer ID), similar to a "dealing cards" approach.

Example: Allocating 6 partitions to 3 consumers (C1, C2, C3) results in:

C1: partitions 0, 3

C2: partitions 1, 4

C3: partitions 2, 5

3. Configuration

partition.assignment.strategy must be explicitly configured as org.apache.kafka.clients.consumer.RoundRobinAssignor.
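The global ordering and "dealing cards" steps above can be illustrated with a short Python sketch. It is a simplified model rather than the Kafka client code: `round_robin_assign` is a hypothetical name, and all consumers are assumed to subscribe to the same topics.

```python
from itertools import cycle


def round_robin_assign(partitions_per_topic, consumers):
    """Deal all topic partitions to consumers one at a time, in sorted order.

    partitions_per_topic: dict of topic name -> partition count (assumed input shape).
    """
    members = sorted(consumers)
    # Global ordering by (topic, partition number).
    all_partitions = sorted(
        (topic, p)
        for topic, count in partitions_per_topic.items()
        for p in range(count)
    )
    assignment = {m: [] for m in members}
    # Cycle through the consumer list, handing out one partition per turn.
    for topic_partition, member in zip(all_partitions, cycle(members)):
        assignment[member].append(topic_partition)
    return assignment


# 6 partitions of one topic dealt to 3 consumers.
dealt = round_robin_assign({"topic1": 6}, ["C1", "C2", "C3"])
dealt_numbers = {m: [p for _, p in parts] for m, parts in dealt.items()}
```

With 6 partitions and 3 consumers, C1 receives partitions 0 and 3, C2 receives 1 and 4, and C3 receives 2 and 5, matching the example in step 2.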

Advantages and Disadvantages

Advantages:

Global allocation is more even, avoiding the skew issue of the single-topic Range algorithm.

Supports cross-topic load balancing and is suitable for multi-topic consumption scenarios.

Disadvantages:

Rebalance may trigger mass migration of partitions: when the number of consumers changes, all partitions need to be reallocated via round-robin, incurring significant overhead.

StickyAssignor

Prioritizes preserving historical partition assignment relationships and adjusts partitions only when necessary, reducing migration overhead caused by rebalance.

Core Principles

1. "Sticky" assignment strategy

During rebalance, the assignor prioritizes leaving previously assigned partitions with their original consumers, moving a partition only when it must be reassigned (e.g., when a consumer leaves or a new consumer needs its share).

Example: Consumer C1 originally held partitions 0-2. When a new consumer C3 is added, C1 may only release partition 2 and retain partitions 0-1.

2. Combination with the Cooperative protocol

In Kafka 2.4 and later, the CooperativeStickyAssignor supports incremental rebalance, migrating partitions in phases to avoid full partition migration.
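The sticky idea can be illustrated with a minimal Python sketch. It is a deliberately simplified model, not Kafka's StickyAssignor algorithm: the function `sticky_assign` and its inputs are hypothetical, partitions are plain integers from a single topic, and balance is defined as a spread of at most one partition between consumers.

```python
def sticky_assign(previous, consumers, partitions):
    """Keep previous owners where possible, then move as few partitions as needed.

    previous: dict of consumer ID -> partitions owned before the rebalance.
    consumers: consumer IDs currently in the group.
    partitions: all partitions that must be assigned.
    """
    members = sorted(consumers)
    valid = set(partitions)
    # Step 1: every surviving consumer keeps what it already owned.
    assignment = {m: [p for p in previous.get(m, []) if p in valid] for m in members}
    owned = {p for parts in assignment.values() for p in parts}

    def load(member):
        return len(assignment[member])

    # Step 2: partitions without an owner go to the least-loaded consumer.
    for p in sorted(valid - owned):
        assignment[min(members, key=load)].append(p)

    # Step 3: move one partition at a time until the spread is at most one.
    while True:
        most, least = max(members, key=load), min(members, key=load)
        if load(most) - load(least) <= 1:
            break
        assignment[least].append(assignment[most].pop())
    return assignment


before = {"C1": [0, 1, 2], "C2": [3, 4, 5]}
# A new consumer C3 joins: C1 and C2 each release a single partition.
after_join = sticky_assign(before, ["C1", "C2", "C3"], range(6))
# C3 leaves again: only its two partitions are redistributed.
after_leave = sticky_assign(after_join, ["C1", "C2"], range(6))
```

In this run C1 keeps partitions 0-1 and releases only partition 2 when C3 joins, so four of the six partitions never move.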

Advantages and Disadvantages

Advantages:

Significantly reduces partition migration volume during rebalance and minimizes application downtime.

Disadvantages:

High implementation complexity: the assignor must track the historical assignment state (via the ownedPartitions field).

A custom assignor must implement the sticky logic explicitly; otherwise, it may degrade to the old protocol.

If an imbalance exists in historical assignments, the sticky strategy may perpetuate this imbalance (requiring manual intervention or a restart reset).

Comparison of Three Load Balancing Algorithms

| Comparison Item | RangeAssignor | RoundRobinAssignor | StickyAssignor |
| --- | --- | --- | --- |
| Allocation granularity | Range-based grouping of partitions within a single topic | Global partition round-robin | Prioritizes preserving historical assignments to minimize migration |
| Balance | Imbalance may occur within a single topic; skew is prone to occur across multiple topics | Optimal global balance | Prioritizes historical assignments, but may perpetuate a previous imbalance |
| Rebalance overhead | High (full reallocation) | High (full reallocation) | Low (only necessary partition migration) |
| State-aware | Stateless | Stateless | Stateful (dependent on historical assignment records) |
| Applicable scenario | Single topic, small number of partitions, static consumer group | Multiple topics, pursuing global balance, dynamic scaling | Stateful applications, frequent rebalance, scenarios requiring reduced migration |
| Kafka version support | All versions | All versions | StickyAssignor (0.11.0 and later), CooperativeStickyAssignor (2.4 and later) |

Summary

RangeAssignor: Suitable for scenarios with a single topic, a fixed number of consumers, and low requirements for allocation uniformity.

RoundRobinAssignor: Suitable for scenarios involving consuming multiple topics, dynamic changes in the number of consumers, and pursuing global balance.

StickyAssignor: Primarily used for stateful components such as Kafka Streams and Kafka Connect to reduce state reconstruction caused by rebalance.

Rebalance Trigger Scenarios

1. Consumer member changes

1.1 Consumer crash

1.2 Consumer scale-out

1.3 Consumer scale-in

2. Topic changes

2.1 Topic creation

2.2 Topic deletion

2.3 Partition scale-out

Rebalance Protocol Process Analysis

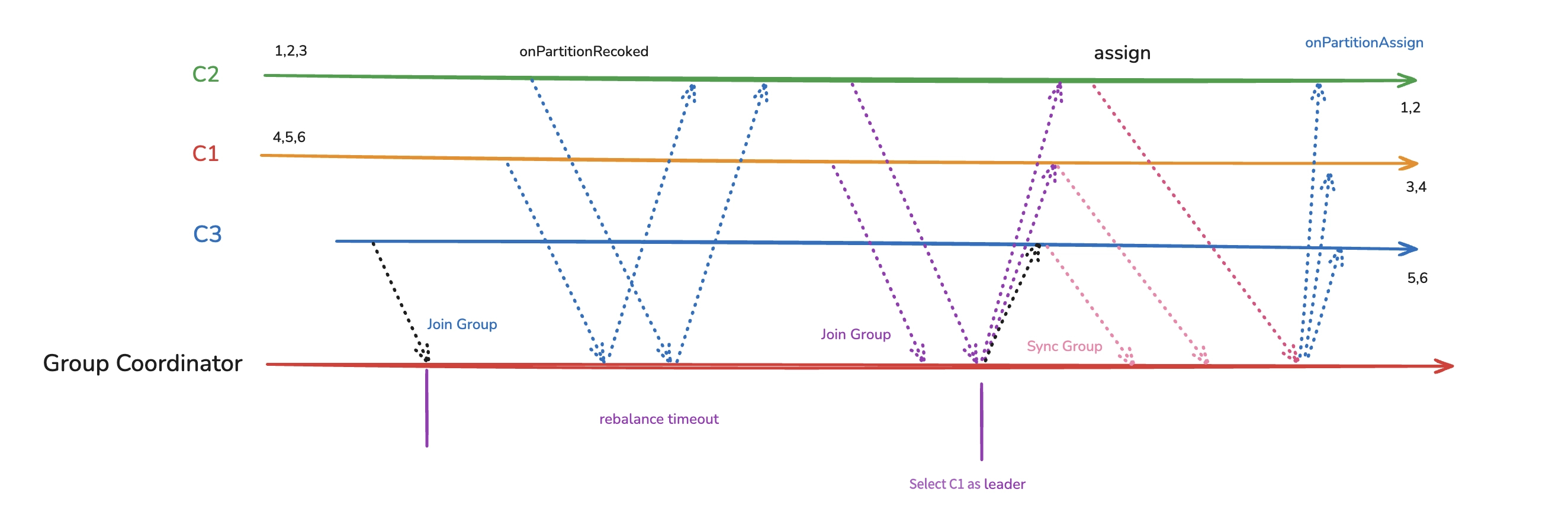

Eager Rebalance Protocol Flow

ConsumerRebalanceListener

onPartitionsRevoked (called before the JoinGroup request is sent)

onPartitionsAssigned (called after the SyncGroup response is received)

ConsumerPartitionAssignor

assign (called by the client leader to run the partition allocation algorithm)

Defects of Eager Rebalance

1. Stop the world: consumption is interrupted for the whole group, which is particularly noticeable with large numbers of partitions and clients.

2. Unnecessary partition migration: for example, partitions are migrated even when a single instance merely restarts.

Optimizing the Eager Rebalance Protocol

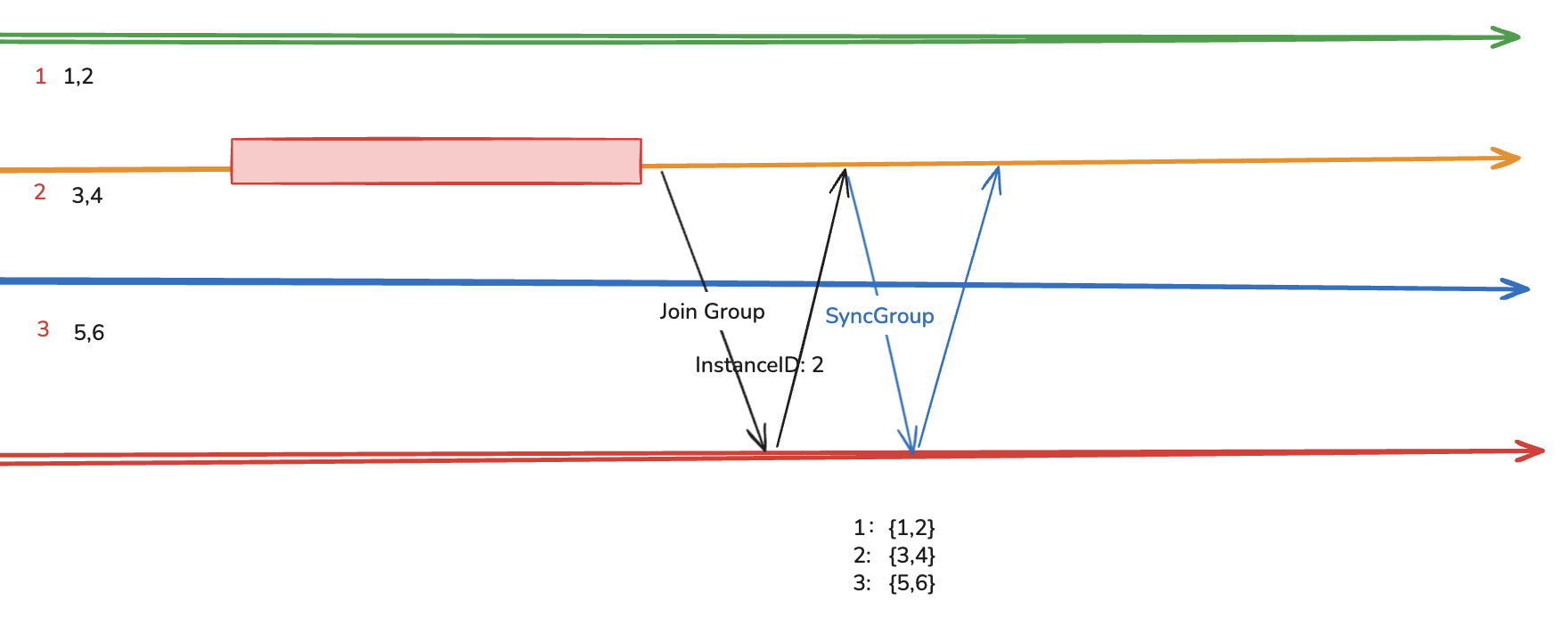

Static Membership Rebalance Protocol

Optimization Approach: Reduce the probability of triggering rebalance by raising the threshold for triggering it, primarily optimizing the single-instance restart scenario.

Optimization Method:

1. Add a group.instance.id parameter for each instance. After an instance restarts on k8s, its group.instance.id remains unchanged.

2. Set session.timeout.ms to a higher value, so that the coordinator does not treat the missing heartbeats during an instance restart as a member failure.

3. The newly started instance retains the group.instance.id from before the restart. The coordinator looks up the partitions previously assigned to that ID and returns them to the newly started instance.

Scenarios triggering rebalance after optimization:

1. Scaling out clients

2. client leader rejoins

3. A client instance stays down longer than the session timeout

4. Scaling in clients
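The effect of static membership on a restart can be illustrated with a toy model. This is only a sketch under stated assumptions, not the broker's group coordinator: the class `ToyCoordinator`, its methods, and the pod names are hypothetical, time is passed in explicitly as seconds, expiry is checked only when a member joins, and partitions are dealt round-robin on every rebalance.

```python
class ToyCoordinator:
    """Toy coordinator that remembers assignments by static instance ID."""

    def __init__(self, num_partitions, session_timeout_s):
        self.num_partitions = num_partitions
        self.session_timeout_s = session_timeout_s
        self.assignments = {}  # instance ID -> list of partitions
        self.last_seen = {}    # instance ID -> time of last heartbeat or join
        self.rebalances = 0

    def heartbeat(self, instance_id, now):
        self.last_seen[instance_id] = now

    def join(self, instance_id, now):
        self._expire(now)
        known = instance_id in self.assignments
        self.last_seen[instance_id] = now
        if not known:
            # Unknown instance ID: a real membership change, so rebalance.
            self.assignments[instance_id] = []
            self._rebalance()
        # Known instance ID: hand back the cached assignment without rebalancing.
        return self.assignments[instance_id]

    def _expire(self, now):
        expired = [i for i, seen in self.last_seen.items()
                   if now - seen > self.session_timeout_s]
        for i in expired:
            del self.last_seen[i]
            self.assignments.pop(i, None)
        if expired:
            self._rebalance()

    def _rebalance(self):
        self.rebalances += 1
        members = sorted(self.assignments)
        for m in members:
            self.assignments[m] = []
        if members:
            for p in range(self.num_partitions):
                self.assignments[members[p % len(members)]].append(p)


coordinator = ToyCoordinator(num_partitions=4, session_timeout_s=30)
coordinator.join("pod-0", now=0)
coordinator.join("pod-1", now=1)                   # two initial joins, two rebalances
coordinator.heartbeat("pod-0", now=20)
restored = coordinator.join("pod-1", now=25)       # restart within the session timeout
rebalances_after_fast_restart = coordinator.rebalances
coordinator.heartbeat("pod-0", now=50)
coordinator.join("pod-1", now=60)                  # down for 35 s, longer than the timeout
rebalances_after_slow_restart = coordinator.rebalances
```

In this model the fast restart gets its old partitions back with no additional rebalance, while the slow restart costs two: one when the member expires and one when it rejoins.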

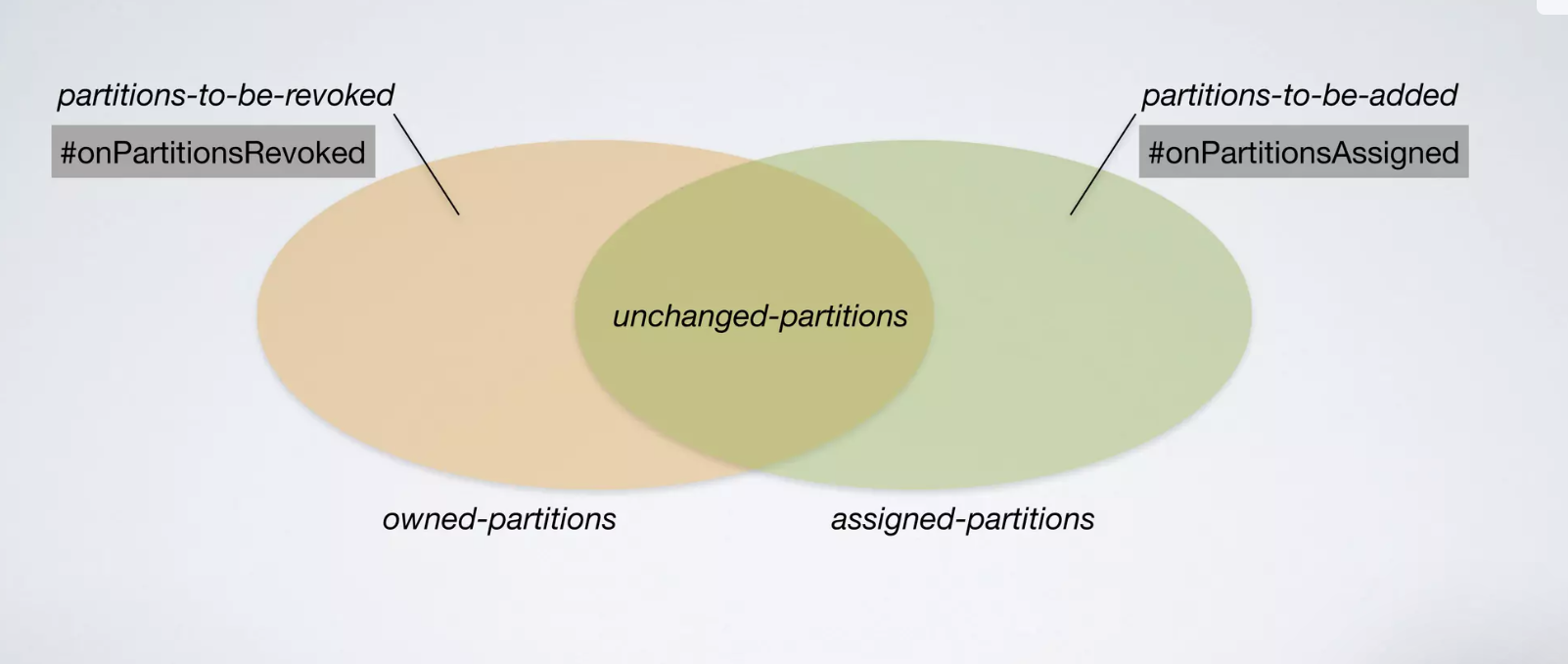

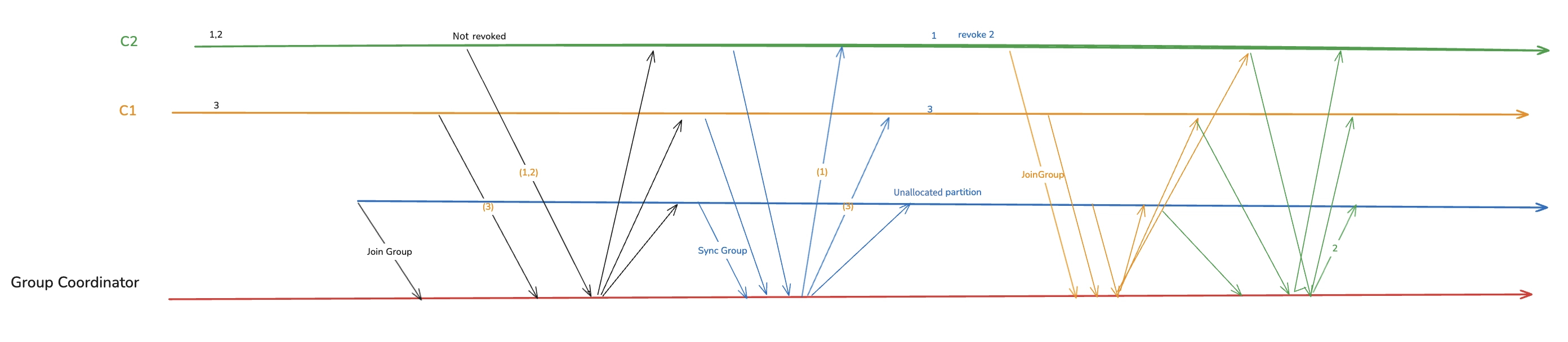

Cooperative Rebalance Protocol

Optimization Approach: The conditions for triggering rebalance remain unchanged, while optimizing the rebalance process to minimize partition reassignment.

During a rebalance process, partitions for a client are considered to be in three states:

Should be revoked by the client

Should be added by the client

Remains unchanged

Therefore, during a rebalance, partitions that remain unchanged should continue to be consumed, and partitions that move should be revoked from their old owner before being assigned to the new one.

Optimization Method:

1. Partitions being consumed are not revoked before rebalance.

2. Incremental multi-phase rebalance: the rebalance is split into two phases, "revoking old partitions" and "assigning new partitions". Consumers continue processing their retained partitions during the first phase, minimizing service interruption.
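The three partition states and the two phases can be sketched as simple set operations. This is an illustrative model only: the helper names `classify_partitions` and `first_round` are hypothetical, and the target assignment is assumed to have been computed already by a sticky assignor.

```python
def classify_partitions(owned, target):
    """Split one client's partitions into the three states described above."""
    owned, target = set(owned), set(target)
    return {
        "revoke": sorted(owned - target),     # should be revoked by the client
        "add": sorted(target - owned),        # should be added by the client
        "unchanged": sorted(owned & target),  # keeps being consumed throughout
    }


def first_round(current, target):
    """Phase 1: withhold any partition that another client still owns."""
    owner = {p: m for m, parts in current.items() for p in parts}
    return {
        m: sorted(p for p in parts if owner.get(p, m) == m)
        for m, parts in target.items()
    }


current = {"C1": [0, 1, 2], "C2": [3, 4, 5], "C3": []}
target = {"C1": [0, 1], "C2": [3, 4], "C3": [2, 5]}

c1_states = classify_partitions(current["C1"], target["C1"])
round1 = first_round(current, target)  # C1 and C2 each give up one partition
round2 = target                        # phase 2: the freed partitions go to C3
```

During phase 1 C1 keeps consuming partitions 0 and 1 and only revokes partition 2. C3 receives partitions 2 and 5 in phase 2, once they are no longer owned by anyone.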

Upgrade from the Eager Rebalance Protocol to the Cooperative Rebalance Protocol

Consumers need to modify their configuration and restart twice:

1. Introduce the new protocol while retaining the old one

1.1 Modify consumer configuration

partition.assignment.strategy = org.apache.kafka.clients.consumer.CooperativeStickyAssignor,org.apache.kafka.clients.consumer.RangeAssignor

Note:

The new assignor configuration should be placed first in the list.

1.2 After a consumer restarts, the new instance sends a JoinGroup request that includes the new protocol. However, since the old protocol remains in the list, the consumer group chooses a protocol supported by all members (currently still eager, as RangeAssignor only supports eager). Old instances continue using the eager protocol. New instances are ready to support the cooperative protocol, but the protocol has not yet switched.

2. Remove the old protocol

Remove the old assignor from the configuration and restart the consumers again:

partition.assignment.strategy = org.apache.kafka.clients.consumer.CooperativeStickyAssignor
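Why the first restart is safe can be illustrated by modeling how a group settles on one strategy. The sketch below is an assumption-laden simplification, not the coordinator's code: `select_strategy` is a hypothetical name, the short labels "cooperative-sticky" and "range" stand in for the two assignor classes, and ties are resolved by a simple vote in which each member backs its most preferred strategy among those every member supports.

```python
from collections import Counter


def select_strategy(member_strategies):
    """Pick one strategy for the group.

    member_strategies: dict of member -> strategies in that member's preference order.
    """
    # Only strategies supported by every member are candidates.
    common = set.intersection(*(set(s) for s in member_strategies.values()))
    if not common:
        raise ValueError("no strategy is supported by all members")
    # Each member votes for its most preferred candidate.
    votes = Counter(
        next(s for s in prefs if s in common)
        for prefs in member_strategies.values()
    )
    return votes.most_common(1)[0][0]


new_config = ["cooperative-sticky", "range"]
old_config = ["range"]

# Midway through the first rolling restart: one old member remains.
mixed = select_strategy({"A": new_config, "B": old_config})
# First rolling restart finished: every member lists the new assignor first.
upgraded = select_strategy({"A": new_config, "B": new_config})
```

While any member still lists only the range assignor, the group stays on it. Once every member has restarted with the new assignor first in its list, the group can settle on it, which is why the new assignor should be placed first.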

Comparison of Static Membership and Cooperative Rebalance Protocols

| Comparison Item | Static Membership | Cooperative Rebalance |
| --- | --- | --- |
| Advantages | 1. The process is simple. 2. It avoids triggering rebalance in the first place. 3. Compatible with StickyAssignor. | 1. Does not rely on a separate unique ID. 2. Aware of historical assignment results, reducing fluctuations during rebalance. |
| Disadvantages | 1. group.instance.id needs to be provided. 2. Increasing the session timeout delays detection of crashed containers. 3. Falls back to a global rebalance when it does not take effect. | 1. The process is complex. 2. Requires the use of CooperativeStickyAssignor, which relies on the sticky assignment algorithm. |

The two protocols are not contradictory and can be used simultaneously.

Client Rebalance Implementation in Tencent Cloud

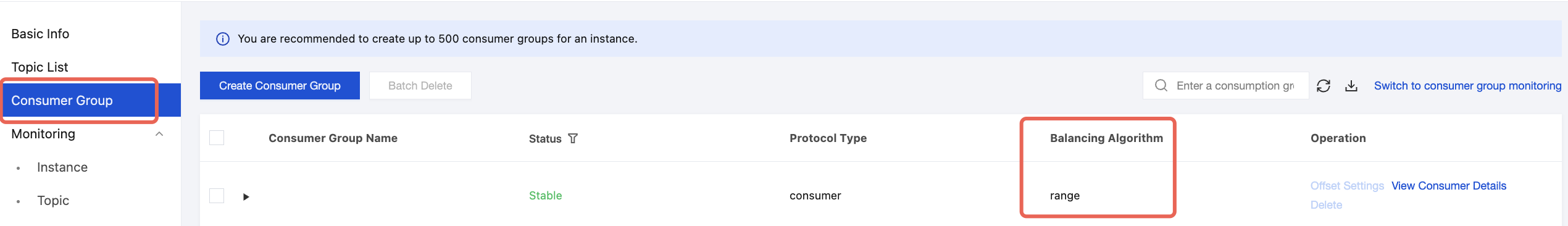

View the Load Balancing Algorithm for the Current Partition

You can go to the instance details, view the Consumer Group tab, and check the client balancing algorithm in the list.

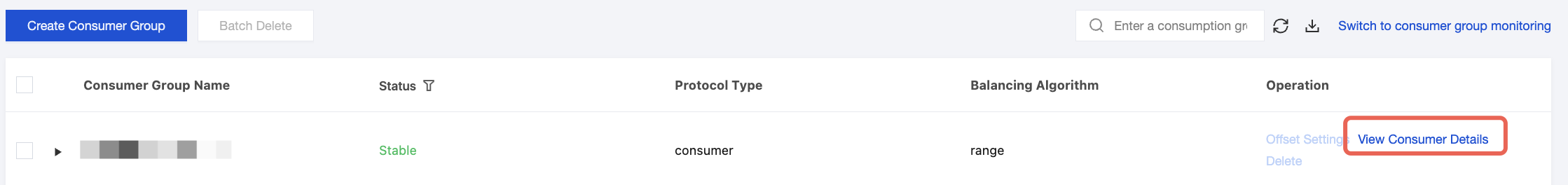

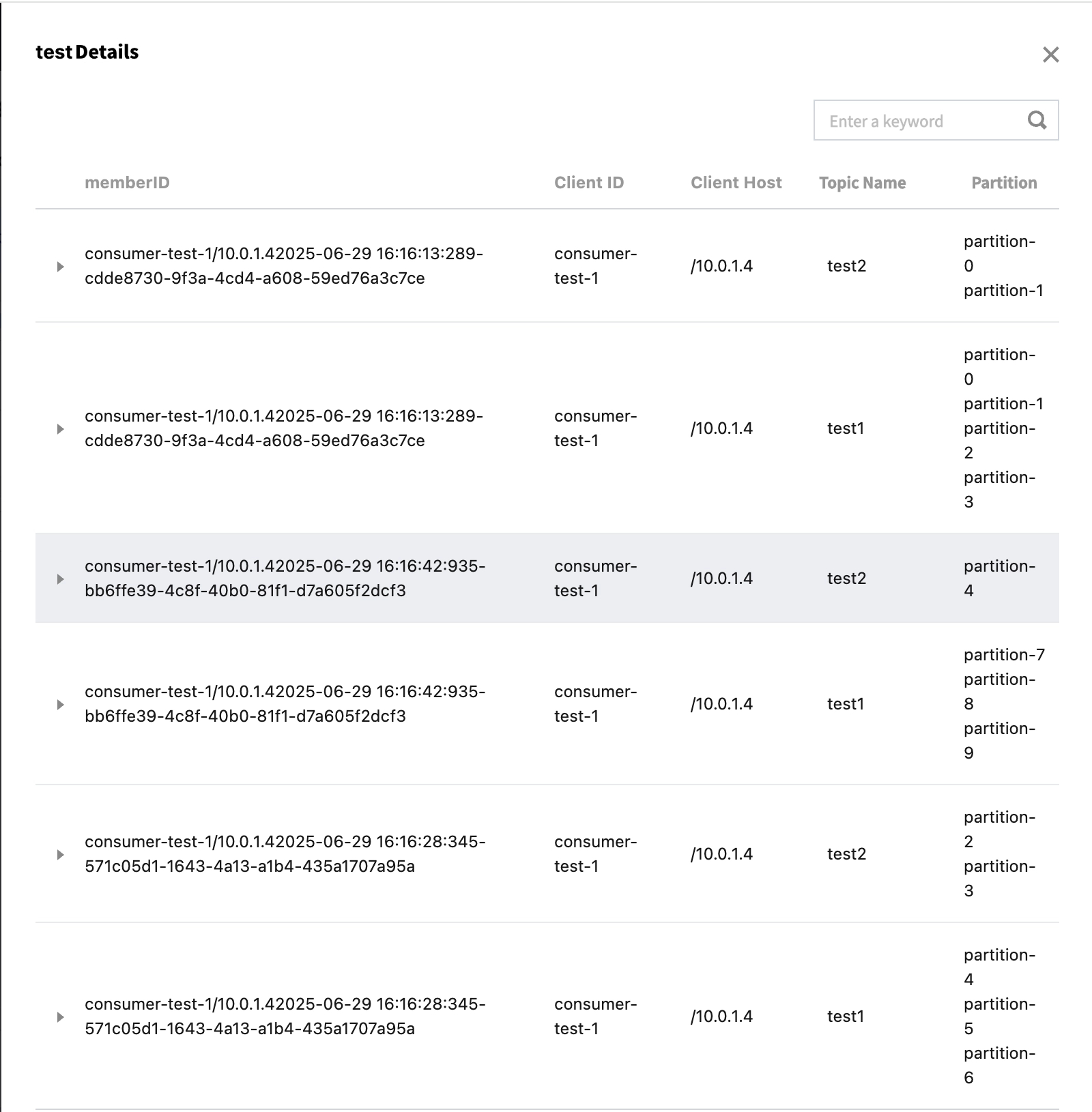

View Partition Assignment Results Across Clients

Prerequisite: In the code, the KafkaConsumer object must call the subscribe function.

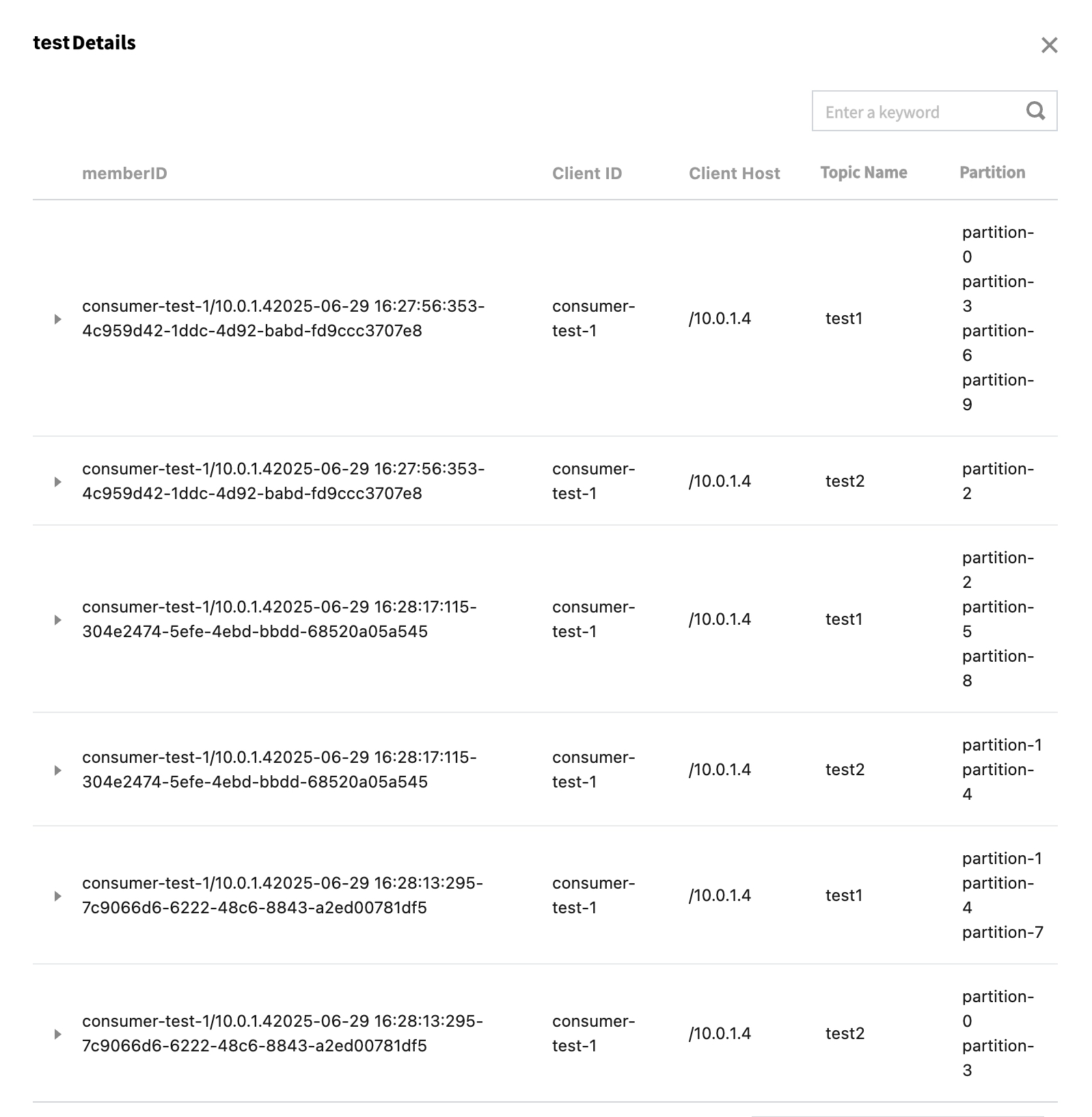

Currently using group test to consume 2 topics: one topic test1 has 10 partitions, and the other topic test2 has 5 partitions.

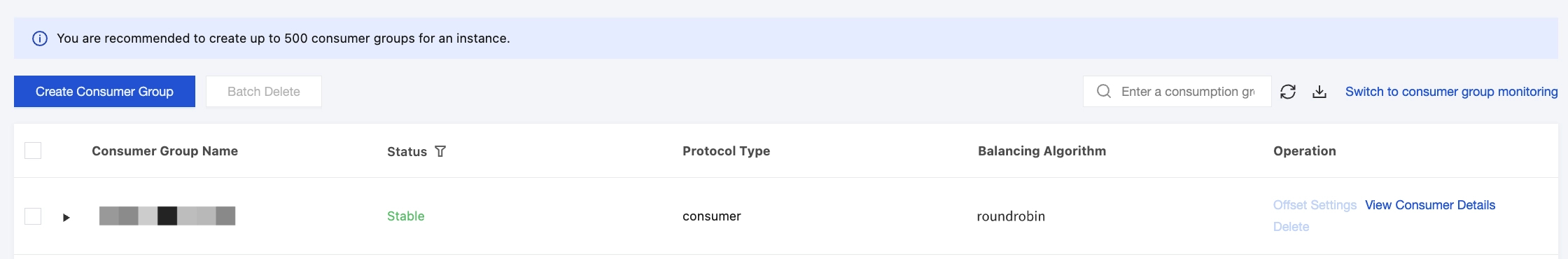

After applying the range assignment algorithm, it is observed that the first consumer processes 6 partitions, the second consumer processes 4 partitions, and the third consumer processes 5 partitions. When switching to the RoundRobinAssignor balancing algorithm, the result is as follows:

It is observed that the partitions are more evenly distributed among the 3 clients, with each client being assigned 5 partitions.
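The partition counts above can be cross-checked with a little arithmetic. The sketch below is a simplified model with hypothetical helper names, assuming all 3 clients subscribe to both topics. It reports counts in member-ID order, which need not match the order in which the console lists clients.

```python
def range_counts(partitions_per_topic, num_consumers):
    """Per-consumer partition counts when each topic is split into ranges."""
    counts = [0] * num_consumers
    for partitions in partitions_per_topic.values():
        base, extra = divmod(partitions, num_consumers)
        for i in range(num_consumers):
            counts[i] += base + (1 if i < extra else 0)
    return counts


def round_robin_counts(partitions_per_topic, num_consumers):
    """Per-consumer partition counts when all partitions are dealt globally."""
    total = sum(partitions_per_topic.values())
    base, extra = divmod(total, num_consumers)
    return [base + (1 if i < extra else 0) for i in range(num_consumers)]


topics = {"test1": 10, "test2": 5}
with_range = range_counts(topics, 3)              # 4+2, 3+2, 3+1
with_round_robin = round_robin_counts(topics, 3)  # 15 partitions dealt to 3 clients
```

Under range assignment the three clients hold 6, 5, and 4 partitions, the same set of counts as observed. Under round-robin each holds 5.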

References