Data Subscription (Kafka Edition)

Feature overview

Data subscription is the process by which DTS captures the data changes of key business data in a database, converts them into message objects, and pushes them to Kafka, where downstream businesses can subscribe to and consume them. You can consume the data directly through a Kafka or Flink client, which lets you build data sync between TencentDB databases and heterogeneous systems for scenarios such as cache updates, real-time ETL (data warehousing) sync, and asynchronous business decoupling.

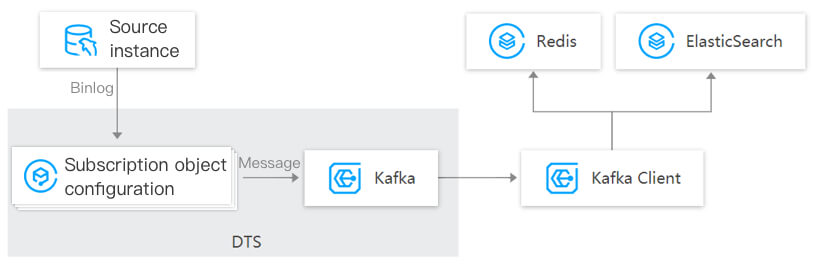

How it works

The following uses MySQL as an example. Data subscription pulls the incremental binlog from the source database in real time, parses the incremental data into Kafka messages, and stores them on the Kafka server, from which you can consume the data through a Kafka client. Because Kafka is open-source messaging middleware that supports multi-channel data consumption and offers SDKs for multiple programming languages, it lowers your cost of adoption.
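The client side of this flow can be sketched as follows. This is a minimal sketch, not an official DTS demo: it assumes the third-party kafka-python package, and the broker address, topic, consumer group, and credentials are placeholders you would copy from your own subscription. The `SASL_PLAINTEXT` protocol and `SCRAM-SHA-512` mechanism are also assumptions; check the authentication settings shown for your subscription before relying on them.

```python
def build_consumer_config(brokers, group_id, username, password):
    """Return keyword arguments for kafka-python's KafkaConsumer.

    All values are placeholders supplied by the caller. The security
    settings below are assumptions, not values confirmed by this page.
    """
    return {
        "bootstrap_servers": brokers,
        "group_id": group_id,
        "security_protocol": "SASL_PLAINTEXT",  # assumption
        "sasl_mechanism": "SCRAM-SHA-512",      # assumption
        "sasl_plain_username": username,
        "sasl_plain_password": password,
        "auto_offset_reset": "earliest",  # start from the oldest retained message
        "enable_auto_commit": True,
    }


def consume(topic, config, max_messages=10):
    """Print partition, offset, and payload size for the first few messages."""
    # Imported here so the config helper works without the dependency.
    from kafka import KafkaConsumer  # third-party: pip install kafka-python

    consumer = KafkaConsumer(topic, **config)
    try:
        for count, record in enumerate(consumer, start=1):
            # record.value holds the raw message bytes; decoding depends on
            # the data format chosen for the subscription.
            print(record.partition, record.offset, len(record.value or b""))
            if count >= max_messages:
                break
    finally:
        consumer.close()
```

Calling `consume("your-topic", build_consumer_config(...))` requires network access to the subscription's Kafka endpoint from the same region as the subscribed instance.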

Typical use cases

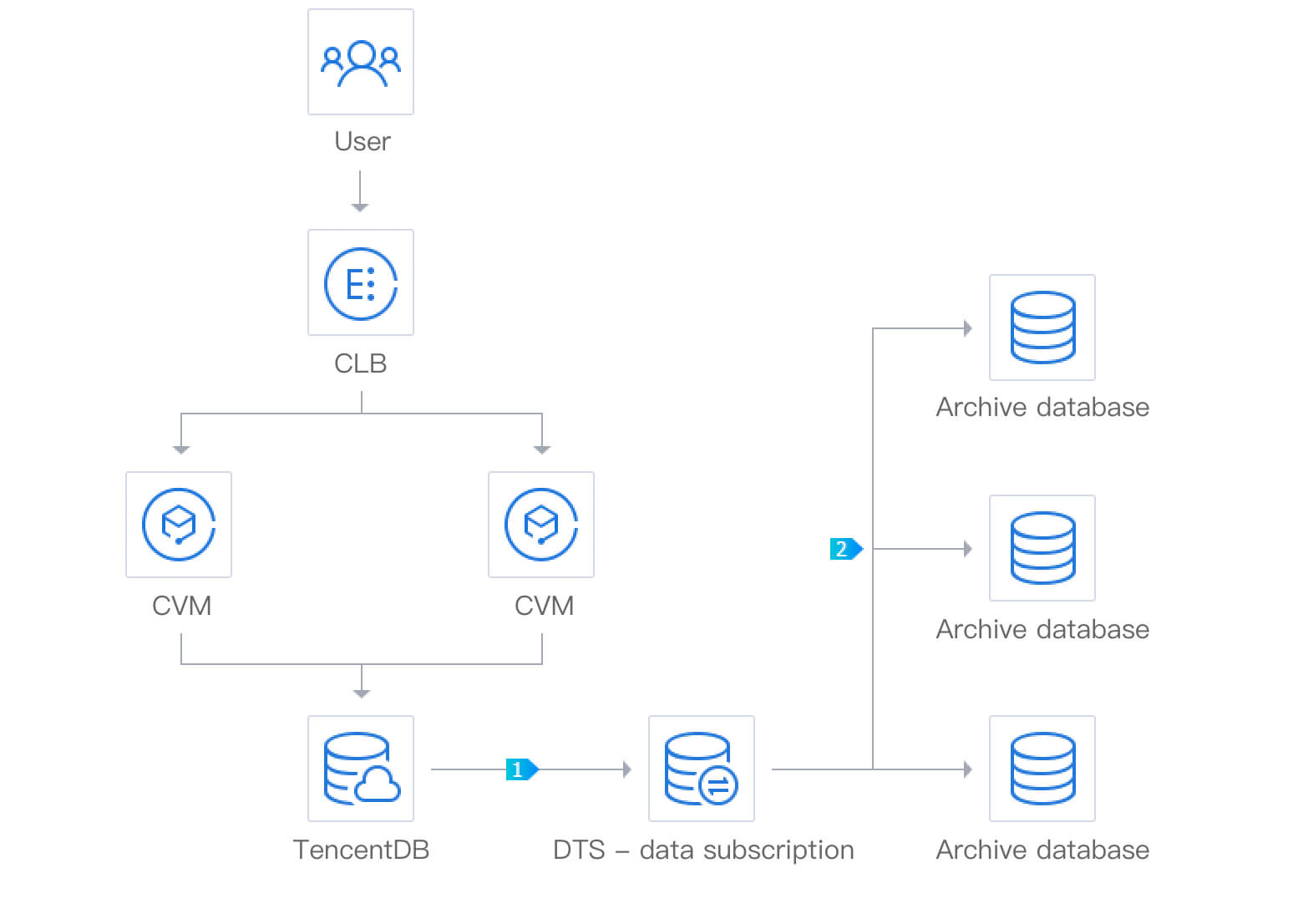

Data archiving

With the data subscription feature of DTS, you can stream incremental updates from TencentDB to an archive database or data warehouse in real time.

Restrictions

Currently, subscribed message content is retained for 1 day by default. Expired data is cleared, so you need to consume the data promptly.

The region where the data is consumed should be the same as that of the subscribed instance.

Data subscription to MySQL, MariaDB, and TDSQL for MySQL does not support geometry data types.
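The retention restriction means a consumer that falls more than a day behind loses data. A minimal sketch of that check, assuming the default 1-day retention stated above; the function names are illustrative and not part of any DTS SDK:

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=1)  # default retention stated in the restrictions


def time_left(message_time, now):
    """Time remaining before a message written at message_time is cleared."""
    return message_time + RETENTION - now


def is_expired(message_time, now):
    """True once the retention window for the message has fully elapsed."""
    return time_left(message_time, now) <= timedelta(0)


written = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
print(time_left(written, datetime(2024, 1, 2, 6, 0, tzinfo=timezone.utc)))       # 6:00:00
print(is_expired(written, datetime(2024, 1, 2, 12, 0, 1, tzinfo=timezone.utc)))  # True
```

A monitoring job could compare the timestamp of the oldest unconsumed message against this window and alert well before it reaches zero.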

Performance description

In the subscription link, data parsed from the source database is first written to the Kafka instance built into DTS and then consumed by the client. The write and consumption performance is as follows:

| Scenario | Reference Performance Cap |
| --- | --- |
| Data write to built-in Kafka (MySQL/MariaDB/Percona/TDSQL-C for MySQL/TDSQL for MySQL single-shard) | 10 MB/s |
| Data write to built-in Kafka (TDSQL for MySQL multi-shard) | 10 MB/s * shard quantity |
| Data consumption from built-in Kafka | 20 MB/s (single-consumer group) |
| Data consumption from built-in Kafka | 50 MB/s (multi-consumer group) |

The above performance data is for reference only. Actual performance may be lower because of factors such as high load on the source database or network latency.
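The reference caps can be turned into a rough capacity estimate. The sketch below uses only the figures listed for this feature (10 MB/s per shard for writes, 20 MB/s and 50 MB/s for consumption); the function names and the backlog model are illustrative assumptions, and real throughput may be lower.

```python
import math

WRITE_CAP_PER_SHARD_MB_S = 10                      # reference write cap per shard
CONSUME_CAP_MB_S = {"single": 20, "multi": 50}     # reference consumption caps


def write_cap_mb_s(shards=1):
    """Reference write cap: 10 MB/s multiplied by the shard quantity."""
    if shards < 1:
        raise ValueError("shards must be at least 1")
    return WRITE_CAP_PER_SHARD_MB_S * shards


def drain_seconds(backlog_mb, consume_mb_s, incoming_mb_s):
    """Seconds needed to clear a backlog while new data keeps arriving.

    Returns math.inf when consumption cannot outpace the incoming rate.
    """
    net = consume_mb_s - incoming_mb_s
    if net <= 0:
        return math.inf
    return backlog_mb / net


print(write_cap_mb_s(4))                                          # 40
print(drain_seconds(3600, CONSUME_CAP_MB_S["single"], 10))        # 360.0
print(drain_seconds(3600, CONSUME_CAP_MB_S["single"], 40))        # inf
```

The last case shows why a multi-shard source writing near its cap can outrun a single consumer group at the reference rate: the backlog then grows until the retention window clears it.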

Supported subscription types

DTS allows you to subscribe to databases and tables. Specifically, the following three subscription types are supported:

Data update: Subscription to DML operations.

Structure update: Subscription to DDL operations.

Full: Subscription to the DML and DDL operations of all tables.
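The difference between the three types can be illustrated with a small filter. This is a conceptual sketch only: DTS applies the subscription type on the service side, and the type names, keyword lists, and functions here are hypothetical. It also ignores the scope of databases and tables selected for the subscription.

```python
# Leading SQL keywords used to tell DML from DDL (illustrative, not exhaustive).
DML_KEYWORDS = frozenset({"INSERT", "UPDATE", "DELETE"})
DDL_KEYWORDS = frozenset({"CREATE", "ALTER", "DROP", "TRUNCATE", "RENAME"})

# Operation classes delivered by each subscription type (hypothetical keys).
DELIVERED = {
    "data_update": {"DML"},
    "structure_update": {"DDL"},
    "full": {"DML", "DDL"},
}


def classify(statement):
    """Return "DML", "DDL", or None based on the statement's first keyword."""
    words = statement.split()
    if not words:
        return None
    keyword = words[0].upper()
    if keyword in DML_KEYWORDS:
        return "DML"
    if keyword in DDL_KEYWORDS:
        return "DDL"
    return None


def is_delivered(subscription_type, statement):
    """True if the subscription type covers the statement's operation class."""
    return classify(statement) in DELIVERED[subscription_type]


print(is_delivered("data_update", "UPDATE orders SET paid = 1"))    # True
print(is_delivered("data_update", "ALTER TABLE orders ADD note"))   # False
print(is_delivered("full", "ALTER TABLE orders ADD note"))          # True
```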

Consumable data formats

Subscribed data can be consumed in ProtoBuf, Avro, or JSON format. ProtoBuf and Avro are binary formats with higher consumption efficiency, while JSON is a lightweight text format that is easier to use.

Supported advanced features

| Feature | Description | Documentation |
| --- | --- | --- |
| SDKs for various programming languages | DTS uses the Kafka protocol and supports Kafka client SDKs for multiple programming languages. | - |
| Metric monitoring and default alarm policy | Data subscription metrics can be monitored. Default configuration is supported for data subscription event monitoring to automatically notify you of abnormal events. | |
| Multi-channel data consumption | DTS allows creating multiple data channels for a single database, which can be consumed concurrently through a consumer group. | - |
| Partitioned consumption | DTS supports partitioned storage of data in a single topic for concurrent consumption of data in multiple partitions, improving the consumption efficiency. | - |
| Custom routing policy | DTS supports routing data fields to Kafka partitions according to custom rules. | - |
| Consumption offset change | DTS supports modifying the consumption offset. | |
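Partitioned consumption and custom routing both come down to mapping each change record to a partition in a stable way. The sketch below shows one common approach, hashing the table name and primary key; it is an assumption for illustration and not the routing rule DTS itself applies.

```python
import zlib
from collections import defaultdict


def partition_for(table, primary_key, num_partitions):
    """Map a (table, primary key) pair to a partition index.

    CRC32 is deterministic across processes, so changes to the same row
    always land in the same partition and keep their relative order.
    """
    if num_partitions < 1:
        raise ValueError("num_partitions must be at least 1")
    key = f"{table}:{primary_key}".encode("utf-8")
    return zlib.crc32(key) % num_partitions


def group_by_partition(changes, num_partitions):
    """Group (table, primary_key) change records by target partition."""
    groups = defaultdict(list)
    for table, primary_key in changes:
        groups[partition_for(table, primary_key, num_partitions)].append(
            (table, primary_key)
        )
    return dict(groups)


changes = [("orders", 1), ("orders", 2), ("orders", 1), ("users", 7)]
for partition, records in sorted(group_by_partition(changes, 4).items()):
    print(partition, records)
```

Routing by row key preserves per-row ordering while letting several consumers in a group read different partitions concurrently; routing by table name alone would preserve per-table ordering at the cost of less even distribution.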
