Mastering OpenClaw | Tutorial on Integrating Custom Large Models with OpenClaw (Clawdbot)

By now, most of you are probably familiar with and using OpenClaw (Clawdbot).

Note for first-time users: If you haven’t deployed OpenClaw yet, start with Tencent Cloud OpenClaw. You can launch an OpenClaw instance in seconds with one click, then come back to this guide to explore more advanced use cases.

It comes with a visual management panel and supports fast integration with QQ, WeCom, Lark, DingTalk, Discord, WhatsApp, Telegram, and iMessage.

Not sure what OpenClaw is yet? This article covers the basics and helps you quickly set up your own instance >> OpenClaw One-Click Instant Deployment Guide

If you need to configure other third-party model providers (such as Qiniu or SiliconFlow), this article shows you how to integrate them.

Important

When selecting a model provider, make sure the region of your Lightweight Application Server is one of the regions the provider supports.

Connect to custom AI providers

In the Lightweight Application Server console, open Application Management, fill in the fields for your custom model, and click Save to complete the configuration:

SiliconFlow

Taking SiliconFlow as an example, using the “OpenAI” protocol and the DeepSeek-V3.2 model:

{
  "provider": "siliconflow",
  "base_url": "https://api.siliconflow.cn/v1",
  "api": "openai-completions",
  "api_key": "your-api-key-here",
  "model": {
    "id": "deepseek-ai/DeepSeek-V3.2",
    "name": "DeepSeek-V3.2"
  }
}
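To make the “OpenAI” protocol setting more concrete, here is a minimal Python sketch of how a config like the one above might translate into a Chat Completions request. The helper name `build_openai_request` is hypothetical, and it is an assumption (not confirmed by this article) that OpenClaw appends `/chat/completions` to `base_url`. The sketch only builds the request and sends nothing over the network.

```python
import json

# Config copied from the SiliconFlow example above.
config = {
    "provider": "siliconflow",
    "base_url": "https://api.siliconflow.cn/v1",
    "api": "openai-completions",
    "api_key": "your-api-key-here",
    "model": {"id": "deepseek-ai/DeepSeek-V3.2", "name": "DeepSeek-V3.2"},
}


def build_openai_request(cfg, user_text):
    """Hypothetical helper: return (url, headers, body) for an OpenAI-style chat call."""
    # Assumption: the chat endpoint lives directly under base_url.
    url = cfg["base_url"].rstrip("/") + "/chat/completions"
    headers = {
        "Authorization": f"Bearer {cfg['api_key']}",
        "Content-Type": "application/json",
    }
    body = {
        # "model.id" is what the provider sees; "model.name" is only a display label.
        "model": cfg["model"]["id"],
        "messages": [{"role": "user", "content": user_text}],
    }
    return url, headers, json.dumps(body)


url, headers, body = build_openai_request(config, "Hello")
print(url)  # https://api.siliconflow.cn/v1/chat/completions
```

If a provider returns a 404 error, a `base_url` that is missing (or doubling) the `/v1` suffix is a likely first thing to check.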

Kimi Code

⚠️ Warning: Using Kimi Code membership benefits outside the official channel (Kimi CLI) may be treated by Kimi as abuse or a violation of its terms, which can result in membership suspension or an account ban. See Kimi Code Usage Scope for details.

Using Kimi Code, taking the Kimi K2.5 model as an example:

{
  "provider": "kimicode",
  "base_url": "https://api.kimi.com/coding",
  "api": "anthropic-messages",
  "api_key": "your-api-key-here",
  "model": {
    "id": "kimi-k2.5",
    "name": "Kimi K2.5"
  }
}

Google Gemini

Using the Google Gemini API, taking the latest Gemini 3 Flash model as an example:

{
  "provider": "google",
  "base_url": "https://generativelanguage.googleapis.com/v1beta/openai",
  "api": "openai-completions",
  "api_key": "your-api-key-here",
  "model": {
    "id": "gemini-3-flash-preview",
    "name": "Gemini 3 Flash"
  }
}

OpenAI (GPT)

Official documentation: https://developers.openai.com/api/docs

Using the OpenAI official API, taking the latest GPT-5.2 model as an example:

{
  "provider": "openai",
  "base_url": "https://api.openai.com/v1",
  "api": "openai-completions",
  "api_key": "your-api-key-here",
  "model": {
    "id": "gpt-5.2",
    "name": "GPT-5.2"
  }
}

Anthropic Claude

Official documentation: https://platform.claude.com/docs/zh-CN/home

Using the Anthropic Claude API, taking the latest Claude Opus 4.6 model as an example:

{
  "provider": "anthropic",
  "base_url": "https://api.anthropic.com",
  "api": "anthropic-messages",
  "api_key": "your-api-key-here",
  "model": {
    "id": "claude-opus-4-6",
    "name": "Claude Opus 4.6"
  }
}
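The `anthropic-messages` protocol differs from the OpenAI one in its endpoint path, authentication header, and required body fields. The following Python sketch illustrates those differences for the config above. The helper name `build_anthropic_request` is hypothetical, the `max_tokens` default is an arbitrary illustrative value, and it is an assumption that OpenClaw appends `/v1/messages` to `base_url`. Nothing is sent over the network.

```python
import json

# Config copied from the Anthropic Claude example above.
config = {
    "provider": "anthropic",
    "base_url": "https://api.anthropic.com",
    "api": "anthropic-messages",
    "api_key": "your-api-key-here",
    "model": {"id": "claude-opus-4-6", "name": "Claude Opus 4.6"},
}


def build_anthropic_request(cfg, user_text, max_tokens=1024):
    """Hypothetical helper: return (url, headers, body) for an Anthropic Messages call."""
    # Assumption: the Messages endpoint is base_url + /v1/messages.
    url = cfg["base_url"].rstrip("/") + "/v1/messages"
    headers = {
        # The Messages API authenticates with x-api-key rather than a Bearer token.
        "x-api-key": cfg["api_key"],
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        "model": cfg["model"]["id"],
        "max_tokens": max_tokens,  # required by the Messages API
        "messages": [{"role": "user", "content": user_text}],
    }
    return url, headers, json.dumps(body)


url, headers, body = build_anthropic_request(config, "Hello")
print(url)  # https://api.anthropic.com/v1/messages
```

This is why the `base_url` in Anthropic-protocol configs has no `/v1` suffix, while the OpenAI-protocol examples include it.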

xAI Grok

Using the xAI Grok API (compatible with the OpenAI protocol), taking the latest Grok 4.1 model as an example:

{
  "provider": "xai",
  "base_url": "https://api.x.ai/v1",
  "api": "openai-completions",
  "api_key": "your-api-key-here",
  "model": {
    "id": "grok-4.1",
    "name": "Grok 4.1"
  }
}

OpenRouter

Using the OpenRouter API (compatible with the OpenAI protocol), taking the NVIDIA: Nemotron 3 Nano 30B A3B model (free for a limited time) as an example:

{
  "provider": "openrouter",
  "base_url": "https://openrouter.ai/api/v1",
  "api": "openai-completions",
  "api_key": "your-api-key-here",
  "model": {
    "id": "nvidia/nemotron-3-nano-30b-a3b:free",
    "name": "NVIDIA: Nemotron 3 Nano 30B A3B"
  }
}

General Configuration Template

If the examples above don’t cover the model you want, you can use the following general template to connect to any model compatible with the OpenAI or Anthropic protocol:

{
  "provider": "provider_name",
  "base_url": "baseurl",
  "api": "API Protocol",
  "api_key": "your-api-key-here",
  "model": {
    "id": "model_id",
    "name": "model_name"
  }
}
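Before pasting a filled-in template into the console, it can help to sanity-check it locally. The sketch below is a hypothetical validator, not part of OpenClaw: the function name `validate_config` is made up for illustration, and the set of accepted `api` values contains only the two that appear in this article (OpenClaw may accept others).

```python
from urllib.parse import urlparse

# Only the two protocol values used in this article; others may exist.
KNOWN_APIS = {"openai-completions", "anthropic-messages"}


def validate_config(cfg):
    """Return a list of problems found; an empty list means the config looks plausible."""
    problems = []
    for key in ("provider", "base_url", "api", "api_key", "model"):
        if not cfg.get(key):
            problems.append(f"missing field: {key}")

    api = cfg.get("api")
    if api and api not in KNOWN_APIS:
        problems.append(f"unrecognized api value: {api!r}")

    base_url = str(cfg.get("base_url") or "")
    if base_url:
        parsed = urlparse(base_url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append("base_url is not an http(s) URL")

    model = cfg.get("model")
    if isinstance(model, dict):
        for key in ("id", "name"):
            if not model.get(key):
                problems.append(f"missing field: model.{key}")
    elif model:
        problems.append("model must be an object with id and name")
    return problems


# The unfilled template: its placeholders for base_url and api are flagged.
template = {
    "provider": "provider_name",
    "base_url": "baseurl",
    "api": "API Protocol",
    "api_key": "your-api-key-here",
    "model": {"id": "model_id", "name": "model_name"},
}

# A filled-in config taken from the OpenRouter example.
filled = {
    "provider": "openrouter",
    "base_url": "https://openrouter.ai/api/v1",
    "api": "openai-completions",
    "api_key": "your-api-key-here",
    "model": {
        "id": "nvidia/nemotron-3-nano-30b-a3b:free",
        "name": "NVIDIA: Nemotron 3 Nano 30B A3B",
    },
}

template_problems = validate_config(template)
filled_problems = validate_config(filled)
print(template_problems)
print(filled_problems)
```

A check like this catches only structural mistakes. Whether the key is valid and the model ID exists can only be confirmed by the provider.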

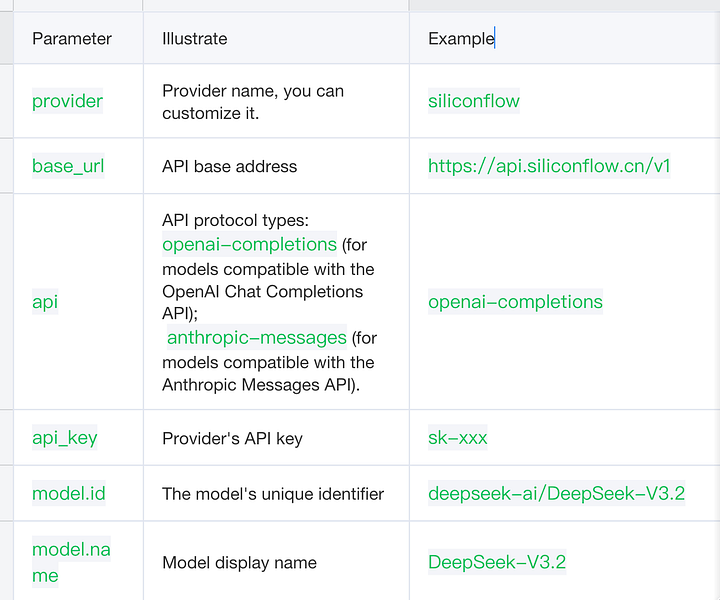

Parameter Description

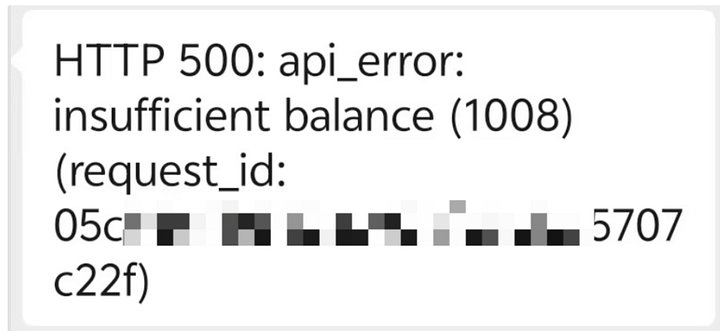

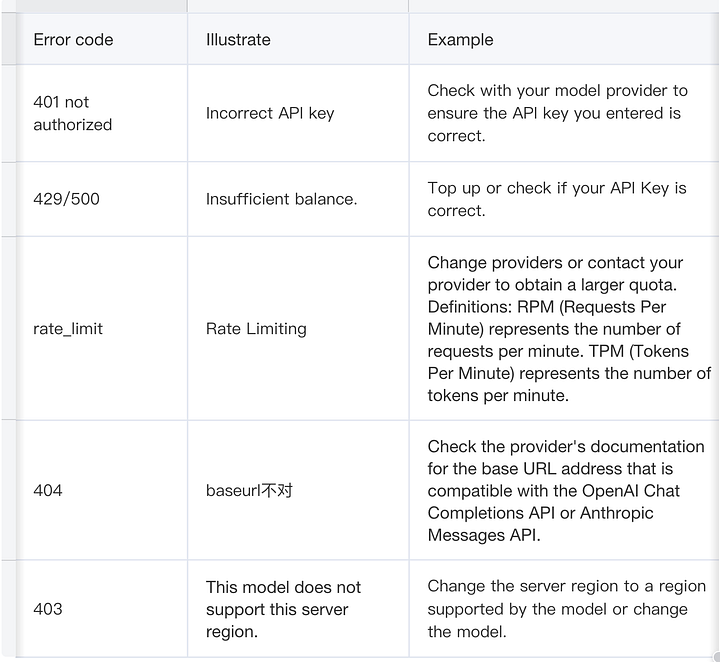

Frequently Asked Questions (Error Codes)

For most error codes, you can long-press the error message and translate it to understand the cause.

Common Error Codes

Error codes may vary slightly depending on the provider and are for reference only.

Slow Model Response

If your Lightweight Application Server is located outside mainland China and you are using a network or model provider based in mainland China, cross-border network conditions may cause high latency.

If you choose a deep-thinking model, a very large context may cause the model to take too long to respond. We recommend choosing a non-thinking or fast-thinking model as an alternative.

Rapid Token Consumption

To keep tasks continuous and accurate, OpenClaw sends a significant amount of context with each model call, which may result in high token consumption. We recommend monitoring token usage and billing while you use it.
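As one way to keep an eye on consumption, the sketch below tallies the `usage` object that providers return with each response and flags when a self-imposed budget is exceeded. The class name `TokenBudget` and the budget figure are hypothetical. The field names follow the OpenAI-style (`prompt_tokens`, `completion_tokens`, `total_tokens`) and Anthropic-style (`input_tokens`, `output_tokens`) usage objects, and how you obtain those objects from OpenClaw is not covered by this article.

```python
def total_tokens(usage):
    """Return the token count for one response's usage object."""
    # OpenAI-style responses report a ready-made total.
    if "total_tokens" in usage:
        return usage["total_tokens"]
    # Anthropic-style responses report input and output separately.
    return usage.get("input_tokens", 0) + usage.get("output_tokens", 0)


class TokenBudget:
    """Hypothetical running tally with a simple over-budget check."""

    def __init__(self, limit):
        self.limit = limit
        self.used = 0

    def record(self, usage):
        """Add one response's usage; return True once the budget is exceeded."""
        self.used += total_tokens(usage)
        return self.used > self.limit


budget = TokenBudget(limit=10_000)  # illustrative budget, not a recommendation

first = budget.record(
    {"prompt_tokens": 6000, "completion_tokens": 500, "total_tokens": 6500}
)
second = budget.record({"input_tokens": 3000, "output_tokens": 800})

print(first, second, budget.used)  # False True 10300
```

Note how the prompt side dominates in both sample calls; with agent-style tools that resend context on every turn, input tokens are often the main cost driver.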

Welcome to join the discussion!

We have set up a Discord server, and everyone is welcome to join and explore advanced ways to use OpenClaw (Clawdbot) together!

🚀 Developer Community & Support

1️⃣ OpenClaw Developer Community

Unlock advanced tips on Discord

Click to join the community

Note: After joining, you can get the latest plugin templates and deployment playbooks

2️⃣ Dedicated Support

Join WhatsApp / WeCom for dedicated technical support

| Channel | Scan / Click to join |
|---|---|
| WhatsApp Channel | |
| WeCom (Enterprise WeChat) | |

Learn more on the official page: Tencent Cloud OpenClaw