Getting Started with Hermes Agent: Deploying on Tencent Cloud Lighthouse

Overview

Welcome to this guide to deploying Hermes Agent on Tencent Cloud Lighthouse!

This article is included in the column: Mastering Lighthouse


More languages:

- Chinese: 玩转Hermes Agent|使用Lighthouse快速部署云上Hermes Agent (https://www.tencentcloud.com/techpedia/143917)
- English: Getting Started with Hermes Agent: Deploying on Tencent Cloud Lighthouse (https://www.tencentcloud.com/techpedia/143916)
- Korean: 클라우드에서 Hermes 원클릭 배포 가이드 (https://www.tencentcloud.com/techpedia/143922)
- Japanese: クラウドでHermesを簡単デプロイ:ワンクリック設定ガイド (https://www.tencentcloud.com/techpedia/143920)
- Indonesian: Panduan Deploy Hermes di Cloud dengan Sekali Klik (https://www.tencentcloud.com/techpedia/143921)
- Spanish: Guía de Despliegue de Hermes en la Nube con Un Solo Clic (https://www.tencentcloud.com/techpedia/143923)

Introduction

In April 2026, Nous Research officially released the open-source AI agent project Hermes Agent, which quickly drew wide attention on GitHub and in the AI community. Unlike IDE-bound coding assistants or chatbots built around a single API, Hermes Agent is a true autonomous agent: it runs on your own server, keeps persistent memory, and grows more capable over time. It also has a complete self-learning loop. It creates skills on its own, refines them through use, and recalls memories across sessions, so it genuinely becomes "smarter the more you use it."

Hermes Agent is closely connected to the earlier, widely popular OpenClaw project. It even ships with a built-in `hermes claw migrate` command that migrates OpenClaw settings, memories, skills, and API keys in one step. Hermes Agent represents a comprehensive evolution in the AI agent space.

Where Should Hermes Agent Be Installed?

Like OpenClaw, Hermes Agent has full system access (terminal execution, file read/write, browser automation, etc.), so the official recommendation is to deploy it in an environment isolated from your main personal computer to keep your data secure.

Hermes Agent supports Linux, macOS, WSL2, and Android (Termux), with Linux being the most recommended deployment environment. Deploying Hermes Agent on a cloud server isolates it from your local computer and keeps it online 24/7, so you can interact with it anytime, anywhere through chat applications such as Telegram and Discord.

Note: Hermes Agent currently does not support native Windows environments. Windows users need to install WSL2 first and run it there.

Why Choose Tencent Cloud Lighthouse?

Tencent Cloud Lighthouse is a cloud server product designed for lightweight application scenarios. It requires no knowledge of complex cloud computing concepts and offers cost-effective server packages.

Compared to running on a local computer, deploying Hermes Agent on a Lighthouse server offers the following advantages:

- Quick Start: Deployment takes just a few minutes

- Low Cost: You can get started for just tens of dollars

- 24/7 Online: Built for round-the-clock operation, so you can reach Hermes Agent through chat apps at any time

- Secure Isolation: The cloud environment is strongly isolated from your local computer, protecting your personal data

Installing Hermes Agent on Tencent Cloud Lighthouse

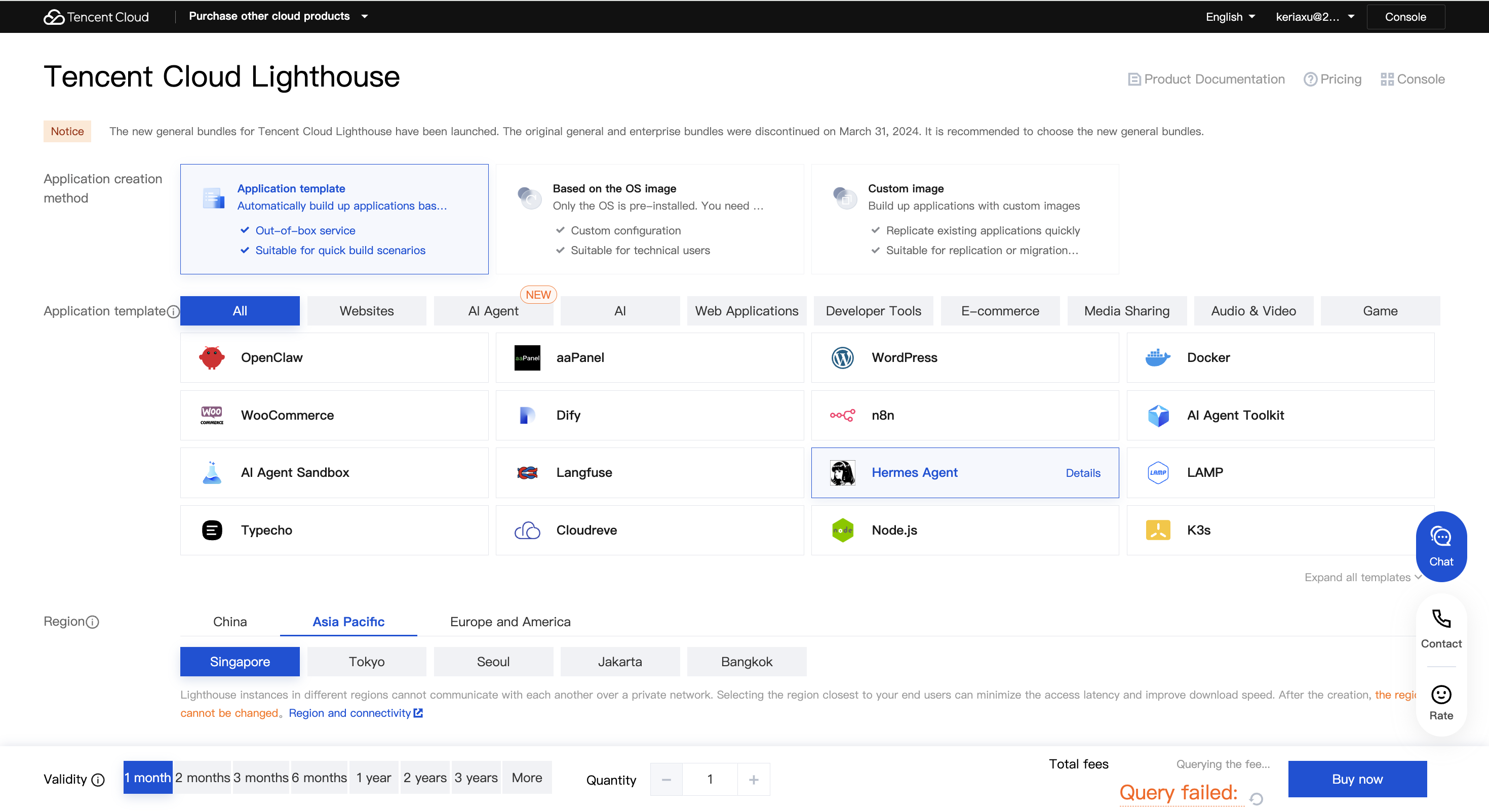

Method 1: Purchase a New Lighthouse Server

Go to the Tencent Cloud Lighthouse product purchase page to purchase a server. Recommended configuration:

- Image: Hermes Agent

- Region: Choose based on your use case. To connect to platforms outside the Chinese mainland, such as Discord, you would typically choose a region outside the mainland (such as Hong Kong, China); if you need to call models from mainland Chinese providers (such as Kimi, MiniMax, Zhipu GLM), a Chinese mainland region is recommended.

- Package Configuration: 2 CPU cores and 4 GB of memory or higher is recommended; the minimum supported configuration is 2 cores and 2 GB of memory (suitable for a simple trial).

Click Buy Now, follow the on-page instructions to complete payment, and wait about 30 seconds for the server to be created.

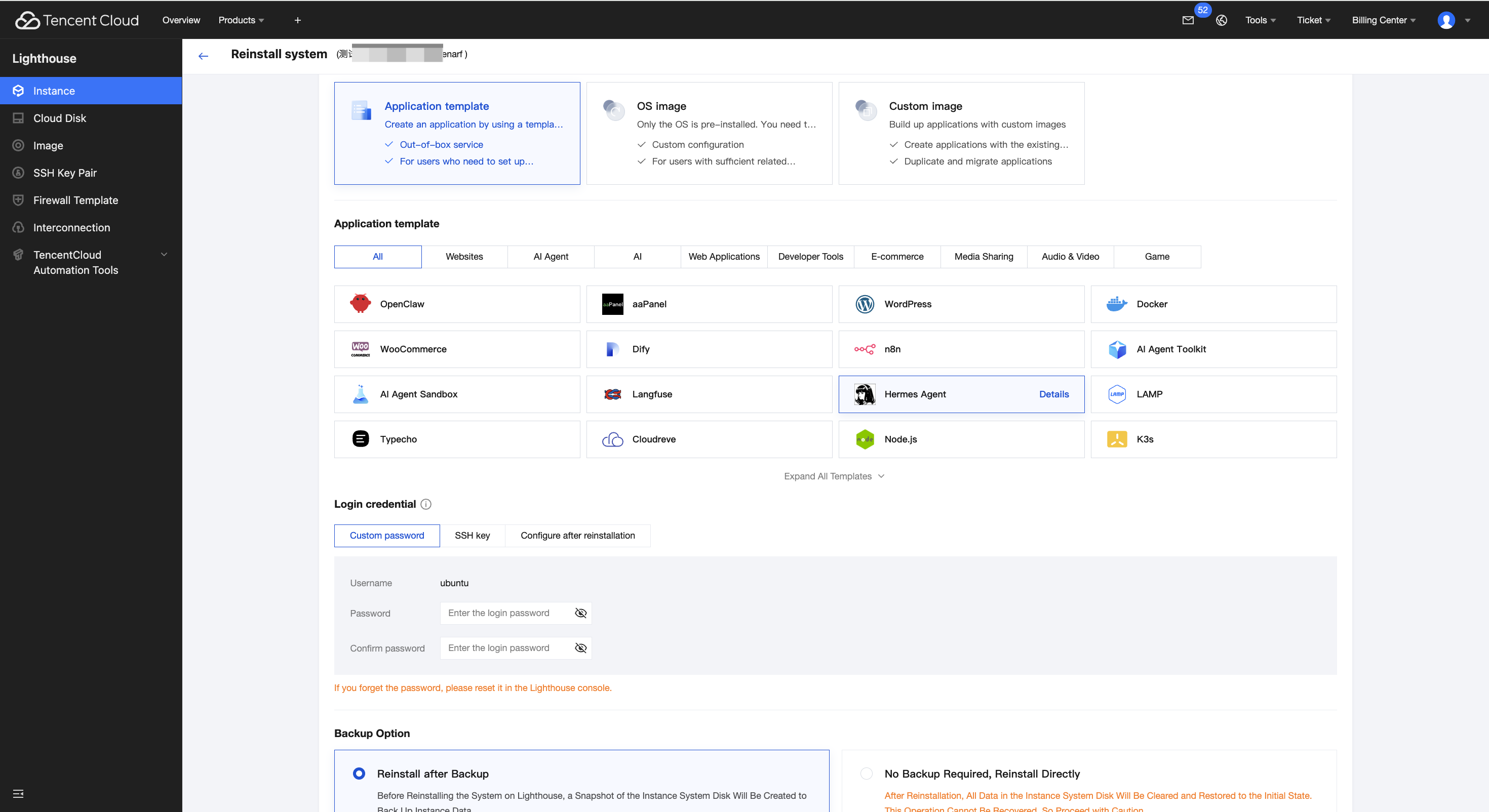

Method 2: Reinstall an Existing Lighthouse Server

Note: Reinstalling the system will erase all data on the server. Please proceed with caution!

If you have an existing idle Lighthouse instance, you can select the Hermes Agent image through "Reinstall System," then configure Hermes Agent following the subsequent steps.
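If you plan to reuse an existing instance, you may want to confirm that it meets the package sizes above before reinstalling. The following is a minimal bash sketch, not an official tool: `classify_specs` is a hypothetical helper, and the 10% memory margin is an assumption (the kernel reserves some RAM, so a "4 GB" instance typically reports a little less than 4096 MB in /proc/meminfo).

```shell
#!/usr/bin/env bash
# Hypothetical pre-check against the package sizes recommended in this guide:
#   recommended: 2 cores / 4 GB    minimum: 2 cores / 2 GB
# ASSUMPTION: allow a 10% margin, because the kernel reserves part of the RAM
# and /proc/meminfo reports slightly less than the nominal package size.
classify_specs() {
  local cores="$1" mem_mb="$2"
  if (( cores >= 2 && mem_mb >= 4096 * 9 / 10 )); then
    echo "recommended"
  elif (( cores >= 2 && mem_mb >= 2048 * 9 / 10 )); then
    echo "minimum (simple trial)"
  else
    echo "below minimum"
  fi
}

# On a Linux server, read the real CPU count and total memory (in MB)
if [[ -r /proc/meminfo ]] && command -v nproc >/dev/null; then
  mem_mb=$(awk '/^MemTotal:/ { print int($2 / 1024) }' /proc/meminfo)
  classify_specs "$(nproc)" "$mem_mb"
fi
```

The thresholds are expressed in MB and compared with integer arithmetic, so the check works in plain bash without `bc` or other extra tools.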

Hermes Agent Model Configuration Guide

After installation, you need to configure the model (the AI "brain") for Hermes Agent. Hermes Agent does not include an AI model itself; it must connect to an external large language model (LLM) to power its intelligence. This tutorial uses MiniMax China as the example.

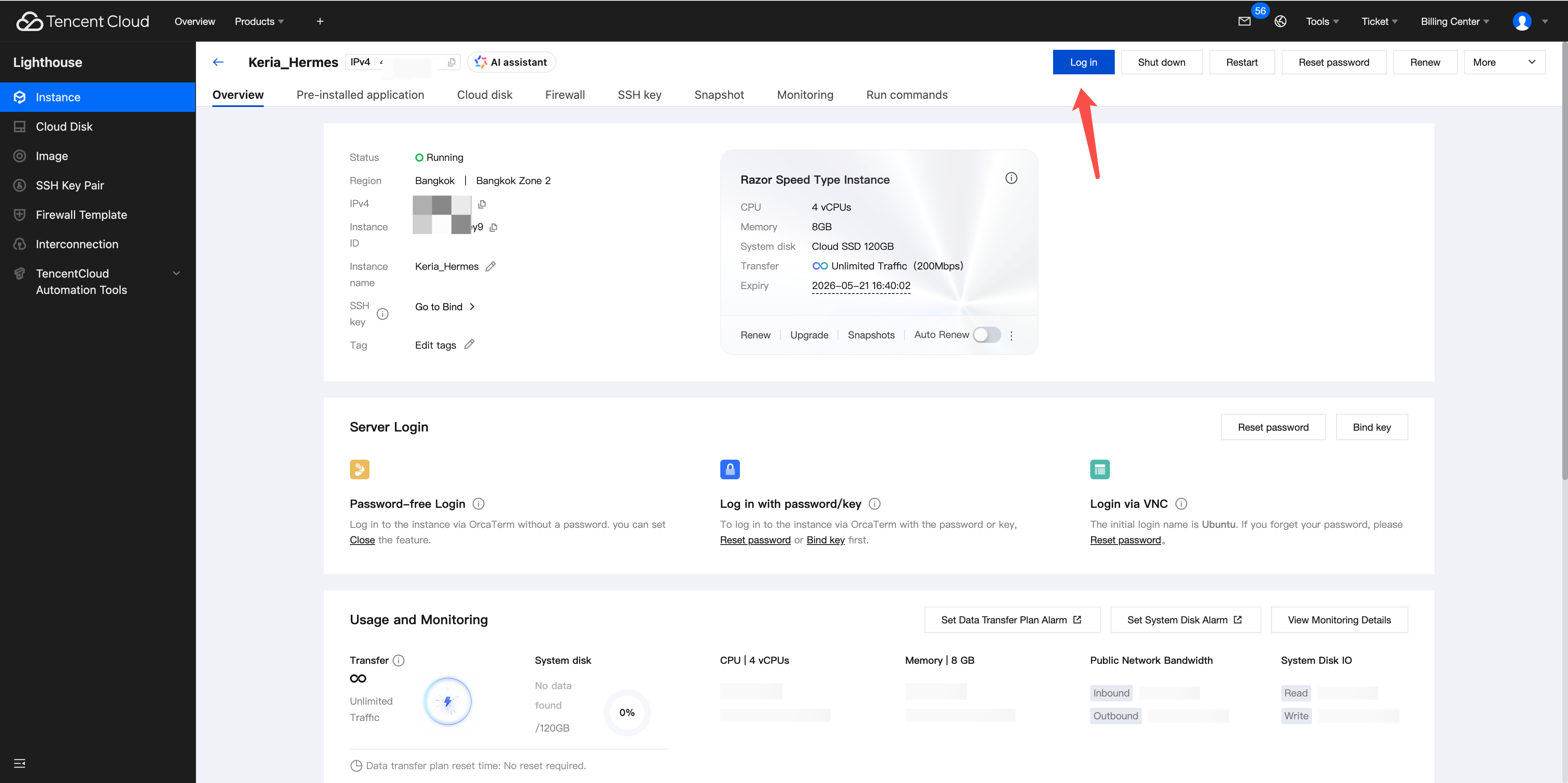

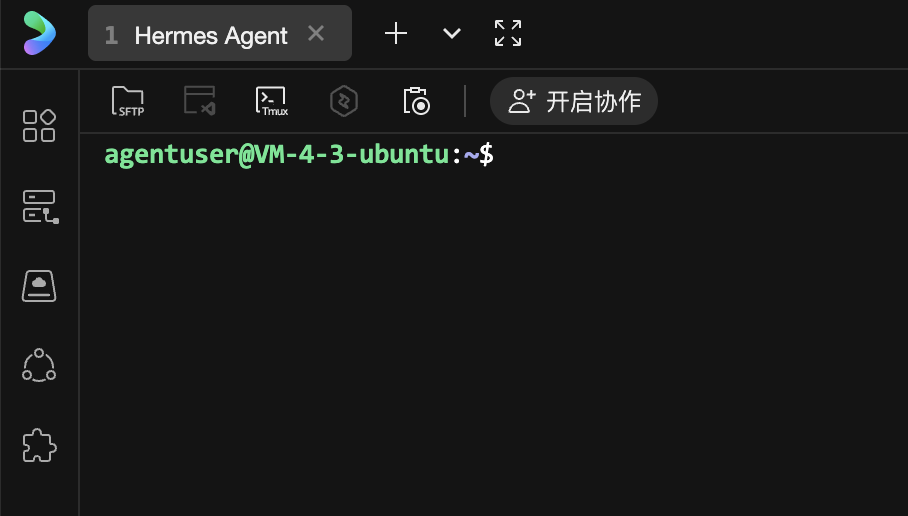

Step 1: Log in to the Server

Open the Tencent Cloud Console and find the Lighthouse instance with Hermes Agent installed.

Click the Login button on the instance card, and the browser will automatically redirect to Tencent Cloud's web terminal tool OrcaTerm.

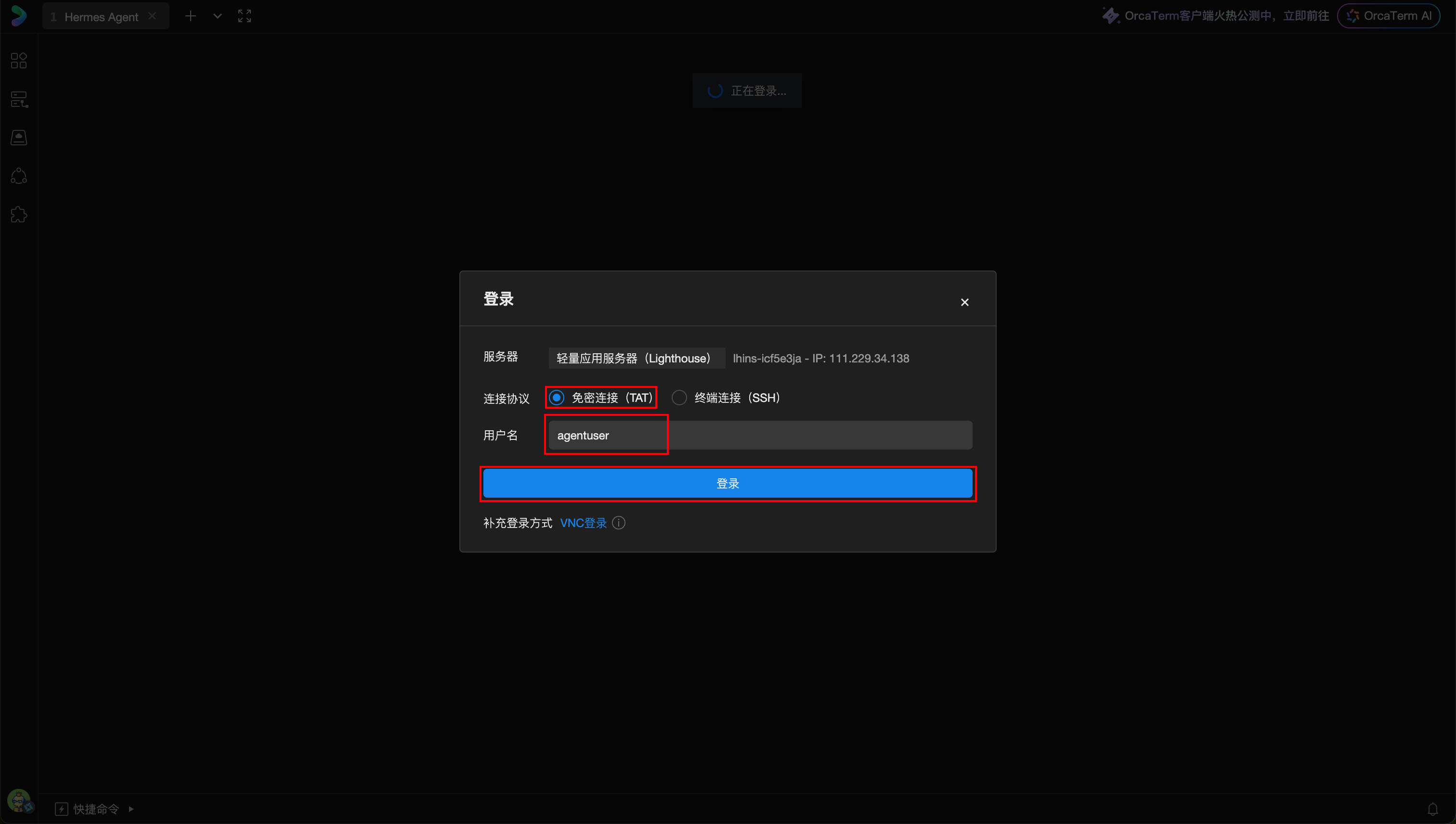

Configure the following in the popup window:

- Login Method: Select Password-free Login

- Username: Change to agentuser (Hermes Agent is installed under this user)

Note: If your Hermes Agent instance was created before April 15, 2026, switch the username to lighthouse instead. You can also switch to the lighthouse user by running `su lighthouse` after logging in.

Confirm the information and click the Login button. After a successful login, you will enter the server terminal interface.

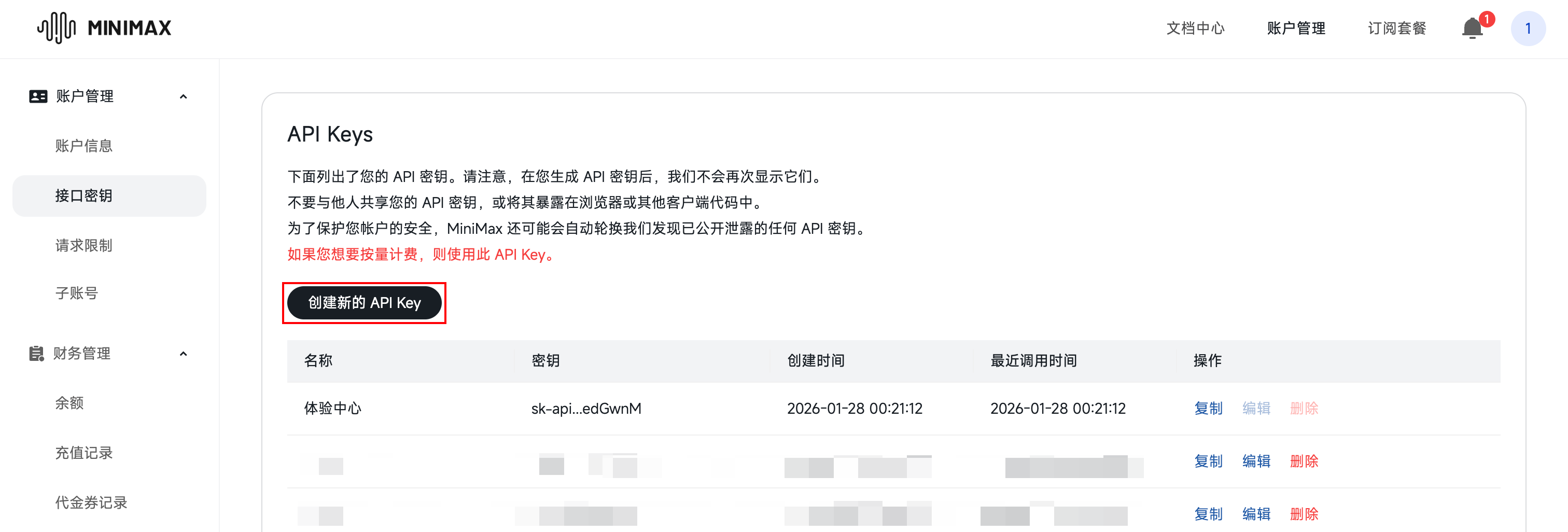

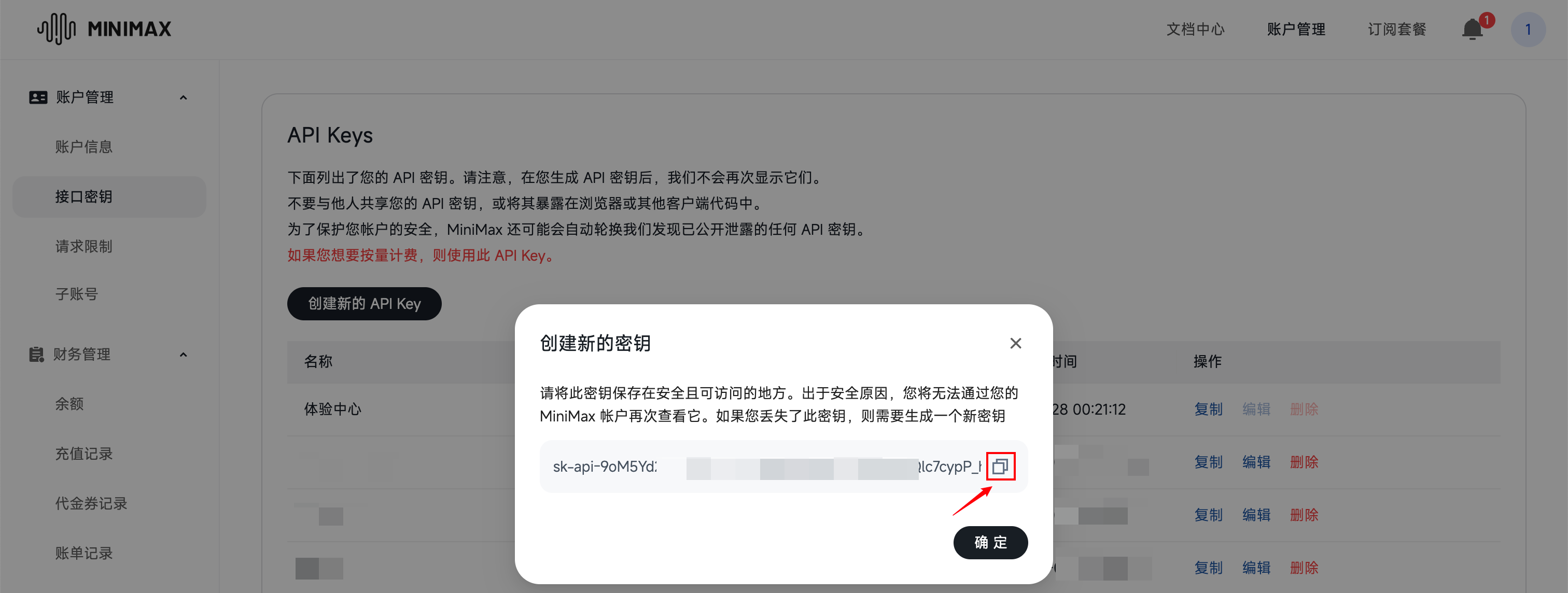

Step 2: Obtain MiniMax API Key

Before configuring the model, you need to obtain an API key from the MiniMax platform.

- Open MiniMax Open Platform - API Keys Page

- Register or log in to your MiniMax account

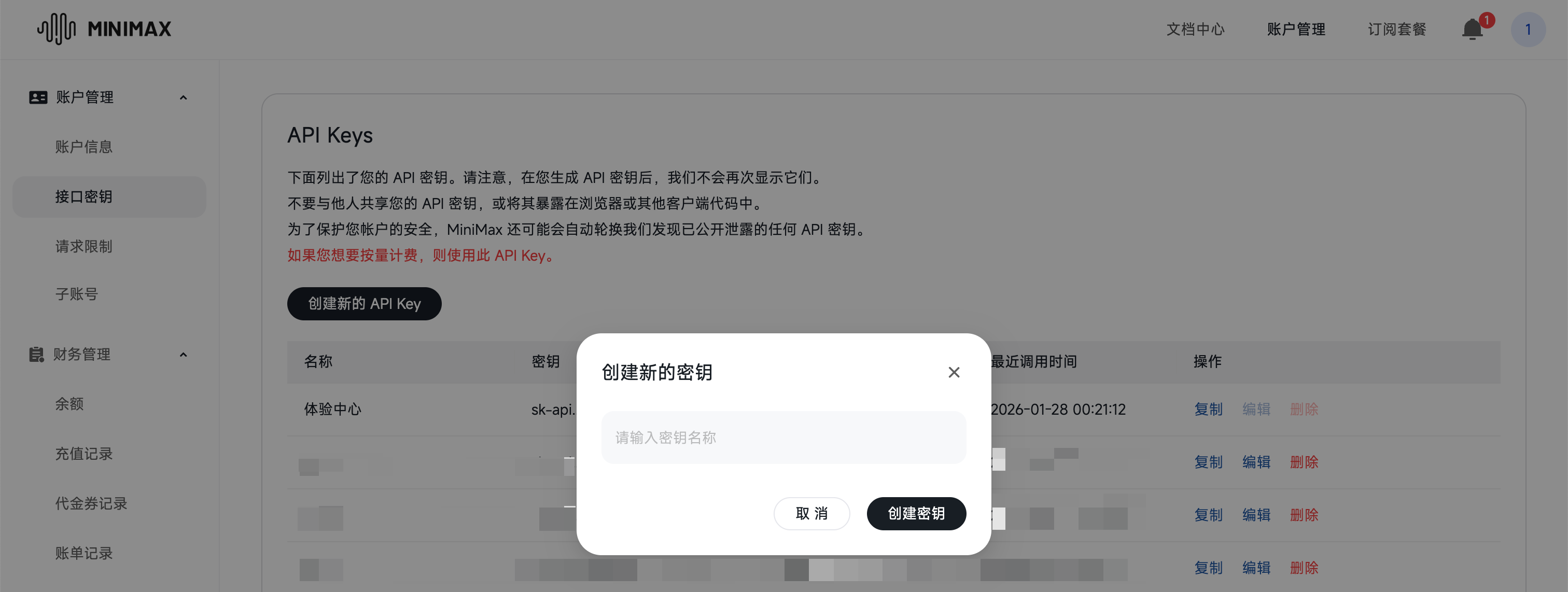

- Click Create API Key, and enter a key name in the popup

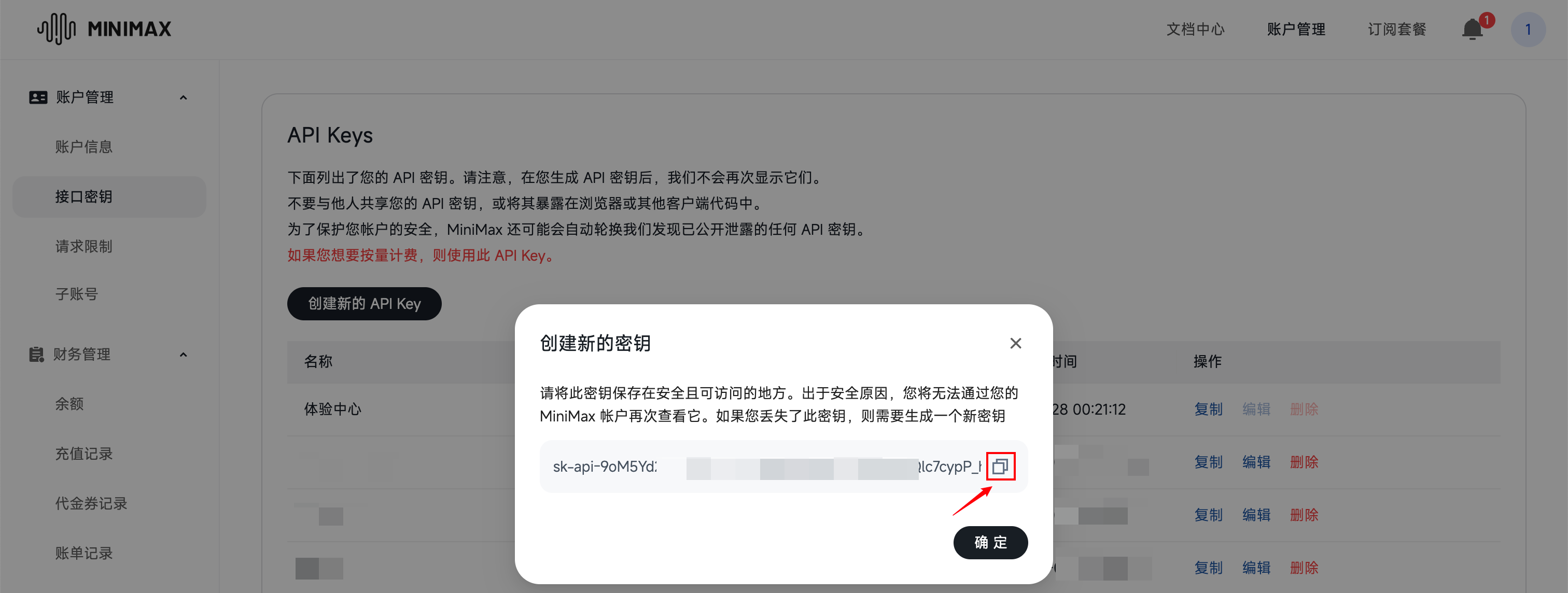

The system generates a key string starting with sk-api-.

Important: The complete key cannot be viewed again after the page is closed. Please copy and save it immediately.

Important: Make sure your MiniMax account balance is above zero. If the balance is zero, Hermes Agent cannot call the model even when the API key is configured correctly. You can check and top up your balance on the MiniMax Billing Center - Balance Page.

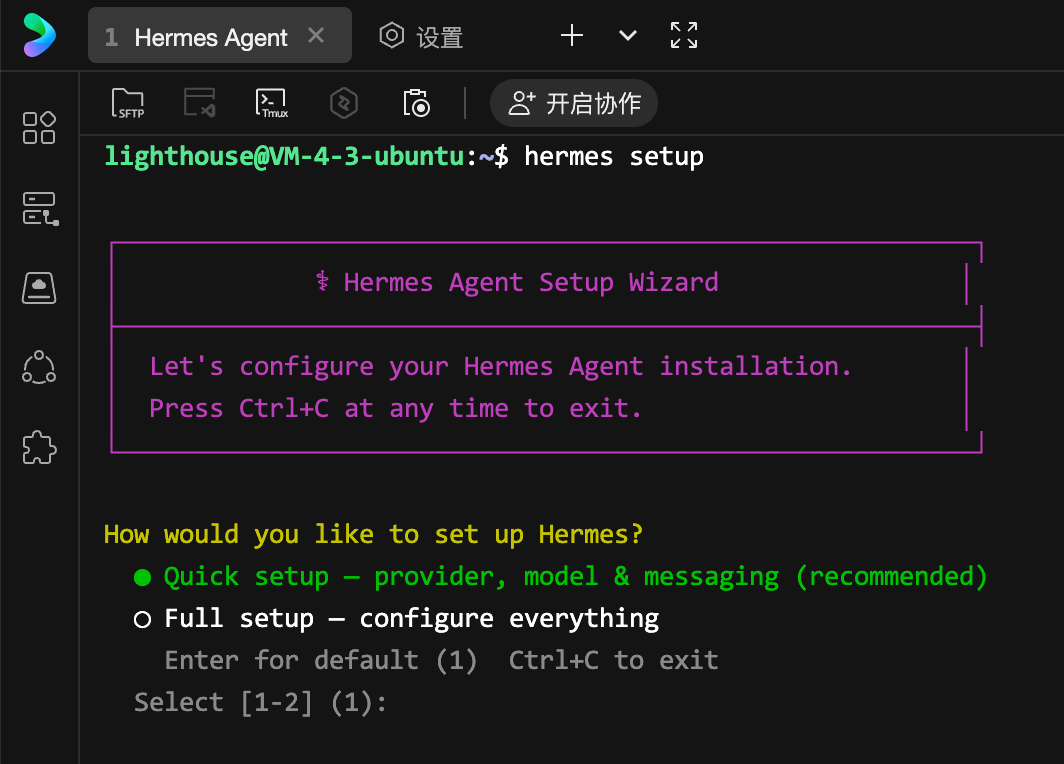

Step 3: Run hermes setup to Configure the Model

Return to the server terminal and execute the following command to start the interactive configuration wizard:

hermes setup

After you run the command, the terminal displays the configuration wizard:

Use the up and down arrow keys to select Quick setup — provider, model & messaging (recommended), and press Enter to confirm.

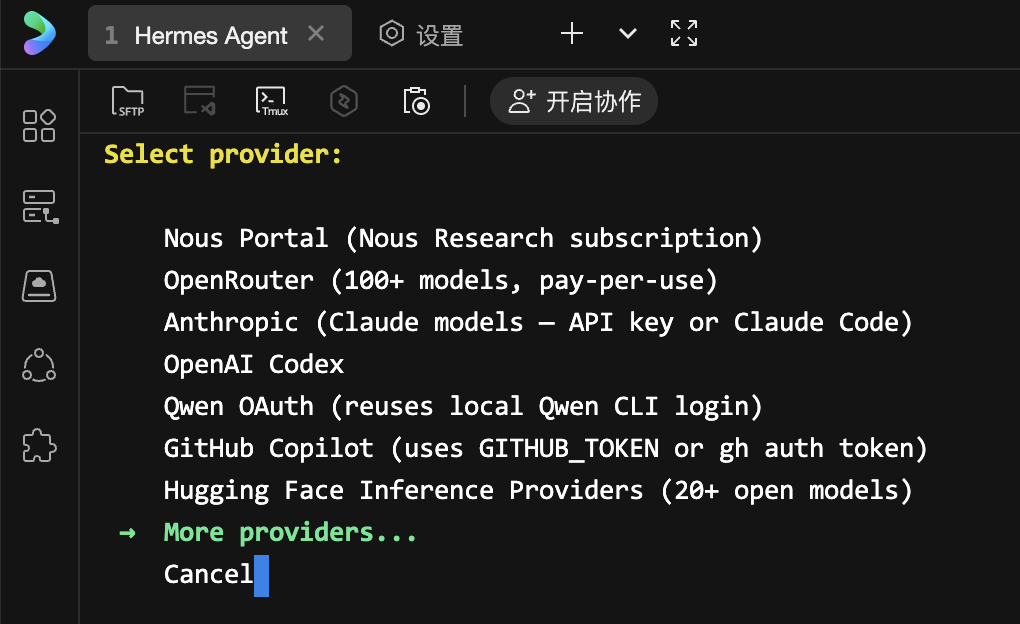

3.1 Select Model Provider

The wizard displays a list of supported model service providers. Use the up and down arrow keys to scroll and select More providers..., then press Enter.

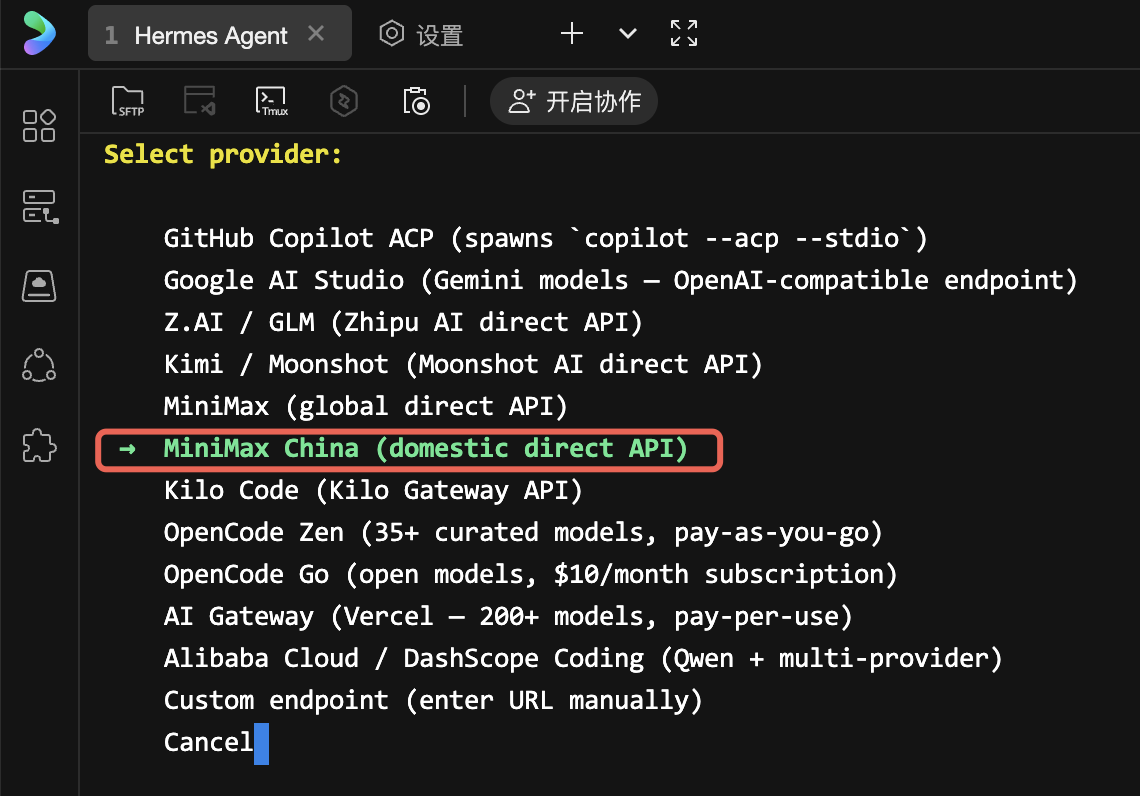

Find and select MiniMax (China), and press Enter to confirm.

Note: MiniMax and MiniMax (China) are two different options. MiniMax (China) corresponds to the MiniMax China site. If you are in mainland China and obtained your API key from the MiniMax China site, select this option.

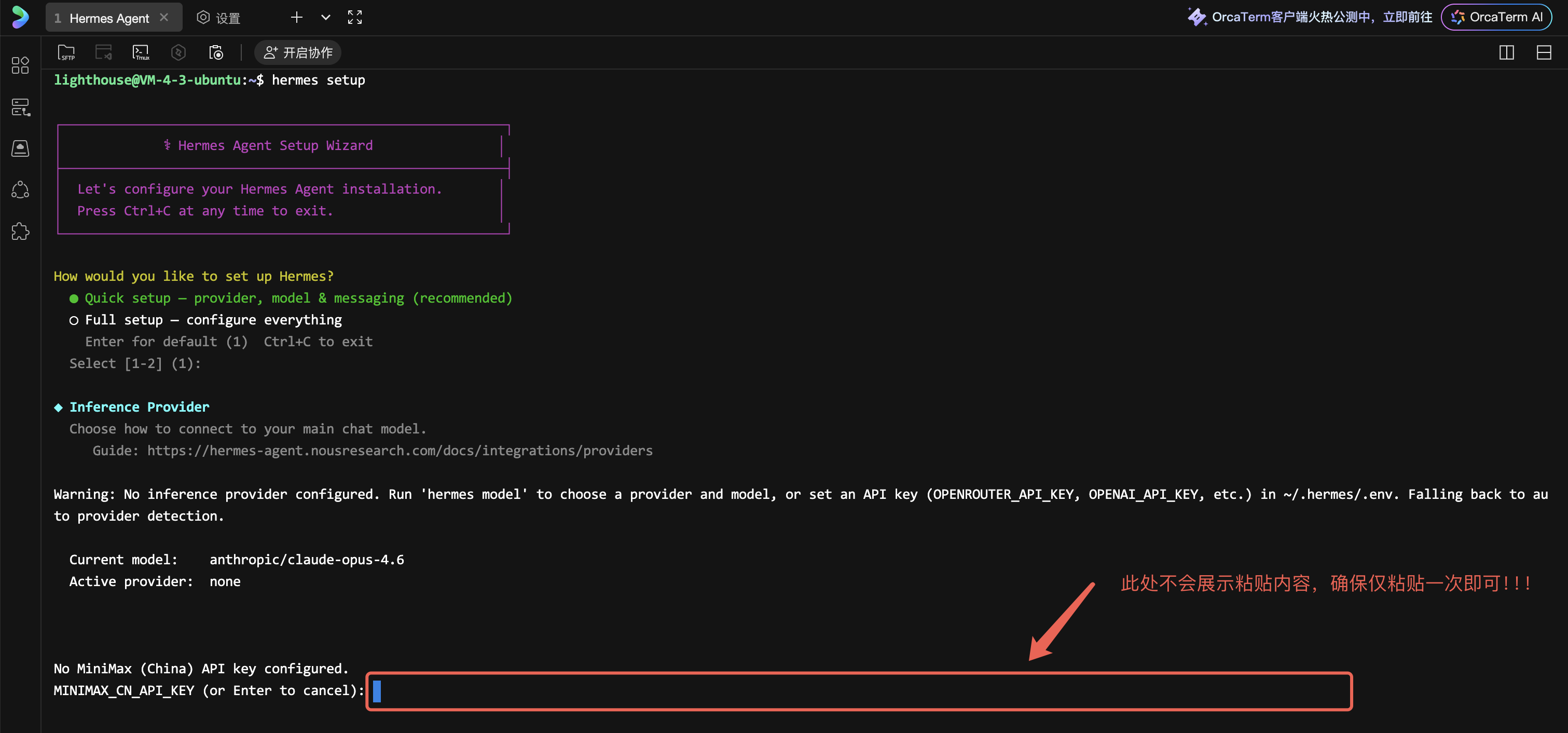

3.2 Enter API Key

After selecting the provider, the wizard prompts you to enter the API key:

MiniMax CN API key:

Paste the API key you copied in Step 2 (to paste, right-click or press Ctrl+Shift+V), then press Enter to confirm.

Note: For security, no characters (including asterisks) will be displayed on the screen when entering the key. Simply paste and press Enter.

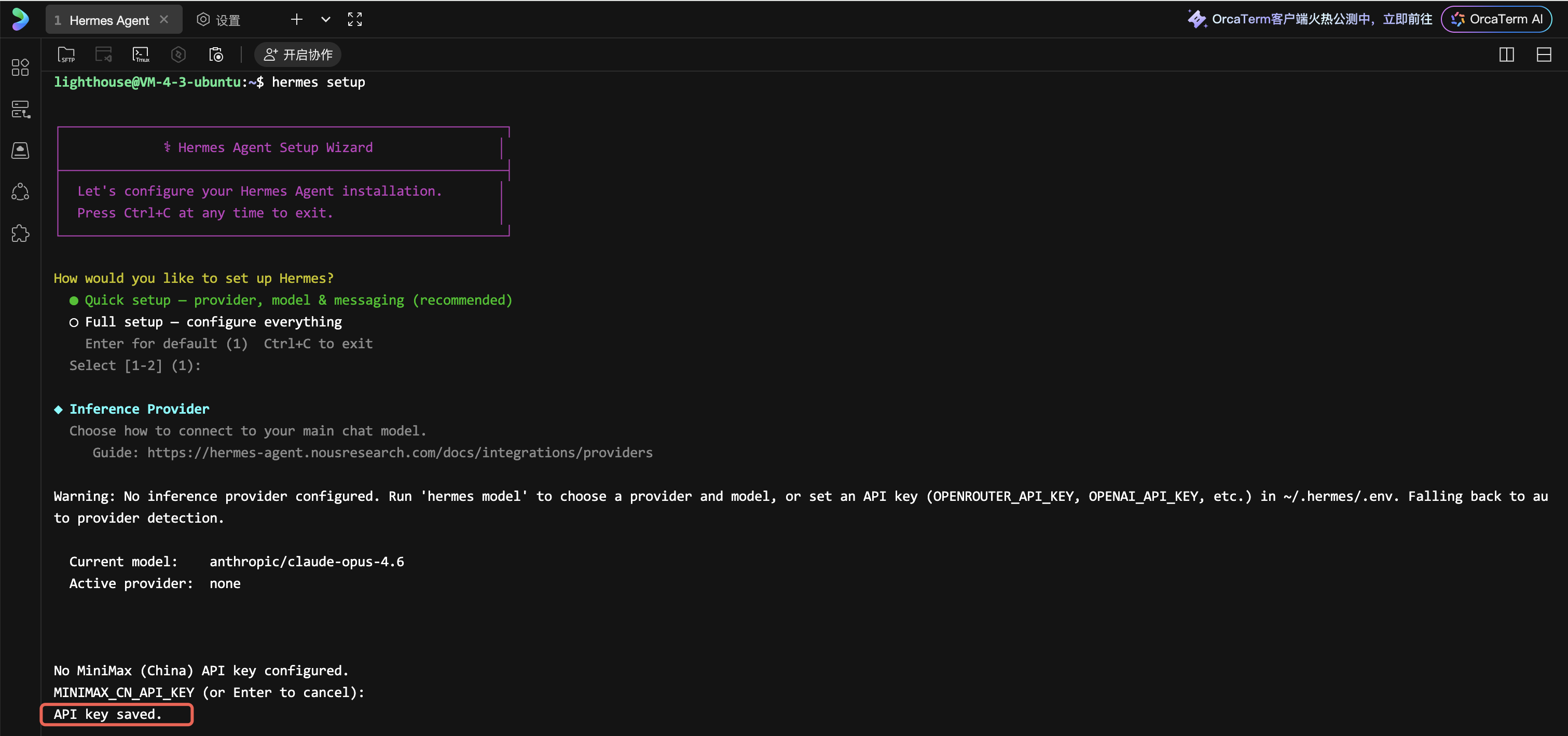

When the key is accepted, the following confirmation message is displayed:

API Key Saved.

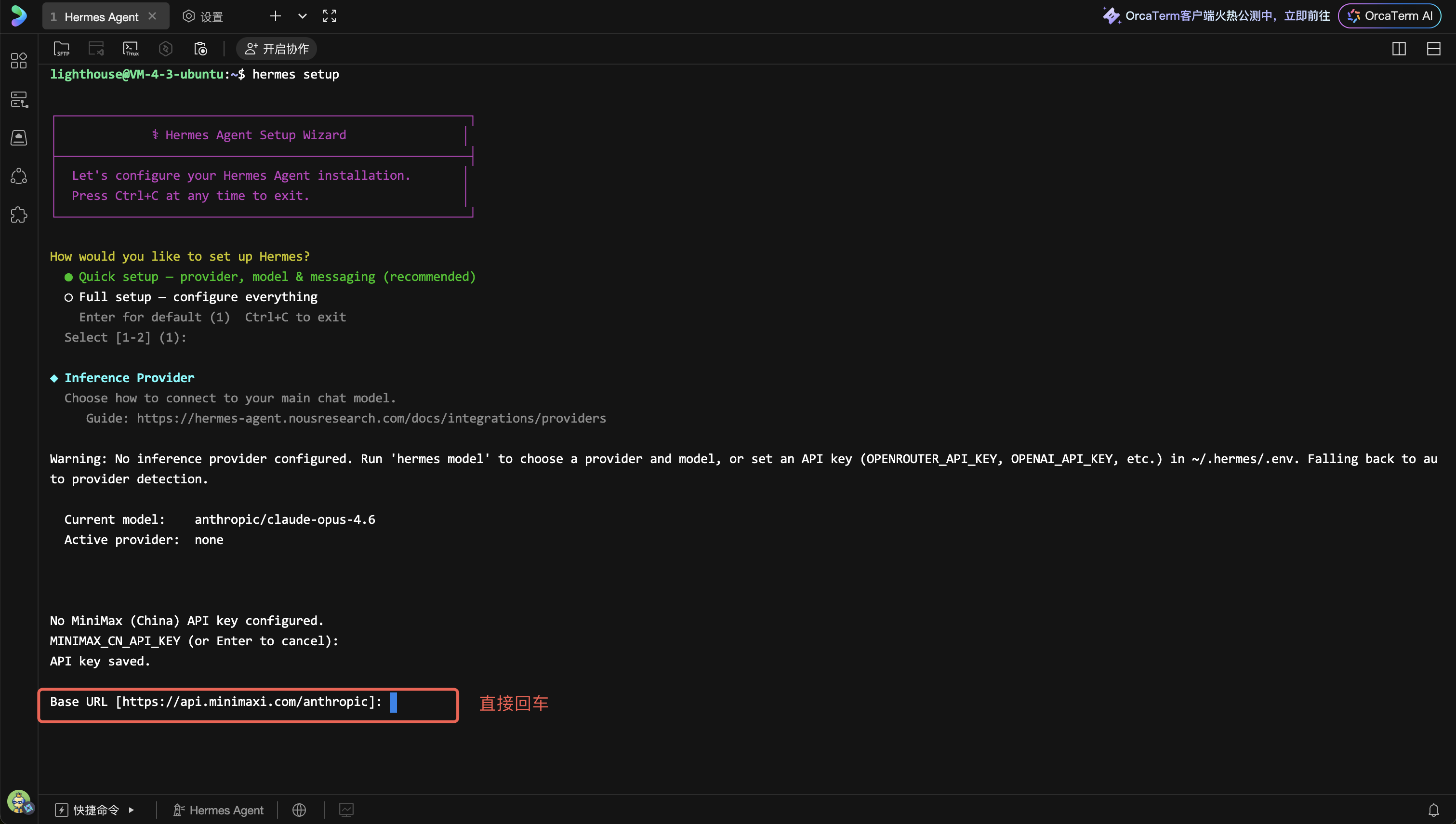

You are then prompted for the Base URL. Simply press Enter to confirm:

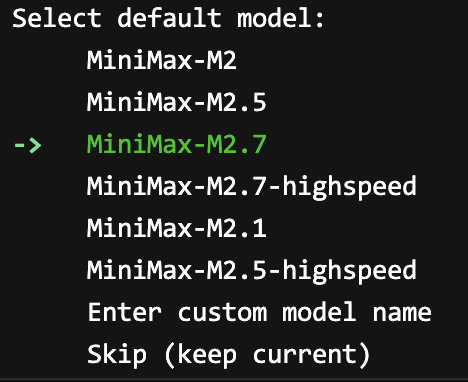

3.3 Select Model

After the API key is saved, the wizard displays the list of models supported by MiniMax China:

    -> MiniMax-M2
       MiniMax-M2.5
       MiniMax-M2.7
       MiniMax-M2.7-highspeed
       MiniMax-M2.1
       MiniMax-M2.5-highspeed
       Enter custom model name
       Skip (keep current)

Use the up and down arrow keys to select a model. MiniMax-M2.7 is recommended (the latest and most capable version). Press Enter to confirm.

Upon confirmation, the following is displayed:

✓ Default model set to: MiniMax-M2.7

3.4 Skip Messaging Platform Configuration

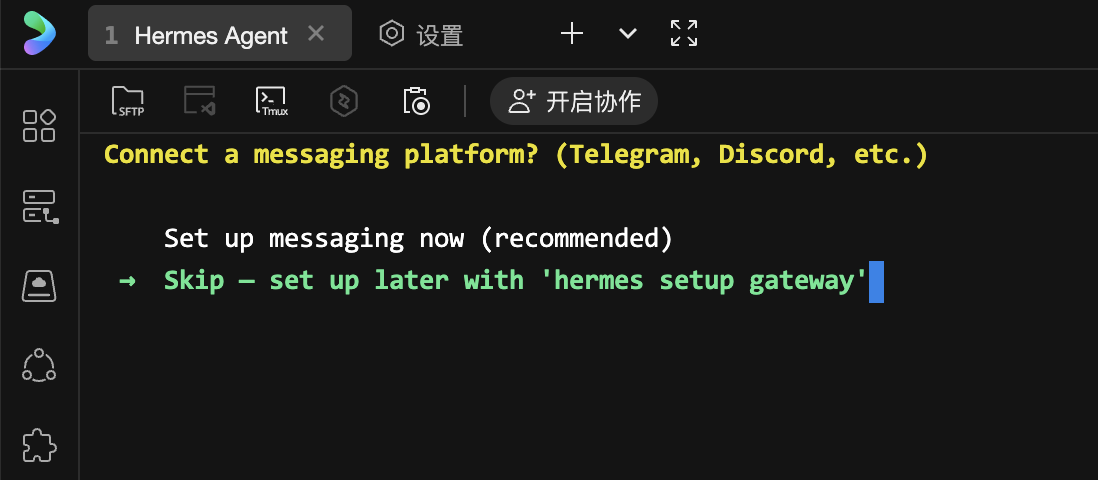

After model configuration is complete, the wizard asks if you want to connect a messaging platform:

Connect a messaging platform? (Telegram, Discord, etc.)

● Set up messaging now (recommended)

○ Skip — set up later with 'hermes setup gateway'

Select Skip — set up later with 'hermes setup gateway', and press Enter to skip.

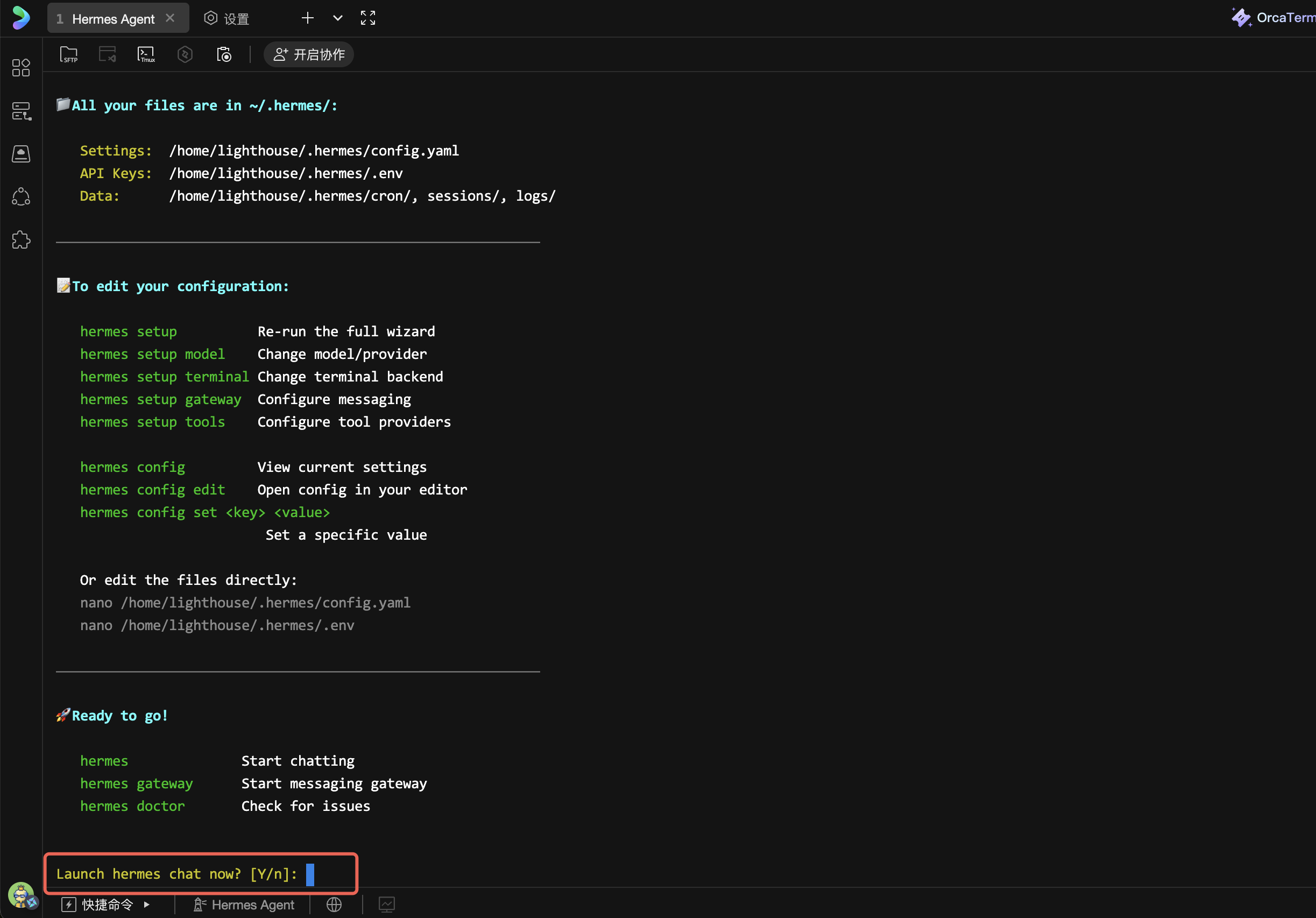

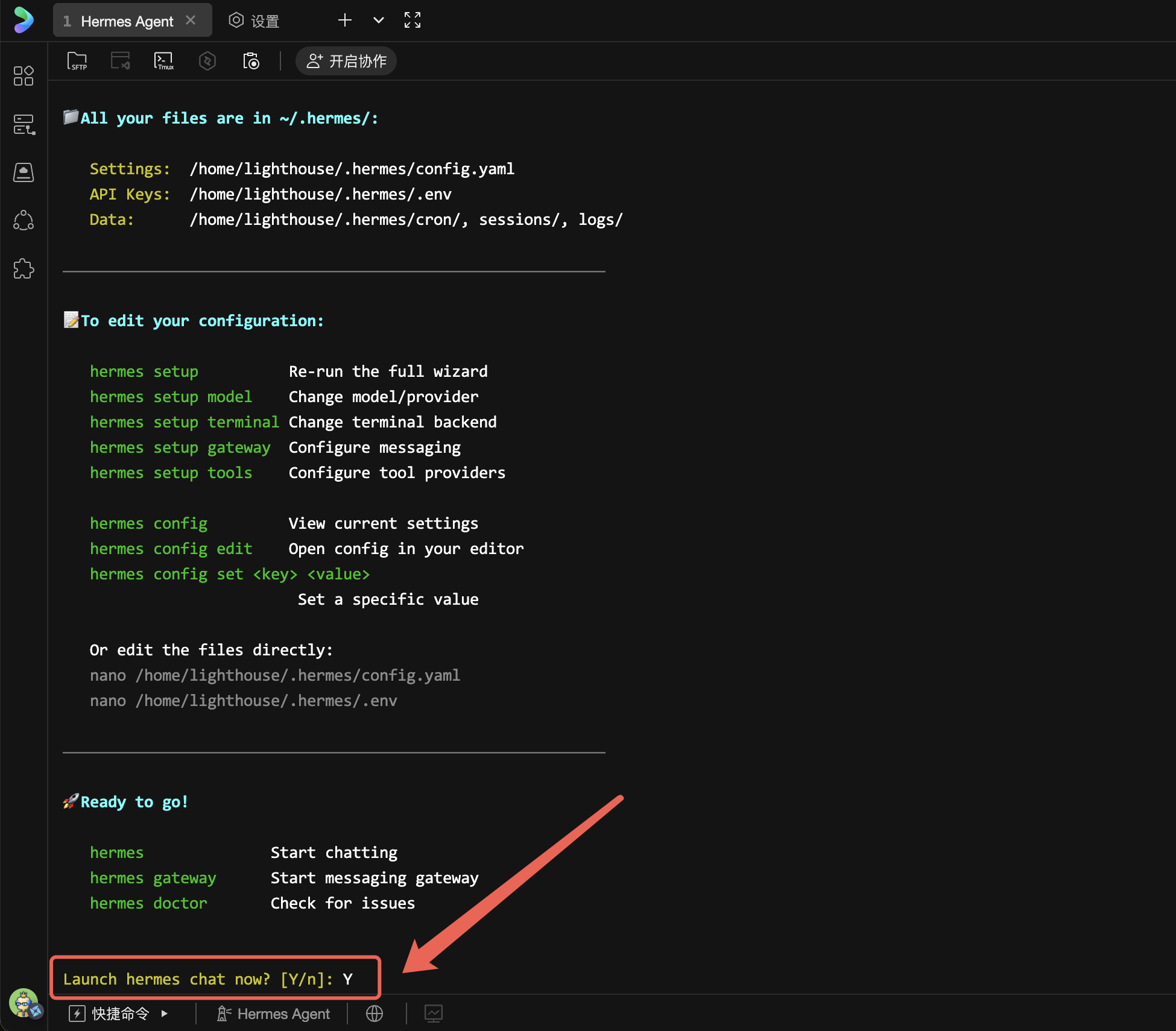

The wizard displays a summary of the completed configuration and prompts whether to connect to Hermes for conversation:

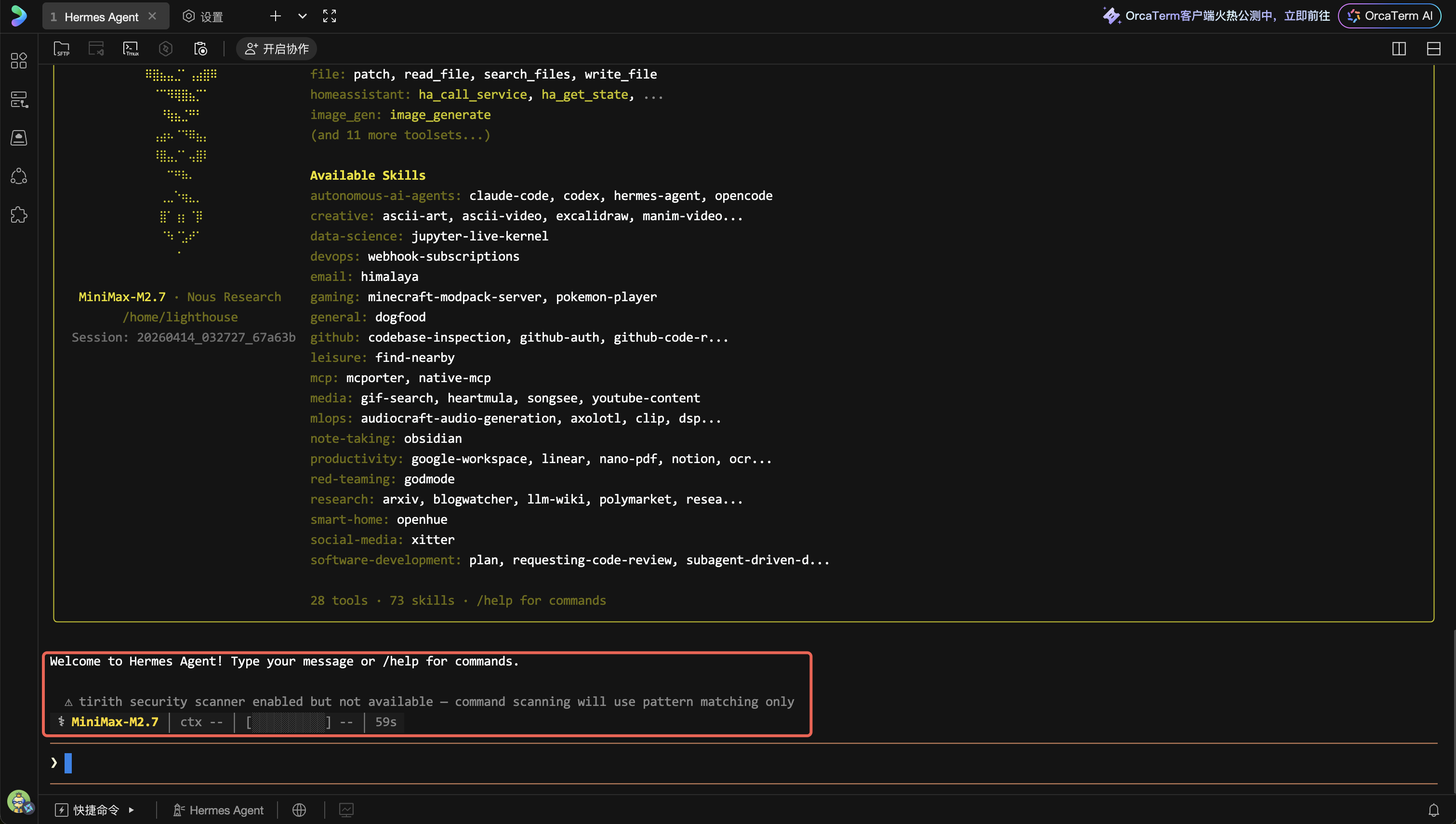

Step 4: Verify Model Configuration

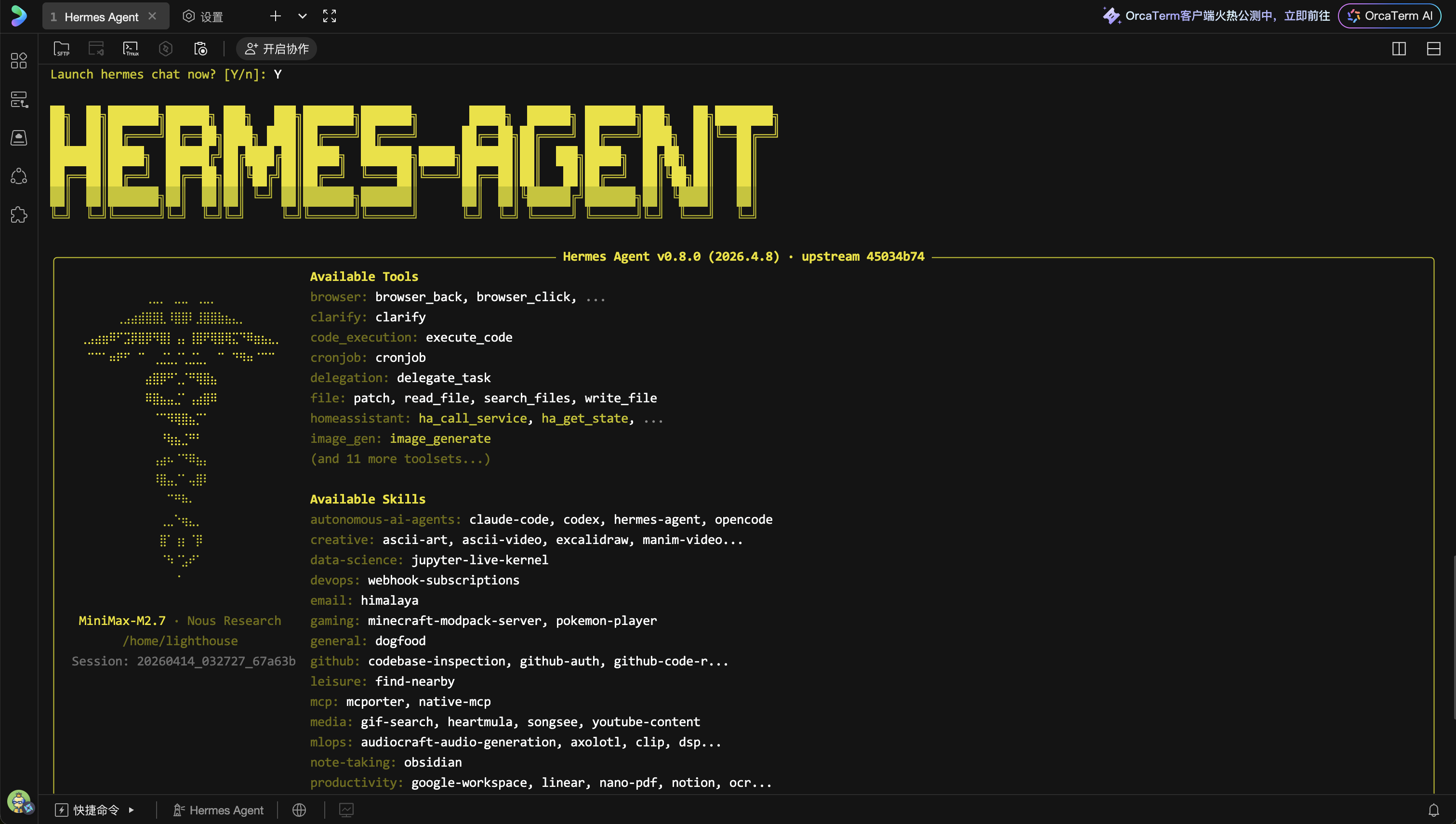

Enter Y on the command line and press Enter, or directly run the hermes command in the terminal to launch the built-in command-line chat interface (TUI).

If the model is configured correctly, you will see the Hermes Agent welcome screen, with the current model information displayed at the bottom (e.g., MiniMax-M2.7 via minimax-cn).

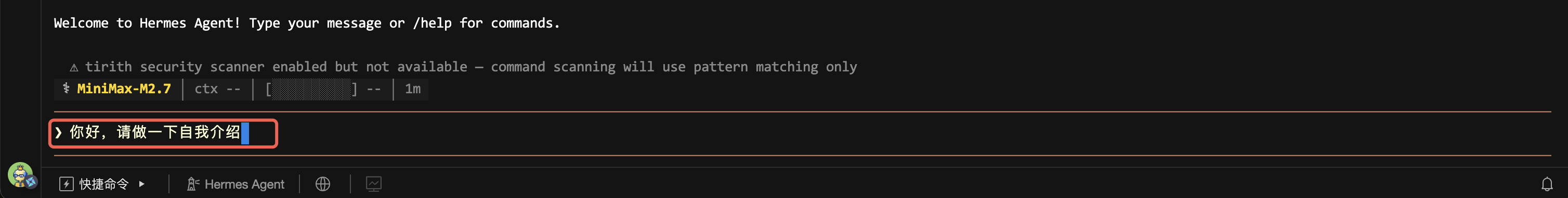

Enter a test message in the input box:

Hello, please introduce yourself

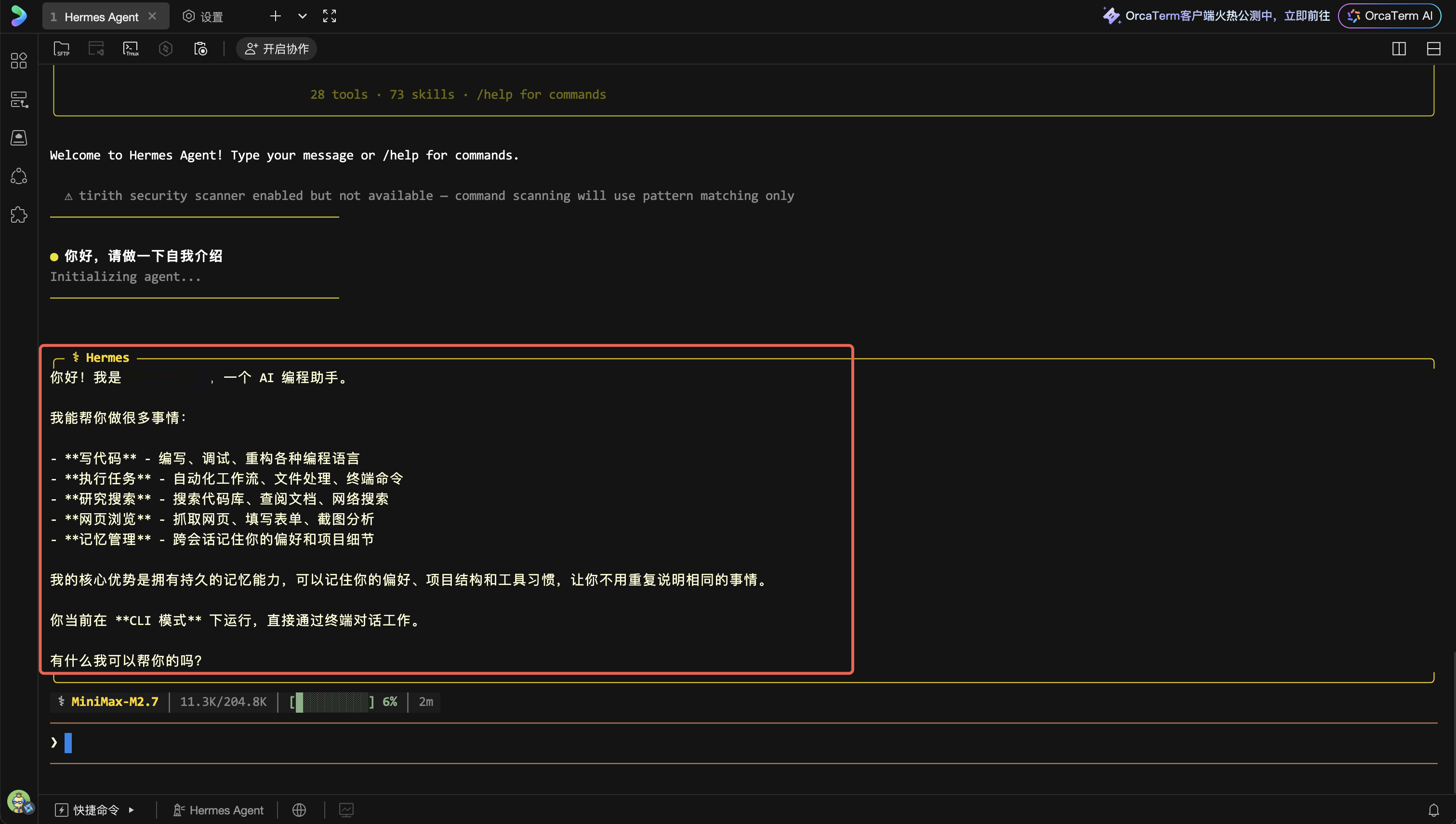

Press Enter to send. If everything works correctly, Hermes Agent generates a response via the MiniMax model:

If you encounter errors (such as "API key invalid" or "connection timeout"), check the following:

- Whether the API key was pasted correctly (run `hermes doctor` for a diagnosis)

- Whether the server can reach MiniMax's API service (Lighthouse instances in the Chinese mainland typically have no issues)
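Because the wizard shows nothing while you paste, a key with a stray space or a missing prefix is easy to miss. As a minimal sketch (not part of Hermes Agent), the hypothetical bash function below checks only the format described in Step 2, a single string starting with sk-api-. It does not contact MiniMax, so `hermes doctor` remains the real diagnosis.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sanity-check the FORMAT of a MiniMax key string.
# It does not call the MiniMax API and cannot tell whether the key is valid.
check_minimax_key() {
  local key="$1"
  if [[ -z "$key" ]]; then
    echo "empty key" >&2
    return 1
  fi
  # Spaces, tabs or newlines usually sneak in while copying
  if [[ "$key" =~ [[:space:]] ]]; then
    echo "key contains whitespace" >&2
    return 1
  fi
  # Keys created on the MiniMax platform start with sk-api-
  if [[ "$key" != sk-api-* ]]; then
    echo "key does not start with sk-api-" >&2
    return 1
  fi
  echo "format looks OK"
}
```

For example, run `IFS= read -rs KEY && check_minimax_key "$KEY"` and paste the key at the silent prompt. Setting `IFS=` keeps leading and trailing spaces, so they can be detected instead of being trimmed by `read`.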

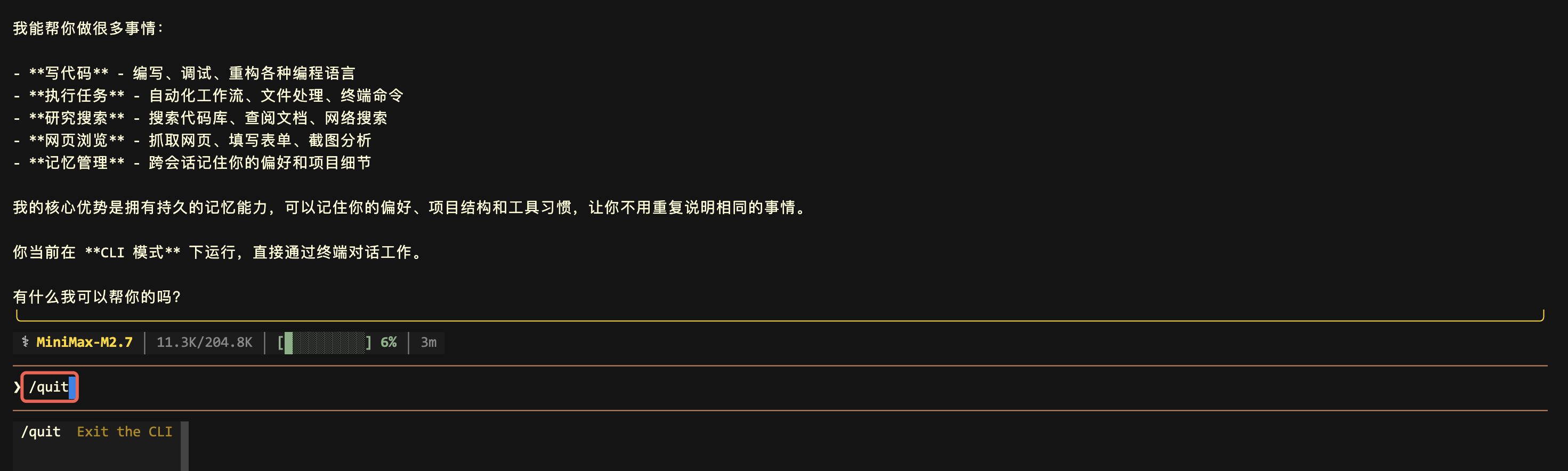

After successful verification, enter /quit and press Enter to exit the TUI interface:

/quit

Next Step: After model configuration is complete, you can continue to configure the WeCom (WeChat Work) messaging channel to chat with Hermes Agent in real time.

Complete Guide to WeCom Integration for Hermes Agent

Connect WeCom (WeChat Work)

WeCom is one of the most widely used enterprise instant messaging tools in China. Hermes Agent natively supports connecting to WeCom over WebSocket, so no public IP address or webhook callback URL is required, and the configuration is simple.

Step 1: Create AI Bot in WeCom Management Console

Once the model is verified, configure WeCom as a chat channel so you can talk to Hermes Agent just as you would send a WeChat message.

5.1 Log in to WeCom Management Console

Open the WeCom Management Console in your browser.

Log in by scanning the QR code with WeCom (you need administrator permissions for a WeCom organization).

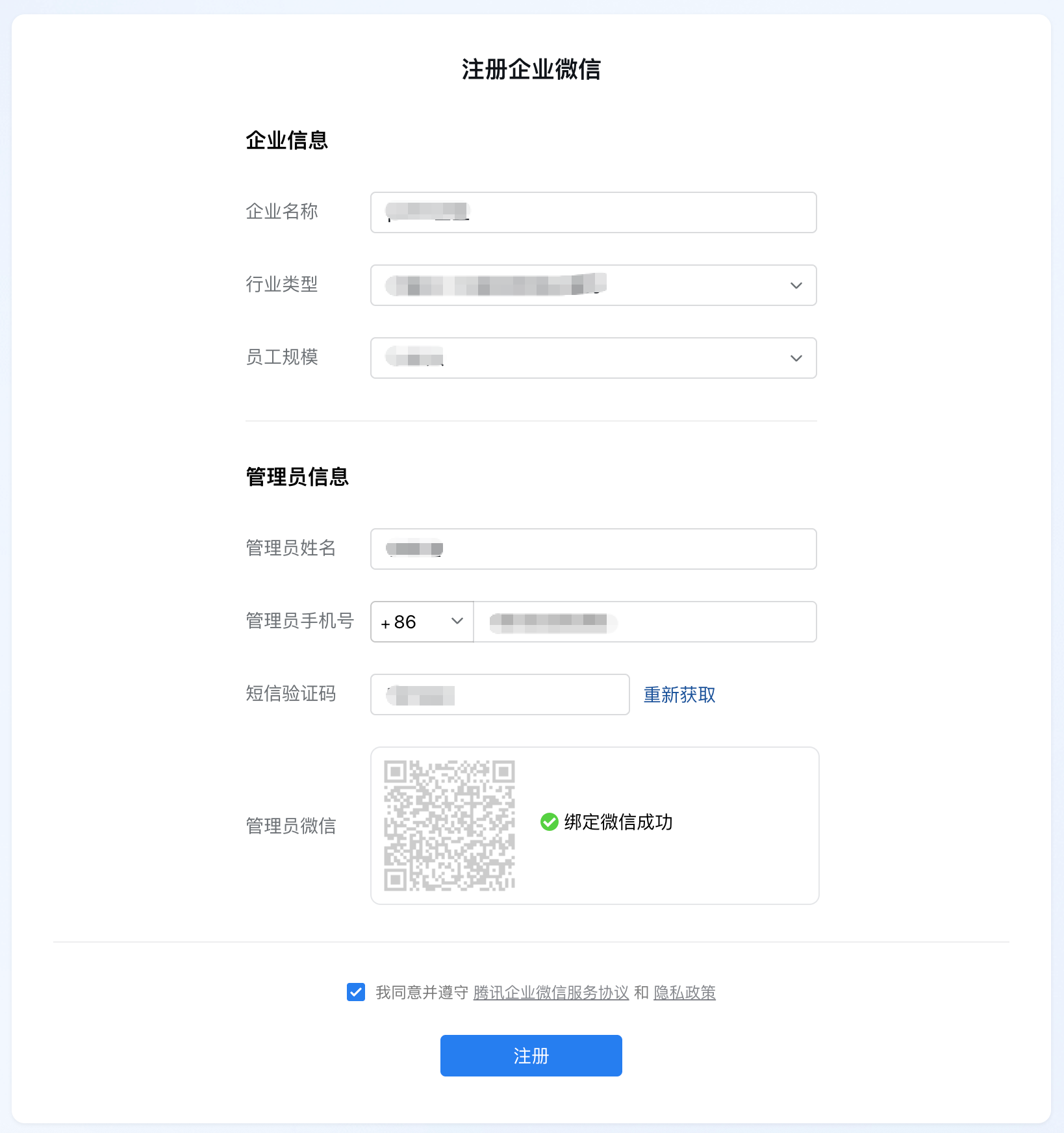

Tip: If you don't have a WeCom organization yet, you can register one for free. Visit the WeCom official website, click "Register Now", and follow the process to create an organization.

5.2 Create AI Bot Application

After logging into the management console, find and click Security & Management in the left menu bar.

Click Management Tools, then select Smart Robot in the Smart Zone.

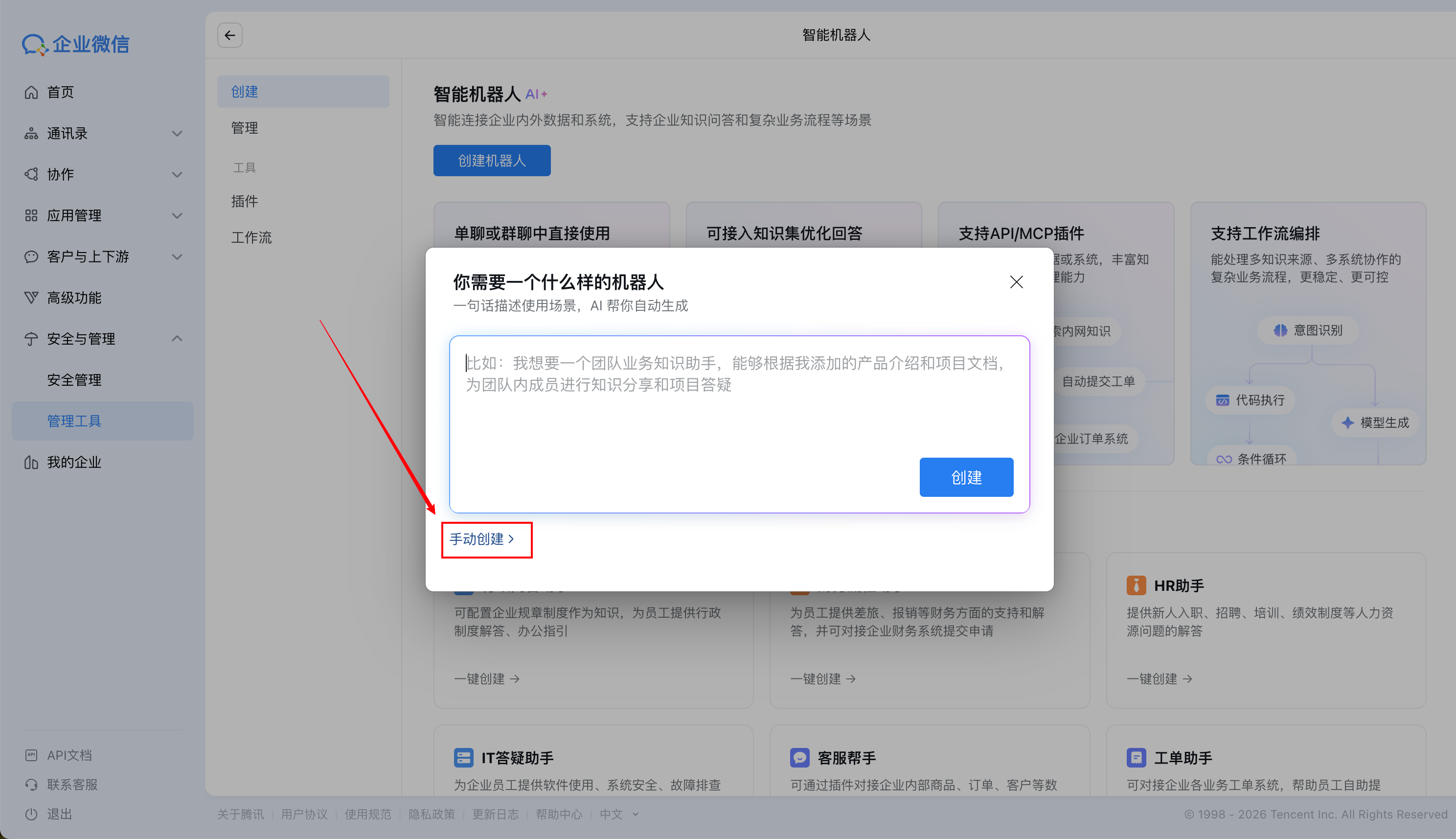

On the creation page, click the Create Robot button.

In the pop-up window, select Manual Creation.

On the Create Smart Robot page, click the edit button, and in the pop-up window that follows, fill in the robot's avatar, name (e.g., "Hermes"), and description.

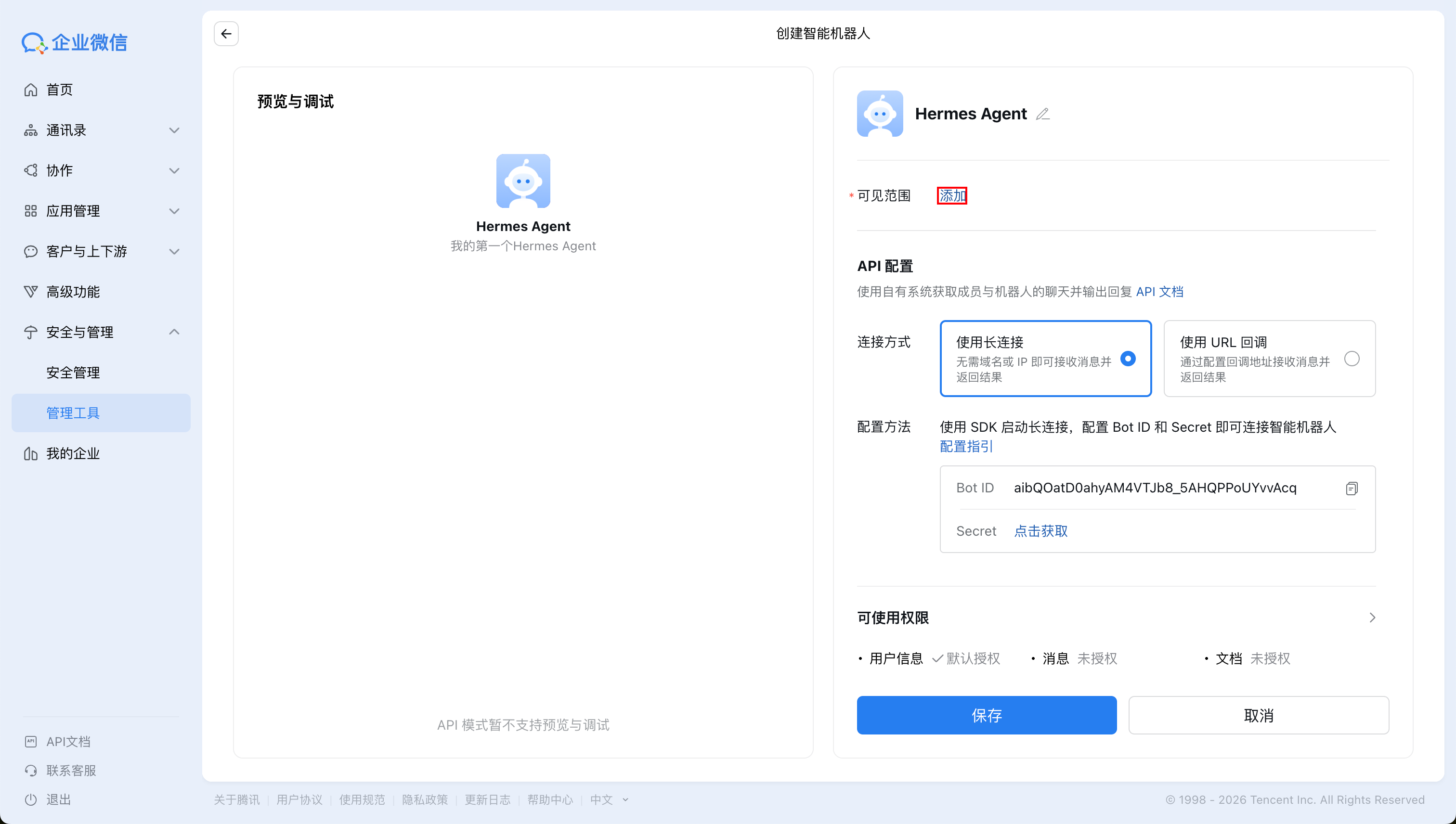

Scroll to the bottom right of the page and select API Mode Creation.

Use Long Connection Method to Connect WeCom Robot

On the next page, configure the visible scope as needed.

In the API configuration, find Secret and click the Click to Get button next to it.

In the available permissions, select Messages (other permissions can be granted as needed).

After authorization is complete, return to the page and click the Save button.

5.3 Get Bot ID and Secret

After creation is complete, enter the robot's details page, where you will see the following two key pieces of information:

- Bot ID: The robot's unique identifier

- Secret: The robot's secret key

Please copy and securely save these two values, as they will be needed later when configuring Hermes Agent.

Security Tip: Secret is equivalent to the robot's password. Please do not disclose it to others.

Step 2: Configure WeCom Credentials on the Server

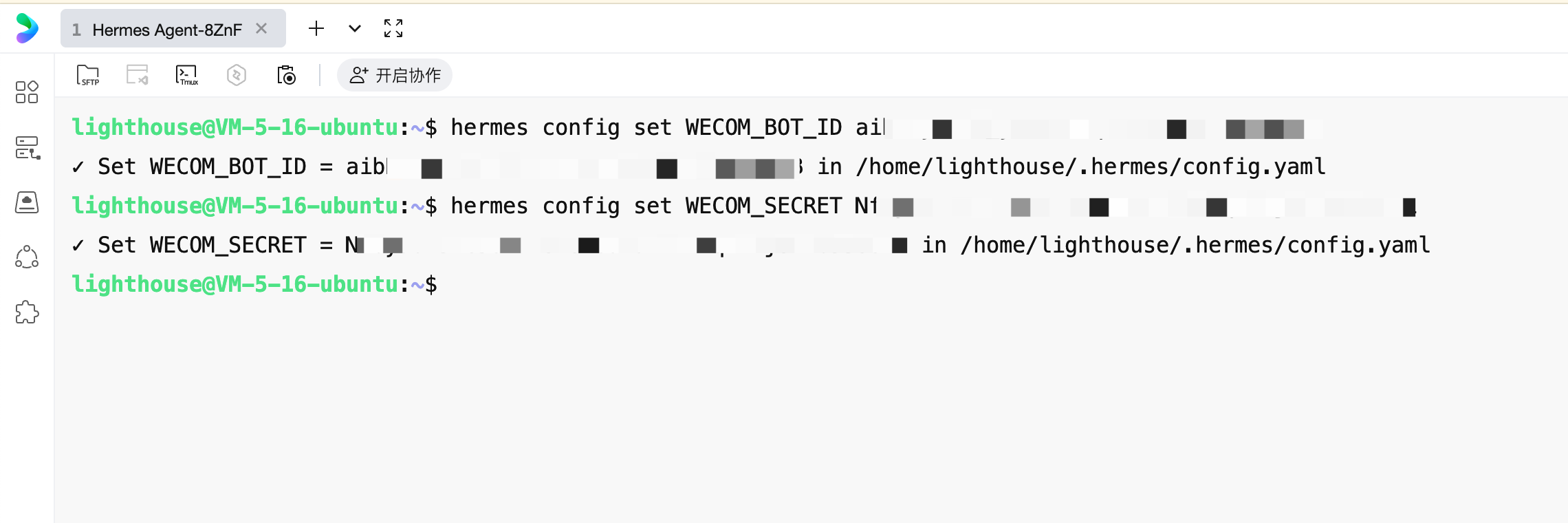

Return to the server terminal and add the WeCom Bot ID and Secret you just obtained to the Hermes Agent configuration.

All sensitive information for Hermes Agent (such as API keys, Bot credentials, etc.) is saved in the ~/.hermes/.env file. We can use the following commands to write the WeCom credentials one by one.

6.1 Write Bot ID and Secret

Run the following command in the terminal (replace your-bot-id with the actual Bot ID you copied in step 5.3):

hermes config set WECOM_BOT_ID your-bot-id

For example, if your Bot ID is bot_abc123xyz, the command would be:

hermes config set WECOM_BOT_ID bot_abc123xyz

Run the following command (replace your-secret with the actual Secret you copied in step 5.3):

hermes config set WECOM_SECRET your-secret
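Passing the Secret as a command-line argument leaves it in your shell history. A minimal bash sketch that avoids this, assuming only the two `hermes config set` commands shown above: the hypothetical `set_wecom_credentials` function prompts for both values (the Secret without echo) and then runs those same commands. The Secret stays out of ~/.bash_history, although it is still passed to hermes as an argument.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the two `hermes config set` commands above.
# It prompts for the values instead of taking them as arguments, so the
# Secret is never typed on the command line or saved in shell history.
set_wecom_credentials() {
  local bot_id secret
  read -r -p "WeCom Bot ID: " bot_id                  # default IFS trims pasted spaces
  read -r -s -p "WeCom Secret (input hidden): " secret
  echo   # hidden input leaves the cursor on the prompt line, so add a newline
  if [[ -z "$bot_id" || -z "$secret" ]]; then
    echo "Bot ID and Secret must both be non-empty" >&2
    return 1
  fi
  hermes config set WECOM_BOT_ID "$bot_id" &&
    hermes config set WECOM_SECRET "$secret"
}
```

Paste the function into the terminal (or a file you `source`), then run `set_wecom_credentials` and answer the two prompts.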

6.2 Private Message Permission Settings

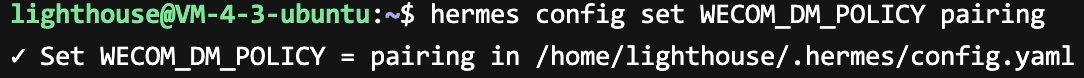

You can restrict which users can send private messages to Hermes Agent by setting WECOM_DM_POLICY. Here, set it to pairing.

With this policy, when an unauthorized user sends your Bot a private message, no conversation is opened directly. Instead, a pairing verification is triggered: the Bot sends the user a one-time pairing code, and the user can chat only after you approve that code on the server.

hermes config set WECOM_DM_POLICY pairing

6.3 Configure Access Restrictions (Optional)

You can restrict which WeCom users can converse with Hermes Agent by setting WECOM_ALLOWED_USERS:

hermes config set WECOM_ALLOWED_USERS your_user_id

To allow multiple users, separate their IDs with half-width (English) commas:

hermes config set WECOM_ALLOWED_USERS user_id_1,user_id_2
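If you keep the allowed user IDs in a file, one per line, a small helper can build the comma-separated value for you. This is a hypothetical bash sketch: the file name allowed_users.txt is only illustrative, and joining with commas and no spaces simply mirrors the example above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read user IDs from stdin (one per line) and print them
# joined with half-width commas, e.g. "user_id_1,user_id_2".
join_user_ids() {
  local line
  local -a ids=()
  # `read` with the default IFS trims leading/trailing spaces and tabs;
  # `|| [[ -n $line ]]` keeps a last line that has no trailing newline.
  while read -r line || [[ -n "$line" ]]; do
    [[ -n "$line" ]] && ids+=("$line")   # skip blank lines
  done
  local IFS=,
  echo "${ids[*]}"   # "${arr[*]}" joins elements with the first char of IFS
}
```

Usage: `hermes config set WECOM_ALLOWED_USERS "$(join_user_ids < allowed_users.txt)"`.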

To find a WeCom user ID: in the WeCom Management Console, go to Contacts, click the member, and check the "Account" field; its value is the user ID.

If you don't need access restrictions for now (for personal testing only), you can skip this step. By default, any WeCom member who can contact this Bot can converse with it, subject to pairing.

Tip: If you want all WeCom users to be able to interact with the Bot, run `hermes config set GATEWAY_ALLOW_ALL_USERS true`. To allow only specific users, use the WECOM_ALLOWED_USERS whitelist described above.
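To confirm that the WeCom values were written to ~/.hermes/.env without displaying the Secret on screen, you can list only the variable names. A minimal bash sketch, assuming the file uses ordinary dotenv `KEY=VALUE` lines (optionally prefixed with `export`); `list_env_keys` is a hypothetical helper, and the exact file format is not documented in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the variable NAMES defined in a dotenv-style file,
# never the values, so secrets do not appear on screen.
# ASSUMPTION: lines look like KEY=VALUE or export KEY=VALUE; comments start with #.
list_env_keys() {
  local file="${1:-$HOME/.hermes/.env}"
  if [[ ! -r "$file" ]]; then
    echo "cannot read $file" >&2
    return 1
  fi
  # Keep only the name before "=", dropping an optional "export " prefix
  sed -nE 's/^[[:space:]]*(export[[:space:]]+)?([A-Za-z_][A-Za-z0-9_]*)=.*/\2/p' "$file"
}
```

Usage: `list_env_keys | grep '^WECOM_'` should show the WECOM_* names you set, if `hermes config set` stores them in that file as described above.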

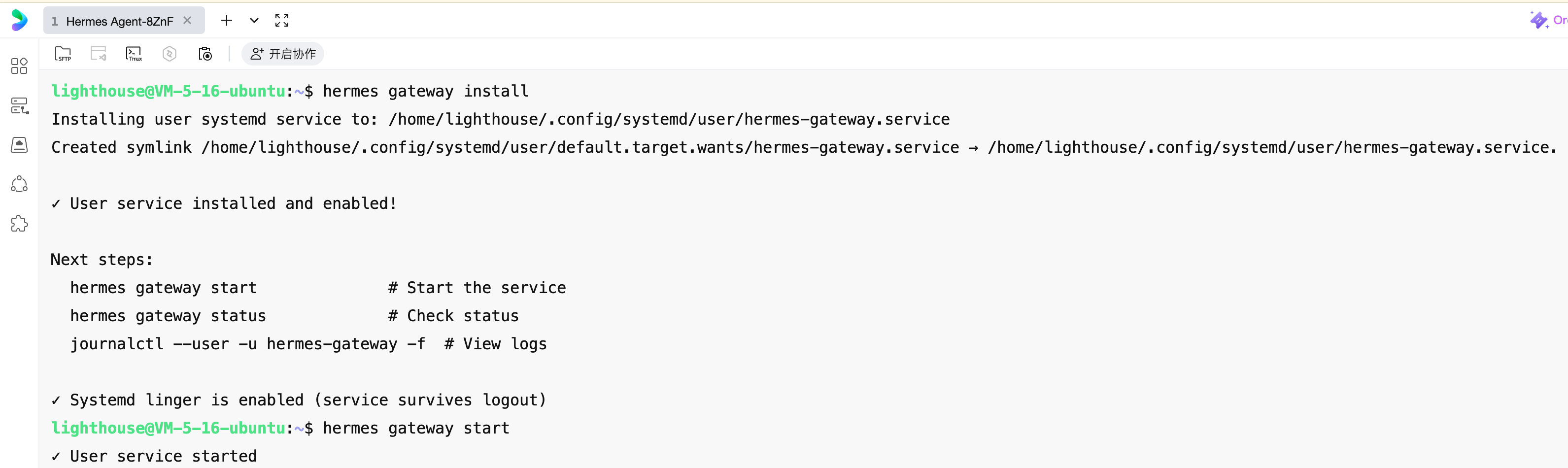

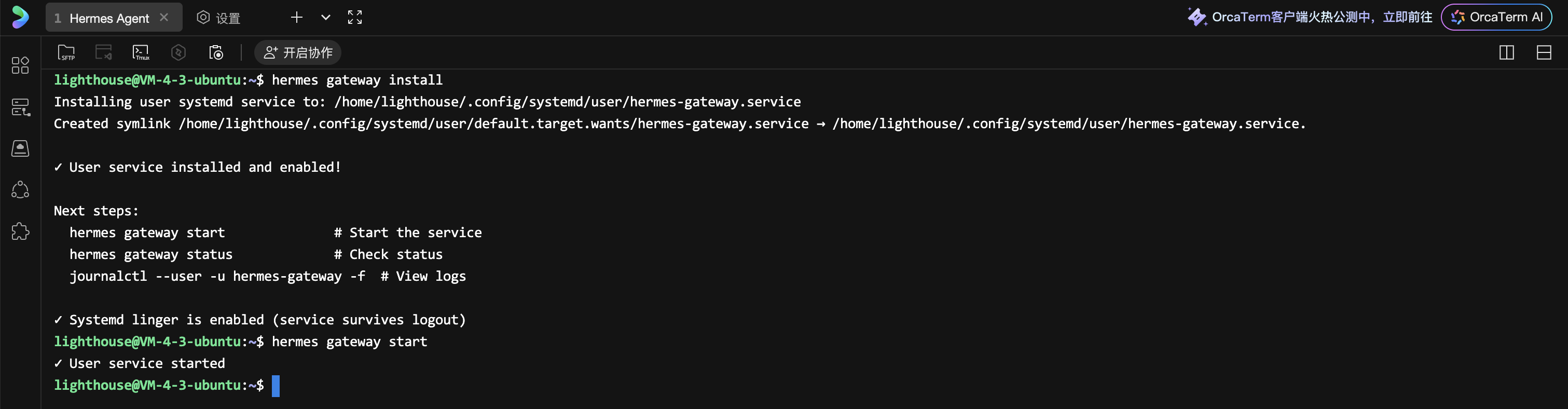

Step 3: Start the Gateway

After all configurations are complete, we need to start Hermes Agent's message gateway, which is responsible for receiving and sending WeCom messages.

To keep Hermes Agent running in the background and have it start automatically after a server reboot, we recommend installing the gateway as a system service:

hermes gateway install

After installation is complete, start the service:

hermes gateway start

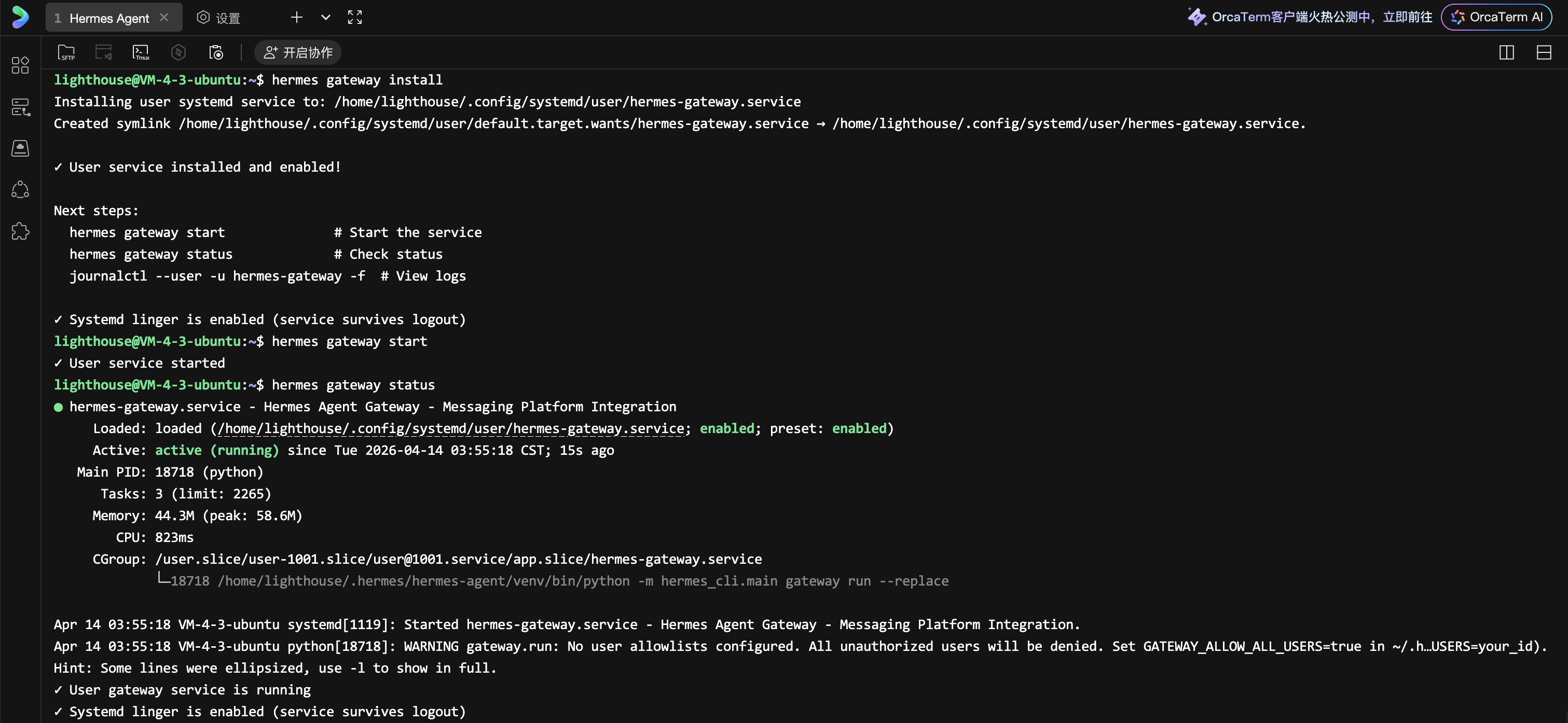

You can check the running status of the gateway service at any time with the following command:

hermes gateway status
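The three commands above can be chained so that a failure stops the sequence instead of being scrolled past. A minimal sketch, assuming (this guide does not confirm it) that `hermes` exits with a non-zero status when a step fails; `deploy_gateway` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: install, start, then check the gateway service,
# stopping at the first step that fails.
# ASSUMPTION: `hermes` returns a non-zero exit status when a step fails.
deploy_gateway() {
  local step
  for step in install start; do
    if ! hermes gateway "$step"; then
      echo "hermes gateway $step failed; fix the error above before retrying" >&2
      return 1
    fi
  done
  hermes gateway status
}
```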

At this point, your Hermes Agent is fully configured and running in the background.

Now go back to the WeCom Management Console, get the robot's QR code, and scan it to add the robot to a chat:

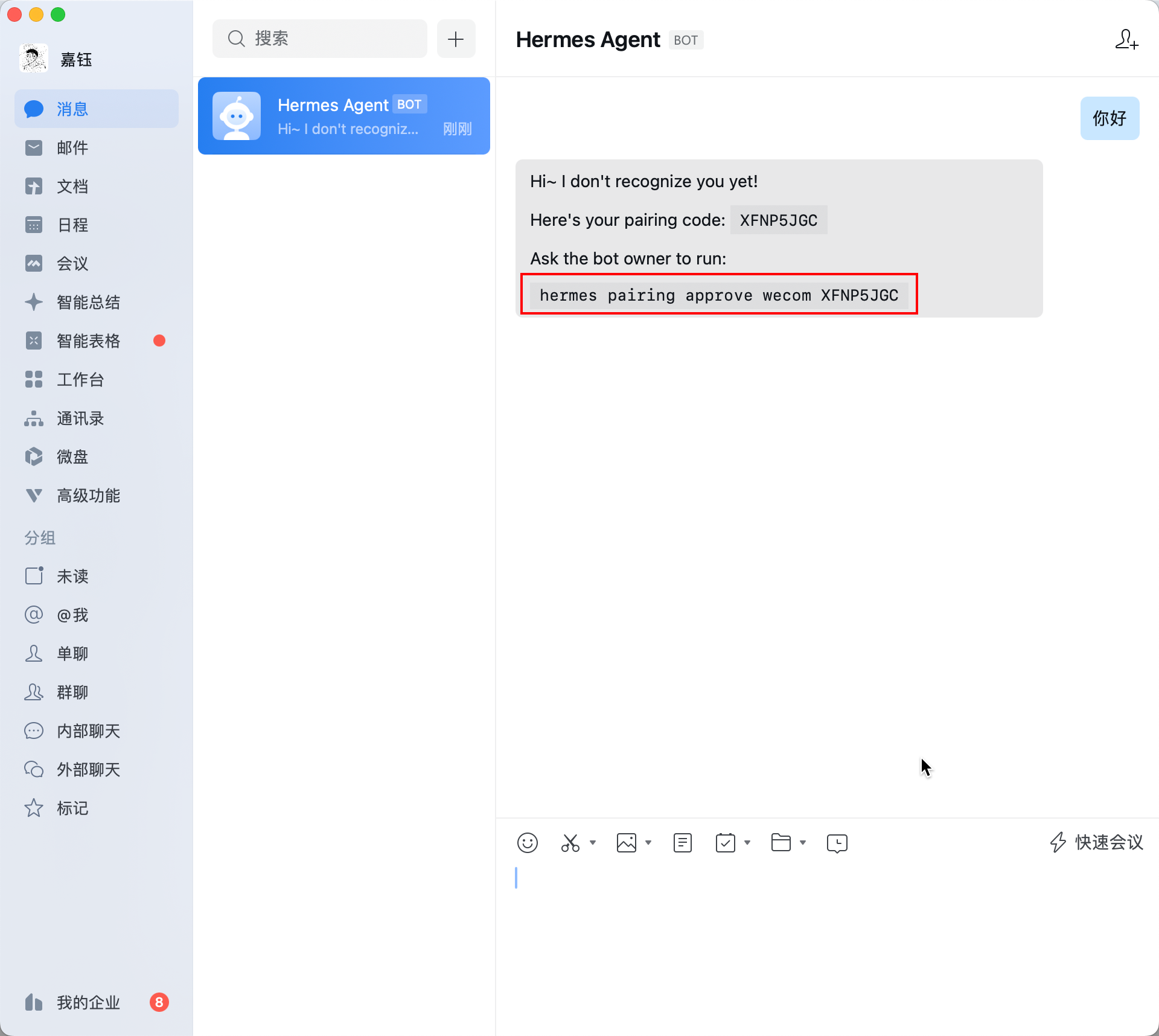

Send any message to the robot in WeCom, and it replies with a pairing message:

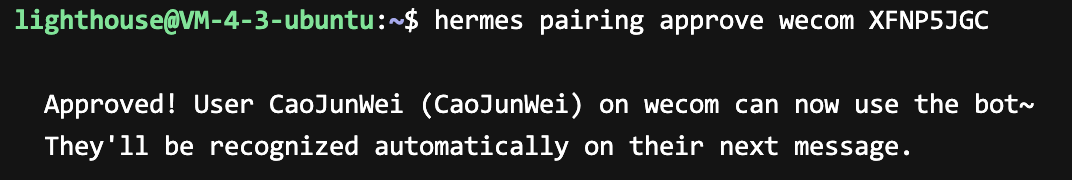

Copy the last command in the pairing message, paste it into the server terminal, and press Enter. When you see the following output, pairing is complete.

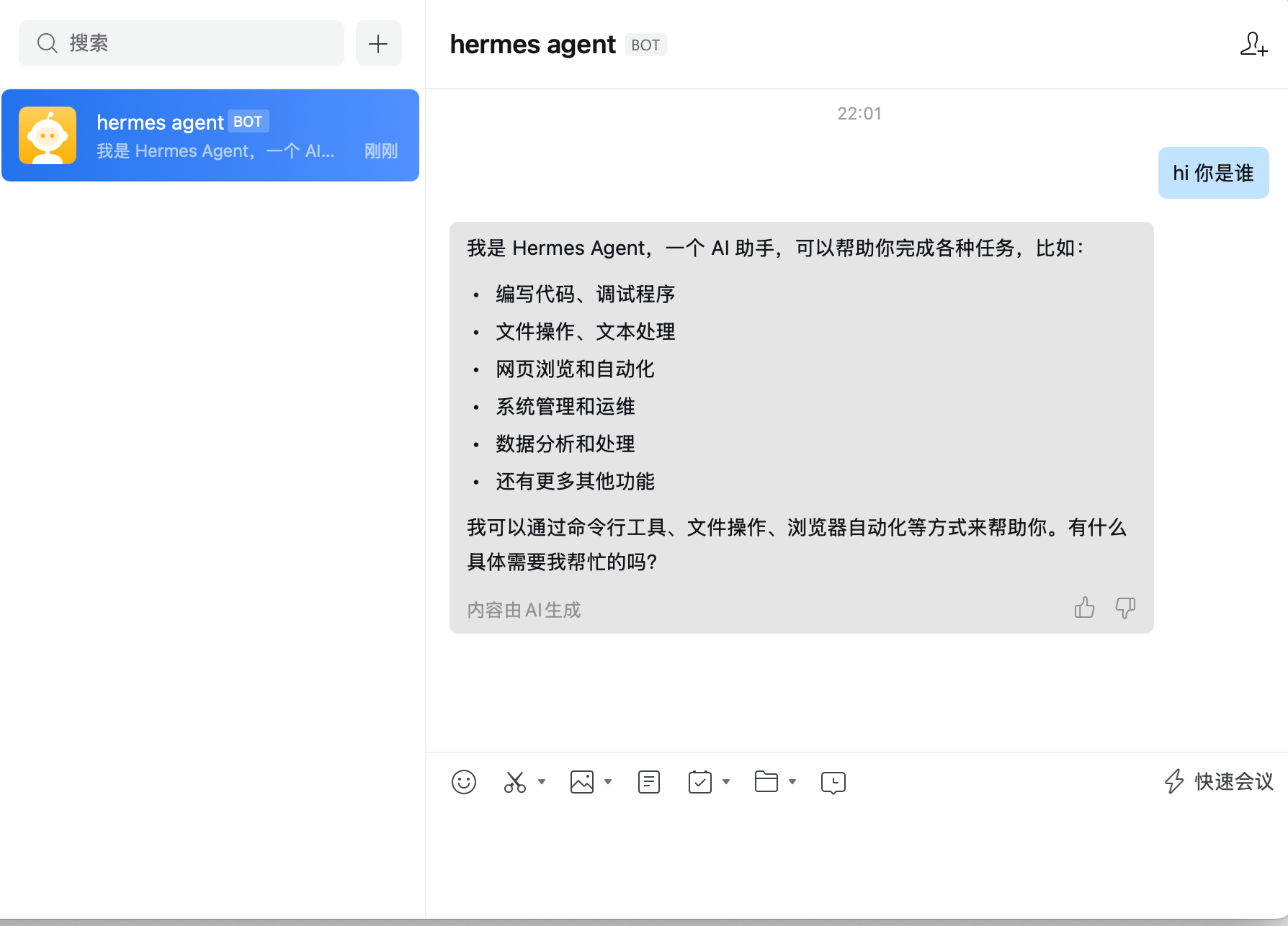

Now return to WeCom, and you can start conversing with Hermes Agent!

If you see a message similar to "📬 No home channel is set for Wecom. A home channel is where Hermes delivers cron job results and cross-platform messages.", simply send /sethome in the chat to set one.

Security Tip: Hermes Agent's WebUI provides no authentication. Anyone who knows its address can operate your Agent directly. We strongly advise against enabling the WebUI yourself and exposing it on the public internet.
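To check that nothing on the server, a WebUI included, is listening on a public address, you can inspect the listening TCP sockets. The sketch below filters `ss -tln` output (ss ships with iproute2 on most Linux distributions) and prints listeners that are not bound to loopback. `public_listeners` is a hypothetical helper, and it assumes the local address is the fourth column, as in ss's default output. Whether such a port is actually reachable also depends on your Lighthouse firewall rules.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ss -tln` output on stdin and print TCP listeners
# that are NOT bound to loopback (127.x.x.x or ::1).
# ASSUMPTION: column 4 is "Local Address:Port", as in ss's default layout.
public_listeners() {
  awk 'NR > 1 {
    host = $4
    sub(/:[^:]*$/, "", host)   # drop the trailing ":port"
    if (host !~ /^127\./ && host != "[::1]" && host != "::1") print $4
  }'
}
```

Usage: `ss -tln | public_listeners`. Port 22 (SSH) normally shows up; any other port deserves a closer look.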

Advanced WeCom Configuration

You can further configure WeCom's access policies in ~/.hermes/config.yaml, such as restricting which groups can use the Bot, setting group user whitelists, etc.:

    platforms:
      wecom:
        enabled: true
        extra:
          dm_policy: "open"            # Private chat policy: open/allowlist/disabled
          group_policy: "allowlist"    # Group chat policy: open/allowlist
          group_allow_from:            # List of allowed group IDs
            - "group_id_1"
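Before editing ~/.hermes/config.yaml by hand, keeping a timestamped copy makes it easy to roll back a typo. A minimal bash sketch; `backup_hermes_config` is a hypothetical helper, and its default path is the file named above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy config.yaml to a timestamped backup before editing.
# Prints the backup path on success.
backup_hermes_config() {
  local cfg="${1:-$HOME/.hermes/config.yaml}"
  if [[ ! -f "$cfg" ]]; then
    echo "not found: $cfg" >&2
    return 1
  fi
  local backup
  backup="$cfg.bak.$(date +%Y%m%d%H%M%S)"
  cp -p -- "$cfg" "$backup" && echo "$backup"   # -p keeps permissions and timestamps
}
```

After editing, running `hermes gateway stop` followed by `hermes gateway start` is a reasonable way to make sure the new settings are loaded, assuming the gateway reads the file at startup. To roll back, copy the printed backup path over ~/.hermes/config.yaml.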

Common Issues

Prompt "Code not found or expired" after entering pairing command

Symptom: After you run the pairing command from the WeCom message in the server terminal, the following prompt appears:

    Code 'XXXXXXXX' not found or expired for platform 'wecom'.
    Run 'hermes pairing list' to see pending codes.

Cause: A pairing code is valid for 1 hour. The code may have expired or changed while you were copying the command.

Solution:

- Run the following command to view current pending pairing requests:

hermes pairing list

- In the returned list, find the pairing code in the Code column (e.g., XXXXXXXX):

    Pending Pairing Requests (1):

    Platform  Code      User ID  Name   Age
    --------  ----      -------  ----   ---
    wecom     XXXXXXXX  JIAYU    JIAYU  1m ago

- Copy this pairing code and re-run the approval command:

hermes pairing approve wecom XXXXXXXX

Replace XXXXXXXX with the actual pairing code you see in the list.
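When several codes are pending, picking the right one by eye is error-prone. The hypothetical helper below reads `hermes pairing list` output on stdin and prints one approval command per pending code for the given platform. It assumes the whitespace-separated layout shown above (platform in the first column, code in the second), which may differ between versions. It deliberately prints the commands rather than running them, because approving every pending code would let through anyone who messaged the Bot.

```shell
#!/usr/bin/env bash
# Hypothetical helper: turn `hermes pairing list` output (read on stdin) into
# approval commands for one platform (default: wecom).
# ASSUMPTION: data rows look like "wecom XXXXXXXX JIAYU JIAYU 1m ago",
# i.e. the platform is in column 1 and the pairing code in column 2.
pairing_approve_cmds() {
  local platform="${1:-wecom}"
  awk -v p="$platform" '$1 == p { printf "hermes pairing approve %s %s\n", p, $2 }'
}
```

Usage: `hermes pairing list | pairing_approve_cmds wecom`, then check the User ID in the list and run only the command that matches your own account.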

Quick Reference for Common Management Commands

After completing the above configurations, you may need the following commands to manage your Hermes Agent:

| Command | Description |
|---|---|
| `hermes` | Chat with Hermes Agent directly in the terminal (TUI) |
| `hermes gateway` | Start the message gateway in the foreground |
| `hermes gateway start` | Start the background gateway service |
| `hermes gateway stop` | Stop the background gateway service |
| `hermes gateway status` | Check the gateway service status |
| `hermes setup` | Re-run the configuration wizard |
| `hermes setup model` | Reconfigure only the model/provider |
| `hermes setup gateway` | Reconfigure only the chat platform |
| `hermes model` | Switch models |
| `hermes doctor` | Diagnose configuration issues |
| `hermes update` | Update to the latest version |
| `hermes config` | View the current configuration |

Start and Manage Gateway

After configuration is complete, use the following commands to manage the gateway service:

    # Run in the foreground (suitable for debugging)
    hermes gateway

    # Start in the background
    hermes gateway start

    # Check the running status
    hermes gateway status

    # Stop the gateway
    hermes gateway stop

Tip: A single gateway process connects to all configured platforms (WeCom, Telegram, Discord, etc.) at the same time, so there is no need to start a separate service for each platform.

Welcome to join the discussion!

We have set up a Discord server, and everyone is welcome to join and explore advanced ways to use Moltbot (Clawdbot) together!

🚀 Developer Community & Support

1️⃣ Developer Community

Unlock advanced tips on Discord. After joining, you can get the latest plugin templates and deployment playbooks.

2️⃣ Dedicated Support

Join us on WhatsApp or WeCom for dedicated technical support:

- WhatsApp Channel
- WeCom (Enterprise WeChat)