Application Settings Overview

After creating an application, open the Application Settings page to configure its models, knowledge base, and output settings. This document uses a standard mode application as an example to describe the configuration in detail.

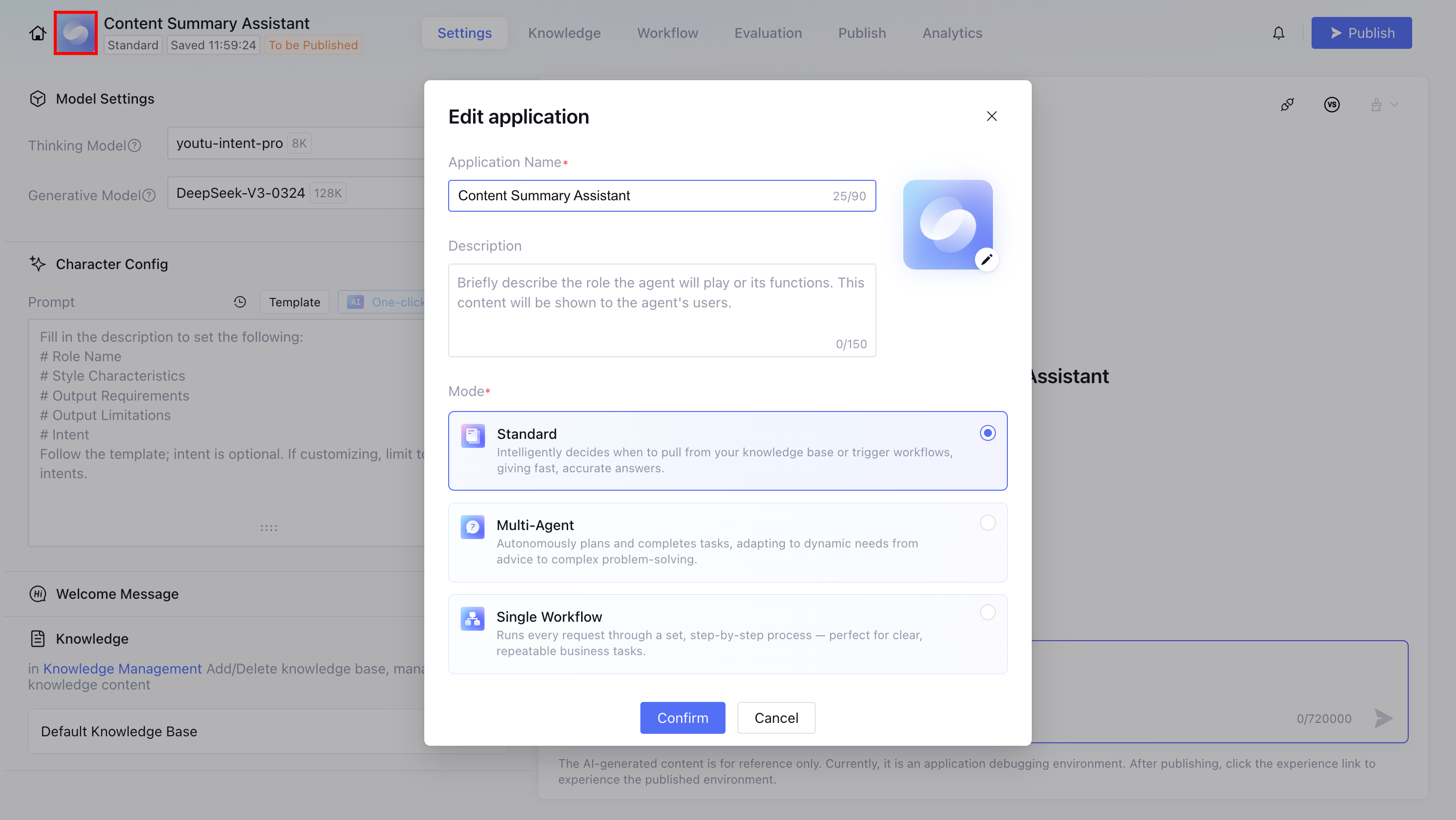

Edit Application

After creating the application, click the app icon in the upper left corner. In the Edit application pop-up window, you can modify basic information such as the app icon, application name, and application description, and switch the application mode. For details about each mode, see Agent Application and Its Three Modes.

The settings page differs depending on the application mode, as shown in the following table:

| Configuration Details | Standard Mode | Single Workflow Mode | Multi-Agent Mode |
| --- | --- | --- | --- |
| Basic settings | Application name, avatar, and welcome words remain constant across different modes. | Same as Standard Mode. | Same as Standard Mode. |
| Application Settings | App settings are independent across modes and are not inherited when switching modes. | App settings are independent across modes and are not inherited when switching modes. Prompts, plugins, and other settings are not supported in Single Workflow Mode. | App settings are independent across modes and are not inherited when switching modes. Multi-Agent Mode and Standard Mode have largely the same set of setting items, but the available options for each item differ. |

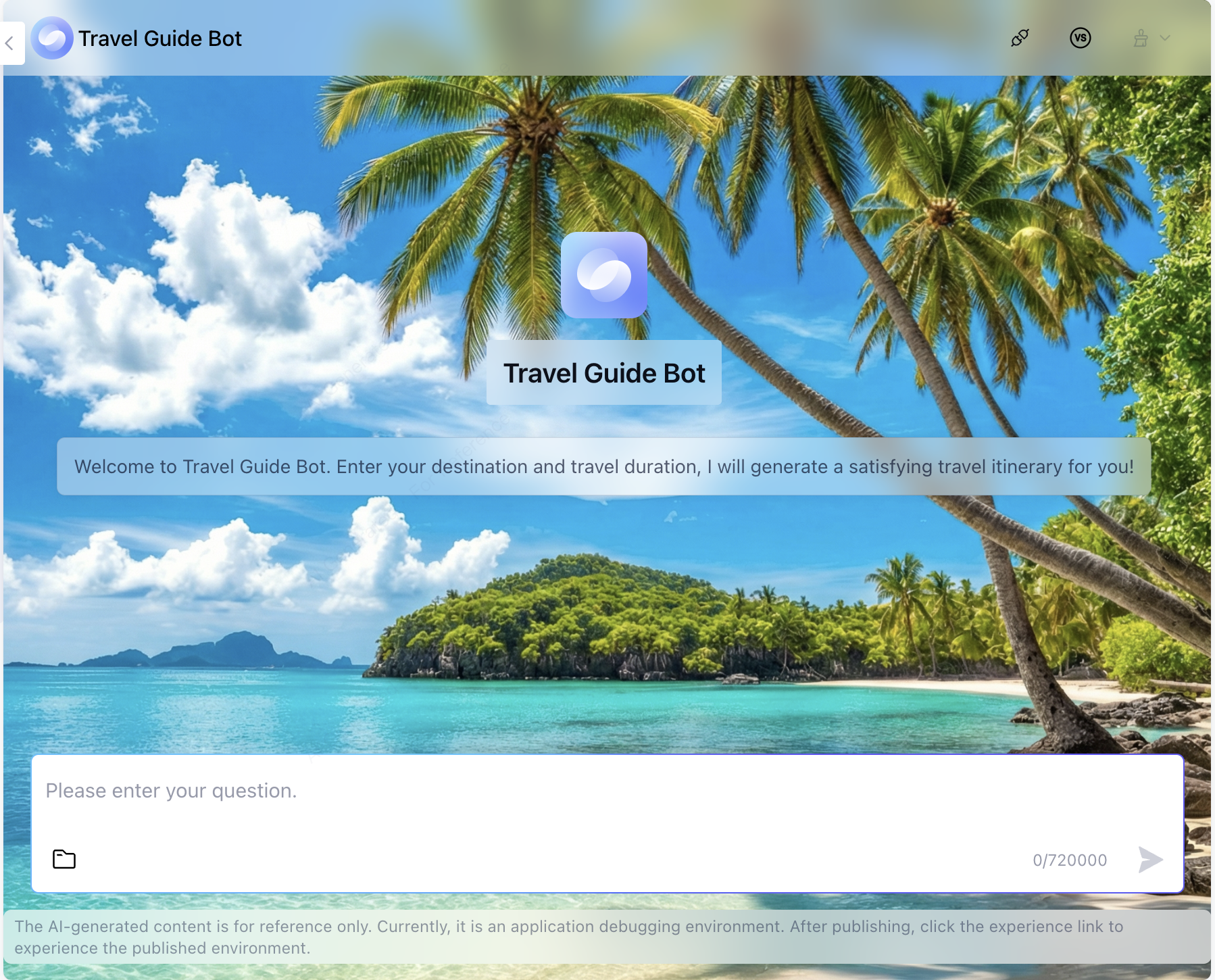

Set the application icon and name. Once the application is published, they are displayed in the user-facing chat window.

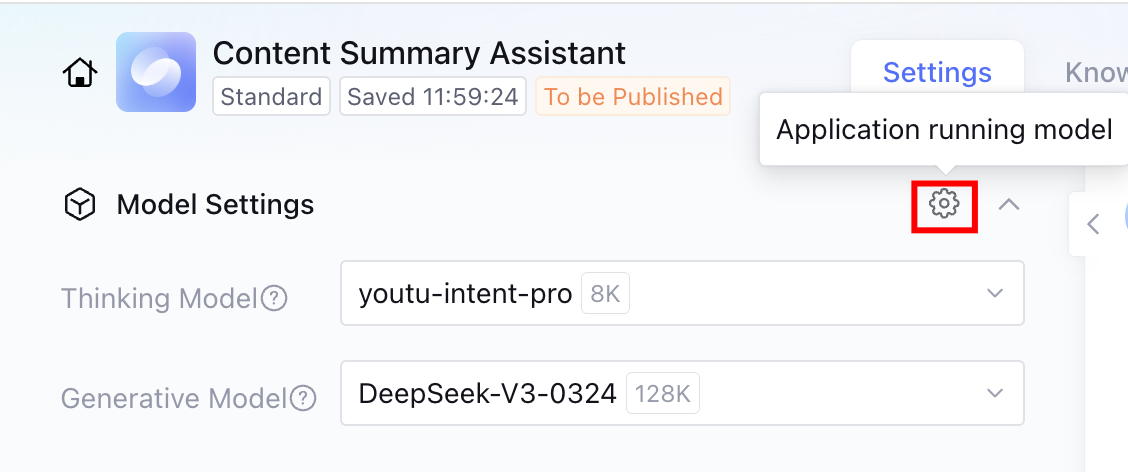

Model Settings

Note:

The multimodal QA model, multimodal reading comprehension model, Prompt rewrite model, and AI one-click optimization model that ADP selects by default when you create an application are the officially recommended best-practice models on the ADP platform. They have been validated across a large number of scenarios, so changing them is not recommended: replacing them may degrade end-to-end Q&A quality. Proceed with caution if you do replace them.

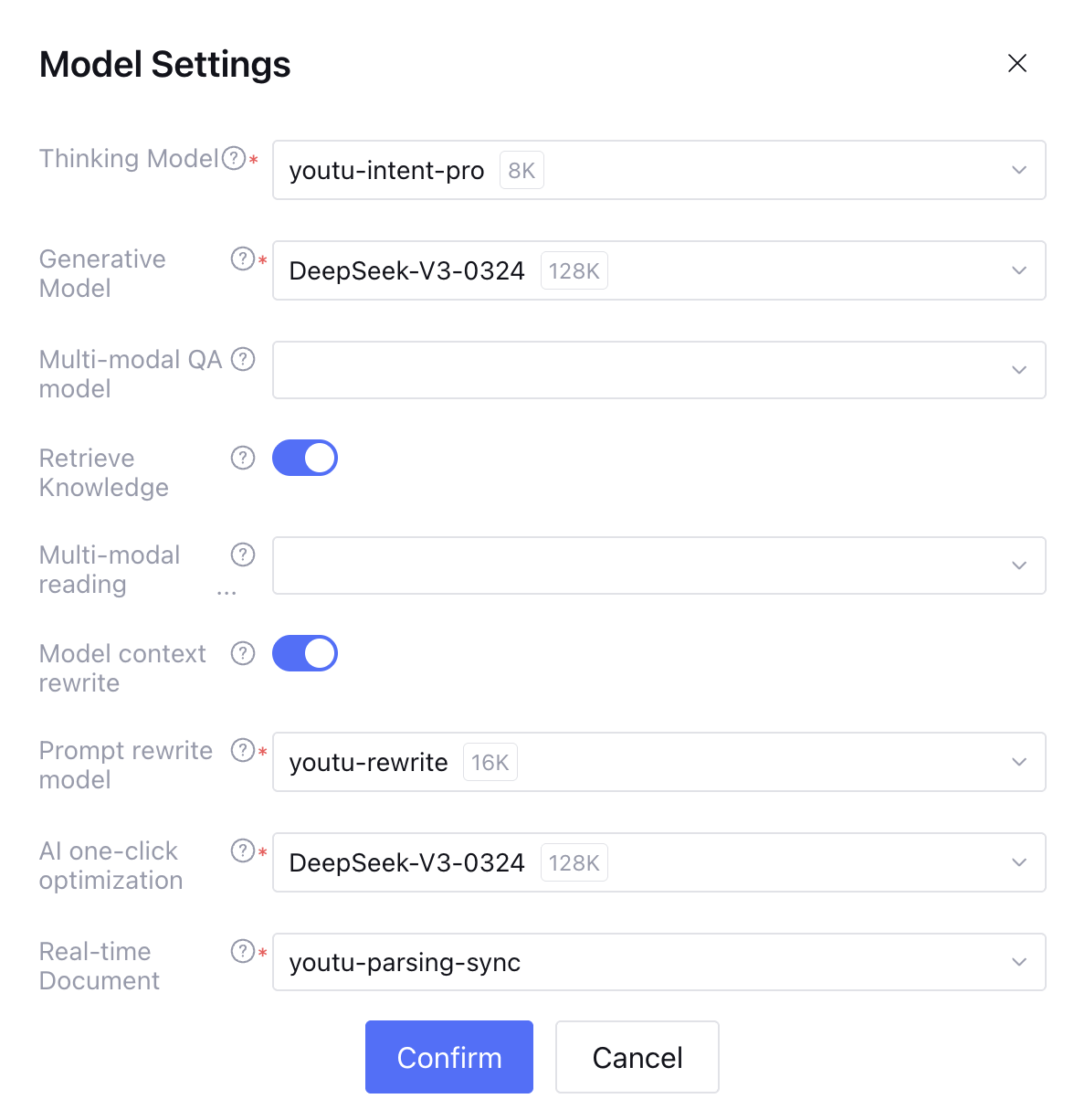

Model Settings lets you configure the models that the application uses at run time.

1. The thinking model is used for intent recognition (standard mode), task planning, and plugin selection (Multi-Agent Mode).

2. The generative model is used for reading comprehension, summarization, and reply generation.

3. The multimodal QA model is used to understand uploaded images in the application dialogue.

4. The multimodal reading comprehension model parses multiple questions and image segments recalled from knowledge base retrieval.

5. The Prompt rewrite model addresses the "fragmented context" issue in multi-round dialogue by converting vague, elliptical, or context-dependent user input into complete, self-contained instructions that large models can understand accurately.

6. The AI one-click optimization model is used for one-click optimization of prompt content, role commands, workflow descriptions, and the prompt content of each node. It is also used for AI code generation in workflow code nodes.

7. The real-time document parsing model parses documents that users upload during Q&A.

In standard mode and single workflow mode, these models are configured together in Model Settings.

Model Settings provides the following options:

Model service: New users of Tencent Cloud ADP automatically receive a quota of free tokens. You can select different types of models to debug your application for free, and then purchase further usage based on the test results.

Context rounds: Sets the number of historical dialogue rounds passed to the large model as part of the prompt. More rounds improve relevance in multi-round dialogue but also consume more tokens.

Parameter setting:

Temperature: Controls the randomness and variety of generated text. A higher value makes the output more random and creative, which suits scenarios such as poetry writing. A lower value makes the output more focused and deterministic, which suits scenarios such as code generation.

top_p: Controls the variety of text generated by the model. top_p is a nucleus sampling method: the model samples only from the smallest set of most likely tokens whose cumulative probability reaches the top_p threshold.

Maximum output length: Limits the maximum length of text generated by the generative model. This helps control the cost and response time of API calls and prevents overly long, meaningless output.
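To make the effect of Temperature and top_p concrete, here is a minimal Python sketch of temperature scaling followed by nucleus (top_p) filtering. It illustrates the general technique only. The function names (`apply_temperature`, `top_p_filter`, `sample_token`) are hypothetical, and the models on the platform may implement sampling differently.

```python
import math
import random


def apply_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p):
    """Keep the smallest set of most likely tokens whose cumulative
    probability reaches top_p, then renormalize what is kept."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample_token(logits, temperature=1.0, top_p=1.0, rng=random):
    """Sample one token index after temperature scaling and top_p filtering."""
    probs = apply_temperature(logits, temperature)
    candidates = top_p_filter(probs, top_p)
    ids = list(candidates)
    weights = [candidates[i] for i in ids]
    return rng.choices(ids, weights=weights, k=1)[0]
```

In this sketch, lowering the temperature concentrates probability on the highest-scoring token, while lowering top_p shrinks the candidate set before sampling.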

Role Commands

After a user asks a question, the application responds according to the task role defined in Role Commands. You can use these instructions to constrain the model's response language, tone, and so on. Currently, Tencent Cloud ADP supports QA output in Chinese and English.

Version: Saves the current prompt draft as a version, together with a version description. Saved versions can be viewed and copied under View Version Record, which only shows versions created in the current prompt content box. In Content Comparison, you can select two versions to view the differences between their prompt content.

Template: A preset format template for role commands. Following the template generally gives better results. After writing a command, you can also click Template > Save as Template to save it as a template.

AI One-Click Optimization: After drafting the initial role command, click One-Click Optimization. The model refines the content based on your input so that it better meets your requirements.

Note:

The AI one-click optimization feature will consume the user's token resources.

Welcome Words

After you fill in the welcome message, it is displayed on the client homepage. Application-level variables can be inserted into the message. You can also use AI One-Click Optimization to generate the welcome message.

Knowledge

The knowledge base is a module for importing, processing, and maintaining knowledge.

The platform divides knowledge bases into two types: the default knowledge base, and knowledge bases created under "Knowledge Base".

Default knowledge base: Each application has exactly one default knowledge base. It can only be used by that application and cannot be used by other applications.

Knowledge base: Under the same root account, knowledge bases and applications can be associated flexibly. A knowledge base can be shared by multiple applications, and an application can reference multiple knowledge bases. You can create and maintain knowledge bases in "Knowledge Base" and reference them in knowledge management under an application.

The Knowledge section lists the default knowledge base and the referenced knowledge bases of the application. For each knowledge base, you can set retrieval recall, search scope, and the knowledge base model.

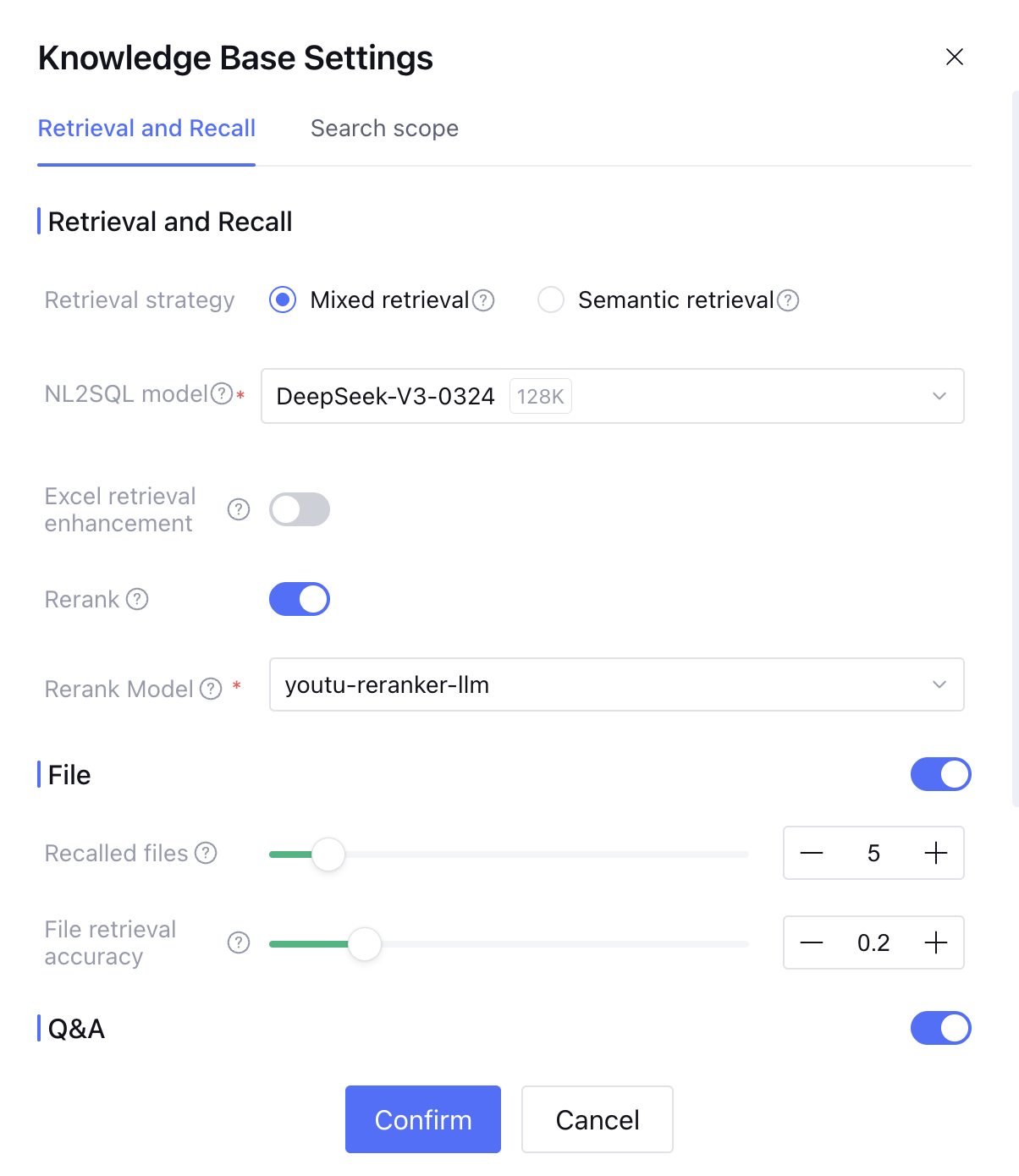

1. Retrieval recall:

Retrieval strategy: hybrid retrieval or semantic retrieval.

Hybrid retrieval: runs keyword retrieval and vector retrieval simultaneously. Recommended for scenarios that require both string matching and semantic association; it gives the most comprehensive results.

Semantic retrieval: recommended for scenarios where the query and the text fragments have little vocabulary overlap and semantic matching is required.

Excel search enhancement: when enabled, Excel spreadsheets can be queried and computed over using natural language, but reply time may increase.

Result reordering: when enabled, you can select a reordering model. After retrieval and recall, the model reorders the recalled slices by analyzing the user question, placing the content most similar to the question first. The platform offers two preset reordering models, or you can configure external reordering models in the model plaza.

File: When enabled, the large model answers questions based on the document library you build. You can upload files directly or add web pages. The large model parses and learns from the files you upload. Related content can be viewed in File Overview.

Number of document recalls: the top N document fragments with the highest match rate are retrieved and passed to the large model for reading comprehension.

Document retrieval match rate: text fragments that meet this threshold are returned to the large model as reference for the reply. Content with a match rate below the set value is not recalled. A lower value recalls more fragments but may reduce accuracy.

QA: When enabled, the large model will answer questions based on the Q&A database you build. You can choose to directly upload files for batch import, manually enter Q&A content, or automatically generate Q&A from files in the document library. Related content can be viewed in QA.

QA database answer reply: direct reply or polished reply.

Direct reply: when the similarity of the matched question exceeds the reference value, the stored answer is used to reply directly.

Polished reply: after an answer is matched, it is polished before being used as the reply.

Number of QA recalls: the top N QAs with the highest match rate are retrieved and passed to the large model for reading comprehension.

QA retrieval match rate: Q&A content that meets this threshold is returned to the large model as reference for the reply. Content with a match rate below the set value is not recalled. A lower value recalls more Q&A content but may reduce accuracy.

Database: when enabled, the large model answers questions based on the third-party databases you have integrated.

2. Search scope: provides different answers to different users by limiting each inquiry to a different knowledge range. For details, see the search-related settings of the knowledge base.
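The interaction between the recall count and the match rate described under retrieval recall can be sketched as follows. This is a simplified illustration, not the platform's implementation: the `Hit` class, the `recall` function, and the idea that every fragment carries a single score between 0 and 1 are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    text: str
    score: float  # match rate in [0, 1] from a hypothetical retriever


def recall(hits, top_n, min_match_rate):
    """Drop hits below the match-rate threshold, then keep the top N by score."""
    eligible = [h for h in hits if h.score >= min_match_rate]
    eligible.sort(key=lambda h: h.score, reverse=True)
    return eligible[:top_n]
```

With a threshold of 0.6 and a recall count of 2, only the two best fragments scoring at least 0.6 would be passed to the model. Lowering the threshold admits more fragments, which matches the trade-off noted above: broader recall at the possible cost of accuracy.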

Workflow

Workflows handle interaction in complex business scenarios. You can enable or disable each workflow on the workflow management page. For an introduction to workflows and how to configure them, see What is workflow?

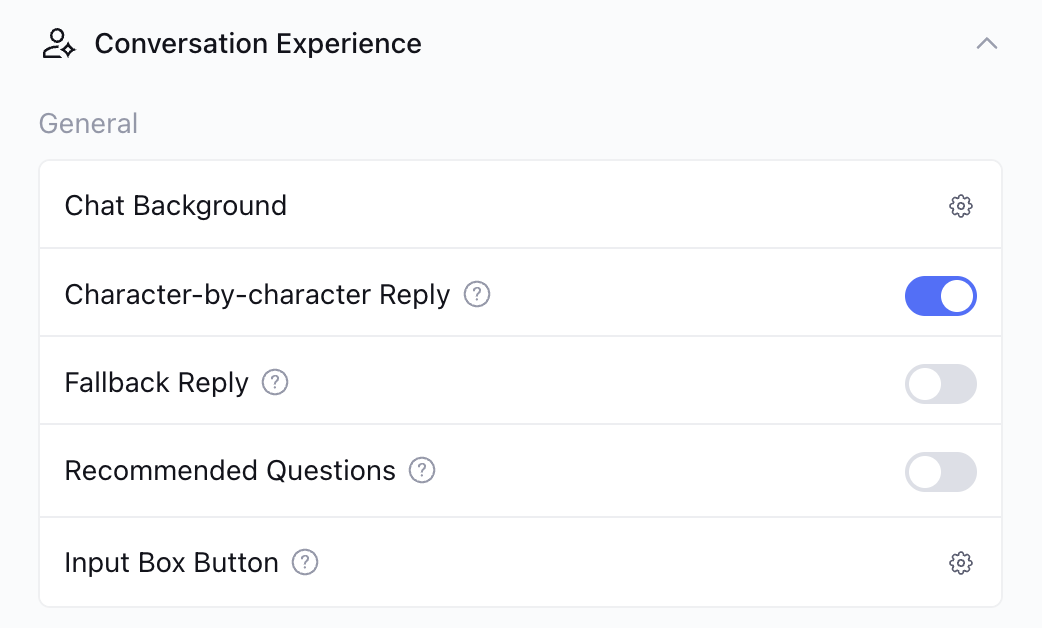

Chat Experience

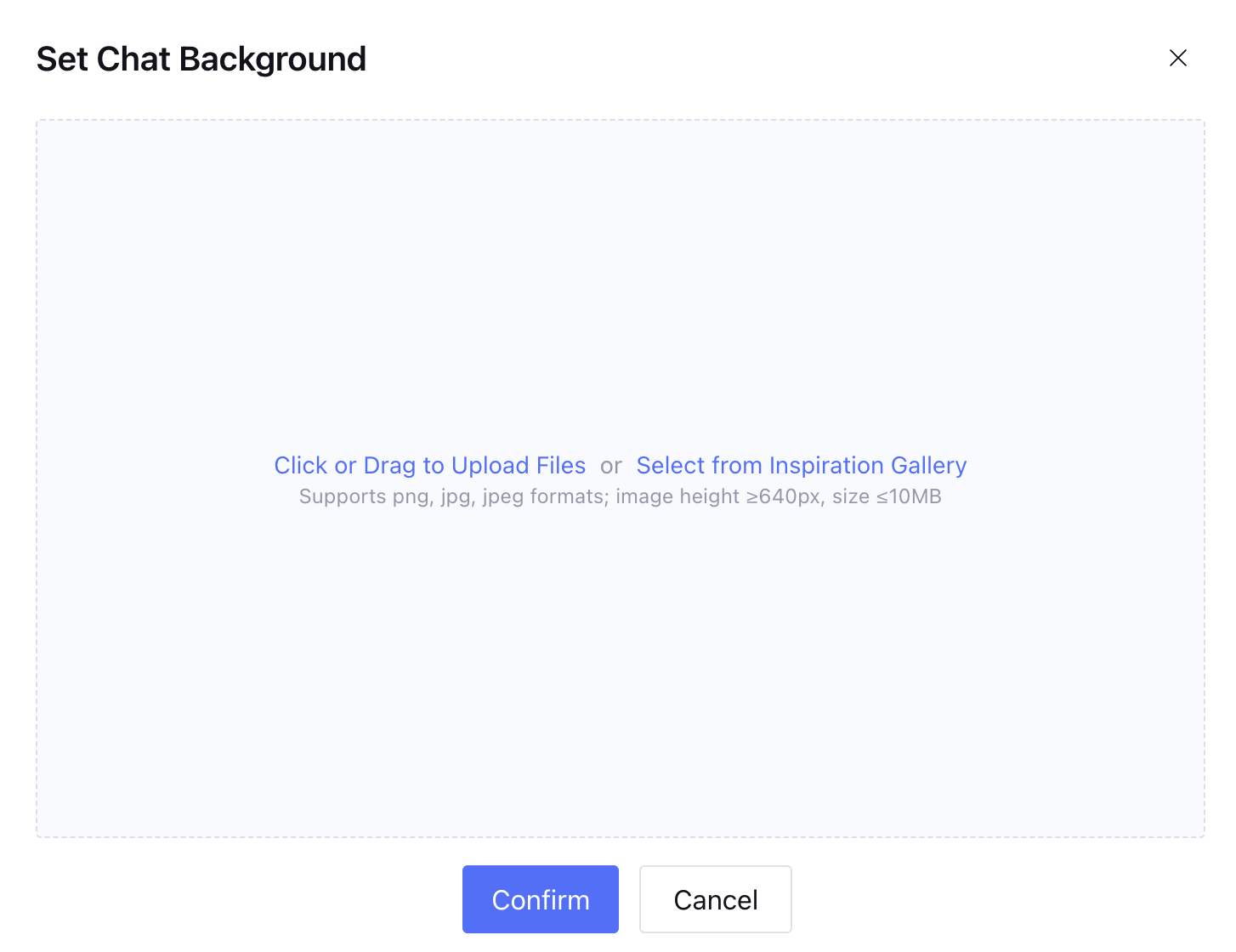

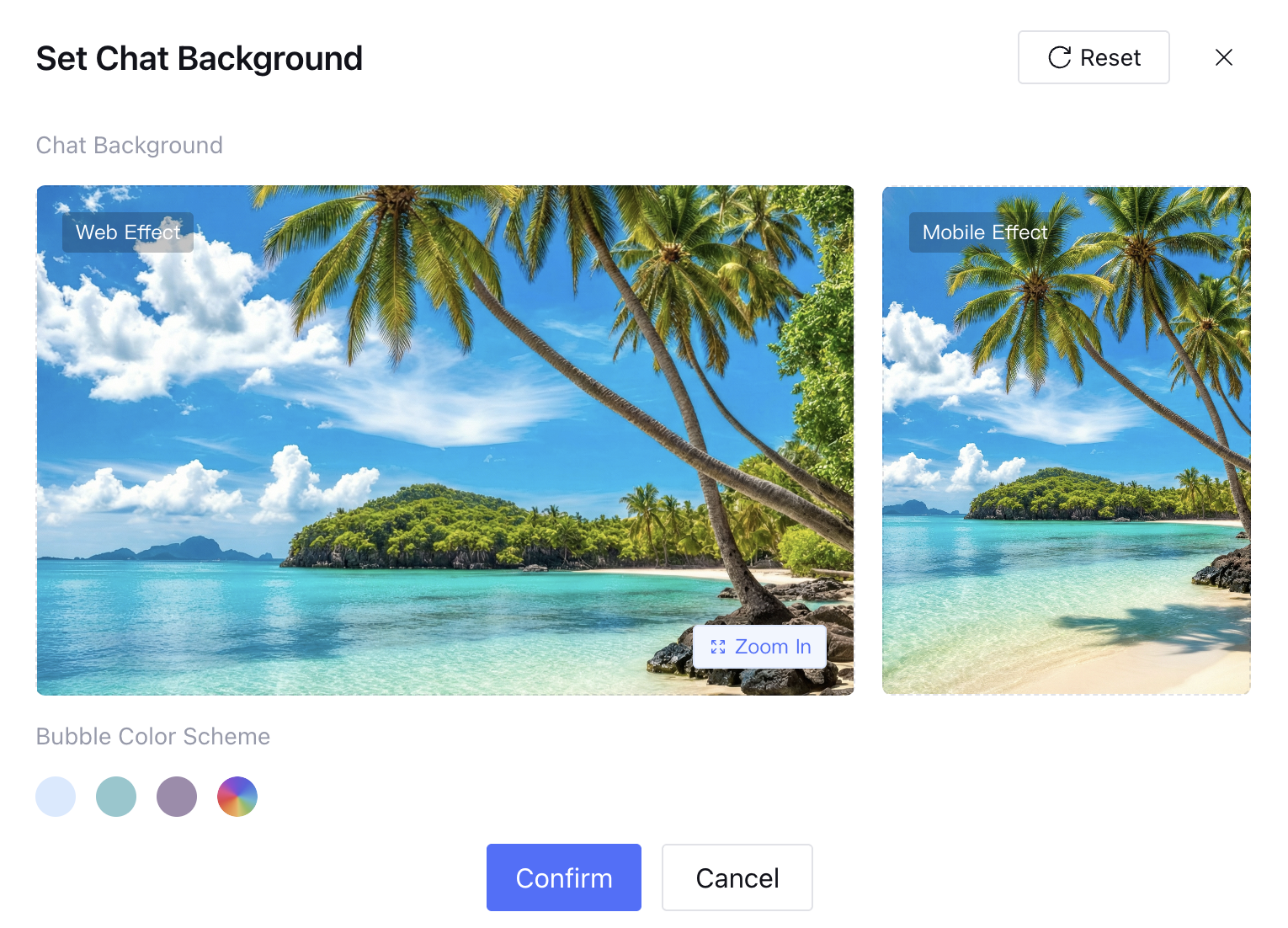

Chat background

You can set a background style for the chat interface. Click the set button to choose a custom background image, either by uploading a local file or by selecting one from the image library.

1. Upload a local file or select a background image from the image library.

2. Preview the effect on web and mobile, and set the bubble color scheme.

3. Click Confirm to apply the chat background.

Reply word by word

When enabled, the large model's reply is streamed and displayed word by word as it is generated. When disabled, the reply is output all at once after generation completes.

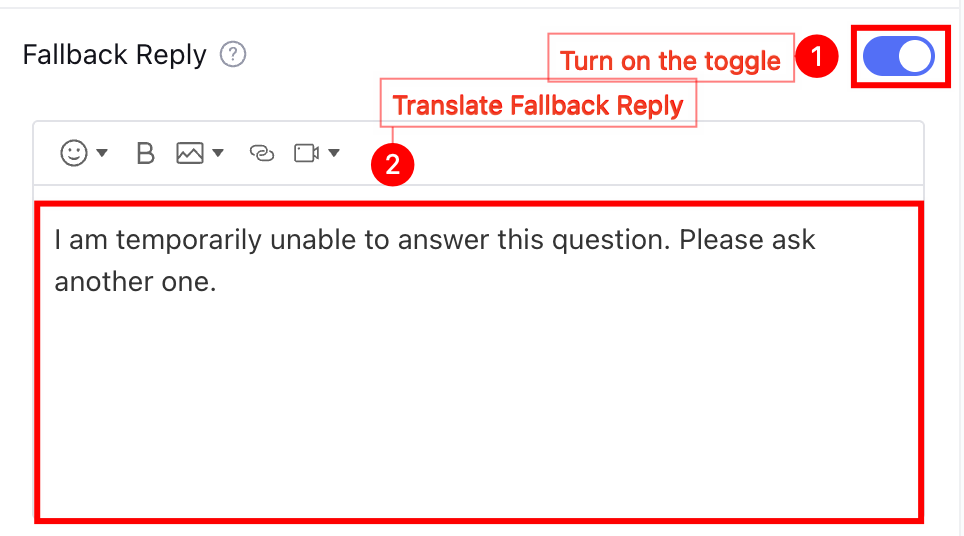
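The difference between the two output modes can be sketched with a Python generator. This is only an illustration of streaming versus buffered delivery; the `deliver` function and the idea of a reply arriving as a sequence of text chunks are assumptions, not part of the platform API.

```python
from typing import Iterable, Iterator


def deliver(chunks: Iterable[str], stream: bool) -> Iterator[str]:
    """Yield each chunk as it arrives when streaming is on; otherwise
    buffer everything and yield the complete reply once."""
    if stream:
        for chunk in chunks:
            yield chunk
    else:
        yield "".join(chunks)
```

For example, `list(deliver(["Hel", "lo"], stream=True))` produces two pieces, while `stream=False` produces the single string `"Hello"`.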

Fallback response

When enabled, if a user's question falls outside the scope of the configured knowledge sources, the large model replies based on the fallback content entered here, so that the question does not go unanswered.
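As a rough sketch of this behavior, the function below returns the fallback content when nothing was recalled from the knowledge sources. It assumes that "outside the scope" can be approximated by an empty recall result and that the fallback text is returned as-is; the names `reply` and `generate_answer` are hypothetical.

```python
def reply(recalled_fragments, fallback_text, generate_answer):
    """Return the fallback content when nothing was recalled; otherwise
    let the (hypothetical) generate_answer callable produce the reply."""
    if not recalled_fragments:
        return fallback_text
    return generate_answer(recalled_fragments)
```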

Recommended questions

When enabled, after answering the current question, the large model automatically generates up to 3 recommended follow-up questions based on the Prompt content to guide users in continuing the dialogue.

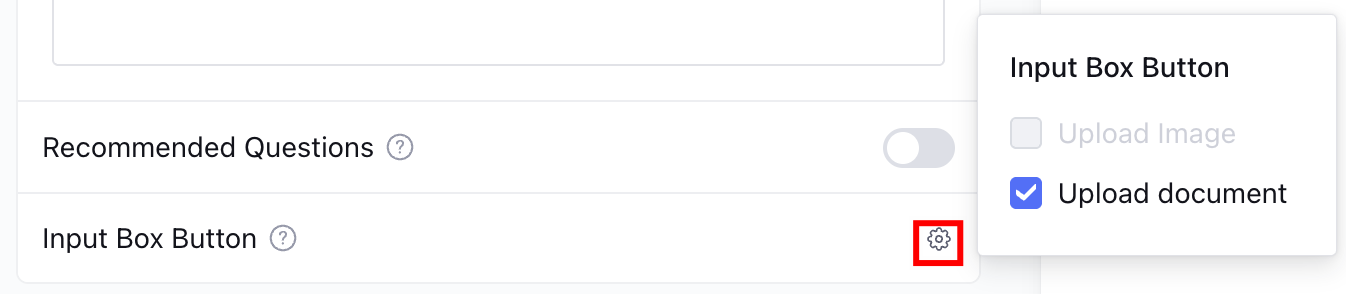

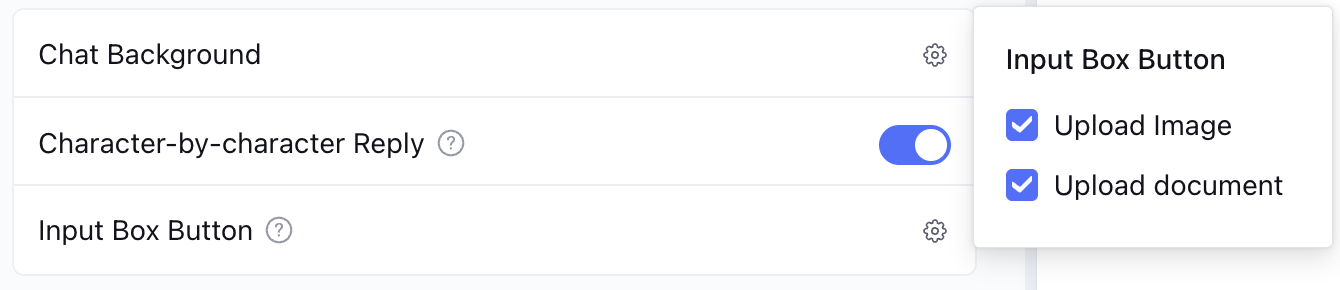

Input Box Button

You can configure the feature buttons shown in the chat input box. The available options are image upload and document upload:

Standard mode supports document upload only.

Multi-Agent Mode supports both document upload and image upload.

Variable and Memory

Variable

Variables visible in the global scope of an application include system variables, environment variables, API parameters, and application variables. For more details, see Variable Description. Click Settings to access the variable management page.

System variable: Variables provided by the application at run time. Users cannot define custom system variables or modify existing ones.

Environment variable: Used to store sensitive information such as API keys and user passwords. Click Create to open the environment variable creation page and add a variable. Default values are supported.

API parameters: Variables passed into the workflow through the custom_variables field when calling the Tencent Cloud Intelligent Agent development platform API (for details on the usage of this parameter, see Dialogue End API Documentation (HTTP SSE), Dialogue End API Documentation (WebSocket)). These variables are referenced in the workflow to execute subsequent business logic. Click Create to open the API parameter creation page and add a custom parameter. Default values are supported.

Application variable: A global variable that can be read and modified anywhere in the application and passed between workflows and between Agents. Users can also modify it manually.

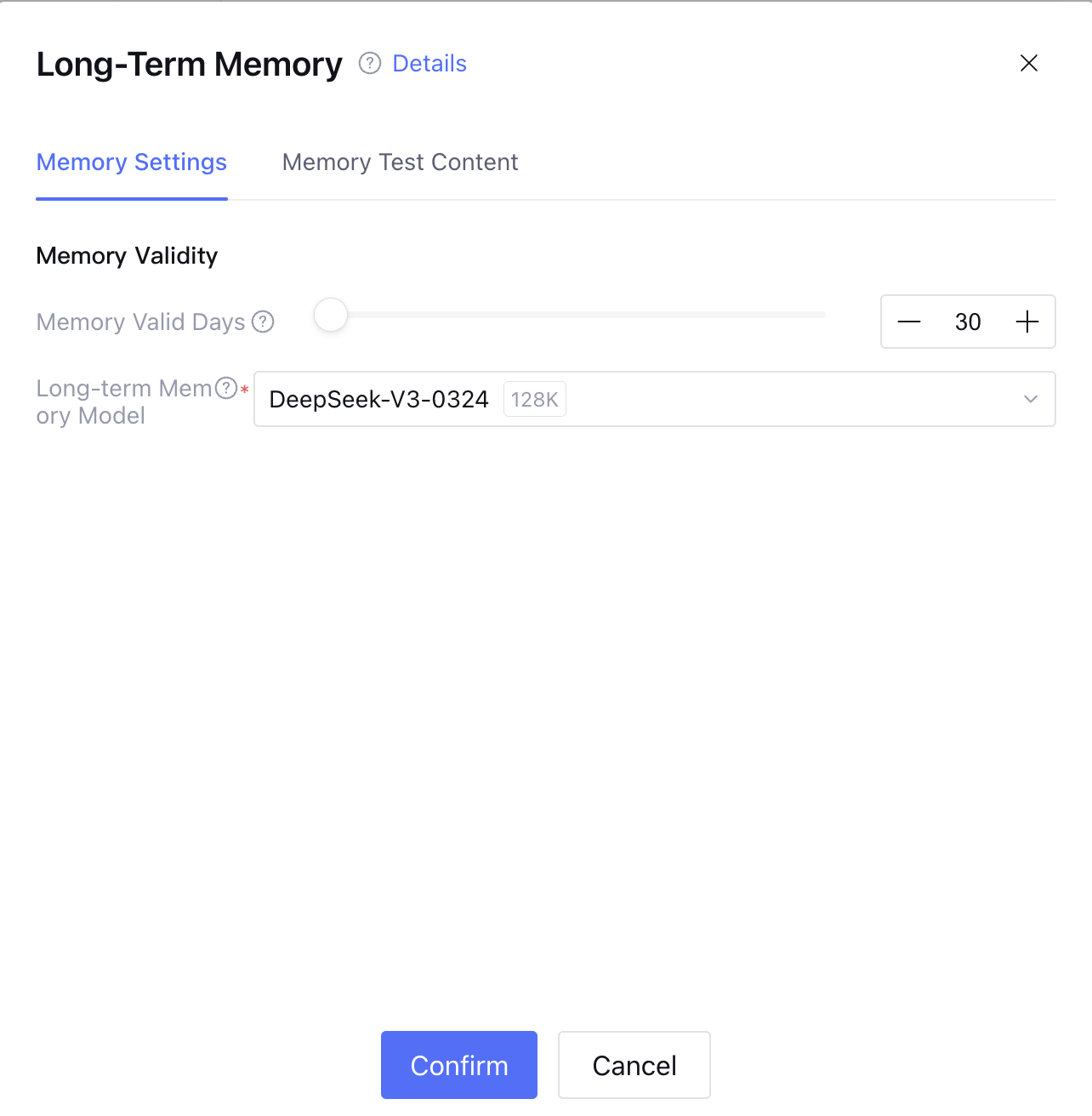
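The relationship between an API parameter's default value and the value supplied through `custom_variables` can be sketched as a simple merge. This is an assumption-laden illustration: the function name `resolve_api_parameters` is hypothetical, and this document does not state how the platform treats keys that were never declared, so the sketch simply ignores them.

```python
def resolve_api_parameters(declared_defaults, custom_variables):
    """Merge caller-supplied values over declared defaults.

    Assumption: keys that were never declared as API parameters are ignored.
    """
    resolved = dict(declared_defaults)
    for name, value in (custom_variables or {}).items():
        if name in resolved:
            resolved[name] = value
    return resolved
```

Under these assumptions, a parameter keeps its default unless the caller passes a value for it in `custom_variables`.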

Long-Term Memory

Long-Term Memory remembers information from conversations with users to deliver a personalized dialogue experience. After Long-Term Memory is enabled, the model captures and saves personalized user information from conversations. For more details, see Long-Term Memory.

Memory settings let you configure the retention period for long-term memory, from 1 to 999 days (default: 30 days). Memory content older than the retention period is deleted.

Memory test content displays all memory content within the validity period. You can modify or delete individual entries, or clear all memory content.
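The retention rule can be sketched as a filter over timestamped memory entries. The entry layout (a dict with a `saved_at` timestamp) and the function name `prune_memories` are assumptions for illustration; only the 1 to 999 day range comes from the settings described above.

```python
from datetime import datetime, timedelta


def prune_memories(memories, retention_days, now):
    """Keep only memories saved within the retention period."""
    if not 1 <= retention_days <= 999:
        raise ValueError("retention_days must be between 1 and 999")
    cutoff = now - timedelta(days=retention_days)
    return [m for m in memories if m["saved_at"] >= cutoff]
```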

Advanced Settings

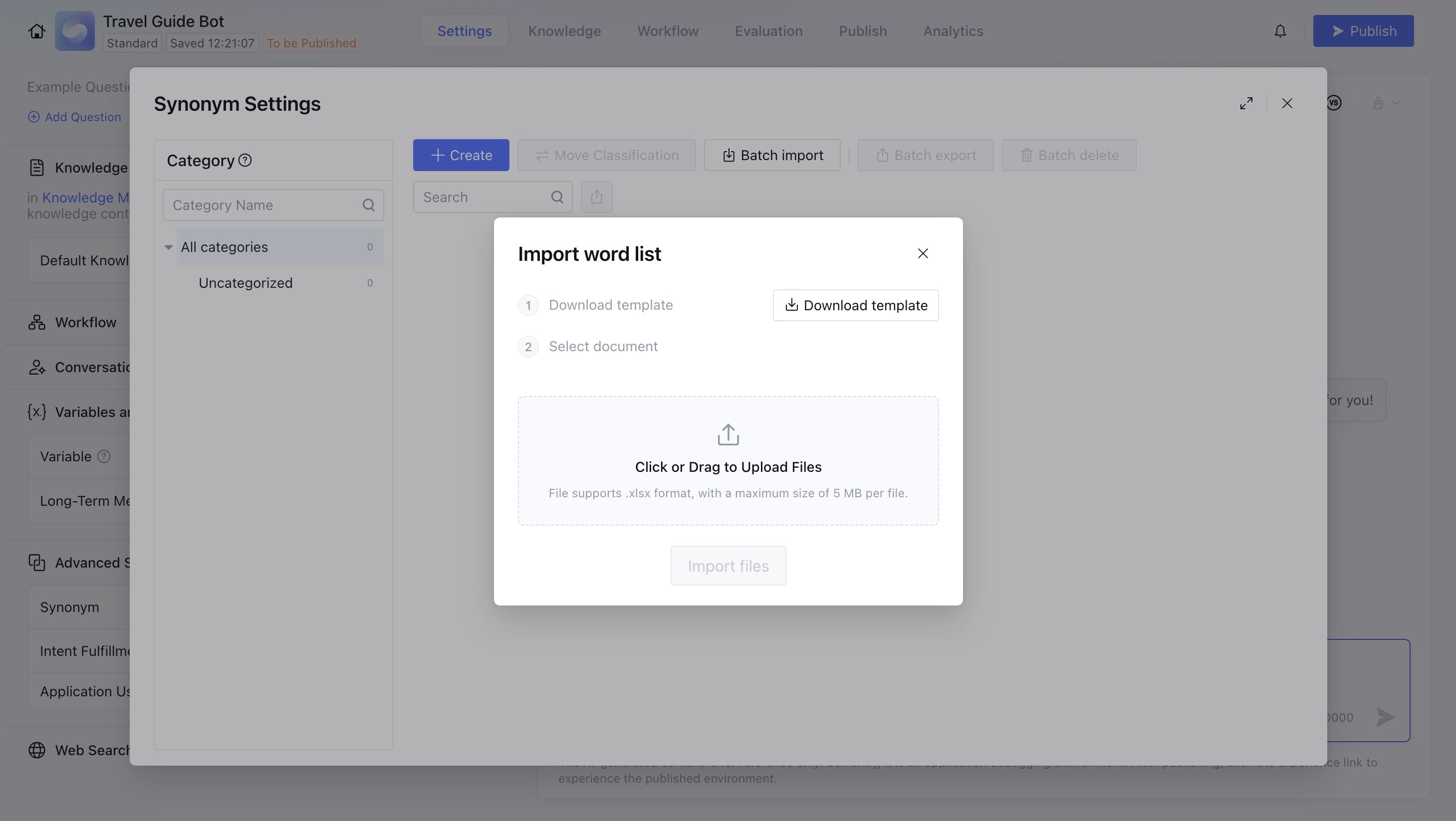

Synonym Settings

Import the proper nouns used in your business scenarios. Synonyms that appear in a query are replaced with the unified names used in the knowledge base before retrieval, which improves accuracy.

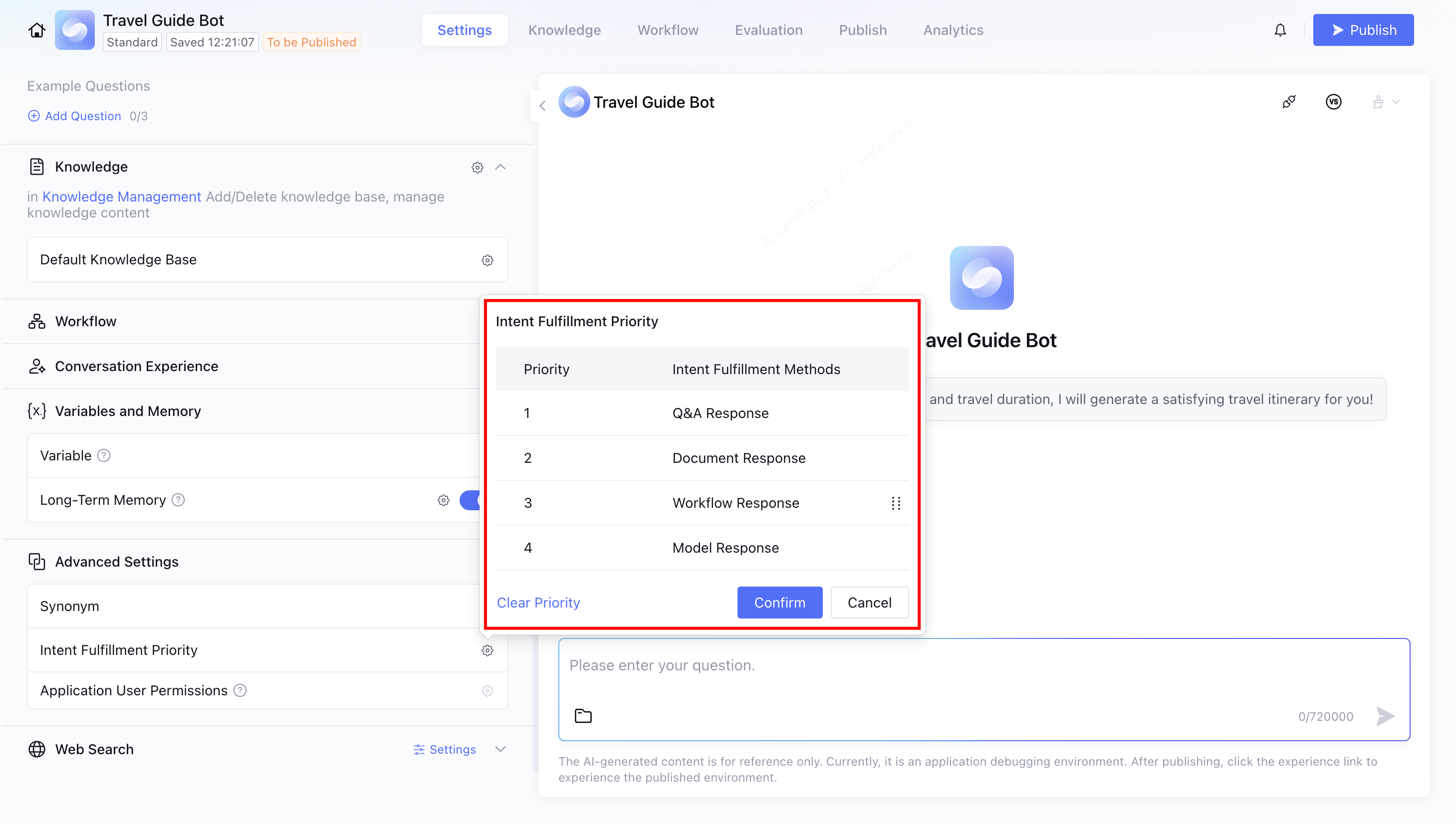
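Synonym replacement before retrieval can be sketched as a query-normalization step. The function name `normalize_query` and the dictionary layout (synonym mapped to standard term) are hypothetical, and the matching shown here is plain case-insensitive substring matching, which is an assumption rather than the platform's documented behavior.

```python
import re


def normalize_query(query, synonyms):
    """Replace each synonym in the query with its standard term.

    Longer synonyms are tried first so that a short synonym cannot
    split a longer one.
    """
    if not synonyms:
        return query
    ordered = sorted(synonyms, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(s) for s in ordered), re.IGNORECASE)
    lookup = {s.lower(): standard for s, standard in synonyms.items()}
    # Fall back to the matched text if case folding produces an unknown key.
    return pattern.sub(lambda m: lookup.get(m.group(0).lower(), m.group(0)), query)
```

Because the sketch matches substrings without word boundaries, a real deployment would need to decide how to treat synonyms that occur inside longer words.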

Intent Attainment Priority

Normally, you do not need to set intent attainment priority.

If the QA entries and workflows you have configured on the platform are highly similar, the large model may be less accurate when deciding which one to invoke. In that case, you can set an appropriate priority.

Application User Permissions

By default, users can view all knowledge. After permissions are set, each user can only access knowledge within their own permissions when asking questions. For more information, see Application User Permission Description.
