Technical Evolution and Usage Practices of Online DDL

Download

Focus Mode

Font Size

Introduction

In the history of database development, DDL (Data Definition Language) operations have always posed significant challenges. In early versions of mainstream commercial databases, traditional DDL operations often required placing an exclusive lock on data tables to prevent data inconsistencies during execution. This locking mechanism caused databases to become unavailable during DDL operations, which is unacceptable for large-scale modern applications requiring 7*24 operation.

To address this issue, ORACLE introduced Online Table Redefinition starting from 9i, and MySQL introduced Online DDL from version 5.6, both aiming to significantly reduce (or eliminate) application downtime required for modifying database objects (this feature is collectively referred to as Online DDL). Subsequently, MySQL 8.0 further introduced the Instant algorithm, marking a major breakthrough in DDL operations. Instant DDL allows certain operations—such as adding columns at the end, modifying column names, renaming tables, and so on—to be executed immediately without locking tables or copying data. This greatly accelerates DDL operations and minimizes business impact. Nevertheless, DBAs may still need to rely on external tools like pt-online-schema-change (pt-osc) in certain scenarios, as Online DDL does not support all types of DDL operations, and pt-osc offers additional flexibility through features like flow control.

With the rise of distributed databases, Online DDL presents new challenges. In distributed environments, DDL operations must be executed simultaneously across multiple nodes, requiring not only guaranteed safety for concurrent DDL & DML operations but also considerations for performance, execution efficiency, and crash-safe mechanisms.

This article shares the technical evolution and practical implementation of Online DDL in the latest product of the TencentDB TDSQL series: TDSQL Boundless.

Introduction to TDSQL Boundless Architecture

TDSQL Boundless is a high-performance, high-availability enterprise-grade distributed database solution developed by Tencent for financial-grade application scenarios. It adopts a containerized cloud-native architecture, delivering high-performance computing capabilities and cost-effective massive storage at the cluster level.

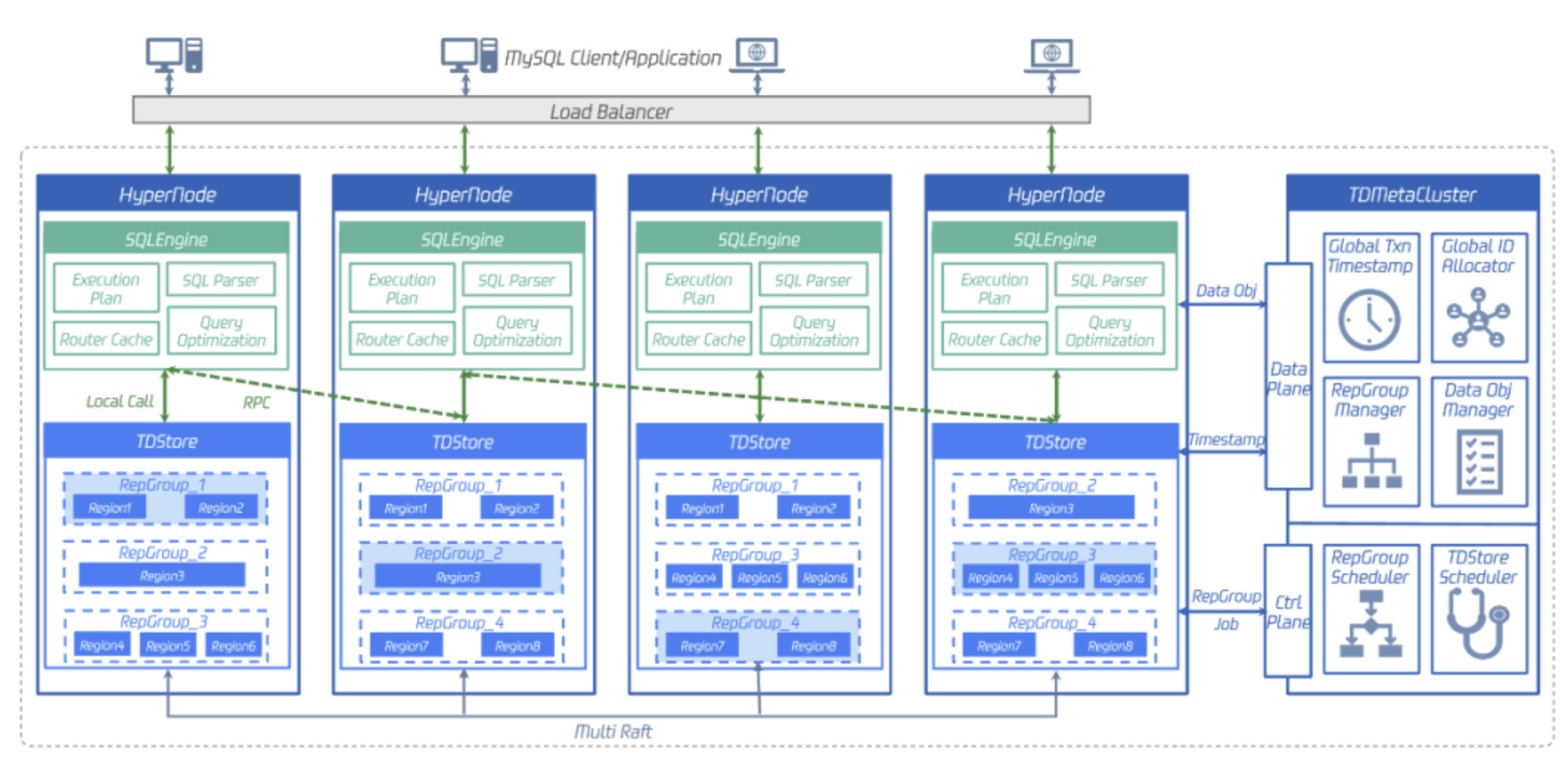

The figure below shows the architecture of TDSQL Boundless, where the entire instance is divided into two types of nodes:

The TDMetaCluster on the right serves as the management node: responsible for management of cluster metadata and intelligent scheduling.

The HyperNode on the left is a peer node, also known as a hybrid node: Each node comprises two components—the computing layer (SQLEngine) and the storage layer (TDStore). The SQLEngine layer is responsible for SQL parsing, query plan generation, query optimization, and so on, and sends transaction-related read/write requests to the underlying TDStore.

TDSQL Boundless architecture and features: fully distributed + storage-compute integration/storage-compute separation + data plane/control plane separation + high scalability + global consistency + high compression ratio.

Tackling the Challenges of TDSQL Boundless Online DDL

Current approach to executing DDL in traditional standalone MySQL:

1. instant DDL (ALGORITHM=INSTANT): Only modifies metadata in the data dictionary without copying data or rebuilding the table, leaving the original table data unaffected. Such DDL statements can be executed directly and completed immediately.

2. INPLACE DDL (ALGORITHM=INPLACE): Rebuilds the table "in-place" at the storage engine layer without blocking DML operations. However, for large table modifications, this may lead to prolonged primary-replica inconsistency. For such DDL statements, if greater control over the DDL process is required and replica lag is a concern, it is recommended to use third-party online schema change tools like pt-osc.

3. COPY DDL (ALGORITHM=COPY): Operations such as "modifying column types" or "changing table character sets" only support table copy, which blocks DML operations and does not qualify as Online DDL. For such DDL statements, it is recommended to use third-party online schema change tools like pt-osc.

Overall, beyond INSTANT DDL, traditional standalone MySQL primarily relies on third-party online schema change tools to execute DDL operations. However, this approach presents several notable drawbacks:

1. DDL may fail to acquire the lock due to large transactions or long-running queries, resulting in persistent waits and repeated failures.

2. All third-party tools require rebuilding the entire table, significantly sacrificing performance to ensure stability. Tests show that compared to native DDL execution modes (INSTANT/INPLACE/COPY), third-party tools exhibit a 10-fold or even orders-of-magnitude performance degradation in DDL execution. This is unacceptable given the current rapidly growing data volumes.

Compared to traditional standalone MySQL, executing DDL in distributed databases faces more and complex challenges:

1. Whether the native INSTANT DDL's ultra-fast execution feature is compatible and retained.

2. How to solve the concurrency control issue between DDL requiring data backfill and DML while maintaining execution efficiency?

3. In native distributed databases with a storage-compute separation architecture, compute nodes are loosely coupled (stateless). When DDL changes are executed on one compute node, how do other compute nodes within the same instance promptly detect these DDL modifications? Simultaneously, data read/write accuracy must be guaranteed throughout the DDL change process.

4. Whether in native distributed databases, where data is distributed across multiple nodes—with both node scale and storage capacity significantly larger than standalone MySQL—bypassing the storage layer directly + combining multi-machine parallelism can significantly accelerate DDL operations requiring table reconstruction.

5. Distributed Database DDL crash-safe issue.

Below we will explore how TDSQL Boundless employs a series of innovative strategies to overcome various challenges faced by Online DDL.

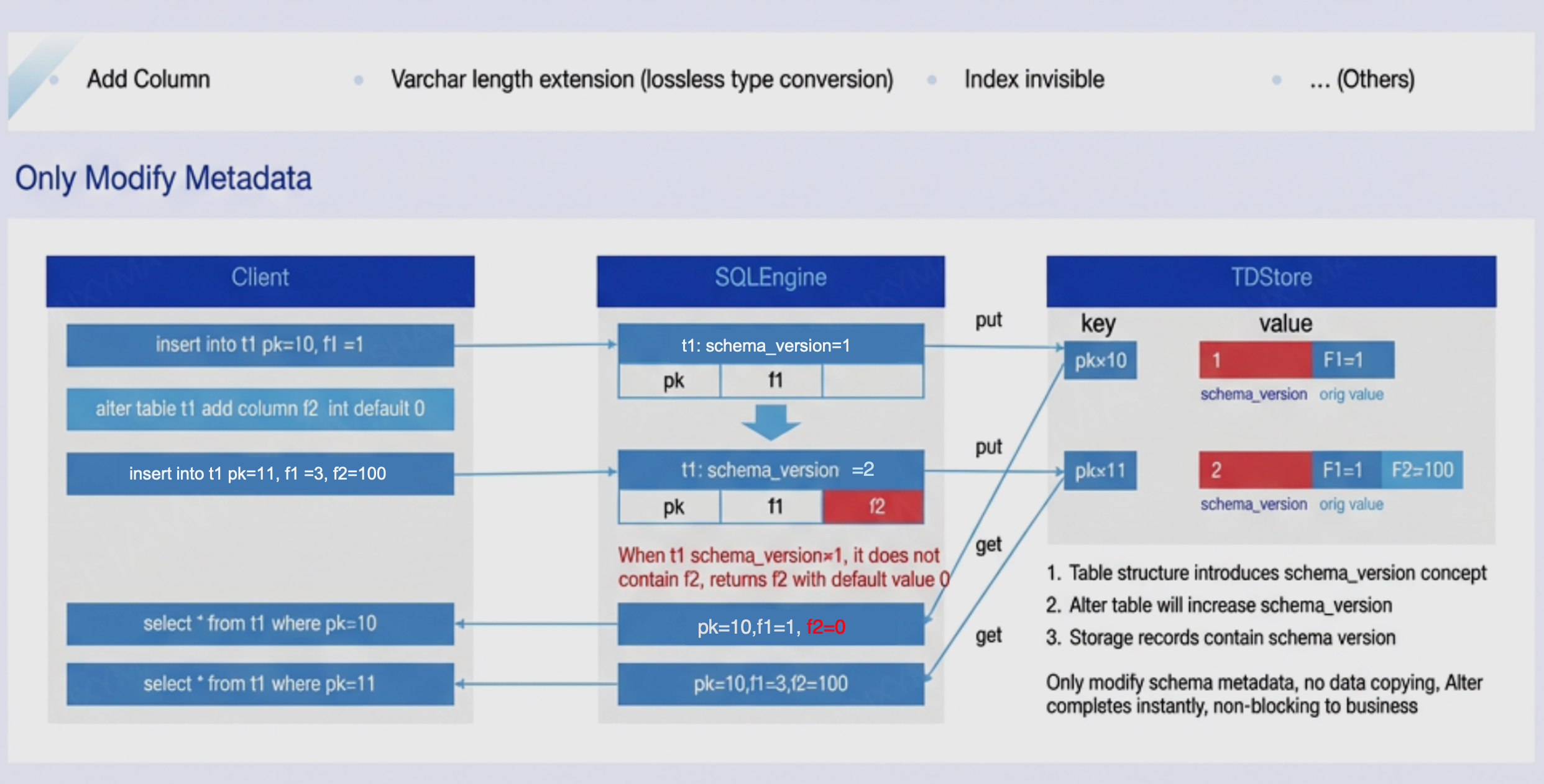

1. By introducing multi-version schemas to solve instant DDL issues:

By introducing the concept of a version number (schema version) in the table structure. As shown in the figure above, table t1 initially has version 1; after a column is added, the version number becomes 2. New data rows carry the version number upon insertion. During data reading, the row version must be checked first: if the row version is 2, the current table structure is used for parsing; if the row version is 1 (lower than the current version), after it is determined that column F2 is absent in this version's schema, the default value is populated directly before returning to the client. This multi-version schema parsing mechanism enables non-destructive type changes—such as adding columns or extending varchar length—to complete instantly by modifying metadata only.

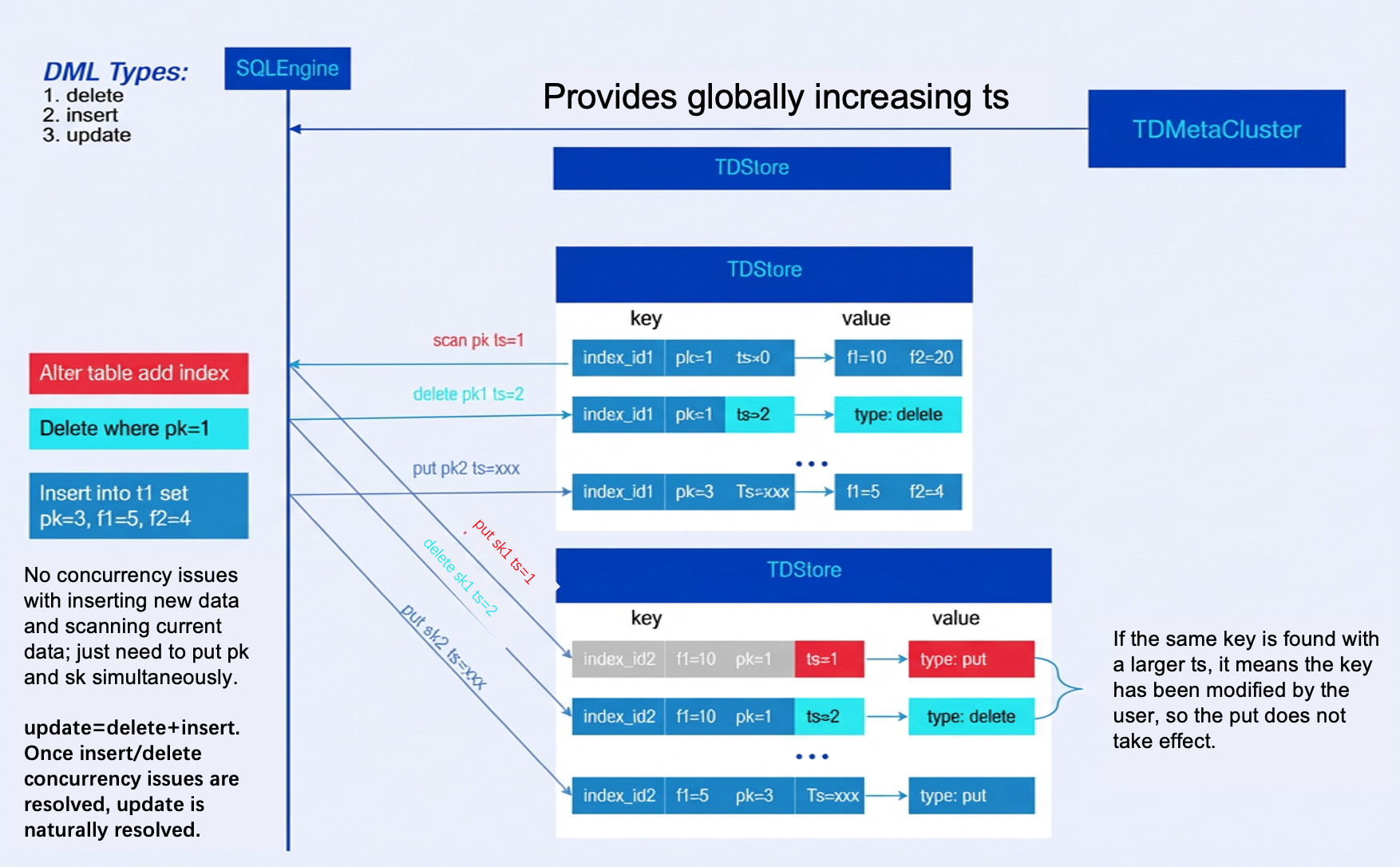

2. By introducing (Thomas Write Rule) to resolve concurrency control issues between DDL requiring data backfill and DML:

By introducing (Thomas Write Rule) to provide a concurrency control mechanism for DDL & DML operations, ensuring serialization order of database protocols. Compared to standard timestamp-based concurrency control methods, (Thomas Write Rule) ignores obsolete writes, reducing the likelihood of transactions being aborted.

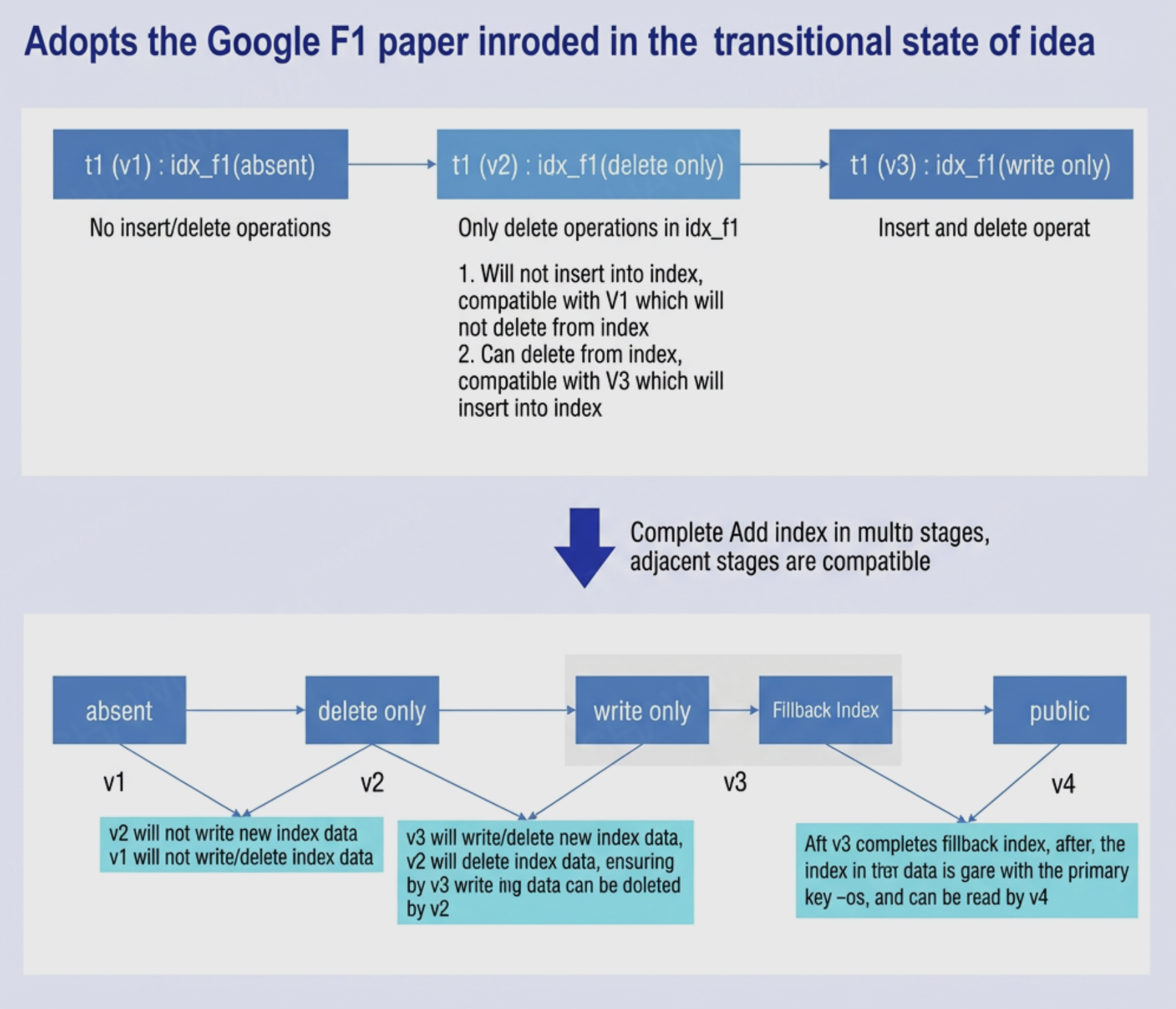

3. Referencing Google F1's schema change methodology, transitional states are introduced to ensure smooth schema transitions during the change process:

The fundamental concept abstracted from this: when an action cannot immediately transition from one state (before adding an index) to another (after adding an index), transitional intermediate states can be introduced to ensure compatibility between adjacent states. Although entities cannot instantly enter a specific state, as long as they remain within two compatible states and maintain compatibility while progressively advancing through state transitions, they can gradually reach the final state and complete the entire transition.

The figure illustrates a typical add index operation in DDL. During the state transition of all SQLEngines from v1 -> v4, two transitional states (v2 and v3) are introduced. It can be observed that:

3.1 Coexistence of v1 and v2: v1 represents the old schema, while v2 is the new schema (delete-only). At this stage, all SQLEngines are either in the v1 or v2 state. Since the index is only manipulated during delete operations and no index has been created yet, no data inconsistency issues arise.

3.2 Coexistence of v2 and v3: v2 represents the new schema (delete-only), while v3 is the new schema (write-only). At this stage, all SQLEngines are either in the v2 or v3 state. SQLEngines in the v2 state can normally insert data, delete data, and manage indexes (both existing and new data lack indexes); SQLEngines in the v3 state can normally insert, delete data, and manage indexes. However, since indexes remain invisible to users in both v2 and v3 states, and users only see data, data consistency is maintained from the user's perspective.

3.3 Coexistence of v3 and v4: v3 represents the new schema (write-only), while v4 is the new schema (index addition completed). At this stage, all SQLEngines are either in the v3 or v4 state. SQLEngines in the v3 state can normally insert and delete data and indexes (existing data still lacks indexes); SQLEngines in the v4 state have populated indexes for existing data, representing the final state after add index. It can be observed that in the v3 state, users see complete data; in the v4 state, both complete data and indexes are visible to users, thus maintaining data consistency.

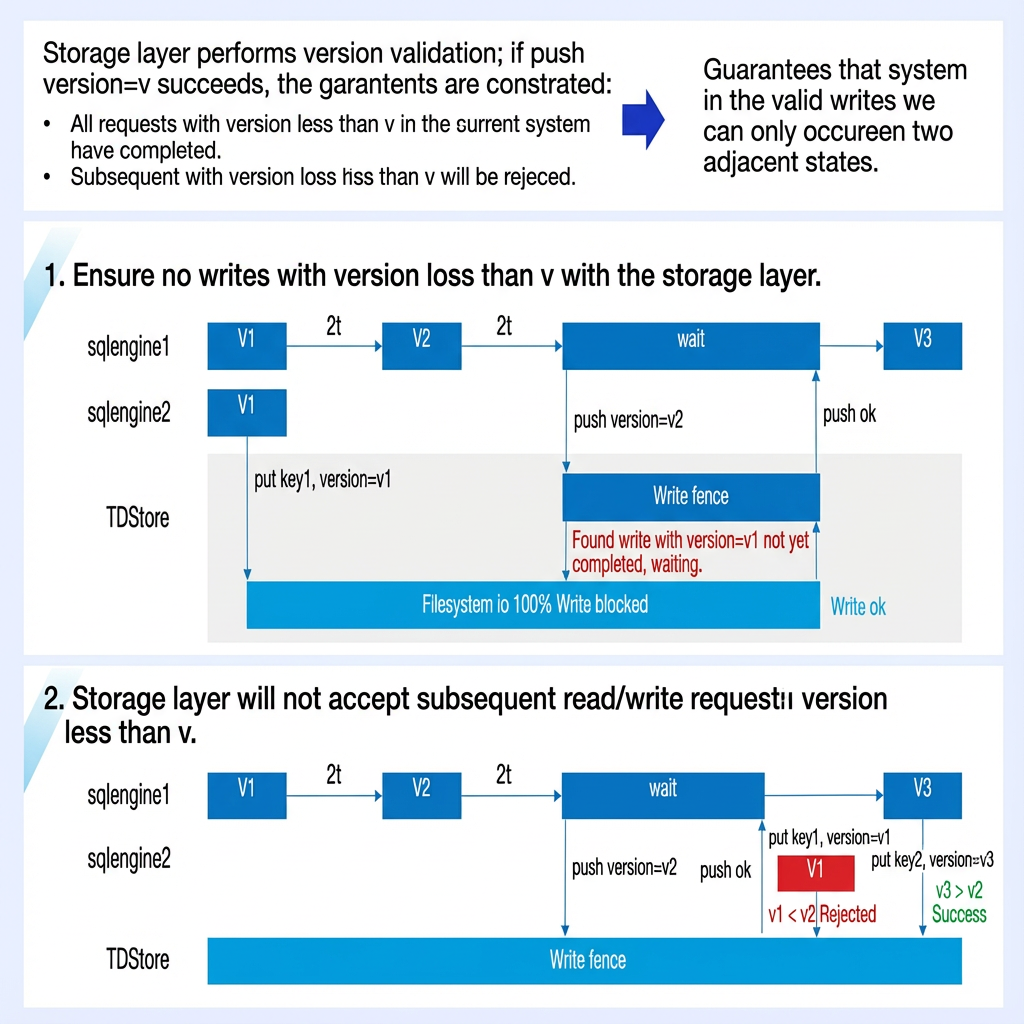

4. By incorporating the concept of transitional states, a Write Fence mechanism is designed: Through version verification of requests at the storage layer, it ensures that successful writes from multiple SQLEngines can only occur between two adjacent states at any given moment, preventing data inconsistency.

Write Fence is an internal data structure of TDSQL Boundless, storing the mapping of schema_obj_id → schema_obj_version. It addresses the collaboration challenge between the SQLEngine at the computation layer and TDStore at the storage layer. We need to ensure TDStore is aware of relevant changes before the SQLEngine status is advanced.

Before each SQLEngine enters the next state, it must push the current version to TDStore (push write fence). A successful push ensures two constraints:

In the current system, all requests with versions lower than the pushed version have been completed.

Subsequent requests with versions lower than the pushed version will be rejected.

Through the version verification mechanism at the storage layer, it ensures that successful writes from multiple SQLEngines can only occur between two adjacent states at any given moment, preventing data inconsistency.

The figure also demonstrates that even in extreme exception scenarios, if a node attempts to send a request with a version lower than v2 after successfully pushing the v2 version, the storage layer TDStore will detect that the request version is lower than the current v2 in the Write Fence and thus rejects the request. This ensures the correctness of the operation of the overall algorithm.

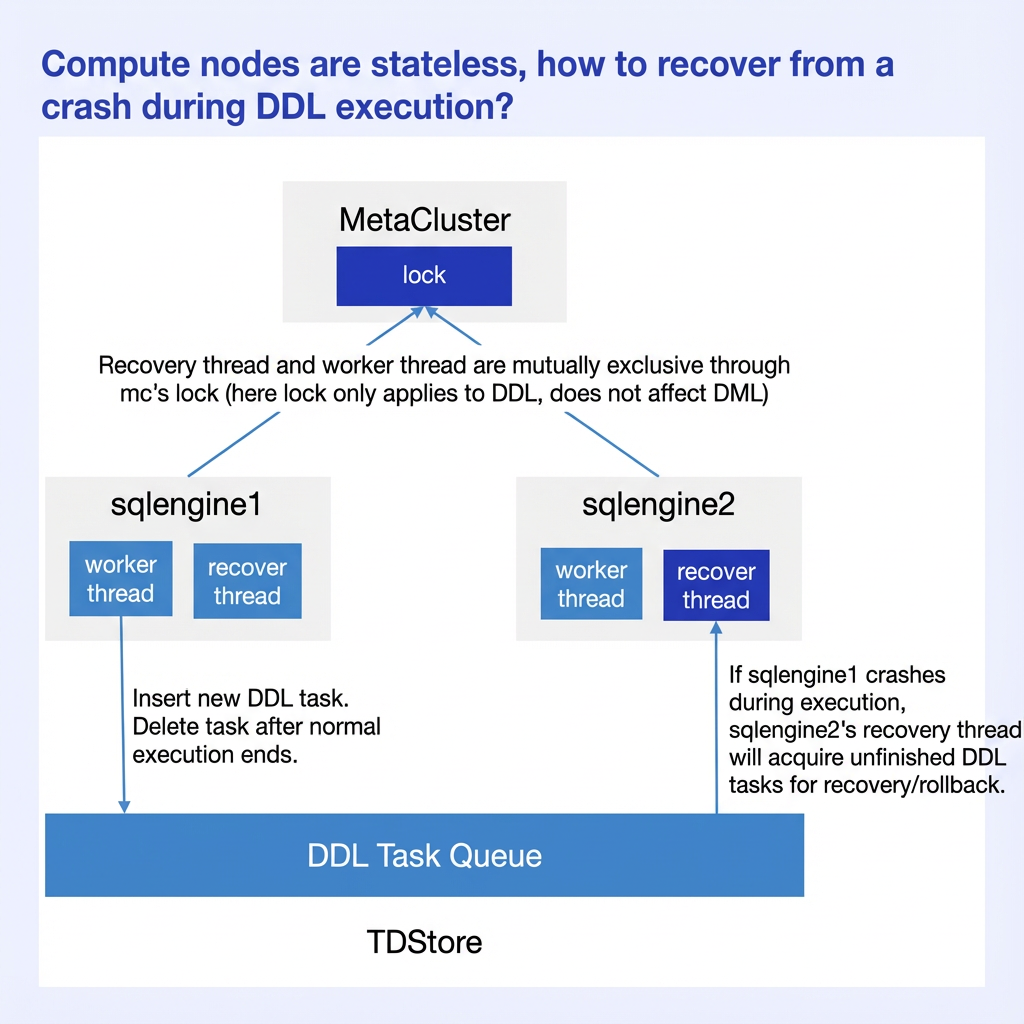

5. crash-safe Issue of DDL:

A DDL job queue + DDL recovery thread are introduced to ensure crash-safe for DDL. Note that the recovery thread operates independently from worker threads and does not wait for communication from each other. If execution fails due to network issues, the recovery thread takes over (the recovery thread may not reside on the same node as the original worker thread). Monitoring the DDL job queue is straightforward: query the

INFORMATION_SCHEMA.DDL_JOBS system table and verify the final execution status via the DDL_STATUS field.TDSQL Boundless currently supports Online DDL capabilities in most scenarios. For details, see OnlineDDL Description.

TDSQL Boundless Fast Online-DDL New Feature

From the above introduction, we can see that if data backfilling occurs during Online DDL in TDSQL Boundless, it adopts the (Thomas Write Rule) for batch backfilling and accelerates the process through parallel execution. However, in practical usage, especially in scenarios with large data volumes, the execution time remains relatively long because Thomas Write requires comparing backfilled data with concurrent DML data using timestamps to determine which version to retain. Therefore, the latest version of TDSQL Boundless introduces the Fast Online-DDL feature: It uses a bulk load approach to organize backfilled data into external sst files and directly ingests them into the Lmax layer of TDStore. This eliminates the overhead of timestamp comparison and concurrency control, significantly improving execution efficiency.

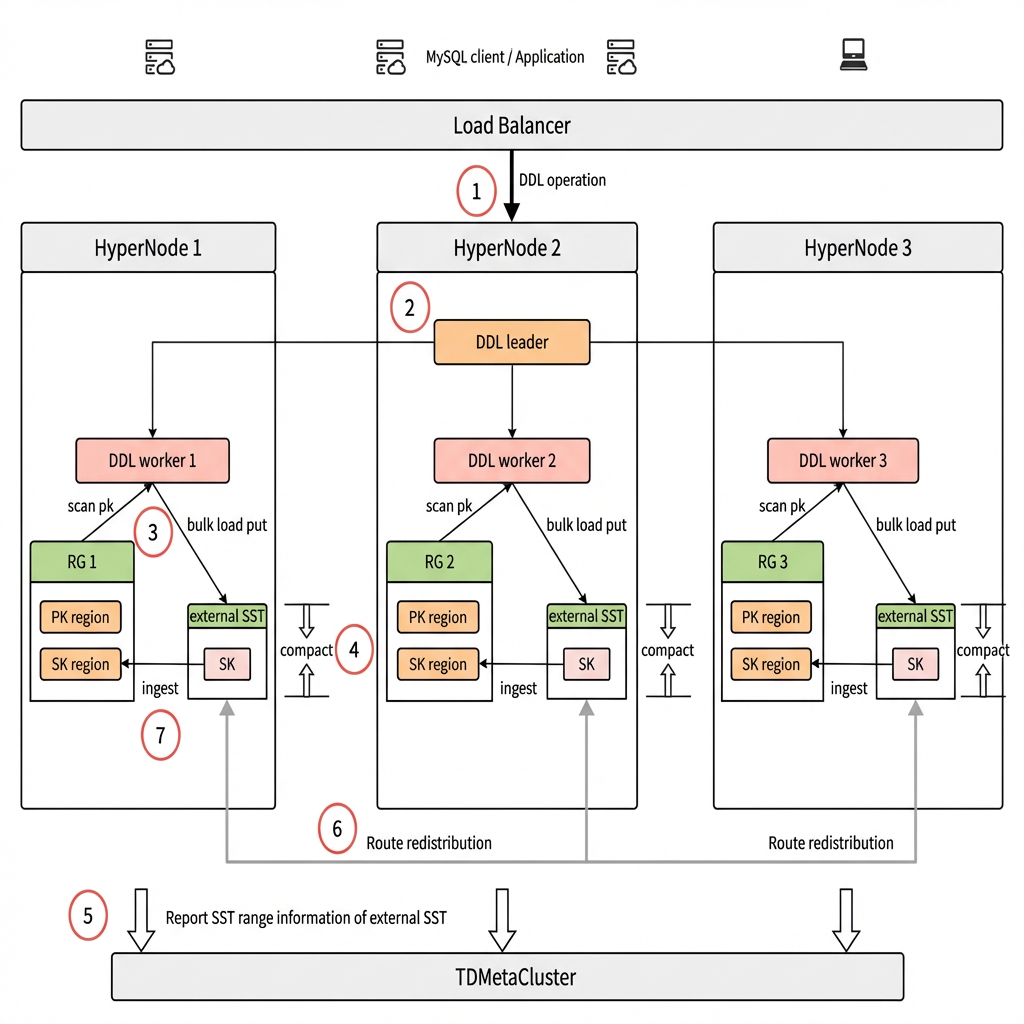

The diagram above illustrates the architecture of Fast Online-DDL. Let's examine how it works:

1. The user initiates a DDL operation request, which is randomly routed through the load balancer to a peer node in the TDSQL Boundless instance. Here, it is assumed to be routed to HyperNode 2.

2. The HyperNode 2 node executes the DDL operation as the DDL leader, assigning workers to all relevant HyperNode peer nodes based on the PK data distribution of the target object.

3. Each worker scans local PKs to generate index data and writes it into external sst files using the bulk load method.

4. After all workers complete scanning the PKs, they perform a compaction operation (compact) on the external sst files to ensure no duplicate keys exist within sst files at the same level.

5. The compacted sst range information is reported to the TDMetaCluster (mc) management node. Based on this information, mc divides these ranges into regions (a logical concept referring to small data segments) and assigns them to corresponding RGs (Replication Group, a logical concept where one RG contains multiple regions and serves as the smallest multi-replica data synchronization unit), generating new routing information. Simultaneously, mc temporarily disables the split and merge of routing for regions involved in SK.

6. The HyperNode reorganizes the external sst files based on the new routing information and transfers them to the corresponding HyperNode nodes.

7. The external sst files that meet the new routing criteria are directly ingested into the Lmax layer of the user RG's sst files. Upon completion, mc removes the restrictions on split and merge operations for region routing involved in SK.

TDSQL Boundless Fast Online-DDL Practice

The following steps will create a large partitioned table and use the DDL statement `add index` to test the performance improvement of Fast Online-DDL.

Hardware Environment

Node Type | Node Specifications | Number of Nodes |

HyperNode | 16-Core CPU/32 GB of Memory/Enhanced SSD Cloud Disk 300 GB | 3 |

Data Preparation

-- Create a partitioned table where the number of partitions is a multiple of the nodes:CREATE TABLE `lineitem` (`L_ORDERKEY` int NOT NULL,`L_PARTKEY` int NOT NULL,`L_SUPPKEY` int NOT NULL,`L_LINENUMBER` int NOT NULL,`L_QUANTITY` decimal(15,2) NOT NULL,`L_EXTENDEDPRICE` decimal(15,2) NOT NULL,`L_DISCOUNT` decimal(15,2) NOT NULL,`L_TAX` decimal(15,2) NOT NULL,`L_RETURNFLAG` char(1) NOT NULL,`L_LINESTATUS` char(1) NOT NULL,`L_SHIPDATE` date NOT NULL,`L_COMMITDATE` date NOT NULL,`L_RECEIPTDATE` date NOT NULL,`L_SHIPINSTRUCT` char(25) NOT NULL,`L_SHIPMODE` char(10) NOT NULL,`L_COMMENT` varchar(44) NOT NULL,PRIMARY KEY (`L_ORDERKEY`,`L_LINENUMBER`)) ENGINE=ROCKSDB DEFAULT CHARSET=utf8mb3PARTITION BY HASH (`L_ORDERKEY`) PARTITIONS 24;-- Generate test data using the official TPC standard tool: TPC-H v3.0.1. Download URL: tpc.org/tpch/.-- Import, replacing the data file directory for LOAD DATA with your own directory:#!/bin/bashfor tbl in lineitemdofor i in {1..30}doecho "Importing table: $tbl"mysql $opts -e "set tdsql_bulk_load_allow_unsorted=1;set tdsql_bulk_load = 1;LOAD DATA INFILE '/data/TPCH_test/dbgen/tpch-100g/${tbl}.tbl.$i' INTO TABLE $tbl FIELDS TERMINATED BY '|';" &donedonewaitdate-- Verify the number of records:sql> select count(*) from tpchpart100g.lineitem;+-----------+| count(*) |+-----------+| 600037902 |-- Expected to be roughly evenly distributed across each node:select sum(region_stats_approximate_size) as size, count(b.rep_group_id) as region_nums, sql_addr, c.leader_node_name, b.rep_group_id from information_schema.META_CLUSTER_DATA_OBJECTS a join information_schema.META_CLUSTER_REGIONS b join information_schema.META_CLUSTER_RGS c join information_schema.META_CLUSTER_NODES d on a.data_obj_id = b.data_obj_id and b.rep_group_id = c.rep_group_id and c.leader_node_name = d.node_name where a.table_name = 'lineitem' and a.data_obj_type = 'PARTITION_L1' group by rep_group_id order by leader_node_name;+------------+-------------+------------------+----------------------+--------------+| size | region_nums | sql_addr | leader_node_name | rep_group_id |+------------+-------------+------------------+----------------------+--------------+| 8879148586 | 76 | 9.30.0.133:15070 | node-three-001 | 64535 || 8878971995 | 81 | 9.30.2.175:15088 | node-three-002 | 513 || 8878089427 | 79 | 9.30.0.134:15070 | node-three-003 | 65082 |+------------+-------------+------------------+----------------------+--------------+-- If data is unevenly distributed, perform splitting and leader switching.ALTER INSTANCE SPLIT RG 64535 BY 'size';ALTER INSTANCE TRANSFER LEADER RG 64535 TO 'node-three-003';

Fast Online DDL: Before and After It Is Enabled

Related parameters:

-- Number of worker threads for DDL operations (total across all nodes), default value: 8max_parallel_ddl_degree-- Data backfill mode for DDL operations. Default value: 'ThomasWrite'. To enable the Fast Online DDL feature, it must be set to 'IngestBehind'.tdsql_ddl_fillback_mode

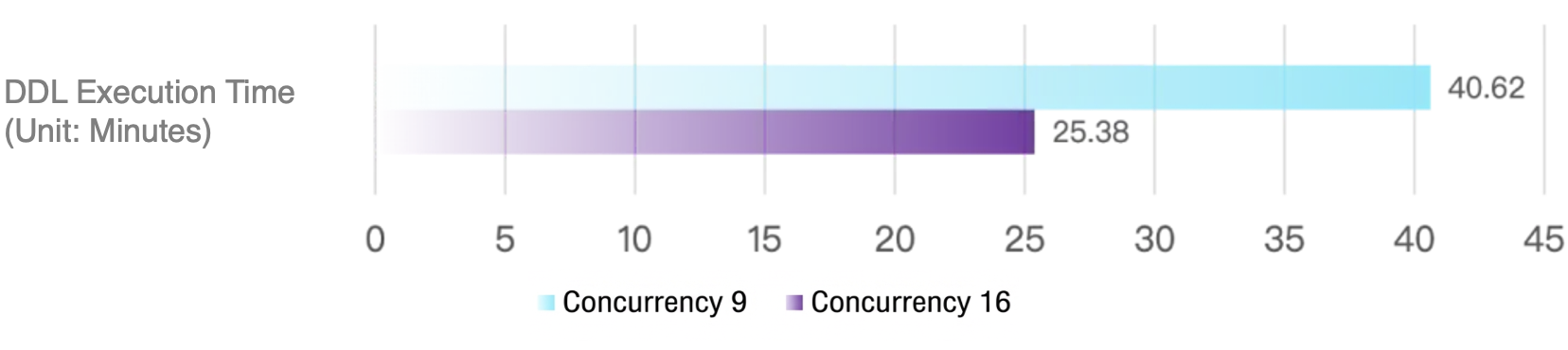

Using the default 'ThomasWrite' data backfill mode [in this mode, DDL threads are executed on a single machine], enable 9 and 16 threads respectively [without exceeding the number of CPUs per node] to test add index:

-- ThomasWrite backfill mode:set session max_parallel_ddl_degree=9;set session tdsql_ddl_fillback_mode='ThomasWrite';alter table tpchpart100g.lineitem add index index_idx_q_part_key(l_partkey);Query OK, 0 rows affected (40 min 37.62 sec)set session max_parallel_ddl_degree=16;set session tdsql_ddl_fillback_mode='ThomasWrite';alter table tpchpart100g.lineitem add index index_idx_w_part_key(l_partkey);Query OK, 0 rows affected (25 min 22.95 sec)

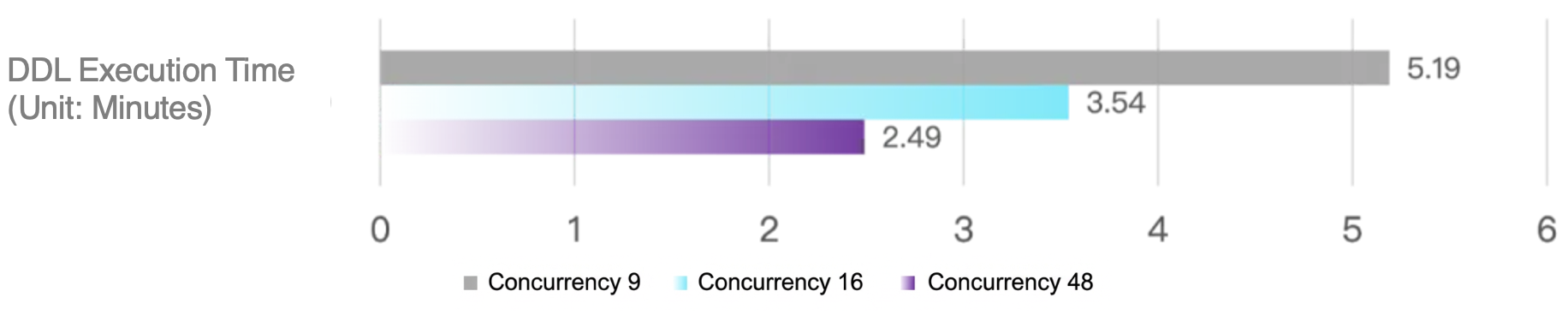

With Fast Online DDL not enabled, execution time comparison:

Enable Fast Online DDL using the 'IngestBehind' data backfill mode [in this mode, DDL threads are executed in a distributed manner]. Enable 9, 16, and 48 threads respectively [without exceeding the total number of CPUs across data distribution nodes] to test add index:

-- IngestBehind backfill mode:set session max_parallel_ddl_degree=9;set session tdsql_ddl_fillback_mode='IngestBehind';alter table tpchpart100g.lineitem add index index_idx_j_part_key(l_partkey);Query OK, 0 rows affected (5 min 11.66 sec)set session max_parallel_ddl_degree=16;set session tdsql_ddl_fillback_mode='IngestBehind';alter table tpchpart100g.lineitem add index index_idx_k_part_key(l_partkey);Query OK, 0 rows affected (3 min 32.15 sec)set session max_parallel_ddl_degree=48;set session tdsql_ddl_fillback_mode='IngestBehind';alter table tpchpart100g.lineitem add index index_idx_l_part_key(l_partkey);Query OK, 0 rows affected (2 min 29.52 sec)

With Fast Online DDL enabled, execution time comparison:

Query for DDL execution results, DDL_STATUS field displays the final result:

--ddl_jobs: dictionary table that records the execution process of DDLselect * from INFORMATION_SCHEMA.DDL_JOBS where date_format(START_TIMESTAMP,'%Y-%m-%d')='2024-11-22' and IS_HISTORY=1 order by START_TIMESTAMP desc limit 1\\G*************************** 1. row ***************************ID: 18SCHEMA_NAME: tpch100gTABLE_NAME: lineitemVERSION: 204DDL_STATUS: SUCCESSSTART_TIMESTAMP: 2024-11-22 18:47:47LAST_TIMESTAMP: 2024-11-22 18:50:18DDL_SQL: alter table tpch100g.lineitem add index index_idx_s_part_key(l_partkey)INFO_TYPE: ALTER TABLEINFO: {"tmp_tbl":{"db":"tpch100g","table":"#sql-11746_212c8b_673ef509000030_9"},"alt_type":1,"alt_tid_upd":{"tid_from":10013,"tid_to":10013},"cr_idx":[{"id":10240,"ver":34,"stat":0,"tbl_type":2,"idx_type":2},{"id":10241,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10242,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10243,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10244,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10245,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10246,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10247,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10248,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10249,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10250,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10251,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10252,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10253,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10254,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10255,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10256,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10257,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10258,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10259,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10260,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10261,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10262,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10263,"ver":34,"stat":0,"tbl_type":2,"idx_type":4},{"id":10264,"ver":34,"stat":0,"tbl_type":2,"idx_type":4}],"rm_idx":[],"init":false,"tmp_tab":false,"online_op":true,"wf_rmed":false,"online_copy_stage":0,"idx_op":true,"row_applied":true,"row_apply_saved":true,"current_schema_name":"tpch100g","crt_data_obj_task_id":29062709316158283,"dstr_data_obj_task_id":0,"data_obj_to_be_dstr_arr":[],"part_policy_ids":[1],"progress":"total: 200, scanned: 200 (100.00%)","fillback_mode":"IngestBehind","exec_addr":{"ip":"10.0.20.144","port":6008},"recov_addr":{"ip":"10.0.20.144","port":6008}}IS_HISTORY: 11 row in set (0.00 sec)

Detailed description of the ddl_jobs field:

Field | Description |

ID | Each DDL JOB has a unique ID. |

SCHEMA_NAME | Database name. |

TABLE_NAME | Table name. |

VERSION | INFO version number of the field parsing. |

DDL_STATUS | Execution status of the DDL JOB, with three possible states: SUCCESS, FAIL, EXECUTING. |

START_TIMESTAMP | Initiation time of the DDL JOB. |

LAST_TIMESTAMP | End time of the DDL JOB. |

DDL_SQL | Details of DDL statements. |

INFO_TYPE | Type of DDL statement. |

INFO | Metadata information during DDL execution (including execution progress of DDL statements such as add index and copy table). |

Best Practices for TDSQL Boundless Online DDL

The Fast Online DDL capability of TDSQL Boundless combines parallel processing with bypass writes, making DDL operations more efficient and convenient.

However, if we fail to properly distinguish between large/small tables or do not partition data appropriately based on scale, the execution efficiency of Fast Online DDL may be significantly reduced. This is because, without proper partitioning, data in a large table is likely to reside on a single node. Consequently, DDL operations will be executed on that single node rather than being parallelized across multiple nodes, greatly diminishing execution efficiency.

Only by reasonably utilizing partitioned tables based on data scale can the distributed scalability of Fast Online DDL be fully leveraged.

Partitioning recommendations:

1. TDSQL Boundless is 100% compatible with native MySQL partitioned table syntax, supporting primary/secondary partitioning. It is primarily designed to address: (1) Capacity issues of large tables; (2) Performance issues under high-concurrency access.

2. Capacity issues of large tables: If a single table is expected to exceed the data disk capacity of a single node in the future, it is recommended to create primary hash or key partitioning to evenly distribute data across multiple nodes. Should data volume continue to grow, elastic scaling can be leveraged to progressively "reduce" disk usage per node.

3. Performance issues under high-concurrency access: For TP (Transaction Processing) services experiencing high-concurrency access, if a single node is expected to be unable to handle the excess read/write load, it is also recommended to create primary hash or key partitioning to evenly distribute read/write pressure across multiple nodes.

4. For partitioned tables created in points 2 and 3, it is recommended to select partition keys based on business characteristics that satisfy most core business queries. The number of partitions should be an integer multiple of the number of instance nodes.

5. For data cleanup requirements, create RANGE-partitioned tables to perform rapid data cleanup using the truncate partition command. To also distribute data while meeting cleanup needs, further create partitioned tables with HASH subpartitions.

Development of TDSQL Boundless Online DDL

TDSQL Boundless is rapidly evolving, with features iterating and improving based on user needs. DDL parallel performance continues to be optimized. The current new version of TDSQL Boundless specifically enhances data backfilling performance for partitioned tables during add index operations. This prioritization stems from observing that many deployed business systems utilize large partitioned tables spanning terabytes or tens of terabytes, often requiring Online index additions. In the upcoming TDSQL Boundless release, we will fully implement the Fast Online DDL capability, delivering its benefits for partitioned tables during copy table operations, as well as for regular tables during add index and copy table operations.

As the core of Tencent Cloud Database's long-term strategy, TDSQL Boundless will continue to be driven by business needs, focusing on refining the product to deliver more efficient and stable services to users.

Help and Support

Was this page helpful?

You can also Contact sales or Submit a Ticket for help.

Help us improve! Rate your documentation experience in 5 mins.

Feedback