Usage Statistics

Download

Focus Mode

Font Size

Feature Overview

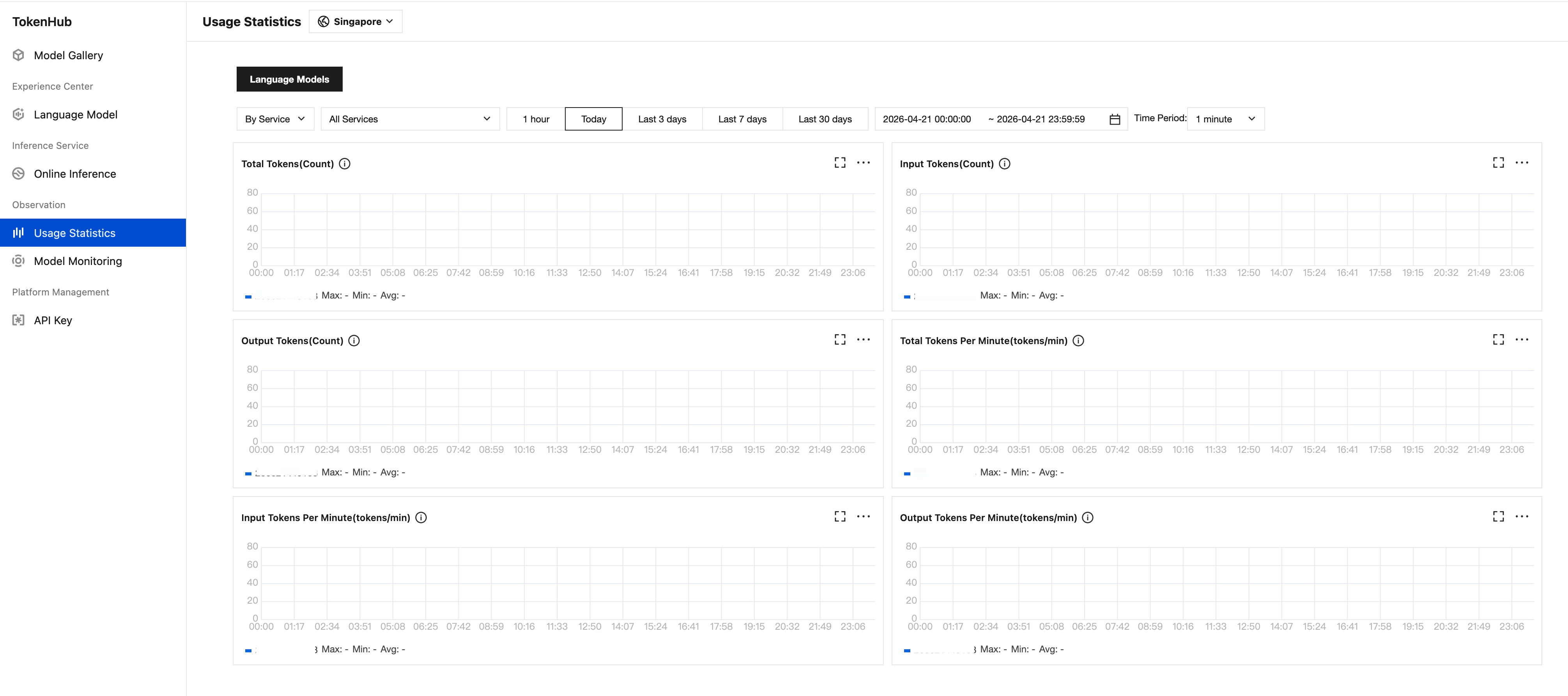

Usage Statistics page helps you comprehensively monitor AI resource consumption, supporting viewing data from three dimensions:

Service dimension: view the usage of specific online inference services.

Model dimension: view the call volume, Token consumption, and free quota for each model.

Key dimension: view the usage of different API Keys.

Model usage

Aggregate call data by model dimension, supporting categorized views by model type.

Filter by category

The top of the page provides filter tabs for quick categorization by model type, while supporting filtering by Online Inference Service and API Key to view specific service usage.

Model Type | include model |

Language Model | DeepSeek V3.2,GLM-5,GLM-5.1,GLM-5V-Turbo,GLM-5-Turbo,kimi-k2.5,MiniMax-M2.5,MiniMax-M2.7 |

Metric Details

Key call metrics for each model within the selected time range, with supported granularities of 1 minute / 5 minutes / 1 hour:

Field | Model Type | Description |

Total Tokens | Text generation | Input number of Tokens + Output number of Tokens. |

Input Tokens | | Number of Tokens consumed by the request (Prompt) portion. |

Output Tokens | | Number of Tokens consumed by the model response (Completion) portion. |

Total Tokens per Minute | | Input number of Tokens per minute + Output number of Tokens per minute. |

Input Tokens per Minute | | Input-side Token throughput (measured in tokens/min). |

Output Tokens per Minute | | Output-side Token throughput (measured in tokens/min). |

Usage Trend Chart

Visual charts display call trends, with each metric providing maximum, minimum, and average statistical summaries to help users quickly identify peak usage and overall trends.

Text Generation

Providing trend monitoring across six Token dimensions:

Token Consumption Trends: Total number of Tokens / Input number of Tokens / Output number of Tokens over Time

Token Throughput Trends: Total Tokens per Minute / Input Tokens per Minute / Output Tokens per Minute - Concurrency Variations

Help and Support

Was this page helpful?

You can also Contact sales or Submit a Ticket for help.

Help us improve! Rate your documentation experience in 5 mins.

Feedback