Data Intelligent Scheduling and Related Practices for Performance Optimization

Download

포커스 모드

폰트 크기

Introduction

After years of technological evolution, distributed databases have transcended the limitations of traditional "sharding" scenarios, evolving from a stopgap measure for massive data sets to a core architecture supporting elastic business expansion. TDSQL Boundless achieves dynamic adaptation between database operation modes and business scales through its (Integrated Centralized-Distributed Solution): During initial business phases, the system operates with full compatibility to centralized databases, preserving development practices and Ops frameworks. When businesses enter rapid growth stages, seamless transition to distributed mode requires only configuration-level adjustments. Online elastic scaling handles traffic spikes and data expansion. This process achieves two breakthroughs:

1. Zero-modification Smooth Evolution: The business logic layer does not need to adapt to distributed transaction logic, while the data layer maintains access consistency through intelligent routing.

2. Seamless Architecture Transition: The upgrade process from centralized to distributed architecture maintains business continuity, eliminating time-consuming data migration and risks of service disruption inherent in traditional solutions.

The integrated centralized-distributed solution of TDSQL Boundless primarily relies on intelligent data scheduling technology. Intelligent data scheduling technology not only realizes the integrated centralized-distributed solution but also innovatively introduces an optimization mechanism for data affinity in distributed scenarios. By combining predefined rules and customized policies, it intelligently schedules data units with strong business coupling to the same physical node, fundamentally avoiding the performance overhead of cross-node RPC access. This article will share the latest product in the TencentDB TDSQL series: TDSQL Boundless's intelligent data scheduling and related practices for performance optimization.

TDSQL Boundless Intelligent Data Scheduling Component TDMetaCluster

TDSQL Boundless is a high-performance, high-availability enterprise-grade distributed database solution developed by Tencent for financial-grade application scenarios. It adopts a containerized cloud-native architecture, delivering high-performance computing capabilities and cost-effective massive storage at the cluster level.

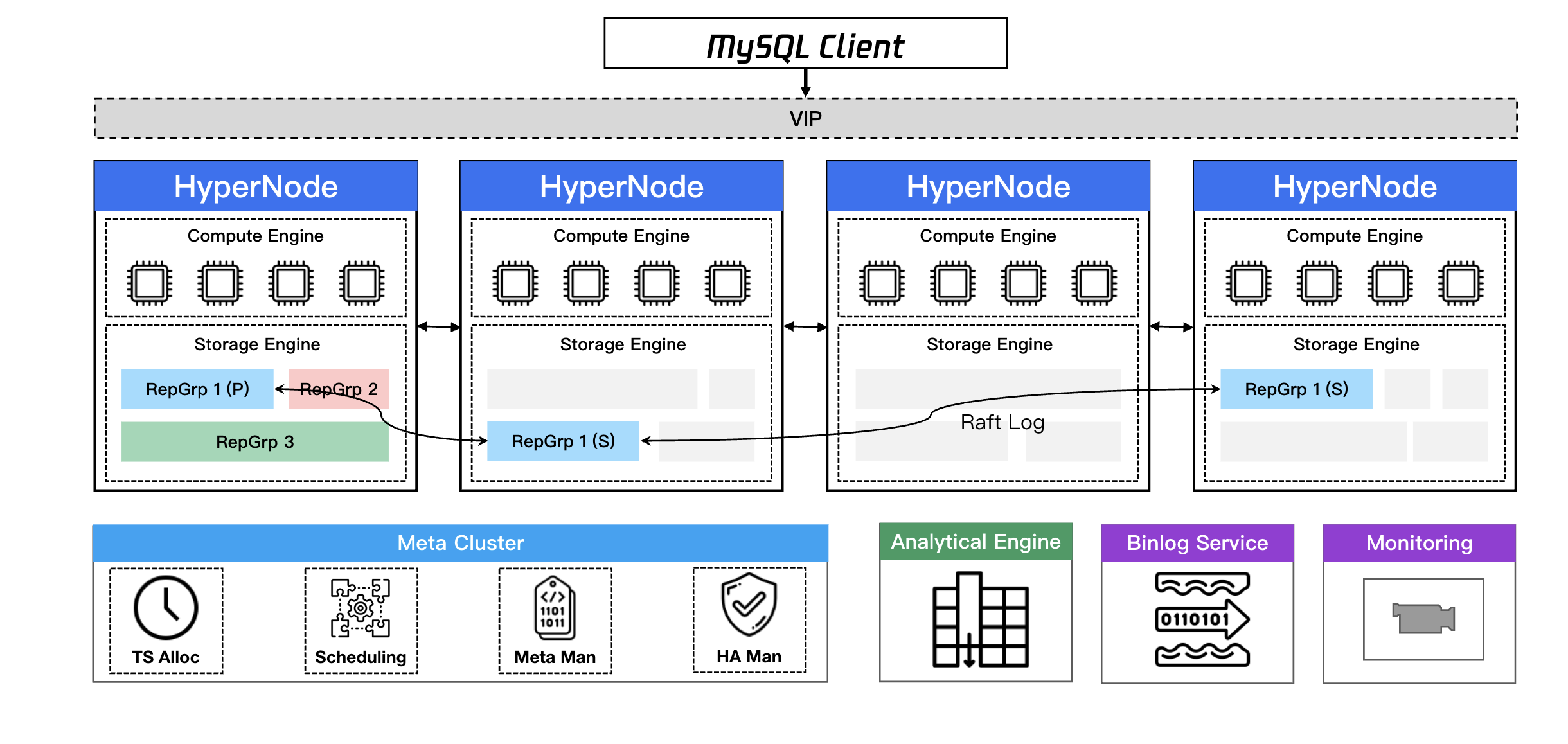

TDSQL Boundless architecture and features: fully distributed + storage-compute integration/storage-compute separation + data plane/control plane separation + high scalability + global consistency + high compression ratio.

In TDSQL Boundless, the component responsible for intelligent data scheduling is TDMetaCluster (TDMC for short, shown on the right side of the figure above), which serves as the central management and control module for TDSQL Boundless database instances.

1. TDMC architecture: A metadata management service based on the Raft protocol with one primary and two secondary nodes, where the Leader node handles requests.

2. TDMC data plane:

2.1 Assign globally unique and monotonically increasing transaction IDs.

2.2 Manage metadata for TDStore and SQLEngine.

2.3 Manage the data routing information for Replication Groups.

2.4 Manage global MDL locks.

3. TDMC control plane:

3.1 Schedule the splitting, merging, migrating, and switching over of Replication Groups and Regions.

3.2 Schedule the scaling of the storage layer.

3.3 Schedule the load balancing of the storage layer.

3.4 Issue alarms for abnormal events in various dimensions.

As the core scheduling engine for TDSQL Boundless instances, it not only manages the organization and distribution of data replicas through predefined rules but also supports flexible configuration of customized policies based on business needs. Next, we will explore how to select the appropriate deployment mode: centralized or distributed—based on business scenarios. While TDSQL Boundless, as a native distributed database, offers robust distributed processing capabilities, a centralized mode may better align with practical application requirements in specific scenarios.

TDSQL Boundless Centralized-Distributed Integration

First, based on assessment of business scale: including data volume, access frequency, response time, and scalability requirements, select the appropriate TDSQL Boundless specification. Even if initial estimates have deviations, there is no need to worry, as TDSQL Boundless, with its efficient AS capability, can flexibly adapt to various dynamically changing agile business scenarios.

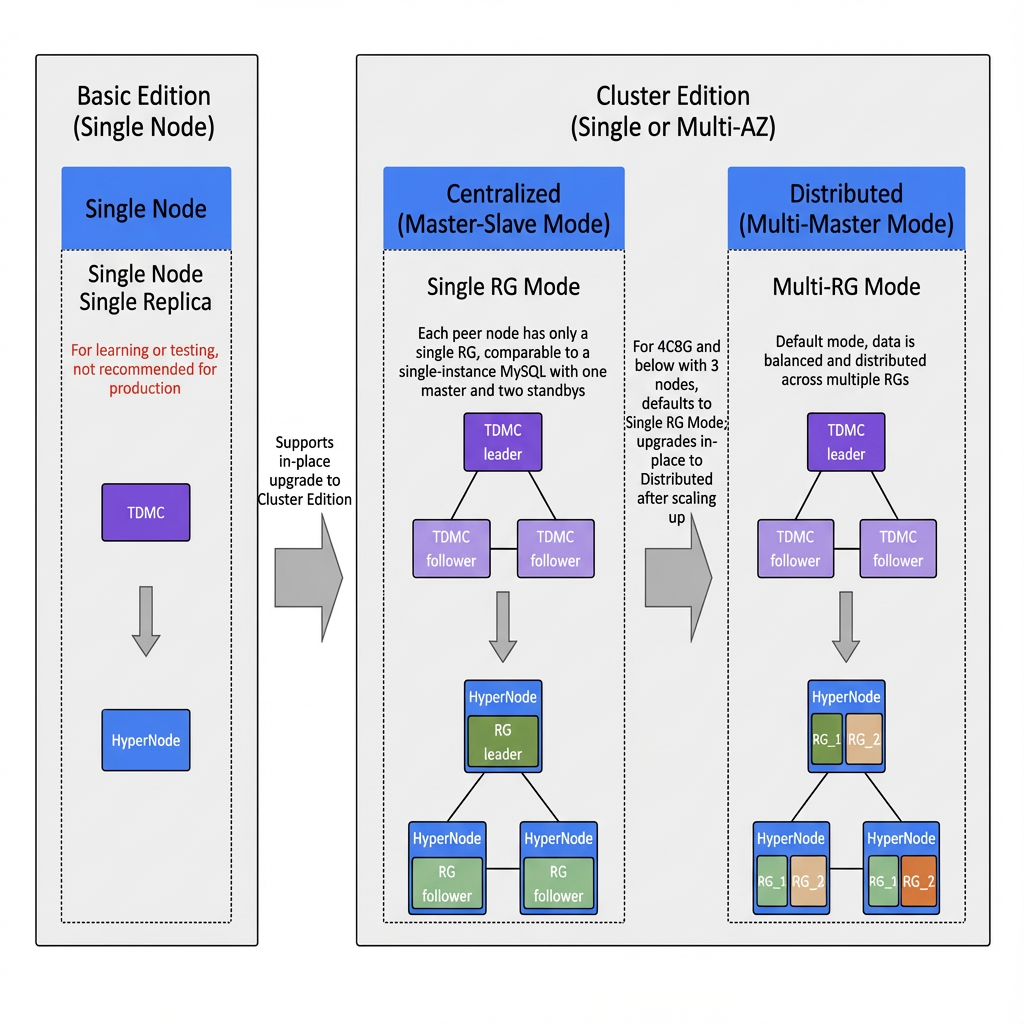

As shown in the figure above, the deployment mode is selected as follows:

1. Trial: Select the "Basic Edition" (single node, single replica), without the feature of high availability.

2. Production environment: Select the "Cluster Edition" [triple-replica architecture based on the Raft majority protocol].

3. Advanced Disaster Recovery: The Cluster Edition supports both "single-AZ" and "multi-AZ" deployments, with the latter enabling disaster recovery across three AZs within the same region.

4. Small-scale business: When specifications are 4C/8G or below with 3 nodes, the system automatically enables centralized mode (single RG mode), where primary replicas are centrally stored, ensuring compatibility with the standalone MySQL experience.

5. Medium-to-large-scale business: When specifications exceed 4C/8G or the number of nodes exceeds 3, the system automatically switches to distributed mode (multi-RG mode), where data is evenly distributed and peer-to-peer read/write operations are performed across multiple nodes, ensuring high performance and high availability.

It is recommended to conduct functional and performance tests based on actual business scenarios to verify whether the configuration meets peak demand.

After understanding how to select TDSQL Boundless configurations, you may have no doubts about the centralized mode but still worry whether the distributed architecture affects performance. Such concerns are valid, and technology for intelligent database scheduling is key to solving such performance issues. Before it is delved deeper into, it's essential to grasp a critical design in distributed systems: data balancing—the cornerstone that ensures system performance and reliability. Next, we will detail the principles of data balancing and its practical implementation in TDSQL Boundless.

TDSQL Boundless Data Balancing

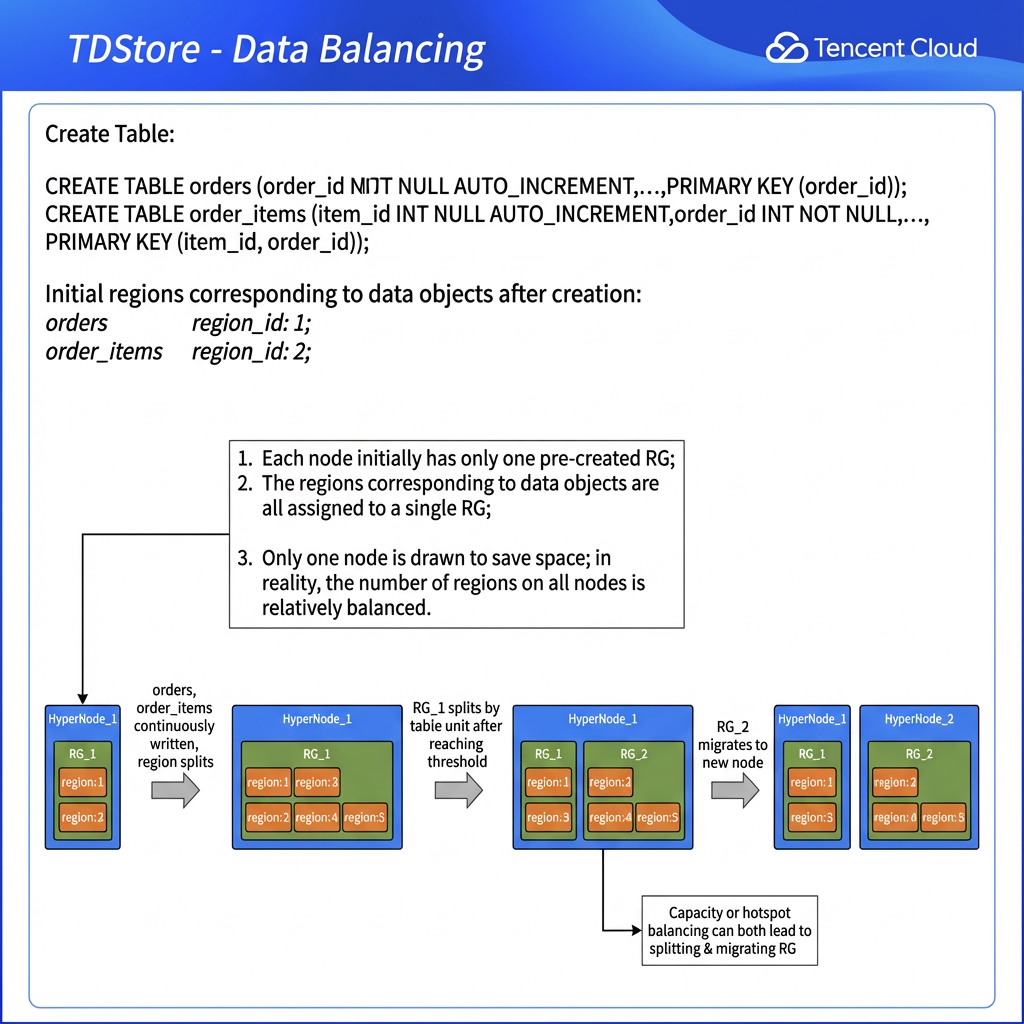

Data balancing is a key feature of distributed systems, encompassing two major dimensions: Capacity Balancing and Hotspot Balancing. Capacity balancing optimizes global resource utilization through uniform data distribution, while hotspot balancing focuses on resolving localized high-load issues.

Technical Highlights of TDSQL Boundless

1. Capacity Balancing

1.1 Three-tier Storage Model and Resource Pre-allocation:

Based on the three-tier storage model design (refer to Product Overview), each node pre-creates a primary RG, which can accommodate Regions for 100 tables by default. New RGs are automatically created only after exceeding this limit, reducing resource fragmentation, lowering management overhead, and improving performance.

1.2 Control of Two-dimensional Split:

Region-level: Triggers a split at 256M/5 million keys and remains with the original RG.

RG-level: Triggers a split at 32G/160 million keys. A single-table RG splits only when exceeding 20% of the node capacity to avoid distributed transactions. After the split is performed, automatic migration occurs at the RG level to maintain capacity balancing.

1.3 Policy of Migration Priority:

Prioritize migrating follower replicas. Leader replicas are migrated only when they exist on a node, ensuring the process remains transparent to services.

1.4 Linkage of Elastic Scaling:

Data rebalancing is automatically triggered to adapt to changes in the number of nodes.

2. Hotspot Balancing

2.1 Engine for Intelligent Decision:

Employing a weighted moving average algorithm, it collects three-level traffic metrics (node/RG/data object) in real time, then identifies hotspot sources at each level and executes differentiated scheduling operations—such as cross-node leader switching or splitting + leader switching combinations.

Mechanism for Stability Assurance:

Cooldown Period for Scheduling: After a hotspot RG is scheduled, it enters a cooldown state to prevent such RG from frequent leader switching.

Memory of Historical Scheduling: Records node migration trajectories to prevent hotspot ping-ponging.

2.2 Mode for Scenario-based Scheduling:

Manual configuration of read-write hotspot modes is supported, with read hotspot priority enabled by default to flexibly adapt to diverse business requirements.

However, for distributed database systems, mere data balancing does not equate to an "optimal" state. If the scheduling module fails to fully comprehend the logical meaning of database tables in the storage layer, the system may still encounter performance bottlenecks.

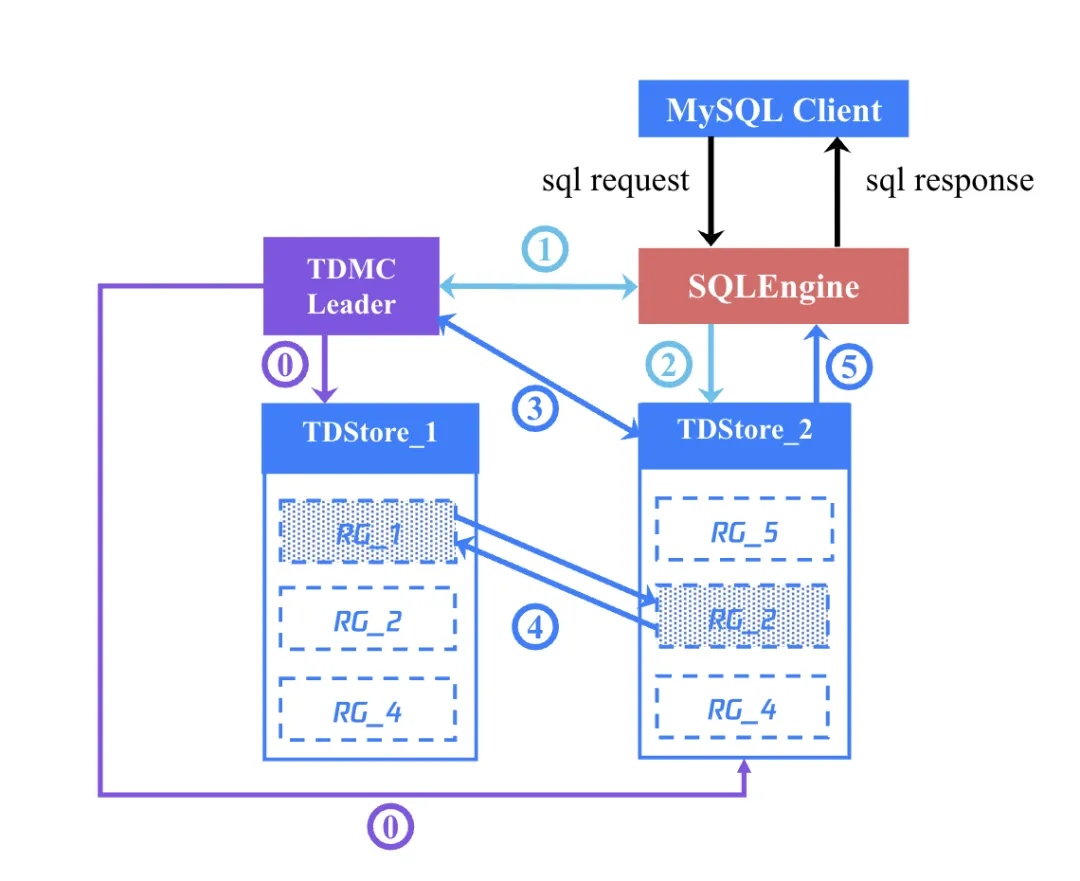

To better illustrate this point, let's examine a typical distributed application scenario: when an application client submits an SQL statement to a TDSQL Boundless database instance, how TDMC (the management module) collaborates with SQLEngine (SQL engine) and TDStore (storage engine) to process the request.

Step 0: TDMC periodically issues global minimum snapshot points to all TDStores to ensure global consistency for read operations.

Step 1: The SQLEngine receives the SQL statement, initiates a transaction, and retrieves the timestamp of transaction start

begin_ts from TDMC; it checks its local cache for RG routing information corresponding to the SQL statement. If none exists, it sends a request for key range query to TDMC to obtain the latest routing information.Step 2: SQLEngine sends the request to the TDStore where the Leader replica of the corresponding RG resides.

Step 3: The Leader replica, acting as the coordinator, initiates a two-phase transaction and retrieves prepare_ts from TDMC.

Step 4: After all participants are prepared, retrieve

commit_ts from TDMC and commit the transaction; if a leader switch occurs in other RGs involved during the process, query their latest Leader information from TDMC.Step 5: Commit the transaction; upon successful completion, return the result to SQLEngine, which then returns the result to the client.

The execution process described above may face the following three performance challenges:

1. Separation of Compute and Storage: If compute nodes and storage nodes are strictly separated, network latency and bandwidth limitations can negatively impact performance. Data transfer between different nodes introduces additional overhead, thereby reducing the response speed of the overall system.

2. Scattered Storage of Data Objects: If relevant data objects of the same table are excessively scattered—for example, table data stored in RG_1 while its secondary index resides in RG_2—even a simple write operation for a single-row transaction requires coordination through distributed transactions. This inevitably increases complexity and processing time.

3. Cross-Machine Join Queries: When multiple tables with relationships (such as Table A stored in RG_1 and Table B stored in RG_2) require JOIN operations or participate in distributed transactions, these operations will inevitably involve cross-machine communication, further exacerbating latency and resource consumption.

To address the first challenge, TDSQL Boundless adopts an integrated compute-storage architecture by physically binding SQLEngine with TDStore to form peer nodes, enhancing localized data access performance (using function calls instead of RPC). As for the remaining two challenges, they are resolved through TDSQL Boundless' intelligent scheduling mechanism. Next, we will delve into how TDSQL Boundless tackles these challenges.

TDSQL Boundless Intelligent Scheduling for Data

TDSQL Boundless implements scheduling for data affinity through a multi-level rule system, which includes three types of rules:

General rules: Such as the structural coupling of data objects in relational databases, this rule remains unchanged.

Predefined rules: Logical coupling that may enhance performance; if they do not align with business requirements, this rule can be modified.

Custom rules: Users can create them on their own based on business requirements.

Through this multi-level rule system, TDSQL Boundless achieves an optimal balance between system performance and business flexibility: ensuring efficient operation while significantly reducing transaction latency and improving speed of query response. Next, we will delve into the details of its technical implementation.

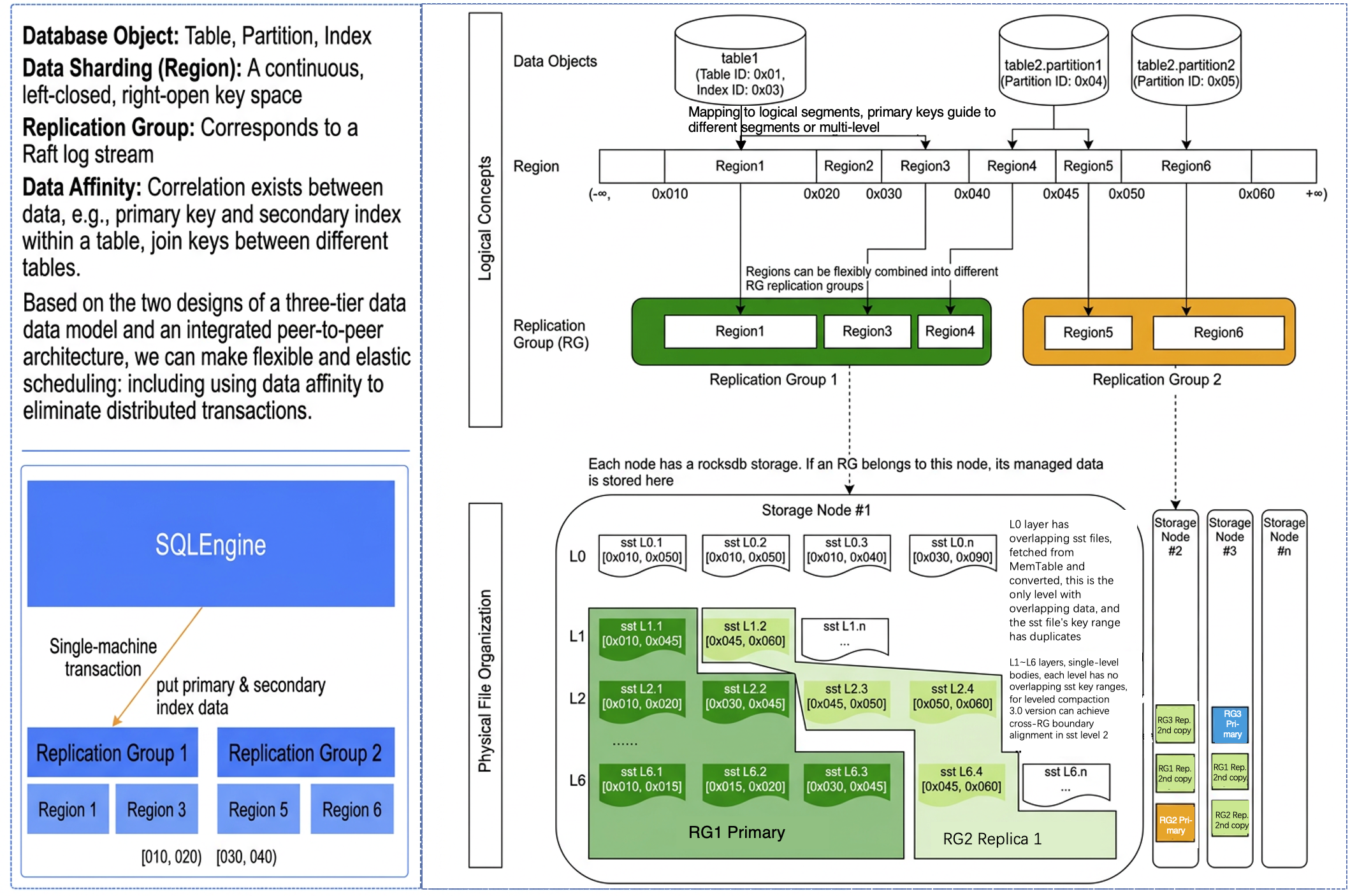

1. General rules

The following diagram illustrates the three-layer data model of TDStore: In the system, a table does not directly correspond to a physical file but is first mapped to a range-based Region. The right side of the diagram shows that Table 1 is allocated two Regions, 01 and 03, where 01 corresponds to the primary key and 03 to its secondary index. When a table's data volume becomes very large, the Region can be split and then mapped to other data nodes. Between Regions and physical data, a Replication Group (RG) is introduced, allowing flexible placement of different range-based Regions into this RG. Each RG corresponds to a Raft log stream. If a transaction only modifies data within this replication group, it can be committed after completing a single-node transaction. However, if a transaction involves multiple replication groups, it must proceed through a two-phase commit as a distributed transaction.

Through built-in general rules, TDStore leverages data affinity to eliminate distributed transactions: by default storing a table's primary key and its corresponding secondary index within the same RG [as shown in the bottom-left of the figure, Table1's primary key is Region 1 and its secondary index is Region 3, both belonging to RG 1]. This ensures that updates and writes to the table are always processed as single-node transactions.

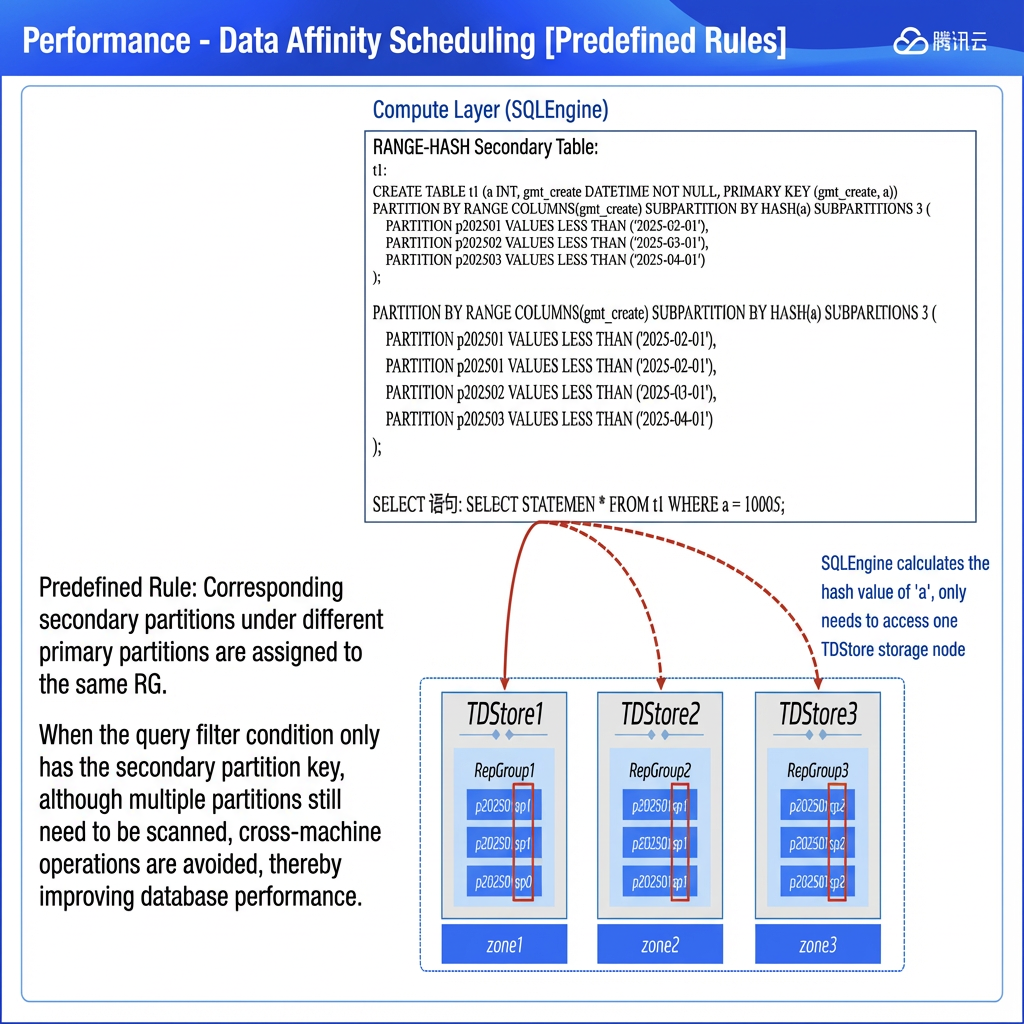

2. Predefined rules

TDSQL Boundless supports predefined data affinity rules, which are best practices summarized from daily operations adapting to business logic. For example: when secondary partitioned tables are created, corresponding secondary partitions under different primary partitions are assigned to the same RG, such as placing t1.p0.sp0 and t1.p0.sp0 in the same RG. If specific business requirements exist, there are methods to modify this default behavior.

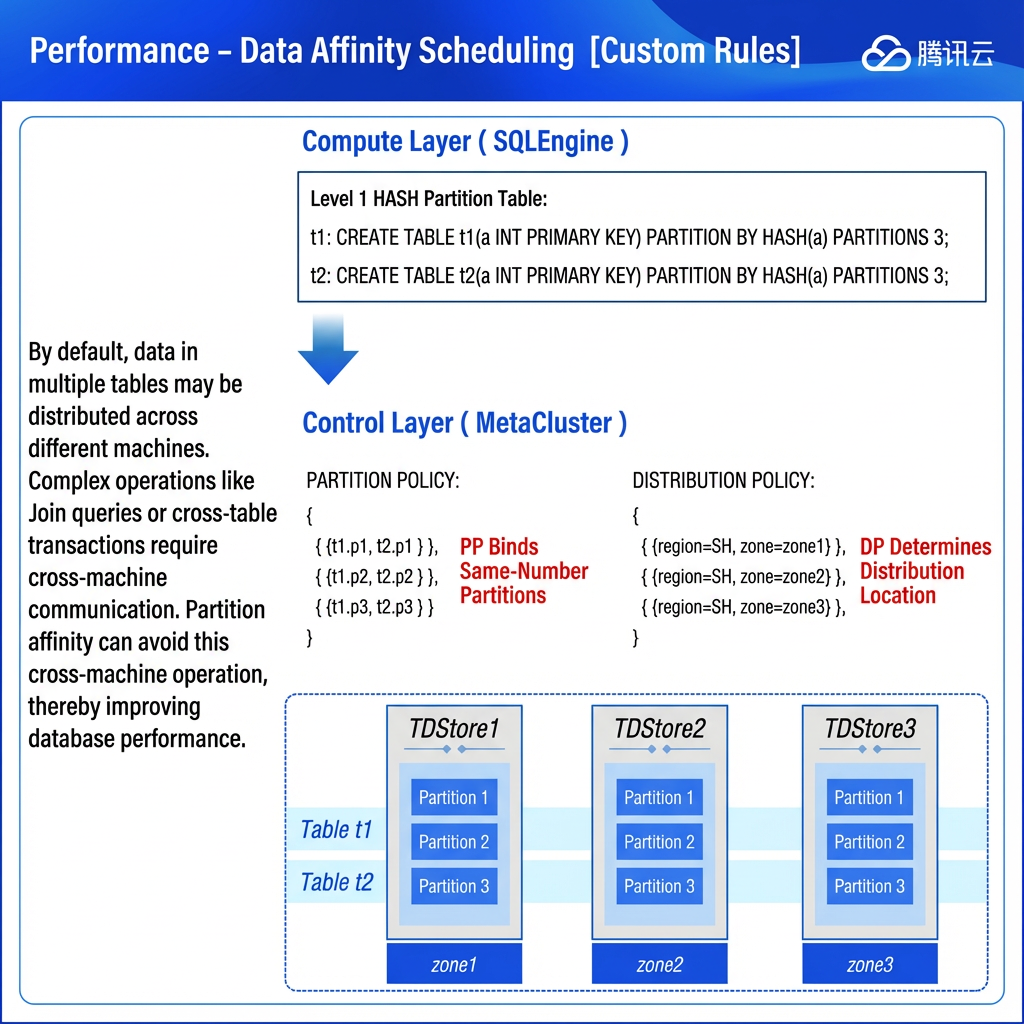

3. Custom rules

Additionally, TDSQL Boundless supports custom data affinity rules based on business logic. Users can control the physical storage proximity of a group of tables according to business data correlations, further optimizing transaction processing performance. Specifically, this is achieved by creating a binding policy for same-numbered partitions and node distribution policies for a table group through specific SQL commands, then binding them to the table group (which requires identical partition types, partition counts, and partition values). The system will then collocate same-numbered partitions of these tables on a single machine, avoiding cross-node queries and distributed transactions to enhance performance.

TDSQL Boundless Practice of Partition Affinity Optimization

In the practical section, we provide an example of data affinity with "custom rules." Specifically, we will compare the performance of two tables during JOIN queries: one scenario where the tables satisfy data affinity, and another where they do not. Through this comparison, we can gain a clearer understanding of the practical impact of data affinity on query performance.

Hardware Environment

Node Type | Node Specifications | Number of Nodes |

HyperNode | 16-Core CPU/32 GB of Memory/Enhanced SSD 300 GB | 3 |

Test Objective

TDSQL Boundless first supports implicit partition affinity for primary HASH-partitioned tables. As long as related tables are created as primary HASH partitions with the same number of partitions, same-numbered partitions will be scheduled to the same replication group by default. [Future releases will support manually creating affinity policies and richer types of partitioned tables.]

The data model for this test involves a JOIN query between orders and order details.

Data Preparation

-- Order table orders, primary hash-partitioned table:CREATETABLE orders (order_id INTNOTNULLAUTO_INCREMENT,customer_id INTNOTNULL,order_date DATENOTNULL,total_amount DECIMAL(10,2)NOTNULL,PRIMARYKEY(order_id))PARTITIONBYHASH(order_id)PARTITIONS 3;-- Order details table order_items, with 1-3 items per order randomly, primary hash-partitioned table:CREATETABLE order_items (item_id INTNOTNULLAUTO_INCREMENT,order_id INTNOTNULL,product_id INTNOTNULL,quantity INTNOTNULL,price DECIMAL(10,2)NOTNULL,PRIMARYKEY(item_id, order_id),INDEX idx_order_id (order_id))PARTITIONBYHASH(order_id)PARTITIONS 3;-- Data generation process omitted. Final record counts for both tables:MySQL [klose]>selectcount(*)from orders;+----------+|count(*)|+----------+|1000000|+----------+1rowinset(0.10 sec)MySQL [klose]>selectcount(*)from order_items;+----------+|count(*)|+----------+|1999742|+----------+1rowinset(0.39 sec)-- Check data distribution to ensure same-numbered partitions are in the same RG within peer nodes:SELECTb.rep_group_id, a.data_obj_name, a.schema_name, a.data_obj_name, c.leader_node_name,SUM(b.region_stats_approximate_size)AS size,SUM(b.region_stats_approximate_keys)AS key_numFROMINFORMATION_SCHEMA.META_CLUSTER_DATA_OBJECTS a,INFORMATION_SCHEMA.META_CLUSTER_REGIONS b,INFORMATION_SCHEMA.META_CLUSTER_RGS cWHEREa.data_obj_id = b.data_obj_idand b.rep_group_id = c.rep_group_idand a.data_obj_type notlike'%index%'and a.data_obj_type notlike'%AUTOINC%'and a.schema_name ='klose'and data_obj_name notlike'%bak%'GROUPBYb.rep_group_id, a.schema_name, a.data_obj_nameORDERBY1,2,3;+--------------+----------------+-------------+----------------+--------------------------+---------+---------+| rep_group_id | data_obj_name | schema_name | data_obj_name | leader_node_name | size | key_num |+--------------+----------------+-------------+----------------+--------------------------+---------+---------+|868437| orders.p1 | klose | orders.p1 | node-tdsql3-c1528b96-002|3909181|333334||868437| order_items.p1 | klose | order_items.p1 | node-tdsql3-c1528b96-002|7708701|666508||869169| orders.p0 | klose | orders.p0 | node-tdsql3-c1528b96-001|3792790|333333||869169| order_items.p0 | klose | order_items.p0 | node-tdsql3-c1528b96-001|7575671|666757||869736| orders.p2 | klose | orders.p2 | node-tdsql3-c1528b96-003|3782701|333333||869736| order_items.p2 | klose | order_items.p2 | node-tdsql3-c1528b96-003|7550956|666477|+--------------+----------------+-------------+----------------+--------------------------+---------+---------+-- Test JOIN queries using Jmeter to observe the performance of local scans:SELECT COUNT(*) FROM orders a JOIN order_items b ON a.order_id=b.order_id;-- Execution plan: Each node starts a worker thread to complete parallel subtasks on the local node. Using any sub-partition (p0-p2) of the orders table on the current node as the driving table, each row record is used to quickly find matching rows in the order_items table via index lookup. Results from all worker threads are then aggregated and returned. Execution efficiency will be significantly improved if all operations are local scans:|-> Aggregate: count(0)(cost=763055.75rows=2000000)-> Gather (slice: 1, workers: 3)(cost=563055.75rows=2000000)-> Aggregate: count(0)(cost=763055.75rows=2000000)-> Nested loopinnerjoin(cost=563055.75rows=2000000)->Index scan on a usingPRIMARY,with parallel scan ranges: 3(cost=112507.50rows=1000000)->Index lookup on b using idx_order_id (order_id=a.order_id)(cost=0.25rows=2)

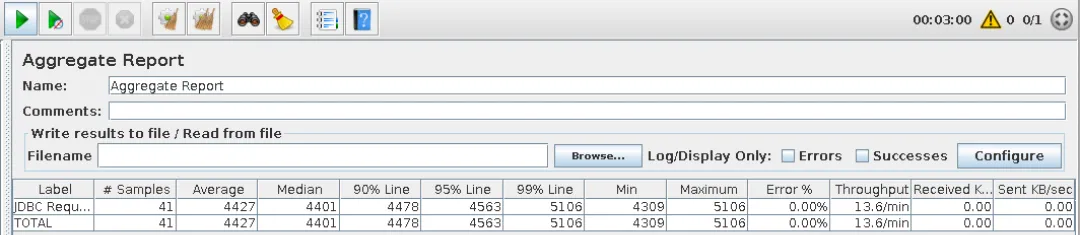

Single process, continuously executed for 3 minutes, completing 41 requests during this period with an average response time of 4.4s:

Simulating scenarios where older versions of TDSQL Boundless did not automatically apply partition affinity:

-- Currently, same-numbered partitions are all located on the same nodes:+--------------+----------------+-------------+----------------+--------------------------+---------+---------+| rep_group_id | data_obj_name | schema_name | data_obj_name | leader_node_name | size | key_num |+--------------+----------------+-------------+----------------+--------------------------+---------+---------+|868437| orders.p1 | klose | orders.p1 | node-tdsql3-c1528b96-002|3909181|333334||868437| order_items.p1 | klose | order_items.p1 | node-tdsql3-c1528b96-002|7708701|666508||869169| orders.p0 | klose | orders.p0 | node-tdsql3-c1528b96-001|3792790|333333||869169| order_items.p0 | klose | order_items.p0 | node-tdsql3-c1528b96-001|7575671|666757||869736| orders.p2 | klose | orders.p2 | node-tdsql3-c1528b96-003|3782701|333333||869736| order_items.p2 | klose | order_items.p2 | node-tdsql3-c1528b96-003|7550956|666477|+--------------+----------------+-------------+----------------+--------------------------+---------+---------+-- Simulating the state without default affinity of partitions in previous versions of TDSQL Boundless, splitting RGs and scattering them:ALTER INSTANCE SPLIT RG 868437BY'table';ALTER INSTANCE SPLIT RG 869169BY'table';ALTER INSTANCE SPLIT RG 869736BY'table';ALTER INSTANCE TRANSFER LEADER RG 869169TO'node-tdsql3-c1528b96-002';ALTER INSTANCE TRANSFER LEADER RG 868437TO'node-tdsql3-c1528b96-003';ALTER INSTANCE TRANSFER LEADER RG 869736TO'node-tdsql3-c1528b96-001';ALTER INSTANCE TRANSFER LEADER RG 72133681TO'node-tdsql3-c1528b96-001';-- Adjust TDMC parameters to prevent switch-back of the pre-created RG leader:Set the control parameter of TDMC check-primary-rep-group-enabled to 0.-- Check data distribution to confirm that same-numbered partitions are no longer located on the same peer nodes:SELECTb.rep_group_id, a.data_obj_name, a.schema_name, a.data_obj_name, c.leader_node_name,SUM(b.region_stats_approximate_size)AS size,SUM(b.region_stats_approximate_keys)AS key_numFROMINFORMATION_SCHEMA.META_CLUSTER_DATA_OBJECTS a,INFORMATION_SCHEMA.META_CLUSTER_REGIONS b,INFORMATION_SCHEMA.META_CLUSTER_RGS cWHEREa.data_obj_id = b.data_obj_idand b.rep_group_id = c.rep_group_idand a.data_obj_type notlike'%index%'and a.data_obj_type notlike'%AUTOINC%'and a.schema_name ='klose'and data_obj_name notlike'%bak%'GROUPBYb.rep_group_id, a.schema_name, a.data_obj_nameORDERBY1,2,3;+--------------+----------------+-------------+----------------+--------------------------+---------+---------+| rep_group_id | data_obj_name | schema_name | data_obj_name | leader_node_name | size | key_num |+--------------+----------------+-------------+----------------+--------------------------+---------+---------+|869736| orders.p2 | klose | orders.p2 | node-tdsql3-c1528b96-001|3782701|333333||72133681| order_items.p0 | klose | order_items.p0 | node-tdsql3-c1528b96-001|7575671|666757||869169| orders.p0 | klose | orders.p0 | node-tdsql3-c1528b96-002|3792790|333333||72132949| order_items.p1 | klose | order_items.p1 | node-tdsql3-c1528b96-002|7708701|666508||868437| orders.p1 | klose | orders.p1 | node-tdsql3-c1528b96-003|3909181|333334||72134504| order_items.p2 | klose | order_items.p2 | node-tdsql3-c1528b96-003|7550956|666477|+--------------+----------------+-------------+----------------+--------------------------+---------+---------+-- Execute the JOIN query again. Since same-numbered partitions are now all on different peer nodes, every row in the orders table requires an RPC call for index matching in the order_items table. Combined with approximately 1-3ms latency between three AZs, the execution efficiency is expected to drop significantly:SELECT COUNT(*) FROM orders a JOIN order_items b ON a.order_id=b.order_id;

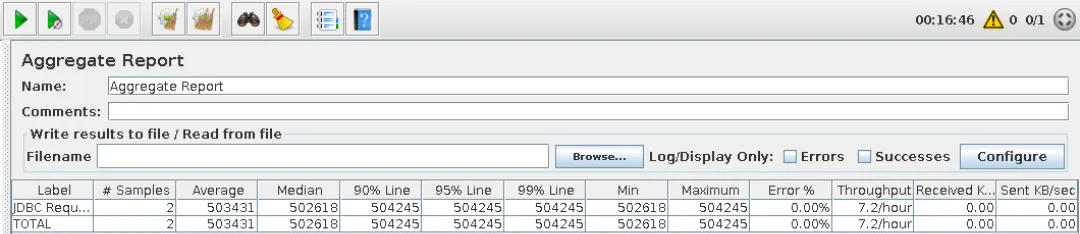

Single process, continuously executed for 16 minutes and 46 seconds, during which 2 requests were completed, with an average response time of 8 minutes and 23 seconds, the execution efficiency differs by a full 113 times.

Future Development of Partition Affinity in TDSQL Boundless

We can see that the integrated centralized-distributed capability of distributed databases effectively bridges the gap in the evolution from traditional relational databases to distributed architectures. Simultaneously, when business scale grows to reach the trigger threshold for distributed architecture, the data intelligent scheduling capability based on a multi-level rule system significantly enhances business access efficiency and actual performance. The combination of smooth architectural evolution and intelligent dynamic scheduling provides a robust and intelligent evolution path for digital transformation.

TDSQL Boundless is in a period of rapid development, with all features being user-demand-oriented and undergoing continuous rapid iteration and refinement. We are still continuously optimizing the data intelligent scheduling feature. The current version has pioneered adaptation of implicit partition affinity for commonly used primary HASH-partitioned tables. In future releases, we will further extend support for implicit partition affinity covering primary KEY-partitioned tables and range/list + hash secondary partitioned tables. Simultaneously, we will introduce flexibly customizable explicit partition affinity policies, with initial support for specification of explicit affinity for primary HASH-partitioned tables and regular tables.

피드백