TDSQL Boundless Selection Guide and Practical Tutorial

1. TDSQL Boundless Specifications Selection

When migrating from InnoDB (B+ tree storage), note that TDSQL Boundless uses an LSM structure with compression enabled by default, so the required space can be estimated at about 1/3 of the original space (per replica). For example, 1T of data in InnoDB (single replica; 3T in total if the system is configured as one primary and two secondaries) occupies approximately 333G per replica after migration to TDSQL Boundless, or about 1T with the default three replicas. After the data migration completes, check disk utilization to decide whether to scale in or out.
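The estimate above can be sketched as a small calculation. This is only a rough planning aid: the function name and parameters are hypothetical, and the 1/3 ratio and three replicas are the rule-of-thumb defaults from the text, not measured values for any particular dataset.

```python
def estimate_boundless_storage_gb(innodb_single_replica_gb,
                                  compression_ratio=1 / 3,
                                  replicas=3):
    """Return (per-replica GB, total GB) after migration.

    compression_ratio and replicas are the rule-of-thumb defaults
    from the guide; real compression depends on the data.
    """
    per_replica = innodb_single_replica_gb * compression_ratio
    total = per_replica * replicas
    return per_replica, total


# 1T of InnoDB data (single replica), expressed as 1000G.
per_replica_gb, total_gb = estimate_boundless_storage_gb(1000)
print(f"per replica: ~{per_replica_gb:.0f}G, all replicas: ~{total_gb:.0f}G")
```

Actual disk usage should still be verified after migration, since compression ratios vary with the data.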

Prefer fewer nodes with higher per-node specifications. For instance, if the total resource requirement is 12c/24g/600G, a configuration of 4c/8g/200G x 3 nodes is preferable to 2c/4g/100G x 6 nodes, because smaller nodes incur a higher proportion of distributed transactions and more communication overhead between nodes.

When scaling out, prioritize vertical scaling (raising per-node specifications); conversely, when scaling in, prioritize reducing the number of nodes.

TDSQL Boundless enables dynamic scaling of CPU / memory / disk resources without impacting business operations.

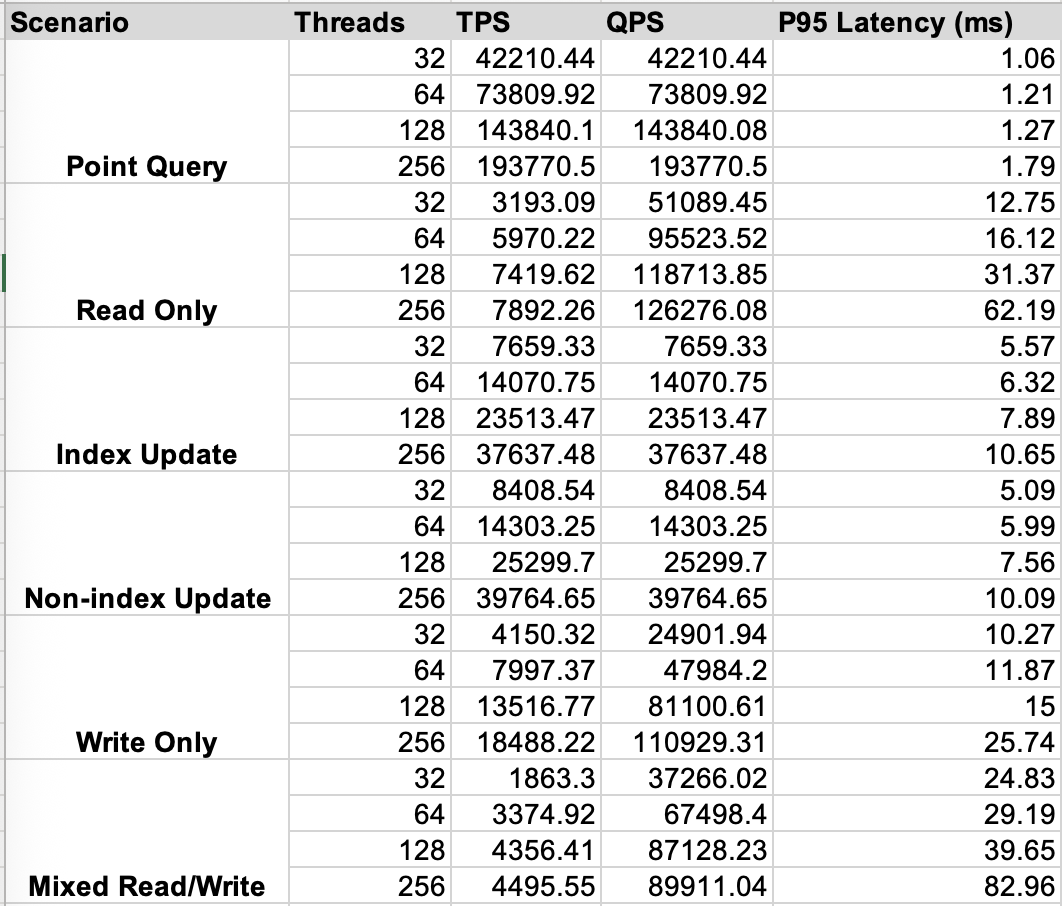

Reference Data for Performance

The following table presents Sysbench performance data for a 3-node configuration with 16-core CPU / 32GB memory / Enhanced SSD CBS, tested with 32 tables of 10 million records each.

2. Using TDSQL Boundless

Recommended Use Cases

Database and Table Sharding Scenario: After migrating to TDSQL Boundless, you no longer need to shard databases or tables. This applies whether your business originally used the TDSQL MySQL InnoDB distributed architecture or implemented database/table sharding on its own.

Business Scenarios with High Storage Costs: TDSQL Boundless features built-in compression capabilities that significantly reduce storage costs.

High Write Volume Scenarios: For example, log streaming.

HBase Replacement Scenario: Provides support for secondary indexes, cross-row transactions, and so on.

SQL Limitations and Differences

TDSQL Boundless is syntactically compatible with MySQL 8.0, with minor limitations:

Foreign keys are not supported.

Virtual columns, GEOMETRY data type, descending indexes, and full-text indexes are not supported.

The cache for auto-increment fields defaults to 100, which guarantees global uniqueness but not globally monotonic increase. Setting the auto-increment cache to 1 guarantees globally monotonically increasing values, but impacts write performance for batch operations that explicitly specify auto-increment values.
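The effect of the auto-increment cache can be illustrated with a toy model. The sketch below is not TDSQL Boundless's actual allocator; it simply assumes that each node reserves a block of `cache_size` values from a shared counter, which is enough to show why values stay unique but can arrive out of order. All class, function, and node names are hypothetical.

```python
class CachedAutoIncrement:
    """Toy allocator: each node reserves a block of IDs from a shared counter."""

    def __init__(self, cache_size):
        self.cache_size = cache_size
        self.next_block_start = 1
        self.node_ranges = {}  # node name -> [next_id, end_exclusive]

    def next_id(self, node):
        rng = self.node_ranges.get(node)
        if rng is None or rng[0] >= rng[1]:
            # Block missing or exhausted: reserve a fresh one.
            start = self.next_block_start
            self.next_block_start += self.cache_size
            rng = [start, start + self.cache_size]
            self.node_ranges[node] = rng
        value = rng[0]
        rng[0] += 1
        return value


def simulate(cache_size, arrival_order):
    """Return the IDs handed out for inserts arriving at the given nodes."""
    allocator = CachedAutoIncrement(cache_size)
    return [allocator.next_id(node) for node in arrival_order]


arrival_order = ["node_a", "node_b", "node_a", "node_b"]
ids_cached = simulate(100, arrival_order)   # unique, but not monotonic
ids_uncached = simulate(1, arrival_order)   # unique and monotonic
print(ids_cached, ids_uncached)
```

In this model, a cache of 1 forces every insert to go back to the shared counter, which is the price paid for strictly increasing values.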

Partition Table

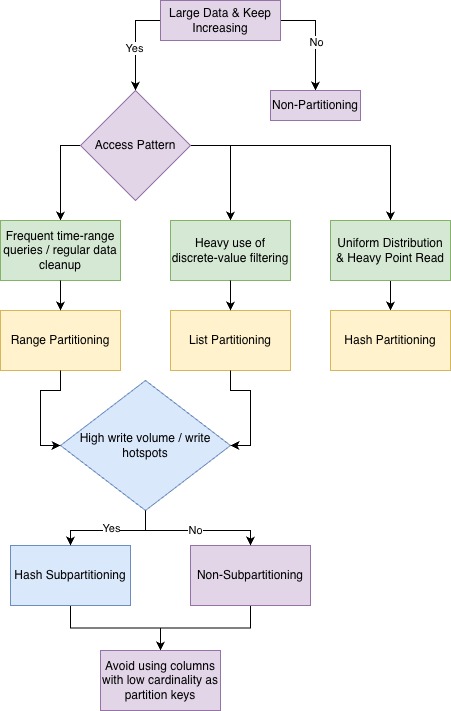

HASH partitioning is recommended for distributing write hotspots. The number of partitions should be an integer multiple of the number of nodes. In queries, filter on the HASH partition column where possible, and avoid choosing columns with many duplicate values as partition keys.
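As a minimal sketch of the sizing rule above, the helper below derives a partition count that is a multiple of the node count and renders a MySQL-style `PARTITION BY HASH` statement. The table name, column names, and the `partitions_per_node` parameter are hypothetical, and the DDL is assumed to be accepted based on the stated MySQL 8.0 syntax compatibility; verify it against your instance.

```python
def hash_partition_count(node_count, partitions_per_node=1):
    """Pick a partition count that is an integer multiple of the node count."""
    if node_count < 1 or partitions_per_node < 1:
        raise ValueError("node_count and partitions_per_node must be >= 1")
    return node_count * partitions_per_node


def hash_partition_ddl(table, key_column, node_count, partitions_per_node=1):
    """Render an illustrative CREATE TABLE with HASH partitioning."""
    partitions = hash_partition_count(node_count, partitions_per_node)
    return (
        f"CREATE TABLE {table} (\n"
        f"  {key_column} BIGINT NOT NULL,\n"
        "  payload VARCHAR(255),\n"
        f"  PRIMARY KEY ({key_column})\n"
        f") PARTITION BY HASH({key_column}) PARTITIONS {partitions};"
    )


# Hypothetical 3-node instance with 2 partitions per node.
ddl = hash_partition_ddl("orders", "user_id", node_count=3, partitions_per_node=2)
print(ddl)
```

The key column here is also the primary key, so it has few duplicate values, in line with the guidance on choosing partition keys.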

For scenarios requiring periodic purging of expired data, RANGE partitioning is recommended. If there is also high write volume and a need to distribute write hotspots, create tables using RANGE + HASH subpartitioning. Ideally, queries include predicates on both the time column and the HASH partition key.
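A RANGE + HASH layout might look like the statement generated below: monthly RANGE partitions on a time column, each split into HASH subpartitions. This is a sketch in standard MySQL partitioning syntax, assumed (not confirmed here) to be accepted by TDSQL Boundless; the table name, columns, and helper functions are hypothetical.

```python
from datetime import date


def next_month(d):
    """Return the first day of the month after d."""
    return date(d.year + (d.month == 12), d.month % 12 + 1, 1)


def range_hash_ddl(table, first_month, months, subpartitions):
    """Render monthly RANGE partitions, each with HASH subpartitions on id."""
    parts = []
    current = first_month
    for _ in range(months):
        upper = next_month(current)
        parts.append(
            f"  PARTITION p{current:%Y%m} "
            f"VALUES LESS THAN (TO_DAYS('{upper.isoformat()}'))"
        )
        current = upper
    return (
        f"CREATE TABLE {table} (\n"
        "  id BIGINT NOT NULL,\n"
        "  created_at DATETIME NOT NULL,\n"
        "  payload VARCHAR(255),\n"
        "  PRIMARY KEY (id, created_at)\n"
        ")\n"
        "PARTITION BY RANGE (TO_DAYS(created_at))\n"
        f"SUBPARTITION BY HASH (id) SUBPARTITIONS {subpartitions} (\n"
        + ",\n".join(parts)
        + "\n);"
    )


range_ddl = range_hash_ddl("events", date(2024, 11, 1), months=3, subpartitions=3)
print(range_ddl)
```

With this layout, expired data can be purged by dropping the oldest RANGE partition, and a query that filters on both `created_at` and `id` can be narrowed to a single subpartition.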

Avoid excessive partitioning. For example, if data needs to be retained for 3 years, daily partitioning would create over 1000 partitions. Consider weekly or monthly partitioning instead to reduce the number of partitions.
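The arithmetic behind this recommendation is easy to check. The sketch below counts the partitions needed for a given retention period at each granularity; it ignores leap days, and the function name is hypothetical.

```python
import math


def partitions_needed(retention_years, granularity):
    """Approximate partition count for a retention period (leap days ignored)."""
    days = retention_years * 365
    if granularity == "daily":
        return days
    if granularity == "weekly":
        return math.ceil(days / 7)
    if granularity == "monthly":
        return retention_years * 12
    raise ValueError(f"unknown granularity: {granularity}")


counts = {g: partitions_needed(3, g) for g in ("daily", "weekly", "monthly")}
print(counts)
```

For 3 years of retention, daily partitioning needs over a thousand partitions, while weekly or monthly partitioning keeps the count in the low hundreds or tens.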

For the decision-making process regarding partition type selection, see the following figure:

Online DDL Capability

TDSQL Boundless supports most Online DDL operations (including certain Inplace DDL operations that only require modifying metadata, collectively referred to as Online DDL). For specific capabilities, see OnlineDDL Description.

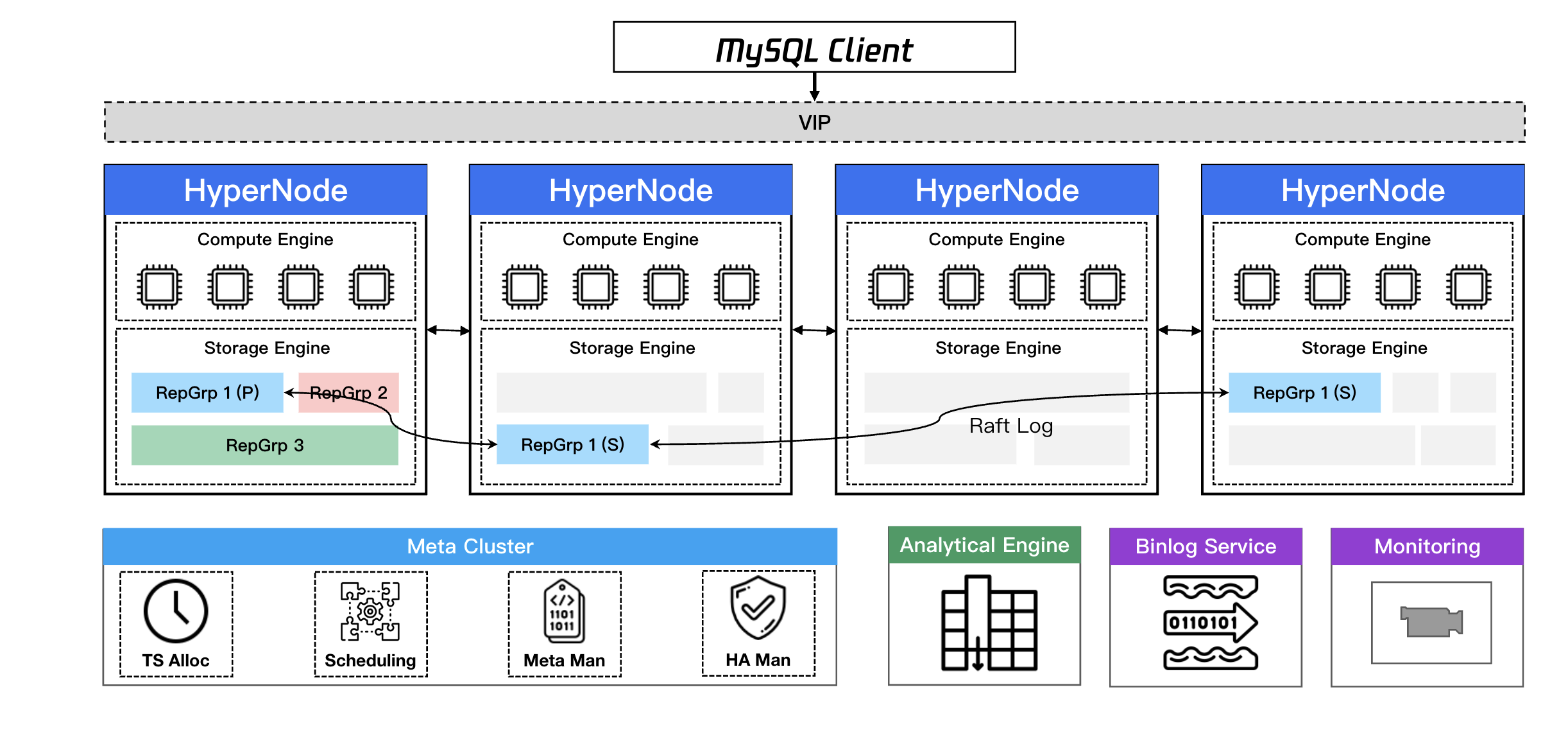

3. TDStore Storage

Unlike traditional MySQL master-slave replication, TDSQL Boundless employs smaller storage management units called RGs (replica groups). Each RG uses the Raft protocol to ensure replica consistency. The Leader of each RG can be distributed to any node in the instance. Therefore, any node in TDStore can handle read/write requests, enabling more efficient utilization of resources across all nodes.
