Making Your First Call

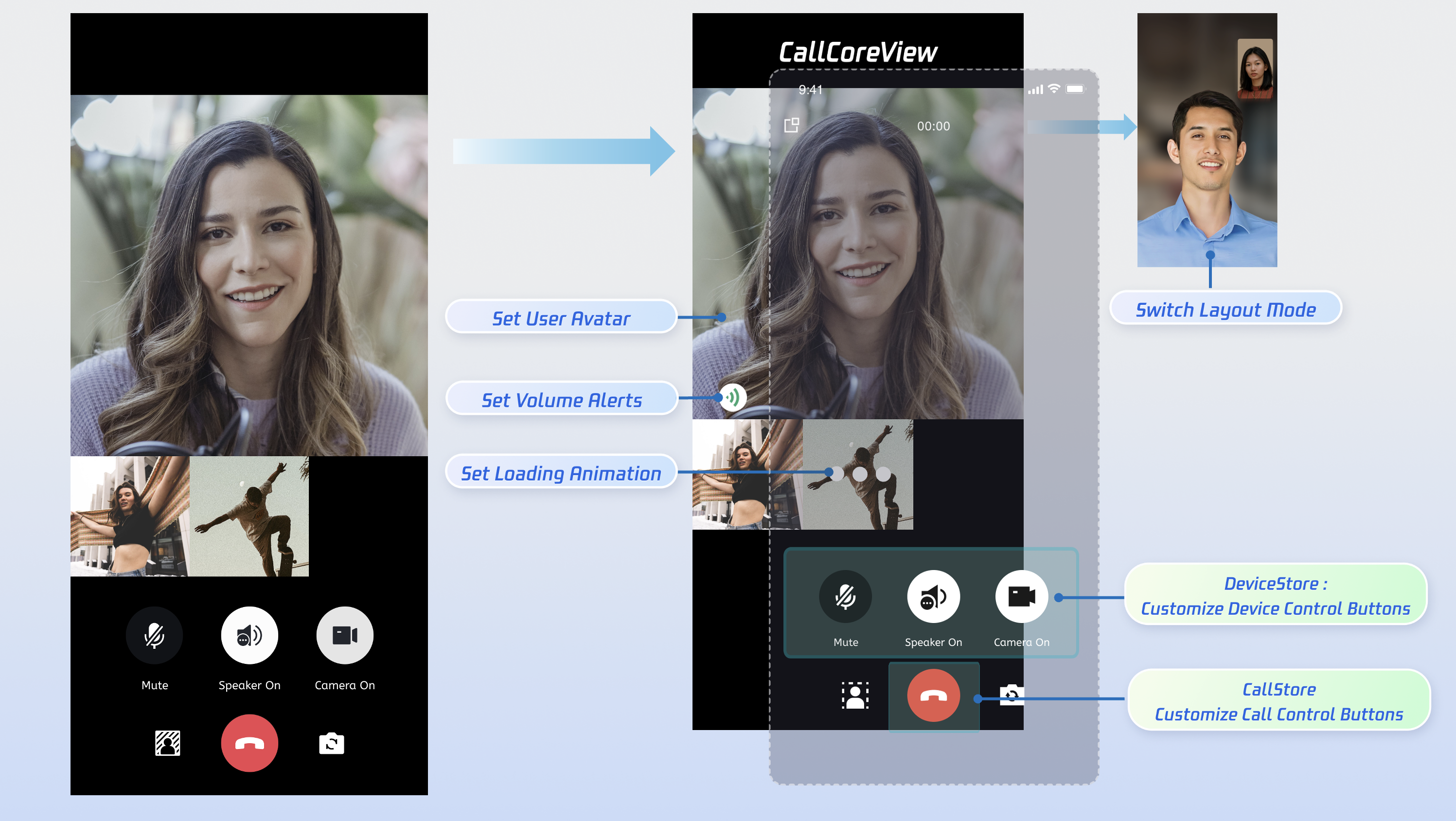

This guide walks you through implementing the "Make a Call" feature using the AtomicXCore SDK, leveraging the DeviceStore, CallStore, and the core UI component CallCoreView.

Core Features

To build multi-party audio/video calling scenarios with AtomicXCore, you’ll use the following three core modules:

| Module | Description |
| --- | --- |
| CallCoreView | Core call UI component. Automatically observes CallStore data and renders video streams, with support for UI customization such as layout switching and avatar and icon configuration. |
| CallStore | Manages the call lifecycle: make, answer, reject, and hang up calls. Provides real-time access to participant audio/video status, call duration, call history, and more. |
| DeviceStore | Controls audio/video devices: microphone (toggle on/off, volume), camera (toggle on/off, switch, quality), screen sharing, and real-time device status monitoring. |

Getting Started

Step 1: Activate the Service

Step 2: Integrate the SDK

1. Add the Pod dependency: add pod 'AtomicXCore' to your project's Podfile.

```ruby
target 'YourProjectTarget' do
  pod 'AtomicXCore'
end
```

Tips:

If your project doesn’t have a Podfile, navigate to your .xcodeproj directory in the terminal and run pod init to create one.

2. Install the component: in the terminal, go to the directory containing your Podfile and run:

```shell
pod install --repo-update
```

Tips:

After installation, open your project using the YourProjectName.xcworkspace file.

Step 3: Initialize and Log In

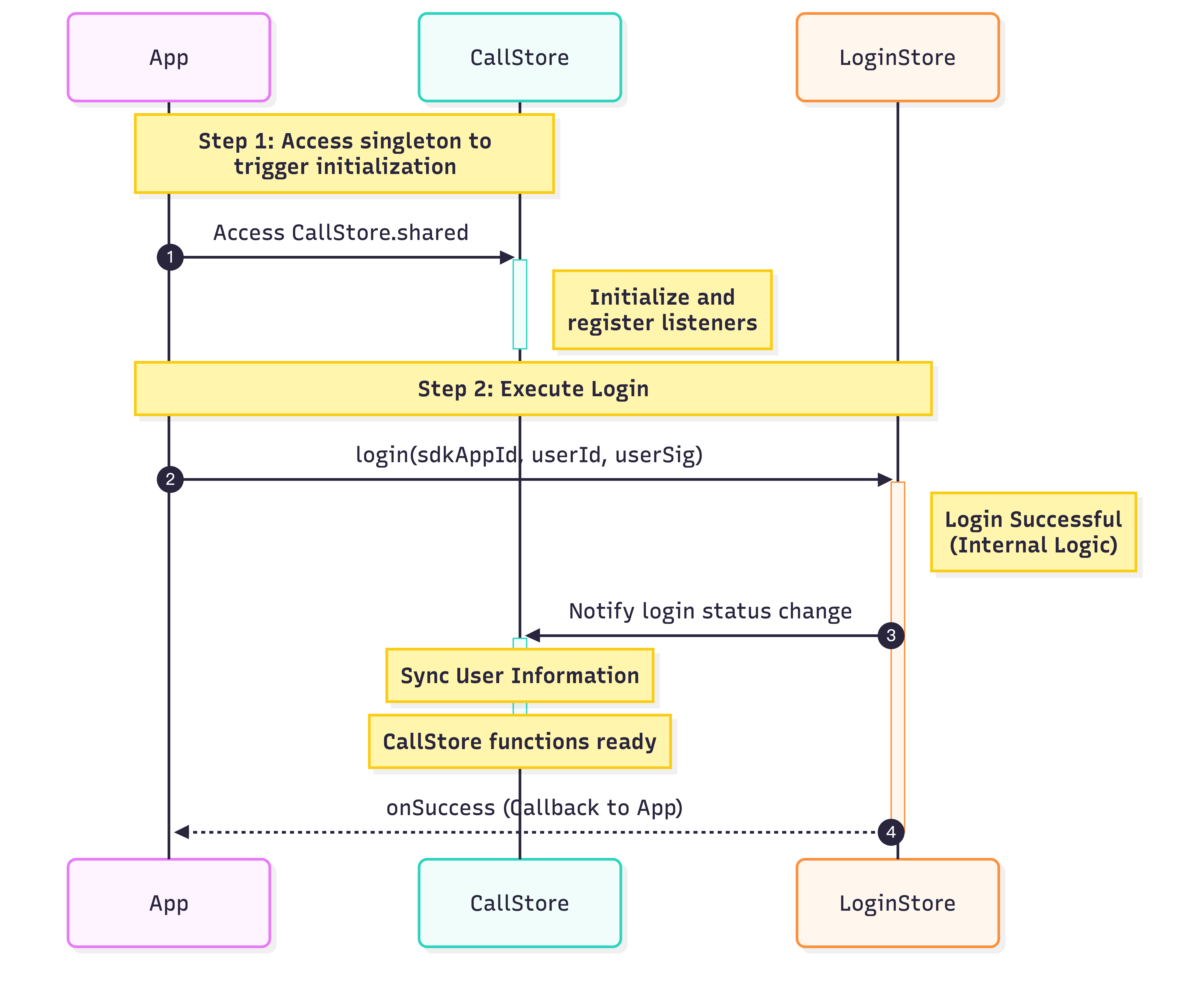

To start the call service, initialize CallStore and log in the user in sequence. CallStore automatically syncs user information after a successful login and enters the ready state. The following flowchart and sample code illustrate the process:

```swift
import UIKit
import AtomicXCore
import Combine

class ViewController: UIViewController {
    var cancellables = Set<AnyCancellable>()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Initialize CallStore
        let _ = CallStore.shared

        // Set up user information
        let userID = "test_001"           // Replace with your UserID
        let sdkAppID: Int = 1400000001    // Replace with your SDKAppID from the console
        let secretKey = "**************"  // Replace with your SecretKey from the console

        // Generate UserSig (for local testing only; always generate UserSig on your server in production)
        let userSig = GenerateTestUserSig.genTestUserSig(userID: userID, sdkAppID: sdkAppID, secretKey: secretKey)

        // Log in
        LoginStore.shared.login(sdkAppID: sdkAppID, userID: userID, userSig: userSig) { result in
            switch result {
            case .success:
                Log.info("login success")
                // Initialize TUICallEngine
                TUICallEngine.createInstance().`init`(Int32(sdkAppID), userId: userID, userSig: userSig) {
                    Log.info("TUICallEngine init success")
                } fail: { code, message in
                    Log.error("TUICallEngine init failed, code: \(code), message: \(message ?? "")")
                }
            case .failure(let error):
                Log.error("login failed, code: \(error.code), error: \(error.message)")
            }
        }
    }
}
```

| Parameter | Type | Description |
| --- | --- | --- |
| userID | String | Unique identifier for the current user. Only letters, numbers, hyphens, and underscores are allowed. Avoid simple IDs such as 1 or 123 to prevent multi-device login conflicts. |
| sdkAppID | Int | The SDKAppID of your application, available in the console. |
| secretKey | String | The SecretKey of your application, available in the console. Used here only to generate a test UserSig locally; never ship it in a production app. |
| userSig | String | Authentication token for TRTC. Development: use the local GenerateTestUserSig.genTestUserSig function or the UserSig Tool to generate a temporary UserSig. Production: always generate UserSig server-side to prevent SecretKey leakage. See Server-side UserSig Generation. For more details, see How to calculate and use UserSig. |
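The userID character rule can be checked on the client before calling login. The sketch below is a hypothetical helper, isValidUserID, not part of AtomicXCore. It assumes "letters" means ASCII letters, and it only enforces the character rule, not the advice against simple IDs such as 1 or 123.

```swift
import Foundation

/// Hypothetical helper (not an AtomicXCore API): returns true when `userID` is
/// non-empty and contains only ASCII letters, digits, hyphens, or underscores.
func isValidUserID(_ userID: String) -> Bool {
    guard !userID.isEmpty else { return false }
    return userID.unicodeScalars.allSatisfy { scalar in
        switch scalar {
        case "a"..."z", "A"..."Z", "0"..."9", "-", "_":
            return true
        default:
            return false
        }
    }
}
```

Calling it just before LoginStore.shared.login(...) lets you reject a malformed ID with a local error message instead of a failed login round trip.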

Implementation Steps

Before making a call, make sure the user is logged in; the call service does not work without a successful login. The following five steps outline how to implement the "Make a Call" feature.

Step 1: Create the Call Interface

You need to create a call screen that is displayed when a call is initiated.

1. Create the call screen: Implement a new UIViewController to serve as the call interface. This will be used for both outgoing and incoming calls.

2. Attach CallCoreView: Add the core call view component to your call screen. CallCoreView automatically observes CallStore data and renders video streams, with options for customizing layout, avatars, and icons.

```swift
import UIKit
import AtomicXCore

class CallViewController: UIViewController {
    // Core call view; it observes CallStore and renders the video streams
    private var callCoreView: CallCoreView?

    override func viewDidLoad() {
        super.viewDidLoad()
        view.backgroundColor = .black

        // Attach CallCoreView to the call screen
        let coreView = CallCoreView(frame: view.bounds)
        coreView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        view.addSubview(coreView)
        callCoreView = coreView
    }
}
```

CallCoreView Feature Overview:

Feature | Description | Reference |

Set Layout Mode | Switch between layout modes. If not set, layout adapts automatically based on participant count. | Switch Layout Mode |

Set Avatar | Customize avatars for specific users by providing avatar resource paths. | Customize Default Avatar |

Set Volume Indicator Icon | Set custom volume indicator icons for different volume levels. | Customize Volume Indicator Icon |

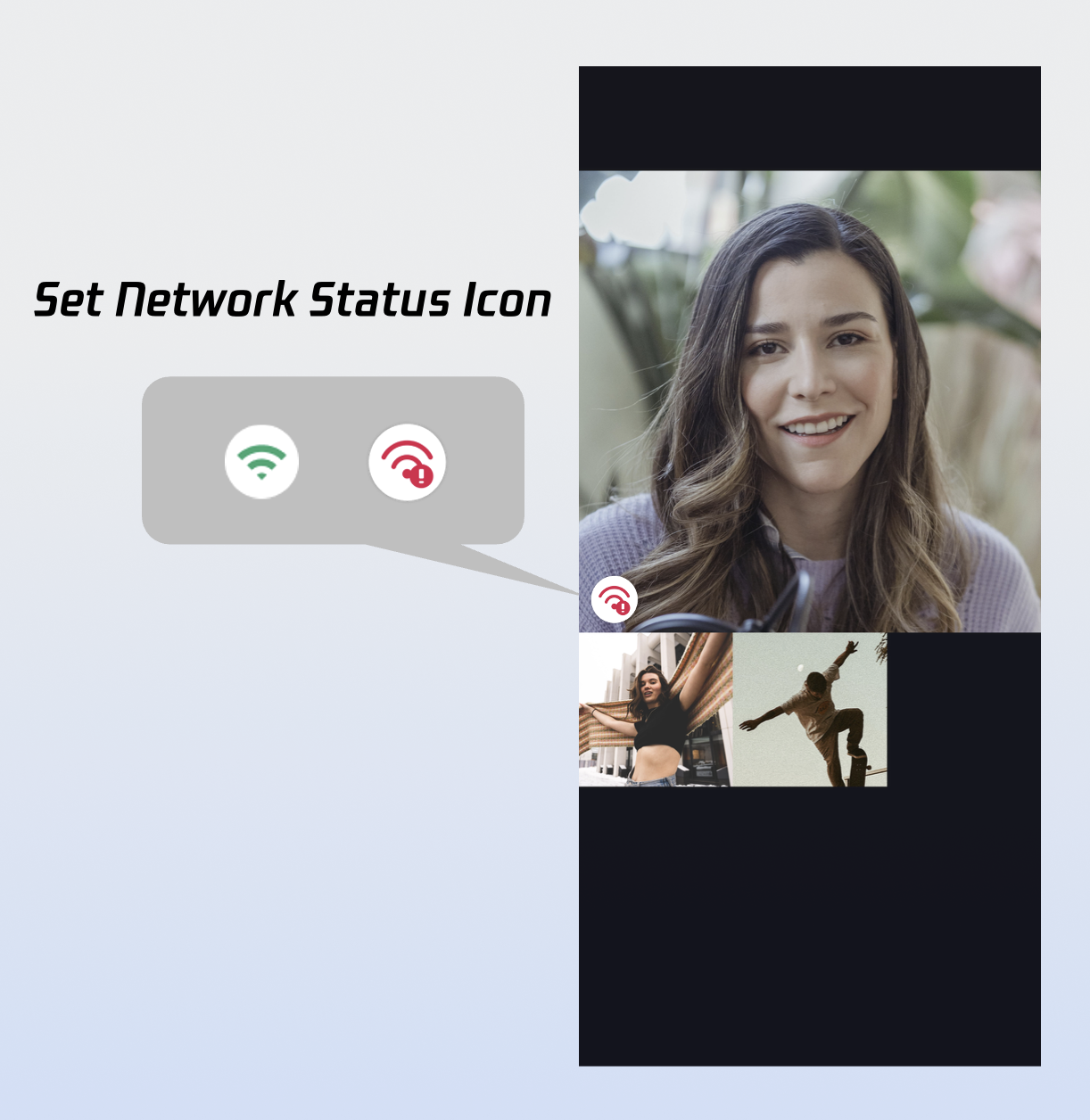

Set Network Indicator Icon | Set network status indicator icons based on real-time network quality. | Customize Network Indicator Icon |

Set Waiting Animation for Users | Support GIF animations for users in waiting state during multi-party calls. |

Step 2: Add Call Control Buttons

DeviceStore Controls: Microphone (toggle/on/off, volume), camera (toggle/on/off, switch, quality), screen sharing, and real-time device status monitoring. Bind button actions to the corresponding methods, and update button UI in real time by observing device status changes.

CallStore Controls: Answer, hang up, reject, and other core call actions. Bind button actions to the appropriate methods and observe call status to keep the UI in sync.

Icon Resources: Download button icons from GitHub. These icons are designed for TUICallKit and are free to use.

Example: Adding Hang Up, Microphone, and Camera Buttons

1.1 Add Hang Up Button: Create and add a hang-up button. When tapped, call hangup. The call screen itself is closed when the onCallEnded event fires, as described in Step 5: End the Call.

```swift
import UIKit
import AtomicXCore
import Combine

class CallViewController: UIViewController {
    private lazy var buttonHangup: UIButton = {
        let buttonWidth: CGFloat = 80
        let buttonHeight: CGFloat = 80
        let spacing: CGFloat = 30
        let bottomMargin: CGFloat = 80
        let totalWidth = buttonWidth * 3 + spacing * 2
        let startX = (view.bounds.width - totalWidth) / 2
        let buttonY = view.bounds.height - bottomMargin - buttonHeight
        let button = createButton(
            frame: CGRect(x: startX + (buttonWidth + spacing) * 2, y: buttonY, width: buttonWidth, height: buttonHeight),
            title: "Hang Up"
        )
        button.backgroundColor = .systemRed
        button.addTarget(self, action: #selector(touchHangupButton), for: .touchUpInside)
        return button
    }()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Other initialization code
        // 1. Add a hang-up button
        view.addSubview(buttonHangup)
    }

    @objc private func touchHangupButton() {
        // 2. Hang up; the screen is dismissed in the onCallEnded handler (Step 5)
        CallStore.shared.hangup(completion: nil)
    }

    // Helper method to create circular buttons
    private func createButton(frame: CGRect, title: String) -> UIButton {
        let button = UIButton(type: .system)
        button.frame = frame
        button.setTitle(title, for: .normal)
        button.setTitleColor(.white, for: .normal)
        button.backgroundColor = UIColor(white: 0.3, alpha: 0.8)
        button.layer.cornerRadius = frame.width / 2
        button.titleLabel?.font = UIFont.systemFont(ofSize: 14)
        return button
    }
}
```

1.2 Add Microphone Toggle Button: Create and add a microphone toggle button. When tapped, call openLocalMicrophone or closeLocalMicrophone.

```swift
import UIKit
import AtomicXCore
import Combine

class CallViewController: UIViewController {
    private lazy var buttonMicrophone: UIButton = {
        let buttonWidth: CGFloat = 80
        let buttonHeight: CGFloat = 80
        let spacing: CGFloat = 30
        let bottomMargin: CGFloat = 80
        let totalWidth = buttonWidth * 3 + spacing * 2
        let startX = (view.bounds.width - totalWidth) / 2
        let buttonY = view.bounds.height - bottomMargin - buttonHeight
        let button = createButton(
            frame: CGRect(x: startX + buttonWidth + spacing, y: buttonY, width: buttonWidth, height: buttonHeight),
            title: "Microphone"
        )
        button.addTarget(self, action: #selector(touchMicrophoneButton), for: .touchUpInside)
        return button
    }()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Other initialization code
        // 1. Add a microphone toggle button
        view.addSubview(buttonMicrophone)
    }

    // 2. Toggle the microphone (on/off) in the click event
    @objc private func touchMicrophoneButton() {
        let microphoneStatus = DeviceStore.shared.state.value.microphoneStatus
        if microphoneStatus == .on {
            DeviceStore.shared.closeLocalMicrophone()
        } else {
            DeviceStore.shared.openLocalMicrophone(completion: nil)
        }
    }

    // Helper method to create circular buttons
    private func createButton(frame: CGRect, title: String) -> UIButton {
        let button = UIButton(type: .system)
        button.frame = frame
        button.setTitle(title, for: .normal)
        button.setTitleColor(.white, for: .normal)
        button.backgroundColor = UIColor(white: 0.3, alpha: 0.8)
        button.layer.cornerRadius = frame.width / 2
        button.titleLabel?.font = UIFont.systemFont(ofSize: 14)
        return button
    }
}
```

1.3 Add Camera Toggle Button: Add a camera toggle button to the bottom toolbar. When tapped, call openLocalCamera or closeLocalCamera.

```swift
import UIKit
import AtomicXCore
import Combine

class CallViewController: UIViewController {
    private lazy var buttonCamera: UIButton = {
        let buttonWidth: CGFloat = 80
        let buttonHeight: CGFloat = 80
        let spacing: CGFloat = 30
        let bottomMargin: CGFloat = 80
        let totalWidth = buttonWidth * 3 + spacing * 2
        let startX = (view.bounds.width - totalWidth) / 2
        let buttonY = view.bounds.height - bottomMargin - buttonHeight
        let button = createButton(
            frame: CGRect(x: startX, y: buttonY, width: buttonWidth, height: buttonHeight),
            title: "Camera"
        )
        button.addTarget(self, action: #selector(touchCameraButton), for: .touchUpInside)
        return button
    }()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Other initialization code
        // 1. Add a camera toggle button
        view.addSubview(buttonCamera)
    }

    // 2. Toggle the camera (on/off) in the click event
    @objc private func touchCameraButton() {
        let cameraStatus = DeviceStore.shared.state.value.cameraStatus
        if cameraStatus == .on {
            DeviceStore.shared.closeLocalCamera()
        } else {
            // Open the currently selected camera (front or back)
            let isFront = DeviceStore.shared.state.value.isFrontCamera
            DeviceStore.shared.openLocalCamera(isFront: isFront, completion: nil)
        }
    }

    // Helper method to create circular buttons
    private func createButton(frame: CGRect, title: String) -> UIButton {
        let button = UIButton(type: .system)
        button.frame = frame
        button.setTitle(title, for: .normal)
        button.setTitleColor(.white, for: .normal)
        button.backgroundColor = UIColor(white: 0.3, alpha: 0.8)
        button.layer.cornerRadius = frame.width / 2
        button.titleLabel?.font = UIFont.systemFont(ofSize: 14)
        return button
    }
}
```

1.4 Update Button Labels in Real Time: Observe microphone and camera status and update button labels accordingly.

```swift
import UIKit
import AtomicXCore
import Combine

class CallViewController: UIViewController {
    private var cancellables = Set<AnyCancellable>()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Other initialization code
        // 1. Observe microphone and camera status
        observeDeviceState()
    }

    private func observeDeviceState() {
        DeviceStore.shared.state.subscribe()
            .map { $0.cameraStatus }
            .removeDuplicates()
            .receive(on: DispatchQueue.main)
            .sink { [weak self] cameraStatus in
                // 2. Update the camera button text (buttonCamera is the non-optional lazy var from step 1.3)
                let title = cameraStatus == .on ? "Turn Off Camera" : "Turn On Camera"
                self?.buttonCamera.setTitle(title, for: .normal)
            }
            .store(in: &cancellables)

        DeviceStore.shared.state.subscribe()
            .map { $0.microphoneStatus }
            .removeDuplicates()
            .receive(on: DispatchQueue.main)
            .sink { [weak self] microphoneStatus in
                // 2. Update the microphone button text (buttonMicrophone is from step 1.2)
                let title = microphoneStatus == .on ? "Turn Off Mic" : "Turn On Mic"
                self?.buttonMicrophone.setTitle(title, for: .normal)
            }
            .store(in: &cancellables)
    }
}
```
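Steps 1.1 to 1.3 repeat the same layout math for each button. A minimal sketch of factoring it into one function follows. toolbarButtonFrame is a hypothetical helper, not an SDK API; its defaults are the constants used in the samples (80 x 80 pt buttons, 30 pt spacing, 80 pt bottom margin), with index 0 for the camera, 1 for the microphone, and 2 for hang up.

```swift
import Foundation

/// Hypothetical helper: frame of the button at `index` in a bottom toolbar of `count`
/// equally sized, equally spaced buttons, centered horizontally in `bounds`.
/// Defaults match the samples: 80 x 80 pt buttons, 30 pt spacing, 80 pt bottom margin.
func toolbarButtonFrame(index: Int,
                        count: Int = 3,
                        in bounds: CGRect,
                        size: CGFloat = 80,
                        spacing: CGFloat = 30,
                        bottomMargin: CGFloat = 80) -> CGRect {
    // Width of the whole row: all buttons plus the gaps between them
    let totalWidth = size * CGFloat(count) + spacing * CGFloat(count - 1)
    let startX = (bounds.size.width - totalWidth) / 2
    let y = bounds.size.height - bottomMargin - size
    return CGRect(x: startX + (size + spacing) * CGFloat(index), y: y, width: size, height: size)
}
```

Each lazy var could then build its button with createButton(frame: toolbarButtonFrame(index: 2, in: view.bounds), title: "Hang Up"), which keeps the three positions consistent if you change a constant.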

Step 3: Request Microphone/Camera Permissions

Check for audio/video permissions before starting a call. If permissions are missing, prompt the user to grant them.

1. Declare permissions: Add the following keys to your app’s Info.plist with appropriate usage descriptions. These are shown to users when the system requests permission:

```xml
<key>NSCameraUsageDescription</key>
<string>Camera access is required for video calls and group video calls.</string>
<key>NSMicrophoneUsageDescription</key>
<string>Microphone access is required for audio calls, group audio calls, video calls, and group video calls.</string>
```

2. Request permissions dynamically: Request audio/video permissions based on the call media type when initiating a call.

```swift
import AVFoundation
import UIKit

extension UIViewController {
    // Check microphone permission
    func checkMicrophonePermission(completion: @escaping (Bool) -> Void) {
        let status = AVCaptureDevice.authorizationStatus(for: .audio)
        switch status {
        case .authorized:
            completion(true)
        case .notDetermined:
            AVCaptureDevice.requestAccess(for: .audio) { granted in
                DispatchQueue.main.async {
                    completion(granted)
                }
            }
        case .denied, .restricted:
            completion(false)
        @unknown default:
            completion(false)
        }
    }

    // Check camera permission
    func checkCameraPermission(completion: @escaping (Bool) -> Void) {
        let status = AVCaptureDevice.authorizationStatus(for: .video)
        switch status {
        case .authorized:
            completion(true)
        case .notDetermined:
            AVCaptureDevice.requestAccess(for: .video) { granted in
                DispatchQueue.main.async {
                    completion(granted)
                }
            }
        case .denied, .restricted:
            completion(false)
        @unknown default:
            completion(false)
        }
    }

    // Show an alert that points the user to Settings
    func showPermissionAlert(message: String) {
        let alert = UIAlertController(title: "Permission Required", message: message, preferredStyle: .alert)
        alert.addAction(UIAlertAction(title: "Settings", style: .default) { _ in
            if let url = URL(string: UIApplication.openSettingsURLString) {
                UIApplication.shared.open(url)
            }
        })
        alert.addAction(UIAlertAction(title: "Cancel", style: .cancel))
        present(alert, animated: true)
    }
}
```
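Permissions are requested based on the call media type, and the two checks above are asynchronous. One way to sequence them is sketched below under these assumptions: CallKind is a local stand-in for CallMediaType, and verifyPermissions is a hypothetical helper whose check closure would wrap checkMicrophonePermission and checkCameraPermission in your app.

```swift
import Foundation

/// Local stand-in for the SDK's CallMediaType (assumption for this sketch).
enum CallKind { case audio, video }

/// The two capture permissions declared in Info.plist.
enum MediaPermission { case microphone, camera }

/// Audio calls need the microphone; video calls need the microphone and the camera.
func requiredPermissions(for kind: CallKind) -> [MediaPermission] {
    kind == .video ? [.microphone, .camera] : [.microphone]
}

/// Hypothetical helper: runs `check` for each required permission in order and calls
/// `completion` with the first permission that was denied, or nil if all were granted.
/// Each check starts only after the previous one has reported back.
func verifyPermissions(for kind: CallKind,
                       check: @escaping (MediaPermission, @escaping (Bool) -> Void) -> Void,
                       completion: @escaping (MediaPermission?) -> Void) {
    var remaining = requiredPermissions(for: kind)[...]
    func next() {
        guard let permission = remaining.popFirst() else {
            completion(nil) // every permission was granted
            return
        }
        check(permission) { granted in
            if granted {
                next()
            } else {
                completion(permission) // stop at the first denial
            }
        }
    }
    next()
}
```

In a view controller, check could dispatch to checkMicrophonePermission(completion:) or checkCameraPermission(completion:), and a non-nil result would lead to showPermissionAlert(message:) instead of starting the call. Checking the microphone first means an audio call never triggers a camera prompt.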

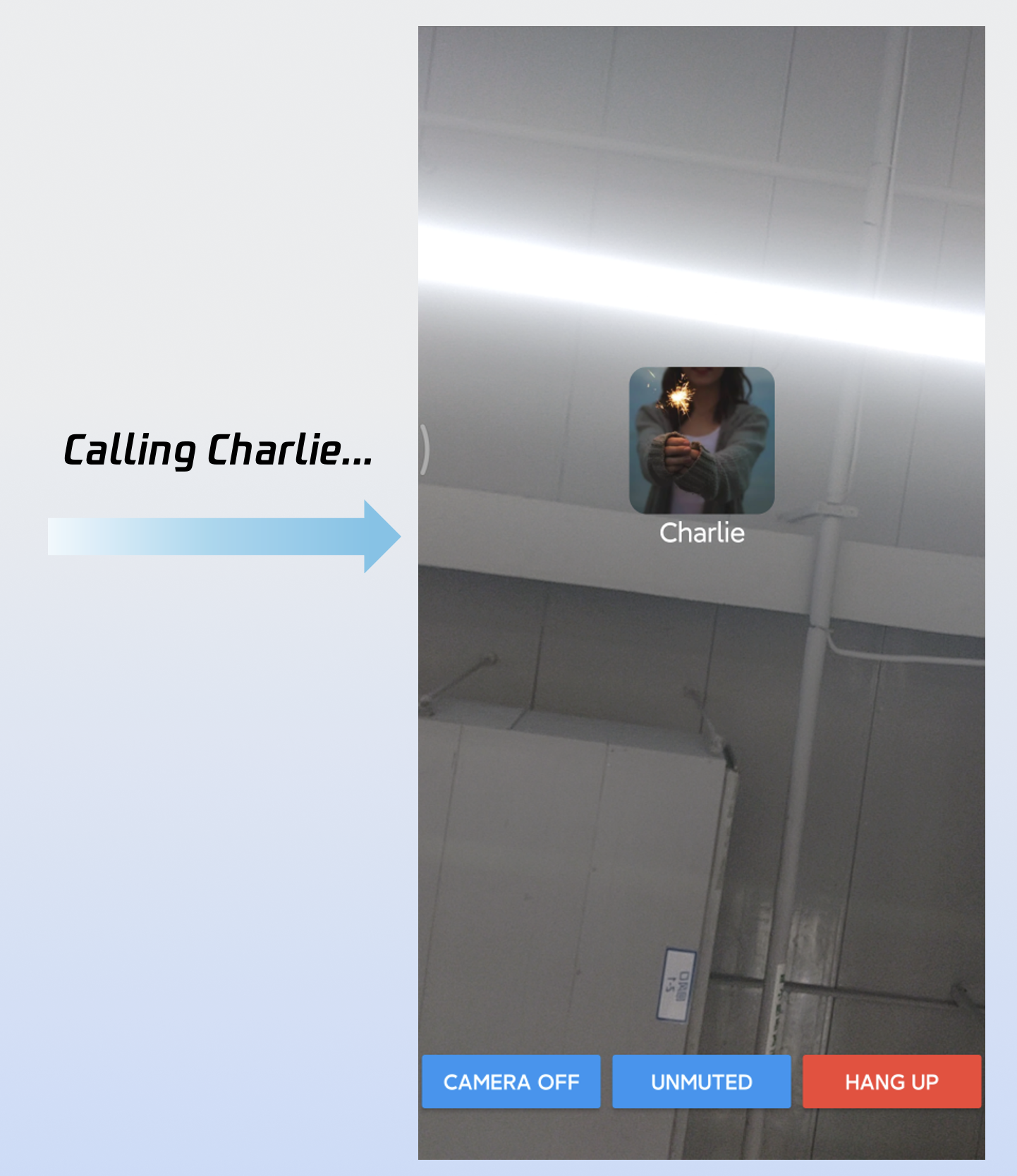

Step 4: Make a Call

After initiating the call with calls, navigate to the call screen. For the best user experience, automatically enable the microphone, plus the camera for video calls, based on the media type.

1. Initiate the call: Call calls to start a call.

2. Enable media devices: After the call is initiated, enable the microphone. If it’s a video call, also enable the camera.

3. Present the call screen: On successful call initiation, present the call screen.

```swift
import UIKit
import AtomicXCore
import Combine

class MainViewController: UIViewController {
    // 1. Initiate a call
    private func startCall(userIdList: [String], mediaType: CallMediaType) {
        var params = CallParams()
        params.timeout = 30 // Set the call timeout to 30 seconds
        CallStore.shared.calls(
            participantIds: userIdList,
            callMediaType: mediaType, // Call type: .audio or .video
            params: params
        ) { [weak self] result in
            switch result {
            case .success:
                // 2. Enable media devices
                self?.openDevices(for: mediaType)
                // 3. Present the call interface
                DispatchQueue.main.async {
                    let callVC = CallViewController()
                    callVC.modalPresentationStyle = .fullScreen
                    self?.present(callVC, animated: true)
                }
            case .failure(let error):
                Log.error("Failed to initiate call: \(error)")
            }
        }
    }

    private func openDevices(for mediaType: CallMediaType) {
        DeviceStore.shared.openLocalMicrophone(completion: nil)
        if mediaType == .video {
            let isFront = DeviceStore.shared.state.value.isFrontCamera
            DeviceStore.shared.openLocalCamera(isFront: isFront, completion: nil)
        }
    }
}
```

calls API Parameter Reference:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| participantIds | [String] | Yes | A list of target user IDs. |
| callMediaType | CallMediaType | Yes | The media type of the call, which specifies whether to initiate an audio or video call. CallMediaType.video: video call; CallMediaType.audio: audio call. |
| params | CallParams | No | Extended call parameters, such as the room ID and call invitation timeout. roomId (String): room ID; optional, assigned automatically by the server if not specified. timeout (Int): call timeout, in seconds. userData (String): custom user data for application-specific information. chatGroupId (String): chat group ID, used for group call scenarios. isEphemeralCall (Bool): ephemeral call; if set to true, no call history record is generated. |

Step 5: End the Call

When you call hangup or the remote party ends the call, the onCallEnded event is triggered. Listen for this event and close the call screen when the call ends.

1. Listen for the call end event: Observe the onCallEnded event.

2. Close the call screen: When onCallEnded is triggered, dismiss the call screen.

```swift
import UIKit
import AtomicXCore
import Combine

class CallViewController: UIViewController {
    // Holds the event subscription (declare it once per class)
    private var cancellables = Set<AnyCancellable>()

    override func viewDidLoad() {
        super.viewDidLoad()
        // Other initialization code
        // 1. Add a call event listener
        addListener()
    }

    private func addListener() {
        CallStore.shared.callEventPublisher
            .receive(on: DispatchQueue.main)
            .sink { [weak self] event in
                if case .onCallEnded = event {
                    // 2. Dismiss the call interface
                    self?.dismiss(animated: true)
                }
            }
            .store(in: &cancellables)
    }
}
```

onCallEnded Event Parameters:

| Parameter | Type | Description |
| --- | --- | --- |
| callId | String | Unique ID for this call. |
| mediaType | CallMediaType | The media type of the call. CallMediaType.video: video call; CallMediaType.audio: audio call. |
| reason | | The reason the call ended. unknown: the reason cannot be determined; hangup: normal termination, a user actively ended the call; reject: the callee declined the incoming call; noResponse: the callee did not answer within the timeout period; offline: the callee is currently offline; lineBusy: the callee is already in another call; canceled: the caller canceled the call before it was answered; otherDeviceAccepted: the call was answered on another logged-in device; otherDeviceReject: the call was declined on another logged-in device; endByServer: the server forcibly ended the call. |
| userId | String | The user ID of the person who ended the call. |
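The reason field lets the app explain why a call ended. The sketch below maps the documented reasons to short messages. EndReason is a local mirror of the listed cases (the SDK's actual type name is not given here), endMessage is a hypothetical helper, and the message wording is illustrative.

```swift
import Foundation

/// Local mirror of the end reasons listed above; in the app, switch over the SDK's own enum.
enum EndReason {
    case unknown, hangup, reject, noResponse, offline, lineBusy, canceled
    case otherDeviceAccepted, otherDeviceReject, endByServer
}

/// Hypothetical helper: a short message to show after onCallEnded,
/// or nil when a participant ended the call normally and nothing needs explaining.
func endMessage(for reason: EndReason) -> String? {
    switch reason {
    case .hangup:
        return nil
    case .reject:
        return "The call was declined."
    case .noResponse:
        return "No answer."
    case .offline:
        return "The user is offline."
    case .lineBusy:
        return "The line is busy."
    case .canceled:
        return "The call was canceled."
    case .otherDeviceAccepted:
        return "Answered on another device."
    case .otherDeviceReject:
        return "Declined on another device."
    case .endByServer:
        return "The call was ended by the server."
    case .unknown:
        return "The call ended."
    }
}
```

Because the switch is exhaustive, the compiler flags any reason you forget to handle if the enum gains a case.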

Demo

After completing these five steps, the "Make a Call" feature will look like this:

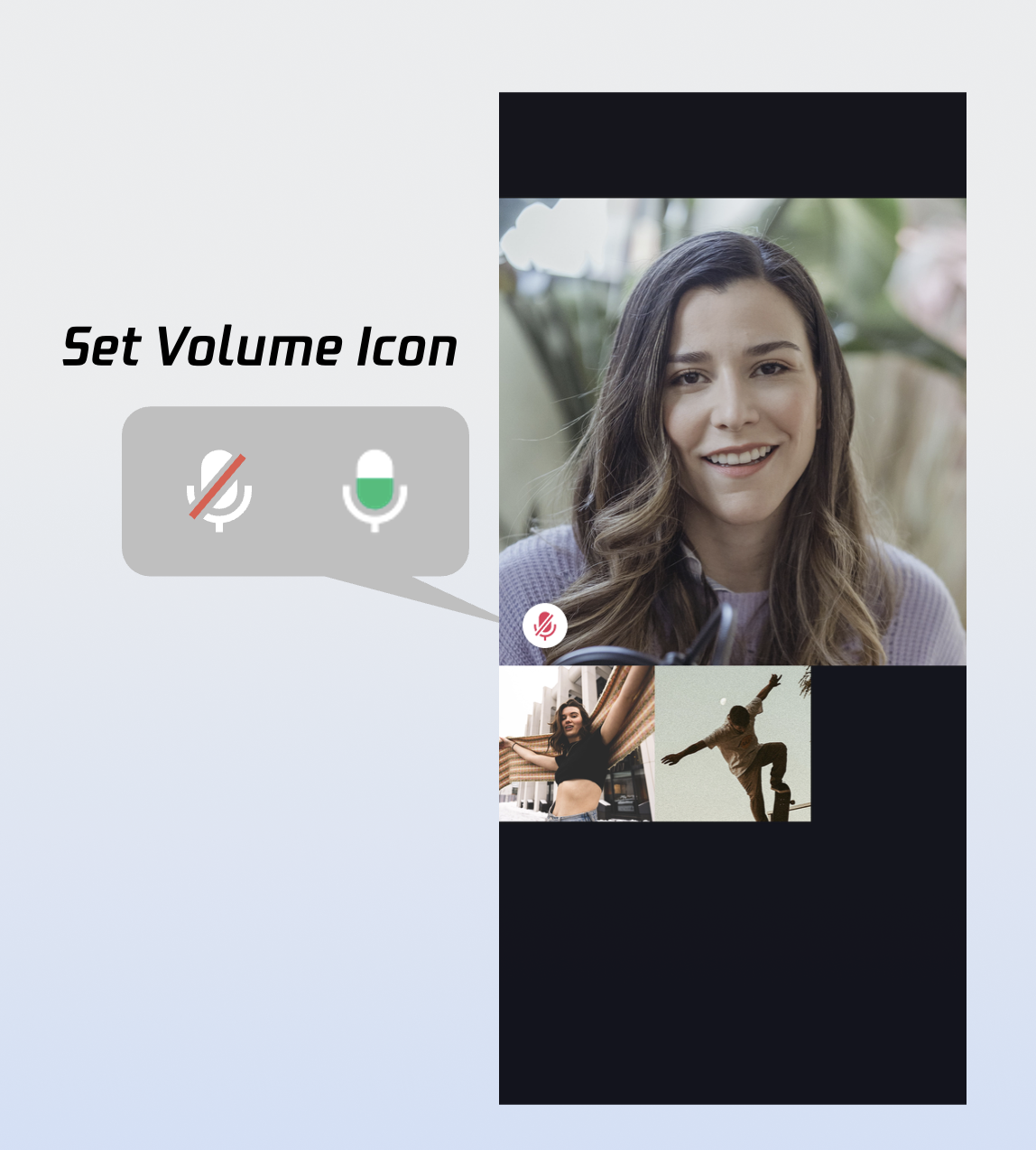

Customization

CallCoreView provides extensive UI customization, including support for custom avatars and volume indicator icons. To speed up your integration, you can download ready-to-use icons from GitHub. All icons are designed for TUICallKit and are free to use.

Customizing Volume Indicator Icons

```swift
// Set volume indicator icons
let volumeLevelIcons: [VolumeLevel: String] = [
    .mute: "Path to the corresponding icon resource"
]
callCoreView.setVolumeLevelIcons(icons: volumeLevelIcons)
```

setVolumeLevelIcons API Parameters:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| icons | [VolumeLevel: String] | Yes | A mapping of volume levels to icon resources. Key (VolumeLevel), the volume intensity level: VolumeLevel.mute: microphone is off or muted; VolumeLevel.low: volume in (0, 25]; VolumeLevel.medium: volume in (25, 50]; VolumeLevel.high: volume in (50, 75]; VolumeLevel.peak: volume in (75, 100]. Value (String): the resource path or name of the icon for that volume level. |

Icon Configuration Guide:

| Icon | Description | Download Link |
| --- | --- | --- |
| | Volume indicator icon. You can set this icon for VolumeLevel.low or VolumeLevel.medium. It is displayed when the user's volume exceeds the specified level. | |
| | Volume indicator icon. You can set this icon for VolumeLevel.mute. It is displayed when the user is muted. | |
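The documented volume ranges translate directly into a lookup, which helps when deciding which readings each icon will cover. VolumeBucket below is a local mirror of VolumeLevel and volumeBucket is a hypothetical helper. Treating a reading of 0 with the microphone on as mute is an assumption, since the documented ranges start just above 0.

```swift
import Foundation

/// Local mirror of VolumeLevel's cases (the real type comes from AtomicXCore).
enum VolumeBucket { case mute, low, medium, high, peak }

/// Maps a 0...100 volume reading to the documented ranges:
/// mute when the microphone is off (or the reading is 0 or below),
/// low (0, 25], medium (25, 50], high (50, 75], peak (75, 100].
/// Readings above 100 fall into peak.
func volumeBucket(volume: Int, microphoneOn: Bool) -> VolumeBucket {
    guard microphoneOn, volume > 0 else { return .mute }
    switch volume {
    case ...25: return .low
    case ...50: return .medium
    case ...75: return .high
    default: return .peak
    }
}
```

The boundaries follow the half-open intervals in the table, so 25 is still low and 26 is medium.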

Customizing Network Indicator Icons

```swift
// Set network quality icons
let networkQualityIcons: [NetworkQuality: String] = [
    .bad: "Path to the corresponding icon"
]
callCoreView.setNetworkQualityIcons(icons: networkQualityIcons)
```

setNetworkQualityIcons API Parameters:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| icons | [NetworkQuality: String] | Yes | A mapping of network quality levels to icons. Key (NetworkQuality): NetworkQuality.unknown: network status is undetermined; NetworkQuality.excellent: excellent connection; NetworkQuality.good: stable, good connection; NetworkQuality.poor: weak signal; NetworkQuality.bad: very weak or unstable network; NetworkQuality.veryBad: extremely poor network, near disconnection; NetworkQuality.down: network is disconnected. Value (String): the absolute path or resource name of the icon for that network status. |

Network Warning Icon:

| Icon | Description | Download Link |
| --- | --- | --- |
| | Poor network indicator. You can set this icon for NetworkQuality.bad, NetworkQuality.veryBad, or NetworkQuality.down. It is displayed when the network quality is poor. | |
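Because the same warning icon is suggested for three quality levels, the icon map can be built from a single path. Quality is a local mirror of NetworkQuality so the sketch compiles on its own, and warningIconMap is a hypothetical helper; with the SDK enum, the same dictionary shape is what setNetworkQualityIcons(icons:) takes.

```swift
import Foundation

/// Local mirror of NetworkQuality's cases so this sketch compiles on its own.
enum Quality { case unknown, excellent, good, poor, bad, veryBad, down }

/// Hypothetical helper: maps every degraded level (.bad, .veryBad, .down) to
/// `iconPath` and leaves the other levels without an icon.
func warningIconMap(iconPath: String) -> [Quality: String] {
    let degraded: [Quality] = [.bad, .veryBad, .down]
    return Dictionary(uniqueKeysWithValues: degraded.map { ($0, iconPath) })
}
```

Levels that are absent from the dictionary simply get no custom icon from this map.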

Customizing Default Avatars

Use the CallCoreView setParticipantAvatars API to set user avatars. Listen to the allParticipants reactive data: when you have a user's avatar, set and display it; if not, show the default avatar.

```swift
// Set user avatars
var avatars: [String: String] = [:]
let userId = ""      // User ID
let avatarPath = ""  // Path to the user's default avatar resource
avatars[userId] = avatarPath
callCoreView.setParticipantAvatars(avatars: avatars)
```

setParticipantAvatars API Parameters:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| avatars | [String: String] | Yes | User avatar mapping. Key: the user's userID. Value: the absolute path to the user's avatar resource. |

Default Avatar:

| Icon | Description | Download Link |
| --- | --- | --- |
| | Default profile picture. You can set this as a user's default avatar when their profile image fails to load or no avatar is provided. | |
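The avatar guidance (use the user's own avatar when available, otherwise the default) can be expressed as one function that produces the dictionary setParticipantAvatars expects. ParticipantSnapshot and its fields are hypothetical stand-ins for whatever the allParticipants data provides, and avatarMap is not an SDK API.

```swift
import Foundation

/// Hypothetical participant snapshot; the real fields come from allParticipants.
struct ParticipantSnapshot {
    let userId: String
    let avatarPath: String?   // nil or empty when no avatar is available
}

/// Builds the [userID: avatarPath] map for setParticipantAvatars, using each
/// participant's own avatar when present and `defaultAvatar` otherwise.
func avatarMap(for participants: [ParticipantSnapshot], defaultAvatar: String) -> [String: String] {
    var avatars: [String: String] = [:]
    for participant in participants {
        if let path = participant.avatarPath, !path.isEmpty {
            avatars[participant.userId] = path
        } else {
            avatars[participant.userId] = defaultAvatar
        }
    }
    return avatars
}
```

Re-running it whenever the participant list changes and passing the result to callCoreView.setParticipantAvatars(avatars:) keeps new participants from appearing without an avatar.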

Customizing Waiting Animations

Use the CallCoreView setWaitingAnimation API to set a waiting animation for users who are waiting to answer.

```swift
// Set waiting animation
let waitingAnimationPath = "" // Path to the waiting animation GIF resource
callCoreView.setWaitingAnimation(path: waitingAnimationPath)
```

setWaitingAnimation API Parameters:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| path | String | Yes | Absolute path to a GIF image resource. |

User Waiting Animation:

| Icon | Description | Download Link |
| --- | --- | --- |
| | User waiting animation for group calls. Once configured, this animation is displayed while a user's status is "Waiting to Answer" (Pending). | |

Adding a Call Duration Indicator

1. Subscribe to the data layer: Observe CallStore.shared.state for changes to activeCall.

2. Bind the duration to your UI: The activeCall.duration field is reactive, so the subscription below updates the label as the call progresses.

```swift
import UIKit
import AtomicXCore
import Combine

class TimerView: UILabel {
    private var cancellables = Set<AnyCancellable>()

    override init(frame: CGRect) {
        super.init(frame: frame)
        setupView()
    }

    required init?(coder: NSCoder) {
        super.init(coder: coder)
        setupView()
    }

    private func setupView() {
        textColor = .white
        textAlignment = .center
        font = .systemFont(ofSize: 16)
    }

    override func didMoveToWindow() {
        super.didMoveToWindow()
        if window != nil {
            // Start observing the active call once the view is on screen
            registerActiveCallObserver()
        } else {
            // Stop observing when the view leaves the window
            cancellables.removeAll()
        }
    }

    private func registerActiveCallObserver() {
        CallStore.shared.state.subscribe()
            .map { $0.activeCall }
            .removeDuplicates { $0.duration == $1.duration }
            .receive(on: DispatchQueue.main)
            .sink { [weak self] activeCall in
                // Update the call duration
                self?.updateDurationView(activeCall: activeCall)
            }
            .store(in: &cancellables)
    }

    private func updateDurationView(activeCall: CallInfo) {
        let currentDuration = activeCall.duration
        let minutes = currentDuration / 60
        let seconds = currentDuration % 60
        text = String(format: "%02d:%02d", minutes, seconds)
    }
}
```
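updateDurationView formats with %02d:%02d, so a call longer than an hour shows minutes above 59 (for example, 75:00 after 4,500 seconds). A hedged alternative that switches to h:mm:ss after one hour is sketched below. formatCallDuration is a hypothetical helper, and it assumes activeCall.duration is a whole number of seconds, as the division by 60 in the sample suggests.

```swift
import Foundation

/// Hypothetical helper: formats a duration in whole seconds as mm:ss,
/// or h:mm:ss once the call reaches one hour. Negative values are treated as 0.
func formatCallDuration(_ totalSeconds: Int) -> String {
    // Zero-pads a single-digit value to two digits
    func pad2(_ value: Int) -> String { value < 10 ? "0\(value)" : "\(value)" }

    let seconds = max(0, totalSeconds)
    let hours = seconds / 3600
    let minutes = (seconds % 3600) / 60
    let secs = seconds % 60
    if hours > 0 {
        return "\(hours):\(pad2(minutes)):\(pad2(secs))"
    }
    return "\(pad2(minutes)):\(pad2(secs))"
}
```

Inside updateDurationView, the last line would become text = formatCallDuration(Int(activeCall.duration)); building the string by interpolation also avoids matching format specifiers to the integer type.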


More Features

Customizing User Avatar and Nickname

```swift
var userProfile = UserProfile()
userProfile.userID = ""     // Your user ID
userProfile.avatarURL = ""  // URL of the avatar image
userProfile.nickname = ""   // The nickname to set
LoginStore.shared.setSelfInfo(userProfile: userProfile) { result in
    switch result {
    case .success:
        // Profile updated successfully
        break
    case .failure(let error):
        // Handle the error
        print("setSelfInfo failed: \(error)")
    }
}
```

setSelfInfo API Parameters:

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| userProfile | UserProfile | Yes | User info struct. userID: user ID; avatarURL: user avatar URL; nickname: user nickname. |

Switching Layout Modes

CallCoreView supports three built-in layout modes. Use setLayoutTemplate to set the layout. If no layout is set, CallCoreView automatically uses Float mode for 1-on-1 calls and Grid mode for multi-party calls.

| Mode | Layout | Interaction |
| --- | --- | --- |
| Float Mode | While waiting, your own video is displayed full screen. After the call is answered, the remote video is shown full screen and your own video in a floating window. | Drag the small window, or tap it to swap the large and small videos. |
| Grid Mode | All participant videos are tiled in a grid. Best for 2+ participants. | Tap a participant to enlarge their video. |
| PIP Mode | In 1v1 calls, the remote video is fixed; in multi-party calls, the active speaker is shown full screen. | Shows your own video while waiting and displays the call timer after answering. |

setLayoutTemplate Sample code:

```swift
func setLayoutTemplate(_ template: CallLayoutTemplate)
```

setLayoutTemplate API Parameters:

| Parameter | Type | Description |
| --- | --- | --- |
| template | CallLayoutTemplate | CallCoreView's layout mode. CallLayoutTemplate.float: while waiting, your own video is shown full screen; after answering, the remote video is full screen and your own video floats in a small window; drag the small window or tap it to swap the videos. CallLayoutTemplate.grid: all participant videos are tiled in a grid, best for 2+ participants; tap a participant to enlarge their video. CallLayoutTemplate.pip: in 1v1 calls the remote video is fixed, and in multi-party calls the active speaker is shown full screen; shows your own video while waiting and the call timer after answering. |
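The default layout rule and the floating-window case can be collected in one place. LayoutMode mirrors CallLayoutTemplate and preferredLayout is a hypothetical helper. Counting the local user among participants (so a 1-on-1 call has 2) is an assumption; the .pip branch reflects the floating-window controller in this guide, which sets .pip explicitly.

```swift
import Foundation

/// Local mirror of CallLayoutTemplate's three cases.
enum LayoutMode { case float, grid, pip }

/// Hypothetical helper: .pip inside a floating window, otherwise the documented
/// default of float for 1-on-1 calls and grid for multi-party calls.
/// Assumption: `participantCount` includes the local user, so 1-on-1 means 2.
func preferredLayout(participantCount: Int, inFloatingWindow: Bool) -> LayoutMode {
    if inFloatingWindow {
        return .pip
    }
    return participantCount <= 2 ? .float : .grid
}
```

With the real enum, the result would be passed to callCoreView.setLayoutTemplate(_:). Calling it explicitly is only necessary when you want to override the automatic choice.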

Setting Default Call Timeout

When making a call with calls, set the timeout field in CallParams to specify the call invitation timeout.

```swift
let userIdList = ["user_002"] // Replace with the target user IDs
var callParams = CallParams()
callParams.timeout = 30 // Set the call timeout to 30 seconds
CallStore.shared.calls(participantIds: userIdList, callMediaType: .video, params: callParams, completion: nil)
```

| Parameter | Type | Required | Description |
| --- | --- | --- | --- |
| participantIds | [String] | Yes | A list of user IDs for the target participants. |
| callMediaType | CallMediaType | Yes | The media type of the call, which specifies whether to initiate an audio or video call. CallMediaType.video: video call; CallMediaType.audio: audio call. |
| params | CallParams | No | Extended call parameters, such as the room ID and call invitation timeout. roomId (String): room ID; optional, assigned automatically by the server if not specified. timeout (Int): call timeout, in seconds. userData (String): custom user data for app-specific logic. chatGroupId (String): chat group ID, used for group call scenarios. isEphemeralCall (Bool): ephemeral call; if set to true, no call history record is generated. |

Implementing In-App Floating Window

The AtomicXCore SDK provides the CallPipView component to enable in-app floating windows. When the call interface is covered by another screen (for example, the user navigates away while the call is ongoing), a floating window displays the call status and lets users quickly return to the call.

Step 1: Create the floating window controller.

```swift
import UIKit
import AtomicXCore
import Combine

/**
 * Floating window controller
 *
 * Displays the call in a floating window, with a CallCoreView inside.
 */
class FloatWindowViewController: UIViewController {
    var tapGestureAction: (() -> Void)?
    private var cancellables = Set<AnyCancellable>()

    private lazy var callCoreView: CallCoreView = {
        let view = CallCoreView(frame: self.view.bounds)
        view.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        view.setLayoutTemplate(.pip)          // Use the picture-in-picture (PIP) layout mode
        view.isUserInteractionEnabled = false // Let taps pass through to the controller's view
        return view
    }()

    override func viewDidLoad() {
        super.viewDidLoad()
        view.backgroundColor = UIColor(white: 0.1, alpha: 1.0)
        view.layer.cornerRadius = 10
        view.layer.masksToBounds = true
        view.addSubview(callCoreView)

        // Add a tap gesture recognizer
        let tapGesture = UITapGestureRecognizer(target: self, action: #selector(handleTap))
        view.addGestureRecognizer(tapGesture)

        // Delay status observation so the window is not closed immediately after it is created
        DispatchQueue.main.asyncAfter(deadline: .now() + 1.0) { [weak self] in
            self?.observeCallStatus()
        }
    }

    @objc private func handleTap() {
        tapGestureAction?()
    }

    /**
     * Observes call status changes and closes the floating window when the call ends.
     */
    private func observeCallStatus() {
        CallStore.shared.state
            .subscribe(StatePublisherSelector<CallState, CallParticipantStatus>(keyPath: \.selfInfo.status))
            .removeDuplicates()
            .receive(on: DispatchQueue.main)
            .sink { status in
                if status == .none {
                    // Call ended: post a notification to hide the floating window
                    NotificationCenter.default.post(name: NSNotification.Name("HideFloatingWindow"), object: nil)
                }
            }
            .store(in: &cancellables)
    }

    deinit {
        cancellables.removeAll()
    }
}
```

Step 2: Implement floating window management logic in the main interface.

```swift
import UIKit
import AtomicXCore

class MainViewController: UIViewController {
    private var floatWindow: UIWindow?

    override func viewDidLoad() {
        super.viewDidLoad()
        // Listen for the notification to show the floating window
        NotificationCenter.default.addObserver(self,
                                               selector: #selector(showFloatingWindow),
                                               name: NSNotification.Name("ShowFloatingWindow"),
                                               object: nil)
        // Listen for the notification to hide the floating window
        NotificationCenter.default.addObserver(self,
                                               selector: #selector(hideFloatingWindow),
                                               name: NSNotification.Name("HideFloatingWindow"),
                                               object: nil)
    }

    /**
     * Displays the in-app floating window.
     */
    @objc private func showFloatingWindow() {
        // Check if the call is currently active/accepted
        let selfStatus = CallStore.shared.state.value.selfInfo.status
        guard selfStatus == .accept else {
            return
        }
        // Prevent duplicate creation if the floating window already exists
        guard floatWindow == nil else { return }
        // ⚠️ CRITICAL: The current windowScene must be used to create the new window
        guard let windowScene = UIApplication.shared.connectedScenes.first as? UIWindowScene else {
            return
        }
        // Define floating window dimensions (9:16 aspect ratio)
        let pipWidth: CGFloat = 100
        let pipHeight: CGFloat = pipWidth * 16 / 9
        let pipX = UIScreen.main.bounds.width - pipWidth - 20
        let pipY: CGFloat = 100
        // Create the floating window (associated with the windowScene)
        let window = UIWindow(windowScene: windowScene)
        window.windowLevel = .alert + 1 // Ensure it stays above standard UI
        window.backgroundColor = .clear
        window.frame = CGRect(x: pipX, y: pipY, width: pipWidth, height: pipHeight)
        // Initialize the floating window controller
        let floatVC = FloatWindowViewController()
        floatVC.tapGestureAction = { [weak self] in
            self?.openCallViewController()
        }
        window.rootViewController = floatVC
        self.floatWindow = window
        // Make the window visible
        window.isHidden = false
        window.makeKeyAndVisible()
        // Immediately restore the main window as the key window to maintain proper app focus
        if let mainWindow = windowScene.windows.first(where: { $0 != window }) {
            mainWindow.makeKey()
        }
    }

    /**
     * Hides the in-app floating window.
     */
    @objc private func hideFloatingWindow() {
        floatWindow?.isHidden = true
        floatWindow = nil
    }

    /**
     * Opens the call interface (triggered upon tapping the floating window).
     */
    private func openCallViewController() {
        // Dismiss the floating window first
        hideFloatingWindow()
        // Retrieve the current top-most ViewController
        guard let topVC = getTopViewController() else {
            return
        }
        let callVC = CallViewController()
        callVC.modalPresentationStyle = .fullScreen
        topVC.present(callVC, animated: true)
    }

    /**
     * Utility to retrieve the current top-most ViewController in the view hierarchy.
     */
    private func getTopViewController() -> UIViewController? {
        guard let windowScene = UIApplication.shared.connectedScenes.first as? UIWindowScene,
              let keyWindow = windowScene.windows.first(where: { $0.isKeyWindow }),
              let rootVC = keyWindow.rootViewController else {
            return nil
        }
        var topVC = rootVC
        while let presentedVC = topVC.presentedViewController {
            topVC = presentedVC
        }
        return topVC
    }

    deinit {
        NotificationCenter.default.removeObserver(self)
    }
}
```
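The sample computes the floating window's position inline from `UIScreen.main.bounds`. If you prefer to keep that geometry in one place you can test, a sketch like the following may help. `floatingWindowFrame(in:width:trailingMargin:topOffset:)` is a hypothetical helper, not an AtomicXCore API; its defaults simply mirror the values used above, and it adds clamping so the window cannot extend past the container.

```swift
import Foundation

/// Hypothetical helper (not part of AtomicXCore): computes the frame of a 9:16
/// floating window pinned near the top-trailing corner of a container.
/// - Parameters:
///   - containerBounds: bounds of the screen/scene the window lives in.
///   - width: desired floating window width in points.
///   - trailingMargin: gap between the window and the container's right edge.
///   - topOffset: distance from the container's top edge.
func floatingWindowFrame(in containerBounds: CGRect,
                         width: CGFloat = 100,
                         trailingMargin: CGFloat = 20,
                         topOffset: CGFloat = 100) -> CGRect {
    // Keep the 9:16 portrait aspect ratio used in the sample.
    let height = width * 16 / 9
    // Pin to the trailing edge, but never start left of the container.
    let x = max(containerBounds.minX, containerBounds.maxX - width - trailingMargin)
    // Clamp vertically so the window stays fully inside the container.
    let y = min(containerBounds.minY + topOffset, containerBounds.maxY - height)
    return CGRect(x: x, y: y, width: width, height: height)
}

// Example: an iPhone-sized container of 390 x 844 points.
let frame = floatingWindowFrame(in: CGRect(x: 0, y: 0, width: 390, height: 844))
print(frame.origin.x, frame.origin.y, frame.height)
```

In `showFloatingWindow()`, the four `pip*` lines could then be replaced by `window.frame = floatingWindowFrame(in: windowScene.screen.bounds)`. Reading the bounds from the window scene also avoids `UIScreen.main`, which Apple deprecated in iOS 16.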

Step 3: Add the floating window trigger logic to the Call Interface.

```swift
import UIKit
import AtomicXCore

class CallViewController: UIViewController {
    override func viewWillAppear(_ animated: Bool) {
        super.viewWillAppear(animated)
        // When entering the call interface, post a notification to hide the floating window
        NotificationCenter.default.post(name: NSNotification.Name("HideFloatingWindow"), object: nil)
    }

    override func viewWillDisappear(_ animated: Bool) {
        super.viewWillDisappear(animated)
        // When leaving the call interface, check if the call is still active
        let selfStatus = CallStore.shared.state.value.selfInfo.status
        if selfStatus == .accept {
            // If the call is still ongoing, post a notification to show the floating window
            NotificationCenter.default.post(name: NSNotification.Name("ShowFloatingWindow"), object: nil)
        }
    }
}
```
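`MainViewController` and `CallViewController` must agree on the same notification name strings, and a typo in either one fails silently. One way to guard against that, sketched here with hypothetical names, is to declare the names once as `Notification.Name` constants. The raw values match the strings used above, so the constants are a drop-in replacement; the small observer class exists only to show that posting and observing line up.

```swift
import Foundation

// Hypothetical constants (not defined by AtomicXCore) replacing the string
// literals "ShowFloatingWindow" and "HideFloatingWindow" used above.
extension Notification.Name {
    static let showFloatingWindow = Notification.Name("ShowFloatingWindow")
    static let hideFloatingWindow = Notification.Name("HideFloatingWindow")
}

/// Demonstration observer that records which floating-window notifications arrived.
final class FloatingWindowEventLog {
    private(set) var events: [Notification.Name] = []
    private let center: NotificationCenter
    private var tokens: [NSObjectProtocol] = []

    init(center: NotificationCenter = .default) {
        self.center = center
        for name in [Notification.Name.showFloatingWindow, .hideFloatingWindow] {
            // queue: nil delivers the notification synchronously on the posting thread.
            let token = center.addObserver(forName: name, object: nil, queue: nil) { [weak self] note in
                self?.events.append(note.name)
            }
            tokens.append(token)
        }
    }

    deinit {
        // Block-based observers must be removed via the returned tokens.
        tokens.forEach { center.removeObserver($0) }
    }
}
```

With the constants in place, the calls above become `NotificationCenter.default.post(name: .hideFloatingWindow, object: nil)` and `addObserver(self, selector: #selector(showFloatingWindow), name: .showFloatingWindow, object: nil)`.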

Enabling System Picture-in-Picture (PiP) Outside the App

The AtomicXCore SDK supports system-level PiP through the underlying TRTC engine. When your app moves to the background, the call video can float above other apps in a system PiP window, so users can continue their video call while multitasking.

Note:

1. In Xcode, add Background Modes under Signing & Capabilities and enable Audio, AirPlay, and Picture in Picture.
2. System PiP requires iOS 15.0 or later.

1. Configure PiP parameters: Define Codable types that describe the PiP configuration, including the fill mode of the PiP window, the video region of each user, and the canvas.

```swift
import Foundation
import AtomicXCore

// Fill Mode Enumeration
enum PictureInPictureFillMode: Int, Codable {
    case fill = 0 // Aspect Fill (Scale to fill, may crop)
    case fit = 1  // Aspect Fit (Scale to fit, no cropping)
}

// User Video Region
struct PictureInPictureRegion: Codable {
    let userId: String                     // Unique User ID
    let width: Double                      // Width (0.0 - 1.0, relative to canvas)
    let height: Double                     // Height (0.0 - 1.0, relative to canvas)
    let x: Double                          // X coordinate (0.0 - 1.0, relative to top-left of canvas)
    let y: Double                          // Y coordinate (0.0 - 1.0, relative to top-left of canvas)
    let fillMode: PictureInPictureFillMode // Rendering fill mode
    let streamType: String                 // Stream type ("high" for HD or "low" for SD)
    let backgroundColor: String            // Hex background color (e.g., "#000000")
}

// Canvas Configuration
struct PictureInPictureCanvas: Codable {
    let width: Int              // Canvas width in pixels
    let height: Int             // Canvas height in pixels
    let backgroundColor: String // Hex background color
}

// Picture-in-Picture Parameters
struct PictureInPictureParams: Codable {
    let enable: Bool                       // Toggle PiP functionality
    let cameraBackgroundCapture: Bool?     // Whether to continue camera capture in background
    let canvas: PictureInPictureCanvas?    // Canvas settings (Optional)
    let regions: [PictureInPictureRegion]? // List of user video regions (Optional)
}

// PiP API Request Object
struct PictureInPictureRequest: Codable {
    let api: String                    // API identifier name
    let params: PictureInPictureParams // Parameter payload
}
```

2. Enable Picture-in-Picture: Enable or disable PiP by sending a `configPictureInPicture` request, encoded as JSON, through the engine's experimental API (`callExperimentalAPI`). Set `enable` to `false` to turn PiP off.

```swift
let params = PictureInPictureParams(enable: true,
                                    cameraBackgroundCapture: true,
                                    canvas: nil,
                                    regions: nil)
let request = PictureInPictureRequest(api: "configPictureInPicture",
                                      params: params)
// Encode to JSON string and call the Experimental API
let encoder = JSONEncoder()
if let data = try? encoder.encode(request),
   let jsonString = String(data: data, encoding: .utf8) {
    TUICallEngine.createInstance().callExperimentalAPI(jsonObject: jsonString)
}
```
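The example above leaves `canvas` and `regions` as `nil`. The following sketch shows how a one-on-one layout might be expressed with those fields and what JSON the request produces. Everything here is an assumption for illustration: `oneToOneRegions` and `makeJSON` are hypothetical helpers, the 720 x 1280 canvas and the user IDs are placeholders, and the drawing order of regions is not documented in this guide. The types are copied from step 1 only so the block compiles on its own; in your app, reuse the original declarations.

```swift
import Foundation

// Copies of the step 1 types so this sketch is self-contained.
enum PictureInPictureFillMode: Int, Codable { case fill = 0, fit = 1 }

struct PictureInPictureRegion: Codable {
    let userId: String
    let width: Double
    let height: Double
    let x: Double
    let y: Double
    let fillMode: PictureInPictureFillMode
    let streamType: String
    let backgroundColor: String
}

struct PictureInPictureCanvas: Codable {
    let width: Int
    let height: Int
    let backgroundColor: String
}

struct PictureInPictureParams: Codable {
    let enable: Bool
    let cameraBackgroundCapture: Bool?
    let canvas: PictureInPictureCanvas?
    let regions: [PictureInPictureRegion]?
}

struct PictureInPictureRequest: Codable {
    let api: String
    let params: PictureInPictureParams
}

/// Hypothetical layout: the remote user fills the canvas and the local user is a
/// small tile in the top-right corner. All values are fractions of the canvas (0.0 - 1.0).
func oneToOneRegions(remoteUserId: String, localUserId: String,
                     tileScale: Double = 0.3, margin: Double = 0.03) -> [PictureInPictureRegion] {
    let remote = PictureInPictureRegion(userId: remoteUserId, width: 1.0, height: 1.0, x: 0.0, y: 0.0,
                                        fillMode: .fill, streamType: "high", backgroundColor: "#000000")
    let local = PictureInPictureRegion(userId: localUserId, width: tileScale, height: tileScale,
                                       x: 1.0 - tileScale - margin, y: margin,
                                       fillMode: .fit, streamType: "high", backgroundColor: "#000000")
    // Assumption: regions later in the array are drawn on top of earlier ones.
    return [remote, local]
}

/// Encodes a request into the JSON string passed to callExperimentalAPI(jsonObject:).
/// Synthesized Codable skips nil optionals, so unset fields are simply not sent.
func makeJSON(_ request: PictureInPictureRequest) throws -> String {
    let encoder = JSONEncoder()
    encoder.outputFormatting = .sortedKeys // stable key order for logs and tests
    let data = try encoder.encode(request)
    return String(decoding: data, as: UTF8.self)
}

// Enable PiP with an explicit 9:16 canvas and two regions.
let enableJSON = try makeJSON(PictureInPictureRequest(
    api: "configPictureInPicture",
    params: PictureInPictureParams(
        enable: true,
        cameraBackgroundCapture: true,
        canvas: PictureInPictureCanvas(width: 720, height: 1280, backgroundColor: "#000000"),
        regions: oneToOneRegions(remoteUserId: "remote_001", localUserId: "test_001"))))

// Disable PiP again, e.g. when the call ends.
let disableJSON = try makeJSON(PictureInPictureRequest(
    api: "configPictureInPicture",
    params: PictureInPictureParams(enable: false, cameraBackgroundCapture: nil, canvas: nil, regions: nil)))
print(disableJSON)
```

Either string would then be passed to `TUICallEngine.createInstance().callExperimentalAPI(jsonObject:)` exactly as in the step above.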

Keeping the Screen Awake During Calls

To prevent the screen from dimming or locking during a call, set `UIApplication.shared.isIdleTimerDisabled = true` when the call interface appears, and restore it when the interface disappears. Pairing `viewWillAppear` with `viewWillDisappear` keeps the two calls balanced even if the call interface disappears and reappears (for example, when another screen is presented over it).

```swift
class CallViewController: UIViewController {
    override func viewWillAppear(_ animated: Bool) {
        super.viewWillAppear(animated)
        // Disable automatic screen lock to keep the screen on
        UIApplication.shared.isIdleTimerDisabled = true
    }

    override func viewWillDisappear(_ animated: Bool) {
        super.viewWillDisappear(animated)
        // Restore the automatic screen lock behavior
        UIApplication.shared.isIdleTimerDisabled = false
    }
}
```
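Writing `false` on exit also clears the flag if another part of your app had set it for its own reasons. If that matters in your app, one option is to save the previous value and restore it. The sketch below uses a hypothetical `IdleTimerGuard` class; its read/write closures keep it independent of UIKit, and in an app they would read and write `UIApplication.shared.isIdleTimerDisabled`.

```swift
import Foundation

/// Hypothetical helper: disables the idle timer for the duration of a call and
/// restores whatever value was set before, instead of always writing `false`.
final class IdleTimerGuard {
    private let read: () -> Bool
    private let write: (Bool) -> Void
    private var savedValue: Bool?

    init(read: @escaping () -> Bool, write: @escaping (Bool) -> Void) {
        self.read = read
        self.write = write
    }

    /// Call when the call interface appears. Repeated calls are ignored.
    func begin() {
        guard savedValue == nil else { return }
        savedValue = read()
        write(true)
    }

    /// Call when the call interface disappears. Does nothing if `begin()` was not called.
    func end() {
        guard let previous = savedValue else { return }
        write(previous)
        savedValue = nil
    }
}
```

In `CallViewController`, you might create it as `IdleTimerGuard(read: { UIApplication.shared.isIdleTimerDisabled }, write: { UIApplication.shared.isIdleTimerDisabled = $0 })`, then call `begin()` in `viewWillAppear` and `end()` in `viewWillDisappear`.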

Playing a Ringtone While Waiting for Answer

Listen to your own call status to play a ringtone while waiting for an answer, and stop the ringtone when the call is answered or ends.

```swift
import Combine

private var cancellables = Set<AnyCancellable>()

private func observeSelfCallStatus() {
    CallStore.shared.state.subscribe()
        .map { $0.selfInfo.status }
        .removeDuplicates()
        .receive(on: DispatchQueue.main)
        .sink { [weak self] status in
            if status == .accept || status == .none {
                // Stop playing ringtone
                return
            }
            if status == .waiting {
                // Start playing ringtone
            }
        }
        .store(in: &cancellables)
}
```
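The sample leaves the start and stop branches empty. To keep those calls balanced (never starting the ringtone twice, never stopping one that is not playing), a small state holder can sit between the status stream and your audio player. This is a sketch: `SelfCallStatus` is a hypothetical stand-in for the SDK's status type with the three cases used above, and `RingtoneController` is not an AtomicXCore API.

```swift
import Foundation

// Hypothetical mirror of the self-status values used above (.none, .waiting, .accept).
// In an app, switch on the SDK's own status type instead.
enum SelfCallStatus {
    case none
    case waiting
    case accept
}

/// Hypothetical helper: turns status changes into balanced start/stop calls.
final class RingtoneController {
    private let startRingtone: () -> Void
    private let stopRingtone: () -> Void
    private(set) var isPlaying = false

    init(start: @escaping () -> Void, stop: @escaping () -> Void) {
        self.startRingtone = start
        self.stopRingtone = stop
    }

    func handle(_ status: SelfCallStatus) {
        switch status {
        case .waiting:
            // Start only once, even if .waiting is delivered again.
            guard !isPlaying else { return }
            isPlaying = true
            startRingtone()
        case .accept, .none:
            // Stop only if something is actually playing.
            guard isPlaying else { return }
            isPlaying = false
            stopRingtone()
        }
    }
}
```

Inside the `sink`, you would forward each status to `handle(_:)`. The `start` closure could, for example, prepare an `AVAudioPlayer` with `numberOfLoops = -1` (loop until stopped) and call `play()`, while `stop` calls `stop()` on the same player.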

Enabling Background Audio/Video Capture

To allow your app to capture audio and video while in the background (e.g., when the user locks the screen or switches apps), configure iOS background mode permissions and set up the audio session.

Configuration Steps:

1. In Xcode, select your project Target → Signing & Capabilities.
2. Click + Capability.
3. Add Background Modes.
4. Enable:
   - Audio, AirPlay, and Picture in Picture (for audio capture and PiP)
   - Voice over IP (for VoIP calls)
   - Remote notifications (optional, for offline push)

Your Info.plist will then include:

```xml
<key>UIBackgroundModes</key>
<array>
    <string>audio</string>
    <string>voip</string>
    <string>remote-notification</string>
</array>
```
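A missing background mode is easy to overlook because nothing fails at build time. As an optional safeguard, a debug-only check could compare the app's `UIBackgroundModes` entries against the modes the call flow needs. `missingBackgroundModes(in:required:)` is a hypothetical helper, and treating `audio` and `voip` as required (with `remote-notification` optional) follows the list above.

```swift
import Foundation

/// Hypothetical debug check: returns the entries from `required` that are
/// missing from the `UIBackgroundModes` array of an Info.plist dictionary.
func missingBackgroundModes(in infoDictionary: [String: Any],
                            required: [String] = ["audio", "voip"]) -> [String] {
    let declared = Set(infoDictionary["UIBackgroundModes"] as? [String] ?? [])
    return required.filter { !declared.contains($0) }
}

// In an app, check the main bundle (infoDictionary is optional, hence `?? [:]`).
let missing = missingBackgroundModes(in: Bundle.main.infoDictionary ?? [:])
if !missing.isEmpty {
    print("Missing UIBackgroundModes entries: \(missing)")
}
```

Calling this once at launch in debug builds (for example inside `#if DEBUG`) surfaces a misconfigured target early.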

Configure Audio Session (AVAudioSession):

Set up the audio session before the call starts, ideally in the call interface's `viewDidLoad` or before making or answering a call.

```swift
import AVFoundation

/**
 * Configure the Audio Session to support background audio capture.
 *
 * Recommended to call this method in the following scenarios:
 * 1. Inside viewDidLoad of the call interface.
 * 2. Before initiating a call (calls).
 * 3. Before answering a call (accept).
 */
private func setupAudioSession() {
    let audioSession = AVAudioSession.sharedInstance()
    do {
        // Set the audio session category to PlayAndRecord.
        // .allowBluetooth: Support for standard Bluetooth headsets.
        // .allowBluetoothA2DP: Support for high-quality Bluetooth audio (A2DP protocol).
        try audioSession.setCategory(.playAndRecord, options: [.allowBluetooth, .allowBluetoothA2DP])
        // Activate the audio session.
        try audioSession.setActive(true)
    } catch {
        // Audio session configuration failed.
        print("Failed to configure Audio Session: \(error)")
    }
}
```

Special Handling for Ringtone Playback (Optional):

To play a ringtone as regular media output while waiting for an answer, temporarily switch the audio session to `.playback` mode, then switch back to `.playAndRecord` once the ringtone stops. In `.playback` mode the ringtone plays through the built-in speaker unless headphones or a Bluetooth device are connected. The `.allowBluetooth` and `.allowBluetoothA2DP` options and `overrideOutputAudioPort(.speaker)` are intended for `.playAndRecord`, so they are not used in the ringtone configuration.

```swift
/**
 * Switch the Audio Session for ringtone playback.
 *
 * Use Case: When using AVAudioPlayer to play the ringtone.
 */
private func setAudioSessionForRingtone() {
    let audioSession = AVAudioSession.sharedInstance()
    do {
        // Switch to playback mode (optimized for output only).
        // Bluetooth A2DP output is used automatically in this category.
        try audioSession.setCategory(.playback, mode: .default, options: [])
        try audioSession.setActive(true)
    } catch {
        // Failed to configure Audio Session for ringtone
        print("Ringtone audio session error: \(error)")
    }
}

/**
 * Restore to Call Mode after the ringtone stops playing.
 */
private func restoreAudioSessionForCall() {
    let audioSession = AVAudioSession.sharedInstance()
    do {
        // Restore to PlayAndRecord mode (required for two-way VoIP communication)
        try audioSession.setCategory(.playAndRecord, options: [.allowBluetooth, .allowBluetoothA2DP])
        try audioSession.setActive(true)
    } catch {
        // Failed to restore Audio Session
        print("Failed to restore call audio session: \(error)")
    }
}
```

Next Steps

Congratulations! You’ve completed the "Make a Call" feature. Next, see Answer Your First Call to implement the answer call functionality.

FAQs

If the callee is offline and comes online within the call invitation timeout, will they receive the incoming call event?

For one-on-one calls, if the callee comes online within the timeout, they will receive an incoming call invitation. For group calls, if the callee comes online within the timeout, up to 20 pending group messages will be retrieved. If there is a call invitation, the incoming call event will be triggered.

Contact Us

If you have any questions or suggestions during the integration or usage process, feel free to join our Telegram technical group or contact us for support.
